Legal Aspects of Data - IDM


The ethical & legal implications of data & design for software engineers (for the CSE1500 course)


22 Terms

What is datafication?

Activities once invisible or private are now captured, stored, analysed and monetised

—> Building systems that collect as much data as possible

List the different risks to privacy

– Re-identification: “anonymised” data is often not anonymous.

– Data breaches: once leaked, data can’t be “un-leaked.”

– Surveillance capitalism: companies track people to influence behaviour and maximise profit.

– Permanent records: data persists long after the context of collection is forgotten.

What are dark patterns?

Interface designs that technically comply with the law but push users into agreeing to things they normally wouldn’t approve of.

—> E.g. Facebook’s pay-or-consent system

What is ethical design (privacy-wise)?

– transparency in how algorithms work

– user control over personalization

– resisting “dark patterns”

Known EU regulations & law

GDPR

Digital Services Act

AI Act

What is the difference between law and governance?

Law sets the rules; governance ensures those rules are operationalized inside organizations.

• Law (GDPR, EU AI Act, etc.)

– Defines rights, obligations, and prohibitions

– Identifies what is allowed and what is illegal

– Sets penalties for non-compliance

– Applies uniformly across all organizations

• Governance

– The strategies, processes, documentation, and roles organizations create to comply with the law

– Turns abstract legal duties into day-to-day practices

– Ensures accountability, oversight, and continuous improvement.

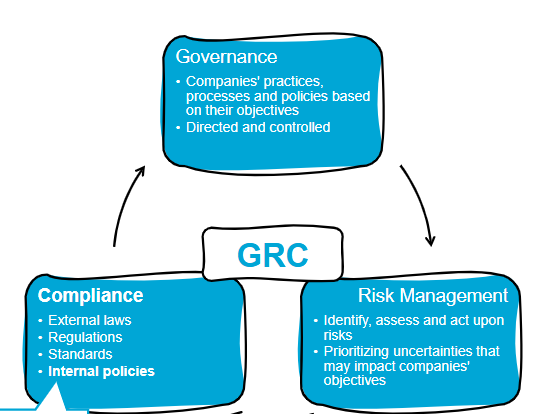

What does GRC stand for?

Governance, Risk, and Compliance.

Direct vs Indirect Identifier Examples

Direct Identifier Examples

– Name, address, phone number

– Email with a name (e.g., anna.smith@...)

– Passport, ID card, student ID, social security numbers

– Bank account or customer numbers

Indirect Identifier Examples

– IP addresses, cookie IDs, device IDs

– Job title in small organization

– Demographic data (age, ZIP code)

– Vehicle registration numbers
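Indirect identifiers become risky in combination: a record that is unique on, say, age + ZIP code + job title can be re-identified by linking it to another dataset. One common way to measure this is k-anonymity (a technique not named in these notes): a dataset is k-anonymous if every combination of indirect identifiers is shared by at least k records. A minimal Python sketch, assuming made-up records and hypothetical field names:

```python
from collections import Counter

# Hypothetical records: direct identifiers already removed,
# but indirect identifiers (age, ZIP code, job title) remain.
records = [
    {"age": 34, "zip": "2628", "job": "engineer"},
    {"age": 34, "zip": "2628", "job": "engineer"},
    {"age": 51, "zip": "2611", "job": "nurse"},
    {"age": 29, "zip": "2628", "job": "CEO"},
]

# Hypothetical choice of which fields count as indirect identifiers.
QUASI_IDENTIFIERS = ("age", "zip", "job")


def group_sizes(rows, keys):
    """Count how many rows share each combination of indirect-identifier values."""
    return Counter(tuple(row[k] for k in keys) for row in rows)


def k_anonymity(rows, keys):
    """Return k: the size of the smallest group of indistinguishable rows."""
    return min(group_sizes(rows, keys).values())


def unique_rows(rows, keys):
    """Return rows whose indirect-identifier combination appears exactly once."""
    sizes = group_sizes(rows, keys)
    return [row for row in rows if sizes[tuple(row[k] for k in keys)] == 1]


print(k_anonymity(records, QUASI_IDENTIFIERS))       # → 1
print(len(unique_rows(records, QUASI_IDENTIFIERS)))  # → 2
```

A result of k = 1 means at least one person can be singled out on those fields alone, e.g. the only CEO in a small organization, even though no name or email is present.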

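What do we mean by sensitive data?

Special categories of personal data (GDPR Art. 9) whose misuse could cause serious harm, such as discrimination:

race, ethnicity, religious / political beliefs

trade union membership, sexual orientation, health, genetic and biometric data, etc.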

List the GDPR Key Principles

• Lawfulness, Fairness, Transparency

• Purpose limitation: Data may only be collected for specific, explicit purposes and used only in ways compatible with those purposes.

• Data minimization: Collect and process only the personal data that is strictly necessary for the intended purpose.

• Accuracy: keep data correct and up to date

• Storage limitation: keep data only as long as necessary for the purpose

• Integrity and confidentiality: keep data protected and secure

• Accountability: Organizations are responsible for complying with GDPR and must be able to demonstrate this compliance at any time.
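Two of these principles translate fairly directly into code: data minimization (each purpose only sees the fields it needs) and storage limitation (data is deleted once its retention period is over). A minimal Python sketch; the purposes, field allowlist, and 365-day retention period are all hypothetical, since real values depend on the purpose and legal basis:

```python
from datetime import datetime, timedelta, timezone

# Hypothetical purpose-to-fields allowlist: each processing purpose may only
# see the fields it strictly needs (data minimization).
ALLOWED_FIELDS = {
    "shipping": {"name", "address"},
    "newsletter": {"email"},
}

# Hypothetical retention period (storage limitation).
RETENTION = timedelta(days=365)


def minimize(record: dict, purpose: str) -> dict:
    """Keep only the fields allowed for the given purpose."""
    allowed = ALLOWED_FIELDS[purpose]  # KeyError for undeclared purposes (purpose limitation)
    return {k: v for k, v in record.items() if k in allowed}


def purge_expired(records: list[dict], now: datetime) -> list[dict]:
    """Drop records whose 'collected_at' timestamp is older than RETENTION."""
    return [r for r in records if now - r["collected_at"] <= RETENTION]


customer = {
    "name": "Anna Smith",
    "address": "Main St 1",
    "email": "anna.smith@example.com",
    "birthdate": "1990-01-01",
    "collected_at": datetime(2024, 1, 1, tzinfo=timezone.utc),
}

print(minimize(customer, "newsletter"))  # → {'email': 'anna.smith@example.com'}
now = datetime(2025, 6, 1, tzinfo=timezone.utc)
print(purge_expired([customer], now))    # → []
```

Raising an error for an undeclared purpose is a deliberate design choice here: it forces every new use of the data to be declared explicitly rather than silently receiving everything.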

List the Data Subject Rights (the rights of the people whose data is collected)

You have the right:

to be informed

to access

to rectification

to erasure (to be forgotten)

to restrict processing

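to data portability (to receive your data in a structured, commonly used, machine-readable format and move it to another service)

to object

not to be subject to decisions based solely on automated processing, including profiling (e.g. automated rejection in a hiring process)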
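For software engineers, the rights of access, portability, and erasure usually become concrete endpoints or jobs. A minimal Python sketch, assuming a hypothetical in-memory store keyed by user id; a real system would also have to cover backups, logs, and data held by processors, and erasure has legal exceptions:

```python
import json

# Hypothetical in-memory store: user id -> list of records about that user.
store = {
    "u1": [{"type": "profile", "name": "Anna Smith"},
           {"type": "order", "item": "book", "price_eur": 12.5}],
    "u2": [{"type": "profile", "name": "Ben Jansen"}],
}


def export_user_data(user_id: str) -> str:
    """Right of access / data portability: return the user's data as JSON,
    a structured, commonly used, machine-readable format."""
    return json.dumps({"user_id": user_id, "records": store.get(user_id, [])}, indent=2)


def erase_user_data(user_id: str) -> int:
    """Right to erasure: delete everything stored about the user.
    Returns the number of records removed."""
    return len(store.pop(user_id, []))


exported = json.loads(export_user_data("u1"))
print(len(exported["records"]))  # → 2
print(erase_user_data("u1"))     # → 2
print("u1" in store)             # → False
```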

What is DPIA?

A Data Protection Impact Assessment (DPIA) is a systematic process designed to help organizations identify and minimize the privacy risks of a project or system that involves personal data. It’s a key tool under data protection laws like the EU’s General Data Protection Regulation (GDPR), ensuring that privacy is considered from the start and throughout the lifecycle of data processing activities.

Explain what the DSA does

• Sets safety, accountability, and transparency rules for online intermediaries, including social media, service platforms, and marketplaces.

• Aims to prevent illegal content, harmful online activities, and disinformation, enforcing stricter obligations on platforms based on size

• Special rules for VLOPs (Very Large Online Platforms) with 45 million or more average monthly active users in the EU

Objectives:

Safety

Transparency

User Empowerment

What does FAIR Data stand for?

Findable: Data and metadata should be easy to locate

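Accessible: Data and metadata can be retrieved through standard, open protocols, with authentication and authorization where needed

Interoperable: Data must be able to integrate with other datasets and work with other applications

Reusable: Data is well described, with a clear license and provenance, so others can reuse it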

—> FAIR ≠ Open!

What is Open Data (in the EU)?

The EU’s principal framework for open data remains the Open Data Directive (Directive (EU) 2019/1024), which governs the re-use of public-sector information.

– Enhancing openness and utility of public-sector data.

– Promoting dynamic data publication and API-based access.

– Strengthening transparency in public–private agreements.

What is Open Science?

Open Science as the EU’s research default

– Efficient, transparent, and collaborative

– FAIR (Findable, Accessible, Interoperable, Reusable)

– Inclusive of open publishing, open data, open methodologies, and cross-sector collaboration.

– Open research data policies are primarily embedded in the EU’s Open Science strategy, which is now a legal and mandatory component of EU research funding programmes, especially Horizon Europe.

What’s the link between open access & Horizon Europe?

The Horizon Europe funding program requires Mandatory Open Access of research.

– Immediate open access to all peer-reviewed publications from funded research.

– Open access to research data by default (“as open as possible, as closed as necessary”).

– Requirement to deposit datasets and publications in trusted repositories.

– Data Management Plans (DMPs) are mandatory.

==> AKA lots of rules on how research outputs must be shared and deposited

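Explain anonymization

It permanently removes the ability to identify a person based on the data.

Data cannot be re-identified, even by combining it with other information, so it is no longer personal data and the GDPR does not apply.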

Explain pseudo-anonymization

Replaces identifiers with codes, but the data can still be deliberately re-identified using additional information.

• Definition:

– Replaces identifiers with artificial identifiers (keys, hashes, tokens) but keeps the possibility of re-identification using additional information.

• Data Linkability:

– Possible through a separate key or mapping table.

• GDPR Status:

– Still considered personal data → GDPR fully applies.

Why is anonymization useful?

When you need irreversible privacy

Anonymization used for:

– Research and statistics

– Data sharing with third parties

– Long-term storage without GDPR obligations

– Open data initiatives

List the different anonymization techniques

– Data masking / redaction:

Removing names, addresses, phone numbers.

Example: A hospital removes all patient identifiers and keeps only aggregated statistics.

– Aggregation: Summarizing data (e.g., “20% of users are 18–25”).

– Generalization: Replacing precise values with broader categories (e.g., “age 43” → “40–50”).

– Noise addition / differential privacy

Adding statistical noise so individuals cannot be singled out.

– Data swapping / shuffling

Mixing attributes between records to break linkability.
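The techniques above can be sketched on a toy dataset. This Python example is illustrative only: the patient records, field names, and age buckets are hypothetical (buckets are 40-49 rather than “40–50” so that boundaries don’t overlap), and the noise step uses the Laplace mechanism, one standard way to do differential privacy for a count, swapped in here as a concrete example:

```python
import random
from collections import Counter

# Hypothetical patient records (made-up data).
patients = [
    {"name": "Anna Smith", "phone": "0612345678", "age": 43, "diagnosis": "flu"},
    {"name": "Ben Jansen", "phone": "0687654321", "age": 21, "diagnosis": "asthma"},
    {"name": "Cara Bos", "phone": "0611122233", "age": 24, "diagnosis": "flu"},
]

DIRECT_IDENTIFIERS = {"name", "phone"}


def mask(record):
    """Masking / redaction: drop direct identifiers."""
    return {k: v for k, v in record.items() if k not in DIRECT_IDENTIFIERS}


def generalize_age(age, width=10):
    """Generalization: 43 -> '40-49' (bucket of the given width)."""
    low = (age // width) * width
    return f"{low}-{low + width - 1}"


def aggregate(records):
    """Aggregation: count records per generalized age group."""
    return Counter(generalize_age(r["age"]) for r in records)


def laplace_noise(scale, rng):
    """Laplace(0, scale) sample, as the difference of two exponential samples."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def noisy_count(true_count, epsilon, rng):
    """Laplace mechanism for a counting query (sensitivity 1, scale 1/epsilon)."""
    return true_count + laplace_noise(1 / epsilon, rng)


def swap_column(records, key, rng):
    """Data swapping: shuffle one attribute across records to break linkability."""
    values = [r[key] for r in records]
    rng.shuffle(values)
    return [{**r, key: v} for r, v in zip(records, values)]


rng = random.Random(0)  # seeded only so the example is repeatable
masked = [mask(p) for p in patients]
print(masked[0])                  # → {'age': 43, 'diagnosis': 'flu'}
print(generalize_age(43))         # → 40-49
print(dict(aggregate(patients)))  # → {'40-49': 1, '20-29': 2}
print(noisy_count(2, epsilon=1.0, rng=rng))  # 2 plus random noise
print(swap_column(masked, "diagnosis", rng))
```

Masking on its own rarely makes data anonymous: the remaining age and diagnosis fields are still indirect identifiers, which is why masking is usually combined with generalization, aggregation, or noise.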

List the different pseudo-anonymization techniques

– Tokenization

Replacing identifiers with tokens (e.g., “John Doe” → “User1234”).

Example: Credit card numbers replaced with tokens in a financial dataset.

– Hashing (with salt)

Transforming identifiers into hash values. A hash cannot be reversed directly, but anyone holding the original input (and the salt) can recompute it and re-link the records.

– Encryption

Encrypting identifiers so only key-holders can decrypt.

– Lookup tables / mapping keys

A separate file maps pseudonyms back to real identities.

Example: An e-commerce platform stores customer IDs in one database and the mapping table in another.
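Tokenization with a lookup table and keyed hashing can be sketched with the Python standard library. Assumptions: the `TokenVault` class and `keyed_hash` function are hypothetical names; the mapping is a plain dict standing in for a separate, access-controlled database; and HMAC-SHA256 (a keyed hash) is swapped in for a plain salted hash, since a secret key makes it harder to re-link records by guessing low-entropy inputs such as names. Encryption is left out because the standard library has no symmetric cipher; a real system would use a vetted cryptography library.

```python
import hashlib
import hmac
import secrets


class TokenVault:
    """Tokenization with a lookup table. In practice the mapping would live
    in a separate, access-controlled store (here: plain dicts)."""

    def __init__(self):
        self._token_to_id = {}
        self._id_to_token = {}

    def tokenize(self, identifier: str) -> str:
        # Reuse the existing token so the same person keeps one pseudonym.
        if identifier not in self._id_to_token:
            token = "User" + secrets.token_hex(4)
            while token in self._token_to_id:  # avoid (unlikely) collisions
                token = "User" + secrets.token_hex(4)
            self._id_to_token[identifier] = token
            self._token_to_id[token] = identifier
        return self._id_to_token[identifier]

    def reidentify(self, token: str) -> str:
        # Only whoever holds the mapping table can reverse the pseudonym.
        return self._token_to_id[token]


def keyed_hash(identifier: str, secret_key: bytes) -> str:
    """Keyed hash (HMAC-SHA256): deterministic for a given key and not reversible,
    but anyone with the key and a candidate identifier can recompute it."""
    return hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


vault = TokenVault()
t = vault.tokenize("John Doe")
print(t, vault.tokenize("John Doe") == t)  # same token on repeat calls
print(vault.reidentify(t))                 # → John Doe

key = secrets.token_bytes(32)  # keep separate from the pseudonymized data
print(keyed_hash("John Doe", key) == keyed_hash("John Doe", key))  # → True
```

In both cases the data stays pseudonymized rather than anonymized: the mapping table or the key is exactly the “additional information” that allows re-identification, so the GDPR still applies.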