IS 531--Kettles FINAL


315 Terms

Which IT group featured in this chapter does NOT benefit from releasing new software?

A. DBAs

B. Software developers

C. Operations Staff

D. Data Center Engineers

C. Operations Staff

Which of the following is an innovation that most directly supports DevOps?

A. SCRUM

B. Lean Software Development

C. Infrastructure As Code

D. Cloud Computing

E. Retrospectives

C. Infrastructure As Code

True or False: Only AWS has a service that supports Infrastructure as Code.

FALSE

"The main cloud providers like Google, Azure, and AWS each have an IaC platform that allows you to create a script that will create an IT infrastructure in their environment. In Azure it is called Automation. In Google Cloud, it is called Deployment Manager. and in AWS, it is called CloudFormation."

The IaC platforms within any given cloud provider are unique to their environment; hence, you can't create a script for CloudFormation and have it work in Azure.

True or false: there are open-source technologies like Terraform that allow you to create infrastructure scripts that are cross-platform

TRUE

if you are building an infrastructure that requires AWS and Azure and Google Cloud and possibly others, you can learn just one IaC platform and leverage it across many different environments.

if you only plan to use one cloud provider, then that vendor's IaC platform may come out on top and is likely to have the best overall set of features for that environment. However, if you need to support more than one cloud infrastructure provider, Terraform is likely the top choice.

what are some of the benefits of IAC?

*It greatly simplifies the knowledge required to build and maintain infrastructure,

*it speeds up the process to create environments,

* it makes building environments cheaper.

*IaC reduces risks,

*makes policy compliance easier

*makes mistakes less likely.

* It also gives you freedom to experiment and make mistakes without putting your environment into an unrecoverable state.

Which of the following is required in a CloudFormation template?

A. Parameters

B. Mappings

C. Conditions

D. Outputs

E. None of the Above

E. None of the Above

The only portion that is required in a CloudFormation template is the Resources section

explain AWS CloudFormation at a high level

if you have a text document that is written with the correct syntax, it can be parsed by the CloudFormation service and the service will build your AWS infrastructure for you.

what are some Infrastructure categories that may be included in your text document in CloudFormation?

networking, compute, storage, and security.

what is a template & what is a stack in CloudFormation?

the text document that contains instructions for building infrastructure is called a template. Once the template is run, it creates an entire AWS environment that is called a stack

Templates can be written in two ways: either using _________ _____ or _______.

JSON syntax or YAML

The primary difference between the two is that YAML doesn't require curly braces and some people argue that this increases readability.

True or false: You can't buy templates that others have created and then adapt them to your needs; it's impossible.

FALSE! You can buy templates and then change them to fit your needs.

what are the 10 sections of a CloudFormation template?

1. AWS template format version

2. description

3. metadata

4. parameters

5. rules

6. mappings

7. conditions

8. transform

9. resources

10. outputs

The only portion that is required in a CloudFormation template is the Resources section

Which language can you use for creating CloudFormation templates?

A. CloudFormation template language (CTL)

B. JSON

C. Python

D. JavaScript

B. JSON

what is the JSON outline for the resources section of a CloudFormation template?

"Resources": {
  "Logical ID": {
    "Type": "resource type",
    "Properties": {
      set of properties
    }
  }
}

____________________________________________________________________

Logical ID: This is a name that is made up by you and can be referred to in other sections of the document. For example, the example EC2 instance being created is called "MyEC2Instance."

Type: This is the AWS resource that you wish to create. (AWS::EC2::Instance)

Properties: For any given resource, this is the section that can be used to add configuration options. In the case of EC2 instances, this includes the subnet, the security group, the hard drive, and the instance type.
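As a rough illustration of the outline above, here is a minimal sketch that builds a template as a Python dictionary and prints it as JSON. The Logical ID "MyEC2Instance" comes from these notes; the instance type and the AMI ID are placeholder assumptions, so this shows the shape of a template rather than one that is ready to launch.

```python
import json

# Minimal sketch of a CloudFormation template held in a Python dict.
# The AMI ID and instance type are placeholders (assumptions), not real values.
template = {
    "AWSTemplateFormatVersion": "2010-09-09",
    "Description": "Illustrative single-instance template",
    "Resources": {                               # the only required section
        "MyEC2Instance": {                       # Logical ID: a name you make up
            "Type": "AWS::EC2::Instance",        # the AWS resource to create
            "Properties": {                      # configuration options
                "InstanceType": "t3.micro",
                "ImageId": "ami-PLACEHOLDER",
            },
        }
    },
}

# Serialize to the JSON text that would be saved as the template file.
template_json = json.dumps(template, indent=2)
print(template_json)
```

Writing the template as data first makes it easy to see that Logical ID, Type, and Properties nest inside Resources.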

stack

the name of the live environment full of AWS resources that gets created when you run a template

A stack is a live, running environment comprised of AWS resources. In order to create a stack, you need a template, which is a file written in code that can create a stack. You could create unlimited stacks from a single template. If you had a perfect template for creating an "accounting VPC", complete with EC2 instances, etc., you could create unlimited copies (aka stacks) of that environment with a properly written template file.

create stack options

template is ready (compose a document on your local computer from scratch), use a sample template, create template in designer (CloudFormation Designer)

!Ref in the IAC template code?

how one resource can reference the name (Logical ID) of another.
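In a JSON template the YAML shorthand `!Ref X` is written as `{"Ref": "X"}`. The sketch below walks a Resources section, collects every such reference, and flags any that point at a Logical ID that does not exist. The helper name `find_refs`, the example resources, and the use of the `SecurityGroupIds` property are assumptions made for illustration.

```python
def find_refs(node):
    """Recursively collect every Logical ID named by a {"Ref": ...} entry."""
    refs = set()
    if isinstance(node, dict):
        for key, value in node.items():
            if key == "Ref" and isinstance(value, str):
                refs.add(value)
            else:
                refs |= find_refs(value)
    elif isinstance(node, list):
        for item in node:
            refs |= find_refs(item)
    return refs


# Hypothetical Resources section: the instance refers to the security group.
resources = {
    "WebSecurityGroup": {
        "Type": "AWS::EC2::SecurityGroup",
        "Properties": {"GroupDescription": "Allow web traffic"},
    },
    "MyEC2Instance": {
        "Type": "AWS::EC2::Instance",
        "Properties": {"SecurityGroupIds": [{"Ref": "WebSecurityGroup"}]},
    },
}

refs = find_refs(resources)
dangling = refs - set(resources)  # references with no matching Logical ID
print("references:", sorted(refs))
print("dangling:", sorted(dangling))
```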

"There is a command "!Ref WebSecurityGroup" on this line which assigns a security group with the specified name to the EC2 instance."

one reason that developers love IAC so much?

IaC helps you analyze and manage your security and control your settings by looking at a small number of individual templates, rather than sifting through thousands of services that were configured by hand.

CloudFormation Designer

a visual way of designing a CloudFormation template. At its simplest, using the CloudFormation Designer involves dragging and dropping names from the left onto the canvas on the right side of the page. The names on the left represent AWS resources. Once the resources are on the canvas, you can connect them together by dragging arrows among them.

How did I know what to type in the VPC resource object?

In the AWS CloudFormation user guide, locate AWS::EC2::VPC and click on the link. On this page, you will find documentation for the VPC JSON object, as well as examples (far down the page) of how you can fill out the object.

In the user guide for CloudFormation, locate AWS::EC2::SecurityGroup. There are six possible properties. Is GroupDescription a required property? Yes or No.

A. Yes

B. No

A. Yes

in the lab, were there any resources that did not have icons that we had to add manually in the resources area of the IAC? (using the designer)

yes! VPCGatewayAttachment & SubnetARouteTableAssociation

"Examine the diagram on the canvas. Note that lines have been drawn that show the relationships among different resources. If you had resources that weren't connected to anything else, that might indicate a problem."

IAC code diagram

how can you update your IAC?

One of the cool things about IaC like CloudFormation is you can update your stack by updating the template. For example, if you wanted to change your security group policy to add or remove something, you could run your updated template, and CloudFormation is smart enough to see what has changed, and it will only update the Security Group. It won't take down or break or restart other parts of the infrastructure.
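To make the idea of "only what changed gets updated" concrete, here is a simplified sketch that compares the Resources sections of two template versions. It is an illustration only and not how CloudFormation itself computes changes; the function name `changed_resources` and both example templates are hypothetical.

```python
import copy


def changed_resources(old_template, new_template):
    """Compare two templates and report which Logical IDs differ."""
    old = old_template.get("Resources", {})
    new = new_template.get("Resources", {})
    return {
        "added": sorted(set(new) - set(old)),
        "removed": sorted(set(old) - set(new)),
        "modified": sorted(k for k in set(old) & set(new) if old[k] != new[k]),
    }


# Version 1: a security group that allows port 80, plus an instance.
v1 = {
    "Resources": {
        "WebSecurityGroup": {
            "Type": "AWS::EC2::SecurityGroup",
            "Properties": {
                "GroupDescription": "Allow web traffic",
                "SecurityGroupIngress": [
                    {"IpProtocol": "tcp", "FromPort": 80, "ToPort": 80,
                     "CidrIp": "0.0.0.0/0"},
                ],
            },
        },
        "MyEC2Instance": {
            "Type": "AWS::EC2::Instance",
            "Properties": {"InstanceType": "t3.micro"},
        },
    }
}

# Version 2: same template, with port 443 added to the security group.
v2 = copy.deepcopy(v1)
v2["Resources"]["WebSecurityGroup"]["Properties"]["SecurityGroupIngress"].append(
    {"IpProtocol": "tcp", "FromPort": 443, "ToPort": 443, "CidrIp": "0.0.0.0/0"}
)

changes = changed_resources(v1, v2)
print(changes)
```

Only the security group shows up as modified, which mirrors the point of the card: the instance is left alone.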

can you install a web server onto your EC2 through IAC?

YES!

"Right now, if you want to configure your EC2 instance that was created with your stack, you would have to SSH in with your server key. Then you could do things like install a web server, a programming language, upload your code for your application, etc. You can actually script all of that ahead of time and not have to do that manually ever again (i.e. you can avoid the need to ever SSH into your server to configure it). Anything you want to do at the command line once a server has started up can be put in a text file called UserData."

Anything you want to do at the command line once a server has started up can be put in a text file called ________

UserData

this approach of using UserData is an alternative to using a golden image.
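A minimal sketch of how a startup script might be attached to an instance in a template: the `UserData` property is wrapped in the `Fn::Base64` intrinsic function. The shell commands assume an Amazon Linux style image where `yum` and `systemctl` are available, and the AMI ID is a placeholder; none of these specifics come from the notes.

```python
import json

# Commands to run once when the server first boots.
# Assumption: an Amazon Linux style image with yum and systemctl.
user_data_script = "\n".join([
    "#!/bin/bash",
    "yum update -y",
    "yum install -y httpd",
    "systemctl enable --now httpd",
])

# Hypothetical instance resource; the AMI ID is a placeholder.
web_server = {
    "Type": "AWS::EC2::Instance",
    "Properties": {
        "InstanceType": "t3.micro",
        "ImageId": "ami-PLACEHOLDER",
        "UserData": {"Fn::Base64": user_data_script},
    },
}

user_data_template = {"Resources": {"WebServer": web_server}}
print(json.dumps(user_data_template, indent=2))
```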

Which is not one of the steps in a code pipeline?

A. Planning

B. Coding

C. Building

D. Configuration

E. Testing

F. Deployment

D. Configuration

This term means that software is deployed to production as soon as it passes automated tests:

A. Continuous Delivery

B. Constant Delivery

C. Continuous Integration

D. Continuous Deployment

D. Continuous Deployment

A synonym for code pipeline is:

A. Continuous Integration/Continuous Delivery Pipeline

B. Continuous Compilation/Continuous Delivery Pipeline

C. Continuous Integration/Testing Pipeline

D. Continuous Integration Pipeline

E. Continuous Deployment Pipeline

F. DevOps Pipeline

A. Continuous Integration/Continuous Delivery Pipeline

With blue/green deployment, changes to an environment are rolled out in increments until an entire fleet of servers is updated.

True

False

false

Which type of deployment involves releasing software in waves, with more and more instances getting the new software in each wave?

A. Canary Testing

B. A/B Testing

C. Rolling Deployment

D. Blue/Green Deployment

E. Big Bang Deployment

C. Rolling Deployment

What are the two major issues that plague companies when it comes to deploying software code?

1. downtime

2. excessive delays.

For #2: Part of the reason for the long lead times in rolling out software is that manual procedures are continually added to the rollout process. Every time a problem occurs that takes down a production environment, a company will add procedures to prevent that problem from ever happening again. Some preventative measures include introducing code reviews, manager check offs, and limiting access to production for everyone but a designated few (such as a deployment engineer).

Ironically, taking more time to put together a software release makes it more likely to fail in production.

When rollouts are infrequent, code merging becomes infrequent, and the overall application becomes forked into incompatible versions that are more difficult to reconcile with each passing day.

what does the devops movement push?

The DevOps movement has advanced the idea that frequent code merging (integrating) and frequent, small releases are critical to solving the issues related to code deployments that were identified in the previous section. DevOps also advocates the use of automation wherever possible in the deployment process.

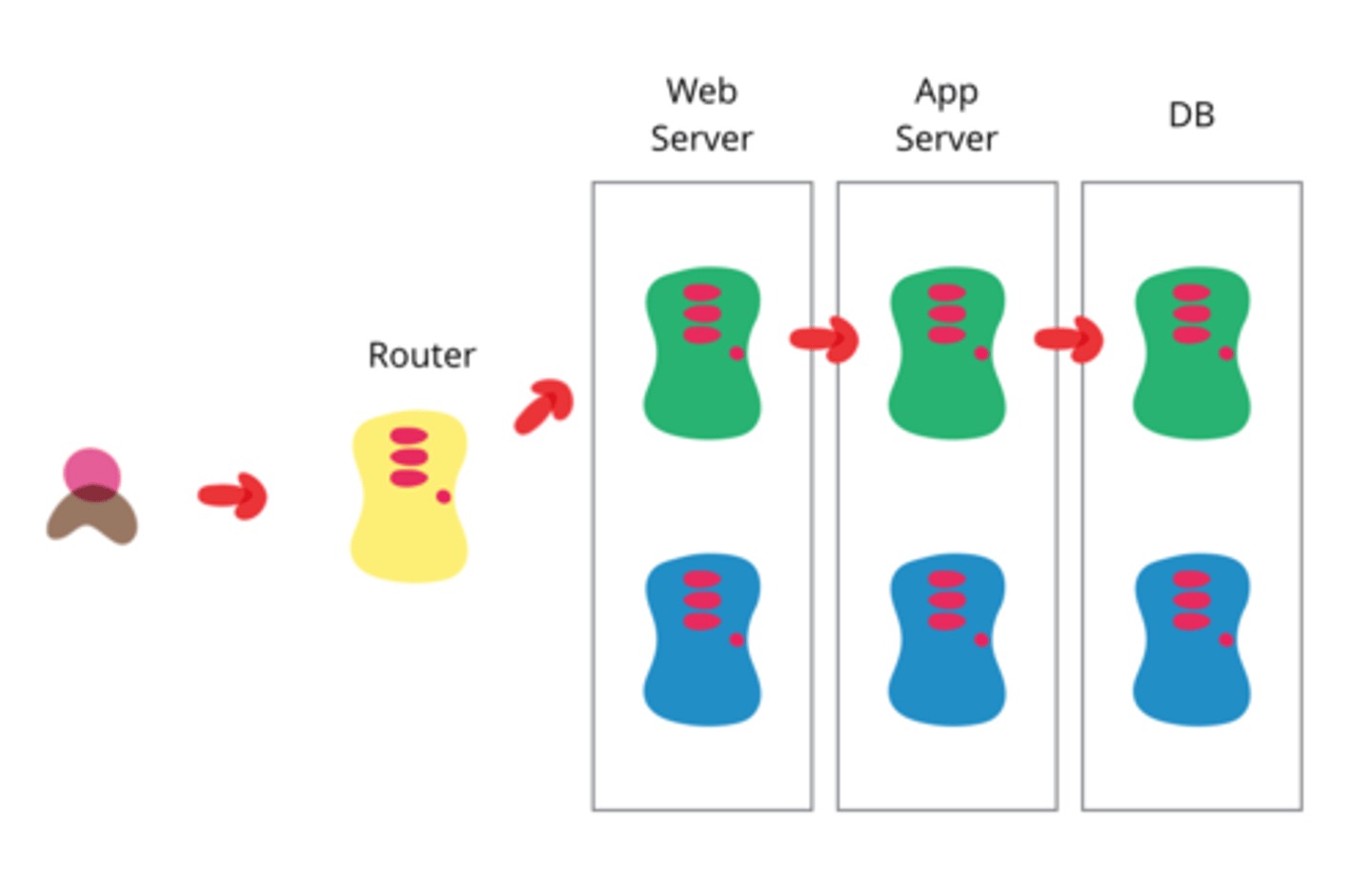

devops system

planning--> coding--> building (continuous integration with coding) -->testing--> deployment

planning

planning, is where organizational requirements are turned into feature requests or product/sprint backlogs. In short, it is the list of features that an organization wants in its next software release.

continuous integration

Continuous integration is the activity of constantly merging source code from the code repository with code that developers are actively working on.

For example, imagine that a developer is working on a new feature in one part of an application and takes a week to complete their task. Before submitting his changes to the source code repository, he pulls down the code that has been added to the code repository over the course of the week, merges (integrates it) with his new code, runs another set of tests to make sure nothing is broken, and if everything passes, then he submits his changes to the code repository.

testing

Testing refers to running testing suites that look for errors in code that has been checked into a code repository. In the context of a code pipeline, it may include deploying the entire application into a testing or staging environment that mimics the production environment and running tests there.

deployment

Deployment refers to moving the tested version of a software release into production. There are many alternatives involved with deployment such as releasing to every production server at once or gradually deploying onto production servers one at a time to make sure nothing goes wrong.

code pipeline & manufacturing

Just as software companies often fall into the trap of stockpiling lots of features before attempting a software release, manufacturers used to do something similar. Manufacturers used to stockpile lots of parts and move them in batches between assembly stations. Over time, manufacturers came to pursue "one piece flow," meaning that rather than completing large batches of parts and moving them between stations occasionally, the goal was to get a single completed piece on to the next station immediately so that it could become part of a sellable product as fast as possible.

This new goal in manufacturing to get a sellable manufactured good out the door as quickly as possible is THE inspiration for code pipelines.

A code pipeline is a _______________for software features that can put them into production one at a time as fast as they are completed.

The ideal use of code pipelines is that if a single feature is completed in an application, such as updating a block of text on a website, as soon as the developer checks in that change, it makes its way through every step of the code pipeline as fast as possible, and it is deployed to production. A code pipeline is a conveyer belt for software features that can put them into production one at a time as fast as they are completed.

Two terms associated with code pipelines that relate to the idea of quickly releasing new code to production are _______________ and _________________.

continuous deployment & continuous delivery

Continuous deployment

is the concept that as soon as a feature is completed (correctly and without any bugs found in it), it is released to production. It also means that as soon as a developer checks completed code into the code repository, it is automatically deployed to production.

Continuous delivery

is an alternative to continuous deployment that allows for a manual step to take place before software is released to production.

a software version has been tested and is ready to be released to production, but needs authorization before it is released. Under continuous delivery, software code is constantly being delivered in a state ready for deployment, but it awaits final approval and the press of a button before it actually goes into production.
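The difference between the two terms comes down to one manual gate, which the sketch below tries to capture. The function name `should_release` and the mode strings are hypothetical labels chosen for this illustration.

```python
def should_release(tests_passed, mode, approved=False):
    """Decide whether a tested build goes to production.

    mode is "continuous_deployment" (no manual step) or
    "continuous_delivery" (waits for a human approval).
    """
    if not tests_passed:
        return False          # a failing build is never released
    if mode == "continuous_deployment":
        return True           # released automatically once tests pass
    if mode == "continuous_delivery":
        return approved       # ready to go, but waits for the button press
    raise ValueError(f"unknown mode: {mode}")


print(should_release(True, "continuous_deployment"))
print(should_release(True, "continuous_delivery"))
print(should_release(True, "continuous_delivery", approved=True))
```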

A working code pipeline is achieved with two types of software:

1. The first type of software refers to products that perform distinct steps (or stages) in a code pipeline (such as build code from source or test software),

2. the other type of software monitors the execution of each step (or stage) and moves the code to the next step in the pipeline.
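The two kinds of software described above can be sketched as plain functions: each stage does one job, and a small orchestrator watches the result and hands the artifact to the next stage. Every name here (`run_pipeline`, `build`, `run_tests`, `deploy`) is hypothetical; real pipelines use services such as those covered later in these notes.

```python
def run_pipeline(stages, artifact):
    """Run each (name, step) in order and stop at the first failure."""
    completed = []
    for name, step in stages:
        ok, artifact = step(artifact)
        if not ok:
            return {"status": "failed", "failed_stage": name, "completed": completed}
        completed.append(name)
    return {"status": "succeeded", "failed_stage": None, "completed": completed}


# Stage software: each function performs one distinct step.
def build(source):
    return True, source + " (built)"


def run_tests(package):
    # Pretend the test suite fails whenever the code contains "bug".
    return "bug" not in package, package


def deploy(package):
    return True, package + " (deployed)"


stages = [("build", build), ("test", run_tests), ("deploy", deploy)]

good_run = run_pipeline(stages, "feature-a")
bad_run = run_pipeline(stages, "feature-with-bug")
print(good_run)
print(bad_run)
```

The failing run never reaches the deploy stage, which is the safety property a pipeline is meant to provide.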

The starting point for a code pipeline is a

code repository.

build software

Once source code has been submitted to a code repository, if it is in a compiled language such as Java, it needs to be built into a deployable package. Software that performs this task is referred to as build software.

blue/green deployment

Under this approach, there are two versions of the software running simultaneously, but only one environment at a time is viewable by customers.

While the new fleet of servers is in standby mode, it is called a green environment and the previous live environment is called a blue environment. As soon as a change is made at the load balancer (similar to flipping a light switch), 100 percent of the traffic gets directed to the new fleet of servers. The old fleet of servers becomes the green (standby) environment, and the new fleet of servers becomes the blue (live) environment. At this point, if there is a problem, the same "light switch" approach can be used to switch all of the traffic back to version 1. If version 2 is functioning correctly, then the old environment can be terminated.
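The "light switch" at the load balancer can be sketched as a single swap. This follows the naming used in these notes (blue is live, green is standby); the `LoadBalancer` class and the fleet names are hypothetical.

```python
class LoadBalancer:
    """Toy load balancer that sends all traffic to one fleet at a time."""

    def __init__(self, live_fleet, standby_fleet):
        self.blue = live_fleet       # blue = live, per the notes above
        self.green = standby_fleet   # green = standby

    def switch(self):
        """Flip 100 percent of traffic to the standby fleet."""
        self.blue, self.green = self.green, self.blue

    def route(self, request):
        return f"{request} -> {self.blue}"


lb = LoadBalancer(live_fleet="fleet-v1", standby_fleet="fleet-v2")
print(lb.route("GET /"))   # served by version 1
lb.switch()                # release: version 2 becomes live
print(lb.route("GET /"))   # served by version 2
lb.switch()                # rollback: the same switch in reverse
print(lb.route("GET /"))   # served by version 1 again
```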

canary testing

canary testing in software deployments releases a new version of software to a small portion of customers to test for possible problems. If no problems are detected, then the new version of the software is released to all customers.
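One way a "small portion of customers" could be chosen is by hashing a customer ID into one of 100 buckets, so the same customer always lands on the same version. This is only a sketch of the idea; the function name `serves_canary` and the customer ID format are assumptions, and real traffic splitting is usually done by a load balancer or deployment service.

```python
import hashlib


def serves_canary(customer_id, canary_percent):
    """Return True if this customer should get the new version."""
    digest = hashlib.sha256(customer_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100   # stable bucket from 0 to 99
    return bucket < canary_percent


customers = [f"customer-{n}" for n in range(1000)]
canary_group = [c for c in customers if serves_canary(c, 5)]
print(f"{len(canary_group)} of {len(customers)} customers see the new version")
```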

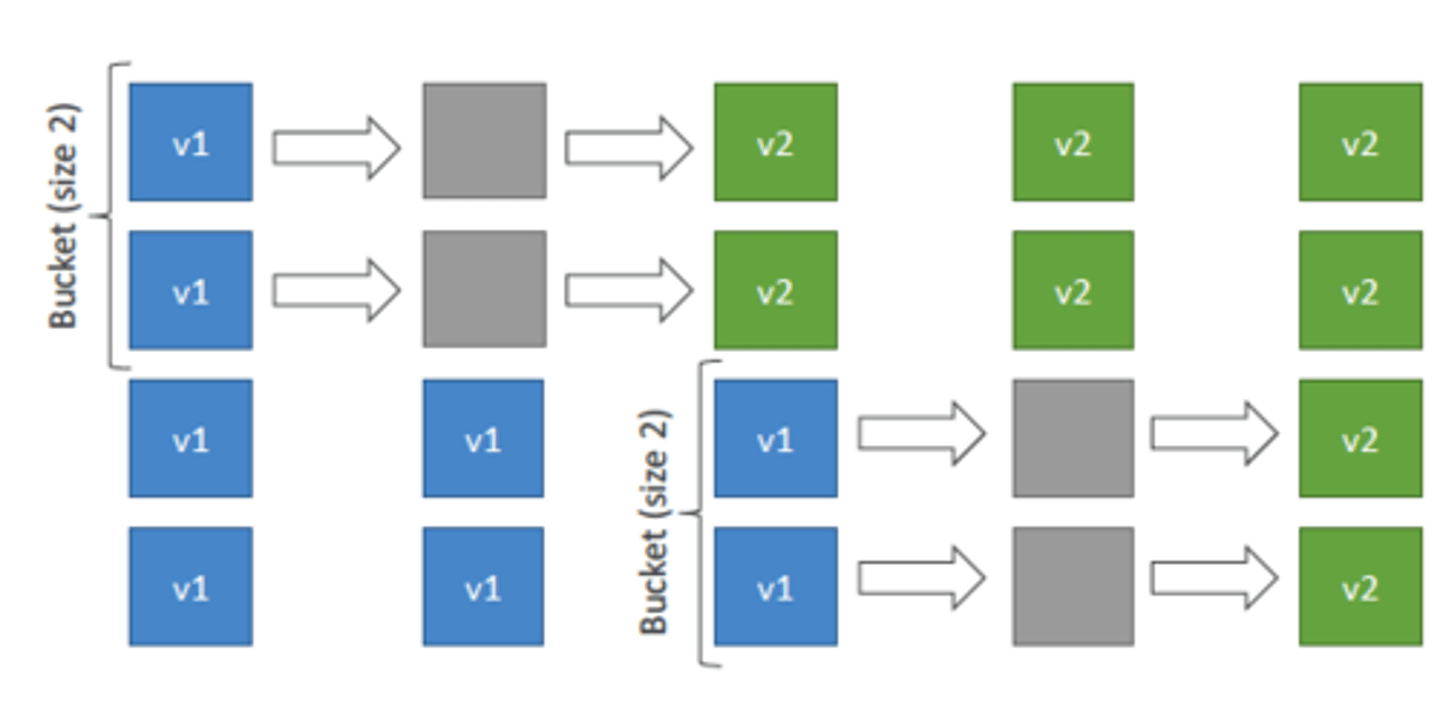

rolling deployment

In this type of deployment, a percentage of the production environment is updated with a new version and then a waiting period elapses. If no problems are detected, another wave of instances is updated with the new software and a waiting period begins again. This cycle is repeated until all servers in production are updated with the new version of software.
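The wave-by-wave cycle described above might look like the following sketch. The function name `rolling_deploy`, the server names, and the health check are hypothetical, and the waiting period is reduced to a single health check per wave.

```python
def rolling_deploy(servers, new_version, wave_size, is_healthy):
    """Update servers in waves; stop as soon as a wave looks unhealthy.

    servers maps server name -> current version. Returns the resulting
    mapping and whether the rollout finished.
    """
    versions = dict(servers)
    names = list(versions)
    for start in range(0, len(names), wave_size):
        wave = names[start:start + wave_size]
        for name in wave:
            versions[name] = new_version
        # Stand-in for the waiting period: check the wave before continuing.
        if not all(is_healthy(name) for name in wave):
            return versions, False
    return versions, True


fleet = {f"web-{n}": "v1" for n in range(1, 6)}

result, finished = rolling_deploy(fleet, "v2", wave_size=2,
                                  is_healthy=lambda name: True)
print(result, finished)

# Same rollout, but web-3 fails its health check in the second wave.
partial, partial_finished = rolling_deploy(fleet, "v2", wave_size=2,
                                           is_healthy=lambda name: name != "web-3")
print(partial, partial_finished)
```

In the second run the last server is never touched, so a problem is contained to the waves already released.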

aws codecommit

a fully-managed source control service that makes it easy for companies to host secure and highly scalable private Git repositories.

AWS CodeCommit is the name of a git-compatible code repository service. The AWS CodePipeline service is actively monitoring the AWS CodeCommit code repository for any changes. As soon as a source code change is detected, AWS CodePipeline retrieves the code and delivers the new code to AWS CodeDeploy. AWS CodeDeploy is a highly configurable service that can take software code and deploy it to EC2 instances.

Remember that AWS CodePipeline is like the _____ that ties other developer tools services together.

glue

creating a new pipeline

1-"Create pipeline," you will see a five-step wizard that begins with Figure 11. In the first step, you give your pipeline a name. You also create a "role" that will give CodePipeline temporary security permissions to interact with the other related AWS services (like CodeDeploy) whenever it runs.

2- In Step 2 of the create pipeline wizard, it asks you to specify where code comes from that will be used in the pipeline. Note that in the dropdown shown here that CodePipeline can retrieve code from six different code repositories, and several of those code repositories are hosted outside of AWS. The implication here is that if you want to, you can use AWS CodeCommit as your code source, but the code can also be somewhere outside of the AWS ecosystem, so there is flexibility.

3- Step 3 is to select a build provider (Figure 14). Note that the two options available are to use the integrated AWS CodeBuild service or Jenkins. You are allowed to click the white button that says, "Skip build stage," which is what I will do when building a two-stage pipeline,

4-The final step in the AWS CodePipeline wizard asks you to select a deploy provider. Figure 17 shows 11 different providers that can take code from your pipeline and deploy it into an environment that they manage. AWS CodeDeploy can be used to publish your code to fleets of EC2 instances, while some of the other options listed allow you to publish your code to other kinds of deployment environments like S3 buckets or docker containers (which you will learn about in future chapters as alternatives to using EC2 instances).

AWS CodePipeline listens to the changes in any given stage and when necessary will move code from one stage to another.

CodePipeline moves assets between stages

codepipeline developer tools (menu)

Source-- code repos..where you get code

artifacts (library)

build-- code build

deploy-- code deploy

pipeline -- oversees everything

At the top it says "Developer Tools". A better title for this menu would be "Code Pipeline Tools." CodePipeline is the fifth item listed in the navigation menu, but each of the items preceding it (Source, Artifacts, Build, Deploy, Pipeline) are things that can be created and included in a CodePipeline.

a role is a __________ in AWS

permission

How does CodePipeline help with committing code changes to a repo?

CodePipeline will be watching for commits to the main branch of the code repository. When it notices code newly committed into CodeCommit, it will retrieve all of the application code and deliver that code to CodeDeploy, which will deploy the code to a single EC2 instance that is in place (aka already running in production)

CodePipeline Lab steps

pipeline setting five-step wizard.

1: we will give the pipeline a name,

2: we will choose a source code repository,

3: we will define a code build (compiling) service,

4: we will select a code deployment service,

5: we will review and launch our pipeline

T/F in the code pipeline lab, everything needed to be in the same region-- including the IAM

FALSE

Everything in this lab must be done in the same region (except the IAM portion), or things will not work.

difference between GitHub and CodeCommit

The difference between using GitHub and CodeCommit is that by default GitHub makes your code public to the world (not acceptable for most corporations). If you are going to pay for a private git-compatible source repository, CodeCommit is cheaper (its storage cost will be essentially nothing for this lab).

in the Code pipeline lab we created an "agent"... what is it and why?

In order to automate deployment of code in your code pipeline, we are going to install an "agent", or program on your EC2 instances that will handle the code updates on your behalf. It will both accept incoming files for code updates and perform the actual updates. The agent needs to be given limited permissions to act on your behalf within your AWS account, so let's set that up first. We create limited permissions by creating a "role" that will be assigned to your EC2 instance. We used IAM.

IAM roles in codecommit

You are going to be creating a role that allows your EC2 instance to perform authorized actions on your behalf. If you recall from your readings, roles are similar to users. Both users and roles allow you to define granular access to all of the resources in AWS. One key difference is that users rely on fixed credentials like a username and password, whereas roles get a set of credentials that expire quickly. Typically, we assign users to real people and roles to computer services (but sometimes to people too). In practice, what this means is that if you need your EC2 instance (a computer service) to talk to the CodeDeploy service (and talking to any service in AWS requires granular permissions), then you will allow the EC2 instance to be granted temporary credentials to perform that action. The credentials quickly expire and are useless afterwards. At a future point, when the EC2 instance wants to talk to CodeDeploy again, it gets another set of temporary credentials.

difference between IAM role and user

Both users and roles allow you to define granular access to all of the resources in AWS. One key difference is that users rely on fixed credentials like a username and password, whereas roles get a set of credentials that expire quickly. Typically, we assign users to real people and roles to computer services (but sometimes to people too).
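The practical effect of credentials that "expire quickly" can be sketched with a small simulation. This is not the AWS API: `TemporaryCredentials` and `assume_role` are hypothetical stand-ins, and the one-hour lifetime is an assumed value used only for the example.

```python
from datetime import datetime, timedelta, timezone


class TemporaryCredentials:
    """Toy credentials that stop working after a fixed lifetime."""

    def __init__(self, issued_at, lifetime):
        self.expires_at = issued_at + lifetime

    def is_valid(self, now):
        return now < self.expires_at


def assume_role(now, lifetime=timedelta(hours=1)):
    """Hypothetical stand-in for a service requesting role credentials."""
    return TemporaryCredentials(now, lifetime)


start = datetime(2024, 1, 1, 9, 0, tzinfo=timezone.utc)
creds = assume_role(start)

print(creds.is_valid(start + timedelta(minutes=30)))  # still usable
print(creds.is_valid(start + timedelta(hours=2)))     # expired and useless

# Later, the service simply asks for a fresh set.
fresh = assume_role(start + timedelta(hours=2))
print(fresh.is_valid(start + timedelta(hours=2, minutes=5)))
```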

why is it important to use roles?

Security note: When you need software code in your AWS environment to interact with AWS services, it is better to use the IAM system that utilizes roles and temporary credentials than to put the username and password for an AWS Administrator account right in your software code on an AWS instance.

As an example of why this is important, on production websites I've sometimes seen an application crash and raw source code (passwords and all) was displayed in a web page rather than html (shudder).

Recall that all permissions in AWS are allowed via

policy documents

in the code pipeline lab we created an EC2 instance and we added a new tag in the "keys & value" field... why?

Normally, we use Keys to label our resources as being part of some group: like production infrastructure, testing infrastructure, etc. If you don't label things, then as you spin up hundreds of resources (VPCs, databases, security groups, EC2 instances, virtual hard drives, etc.), you have no idea whether a resource is disposable or not.

In this use case, however, the tag "PDCHomePageServer" will *allow our code deployment software to attempt to deploy to any EC2 instances that have this tag associated with them.* Without this tag, finding the "right" server to deploy to among a sea of EC2 instances could be like playing "Where's Waldo".
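Tag-based targeting amounts to a filter over the fleet, as in the sketch below. The instance IDs are made up, and treating "PDCHomePageServer" as the value of a `Name` key is an assumption, since the notes do not say which field the text was entered in.

```python
def instances_with_tag(instances, key, value):
    """Return the IDs of instances carrying the given tag key and value."""
    return [i["id"] for i in instances if i.get("tags", {}).get(key) == value]


# Hypothetical fleet; IDs and tag layout are illustrative only.
fleet_instances = [
    {"id": "i-aaa111", "tags": {"Name": "PDCHomePageServer"}},
    {"id": "i-bbb222", "tags": {"Name": "TestServer"}},
    {"id": "i-ccc333", "tags": {}},
    {"id": "i-ddd444", "tags": {"Name": "PDCHomePageServer"}},
]

targets = instances_with_tag(fleet_instances, "Name", "PDCHomePageServer")
print(targets)
```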

in the code pipeline lab, when launching an EC2 instance we put NO key pair (gasp)... how will we ever interact with it?

Under Key pair (login) select "Proceed without a key pair". Neither you nor anyone else will ever be able to SSH into this machine. That is okay! All of the configuration and software updates will be handled by your 'User data' script that you will enter later on in the page and the CodeDeploy agent that you will install using that script.

when setting up the EC2 in the code pipeline lab we gave our instance permissions...

Under Advanced details at the bottom, under IAM instance profile, choose EC2InstanceRole.

Note: Now your EC2 instance will have permissions just like a real user would, even though it is just a computer.

we then put code in the User data box

what does the "user data" box do in the EC2 instance?

Can you tell what the User data box is for? *It runs commands (linux or bash) on the EC2 instance immediately after it starts.* If this were a Windows instance, you could use PowerShell commands in the User data box instead. Using this box effectively can eliminate the need for you to ever log into a machine via SSH.

Can you tell what the Linux commands do? They update the operating system with the latest patches, install a programming language, and later, grab the AWS CodeDeploy software install file from a remote URL, and lastly run the CodeDeploy install program.

AWS CodeDeploy

AWS CodeDeploy is an AWS service that manages the act of deploying software. Your target for deployment could be a single EC2 instance, thousands of EC2 instances, or other types of compute such as docker containers.

CodeDeploy is highly configurable and allows you to select how you want to deploy your software to a fleet of servers. For example, do you want to deploy to one server at a time in a fleet of servers, or all at once?

In addition to selecting how to deploy software, it also allows you to configure what the destination server should do when you copy over updated code. Most software engines running on servers (e.g. NodeJS) require a restart when new code is deployed. We'll cover restarting a software engine or web server in the next section.

CodeDeploy application

which is defined as a resource containing software that you want to deploy.

To understand applications in CodeDeploy, imagine that you have a website that you want to deploy to many different environments (test servers, production servers). The application will be a name to organize your different environments to which you will deploy your website. Later, we will combine CodeDeploy with AWS CodePipeline to automate deployment.

T/F in the lab we did NOT have to make a role for the code deploy to interact with other AWS resources?

FALSE

Create role, AWSCodeDeployRole. We needed to create a CodeDeploy service role to allow CodeDeploy to interact with other AWS resources

production deployment group

An application, like you just created, can have multiple, different deployment groups in it.

Each deployment group can have different EC2 instances as its targets. This would be handy if, for example, you had some instances that were for production, and others were for testing and staging.

In the Tag group, enter "PDCHomePageServer". (Note, it will try to deploy to any instances with this tag name associated with them.)

what is CodeDeploy Configured to do:

It is configured to allow code to be deployed to destination instances with the tag you selected and applied to them. It is configured to replace the code on the server, rather than launch and replace the server with each update.

what is CodeDeploy NOT configured to do:

We haven't told CodeDeploy where the source code is. That is actually the job of CodePipeline, to find the source and deliver it to CodeDeploy (hence, the name CodePipeline and the imagery of a pipe that carries its contents to selected destinations that in this case are software services like compiling [building], testing, or deployment).

Here is another huge thing we haven't told CodeDeploy yet: what will you do with the actual code? What folder does it get put into on the destination computer? In addition, once the code is on the destination server, what services need to be stopped and restarted (e.g. application server, language engine, web server, etc.)? Because each application environment is different (nginx, node express, Apache, etc.) you need to specify and 'code' each step of the application deployment process within the EC2 instance.

what happens Once the CodeDeploy service running in the AWS cloud is notified that new code is ready to deploy?

it will interact with the CodeDeploy agent that is installed on your selected EC2 instance(s). The agent needs some scripting code so that it can perform necessary activities including copying the new code to the right folders and stopping/starting application services.

in the lab we had to add what scripting code files to our repository so the agent could perform necessary activities including copying the new code to the right folders and stopping/starting application services?

appspec.yml

scripts/install_dependencies

scripts/start_server

scripts/stop_server

All of the "code" that is needed by the CodeDeploy agent gets packaged with your application source code (so you can keep it in your source code repository). The main CodeDeploy file is called appspec.yml and it can reference several other scripts as needed from there.

appspec.yml

You'll notice that this critical file (also used for other AWS services like Elastic Beanstalk), has a files section with a specified source file (index.html in this CodeCommit repository) and a destination folder (the folder to put the file in on the EC2 server).

If you need to put in additional files, you can do it by repeating the source section, e.g.

Note the "hooks" section of the yml file. It has intuitive sections that indicate what should happen on the server before the application is installed (the temporary files are copied to their destination directory), or what should happen when an application is stopped. There are lots of lifecycle event hooks possible, and you can find them by Googling "appspec.yml documentation" and finding your way over to the "AppSpec 'hooks' section for EC2/On-Premises deployment".
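To show how hook scripts line up with lifecycle events, here is a sketch that orders the three scripts from the lab by the event sequence of an in-place EC2 deployment. The event list reflects my understanding of the CodeDeploy documentation, and the pairing of each script with a particular event is an assumption, since the notes do not show the actual hooks section.

```python
# Lifecycle events for an in-place EC2/On-Premises deployment, in order.
# DownloadBundle and Install are carried out by the agent itself.
LIFECYCLE_ORDER = [
    "ApplicationStop",
    "DownloadBundle",
    "BeforeInstall",
    "Install",
    "AfterInstall",
    "ApplicationStart",
    "ValidateService",
]

# Assumed mapping of the lab's scripts to hooks (not confirmed by the notes).
hooks = {
    "BeforeInstall": ["scripts/install_dependencies"],
    "ApplicationStart": ["scripts/start_server"],
    "ApplicationStop": ["scripts/stop_server"],
}


def planned_script_order(hook_map):
    """List (event, script) pairs in the order the events occur."""
    return [
        (event, script)
        for event in LIFECYCLE_ORDER
        for script in hook_map.get(event, [])
    ]


script_order = planned_script_order(hooks)
for event, script in script_order:
    print(f"{event}: {script}")
```

Under these assumptions the old application is stopped first, dependencies are installed before the new files land, and the server is started last.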

T/F we had to give our pipeline a service role?

TRUE

how many roles were needed in the codepipeline lab?

Three!

a. EC2InstanceRole

b. AWSCodePipelineServiceRole

c. CodeDeployRole

Pipeline Steps

source, build, deploy

T/F Code Pipeline lets you update our code and have it automatically deploy to production.

true

How do CodeCommit, CodePipeline, and CodeDeploy interact?

Code will be checked into CodeCommit (a git repository). CodePipeline will be watching for commits to the main branch of the code repository. When it notices code newly committed into CodeCommit, it will retrieve all of the application code and deliver that code to CodeDeploy, which will deploy the code to a single EC2 instance that is in place (aka already running in production).

Unlike a previous lab (which displayed static HTML content), the EC2 instance in this lab will be running a website supported by software code. Because software code changes typically require _________________

CodeDeploy will be used to ______________ when code rollouts occur

application restarts, restart the application

what are the steps to setting up an RDS database that links to MySQL??

in AWS:

MySQL

Dev/Test Template (other options include production & free tier)

Single DB Instance

set master username/password

DB Cluster Name: concert-db

DB Instance class: Burstable db.t3.micro instance

General Purpose storage, 20 GB storage (minimum).

Concert-sponsor-vpc

---> after a database is created you can't change its VPC

Public access: Yes

New security group called "concert"

Note the URL of your RDS endpoint. You'll need this later to tell your application where to connect.

Double-check the inbound rules on the "concert" security group. Make sure port 3306 is open to your IP address.

Create a connection in MySQL Workbench
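If the MySQL Workbench connection fails, a quick way to tell a firewall problem from a password problem is to test whether port 3306 is reachable at all. A minimal sketch using only the Python standard library; the function name `can_reach` and the endpoint shown in the usage note are placeholders, not values from the lab.

```python
import socket


def can_reach(host, port=3306, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within timeout.

    This only checks that the port is open (security group, public access);
    it does not log in to MySQL or verify the username/password.
    """
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and DNS lookup failures.
        return False
```

Usage would look like `can_reach("<your RDS endpoint>")`. A result of False usually points at the security group's inbound rule or the Public access setting rather than at the credentials.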

You can "clone" your CodeCommit repository... why is that important?

When you connect to a CodeCommit repository for the first time, you typically clone its contents to your local machine. After Git creates the directory, it pulls down a copy of your CodeCommit repository into the newly created directory. After you successfully connect your local repo to your CodeCommit repository, you are now ready to start running Git commands from the local repo to create commits, branches, and tags and push to and pull from the CodeCommit repository.

you get a URL of your repo

What is Cloud9 and why did we use it in our lab?

Cloud9 is a computer workstation in the cloud, an EC2 instance, that comes preinstalled with Git and Node AND has an IDE that looks and works similar to Visual Studio Code. Furthermore, it is browser-based and accessible from anywhere in the world. It works great for development purposes.

Dr. Kettles didn't want to ask us to install Node and Git on our local computers, so instead we rented a development computer in the cloud (Cloud9).

Did we write all the code in our Cloud9 environment or copy it?

we copied it!

You can use the bash terminal window in Cloud9 to copy code from a public repo to your local computer and then push that code to your personal repo.

"git clone --mirror https://github.com/degank/concert-sponsor.git starter-files"

After running this command, you should see that the contents of the repository have been pulled down to your development machine as a new folder.

How did we get our code from Cloud9 over to CodeCommit?

We pushed all the files from the Cloud9 repo into our own CodeCommit repo.

Replace the URL (the third word) in the following command with your repo URL.

git push https://git-codecommit.us-east-2.amazonaws.com/v1/repos/concert-app-djk-2024-01-01 --all

Verify that the code is now in your CodeCommit repository. Click on your repository in the CodeCommit console and check the list of files there.

Why did we need the public IPv4 address of the workstation instance, the VPC ID of the workstation EC2 instance, and the security group of the EC2 instance running the Cloud9 workstation?

IPv4: we need the public IP address of the Cloud9 workstation so that we can open IP-address:3000 in our browser. (Whenever your workstation is idle for over 30 minutes, its IP address will change the next time you log into it.)

VPC ID:

Security Group: needed to open up the RDS instance inbound rules to allow MYSQL/Aurora type traffic from the security group for your Cloud9 environment

When running Node applications that are websites, by default they run on port ________, so we need to ________ ____ that port on your workstation firewall (security group).

3000, open up

In the CodePipeline 2 lab, we had to edit the inbound rules of our RDS instance... why and how did we do this?

The RDS database is a virtual machine instance similar to an EC2 instance, and it has its own firewall/security group.

We need to update its firewall so that it accepts connections from the Cloud9 workstation. One way we can do this is to tell its firewall to accept traffic from the security group that the workstation is currently in.

On the Edit inbound rules page, add an entry that allows MYSQL/Aurora type traffic from the *security group* for your Cloud9 environment.
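The inbound rule described above can be sketched as data. The dict below follows the shape that the EC2 API uses for a rule whose source is another security group rather than an IP range; the function name and the security group ID are placeholders, and applying the rule with boto3 is shown only as a comment (an assumption, since the lab used the console).

```python
def mysql_rule_from_group(source_group_id, port=3306):
    """Build one inbound-rule entry allowing TCP traffic on `port`
    from members of another security group (instead of from an IP range)."""
    return {
        "IpProtocol": "tcp",
        "FromPort": port,
        "ToPort": port,
        "UserIdGroupPairs": [{"GroupId": source_group_id}],
    }


# Hypothetical ID: substitute the security group of your Cloud9 environment.
cloud9_sg = "sg-0123456789abcdef0"
rule = mysql_rule_from_group(cloud9_sg)
print(rule)

# With boto3 installed and credentials configured, the rule could be applied
# roughly like this (not run here):
#   import boto3
#   boto3.client("ec2").authorize_security_group_ingress(
#       GroupId="<RDS security group ID>", IpPermissions=[rule])
```

Pointing the rule at a security group instead of an IP address means it keeps working when the workstation's public IP changes after an idle period.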

Now you just need to update the database connection code in the sample app and you should be ready to go. Back in your Cloud9 IDE, open the file "index.js". Update line 8 for the host and enter your endpoint URL for your database. Also update any password data that you need to.

Rather than running the code on your development workstation, you want it to run on one or more production servers. How do you do this?

Create a new EC2 server with the CodeDeploy agent installed. With that agent correctly installed, you will be able to push code changes directly to that server from your code repository.

*Under Auto-assign public IP, choose Enable

*Under Firewall, choose Select existing security group, and choose the one associated with your Cloud9 environment. Note: technically it would be better to create a new security group that allows in port 3000, but then you'd need to update the security group for your RDS instance to allow in this new security group too, and we'll avoid that extra work for now.

*Under Advanced details, choose EC2InstanceRole for IAM instance profile (this was created in a previous lab for this chapter).

*In the User data box put bash commands

Now that the code is ready in a code repository and the production server is ready to receive code, we need to set up the _____________ portion of the pipeline which will receive a set of changed files from a repository and then go through any steps necessary to start/stop the production server and run the new files

deploy

How does CodeDeploy know which instances to deploy to?

Environment configuration: Amazon EC2 instances

In the Tag group, enter "Production". (Note, it will try to deploy to any instances with this tag name associated with them)

That is why we did this when setting up our EC2 instance:

* Add an additional tag

* In the Key Value field, enter "Production"
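To see why the tag matters, here is a toy model of tag-group matching, assuming CodeDeploy targets every instance that carries the tag key (and, if one is given, the matching value). The fleet, instance IDs, and function name are invented for illustration and do not call AWS.

```python
def instances_in_tag_group(instances, key, value=None):
    """Return IDs of instances carrying the tag `key` (and `value`, if given)."""
    matched = []
    for inst in instances:
        tags = inst.get("tags", {})
        if key in tags and (value is None or tags[key] == value):
            matched.append(inst["id"])
    return matched


# Made-up fleet for illustration; IDs and tag values are placeholders.
fleet = [
    {"id": "i-aaa", "tags": {"Name": "concert-prod", "Production": ""}},
    {"id": "i-bbb", "tags": {"Name": "cloud9-workstation"}},
    {"id": "i-ccc", "tags": {"Production": ""}},
]

print(instances_in_tag_group(fleet, "Production"))
```

In this model the Cloud9 workstation is skipped because it has no "Production" tag, while both tagged instances would receive the deployment.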

The important feature of CodeDeploy is that it runs a bunch of scripts on your server each time an application update occurs in the code repository. The scripts have already been written for you for this lab. They are in ____________ and the scripts folder in your code repository

appspec.yml

Who is most responsible for the majority of the software found on a typical Linux machine (i.e. the GNU project software)?

A. Bill Gates

B. Steve Jobs

C. Linus Torvalds

D. Richard Stallman

D. Richard Stallman

You can configure a Windows server computer so that a desktop GUI is not installed or running

True

False

True

Which is NOT a common daily task for a systems administrator?

A. Creating and modifying scripts that run at selected intervals

B. Checking hard drive utilization

C. Adding new services/programs

D. Tracking all systems changes

E. Deploying custom applications written by developers

F. None of the above (they do all of those things)

F. None of the above (they do all of those things)

Which of the following is NOT one of the folders in the root directory of Amazon Linux?

A. bin

B. etc

C. root

D. home

E. program files

F. var

G. sbin

E. program files
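One way to check an answer like this yourself on a Linux machine is to test each name against the root directory. A small sketch using only the standard library; the helper name `missing_from_root` is made up, and the result depends on the machine it runs on.

```python
import os

# The answer choices from the question above.
CANDIDATES = ["bin", "etc", "root", "home", "program files", "var", "sbin"]


def missing_from_root(names, root="/"):
    """Return the names that are not directories directly under `root`."""
    return [n for n in names if not os.path.isdir(os.path.join(root, n))]


print(missing_from_root(CANDIDATES))
```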