Lecture 2 - Multisensory integration

sensory conflicts

-different senses might provide conflicting information about the same sensory stimulus

-this conflict needs to be resolved

mcgurk effect

-audio never changes

-video shows pronunciation of different syllables

-what we hear depends on what we see

-affects auditory perception of what is actually heard

example of mcgurk effect

-the audio is the person saying "var var" throughout

-at the beginning the video shows them pronouncing different syllables → var, dar, bar

-we track the mouth movements to help us understand speech, so we see that the syllables are pronounced differently and this overrides the audio we hear

-so the audio is the same but the visual feedback changes

-so visual system is dominant

sensory uncertainty

-occurs due to:

perceptual limits

neural noise

cognitive resources

perceptual limits (sensory uncertainty)

-senses can pick up certain information but not other information

-may not pick up enough information to make a certain decision

-only so many things we can pay attention to at any given moment

-limits what information comes through, and we may not be able to use all the information we receive

rock & victor - method (testing conflicts between vision and touch)

-asked to judge the size of object by vision and touch

-setup:

manipulate object to look small (vision)

could feel the object - felt bigger than looked

-so had to judge the size of the object but mismatch between visual and haptic feedback

rock & victor - results (testing conflicts between vision and touch)

-conflict between vision and touch tells us whether people depend more on haptic feedback or visual feedback

-found generally followed sense of vision → visual capture

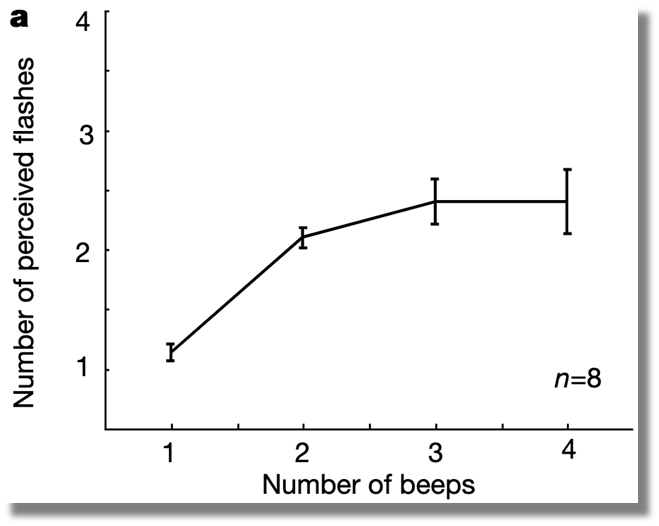

shams - method (is there a strict sensory hierarchy)

-asked to report number of visual flashes

-at the same time an auditory distractor was played

-auditory feedback does not matter for the task

→ participants do not need to integrate visual and auditory information for the task, but if they do anyway it would suggest that integration happens automatically

shams - results (is there a strict sensory hierarchy)

-found the number of auditory beeps determines reported number of visual flashes

-more closely followed auditory perception over visual perception

-on the graph the correct answer was always 1 → if a single flash is shown with more beeps, the number of flashes people report increases

modality precision hypothesis

-modality with the highest precision/lowest uncertainty is chosen depending on the task:

spatial task use vision

temporal task use audition
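
The selection rule on this card can be sketched in a few lines of Python. The variance numbers are made-up illustrative values, not data from the lecture, and `most_precise_modality` is a hypothetical helper name.

```python
def most_precise_modality(variances):
    """Return the modality with the lowest variance (highest precision)."""
    return min(variances, key=variances.get)

# Illustrative (assumed) uncertainties: vision is precise in space,
# audition is precise in time.
spatial_task = {"vision": 1.0, "audition": 9.0}
temporal_task = {"vision": 9.0, "audition": 1.0}

print("spatial task ->", most_precise_modality(spatial_task))
print("temporal task ->", most_precise_modality(temporal_task))
```

Note that this rule picks one winner and discards the other sense entirely, which is different from a weighted combination of both senses.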

ernst - method (changing precision of sensory modality)

-asked to judge height of the bar

-virtual reality set-up

-could touch bar with two fingers to get estimate (haptic feedback)

-could also see the bar (visual feedback)

-introduce discrepancy between different modal inputs by changing the visual and tactile uncertainty independently

ernst - vision method (changing precision of sensory modality)

-change height of the bar

-modify uncertainty by adding visual noise → decreasing the quality of the sensory feedback

-making virtual bar more blurry to see if they still rely on visual feedback as much

ernst - haptics method (changing precision of sensory modality)

-force feedback device

-change height of bar

-robot arm locks into place at a certain position, as if the person were bumping into an object

-the position where the robot locks in place sets the height of the bar that people perceive

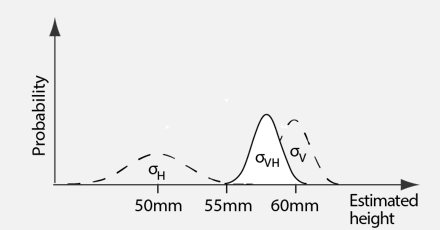

ernst - analysis (changing precision of sensory modality)

-compare against bar without sensory conflict (55mm tall)

-determine point of subjective equality (PSE)

-manipulate sensory uncertainty of visual feedback

-set up the experiment so there is a discrepancy between modalities:

haptic feedback tells participant the bar is 50mm tall

visual feedback tells participant the bar is 60mm tall

-set bar at 55mm → so if people trust haptic feedback more they will say the bar is shorter and if they trust visual feedback more will say the bar is taller
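
As a rough sketch of how a PSE could be read as a weighting between the two senses in this setup: if the percept is a weighted average of the 50mm haptic height and the 60mm visual height, the PSE sits at that average, so the visual weight is how far the PSE lies along the way from 50mm to 60mm. The function name and the example PSE values below are illustrative assumptions, not results from the experiment.

```python
def visual_weight_from_pse(pse_mm, haptic_mm=50.0, visual_mm=60.0):
    """Visual weight implied by a PSE, assuming the percept is a weighted
    average: pse = w_v * visual + (1 - w_v) * haptic."""
    return (pse_mm - haptic_mm) / (visual_mm - haptic_mm)

# Hypothetical PSE values, chosen only to illustrate the arithmetic.
for pse in (50.0, 55.0, 58.0, 60.0):
    w_v = visual_weight_from_pse(pse)
    print(f"PSE = {pse:.0f} mm -> visual weight {w_v:.1f}, haptic weight {1 - w_v:.1f}")
```

A PSE of 55mm would mean both senses are weighted equally; a PSE near 60mm would mean vision dominates.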

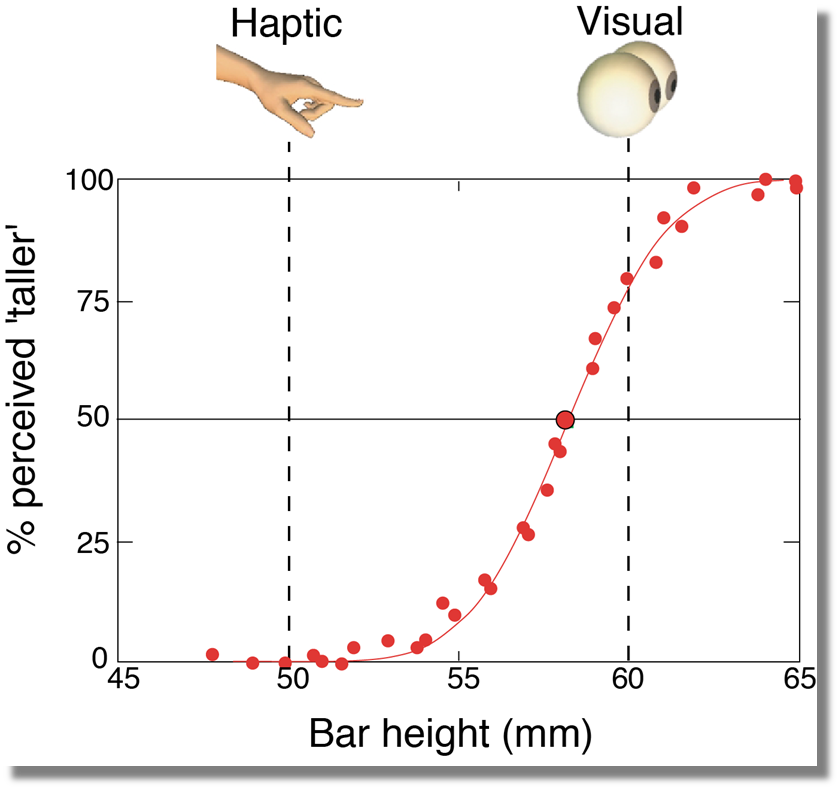

ernst - no added visual noise results (changing precision of sensory modality)

-perception of bar height biased towards visual input

-50% line is the point of subjective equality → both bars are perceived as having exactly the same height, as people are unsure which bar is taller and cannot distinguish between the heights of the two bars

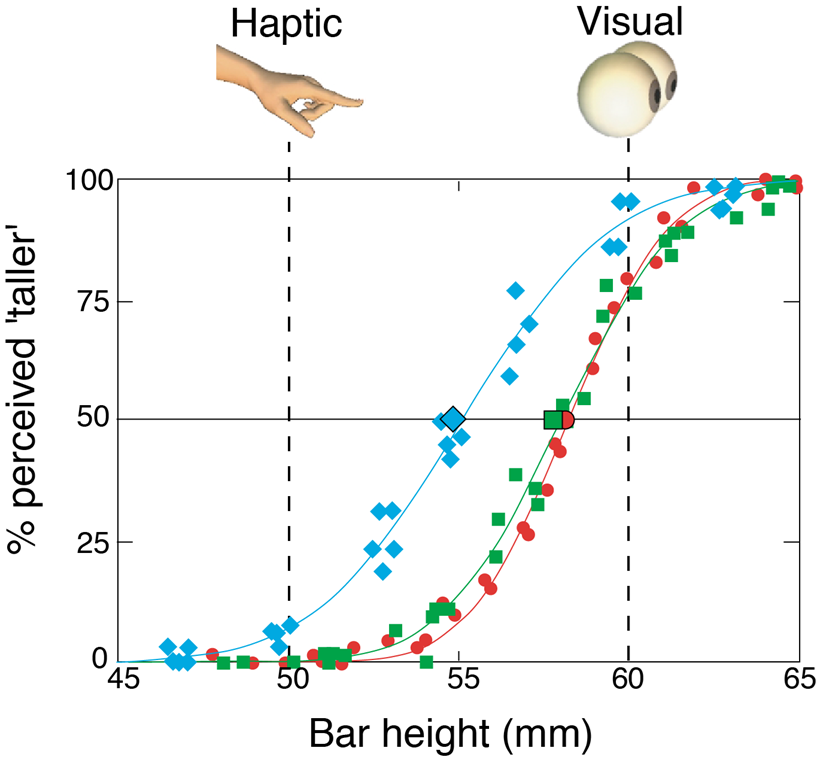

ernst - added visual noise results (changing precision of sensory modality)

-blue curve → perception of bar height now determined by both visual and haptic inputs

-curve clearly shifted to the left, point of subjective equality is almost exactly in the middle of the visual and haptic bar heights

-visual dominance appears to have disappeared simply by degrading quality of visual sensory feedback

ernst - high visual noise results (changing precision of sensory modality)

-yellow curve

-perception of bar height now determined by haptic inputs

-people mostly rely on haptic feedback and almost no reliance on visual feedback

ernst - conclusion (changing precision of sensory modality)

-suggests no strict hierarchy between different senses

-brain takes into account momentary uncertainty in sensory feedback and produces a combined estimate that is most influenced by the sense giving the most reliable information at that time

normative model

-how a problem should be solved

-the optimal solution

-based on theory

-can establish bounds → best that can be done

process model

-how a problem is actually solved

-based on data

normative model for sensory integration

-integration methods can have different levels of integrated uncertainty

-pick integration model that minimizes sensory uncertainty by combining different sensory signals in a way with smallest level of uncertainty

-two different sources of input - haptic + visual

-integrated into signal that reflects a final judgement about sensory stimulus we are experiencing

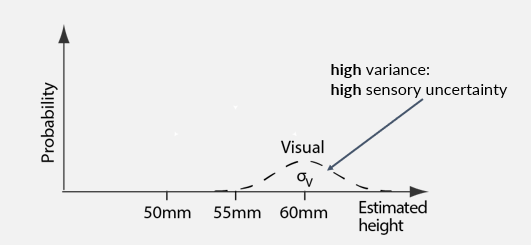

integrating probabilities - high variance (blurred stimulus)

-bar is 60mm tall

-probability distribution centred at value of 60mm → true value that sensory feedback is telling us

-distribution has some variance → reflects variance and uncertainty we have with visual feedback

-can give us best guess and can be used to quantify uncertainty we experience

distribution is relatively broad → high variance and high sensory uncertainty

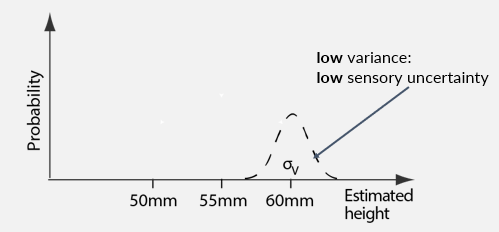

integrating probabilities - low variance

-bar is 60mm tall

-not as broad, sharper

-removing blur from visual feedback

-low sensory uncertainty and low variance

-more extreme values not as likely and can be discarded

-high confidence in what vision can tell us when stimulus is not blurred

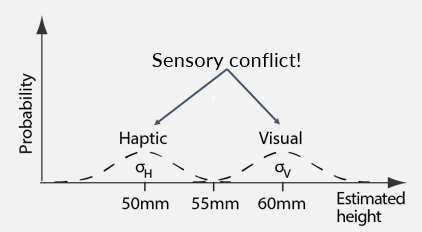

integrating probabilities - sensory conflict (blurred stimulus)

-integrating from different sensory modalities

-what is felt haptically is shown at 50mm

-what is seen visually is shown at 60mm

two different distributions - deliberately introduced mismatch in experiment

-now have their own uncertainty with each distribution

-normative model tells us that each signal should be weighted according to its uncertainty (less uncertain signal gets more weight) → helps to resolve conflict

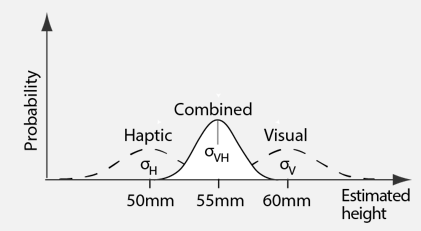

integrating probabilities - combined estimate (blurred stimulus)

-combined estimate of both visual and haptic feedback has lower uncertainty/smaller variance than either estimate alone

narrower and more peaked on graph

-normative model predicts this is how we should act

-likely why we integrate across our senses → helps reduce overall uncertainty in sensory estimations

-adding more sources of information to a signal helps improve certainty of final estimate
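
The claim that the combined estimate has a smaller variance than either estimate alone can be checked with the standard reliability-weighted (maximum-likelihood) rule for two independent Gaussian estimates. This is a minimal sketch: the 50mm and 60mm means come from the cards above, but the variances and the function name `combine` are assumptions made for illustration.

```python
def combine(mean_v, var_v, mean_h, var_h):
    """Reliability-weighted combination of a visual and a haptic estimate.

    Each weight is proportional to the inverse variance of its signal.
    Returns (combined mean, combined variance, visual weight).
    """
    w_v = (1.0 / var_v) / (1.0 / var_v + 1.0 / var_h)
    w_h = 1.0 - w_v
    mean = w_v * mean_v + w_h * mean_h
    var = (var_v * var_h) / (var_v + var_h)
    return mean, var, w_v

# Blurred stimulus: assume vision and touch are equally uncertain (variance 16 mm^2).
mean, var, w_v = combine(mean_v=60.0, var_v=16.0, mean_h=50.0, var_h=16.0)
print(f"combined mean = {mean} mm, combined variance = {var} mm^2, visual weight = {w_v}")
```

With equal variances the combined estimate lands halfway between the two signals, and its variance is half that of either signal.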

integrating probabilities - combined estimate (unblurred stimulus)

-back to sharp signal

-low associated uncertainty in visual signal

-haven’t changed anything about haptic signal which still has high variance and uncertainty

-combined estimate is biased towards the visual estimate → as this has lower uncertainty than the haptic estimate

-more reliable signal weighted more highly than the unreliable signal when combining the two → doesn’t ignore worse signal

-theory predicts would make use of all available information and should always integrate even if signals have high uncertainty → still helps narrow down sensory uncertainty
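
The bias towards the sharper signal can also be seen by multiplying the two probability distributions directly, which is what the graphs on these cards depict. The sketch below does this numerically on a grid; the standard deviations (2mm for sharp vision, 4mm for touch) and the function names are assumed values for illustration only.

```python
import math

def gaussian_pdf(x, mean, sd):
    """Density of a normal distribution at x."""
    return math.exp(-0.5 * ((x - mean) / sd) ** 2) / (sd * math.sqrt(2 * math.pi))

def combine_on_grid(mean_v, sd_v, mean_h, sd_h, lo=20.0, hi=90.0, step=0.01):
    """Multiply the visual and haptic densities pointwise, normalise,
    and return the mean and variance of the resulting distribution."""
    n = int(round((hi - lo) / step)) + 1
    xs = [lo + i * step for i in range(n)]
    product = [gaussian_pdf(x, mean_v, sd_v) * gaussian_pdf(x, mean_h, sd_h) for x in xs]
    total = sum(product)
    probs = [p / total for p in product]
    mean = sum(x * p for x, p in zip(xs, probs))
    var = sum((x - mean) ** 2 * p for x, p in zip(xs, probs))
    return mean, var

# Sharp vision (assumed sd 2 mm) at 60 mm, noisier touch (assumed sd 4 mm) at 50 mm.
grid_mean, grid_var = combine_on_grid(60.0, 2.0, 50.0, 4.0)
print(f"combined mean ~ {grid_mean:.2f} mm, combined variance ~ {grid_var:.2f} mm^2")
```

Under these assumed values the combined estimate sits much closer to 60mm than to 50mm, yet its variance is still below the visual variance of 4 mm^2, so the noisier haptic signal still contributes.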

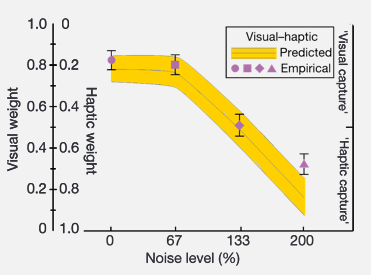

human performance vs MLE

-y axis is visual weight → if vision is trusted completely (weight 1) then haptics is not trusted at all (weight 0) and vice versa

-x axis is the noise level of the visual feedback

-pink points are empirical data points from Ernst experiment → show that with less noise trust vision more → see a steep change in trusting haptic feedback more when visual feedback is blurred

-if people were integrating information in the best way, the pink dots would follow the yellow line

-yellow line is prediction from normative model → shows human integration follows sensory rules about what is most effective

-line is not followed exactly, but the close match between theory and what is actually done suggests the maths regarding probabilities must be implemented in the brain in some form
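
A prediction curve of this kind can be sketched by computing the reliability-based visual weight for increasing levels of visual noise. The noise levels and the haptic standard deviation below are assumed numbers chosen for illustration; they are not the values used in the Ernst experiment.

```python
def predicted_visual_weight(sd_visual, sd_haptic):
    """Visual weight predicted when each sense is weighted by its
    reliability (1 / variance)."""
    r_v = 1.0 / sd_visual ** 2
    r_h = 1.0 / sd_haptic ** 2
    return r_v / (r_v + r_h)

sd_haptic = 4.0                         # assumed, held constant
visual_noise_sds = [1.0, 2.0, 4.0, 8.0]  # assumed, increasing visual noise
weights = [predicted_visual_weight(sd, sd_haptic) for sd in visual_noise_sds]

for sd, w in zip(visual_noise_sds, weights):
    print(f"visual sd = {sd:.0f} mm -> predicted visual weight = {w:.2f}")
```

The predicted weight falls from near 1 towards 0 as visual noise grows, and crosses 0.5 where the two senses are equally noisy.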

do we always integrate information optimally?

need to know uncertainty for optimal integration

-can be hard to estimate

-easier in sensory perception than cognitive reasoning

calculations can be intractable or take a long time

-heuristics are suboptimal but fast

-good enough solutions often satisfactory

are probabilities encoded in the brain?

-uncertainty needs to be represented in some way

-little direct electrophysiological evidence, but several plausible schemes proposed

mean and variance (probabilities encoded in the brain)

-mean to keep track of best guess

-variance to figure out what integration should be

full distribution (probabilities encoded in the brain)

-keep track of full distribution

-different neurons that take care of different parts of distribution

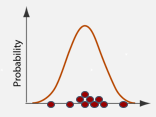

samples (probabilities encoded in the brain)

-take random values from distribution

-this estimates probability distribution

-middle between mean/variance and full distribution
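
The idea that random samples can stand in for a probability distribution can be illustrated by drawing values from a Gaussian and recovering its mean and variance from the samples alone. This is only a statistical sketch, not a model of neurons; the 60mm mean, the 4mm standard deviation, and the function name are assumptions.

```python
import random
import statistics

def estimate_from_samples(mean, sd, n_samples, seed=0):
    """Draw n_samples from a normal distribution and return the
    sample mean and sample variance."""
    rng = random.Random(seed)
    samples = [rng.gauss(mean, sd) for _ in range(n_samples)]
    return statistics.fmean(samples), statistics.variance(samples)

# Assumed distribution: best guess 60 mm with sd 4 mm (variance 16 mm^2).
for n in (10, 100, 10000):
    est_mean, est_var = estimate_from_samples(60.0, 4.0, n)
    print(f"{n:>5} samples -> mean ~ {est_mean:.2f}, variance ~ {est_var:.2f}")
```

A handful of samples gives only a rough picture of the distribution, while more samples approach the full distribution, which is why this scheme sits between the other two.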

correspondence problem

-assume always want to integrate across senses but may not always be the case

-mismatch between what vision and audition tell us

-e.g. would integrate between vision and audition to decide where the dog is located

-could be that both senses are correct and there are two dogs - one dog can see and one dog can hear → dog you see is silent and dog you hear is hidden → so have two dogs and don’t want to integrate across different senses

-seeing a dog and hearing a meow → should integrate across different senses or is this two distinct objects?