PSYCH 85 Section 4 (lectures 9-11)


cognitive architecture

a theoretical framework and computational blueprint for modeling the human mind's structure and processes—such as memory, perception, and reasoning—to create artificial intelligence that acts autonomously, learns, and makes decisions, rather than just recognizing patterns

symbolic models

computational frameworks that represent human thought as the manipulation of explicit symbols, rules, and logic. They operate on the principle that cognition is analogous to computer processing (physical symbol systems), focusing on high-level reasoning, language, and problem-solving. These models are interpretable, often using if-then rules.

cognitive architecture (class definition)

unified formalizations of the mind as a whole that specify:

the format of mental representations

how those representations make up a larger system of representations

the nature of the algorithms that operate on those representations

mental language (aka mental representations)

concepts - atomic symbols and functions (metaphor: word)

atomic - cannot be dissected further

abstract building blocks that are reusable (functions?)

thoughts - combinations of concepts and functions (metaphor - phrase/sentence)

algorithms - procedures for constructing and evaluating thoughts from concepts

Symbolic language of thought (mentalese; the Language of Thought Hypothesis, LOTH)

posits that thinking occurs in a physical, symbolic internal language with its own syntax and semantics, similar to a language in the head. It suggests complex thoughts are built from atomic components, enabling systematic and productive thinking

Representational Theory of Mind (RTM): LOTH assumes that thoughts are mental representations that possess structure. It implies that mental states involve "tokening" (using) symbols in the brain that represent concepts.

Compositionality and Structure: Just as sentences are built from words, mental representations are built from smaller constituent symbols. For example, the thought "John loves Mary" is a composed structure of symbols representing "John," "Loves," and "Mary".

Computational Nature: The system acts as a "physical symbol system," where cognitive processes are operations on these symbols, much like a computer manipulates data according to rules.

Systematicity: The ability to think one thought (e.g., "John loves Mary") often entails the ability to think related thoughts (e.g., "Mary loves John"), because the same mental "words" are rearranged.

Key Proponent: Philosopher Jerry Fodor is widely credited with developing the LOTH.

what makes something a representation in a language of thought? (criteria)

discrete constituents:

constituent - a proper part that is structurally complete and “legal”

can be deleted on its own from a larger expression

independence of roles (function) vs fillers (word, atom): the same constituent can fill different roles ; identity of a role is independent of what fills it

chase (dad, mom)

chase (mom, dad)

functions that take arguments

logical operators (AND, NOT, OR, IF, etc)
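A minimal Python sketch of roles vs. fillers, assuming a thought can be modeled as a predicate applied to a tuple of arguments. The names `Thought`, `swap_roles`, `WORLD`, `holds`, `AND`, and `NOT` are hypothetical illustrations, not part of any lecture model. It shows that chase(dad, mom) and chase(mom, dad) reuse the same atoms in different roles, and that logical operators combine the truth values of evaluated thoughts.

```python
from typing import NamedTuple, Tuple


class Thought(NamedTuple):
    """A thought: a predicate (the role structure) plus argument fillers."""
    predicate: str
    args: Tuple[str, ...]


def swap_roles(t: Thought) -> Thought:
    """Reuse the same constituents in a new arrangement (systematicity)."""
    return Thought(t.predicate, tuple(reversed(t.args)))


def constituents(t: Thought) -> set:
    """The atomic symbols a thought is built from."""
    return {t.predicate, *t.args}


# Hypothetical "world" of facts, used to evaluate thoughts as true or false
WORLD = {Thought("chase", ("dad", "mom"))}


def holds(t: Thought) -> bool:
    return t in WORLD


def AND(p: bool, q: bool) -> bool:
    return p and q


def NOT(p: bool) -> bool:
    return not p


t1 = Thought("chase", ("dad", "mom"))
t2 = swap_roles(t1)                           # chase(mom, dad)
print(constituents(t1) == constituents(t2))   # same atoms...
print(t1 == t2)                               # ...but different roles, different meaning
print(AND(holds(t1), NOT(holds(t2))))         # operators combine evaluated thoughts
```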

what are the purposes of using a symbolic architecture?

compositionality

an expression makes the same contribution to different functions/structures it is part of

meaning is derived from constituents and functions

systematic generalization

reusing symbols and structures in new ways allows us to solve new problems, not just recognize instances seen before

productivity

infinite use of finite means

to entertain and represent a huge number of thoughts

what is characteristic of a symbolic structure but not a connectionist structure?

inheritance hierarchies = properties are inherited from higher levels, and reaction times vary with how many levels must be traversed to verify a statement

a canary is a canary < a canary is a bird < a canary is an animal (verification time increases from left to right)

a bird can fly, but a penguin cannot (an exception stored at the lower level)
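A sketch of an inheritance hierarchy in the spirit of the classic hierarchical semantic network (Collins and Quillian), assuming each node stores only its parent and the properties that are new at that level. The dictionary `NETWORK` and the functions `lookup` and `isa_distance` are hypothetical. The number of links traversed is the quantity assumed to predict verification time, and the penguin's `can_fly: False` is stored locally so it overrides the inherited default.

```python
# Hypothetical toy network: each node stores its "is-a" parent and only
# the properties introduced at that level (economical storage).
NETWORK = {
    "animal":  {"isa": None,     "props": {"breathes": True, "has_skin": True}},
    "bird":    {"isa": "animal", "props": {"can_fly": True, "has_wings": True}},
    "canary":  {"isa": "bird",   "props": {"is_yellow": True, "can_sing": True}},
    "penguin": {"isa": "bird",   "props": {"can_fly": False}},  # local exception
}


def lookup(concept, prop):
    """Walk up the is-a chain; return (value, number of links traversed)."""
    steps, node = 0, concept
    while node is not None:
        props = NETWORK[node]["props"]
        if prop in props:
            return props[prop], steps
        node = NETWORK[node]["isa"]
        steps += 1
    return None, steps


def isa_distance(concept, category):
    """Links between a concept and a category above it (None if unrelated)."""
    steps, node = 0, concept
    while node is not None:
        if node == category:
            return steps
        node = NETWORK[node]["isa"]
        steps += 1
    return None


for category in ["canary", "bird", "animal"]:
    print("a canary is a(n)", category, "->", isa_distance("canary", category), "links")
print(lookup("canary", "can_fly"))   # inherited from "bird"
print(lookup("penguin", "can_fly"))  # exception found before reaching "bird"
```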

what is the major strength of symbolic structures?

it is a code for common sense

transparent, human readable

inheritance hierarchies

An inheritance hierarchy is a tree-like, taxonomic structure in object-oriented design that organizes classes into a parent-child relationship, often representing an "is-a" association. It enables code reuse and specialization, with a base class (root) defining common attributes and specialized child classes (leaves) branching below

all-or-none generalization: no learning of environmental statistics

economical

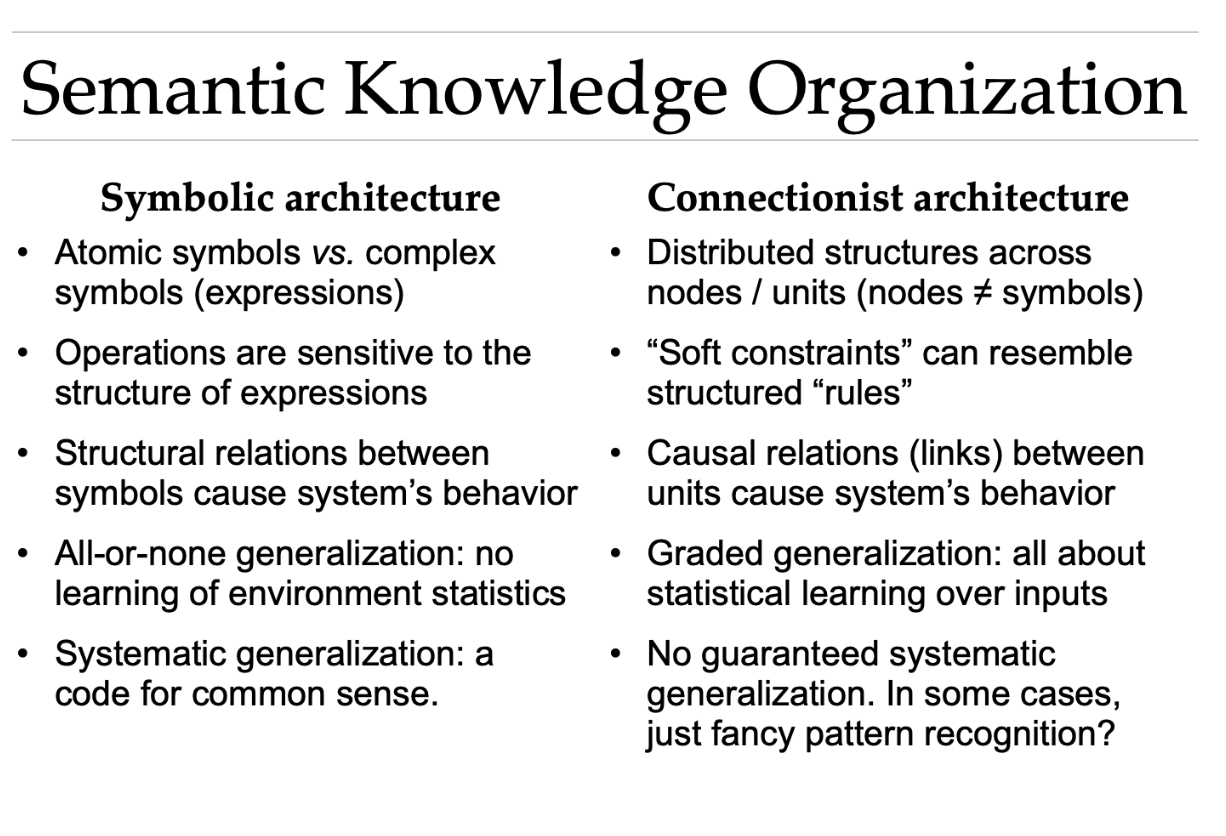

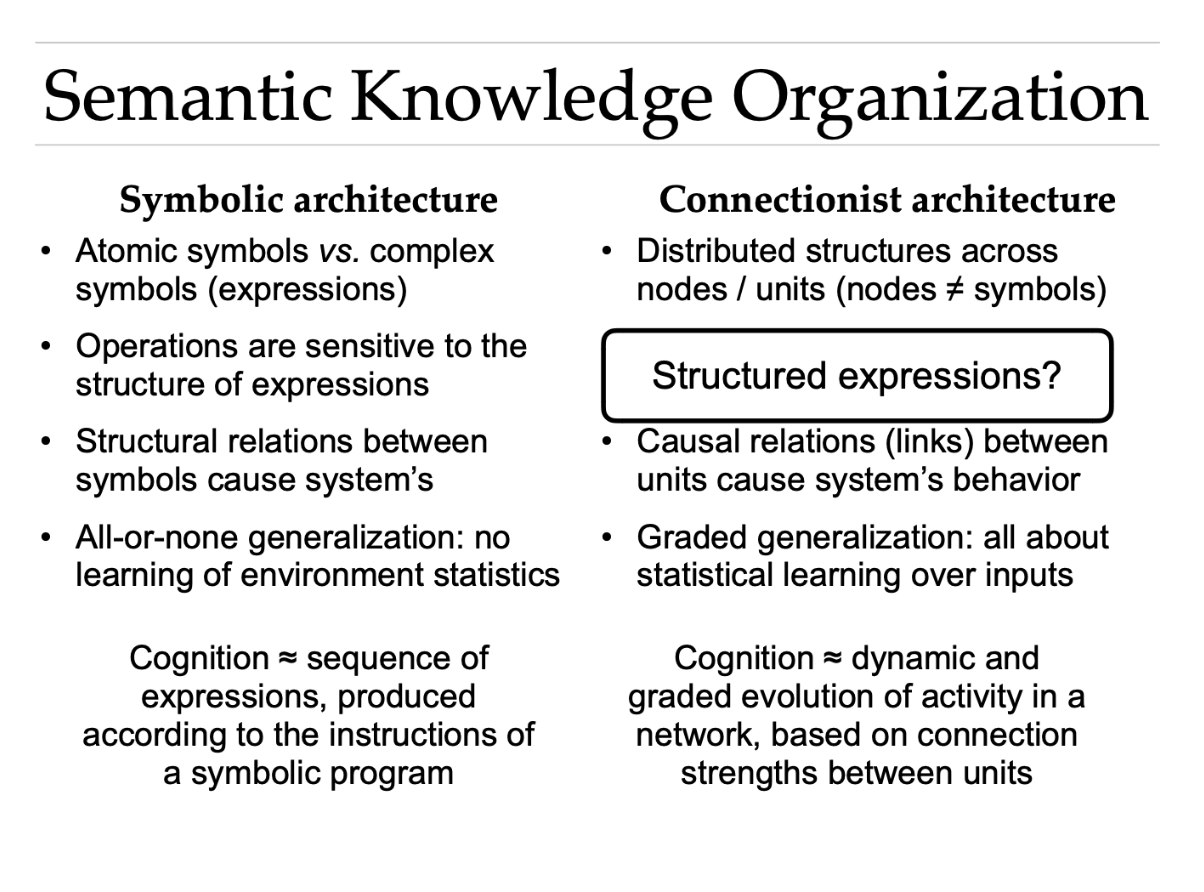

semantic knowledge organization

the structured arrangement of concepts, entities, and information based on their meanings, relationships, and context, rather than just alphabetical or keyword indexing. It forms interconnected networks (e.g., taxonomies, ontologies) mapping how ideas relate, such as "is-a" (taxonomic) or "part-of" (associative) associations

concept > words

network structure

semantic relations

neural network

a machine learning model inspired by the human brain's structure, consisting of interconnected layers of "neurons" (algorithms/nodes) that analyze data to identify complex patterns. They process information by passing inputs through hidden layers, and their internal weights are adjusted through training so they can classify data, make predictions, and solve problems like image recognition and natural language processing

neural network (bullet points)

type of connectionist architecture

links are weighted (some stronger than others)

activation of knowledge is graded (threshold, not all or none)

perceptron

the fundamental building block of an artificial neural network, acting as a single-layer, binary classifier that models a biological neuron. It computes a weighted sum of inputs, adds a bias, and passes the result through an activation function to output a 0 or 1. It learns by adjusting weights to classify linearly separable data
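A minimal Python sketch of the perceptron described above: a weighted sum plus bias, passed through a 0/1 step function, trained with the classic perceptron learning rule (weights move by `error * input`). The function names, the zero starting weights, and `lr=1` are assumptions chosen for illustration. Logical AND is used because it is linearly separable, so the rule is guaranteed to converge.

```python
def step(z):
    """Threshold activation: output 1 only if the weighted sum exceeds 0."""
    return 1 if z > 0 else 0


def predict(w, b, x):
    """Weighted sum of inputs, plus bias, through the step function."""
    return step(sum(wi * xi for wi, xi in zip(w, x)) + b)


def train_perceptron(data, lr=1, epochs=20):
    """Perceptron learning rule: nudge weights toward the correct output."""
    w, b = [0, 0], 0
    for _ in range(epochs):
        for x, target in data:
            error = target - predict(w, b, x)   # -1, 0, or +1
            w = [wi + lr * error * xi for wi, xi in zip(w, x)]
            b += lr * error
    return w, b


AND_DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
w, b = train_perceptron(AND_DATA)
print(w, b, [predict(w, b, x) for x, _ in AND_DATA])
```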

A perceptron can only solve linearly separable problems means

a single-layer perceptron can only classify data that can be divided into two distinct categories using a single straight line or flat hyperplane. It cannot solve complex, non-linear problems, such as the XOR logical operation, because it can only learn linear decision boundaries
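A short worked derivation of why no single perceptron can compute XOR, assuming the usual rule that the unit outputs 1 when the weighted sum plus bias is positive. Each row of the XOR truth table becomes an inequality on the weights, and two of them together contradict a third:

```latex
\begin{aligned}
&\text{Perceptron: } y = 1 \text{ if } w_1 x_1 + w_2 x_2 + b > 0, \text{ else } y = 0 \\
&(0,0) \mapsto 0: \quad b \le 0 \\
&(1,0) \mapsto 1: \quad w_1 + b > 0 \\
&(0,1) \mapsto 1: \quad w_2 + b > 0 \\
&(1,1) \mapsto 0: \quad w_1 + w_2 + b \le 0 \\
&\text{Adding the two middle lines: } w_1 + w_2 + 2b > 0 \;\Rightarrow\; w_1 + w_2 + b > -b \ge 0
\end{aligned}
```

The last line says the (1,1) input must produce a positive sum, contradicting the requirement that it output 0, so no choice of weights and bias (no single straight line) works.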

deep learning for neural networks

hidden layers + non-linear threshold functions

๏ As many hidden layers as you want (“deep” learning)

๏ As many hidden units as you want (even more than input)

๏ Non-linear thresholds
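A small Python sketch of how one hidden layer with non-linear thresholds solves XOR. The weights here are hand-picked for illustration (an assumption; in practice they would be learned): one hidden unit computes OR, the other computes NAND, and the output unit ANDs them, which is true exactly when one input is on.

```python
def step(z):
    """Non-linear threshold: 1 if z > 0, else 0."""
    return 1 if z > 0 else 0


def unit(weights, bias, inputs):
    """One threshold unit: weighted sum plus bias through the step."""
    return step(sum(w * x for w, x in zip(weights, inputs)) + bias)


def xor_net(x1, x2):
    h_or = unit([1, 1], -0.5, [x1, x2])        # hidden unit 1: OR
    h_nand = unit([-1, -1], 1.5, [x1, x2])     # hidden unit 2: NAND
    return unit([1, 1], -1.5, [h_or, h_nand])  # output unit: AND of hidden units


for x1, x2 in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(x1, x2, "->", xor_net(x1, x2))
```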

A neural network with a single hidden layer can approximate

any continuous function

A simple neural network architecture is powerful enough in theory to represent any continuous function.

But in practice:

it may require too many neurons

it may overfit the training data.

This is why modern neural networks usually use many layers with fewer neurons each, rather than one extremely large hidden layer.

overfitting: If the hidden layer has too many neurons, the network can memorize the training data instead of learning the underlying pattern.

Anything you can do with a Turing machine, you can do with a

neural network (they are “universal Turing machines”)

A Turing machine is a theoretical model of a computer.

It can perform any computation that an algorithm can perform:

arithmetic

logic

simulations

running programs

Modern computers are essentially practical implementations of Turing machines.

So when something is Turing complete, it means:

it can compute anything that is computable

how to build a neural net

links will continuously change their strength with experience (training)

Putting knowledge into neural networks: “training”

๏ Pre-specified (by you): structure / architecture (#layers, #units, which units are allowed to connect to one another) (e.g., many networks for vision are not “fully connected”)

๏ Learned: the specific weights (connection strengths)

• Finding the right weights often uses supervised learning

๏ Training set: pairs of [input pattern, correct output]

๏ For each input, compare network output to correct output

๏ Change weights (a bit) to make output closer to correct one

(initial weights are assigned randomly, usually weak)

๏ Keep gradually adjusting the weights throughout training
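The supervised training loop above can be sketched as a single sigmoid unit learning logical OR. The dataset, the learning rate `lr = 0.5`, the 2000 epochs, and the random seed are all illustrative assumptions. The update `w += lr * (target - y) * x` is gradient descent on the cross-entropy loss of a sigmoid unit: compare the output with the correct answer, then move each weight a little.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


# Training set: pairs of [input pattern, correct output] (logical OR)
TRAIN = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]

random.seed(0)
w = [random.uniform(-0.1, 0.1) for _ in range(2)]  # small random initial weights
b = random.uniform(-0.1, 0.1)
lr = 0.5                                           # how big each adjustment is


def output(x):
    return sigmoid(w[0] * x[0] + w[1] * x[1] + b)


for epoch in range(2000):                # keep gradually adjusting
    for x, target in TRAIN:
        error = target - output(x)       # compare network output to correct output
        w[0] += lr * error * x[0]        # change each weight a bit
        w[1] += lr * error * x[1]
        b += lr * error

print([round(output(x)) for x, _ in TRAIN])
```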

supervised learning examples:

• Input: image of object; output: name (label) of object in image

• Input: part of sentence (word sequence); output: next word

(“self-supervised”: humans do not need to manually generate the correct output for each input; this can be done automatically)

• Input: audio waves from speech; output: word being uttered

• Input: audio waves from music; output: musical genre

• Input: text of movie review; output: positive vs. negative

• Simple example:

Input: “canary” + “IS-A”; output: “bird”
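To illustrate the "self-supervised" point above, this sketch turns raw text into [input, correct output] pairs for next-word prediction with no human labeling. The function `next_word_pairs` and its `context_size` parameter are hypothetical; real language models work on tokens rather than whitespace-split words.

```python
def next_word_pairs(sentence, context_size=3):
    """Build (preceding words, next word) training pairs from plain text."""
    words = sentence.split()
    pairs = []
    for i in range(1, len(words)):
        context = words[max(0, i - context_size):i]  # up to 3 previous words
        pairs.append((context, words[i]))            # the correct output is just the next word
    return pairs


for context, target in next_word_pairs("a canary is a bird"):
    print(context, "->", target)
```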

discrete symbols

A discrete symbol in a Turing machine is a single, distinct character from a finite alphabet (e.g., 1, a, b, or a blank space) stored in a cell on the machine's tape. These symbols are the only units the read/write head can recognize and manipulate, allowing it to perform logical computation on countable data.
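A tiny Turing machine simulator makes the idea of discrete symbols on a tape concrete. The alphabet here is `{0, 1, _}` (with `_` as the blank), and the rule table `INCREMENT`, which adds 1 to a binary number, is a hypothetical example. Each rule maps (state, symbol read) to (symbol to write, head move, next state).

```python
def run_tm(tape_str, rules, state="right", blank="_", max_steps=1000):
    """Run a one-tape Turing machine until it reaches the 'halt' state."""
    tape = dict(enumerate(tape_str))  # cell index -> symbol
    head = 0
    for _ in range(max_steps):
        if state == "halt":
            break
        symbol = tape.get(head, blank)           # read the current cell
        write, move, state = rules[(state, symbol)]
        tape[head] = write                       # write a symbol
        head += 1 if move == "R" else -1         # move the head
    cells = range(min(tape), max(tape) + 1)
    return "".join(tape.get(i, blank) for i in cells).strip(blank)


# Binary increment: scan right to the end, then carry 1s leftward.
INCREMENT = {
    ("right", "0"): ("0", "R", "right"),
    ("right", "1"): ("1", "R", "right"),
    ("right", "_"): ("_", "L", "carry"),
    ("carry", "1"): ("0", "L", "carry"),
    ("carry", "0"): ("1", "L", "halt"),
    ("carry", "_"): ("1", "L", "halt"),
}

print(run_tm("1011", INCREMENT))  # 11 + 1 in binary
```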

what does it mean that neural nets use continuous values?

instead of being only 0 or 1, a unit's value can be something in between, such as 0.63

learn gradually through small adjustments

represent degrees of confidence

model smooth relationships.
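A quick comparison of a discrete (all-or-none) output with a continuous (graded) one, assuming a step function for the former and a sigmoid for the latter. The input values are arbitrary illustrations. The step output jumps between 0 and 1, while the sigmoid output changes smoothly and can be read as a degree of confidence.

```python
import math


def step(z):
    return 1 if z > 0 else 0           # discrete: all-or-none


def sigmoid(z):
    return 1 / (1 + math.exp(-z))      # continuous: graded between 0 and 1


for z in [-2.0, 0.5, 0.51, 3.0]:
    # a tiny change in z (0.5 -> 0.51) gives a tiny change in the sigmoid,
    # which is what lets a network learn through small adjustments
    print(z, step(z), round(sigmoid(z), 3))
```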

quote from traditional symbolic AI

Representations in Neural Networks

“Every distributed representation is a pattern of activity across all the units,

in a neural net, information is not stored in a single node/neuron; instead it is represented by a pattern of activations across many neurons at once

this is called a distributed representation

so there is no principled way to distinguish between simple and complex representations.

everything is just patterns of numbers, so it is hard to tell simple representations from complex ones (in symbolic terms, a rule like IF dog THEN bark is clearly simple)

Sure, representations are composed out of the activities of the individual units.

Neural representations are built from individual neuron activations. But those neurons are not meaningful symbols on their own.

But none of these “atoms/neurons” codes for any symbol.

individual neurons do not represent discrete symbolic objects like: dog, cat, run, tree

instead they represent partial statistical features

combination of weak features

The representations are sub-symbolic:

the system operates below the level of explicit symbols (there is no rule like dog → animal; it just manipulates numerical patterns rather than anything like human language)

analysis into their components leaves the symbolic level behind.”

symbolic AI: mind works like a rule-based program manipulating symbols (IF dog THEN animal; human-readable, common-sense rules)

connectionism (neural nets): mind works like distributed neural activity patterns; meaning is stored in statistical patterns, not explicit symbols

summary of connectionist approach quote

summary:

represent information as distributed patterns

not as discrete symbolic structures

therefore their internal representations are sub-symbolic.

Analysis Levels: Analyzing a neural network at the component level (individual units) does not reveal the high-level symbolic logic; rather, it "leaves the symbolic level behind," focusing on statistical patterns.

concept = a pattern across many neurons, not one neuron (no single “grandmother cell” that codes for your grandma)
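A toy sketch of distributed representations: each concept is a pattern of activity across the same six units. The numbers in `PATTERNS` are made up purely for illustration (not data from any model). No single unit is "the dog unit"; similarity between concepts comes from comparing whole patterns, here with cosine similarity.

```python
import math

# Hypothetical activation patterns over 6 units (illustrative numbers only)
PATTERNS = {
    "dog":   [0.9, 0.1, 0.8, 0.3, 0.7, 0.2],
    "cat":   [0.8, 0.2, 0.7, 0.4, 0.6, 0.1],
    "truck": [0.1, 0.9, 0.2, 0.8, 0.1, 0.7],
}


def cosine(a, b):
    """Similarity of two activation patterns (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


print("dog vs cat:  ", round(cosine(PATTERNS["dog"], PATTERNS["cat"]), 2))
print("dog vs truck:", round(cosine(PATTERNS["dog"], PATTERNS["truck"]), 2))
```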

systematic generalization in neural networks

the ability to recombine known components (parts, concepts, or rules) to understand or generate novel combinations, mimicking human cognitive flexibility.

It allows models to handle unseen data structures by utilizing learned, compositional building blocks, addressing a major weakness of traditional, purely statistical neural network learning.

Q: Can neural nets represent structured expressions? Do they show systematic generalization? (Are the two equivalent?)

they can represent structured expressions (implicitly, not explicitly) but they do not always exhibit systematic generalization automatically. They often fail to exhibit human-like systematicity.

Structured expressions

why did object recognition neural network models fail to correctly classify glass figurines according to the objects that they depict (e.g., classifying a glass swan as a swan) in case 3 of lecture 11 (on systematic generalization in neural networks)?

glass figures have a different texture from the real objects that they depict

which of the following is a problem that a simple perceptron (with no hidden layers) cannot possibly solve?

logical XOR (exclusive-or)

logical AND

Both conditions must be true

example: you pass a class only if you study AND attend

example 2: you can go out only if your friend is free AND your homework is done

logical XOR (exclusive OR)

exactly one is true, not both

example: a light controlled by two switches, on when only one is flipped

a problem for single-layer neural nets (perceptrons), because XOR is not linearly separable

harder to find in real life, tests something non-obvious

guaranteed mismatch

logical OR

either condition is sufficient (i.e., if at least one is true, the output is true)

example: you go on a date if you find them attractive OR you find them interesting

OR = low threshold, easy to access

linearly separable problems

a category for a type of problem in which you can separate the outputs using a single straight line (or hyperplane).

AND

OR

NAND

NOR

Not XOR (requires a hidden layer, deeper networks)

you can separate using straight line bc

everything on one side = pass

everything on the other side = fail
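A sketch showing that each linearly separable gate listed above can be computed by a single threshold unit, i.e., one straight line `w1*x1 + w2*x2 + b = 0`. The weights are hand-picked for illustration (an assumption; other values work too). XOR is deliberately absent because no such weights exist.

```python
def threshold_unit(w1, w2, b):
    """A single unit: fires (1) on one side of the line, 0 on the other."""
    return lambda x1, x2: 1 if w1 * x1 + w2 * x2 + b > 0 else 0


# Hand-picked weights; each gate is one straight decision boundary
GATES = {
    "AND":  threshold_unit(1, 1, -1.5),
    "OR":   threshold_unit(1, 1, -0.5),
    "NAND": threshold_unit(-1, -1, 1.5),
    "NOR":  threshold_unit(-1, -1, 0.5),
}
INPUTS = [(0, 0), (0, 1), (1, 0), (1, 1)]

for name, gate in GATES.items():
    print(name, [gate(x1, x2) for x1, x2 in INPUTS])
```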

nonlinear problems

problems that cannot be separated by a single straight line

* XOR is the simplest nonlinear problem

XNOR

a circle (e.g., separating points inside a circle from points outside it)

logical NAND (NOT AND)

everything is allowed except both conditions combined

example: reject only if rude and unreliable

important: you can build all logic with only NAND gates (NAND is functionally complete)
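A short sketch of the NAND claim: NOT, AND, OR, and even XOR can be built from NAND alone. The function names are illustrative; the XOR construction is the standard four-NAND circuit.

```python
def NAND(a, b):
    return 0 if (a and b) else 1


def NOT(a):
    return NAND(a, a)


def AND(a, b):
    return NOT(NAND(a, b))


def OR(a, b):
    return NAND(NOT(a), NOT(b))      # De Morgan: NOT(NOT a AND NOT b)


def XOR(a, b):
    n = NAND(a, b)                   # shared first gate
    return NAND(NAND(a, n), NAND(b, n))


for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(a, b, "AND", AND(a, b), "OR", OR(a, b), "XOR", XOR(a, b))
```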

logical NOR (NOT OR)

activates only when none are true

logical XNOR (exclusive NOR, i.e., NOT XOR)

only when inputs are the same (0/0 or 1/1)

* you both want something casual or you both want something serious

guaranteed alignment

level of thresholds

logic AND (high standards)

logic OR (flexible)

logic XOR (only one condition allowed, rare, unnatural)

threshold in neural nets

boundary for the minimum activation needed to “turn on” the neuron

* can be a line, or curved

a decision value that determines whether a neuron activates (fires) or stays silent based on its input. It acts as a limit for the weighted sum of inputs; if the sum surpasses this threshold, the neuron outputs a 1, otherwise 0. Modern networks often use activation functions (like ReLU) instead of strict thresholds.

continuous function

a function whose graph forms a single, unbroken curve, meaning it can be drawn without lifting the pen

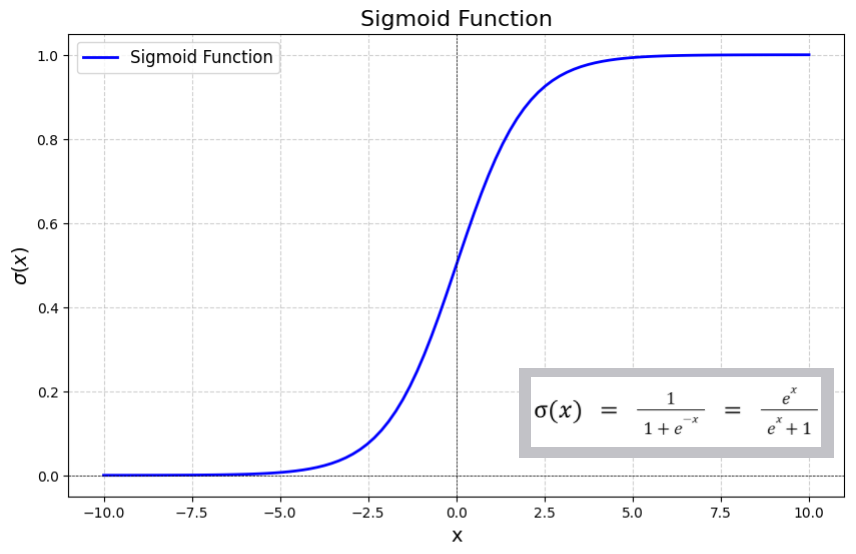

sigmoid function

an S-shaped (logistic) curve whose output is bounded between 0 and 1

transforms any real-valued input into a probability-like output between 0 and 1. It is primarily used in the output layer for binary classification tasks and sometimes in hidden layers to introduce non-linearity.

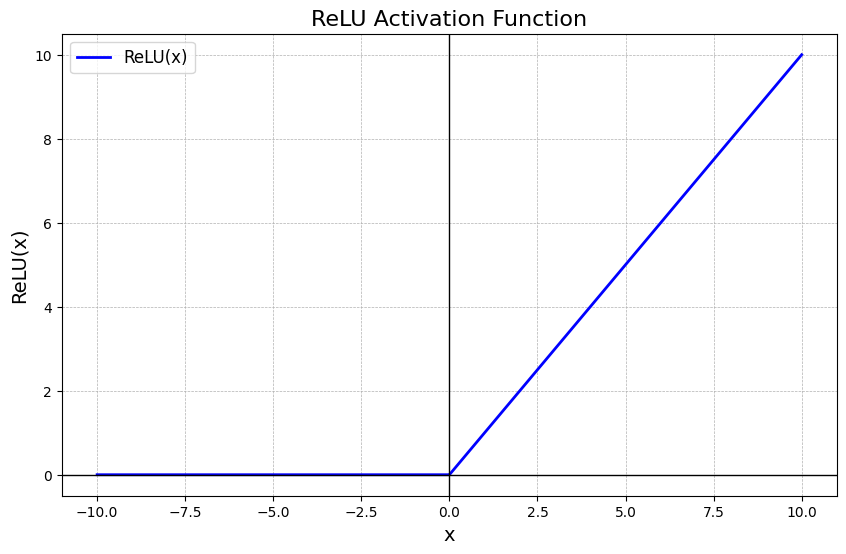

ReLU activation function

f(x) = max(0, x), the most popular activation function in deep learning. It adds non-linearity by outputting the input directly if it is positive, and zero otherwise.

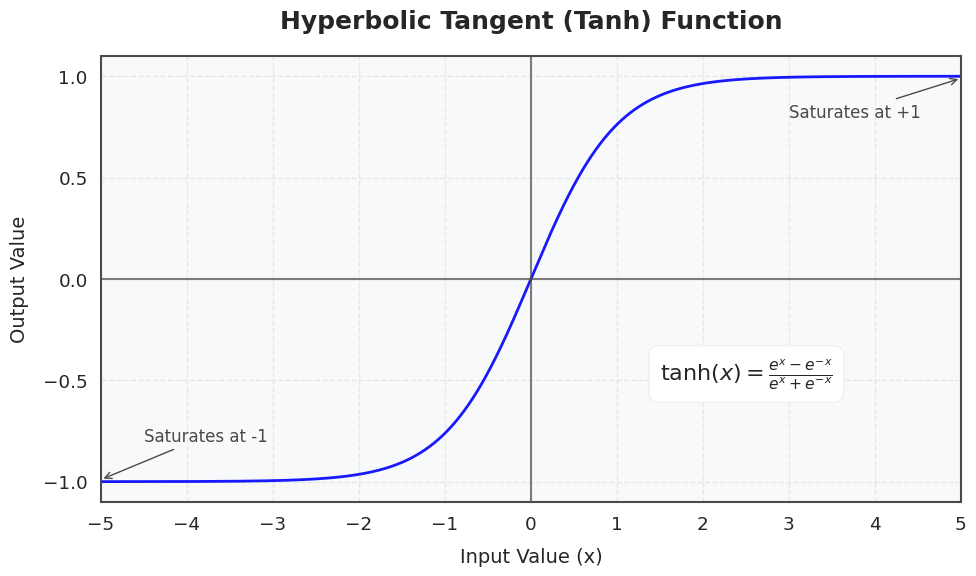

tanh function

maps input values to a range between -1 and 1, centered at 0

(preferred over the sigmoid function in hidden layers because its stronger gradients and zero-centered outputs allow for faster, more stable convergence during training)
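A side-by-side sketch of the three activation functions above, using only Python's `math` module. The sample inputs are arbitrary. Note the output ranges: sigmoid (0, 1), ReLU [0, ∞), tanh (-1, 1) and zero-centered; tanh is also a rescaled sigmoid, tanh(z) = 2·sigmoid(2z) - 1.

```python
import math


def sigmoid(z):
    return 1 / (1 + math.exp(-z))   # range (0, 1); sigmoid(0) = 0.5


def relu(z):
    return max(0.0, z)              # 0 for negative inputs, identity for positive


def tanh(z):
    return math.tanh(z)             # range (-1, 1); tanh(0) = 0 (zero-centered)


for z in [-2.0, 0.0, 2.0]:
    print(f"z={z:+.1f}  sigmoid={sigmoid(z):.3f}  relu={relu(z):.1f}  tanh={tanh(z):+.3f}")
```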

case studies lecture 11, structure sensitivity in neural nets

trivial changes to known puzzles (models fail when a puzzle deviates even slightly from the known example)

understanding visual relations (incorrect DALL-E outputs)

recognizing objects - wrong labeling of images/silhouette shapes; texture

english grammar - to show understanding of the rules of language

do current neural nets automatically learn to use a symbolic-like representation?

Not always. Systematic generalization in these models is not guaranteed, and it has to be evaluated scientifically with careful tests: even models that appear to be intelligent might be right for the wrong reasons.

the 3 views on Connectionism

connectionist and symbolic architectures are equivalent

connectionist architectures use a different kind of “symbols”

connectionist architectures do not need symbols

everyone agrees the brain is connectionist

the debate is about the software (the program)

last slide of lecture 11