Neural Network key elements: Perceptron, loss, epoch (A4.3)

12 Terms

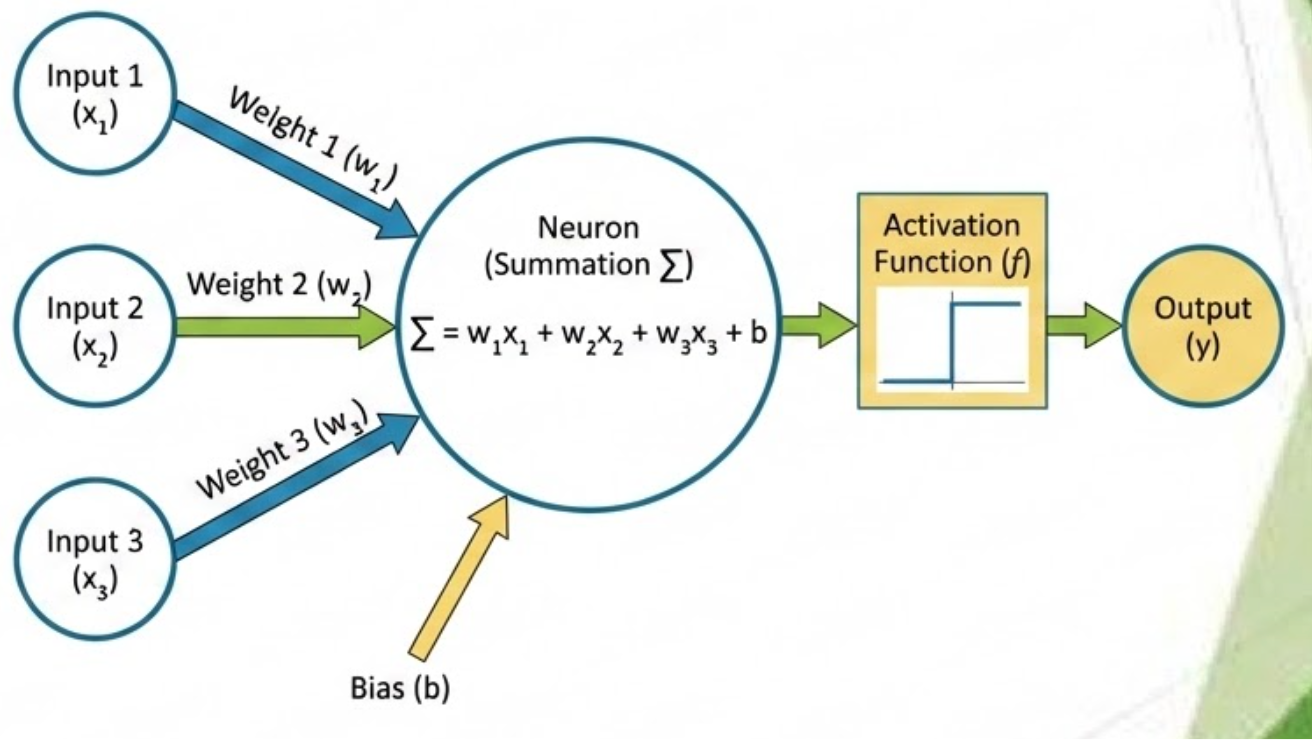

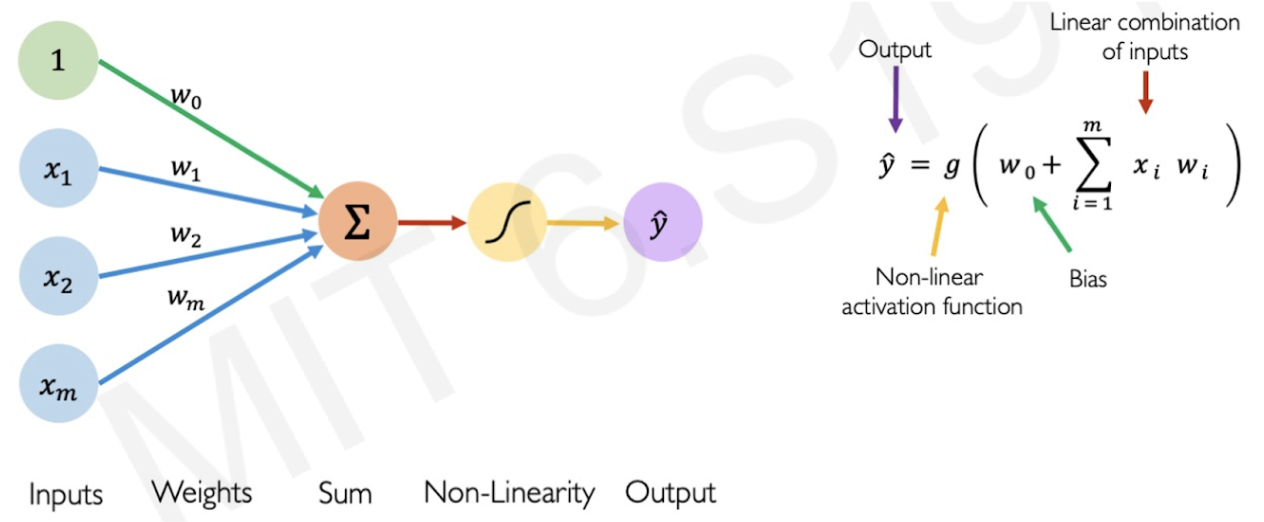

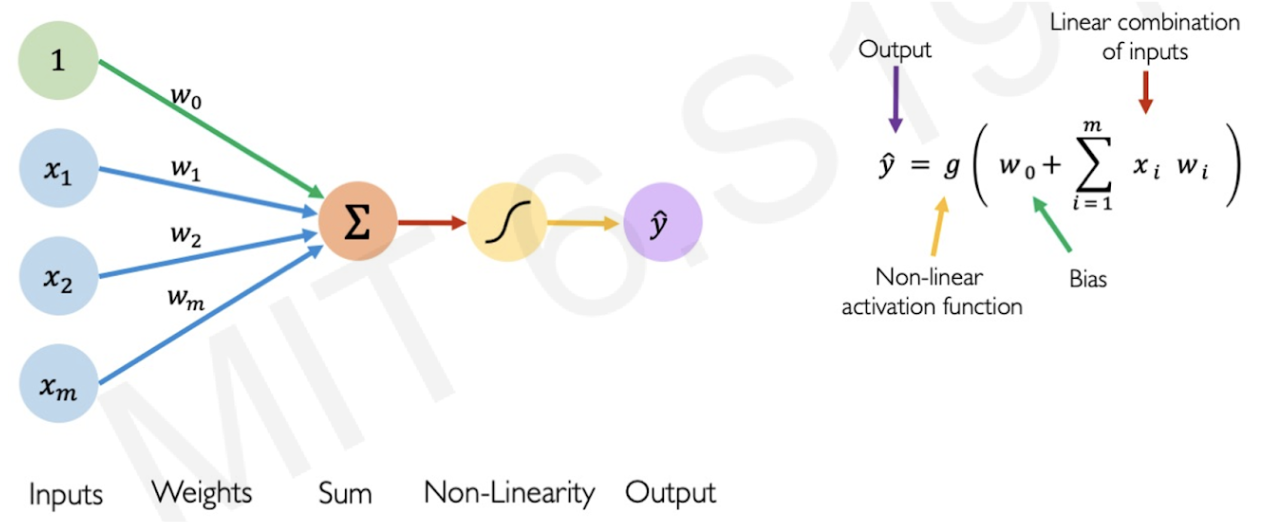

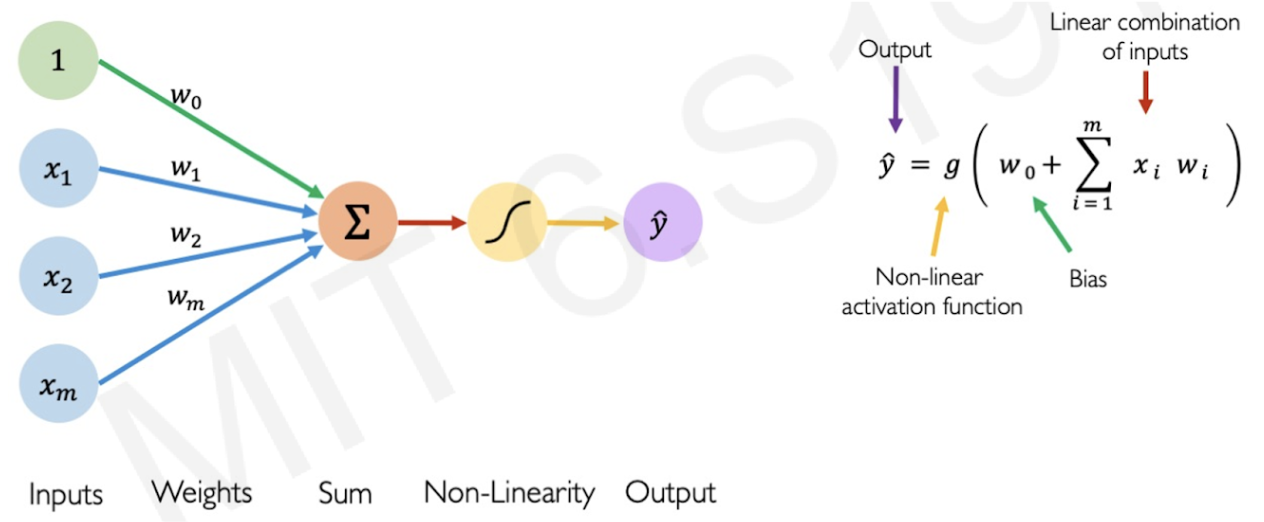

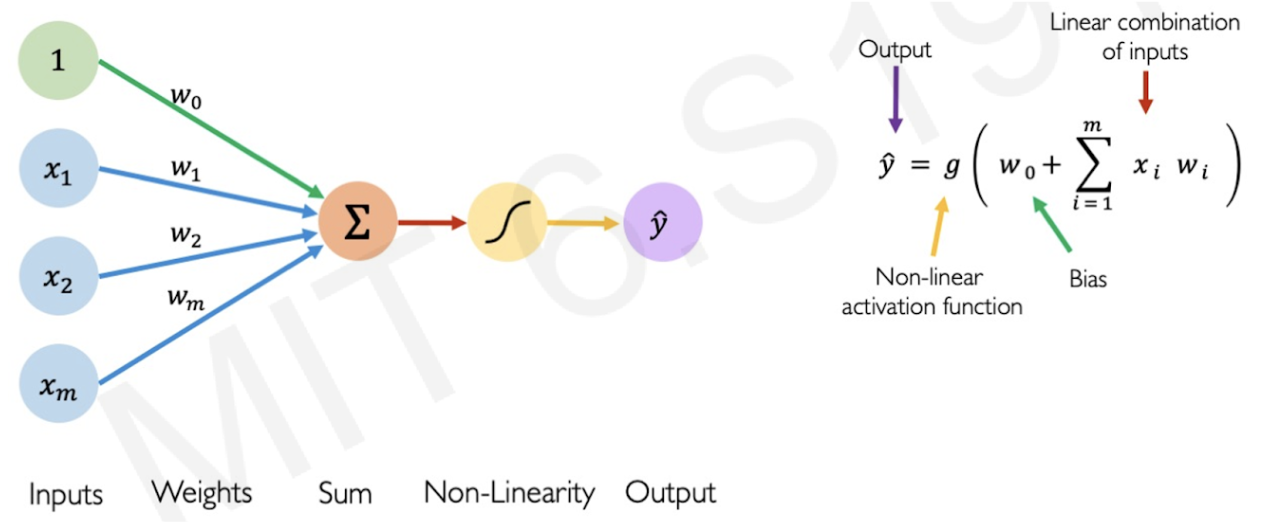

I. Perceptron

Atomic unit of a neural network: a single artificial neuron that takes in inputs, multiplies them by weights, and generates an output

Inputs (I. Perceptron component)

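Include the input data and a bias term

Bias: extra value added to the sum of the products of weights and inputs (increases flexibility)

Helps prevent underfitting: the model is not forced to pass through the origin

Shifts the activation threshold so the neuron can activate even when every input is 0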
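
To make the threshold shift concrete, here is a minimal sketch in plain Python. The helper names (`weighted_sum`, `step`), the step activation, and all numeric values are assumptions chosen for illustration; they do not come from the course material.

```python
def weighted_sum(inputs, weights, bias):
    """Return the bias plus the sum of the input*weight products."""
    return bias + sum(x * w for x, w in zip(inputs, weights))


def step(z):
    """Illustrative step activation: fire (1) only when z is positive."""
    return 1 if z > 0 else 0


inputs = [0.0, 0.0]      # every input is 0
weights = [0.8, -0.3]    # illustrative values, not learned

# Without a bias the weighted sum of an all-zero input is always 0,
# so the neuron cannot fire no matter what the weights are.
no_bias = step(weighted_sum(inputs, weights, bias=0.0))

# A positive bias moves the pre-activation above the threshold.
with_bias = step(weighted_sum(inputs, weights, bias=0.5))

print(no_bias, with_bias)  # prints: 0 1
```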

Weights (I. Perceptron component)

Values that set the strength/importance of each input; initialized pseudorandomly, then adjusted during training
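
A small sketch of pseudorandom initialization, assuming a uniform range of plus or minus 0.1. The function name `init_weights`, the range, and the seed are hypothetical choices for illustration; real frameworks use a variety of initialization schemes.

```python
import random


def init_weights(n_inputs, scale=0.1, seed=None):
    """Draw one small pseudorandom weight per input from [-scale, scale]."""
    rng = random.Random(seed)  # a fixed seed makes the draw reproducible
    return [rng.uniform(-scale, scale) for _ in range(n_inputs)]


weights = init_weights(3, seed=42)
print(weights)
```

Passing the same seed returns the same weights, which is why these values are called pseudorandom rather than random.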

Sum/summation (I. Perceptron component)

Adds up all the products of the inputs and their weights, plus the bias

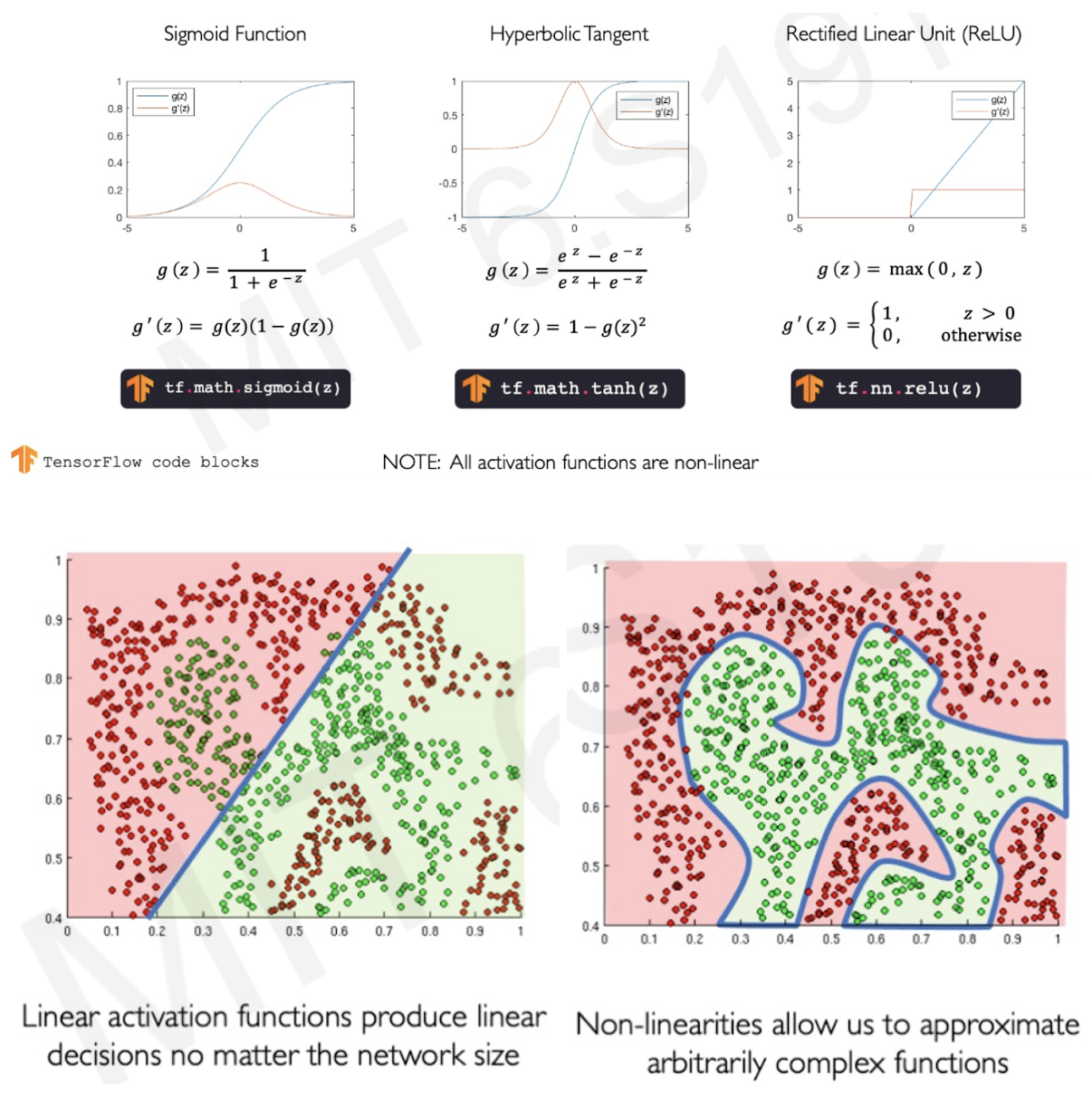
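
As a worked example of the summation, assume three inputs. The symbols $x_i$ (inputs), $w_i$ (weights), $b$ (bias), and $z$ (the resulting sum) are notation introduced here, and the numbers are made up for illustration:

$$z = b + \sum_{i=1}^{n} x_i w_i$$

With $x = (1, 2, 3)$, $w = (0.5, -1, 2)$, and $b = 1$:

$$z = 1 + (1)(0.5) + (2)(-1) + (3)(2) = 1 + 0.5 - 2 + 6 = 5.5$$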

Activation function (I. Perceptron component)

Introduces non-linearity: one perceptron outputs one curve, and combining multiple curves creates a complex function

Types: sigmoid, hyperbolic tangent (tanh), rectified linear unit (ReLU)
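
The three functions named above can be sketched with the standard library alone. This is an illustrative version for single numbers, not how a framework implements them, and the simple `sigmoid` below does not guard against overflow for very large negative inputs.

```python
import math


def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def tanh(z):
    """Squash any real number into the range (-1, 1)."""
    return math.tanh(z)


def relu(z):
    """Pass positive values through unchanged; clip negatives to 0."""
    return max(0.0, z)


for z in (-2.0, 0.0, 2.0):
    print(z, sigmoid(z), tanh(z), relu(z))
```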

Output (I. Perceptron component)

Final predicted value

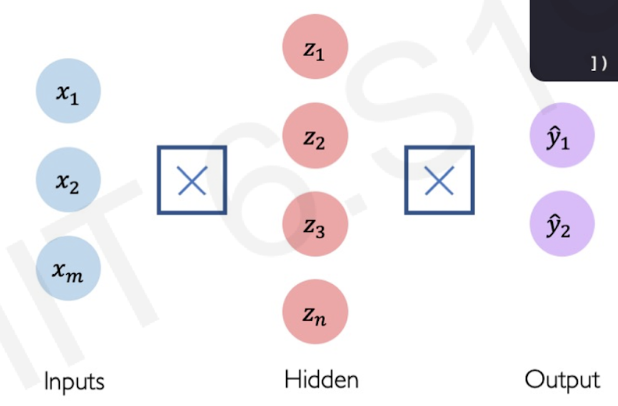

Dense/hidden layers (I. Perceptron)

Multi-output perceptron: each neuron's output becomes an input to the neurons in the next layer

Increases accuracy in extracting complex features
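
A rough sketch of how one layer's outputs become the next layer's inputs. The `dense` helper, the sigmoid activation, the layer sizes (2 inputs, 3 hidden neurons, 1 output), and every weight and bias value are assumptions made up for this example.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def dense(inputs, weights, biases):
    """One dense layer: every neuron receives every input.

    weights[j] holds the weights of neuron j; biases[j] is its bias.
    """
    return [
        sigmoid(b + sum(x * w for x, w in zip(inputs, neuron_w)))
        for neuron_w, b in zip(weights, biases)
    ]


x = [1.0, 0.5]

# Hidden layer: 3 neurons, each with 2 weights (one per input).
hidden = dense(
    x,
    weights=[[0.2, -0.4], [0.7, 0.1], [-0.5, 0.3]],
    biases=[0.0, 0.1, -0.2],
)

# Output layer: 1 neuron whose inputs are the 3 hidden activations.
output = dense(hidden, weights=[[0.6, -0.1, 0.8]], biases=[0.05])

print(len(hidden), len(output))  # prints: 3 1
```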

II. Quantifying loss function

Loss: cost incurred from an incorrect prediction, measured from the gap between the predicted and actual values

Empirical loss (II. Types of loss functions)

Measures the total loss over the entire dataset: the per-example losses are summed, and usually divided by the number of examples to give an average
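
A minimal sketch of aggregating per-example losses over a dataset. The function name `empirical_loss`, the absolute-error loss, and the data are illustrative assumptions. The sketch returns both the plain sum and the mean, since the two differ only by a factor of the dataset size.

```python
def absolute_error(predicted, actual):
    """Illustrative per-example loss: size of the prediction error."""
    return abs(predicted - actual)


def empirical_loss(predictions, targets, loss_fn):
    """Return (sum, mean) of the per-example losses over the dataset."""
    losses = [loss_fn(p, t) for p, t in zip(predictions, targets)]
    total = sum(losses)
    return total, total / len(losses)


total, mean = empirical_loss([2.0, 4.0, 6.0], [1.0, 4.0, 8.0], absolute_error)
print(total, mean)  # prints: 3.0 1.0
```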

Cross entropy loss (II. Types of loss functions)

Better for classification models that output a probability between 0 and 1
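
As an illustration, here is a sketch of the binary (two-class) form of cross entropy, averaged over the examples. The function name, the `eps` clamp that avoids `log(0)`, and the sample probabilities are assumptions for this example.

```python
import math


def binary_cross_entropy(probs, labels, eps=1e-12):
    """Mean binary cross entropy for predicted probabilities and 0/1 labels."""
    total = 0.0
    for p, y in zip(probs, labels):
        p = min(max(p, eps), 1.0 - eps)  # clamp so log() never sees 0
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(probs)


# Same labels, two sets of predictions.
confident_right = binary_cross_entropy([0.9, 0.1], [1, 0])
confident_wrong = binary_cross_entropy([0.1, 0.9], [1, 0])

print(confident_right, confident_wrong)
```

Confident predictions that turn out wrong are penalized far more heavily than confident predictions that turn out right.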

Mean squared error loss (II. Types of loss functions)

Better for regression models that output continuous real numbers
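
A short sketch of mean squared error on made-up regression values. The function name `mse` and the numbers are illustrative assumptions.

```python
def mse(predictions, targets):
    """Average of the squared differences between predicted and actual values."""
    squared = [(p - t) ** 2 for p, t in zip(predictions, targets)]
    return sum(squared) / len(squared)


# Squared errors are 0.25, 0.25 and 0.0, so the mean is 0.5 / 3.
error = mse([2.5, 0.0, 2.0], [3.0, -0.5, 2.0])
print(error)
```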

III. Epoch

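One epoch is one full pass through the training data; the number of epochs is the number of such training iterations/cycles

Ex: epochs = 20 -> the training data goes through the neural network 20 times; with one weight update per pass (full-batch training), the weights are updated 20 times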
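
The example above can be sketched as a tiny training loop. This assumes full-batch gradient descent on a one-input linear model with mean squared error; the function name `train`, the learning rate, and the data are hypothetical. With mini-batches there would be several weight updates per epoch rather than one.

```python
def train(xs, ys, epochs=20, lr=0.05):
    """Fit y = w*x + b with full-batch gradient descent; one update per epoch."""
    w, b = 0.0, 0.0
    updates = 0
    history = []  # loss measured at the start of each epoch
    n = len(xs)
    for _ in range(epochs):
        # Forward pass over the whole dataset.
        preds = [w * x + b for x in xs]
        history.append(sum((p - y) ** 2 for p, y in zip(preds, ys)) / n)
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (p - y) * x for p, y, x in zip(preds, ys, xs)) / n
        grad_b = sum(2 * (p - y) for p, y in zip(preds, ys)) / n
        # One weight update per epoch.
        w -= lr * grad_w
        b -= lr * grad_b
        updates += 1
    return w, b, updates, history


xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]  # generated from y = 2x + 1
w, b, updates, history = train(xs, ys, epochs=20)
print(updates, history[0], history[-1])
```

The update counter ends at 20, matching the number of epochs, and the recorded loss falls from the first epoch to the last.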