PSCL 353 Psyc of Learning


Learning

Definition (from slide):

A relatively permanent change in behavior that occurs as a result of experience with events

Important exclusions (from slide):

❌ Not temporary changes (e.g., fatigue)

❌ Not changes due to maturation

Why this matters:

This definition separates learning from other causes of behavior change.

Maturation

Definition (implied by slide):

Behavior change that occurs due to the passage of time and physical growth, not experience

Why it’s important here:

The slide explicitly contrasts maturation with learning to clarify what does NOT count as learning.

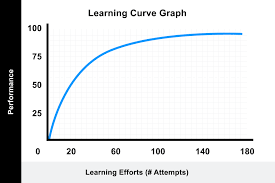

Learning Curve

Definition (from slide):

A graph showing the acquisition of a behavior change over many learning trials

Key properties (from slide):

Monotonic → learning moves in one direction (improves)

Negatively accelerated → learning slows down over time

Why it matters:

Learning is fastest early on, then gains become smaller — a core empirical pattern in learning research.
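
The two properties above can be illustrated with a small sketch. It assumes a simple rule in which each trial closes a fixed fraction of the remaining gap to perfect performance; the function name, the `rate` value, and the rule itself are illustrative assumptions, not something taken from the slide.

```python
def learning_curve(trials, rate=0.3, asymptote=1.0):
    """Return performance after each trial under a simple gap-closing rule."""
    performance = 0.0
    curve = []
    for _ in range(trials):
        # Each trial closes a fixed fraction of the remaining gap to the asymptote.
        performance += rate * (asymptote - performance)
        curve.append(performance)
    return curve


curve = learning_curve(10)
# Gain from one trial to the next; these shrink as learning proceeds.
gains = [later - earlier for earlier, later in zip(curve, curve[1:])]

for trial, level in enumerate(curve, start=1):
    print(f"trial {trial:2d}: performance {level:.3f}")
```

Performance only ever goes up (monotonic), while each gain is smaller than the one before it (negatively accelerated).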

MCQ Memory Anchor

Learning = experience → lasting behavior change

Maturation = growth, not learning

Learning curve = learning over trials, slows with time

Memory

Definition (from slide):

The retention or retrieval of information over time

Key emphasis (from slide):

__ focuses on how behavior change or information is retained, not how it is acquired.

Engram (Memory Trace)

Definition (from slide):

The record of information stored in memory, also called the memory trace

Why this matters:

It reflects the assumption that memory has a physical or representational basis in the organism.

Context the slide gives (important distinction):

Learning → acquisition of behavior change

Memory → retention and retrieval of that change

This distinction explains why learning and memory are studied as related but separate processes.

MCQ Memory Anchor

Memory = retention or retrieval

Engram = stored record of information

Learning ≠ memory (acquisition vs retention)

Comparative Psychology

Definition (from slide):

The scientific study of differences between species

Explanation (from slide context):

__ __ compares human and nonhuman animal behavior, but researchers must be cautious about how much mental complexity they assume in animals.

Morgan’s Canon

Person (from slide):

C. Lloyd Morgan (1903)

Definition (from slide):

Animal behavior should not be interpreted using higher psychological processes if it can be explained using simpler processes

Explanation:

Morgan’s Canon argues for parsimonious explanations, meaning scientists should choose the simplest explanation that fully accounts for the behavior, rather than assuming complex mental abilities.

Example (aligned with slide intent):

If an animal solves a task through trial-and-error learning, we should not assume reasoning or insight unless simpler explanations fail.

MCQ Memory Anchor

__ __ = compare species

Morgan’s Canon = use the simplest (parsimonious) explanation

Philosophical Origins

Philosophical Origins refers to the early philosophical questions and ideas that shaped how psychologists think about knowledge, learning, and the mind. These ideas set the foundation for scientific theories of learning and memory.

Epistemology

Definition (from slide):

The philosophical study of the nature of knowledge — asking how we come to have knowledge

Explanation (connected to the title):

Epistemology is included here because learning and memory research depends on understanding where knowledge comes from and how it is formed.

MCQ Memory Anchor

Philosophical origins = ideas about knowledge before psychology

Epistemology = how knowledge is possible

Psychological Origins

Refers to early schools of psychology that shaped how researchers began to study the mind and behavior before modern learning theories.

Person: Wilhelm Wundt

Definition: Focused on the structure of the conscious mind, using introspection (a method in which one looks carefully inward and reports on inner sensations and experiences).

In other words, the study of the structure of the mind → the sensations, images, and feelings

Wilhelm Wundt (first psych lab, practiced introspection)

Edward Titchener → studied under Wundt, then worked at Cornell; attempted to study the structure of the conscious mind (dubbed it structuralism)

William James

Definition:

An approach that focused on what mental processes do and how they help individuals adapt to their environment. William James → proposed the approach of __ (emphasis on the functions of consciousness); his approach was influenced by Darwin

E.g., asked questions like, “how does the mind adapt to new circumstances?”

Example:

Studying memory in terms of how it helps us learn and survive, not just what it is made of.

Why it matters:

Cognitive psychology shares this goal of understanding how thinking helps us function.

Explanation:

Structuralism aimed to break conscious experience into basic elements, making it one of the first attempts to scientifically study the mind.

MCQ Memory Anchor

___ (Wundt) = structure of conscious experience

___ (James) = purpose of consciousness

Structuralism

Person:

Wilhelm Wundt

Definition:

The view that the proper topic for psychology is conscious processes and immediate experience, studied mainly through introspection.

Explanation:

___ aimed to break conscious experience into basic elements, making it one of the first attempts to scientifically study the mind.

Functionalism

Person:

William James

Definition:

An approach emphasizing the functions of consciousness and how mental processes help individuals adapt to their environment.

Explanation:

__ focused on what the mind does, which influenced later theories of learning and behavior.

Scientific Origins:

This slide explains how evolutionary theory shaped psychology by viewing learning and behavior as adaptations that help organisms survive and reproduce.

Evolution

Definition (from slide):

Characteristics of species change over time, so descendants may differ from earlier members.

Explanation:

Evolution provides the biological foundation for why learning exists — it helps organisms adapt to their environment.

Natural Selection

Person:

Charles Darwin

Definition (from slide):

Individuals vary in traits

Some traits increase survival and reproduction

These traits are passed to offspring

Helpful traits become more common over generations

Explanation:

Learning is adaptive because it increases an organism’s chances of survival.
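
The four steps in the definition can be sketched as a toy calculation. It assumes a single heritable trait whose carriers leave 10% more offspring than non-carriers; the fitness values, starting frequency, and function names are hypothetical and chosen only for illustration.

```python
def next_frequency(p, fitness_with_trait=1.1, fitness_without=1.0):
    """Frequency of the trait after one generation of selection."""
    # Average reproductive success across the whole population.
    mean_fitness = p * fitness_with_trait + (1 - p) * fitness_without
    # Carriers contribute offspring in proportion to their relative success.
    return p * fitness_with_trait / mean_fitness


def simulate(p0=0.05, generations=50):
    """Track the trait's frequency over successive generations."""
    freqs = [p0]
    for _ in range(generations):
        freqs.append(next_frequency(freqs[-1]))
    return freqs


freqs = simulate()
print(f"start: {freqs[0]:.2f}, after 50 generations: {freqs[-1]:.2f}")
```

Even a small reproductive advantage makes the helpful trait steadily more common across generations.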

Environment of Evolutionary Adaptiveness (EEA)

Definition (from slide):

The environment that existed when a trait was evolving.

Explanation:

Some learning mechanisms evolved to solve problems in ancestral environments, even if those problems are different today.

Historical Contrast on the Slide: Lamarck vs. Darwin

Jean-Baptiste Lamarck (false start):

Proposed that acquired characteristics (traits gained during life) could be inherited.

Why he’s mentioned:

The slide includes Lamarck to show an early but incorrect idea about evolution that was later replaced.

Darwin (accepted view):

Only heritable traits are passed to offspring; natural selection makes the helpful ones more common over generations.

MCQ Memory Anchor

Lamarck = acquired traits inherited (wrong)

Darwin = natural selection (correct)

Evolution → learning as adaptation

An Example of Evolution-Based Psychological Theory

This slide gives a concrete example of how evolutionary theory is used to explain learning and behavior, instead of just describing evolution in general.

Buss’ Sexual Strategies Theory

Person: David Buss

What Buss thought (main claim):

He argued that human mating behavior can be explained using evolutionary principles (like other species) — men and women evolved different mating strategies because they faced different reproductive pressures.

Definition:

Human mating behavior evolved via natural selection, leading men and women to pursue short-term and long-term mating strategies.

Core idea (why sexes differ):

Differences come from different biological investments in reproduction.

Key logic (helpful examples):

Men: lower biological investment → more emphasis on short-term mating + fertility cues

Women: higher biological investment → more emphasis on resources/commitment and selectivity; short-term mating can sometimes provide resources or genetic benefits

Humans are unusual (from slides):

Single-offspring births

Paternal investment

Female menopause

Hidden ovulation

Sex outside fertile periods

MCQ memory anchor:

Buss = evolutionary explanation of sex differences in mating strategies

Wants to explain human mating behavior in the same terms as behavior in other species; takes the environment of evolutionary adaptiveness into account

Human mating behavior is unusual in some respects:

Single offspring birth (typically shared with some primates)

Paternal investment in humans (greater in humans)

Female menopause (useful for mothers to be around, e.g., grandma)

Hidden ovulation in females

Females → sexually receptive even when not ovulating (in humans; in other species, the female may kill the male)

Sex is private (humans)

What it involves:

Both sexes pursue short-term + long-term mating strategies

Men devote a greater part of their effort to short-term mating

Successful short-term mating (Men) → many partners, no parental involvement, only with potentially fertile women

Successful long-term mating (Men) ⇒ probable fertility, certainty of paternity (more guarding)

Vs Females

Successful short-term mating → providing resources (being able to stay alive)

Prospects (likelihood) for long-term mating

Cheating: better genetic contribution than potential long-term mate (happens through behavior unconsciously)

Long-term mating

Find mates who will be able and willing to provide resources (care for personally too; intelligence, they will be able to care for children)

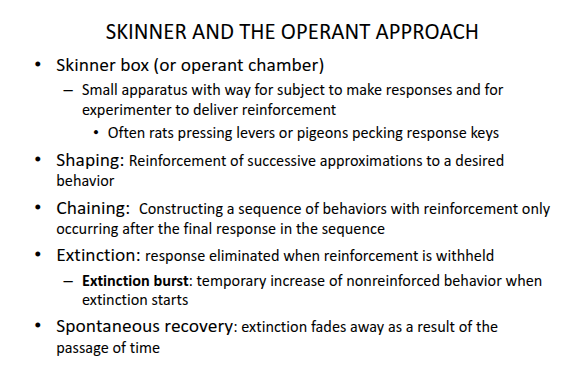

Behaviorism

This slide introduces behaviorism, an approach that explains learning by focusing on observable behavior rather than internal mental processes.

Person(s) (from slide):

John B. Watson (1913)

B. F. Skinner (radical behaviorism)

Definition (from slide):

An approach that views psychology as the study of observable events, defining learning in terms of stimulus–response (S–R) relationships

Key features emphasized on the slide:

Antimentalistic → rejects mentalistic explanations

Empiricist & associationist → behavior shaped by experience

Reductionist → explains behavior using basic components

Skinner’s contribution (from slide):

Radical behaviorism attempted to remove all mentalistic concepts from psychology.

Why this slide matters:

Behaviorism strongly influenced early learning research and later motivated the development of cognitive approaches that reintroduced mental processes.

MCQ Memory Anchor

Behaviorism = observable behavior only

Watson → S–R psychology

Skinner → radical behaviorism, no mental terms

Cognitive Approaches to Learning

This slide introduces an approach that explains learning by focusing on mental processes that guide behavior, not just observable actions.

Definition (from slide):

An approach to learning that uses measures of behavior to develop and test theories about mental processes.

What this approach assumes (from slide):

The mind processes information by encoding, transforming, storing, and retrieving it

The mind is often compared to a computer

Learning involves forming an internal (mental) representation that guides behavior

The organism is an active processor of information, not a passive responder

Why this matters (as intended by the slide):

The cognitive approach contrasts with behaviorism by arguing that mental representations must be studied to fully understand learning.

MCQ Memory Anchor

Cognitive approach = behavior used to infer mental processes

Key idea = internal representations guide behavior

Repetition Priming

Definition (PROF’s exact slide words):

“Processing of a stimulus is affected by a previous presentation of it.”

Examples from the slide:

Can often be shown in preference (liking) judgments:

Maslow (1937): Preference for familiar tasks, familiar lab, and familiar pictures.

Not just humans: Rats show neophobia (fear of new things) for foods, places, and people.

Other methods of __ __:

Priming in perceptual identification: Easier to identify rapidly-shown words if they had been shown earlier.

Priming in word completion: Easier to complete word fragments (-V---V-, ---F-M-) if words had been seen earlier (e.g., EVASIVE, PERFUME).

Explanation (mine):

__ __ occurs when a previous exposure to a stimulus affects how you process it later. Essentially, if you see something multiple times, you’re faster and more accurate at recognizing or completing it. For example, seeing the word "EVASIVE" earlier makes you more likely to complete a word fragment like "-V---V-" with that word.

The Maslow (1937) example also shows that familiarity with a task or environment (like being in the same lab or seeing the same picture) leads to more positive reactions, because the brain processes familiar stimuli more easily.
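
The word-completion task above can be sketched as a simple matching check. This only shows how a fragment constrains the possible answers; it does not model the priming effect itself. The function names are hypothetical.

```python
def fits_fragment(fragment, word):
    """True if `word` completes `fragment`, where '-' stands for any letter."""
    if len(fragment) != len(word):
        return False
    # Every non-blank position in the fragment must match the word's letter.
    return all(f == "-" or f == w for f, w in zip(fragment.upper(), word.upper()))


def completions(fragment, vocabulary):
    """Return the words in `vocabulary` that fit the fragment."""
    return [word for word in vocabulary if fits_fragment(fragment, word)]


studied = ["EVASIVE", "PERFUME"]
print(completions("-V---V-", studied))  # ['EVASIVE']
print(completions("---F-M-", studied))  # ['PERFUME']
```

In the priming studies, participants who saw the word earlier are more likely to come up with the matching completion than participants who did not.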

Habituation

Definition (PROF’s exact slide words):

“Decrease in response to a stimulus after repeated presentations”

Bullets from the slide

Response declines with repetition

Not due to sensory adaptation or fatigue

Considered a simple form of learning

Examples from the slide

Looking response in infants decreases after seeing the same stimulus repeatedly

Coolidge effect: A male animal's sexual response decreases after repeated exposure to the same female (habituation). When a new female is introduced, the male's sexual response suddenly increases again.

Fear responses: Animals show decreased fear responses after repeated exposure to the same stimuli.

Siphon withdrawal in Aplysia: If Aplysia receives identical touches six times within a few minutes, there is almost no withdrawal response

Neural explanation: There is a change in the strength of the connection between the sensory neuron and the motor neuron involved in the response

Explanation (mine)

Across infants, animals, and even sea slugs, repeated exposure weakens the response because the nervous system learns the stimulus is not important. This learning can be seen all the way down at the level of synaptic connections between neurons.

Dishabituation

Definition (PROF’s exact slide words):

“Recovery of a response after habituation when a new stimulus is presented”

Example from the slide (fully explained)

Siphon withdrawal in Aplysia

Aplysia is a sea slug with a simple nervous system.

It has a body part called a siphon. When touched, it reflexively withdraws the siphon for protection.

If the siphon is touched repeatedly (six times within a few minutes), the withdrawal response becomes very small. This is habituation.

After this habituation, if you shine a bright light on the animal and then touch the siphon again, the siphon withdraws strongly.

Explanation (mine)

The strong withdrawal after the bright light shows the animal was not tired or fatigued. It had learned to ignore the repeated touch. The new stimulus (light) resets the nervous system, and the response returns.
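
The Aplysia sequence above can be sketched as a toy model. It assumes each touch halves the withdrawal strength and that a bright light restores it fully; both the `decay` value and the full reset are simplifying assumptions for illustration, not measured values.

```python
def run_session(events, decay=0.5, baseline=1.0):
    """Return the withdrawal strength recorded at each touch.

    events: sequence of "touch" or "light" (a novel, strong stimulus).
    """
    strength = baseline
    responses = []
    for event in events:
        if event == "touch":
            responses.append(strength)
            strength *= decay      # habituation: each touch weakens the next response
        elif event == "light":
            strength = baseline    # dishabituation: the novel stimulus restores it
    return responses


session = ["touch"] * 6 + ["light", "touch"]
responses = run_session(session)
for number, strength in enumerate(responses, start=1):
    print(f"touch {number}: withdrawal {strength:.3f}")
```

The sixth touch produces almost no withdrawal, but the touch after the light produces a full one, which fatigue alone could not explain.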

Discrimination

Definition (PROF’s exact slide words):

“The ability to tell the difference between two stimuli”

Example from the slide (now fully explained)

Bornstein et al. (1976): Used habituation and discrimination to show that 4-month-old infants can distinguish colors like adults.

Habituate the baby to a greenish blue (480 nm): Fixation times drop with repeated presentations.

After a break, present one of three slides: Habituation carries over more to blue (450 nm) than to bluish green (510 nm), so the infant treats blue as more similar to the original color.

Habituate to bluish green (510 nm): Fixation times drop with repeated presentations.

After a break, present one of three slides: Habituation carries over more to green (540 nm) than to greenish blue (480 nm).

Explanation (mine)

The experiment shows infants’ ability to discriminate between colors using habituation (getting used to a stimulus) and discrimination (noticing differences between stimuli). After the infants are repeatedly exposed to a color, their response decreases. When a color from a different category is introduced, their attention increases, showing that they can tell the difference.

Aplysia

Definition (PROF’s exact slide words):

“__ californica is a giant marine snail with a very simple nervous system with few neurons. The neurons are large and therefore easy to study.”

Example from the slide

The behavior studied was siphon withdrawal: Aplysia uses a siphon to take in sea water, and in response to danger, it can withdraw the siphon back into its body.

Siphon withdrawal shows habituation: If Aplysia receives identical touches six times within a few minutes, there is almost no withdrawal response.

Neurally, this happens because there is a change in the strength of the connection between the sensory neuron and motor neuron involved in the response.

Siphon withdrawal shows dishabituation: After habituation has occurred, if a bright light is shined, the animal will show a big siphon withdrawal again after a touch.

Explanation (mine)

Aplysia is used for studying simple forms of learning because it has large neurons, making it easy to observe neural changes. The siphon withdrawal reflex is a protective behavior. When Aplysia is repeatedly touched, it stops withdrawing its siphon (habituation). When a new stimulus (like the bright light) is added, the withdrawal response comes back (dishabituation), showing that the organism’s nervous system is learning to ignore some stimuli while responding to others.

Sensitization

Definition (PROF’s exact slide words):

“Magnification of a response as a result of repetition”

Examples from the slide

Siphon withdrawal in Aplysia: After a strong stimulus (such as a bright light), Aplysia shows an increased response to a mild touch, demonstrating __.

Emotional responses: Exposure to a painful stimulus (e.g., a bee sting) can make you more __ to minor stimuli afterward.

Explanation (mine)

___ occurs when an intense stimulus increases the magnitude of the response to subsequent, less intense stimuli. Behaviorally, it runs in the opposite direction from habituation, where repeated exposure decreases a response. In Aplysia, a bright light makes the animal more sensitive to a touch afterward, showing this change in neural sensitivity.

Thompson et al. (1973): Dual-Process Theory of Habituation and Sensitization

Definition (PROF’s exact slide words):

“Response to stimulus depends on two different sets of neurons”

Examples from the slide:

Type H neurons: These neurons are most directly involved in the reflex arc and tend to habituate to repeated stimulation.

Type S neurons: These are more central and reflect the general state of arousal in the organism; they enhance responsiveness (leading to sensitization).

Explanation (mine):

Thompson’s theory explains that habituation and sensitization are controlled by two separate systems of neurons:

Type H neurons are responsible for habituation. These neurons “get used to” the stimulus and start to respond less over time.

Type S neurons are responsible for sensitization. These neurons become more active when an organism is in a heightened state of arousal, making the response stronger.

The important takeaway: habituation and sensitization aren’t opposites. They are controlled by different neural systems that can operate simultaneously to shape behavior. For example, an animal might habituate to a repetitive noise (Type H neurons) but become more sensitive to a loud sound if it’s associated with danger (Type S neurons).
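
The idea that two systems operate at once can be sketched with a toy model. It assumes the observed response is the sum of a habituating reflex component (H) and an arousal component (S) that builds up with stimulus intensity; the additive form and all parameter values are hypothetical, chosen only to illustrate the logic.

```python
def dual_process(intensities, h_decay=0.7, s_gain=0.5, s_decay=0.8):
    """Net response per presentation = reflex strength (H) + arousal state (S)."""
    h = 1.0   # Type H: reflex-arc strength, shrinks with each presentation
    s = 0.0   # Type S: general arousal, boosted by intense stimuli, fades otherwise
    responses = []
    for intensity in intensities:
        s = s * s_decay + s_gain * intensity
        responses.append(h + s)
        h *= h_decay
    return responses


weak = dual_process([0.1] * 8)    # mild stimulus repeated eight times
strong = dual_process([1.0] * 8)  # intense stimulus repeated eight times
print(f"weak:   first {weak[0]:.2f}, last {weak[-1]:.2f}")
print(f"strong: first {strong[0]:.2f}, last {strong[-1]:.2f}")
```

With a mild stimulus the H component dominates and the net response falls (habituation); with an intense stimulus the S component dominates and the net response rises (sensitization), even though both processes are running in each case.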

Habituation and Sensitization

Habituation: A decrease in response to a stimulus after repeated exposure.

Sensitization: An increase in response to a stimulus following repeated exposure to that stimulus, particularly if the stimulus is intense or noxious.

Examples from the Slide:

Habituation

Example:

Siphon withdrawal in Aplysia: If Aplysia is repeatedly touched on the siphon (a tube it uses to take in sea water), the response of withdrawing the siphon decreases with repeated exposure.

Infant looking times: Babies may initially react to a new toy, but over time, they lose interest if the toy is presented repeatedly.

Sensitization

Example:

Loud noise and startle response: After hearing a loud sound (e.g., a firecracker), you may become more sensitive to other sounds, jumping at every small noise.

Shock response: After experiencing an intense shock, you may show heightened reactions to smaller, less intense stimuli.

Explanation (mine):

Habituation occurs when we stop responding to a stimulus after we’ve been exposed to it repeatedly. For instance, if you live near a busy street, at first, the sound of traffic might disturb you. But over time, you become less sensitive to the noise because you have learned to ignore it.

Sensitization, on the other hand, increases your sensitivity to a stimulus after experiencing it several times, especially if it’s strong or unpleasant. For example, if you’re shocked by a loud noise, you might jump at every small sound afterward.

Acquired Motivation

Definition (PROF’s exact slide words):

“Our emotional reactions to stimuli may change as a result of experience.”

Example from the slide

Motivation is not purely innate but may reflect learning.

Many emotional responses are biphasic (two different stages).

Explanation (mine)

Emotional reactions change over time with experience. For example:

Unpleasant stimuli may become more pleasant (like how fear can become excitement).

Pleasing stimuli may become unpleasant after too much exposure (like eating too much of your favorite food).

Opponent-process Theory of Acquired Motivation

Definition (PROF’s exact slide wording):

The __ __ theory suggests that emotional responses (both positive and negative) trigger a counteracting response after they occur. Over time, the initial emotional response (A) habituates, while the counteracting response (B) sensitizes, leading to stronger and more lasting emotional reactions.

Key Examples from the Slide:

Drug Tolerance and Addiction

Example: As a person becomes tolerant to a drug (like heroin), the initial euphoria (A) diminishes, but the negative withdrawal symptoms (B) become more pronounced. Over time, the person requires more of the drug to reach the same pleasurable effects, and the negative withdrawal feelings are amplified.

Skydiving

Example: Initially, a person might feel intense fear (A) during their first skydiving experience, followed by exhilaration (B). However, after multiple jumps, the fear response diminishes, and the excitement grows stronger, as their emotional system adapts.

Romantic Passion

Example: In romantic relationships, intense passion (A) may be followed by feelings of longing or loss (B). Over time, these feelings of longing may increase, even though the initial intensity of the romantic attraction weakens.

Shock Example (from the slide)

Example: If you are shocked (intense emotional experience A), your heart rate may increase drastically. However, after repeated shocks, the initial heart rate increase (A) will become less pronounced, while the drop in heart rate (B) after the shock becomes more pronounced and long-lasting. This shows that intense emotional reactions (A) tend to habituate while the counteracting responses (B) sensitize over time.

Explanation (mine):

The ___ ___ theory explains that emotions don’t happen in isolation — they trigger opposite responses (counterreaction B). The initial emotion (reaction A) weakens over time due to habituation, but the opposite emotional response (B) grows stronger through sensitization.

For example:

In drug addiction, you stop feeling the initial pleasure (A), but the withdrawal symptoms (B) become stronger and more intense.

In skydiving, your fear response (A) lessens, but the excitement (B) increases as you get more used to the activity.

Significance:

___ ___ theory explains how addiction and emotional adaptation work. It’s not just about the initial emotional experience; it’s about how the emotional system balances itself by adjusting over time.

This theory is useful for understanding tolerance in addiction and emotional changes in activities like skydiving or romantic passion.
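
The A and B reactions described above can be sketched numerically. This toy model follows the notes in assuming that A shrinks with each exposure while B grows toward a ceiling; the starting values, rates, and function name are hypothetical.

```python
def opponent_process(exposures, a_decay=0.8, b_growth=0.25, b_max=1.0):
    """Return a list of (a, b, net) tuples, one per exposure.

    a:   strength of the primary reaction (A), which habituates.
    b:   strength of the opponent reaction (B), which sensitizes toward b_max.
    net: what is felt while the stimulus is present (a minus b).
    """
    a, b = 1.0, 0.1
    history = []
    for _ in range(exposures):
        history.append((a, b, a - b))
        a *= a_decay                  # A weakens with repetition
        b += b_growth * (b_max - b)   # B strengthens with repetition
    return history


history = opponent_process(10)
for exposure, (a, b, net) in enumerate(history, start=1):
    print(f"exposure {exposure:2d}: A={a:.2f}  B={b:.2f}  net={net:+.2f}")
```

Early on the net experience is dominated by A (for a drug, euphoria). After many exposures B dominates, which matches the account of tolerance during use and stronger withdrawal afterward.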

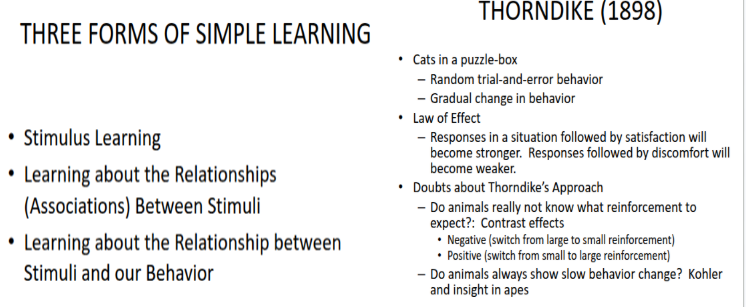

Stimulus Learning

Definition (PROF’s exact slide words):

“Learning about the relationships (associations) between stimuli.”

Examples from the Slide:

Recall / Recognition

Example: Free recall, cued recall, and recognition tasks measure how well we can retrieve information from memory.

Repetition Priming

Example: You identify a word faster if you’ve seen it before, such as identifying “cat” faster if it was shown to you earlier.

Habituation / Sensitization

Example:

Habituation: Over time, you stop reacting to a familiar stimulus (e.g., you stop noticing a clock ticking).

Sensitization: You become more sensitive to a stimulus after a strong or noxious experience (e.g., after an initial shock, you become more sensitive to other noises).

Opponent-process Theory of Acquired Motivation

Example: If you experience a positive emotion (e.g., excitement), you might eventually feel the opposite (e.g., boredom) after prolonged exposure.

Perceptual Learning

Example: After seeing a face repeatedly, you recognize and distinguish facial features (e.g., recognizing someone in a crowd).

Explanation (mine):

__ ___ occurs when organisms learn through exposure to stimuli and form associations between them. There are various ways of stimulus learning:

Habituation (ignoring repetitive, non-important stimuli)

Sensitization (becoming more sensitive to important stimuli)

Perceptual Learning (learning to recognize and distinguish specific stimuli like faces).

Repetition Priming shows us that previous exposure makes it easier to identify stimuli later.

Hedonic Treadmill

Definition (Prof’s exact slide wording):

Emotion systems adapt to life circumstances, returning us to our emotional set point.

Life experiences have only a temporary effect on our level of happiness.

Wealth and physical attractiveness have only a very weak relationship with happiness.

Lottery winners

Widows/widowers

Crippling accidents

Explanation (mine)

__ __ is a concept introduced by Brickman and Campbell (1971) and revisited by Diener et al. (2006). It suggests that our happiness levels tend to return to a baseline after significant life events, whether positive or negative. This model proposes that even major events, like winning the lottery or suffering a traumatic incident (e.g., the death of a loved one or a serious injury), have only a temporary effect on our happiness. Over time, we tend to adapt to these experiences, returning to a natural emotional set point.

Examples:

Lottery winners often report an increase in happiness initially, but after a period, their happiness tends to return to baseline levels.

Widows/widowers, while they experience a period of grieving and lower happiness, eventually return to their baseline emotional state.

Crippling accidents may lead to a period of distress, but people typically adapt, and their happiness levels stabilize over time.

This suggests that material gains or losses contribute little to long-term happiness. Internal factors (e.g., emotional set point, personality) and how we adapt to life events play a larger role in our happiness over time.
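
The return to a set point can be sketched as a simple adaptation rule. It assumes that at each time step happiness moves a fixed fraction of the way back toward the set point; the 0 to 10 scale, the size of each shock, and the `adaptation` rate are hypothetical values for illustration only.

```python
def happiness_after_event(set_point, shock, steps, adaptation=0.2):
    """Happiness over time after a life event shifts it away from the set point."""
    happiness = set_point + shock
    trajectory = [happiness]
    for _ in range(steps):
        # Adaptation pulls happiness a fixed fraction back toward the set point.
        happiness += adaptation * (set_point - happiness)
        trajectory.append(happiness)
    return trajectory


lottery = happiness_after_event(set_point=7.0, shock=2.0, steps=30)
accident = happiness_after_event(set_point=7.0, shock=-3.0, steps=30)
print(f"lottery:  start {lottery[0]:.1f}, end {lottery[-1]:.2f}")
print(f"accident: start {accident[0]:.1f}, end {accident[-1]:.2f}")
```

Both trajectories end close to the same set point despite starting far apart, which is the pattern the hedonic treadmill describes.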

Perceptual Learning

Definition (from the slide):

“Once we have learned how to perceive or identify a stimulus, it is easier to learn other things about it.”

Key Examples from the Slide:

Gibson & Gibson (1955)

Example: Participants were shown “scribble” cards (coiled drawings differing in tightness and left-right orientation). After repeated exposure to one "standard" scribble, participants were asked to determine if other scribbles matched it. With repeated trials, they became more accurate at distinguishing between scribbles.

Explanation: This shows that repeated exposure to similar stimuli helps improve the ability to distinguish subtle differences.

Gibson & Walk (1956)

Example: Rats raised in an enriched environment (exposed to shapes like circle and triangle) were faster to discriminate between the two shapes compared to rats in a control environment.

Explanation: This shows how repeated exposure to stimuli helps improve discrimination ability over time.

Additional Concepts and Mechanisms (added for depth):

Differentiation

Definition: Learning to make finer distinctions between stimuli that appear similar at first glance.

Example: In the Gibson & Gibson (1955) experiment, participants became more sensitive to subtle differences between scribbles as they practiced sorting them.

Explanation: Differentiation refers to the ability to notice minor differences between similar stimuli, which is key for recognizing and distinguishing things in our environment (e.g., identifying different kinds of animals or objects).

Stimulus Storage

Definition: Storing specific features of stimuli to enable faster recognition when new, similar stimuli are encountered.

Example: A person who has frequently encountered a particular type of flower will quickly identify a similar flower in a new environment because they’ve stored key features.

Explanation: When we are repeatedly exposed to stimuli, our brain stores important characteristics of that stimulus to help us recognize similar stimuli more quickly in the future.

Attention Weighting

Definition: Learning to focus on important features of stimuli while ignoring irrelevant ones.

Example: In the case of a penny, people learn to focus on the shape and color rather than irrelevant features like the scratches on the surface.

Explanation: Attention weighting allows us to prioritize which features of a stimulus are most relevant, which is why we don’t notice all aspects of an object (e.g., when looking at a face, we focus on the eyes and mouth, not the shape of the ears).

Unitization

Definition: Learning to combine stimuli into larger, cohesive units to process them more easily and holistically.

Example: The Word-superiority effect shows that it’s easier to recognize a whole word like “CAT” than to recognize the individual letters “C”, “A”, and “T” on their own.

Explanation: Unitization refers to the ability to process stimuli as a whole, rather than as separate parts. When we recognize something as a unit (like a word), our brain processes it more efficiently and accurately.

Memory Hook:

__ __ = Improved ability to perceive or identify stimuli with experience.

Significance:

These concepts show that __ ___ isn’t just about learning to recognize stimuli, it also involves fine-tuning how we process them, from differentiating between similar objects to focusing on relevant features.

As we become more experienced with a type of stimulus, we refine our ability to process and identify similar stimuli, making it easier to recognize and interact with the world around us.

Perceptual Learning in Holistic Face Recognition

Definition (from the slide):

Perceptual learning in face recognition refers to the process by which faces are recognized more easily when viewed as holistic units (whole faces), rather than as parts.

Key Examples from the Slide:

Composites Effect

Example (from slide):

Participants are shown half of a famous face. When this half is paired with half of another famous face, it becomes much more difficult to recognize. This happens because we treat faces holistically (as a whole), not just as individual parts.

However, it becomes easier to recognize the faces when the two halves are misaligned.

Explanation: This demonstrates that face recognition works better when we process faces as integrated wholes, not by individual features. The composite effect shows that holistic processing is important for recognizing faces. Misaligning the halves reduces the holistic effect, making it easier to recognize the faces.

Whole Advantage

Example (from slide):

Participants study a full face. When asked to recognize it later, they can distinguish it from another face that differs only in the nose. However, they cannot recognize the nose when it is shown in isolation.

Explanation: This shows that we process whole faces better than individual features. The Whole Advantage implies that face recognition is more accurate when the full face is presented rather than a single feature, such as the nose.

Inversion Effect

Example (from slide):

When faces are presented upside down, it disrupts face recognition, especially sensitivity to spatial relations between facial features (e.g., the distance between eyes, nose, and mouth).

Explanation: The Inversion Effect demonstrates that face recognition is much more difficult when faces are inverted. We process faces better when they are upright, and inversion disrupts our ability to process them holistically and accurately. This effect underscores how specialized face recognition mechanisms are in the brain, optimized for upright faces.

Explanation (mine):

In this slide, the key focus is on holistic face processing, which means we tend to recognize faces as a whole rather than focusing on individual features. The Composites Effect and Whole Advantage illustrate this by showing how much harder it is to recognize faces when they are split into parts or isolated features (like the nose). The Inversion Effect further emphasizes that our face recognition abilities are orientation-dependent, and we struggle more with faces presented upside down.

Significance:

The Composites Effect and Whole Advantage show that holistic processing is a fundamental aspect of face recognition.

The Inversion Effect highlights the specialization of the brain for processing faces in their natural, upright position.

This research has important implications for understanding how the brain processes faces differently from other objects.

🔥 The difference in one sentence

Composite Effect: The whole interferes with recognizing a part

Whole Advantage: The whole helps you recognize a part

Both prove: faces are processed holistically, not by features.

But:

Composite = whole is a problem

Whole advantage = whole is a benefit

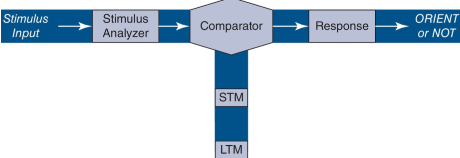

Wagner (1976) Cognitive Theory of Habituation

This theory emphasizes the distinction between short-term memory and long-term memory.

Habituation could reflect either short-term memory or long-term memory.

Responses are reduced if stimulus is recognized.

From the slide

Short-term habituation

Stimulus is likely to be recognized if it is still in short-term memory.

Massed repetitions increase probability that one or more occurrences are in STM.

Long-term habituation

Stimulus may be recognized if it can be retrieved from long-term memory.

Spaced repetitions increase probability that occurrences can be retrieved.

Expectancies / Missing stimulus effect

Our memories allow us to build expectancies.

We may show greater orienting response if expected stimulus fails to occur.

Diagram from the slide (what it means)

Stimulus Input → Stimulus Analyzer → Comparator → Response → ORIENT or NOT

The Comparator checks the current stimulus against what is stored in:

STM (recent exposures)

LTM (earlier exposures)

If the stimulus is recognized → reduced orienting (habituation)

If the stimulus is not recognized, or if an expected stimulus fails to occur → strong orienting response

Explanation (mine)

Wagner is saying habituation is not just “I’m bored of this stimulus.”

It is a memory process.

You stop responding because your brain says:

“I’ve seen this before.”

If you saw it very recently → STM → massed trials cause habituation

If you saw it a while ago but remember it → LTM → spaced trials cause habituation

If you expect it and it doesn’t happen → you react more, not less (missing stimulus effect)

This is why:

Massed trials = short-term habituation

Spaced trials = long-term habituation

Reminder: this slide is the reason your professor cares about spacing vs massing and memory in habituation.

Contingency Learning

Exact idea from the slide

Acquisition of knowledge about correlations between stimuli

Learning that one stimulus predicts another stimulus

even when that relationship is irrelevant to the task.

Example from the slide — Lin & MacLeod (2018)

Subjects complete 8 blocks, 48 trials each.

On every trial they see a word:

MONTH, UNDER, PLATE, CLOCK

printed in red, yellow, or green.

Their only task is to press a key for the color.

They are not told to pay attention to the word.

Critical manipulation

One word appears equally often in all three colors → baseline

Other words appear:

83.33% of the time in one color → high contingency

8.33% of the time in each of the other two colors → low contingency

Example pattern the brain starts picking up:

MONTH → usually red

UNDER → usually green
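The percentages in the critical manipulation are consistent with counts out of twelve. The sketch below checks that arithmetic. It assumes (the slide does not say this) that the four words appear equally often in a 48-trial block, so each word gets 12 trials, and that a contingent word appears 10 times in its frequent color and once in each other color.

```python
from fractions import Fraction

TRIALS_PER_BLOCK = 48  # from the slide
N_WORDS = 4            # MONTH, UNDER, PLATE, CLOCK
N_COLORS = 3           # red, yellow, green

# Assumption: every word is shown equally often within a block.
trials_per_word = TRIALS_PER_BLOCK // N_WORDS  # 12 trials per word

# Assumed split for a contingent word: 10 trials in one color, 1 in each other color.
high = Fraction(10, trials_per_word)
low = Fraction(1, trials_per_word)

# Baseline word: trials spread evenly over the three colors.
baseline = Fraction(trials_per_word // N_COLORS, trials_per_word)

print(f"high contingency: {float(high):.2%}")
print(f"low contingency (each other color): {float(low):.2%}")
print(f"baseline (each color): {float(baseline):.2%}")
```

Under these assumptions the high and low proportions for one word add up to 100% of its trials, which matches the 83.33% and 8.33% figures.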

Results from the slide

Compared to baseline:

High-contingency trials → faster responses

Low-contingency trials → slower responses

This happens:

Even though the word is irrelevant

In the first block (very fast learning)

What this actually shows (the part that was missing)

Your brain learns:

“This word predicts this color”

without trying, without awareness, and without needing it for the task.

So when MONTH appears, your brain is already biased toward red before you even look at the color.

That prediction makes you faster when the prediction is correct and slower when it is violated.

Why this is ___ ___

Because you learned the correlation:

Word ↔ Color

That is learning about the statistical relationship between stimuli.

That is __ ___.

Classical Conditioning

Classical Conditioning Origin of the idea — Ivan Pavlov

Ivan Pavlov was not a psychologist. He was a physiologist studying digestion in dogs.

While studying salivation, he noticed something strange. The dogs began salivating before food was placed in their mouths. They salivated when they heard the footsteps of the lab assistant, the sound of the door, or any cue that reliably occurred before food.

Pavlov realized the dogs were not simply reacting to food. They were learning that one stimulus predicts another stimulus.

This discovery is called Classical Conditioning.

Definition

Classical conditioning is learning about the relationship between stimuli.

A neutral stimulus becomes meaningful because it predicts something important.

Example from Pavlov:

Food naturally causes salivation.

If a tone is repeatedly presented before food, the tone alone will later cause salivation.

The dog learned: the tone predicts food.

Pavlov’s Basic Components (from slides)

Unconditioned stimulus — food

Unconditioned response — salivation to food

Conditioned stimulus — tone

Conditioned response — salivation to tone

The conditioned response is not necessarily identical to the unconditioned response

Extinction — elimination of a conditioned response when the conditioned stimulus is presented alone

Spontaneous recovery — extinction fades away with time

Other Common Procedures (from slides)

These are different laboratory methods used to demonstrate the same learning process.

Eyeblink conditioning

An airpuff to the eye naturally causes a blink. If a tone is repeatedly presented before the airpuff, the tone alone will later cause blinking. The organism learned the tone predicts the airpuff.

Fear conditioning (Conditioned Emotional Response)

A shock naturally causes freezing. If a tone is repeatedly presented before the shock, the tone alone will later cause freezing. The organism learned the tone predicts danger.

Skin Conductance Response (Galvanic Skin Response)

Skin conductance measures sweating and arousal. Humans show increased skin conductance to a stimulus that has been paired with shock. This is used to study the role of awareness in conditioning. The key point from the slide is that awareness is sufficient but not necessary for conditioning.

What your professor corrects on later slides

For many years, classical conditioning was seen as primitive: if conditioned stimulus–unconditioned stimulus pairing is repeated enough, the response to the unconditioned stimulus transfers to the conditioned stimulus.

The slides say this is wrong.

Many repetitions are not needed (for example, conditioned taste aversion)

Repetition alone is not sufficient; the value of information in the conditioned stimulus is critical

The conditioned response is not identical to the unconditioned response

Classical conditioning is a sophisticated cognitive process that allows organisms to predict the occurrence of important events.

Evidence: Devaluation Experiments (from slides)

A dog learns that a tone predicts food and salivates to the tone.

Then the food is devalued by making the dog sick after eating it.

When the tone is played again, the dog no longer salivates.

If conditioning were just reflex transfer, the dog would still salivate. Instead, the dog learned that the tone predicts food, and when food loses value, the response disappears.

Rescorla (1967) — Proper Control Condition

The conditioned stimulus works because it provides information about the unconditioned stimulus.

The informativeness of the conditioned stimulus is defined as the difference between:

the probability of the unconditioned stimulus when the conditioned stimulus is present

the probability of the unconditioned stimulus when the conditioned stimulus is absent

The ideal control condition is the Truly Random Control Procedure, where these probabilities are equal. In this case, no learning occurs because the conditioned stimulus provides no information.
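The difference between the two probabilities can be computed directly from trial counts. This is a minimal sketch: the function name `delta_p` and all of the counts are hypothetical and chosen only for illustration, not taken from Rescorla's data.

```python
def delta_p(us_with_cs, cs_trials, us_without_cs, no_cs_trials):
    """Return P(US | CS present) - P(US | CS absent) from trial counts."""
    p_us_given_cs = us_with_cs / cs_trials
    p_us_given_no_cs = us_without_cs / no_cs_trials
    return p_us_given_cs - p_us_given_no_cs


# Hypothetical counts: the US follows the CS on 8 of 10 trials,
# and occurs without the CS on 1 of 10 trials.
informative = delta_p(us_with_cs=8, cs_trials=10, us_without_cs=1, no_cs_trials=10)

# Truly random control: the US is equally likely with or without the CS.
truly_random = delta_p(us_with_cs=4, cs_trials=10, us_without_cs=4, no_cs_trials=10)

print(f"informative CS:  {informative:+.2f}")
print(f"truly random CS: {truly_random:+.2f}")
```

A value of zero corresponds to the truly random control, where the conditioned stimulus carries no information about the unconditioned stimulus.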

Explanation (mine)

Classical conditioning is not about repetition. It is about how well one stimulus allows the organism to predict another. The organism is learning predictions, not reflexes.

Unconditioned Stimulus (US)

Definition: e.g., food

Example from the slide

Food naturally produces salivation.

Explanation (mine)

This is a stimulus that automatically causes a response.

No learning is required.

Food → salivation happens naturally.

Unconditioned Response (UR)

Definition: e.g., salivation to food

Example from the slide

Salivating when food is placed in the mouth.

Explanation (mine)

This is the natural response to the unconditioned stimulus.

It happens before any conditioning.

Conditioned Stimulus (CS)

Definition: e.g., tone

Example from the slide

Originally, the tone does not cause salivation.

After being paired with food repeatedly, the tone alone produces salivation.

Explanation (mine)

The __ __ is a stimulus that starts out neutral.

It only becomes important because the animal learns:

“This stimulus predicts the US.”

The tone does not naturally cause salivation.

It gains meaning only through learning.

That’s why it’s called conditioned.

Conditioned Response (CR)

Definition: e.g., salivation to tone; not necessarily identical to the unconditioned response

Example from the slide

Dog salivates to the tone.

Explanation (mine)

This is the learned response to the conditioned stimulus

Important: It is not just a copy of the UR — it is a predictive response.

Extinction

Definition

Elimination of a conditioned response as a result of presentation of the conditioned stimulus alone

Example from the slide

Tone is presented repeatedly without food → salivation disappears.

Explanation (mine)

The animal learns:

“The CS no longer predicts the US.”

Spontaneous Recovery

Definition:

Extinction fades away as a result of the passage of time

Example from the slide

After extinction, if time passes and the tone is played again, salivation briefly returns.

Explanation (mine)

Extinction does not erase learning.

The association is still there and can come back after time.

Conditioned Inhibition

Definition:

A conditioned stimulus can become associated with the absence of the unconditioned stimulus, rather than its occurrence.

Most classical conditioning teaches an organism that a stimulus means something is about to happen. ___ ___ teaches the organism that a stimulus means something is not about to happen.

Summation Test (Pavlov)

Phase 1

A dog is first trained with two separate pairings:

A bell is followed by food. The dog salivates.

A light is followed by food. The dog salivates.

At this point, the dog has learned that both the bell and the light predict food.

Phase 2

Now the experimenter changes the situation:

The bell is presented together with a tone.

No food is given.

This happens many times.

The dog begins to learn that when the tone is present, food will not occur, even though the bell normally predicts food.

Test

The experimenter now presents the light together with the tone.

Normally, the light causes salivation.

But if salivation is greatly reduced when the tone is present, this shows that the tone has become a conditioned inhibitor. The dog has learned that the tone signals the absence of food.

Retardation of Acquisition Test

Phase 1

The dog is trained:

A bell is followed by food.

The dog salivates to the bell.

Phase 2

Now the bell and a tone are presented together and no food follows.

The dog learns that the tone means food will not happen.

Test

The experimenter now tries to condition the tone with food by pairing the tone with food.

If the dog is very slow to learn that the tone now predicts food, this shows that the tone had already been strongly learned as a signal for the absence of food. This slow learning is called retardation of acquisition.

Explanation (mine)

These experiments prove that the organism is not only learning that a stimulus predicts an event. The organism can also learn that a stimulus predicts that an event will not occur. This is a different kind of learning and shows that animals track both presence and absence of important events.

Basic Phenomena of Classical Conditioning

Pre-exposure of conditioned stimulus

Latent inhibition of conditioned stimulus–unconditioned stimulus association

Acquisition (learning) curve

Monotonic negatively accelerated curve

Effects of conditioned stimulus–unconditioned stimulus interval

Two ways of varying the interval:

Delay Conditioning: Conditioned stimulus starts before unconditioned stimulus and stays on until unconditioned stimulus is done

Trace Conditioning: Conditioned stimulus starts before unconditioned stimulus and turns off before unconditioned stimulus starts

Intermediate interval is best; depends on response

Little evidence for simultaneous or backward conditioning

Intensity of unconditioned stimulus

Typically enhances learning

Intensity of conditioned stimulus

Effect found in within-subject designs

Explanation (mine)

These are the regular patterns researchers always observe when doing classical conditioning experiments.

Latent inhibition: If the organism hears the tone many times before it is ever paired with food, it becomes harder for the tone to form an association with food later. The organism learned the tone was meaningless.

Acquisition curve: Learning happens fast at first and then slows down as it approaches a maximum level of responding.

Delay vs Trace: Learning works best when the conditioned stimulus predicts the unconditioned stimulus. If they happen at the same time or the unconditioned stimulus happens before the conditioned stimulus, learning is very weak.

Intensity of unconditioned stimulus: Stronger shocks, stronger airpuffs, or more food lead to faster learning.

Intensity of conditioned stimulus: Stronger tones or lights are easier to learn from when compared within the same subject.

This slide tells you the rules of how classical conditioning works in real experiments.

Conditioned Taste Aversion

Definition:

Avoidance of food as response to illness

Relative preparedness defined by the number of learning experiences that must occur before behavior change is reliable

Unusual Aspects (from slide)

Long delay

In normal classical conditioning, the conditioned stimulus must occur shortly before the unconditioned stimulus. In taste aversion, the illness can occur hours after the food was eaten and learning still happens.

One-trial learning

Most conditioning requires many pairings. Here, a single experience is enough for the organism to permanently avoid that food.

Only certain aspects are learned (taste in rats)

The rat associates the illness specifically with the taste, not with other things that were present like sounds, sights, or the environment.

What this looks like in an experiment (explained)

A rat drinks a new flavored water for the first time.

Several hours later, the rat becomes sick.

The next time the rat is offered that flavor, it refuses to drink it — even though:

The sickness happened much later

It only happened once

Other stimuli were present at the time but were ignored

The rat learned that the taste caused the illness.

Preparedness (what this explains)

Preparedness means some associations are biologically easier to learn than others.

Organisms are “prepared” to learn that taste predicts illness because this helps them avoid poisonous food. The number of experiences required before behavior reliably changes is called relative preparedness.

Taste and illness require very few experiences.

Other associations (like sound and illness) require many and often do not occur at all.

Explanation (mine)

This slide is showing that classical conditioning does not always follow the usual rules. Some types of learning happen extremely easily because the brain is built to make those connections for survival.

Preparedness

Definition

Relative __ defined by the number of learning experiences that must occur before behavior change is reliable

What this means (from slides across examples)

___ explains why some associations are learned extremely easily while others are very difficult to learn.

Organisms are biologically predisposed to form certain associations because those associations were important for survival in evolutionary history.

Examples from the slides

Conditioned taste aversion

A rat drinks a new flavored water and becomes sick hours later. After one experience, the rat avoids that taste forever.

The rat associates the illness specifically with the taste, not with sights or sounds that were present.

Phobias and fear conditioning (Öhman & Mineka, 2001)

Humans easily develop fear of snakes, spiders, heights, and angry faces. These are stimuli that were dangerous in our evolutionary past.

The fear response:

Happens automatically and involuntarily

Is difficult to override with logic or reasoning

Involves specialized neural circuits such as the amygdala

Little Albert (Watson & Rayner, 1920)

A child learned to fear a white rat when it was paired with a loud noise. He then generalized this fear to other white furry objects.

Explanation (mine)

__ shows that classical conditioning is not equally easy for all stimuli. The brain is wired to quickly learn associations that helped our ancestors survive, such as taste and illness or visual cues and danger. The fewer experiences required to learn an association, the more “prepared” the organism is to learn it.

Rescorla–Wagner Theory of Classical Conditioning

Definition:

Classical conditioning has evolved to allow animals to predict events.

Classical conditioning is primarily stimulus–stimulus learning.

Core Ideas (from slide)

Conditioning takes place to the extent that the unconditioned stimulus cannot successfully be predicted. In other words, the unconditioned stimulus must be surprising for learning to occur.

Every unconditioned stimulus is limited in the amount of associative strength it can support, and possible conditioned stimuli compete with each other for that associative strength.

The amount of learning that occurs on any trial depends on the difference between how much learning is possible for that unconditioned stimulus and how much conditioning has already occurred to the conditioned stimuli present.

Delta V = f(Lambda − V)

Lambda is the amount of conditioning the unconditioned stimulus can support.

V is the total associative strength of all conditioned stimuli present on that trial.
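To see how this rule produces the monotonic, negatively accelerated acquisition curve, here is a minimal sketch. It assumes f simply multiplies the error (Lambda − V) by a constant learning rate. The slide does not specify f, and the rate of 0.3 and Lambda of 1.0 are arbitrary illustrative values.

```python
def acquisition(n_trials, lam=1.0, rate=0.3):
    """Return associative strength V after each CS-US pairing."""
    v = 0.0
    history = []
    for _ in range(n_trials):
        delta_v = rate * (lam - v)  # assumed form of f: constant rate times the error
        v += delta_v
        history.append(v)
    return history


curve = acquisition(10)

# Trial-by-trial gain: first trial gain, then the differences between successive trials.
gains = [curve[0]] + [later - earlier for earlier, later in zip(curve, curve[1:])]

for trial, (v, gain) in enumerate(zip(curve, gains), start=1):
    print(f"trial {trial:2d}: V = {v:.3f}  (gain {gain:.3f})")
```

Each gain is smaller than the one before it, because V keeps moving closer to Lambda and the remaining error shrinks.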

What this looks like in an experiment (explained)

At the beginning of learning, a tone is followed by food. The food is surprising because nothing predicts it yet. A large amount of learning occurs.

As trials continue, the tone becomes a good predictor of food. The food is no longer surprising. Learning slows down.

If a second stimulus, such as a light, is added along with the tone, very little learning occurs to the light because the food is already predicted by the tone. The tone has already taken most of the associative strength the food can support. This explains blocking.

Explanation (mine)

This theory explains that conditioning is driven by prediction error. Learning happens when the outcome is unexpected. Once the organism can fully predict the unconditioned stimulus, no more learning occurs. Conditioned stimuli compete with each other because the unconditioned stimulus has a limited amount of learning it can support.

Overshadowing

Definition:

A stronger or more salient conditioned stimulus may be learned at the expense of a weaker conditioned stimulus

What actually happens in the experiment

An animal is trained with two stimuli at the same time:

A loud tone and a dim light are presented together → food.

This pairing happens many times.

Later, the experimenter tests each stimulus alone.

The animal salivates strongly to the tone, but barely responds to the light.

Why this happens (explained)

Both stimuli were paired with food the same number of times.

But the tone was more noticeable.

Because the tone stood out more, the animal mostly learned that the tone predicts food and paid little attention to the light.

The stronger stimulus “overshadowed” the weaker one during learning.
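Overshadowing can be sketched with a Rescorla–Wagner style update in which each stimulus has its own salience. The per-stimulus salience values are an assumption borrowed from the standard form of the model (they are not given on the slide), and the numbers 0.4 and 0.1 are arbitrary.

```python
def overshadowing(salience, n_trials=50, lam=1.0):
    """Train all stimuli together as a compound and return their final strengths.

    salience -- dict mapping stimulus name to its (assumed) learning-rate weight
    """
    v = {name: 0.0 for name in salience}
    for _ in range(n_trials):
        # Both stimuli share one prediction error based on their combined strength.
        error = lam - sum(v.values())
        for name, alpha in salience.items():
            v[name] += alpha * error  # the more salient stimulus takes a larger share
    return v


result = overshadowing({"loud_tone": 0.4, "dim_light": 0.1})
for name, strength in result.items():
    print(f"{name}: V = {strength:.2f}")
```

In this sketch the two stimuli receive identical pairings with food, yet the loud tone ends up with most of the associative strength simply because it gains more on every trial.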

Blocking (Kamin, 1969)

Definition: Phase 1: Conditioned stimulus 1 – unconditioned stimulus

Phase 2: Conditioned stimulus 1 and conditioned stimulus 2 – unconditioned stimulus

No conditioned response to conditioned stimulus 2

What actually happens in the experiment

First, an animal learns:

A tone is followed by food → salivation.

Now the animal fully expects food when it hears the tone.

Then the experimenter changes the setup:

Tone and light are presented together → food.

After many trials, the experimenter tests the light alone.

The animal does not salivate to the light.

Even though the light was paired with food many times, no learning happened to it.

Why this happens (explained)

Because the tone already perfectly predicted the food.

The food was not surprising anymore.

Since the light added no new information, the animal did not learn it.

This proves conditioning is about prediction, not just pairing.

__ : Pre-existing learning blocks the new stimulus (CS2) from being conditioned, because CS1 already predicts the US well, and no new information is provided by CS2.

Unblocking: Increased intensity of the US allows the new stimulus to be conditioned, despite prior learning.
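Blocking and unblocking can both be sketched with the same Rescorla–Wagner style update. This is an illustration under stated assumptions: the learning rate of 0.3 is arbitrary, and a stronger unconditioned stimulus is modeled as a larger Lambda (2.0 instead of 1.0), which is one common way to represent it rather than something the slide specifies.

```python
def rw_update(v, present, lam, rate=0.3):
    """One trial: every stimulus present is updated by the same prediction error."""
    error = lam - sum(v[s] for s in present)  # Lambda minus total V of stimuli present
    for s in present:
        v[s] += rate * error


def run(phase1_trials, phase2_lam, phase2_trials=30):
    """Tone-alone training, then tone + light compound training; return final V."""
    v = {"tone": 0.0, "light": 0.0}
    for _ in range(phase1_trials):
        rw_update(v, ["tone"], lam=1.0)
    for _ in range(phase2_trials):
        rw_update(v, ["tone", "light"], lam=phase2_lam)
    return v


blocked = run(phase1_trials=30, phase2_lam=1.0)    # tone pretrained, same US
control = run(phase1_trials=0, phase2_lam=1.0)     # no pretraining
unblocked = run(phase1_trials=30, phase2_lam=2.0)  # tone pretrained, stronger US

for name, strengths in [("blocked", blocked), ("control", control), ("unblocked", unblocked)]:
    print(f"{name:9s} V(light) = {strengths['light']:.3f}")
```

With pretraining and an unchanged US, the error in Phase 2 is close to zero, so the light gains almost nothing. Raising Lambda restores a prediction error, and the light acquires strength again.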

Unblocking

Definition (from the slide):

Occurs when a new stimulus (Stimulus 2) can become associated with the unconditioned stimulus (US) after the intensity of the US is increased, allowing it to become a more predictive signal for the response.

Slide Example (from the slide):

Phase 1: A light (Stimulus 1) is paired with a weak shock (Unconditioned Stimulus), causing a mild response.

Phase 2: A bell (Stimulus 2) is paired with the light (Stimulus 1) and a stronger shock.

Test: The bell (Stimulus 2) now causes a stronger response than before, because the stronger shock allowed the bell to be conditioned alongside the light.

Explanation (mine):

In __, you first have a conditioned response where Stimulus 1 (like the light) predicts something (like the shock).

When the shock’s intensity is increased, the brain recognizes this new “stronger” signal, and it allows Stimulus 2 (like the bell) to become associated with the US (shock) as well.

Without this increase in US intensity, Stimulus 2 would not have been able to make the same prediction about the shock.

Memory Hook: "__" learning by increasing the intensity of the unconditioned stimulus (US).

Imagine that earlier learning about Stimulus 1 (paired with a weak shock) blocks learning about a new stimulus (Stimulus 2). When the shock's intensity is increased, the block is removed, and the new stimulus can now trigger a response.

Blocking: Pre-existing learning blocks the new stimulus (CS2) from being conditioned, because CS1 already predicts the US well, and no new information is provided by CS2.

___: Increased intensity of the US allows the new stimulus to be conditioned, despite prior learning.

Safety Learning

Definition: An example of inhibitory classical conditioning in which a stimulus becomes a signal that something painful will not occur.

What the slide describes

Rescorla–Wagner theory emphasizes prediction error. Learning happens when what occurs does not match what is expected.

In this situation, Stimulus A becomes associated with something painful, such as a shock.

However, when Stimulus X is present along with Stimulus A, no shock occurs.

Over time, Stimulus X becomes a safety signal.

What this looks like in an experiment

An animal is trained so that a tone is followed by a shock. The animal shows fear when it hears the tone.

Now the experimenter presents the tone together with a light, and no shock occurs.

After repeated trials, the animal learns that when the light is present, the shock will not happen.

The light becomes a signal for safety, even though the tone normally predicts danger.

Explanation (mine)

This is inhibitory learning because the organism is not learning that the stimulus predicts something. It is learning that the stimulus predicts the absence of something painful. This explains how organisms can feel safe in situations that would normally cause fear when a safety cue is present.
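A prediction-error account of the safety signal can be sketched as follows. The sketch assumes that tone → shock trials and tone + light → no shock trials are intermixed (the description above does not say how the trials are ordered), and the learning rate of 0.2 is arbitrary.

```python
def rw_update(v, present, lam, rate=0.2):
    """One trial: every stimulus present is updated by the same prediction error."""
    error = lam - sum(v[s] for s in present)
    for s in present:
        v[s] += rate * error


v = {"tone": 0.0, "light": 0.0}
for _ in range(200):
    rw_update(v, ["tone"], lam=1.0)           # tone alone -> shock
    rw_update(v, ["tone", "light"], lam=0.0)  # tone + light -> no shock

print(f"V(tone)  = {v['tone']:.2f}")
print(f"V(light) = {v['light']:.2f}")
print(f"tone + light together = {v['tone'] + v['light']:.2f}")
```

In this sketch the light ends up with negative associative strength: on compound trials the tone predicts shock, none arrives, and the resulting negative error is what pushes the light below zero until it cancels the tone's prediction.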

Superconditioning

Definition (from the slide):

An extremely strong association between an excitatory conditioned stimulus and an unconditioned stimulus when that conditioned stimulus overrules an inhibitory conditioned stimulus.

Procedure from the slide:

Phase 1: Inhibitory conditioning of Stimulus 2 to the unconditioned stimulus. Stimulus 2 becomes a signal that the unconditioned stimulus will not occur.

Phase 2: Stimulus 1 and Stimulus 2 are presented together, followed by the unconditioned stimulus.

Phase 3: Test Stimulus 1 alone. Very strong response occurs.

What this looks like in an experiment (Example):

Phase 1: First, an animal learns that a light means no shock will happen. The light becomes a safety signal.

Phase 2: Now, the experimenter presents a tone together with the light, and a shock occurs.

Phase 3: Later, when the tone is presented alone, the fear response is much stronger than normal.

Explanation (mine):

Phase 1 involves the learning of a safety signal: the animal learns that the light predicts the absence of shock, so it doesn’t react when the light is present.

In Phase 2, the tone is presented alongside the safety signal (the light), and a shock occurs. The shock is unexpected because the animal has been conditioned to expect no shock when the light is present.

Phase 3 tests the tone alone, and the animal now shows a stronger response than usual, because the shock was unexpected in the previous phase, creating a large prediction error. That large error drives unusually strong learning about the tone.

Memory hook:

__ = A huge surprise after the safety signal is learned, causing an unexpectedly strong reaction to the associated stimulus.
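The size of the surprise can be illustrated with a Rescorla–Wagner style update, where learning on each trial is proportional to Lambda minus the total strength of the stimuli present. In this sketch the outcome of Phase 1 is not simulated but assumed: the light simply starts at −1.0 as a fully trained safety signal. The learning rate of 0.3 and the comparison against a neutral light are also illustrative choices.

```python
def rw_update(v, present, lam=1.0, rate=0.3):
    """One trial: every stimulus present is updated by the same prediction error."""
    error = lam - sum(v[s] for s in present)
    for s in present:
        v[s] += rate * error


def compound_training(light_start, n_trials=20):
    """Pair tone + light with shock, starting the light at a given strength."""
    v = {"tone": 0.0, "light": light_start}
    for _ in range(n_trials):
        rw_update(v, ["tone", "light"])
    return v


superconditioned = compound_training(light_start=-1.0)  # light is a safety signal (assumed)
control = compound_training(light_start=0.0)            # light is a neutral stimulus

print(f"V(tone) with safety signal: {superconditioned['tone']:.2f}")
print(f"V(tone) with neutral light: {control['tone']:.2f}")
```

Because the safety signal makes the first error twice as large, the tone ends up with roughly double the strength it gets when trained alongside a neutral light.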

Overexpectation Effect

Definition:

Phase 1: Conditioned stimulus 1–unconditioned stimulus trials intermixed with conditioned stimulus 2–unconditioned stimulus trials

Phase 2: Conditioned stimulus 1 and conditioned stimulus 2 together–unconditioned stimulus trials

Result: Reduced responding to conditioned stimulus 1 and conditioned stimulus 2

What actually happens in the experiment

First, an animal learns:

A tone is followed by food → salivation

A light is followed by food → salivation

Each stimulus alone strongly predicts food.

Now the experimenter presents:

Tone and light together → food

Later, the experimenter tests the tone alone and the light alone.

The animal salivates less to each than before.

Why this happens (explained)

When the tone and light are presented together, the animal expects a lot of food because both previously predicted food.

But only the same amount of food is delivered.

The outcome is less than expected, creating a negative prediction error.

Because of this, associative strength for both stimuli decreases — even though food was still presented.
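The negative prediction error can be illustrated with a Rescorla–Wagner style update. This is a sketch under stated assumptions: the learning rate of 0.3, the trial counts, and Lambda = 1.0 for a single food delivery are arbitrary illustrative values.

```python
def rw_update(v, present, lam=1.0, rate=0.3):
    """One trial: every stimulus present is updated by the same prediction error."""
    error = lam - sum(v[s] for s in present)
    for s in present:
        v[s] += rate * error


v = {"tone": 0.0, "light": 0.0}

# Phase 1: tone -> food and light -> food on separate, intermixed trials.
for _ in range(30):
    rw_update(v, ["tone"])
    rw_update(v, ["light"])
after_phase1 = dict(v)

# Phase 2: tone + light together -> the same single food delivery.
for _ in range(30):
    rw_update(v, ["tone", "light"])
after_phase2 = dict(v)

print("after Phase 1:", {k: round(x, 2) for k, x in after_phase1.items()})
print("after Phase 2:", {k: round(x, 2) for k, x in after_phase2.items()})
```

At the start of Phase 2 the combined prediction is about twice what the food can support, so the error is negative and both stimuli lose strength until together they predict a single food delivery.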

Explanation (mine)

This is called extinction without extinction trials because responding decreases even though the unconditioned stimulus was never removed. The decrease happens because the outcome did not match the organism’s high expectation.

Hall-Pearce Negative Transfer

Definition:

Evidence that subjects may reduce attention to a conditioned stimulus when its association with an unconditioned stimulus seems to be well-understood.

It is called __ __ because learning of one association in Phase 1 impairs learning of a different association in Phase 2.

The experiment (Hall & Pearce, 1979 — from slide)

Phase 1

A tone is followed by a weak shock for 66 trials.

The animal learns that the tone predicts a weak shock.

Phase 2

The same tone is now followed by a strong shock.

The animal learns this new association very slowly. It learns much more slowly than a control group that never heard the tone in Phase 1.

What this shows

Because the animal already “thought it knew” what the tone predicted, it paid less attention to the tone in Phase 2.

This reduced attention makes new learning about the tone harder.

Explanation (mine)

This experiment shows something the Rescorla–Wagner theory cannot explain. The theory focuses only on prediction error, but this experiment shows that attention to the conditioned stimulus changes over time. When an organism believes it understands what a stimulus predicts, it pays less attention to that stimulus, which slows later learning about it.
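One way to picture the attention idea is a toy simulation in which attention to the tone tracks how surprising recent outcomes were. This is a simplified sketch in the spirit of attention-based models, not the exact published Pearce–Hall equations: the update rule, the parameters `s` and `gamma`, and the shock values 0.3 (weak) and 1.0 (strong) are all assumptions chosen for illustration.

```python
# Toy attention-based learning sketch (assumed rule, not the published model).
# Attention (alpha) moves toward the size of the most recent prediction error.

def train(v, alpha, lam, trials, s=0.5, gamma=0.3):
    """Return (strength, attention, history) after `trials` tone -> shock pairings."""
    history = []
    for _ in range(trials):
        error = lam - v
        v += s * alpha * error                            # learning scaled by attention
        alpha = gamma * abs(error) + (1 - gamma) * alpha  # attention follows surprise
        history.append(v)
    return v, alpha, history

WEAK, STRONG = 0.3, 1.0  # assumed outcome values for weak and strong shock

# Pre-exposed group: 66 tone -> weak shock trials, then tone -> strong shock.
v_pre, alpha_pre, _ = train(0.0, 1.0, WEAK, 66)
_, _, pre_history = train(v_pre, alpha_pre, STRONG, 3)

# Control group: the tone is novel in Phase 2, so attention starts high.
_, _, control_history = train(0.0, 1.0, STRONG, 3)

print("Attention at start of Phase 2 (pre-exposed):", round(alpha_pre, 3))
print("Strength after 3 Phase 2 trials, pre-exposed:", round(pre_history[-1], 3))
print("Strength after 3 Phase 2 trials, control:", round(control_history[-1], 3))
```

In this sketch the pre-exposed group starts Phase 2 with a head start in strength but very low attention, so the control group overtakes it within the first few trials.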

Perruchet Effect

Definition (EXACT words from the slide):

“Subjects’ conscious expectations may differ from physiological responses during classical conditioning.”

Original Experiment (Perruchet, 1985) — from the slide

Eyeblink conditioning

p(airpuff | tone) = .5

This means:

Every time the tone plays, there is a 50% chance an airpuff will hit the eye.

So sometimes the tone is followed by an airpuff, and sometimes it is not.

Two things were measured:

Whether the person blinked when the tone played (the learned physiological response)

What the person thought would happen (they rated how likely the airpuff was on a 0–7 scale)

What the slide says happened

If several recent trials had no airpuff after the tone:

People’s expectancy ratings went UP

They believed: “The airpuff is now more likely” (gambler’s fallacy)

But their eyeblink response went DOWN

Their body had learned the tone is less predictive

If several recent trials did have airpuffs after the tone:

People’s expectancy ratings went DOWN

But their eyeblink response went UP

What the graph slide shows

As the number of tone-alone trials increases:

Expectancy ratings ↑

Eyeblink probability ↓

They move in opposite directions.
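The opposite trends can be sketched with two toy functions: one tracks associative strength with a simple error-correcting update, the other mimics a gambler's-fallacy style rating. Both functions, the starting value of 0.5, the learning rate, and the rating step are hypothetical illustrations, not the measures or numbers from the experiment.

```python
# Toy sketch of the dissociation (assumed functions and parameters).

def strength_after_run(run, reinforced, v0=0.5, rate=0.2):
    """Associative strength after `run` identical trials in a row."""
    lam = 1.0 if reinforced else 0.0  # airpuff present or absent
    v = v0
    for _ in range(run):
        v += rate * (lam - v)
    return v

def gambler_rating(run, reinforced, step=0.1):
    """Hypothetical expectancy: the longer the run, the more a change is expected."""
    shift = step * run
    if reinforced:
        return max(0.0, 0.5 - shift)
    return min(1.0, 0.5 + shift)

print("run  strength  expectancy   (tone-alone runs)")
for run in range(1, 5):
    s = strength_after_run(run, reinforced=False)
    e = gambler_rating(run, reinforced=False)
    print(run, round(s, 3), round(e, 2))
```

As the run of tone-alone trials gets longer, the strength column falls while the expectancy column rises, which is the mismatch the graph describes.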

Replication slide (Perruchet et al., 2006)

Same effect using reaction times:

A light sometimes predicts a tone (50% of the time)

Reaction times get faster when light predicts tone

After many trials where the tone does NOT follow the light:

Reaction-time benefit decreases

But people’s predictions that the tone will occur increase

Same mismatch.

Implication (EXACT from slide)

“Classical conditioning in humans could reflect two distinct processes:”

Automatic, unconscious associative processes (shown by the eyeblink response)

Conscious expectations driven by cognitive processes (shown by expectancy ratings)

Explanation (mine)

The person’s body learns from actual experience.

The person’s mind predicts using logic.

So the person thinks the airpuff is more likely…

while their eyeblink shows they’ve learned it’s less likely.

That contradiction is the __ ___.

Evaluative Conditioning

Definition (exact slide words):

“change in affective response (usually ratings) to a previously neutral stimulus after pairing with another (more emotion-provoking) stimulus.”

Step 1 — What “affective response” means

Affective response = how you feel about something

Do you like it? dislike it? feel neutral?

This slide is about changing feelings, not changing reflexes.

Step 2 — What is “previously neutral stimulus”

Something you had no feelings about before.

Example: a random picture.

Step 3 — What does “pairing” mean here

The neutral thing is shown at the same time as something that already makes you feel something.

You are not told to learn.

You are not predicting anything.

You are just experiencing them together.

Slide Example 1 — Razran (1938)

People looked at pictures

While they were eating food

Eating food = pleasant emotional state.

Later…

Those same pictures were rated more attractive.

Why?

Because your brain linked:

picture + feeling good

So now the picture feels good.

Slide Example 2 — Hammerl et al. (1997)

Neutral pictures paired with liked pictures

Neutral pictures paired with disliked pictures

Later shown alone and rated

Results:

Paired with liked → now liked

Paired with disliked → now disliked

Again, the neutral picture did not predict anything.

It just shared emotional space with something else.

What this is teaching (very important)

Earlier in this unit you saw:

Eyeblink conditioning

Fear conditioning

Skin conductance

Those showed:

learning to react

This slide shows:

learning to feel

That is a completely different kind of learning.

Why this matters (connection to phobias slide)

Think about a phobia.

A neutral thing (dog, elevator, airplane)

gets paired with a scary experience.

Now the thing itself feels scary.

That is evaluative conditioning.

Explanation (mine)

You are not learning:

“This predicts something.”

You are learning:

“This makes me feel good”

or

“This makes me feel bad.”

And you don’t even realize it happened.

That’s why this explains:

Phobias

Preferences

Advertising

Emotional biases

Causal Learning

Definition (from slides)

__ ___: How do we decide that one event causes another?

Classical conditioning represents learning of associations between events. Does this sort of learning underlie other sorts of cognitive processing?

Key idea from slides

We often use the same associative principles from classical conditioning when deciding whether one thing causes another.

Major example from slides — Gluck & Bower (1988): medical diagnosis task

Subjects study patient files listing:

Symptoms

Diagnosis (imaginary diseases)

Later they are tested by:

Rating how strongly symptoms are associated with diseases, or

Seeing symptoms and making a diagnosis

This shows how people learn cause–effect relationships the same way they learn CS–US relationships.

Connection to classical conditioning (explicit slide bullets)

__ __ shows the same effects as conditioning:

Frequency of Cause–Effect pairing → learning curve

Effect of relative validity

Effect of redundant information (blocking)

Effect of more salient cause (overshadowing)

These are Rescorla-Wagner effects showing up in human reasoning.
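As one illustration of these effects, blocking can be sketched with the Rescorla–Wagner rule applied to symptoms and a disease. The symptom names ("fever", "rash"), the learning rate, and the trial counts are hypothetical and are not taken from the Gluck & Bower task.

```python
# Rescorla-Wagner sketch of blocking in a symptom -> disease setting.
# Symptom names, learning rates, and trial counts are assumptions.

def rw_trial(v, cues, outcome, rates):
    """Update every present cue by its rate * (outcome - summed prediction)."""
    error = outcome - sum(v[c] for c in cues)
    for c in cues:
        v[c] += rates[c] * error

rates = {"fever": 0.2, "rash": 0.2}

# Blocking group: fever alone predicts the disease first, then fever + rash.
blocked = {"fever": 0.0, "rash": 0.0}
for _ in range(40):
    rw_trial(blocked, ["fever"], 1.0, rates)
for _ in range(40):
    rw_trial(blocked, ["fever", "rash"], 1.0, rates)

# Control group: only the fever + rash -> disease phase.
control = {"fever": 0.0, "rash": 0.0}
for _ in range(40):
    rw_trial(control, ["fever", "rash"], 1.0, rates)

print("Blocking group:", blocked)
print("Control group:", control)
```

In the blocking group the rash gains almost no strength because the fever already predicts the disease, so there is little prediction error left to drive learning. In the control group the two symptoms share the strength. Giving one symptom a larger rate than the other in the control group would sketch overshadowing in the same way.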

Philosophical background from slides

David Hume (1748): We never see causation directly. We judge it when:

Cause and effect are close in time and space

Cause happens before effect

There is no other likely cause

Bertrand Russell (1912): causation is not obvious in the world — it is inferred.

Your professor includes this to show:

Causality is a cognitive construction, built from associations.

Important limitation from slides

Association strength is not the only factor in causal learning.

Other factors:

Our prior knowledge

Understanding of mechanisms

Strong beliefs can create illusory correlations (Chapman & Chapman, 1969) — seeing relationships that do not exist.

What this term is really teaching

Humans use associative learning mechanisms (like conditioning) as a foundation for:

Diagnosing problems

Predicting events

Planning actions

Judging what causes what

Causal reasoning is conditioning plus cognition.

Explanation (mine)

This term shows that classical conditioning is not just about dogs and tones. The same learning rules explain how humans decide that “this causes that.” Blocking, overshadowing, and relative validity are not just lab effects — they shape how we interpret the world.

Delay Conditioning

Definition: Conditioned stimulus starts before unconditioned stimulus and stays on until the unconditioned stimulus ends.

What the slide is showing

The conditioned stimulus and unconditioned stimulus overlap in time.

Example (apply Pavlov)

Tone starts → food comes while tone is still playing → tone stops after food.

The dog hears the tone during the food.

Explanation (mine)

This is the most effective form of classical conditioning because the organism experiences the conditioned stimulus while the important event is happening.

It makes prediction easy: the sound starts just before the food and is still playing when the food arrives.

Trace Conditioning

Definition: Conditioned stimulus starts before unconditioned stimulus and turns off before the unconditioned stimulus begins.

What the slide is showing

There is a gap between conditioned stimulus and unconditioned stimulus.

Example (apply Pavlov)

Tone plays → tone stops → short pause → food appears.

The dog must remember the tone during the gap.

Explanation (mine)

This requires memory. The organism must keep a “trace” of the conditioned stimulus in mind to connect it to the unconditioned stimulus.

This is harder and usually produces weaker conditioning than delay conditioning.

Sensory Preconditioning

Definition:

Phase 1: Two neutral stimuli are paired together (no food yet)

Phase 2: One of those stimuli is paired with food

Test: The other stimulus now causes salivation

Concrete Example

Phase 1:

A light turns on at the same time as a tone. No food. Nothing happens.

The dog just learns: light and tone go together.

Phase 2:

The tone is now paired with food. The dog salivates to the tone.

Test:

The light alone now causes salivation.

Explanation (mine)

The dog learned the relationship between the light and the tone first, before either meant anything.

When the tone later gained meaning, the light inherited that meaning.

This is evidence that conditioning can involve stimulus–stimulus learning: the light was never paired with food, yet it still causes salivation.

__ ___: Two neutral stimuli (e.g., A and B) are paired before any conditioning. Later, when Stimulus A is conditioned, Stimulus B will also evoke a response, even though it was never directly paired with the unconditioned stimulus.

Second-Order Conditioning: Stimulus A is already conditioned to elicit a response. Then, Stimulus B is paired with Stimulus A, and Stimulus B can now elicit the same response, even though it was never paired with the unconditioned stimulus.

Key difference: In __ __, the pairing of stimuli happens before conditioning. In Second-Order Conditioning, the first stimulus is conditioned before pairing with the second one.

Second-Order Conditioning

Definition (from slide)

Phase 1: A stimulus is paired with food

Phase 2: A new stimulus is paired with that stimulus

Test: The new stimulus causes salivation

Concrete Example

Phase 1:

Tone → food. Dog salivates to tone.

Phase 2:

A light turns on before the tone. No food is delivered.

Test:

The light alone now causes salivation.

Explanation (mine)

The light was never paired with the food.

It works because it predicts something that predicts food.

The dog is learning chains of prediction.

Sensory Preconditioning: Two neutral stimuli (e.g., A and B) are paired before any conditioning. Later, when Stimulus A is conditioned, Stimulus B will also evoke a response, even though it was never directly paired with the unconditioned stimulus.

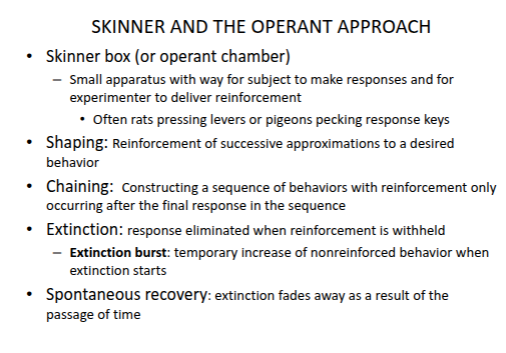

__ ___: Stimulus A is already conditioned to elicit a response. Then, Stimulus B is paired with Stimulus A, and Stimulus B can now elicit the same response, even though it was never paired with the unconditioned stimulus.