Marketing Research Quiz #2 Study Guide

All surveys are wrong;

But good surveys are useful (i.e., actionable)

What are the sections of a survey?

Request for cooperation, screening, information sought, classification data

What’s a screening question used for?

Determine "terminates" (respondents who do not qualify, so the survey ends for them)

Target information (key issues being studied)

What is the best flow for a typical survey?

qualifying questions

warm-ups

transitions

difficult and complicated questions

classifying and demographic questions

Survey pretesting guidelines:

Question reliability: Did all respondents interpret it in the same way?

Question validity: Did they understand it?

Survey length: Were respondents bored? fatigued?

Scaling issues: Did they understand the scales? Were scale points clear?

Response Bias: Was there a lot of end-piling going on? (give high ratings on all attributes)

Survey pretesting watchouts:

You have to reread the question or repeat it several times

Respondents reply with phrases that do not match the scale options

Too many respondents use the same scale category (not enough discrimination)

Comments on open-ended questions are inconsistent with responses to closed-ended questions

High number of "don't know" or "does not apply"

Non-response rate to a question is unusually high (>10% is a rule of thumb)
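
The 10% rule of thumb can be checked mechanically on pretest data. This is a minimal sketch, assuming answers are stored per question with `None` marking a skipped item; the function name and the sample data are hypothetical.

```python
def high_nonresponse_questions(answers_by_question, threshold=0.10):
    """Return {question: nonresponse rate} for questions above the threshold."""
    flagged = {}
    for question, answers in answers_by_question.items():
        if not answers:
            continue  # nobody was asked this question
        skipped = sum(1 for a in answers if a is None)
        rate = skipped / len(answers)
        if rate > threshold:
            flagged[question] = rate
    return flagged

# Hypothetical pretest with 10 respondents; None = no answer given
pretest = {
    "Q1": [5, 4, 4, 3, 5, 4, 2, 5, 4, 3],           # 0 of 10 skipped
    "Q2": [3, None, 4, None, 2, 5, None, 4, 3, 1],  # 3 of 10 skipped
    "Q3": [1, 2, None, 2, 3, 1, 2, 2, 3, 1],        # 1 of 10 skipped
}
flagged = high_nonresponse_questions(pretest)
print(flagged)
```

Only Q2 is flagged here, because the check is strictly "greater than 10%" and Q3 sits exactly at 10%.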

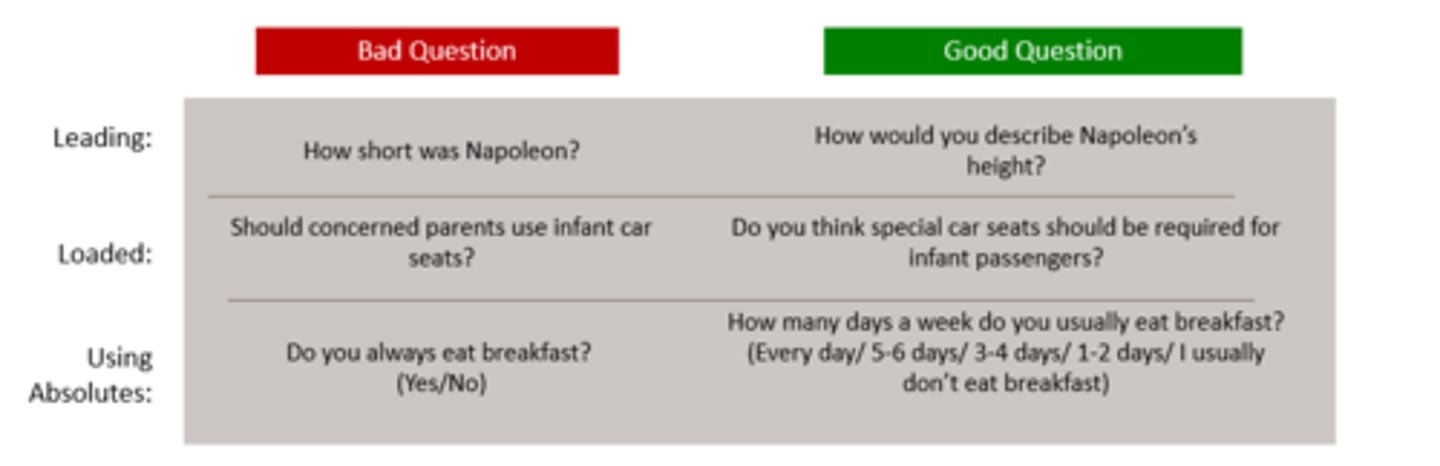

Bad Questions:

Leading, Loaded, Absolutes, Double-Barreled, No instructions, Bad grammar/typos

Biased questions lead to unreliable results and ultimately wrong decisions

Leading Question

a question that suggests a particular answer (How short was Napoleon?)

Loaded Question

has an assumption in the question (Have you stopped cheating on tests? or What made you decide to ignore the return policies?)

Double-Barreled Question

asks about two things in one question, often joined by “and” (How often do you visit our website and shops?)

Sampling

The process of obtaining information from a subset of a larger group. We then take the results from the sample and project them to the larger group

Sample

a subset of the population (ex: Surveying some of the U.S. dog owners)

Census

a sample that is the whole population, not very feasible (ex: Surveying all U.S. dog owners)

Population

refers to the entire group of people about whom we need to obtain information (ex: all U.S. dog owners)

Population of Interest

the target population

A population (N) is all cases that meet designated specifications for membership in the group

Be very clear and precise in defining the target population

The more specific your target population definition (geographic area, demographics, product or service usage, brand awareness, etc.), the harder and more costly it is to find a sample

Also define the characteristics of individuals who should be excluded (screening criteria)

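Statistical Notation N

Population size: the number of elements in the population. A characteristic or measure of a population is called a parameter

Statistical Notation n

Sample size: the number of elements in the sample. A characteristic or measure of a sample is called a statistic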

Sampling Frame

The source material - or list of population elements - from which a sample (n) will be drawn

Commonly used frames: customer databases, lists developed by data compilers, trade associations, media companies

What are Sampling methods?

Nonprobability Sample

Probability Sample

Nonprobability Sample

Relies on personal judgment in the element selection process

Neither sampling error nor the margin of sampling error can be estimated or calculated

Probability Sample

Each target population element has a known, non-zero chance of being included in the sample

The laws of probability allow calculation of the extent to which a sample value can be expected to differ from a population value

This difference is referred to as sampling error

Types of Nonprobability Samples?

Convenience

Judgment

Snowball

Quota

Types of Probability Samples?

Simple Random

Systematic

Stratified

Cluster (Area)

Convenience Sample

nonprobability

the right place at the right time, “on street” interviews

Judgment Sample

nonprobability

handpicking people who are knowledgeable about the issue

Snowball Sample

nonprobability

low-incidence or rare populations, respondents refer others who might be interested

Quota Sample

nonprobability

interview an equal number of people in each subgroup, even if that is not representative of the actual population (50 freshmen and 50 sophomores even if there are more freshmen than sophomores overall)

Simple Random Sample

probability

everyone has an equal chance of being selected

Probability of selection = sample size / population size
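
A simple random sample can be sketched with Python's standard `random` module. This is an illustration only: the population of 1,000 numbered IDs and the function names are assumptions, not part of the study guide.

```python
import random

def simple_random_sample(population, n, seed=None):
    """Draw n elements without replacement; each element is equally likely."""
    rng = random.Random(seed)
    return rng.sample(population, n)

def selection_probability(n, N):
    """Probability of selection = sample size / population size."""
    return n / N

population = list(range(1, 1001))  # hypothetical sampling frame of 1,000 IDs
sample = simple_random_sample(population, 50, seed=1)
prob = selection_probability(len(sample), len(population))
print(len(sample), prob)
```

With n = 50 and N = 1,000, each element has a 50 / 1,000 = 0.05 chance of being selected.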

Systematic Sample

probability

counting every kth person that walks in (k = sampling interval, like 2 or 3)

Skip Interval = population size / sample size

Simpler, less time-consuming, less expensive than simple random sampling
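
The skip-interval rule can be sketched as follows, assuming the sampling frame is an ordered list and the starting point is chosen at random within the first interval. The function name and the 1,000-element frame are hypothetical.

```python
import random

def systematic_sample(population, n, seed=None):
    """Take every kth element after a random start, where k = N // n."""
    N = len(population)
    if not 0 < n <= N:
        raise ValueError("sample size must be between 1 and the population size")
    k = N // n                      # skip interval = population size / sample size
    rng = random.Random(seed)
    start = rng.randrange(k)        # random start within the first interval
    return [population[start + i * k] for i in range(n)]

frame = list(range(1000))           # hypothetical ordered sampling frame
sample = systematic_sample(frame, 50, seed=2)
gaps = {b - a for a, b in zip(sample, sample[1:])}
print(len(sample), gaps)
```

Here k = 1,000 / 50 = 20, so consecutive selections are always 20 positions apart.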

Stratified Sample

probability

divide population into subgroups (mutually exclusive), then randomly select from each group using a simple random sample

most used!
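
A minimal sketch of stratified sampling, assuming each unit carries a label for its stratum and the number to draw per stratum is decided in advance. The student data and the proportional 60/40 allocation are hypothetical.

```python
import random
from collections import defaultdict

def stratified_sample(units, stratum_of, n_per_stratum, seed=None):
    """Split units into mutually exclusive strata, then draw a simple
    random sample of the requested size from each stratum."""
    rng = random.Random(seed)
    strata = defaultdict(list)
    for unit in units:
        strata[stratum_of(unit)].append(unit)
    sample = []
    for name in sorted(strata):
        sample.extend(rng.sample(strata[name], n_per_stratum[name]))
    return sample

# Hypothetical population: 600 freshmen and 400 sophomores
students = [("freshman", i) for i in range(600)] + [("sophomore", i) for i in range(400)]
sample = stratified_sample(
    students,
    stratum_of=lambda s: s[0],
    n_per_stratum={"freshman": 60, "sophomore": 40},  # proportional allocation
    seed=3,
)
counts = {name: sum(1 for s in sample if s[0] == name) for name in ("freshman", "sophomore")}
print(counts)
```

Unlike a quota sample, selection inside each subgroup is random, so sampling error can still be estimated.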

Cluster (Area) Sample

probability

sampling units are selected from a number of small geographic areas to reduce data-collection costs

ex: divide the building into floors and randomly choose 10 floors. On each floor, randomly choose 3 apartments and interview their occupants
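
The building example can be sketched as a two-stage draw: first pick floors at random, then pick apartments at random on each chosen floor. The 20-floor building with 8 apartments per floor is a made-up illustration.

```python
import random

def two_stage_cluster_sample(building, n_floors, n_apartments, seed=None):
    """Stage 1: randomly choose floors (clusters).
    Stage 2: randomly choose apartments within each chosen floor."""
    rng = random.Random(seed)
    floors = rng.sample(sorted(building), n_floors)
    return {floor: rng.sample(building[floor], n_apartments) for floor in floors}

# Hypothetical building: floors 1-20, apartments 1-8 on each floor
building = {floor: [f"{floor}-{apt}" for apt in range(1, 9)] for floor in range(1, 21)}
chosen = two_stage_cluster_sample(building, n_floors=10, n_apartments=3, seed=4)
print(len(chosen), sum(len(apts) for apts in chosen.values()))
```

Interviewers only need to visit 10 floors for 30 interviews, which is the cost saving that cluster sampling is meant to provide.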

Level of Acceptable Error (E) Impact on Sample Size

inversely related

As E increases, the required sample size (n) decreases (less precision)

As E decreases, the required sample size (n) increases (more precision)

As required confidence levels increase/decrease, how does it impact the sample size required?

If you can tolerate a lower level of confidence, it allows for a smaller sample size

If you require a higher level of confidence, you will need a larger sample size

Elements needed to determine appropriate sample size

Margin of error (E)

Confidence level (95%, 99%, etc.) desired (Z)

Variability in population (s [Standard Deviation] or p [Proportion])
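
These three elements combine in the standard textbook sample-size formulas, n = Z²s²/E² for a mean and n = Z²p(1-p)/E² for a proportion. The formulas are not stated in this guide, so treat the sketch below as an assumption about which formulas are intended; the function names are hypothetical.

```python
import math
from statistics import NormalDist

def z_value(confidence):
    """Two-sided Z for a confidence level, e.g. 0.95 gives about 1.96."""
    return NormalDist().inv_cdf(1 - (1 - confidence) / 2)

def sample_size_for_mean(s, E, confidence=0.95):
    """n = (Z * s / E)^2, rounded up to a whole respondent."""
    return math.ceil((z_value(confidence) * s / E) ** 2)

def sample_size_for_proportion(p, E, confidence=0.95):
    """n = Z^2 * p * (1 - p) / E^2, rounded up to a whole respondent."""
    return math.ceil(z_value(confidence) ** 2 * p * (1 - p) / E ** 2)

n_base = sample_size_for_proportion(0.5, 0.05, 0.95)        # baseline
n_tighter = sample_size_for_proportion(0.5, 0.03, 0.95)     # smaller E
n_more_conf = sample_size_for_proportion(0.5, 0.05, 0.99)   # higher confidence
n_mean = sample_size_for_mean(s=10, E=2, confidence=0.95)
print(n_base, n_tighter, n_more_conf, n_mean)
```

With p = 0.5, the baseline needs 385 respondents; tightening E to 3 points raises that to 1,068, and moving to 99% confidence raises it to 664, which matches the directions described in the cards above.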

What is Rule of thumb / Normal Distribution (n>=30)?

The sampling distribution of the mean for simple random samples that have 30 or more observations has the following characteristics:

The distribution is approximately a normal distribution.

The distribution has a mean equal to the population mean.

The distribution has a standard deviation called the standard error of the mean (the population standard deviation divided by the square root of n).
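
A small simulation can illustrate these characteristics. This sketch assumes a skewed (exponential) population with mean 1 and standard deviation 1, chosen only for illustration, and draws 5,000 simple random samples of n = 30.

```python
import math
import random
import statistics

def simulate_sample_means(n, draws, seed=0):
    """Return the means of `draws` samples of size n from an
    exponential population (mean 1, standard deviation 1)."""
    rng = random.Random(seed)
    return [
        statistics.fmean(rng.expovariate(1.0) for _ in range(n))
        for _ in range(draws)
    ]

means = simulate_sample_means(n=30, draws=5000)
mean_of_means = statistics.fmean(means)     # should be close to 1
sd_of_means = statistics.stdev(means)       # should be close to 1 / sqrt(30)
theoretical_se = 1 / math.sqrt(30)          # standard error = sigma / sqrt(n)
print(round(mean_of_means, 3), round(sd_of_means, 3), round(theoretical_se, 3))
```

Even though the population itself is far from normal, the sample means cluster around the population mean with a spread close to the standard error.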

Implications of Sample Sizes that are too big

Can turn small differences into statistically significant differences, even when they are of no practical importance.

Expensive

Takes a lot of time to collect data

Implications of Sample Sizes that are too small

Cannot detect differences in the sample at a statistically significant level, even though differences may exist

More variable

Less power

Survey Error

All the stuff that makes the data inaccurate

Sampling Error + Non-Sampling Error = Total Error

Selection error

Incomplete, improper sampling

minimized by developing selection procedures that will ensure randomness and by developing quality control checks

Noncoverage error

missing groups of people in your sample

This error can be minimized by getting the best sampling frame possible and doing preliminary quality control checks

Nonresponse error/bias

This error is minimized by doing everything possible to encourage those chosen for the sample to respond.

Response error

tendency of people to answer incorrectly through either deliberate falsification or unconscious misrepresentation

This error can be minimized by paying special attention to questionnaire design. The impact of culture must be completely understood when creating international surveys.

Recording error

Interviewer or questionnaire bias/error

This error is minimized by careful interviewer selection and training. Questionnaire error is minimized only by careful questionnaire design and pretesting.

Office error

Input error / Data error

Data coding or analysis errors. Use software checks to find illogical responses.

Response Rates Cost implications

low response rate = higher # of contacts = increased budget

Response Rates Calculation

Survey response rate = (# of people who finished the survey / total # of people you sent it to) * 100
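
The response-rate formula, and its link to the cost implication on the previous card, can be sketched as follows. The function names and the figures (120 completes from 600 invitations, a target of 400 completes) are hypothetical.

```python
import math

def response_rate(completes, sent):
    """Survey response rate as a percentage of people the survey was sent to."""
    return 100 * completes / sent

def contacts_needed(target_completes, rate_percent):
    """How many people must be contacted to reach a target number of
    completed surveys at a given response rate."""
    return math.ceil(target_completes * 100 / rate_percent)

rate = response_rate(completes=120, sent=600)
at_20 = contacts_needed(400, 20)
at_10 = contacts_needed(400, 10)
print(rate, at_20, at_10)
```

Halving the response rate from 20% to 10% doubles the contacts needed, from 2,000 to 4,000, which is why a low response rate increases the budget.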

How to improve response rates

Survey length - Shorter is better

Guarantee of confidentiality or anonymity

Interviewer characteristics and training - Develop an effective script and provide training

Personalization

Response incentives

Follow-up surveys

Incentives

A gift or payment made to respondents in return for them taking part in a research project

Pros of Incentives:

Incentives help attract participants, reach hard-to-find targets, and get respondents to spend more time (on a longer survey or an interview)

Cons of Incentives

Introduce bias toward respondents who are motivated by the reward rather than the broader target population