MBAN 504 Notes LEC 2

1/28

29 Terms

k-anonymity

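A dataset property: each record must be indistinguishable from at least k-1 other records on its quasi-identifiers (e.g. ZIP code, age), so every person hides in a group of at least k

Large vs small k in k-anonymity

Larger k is more private but less useful (data must be generalized more); smaller k is more useful but less private; aim for a k in between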

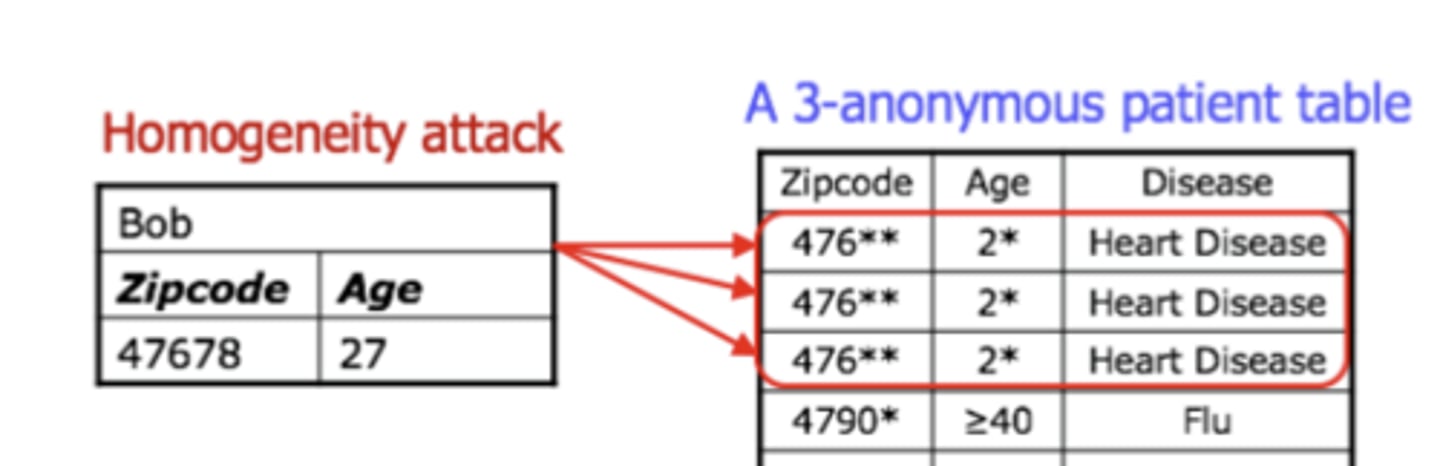

Homogeneity Attack

A privacy breach that occurs when every record in an anonymized group shares the same sensitive attribute value, so knowing someone is in the group reveals that value

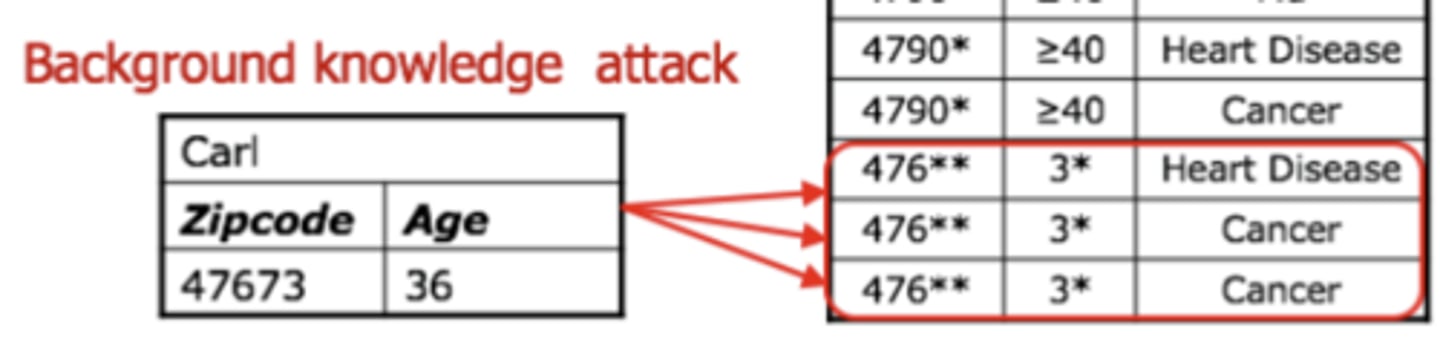
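The k-anonymity and homogeneity-attack cards above can be sketched in a few lines. This is a minimal illustration, not a production check: the records, the column names (`zip`, `age`, `diagnosis`), and the helper names are all made up for the example.

```python
from collections import defaultdict

def group_by_quasi_identifiers(records, quasi_identifiers):
    """Group records that share the same quasi-identifier values."""
    groups = defaultdict(list)
    for record in records:
        key = tuple(record[q] for q in quasi_identifiers)
        groups[key].append(record)
    return groups

def k_of(records, quasi_identifiers):
    """The dataset is k-anonymous for k = size of its smallest group."""
    groups = group_by_quasi_identifiers(records, quasi_identifiers)
    return min(len(group) for group in groups.values())

def homogeneous_groups(records, quasi_identifiers, sensitive):
    """Return groups whose members all share one sensitive value."""
    leaks = {}
    groups = group_by_quasi_identifiers(records, quasi_identifiers)
    for key, group in groups.items():
        values = {record[sensitive] for record in group}
        if len(values) == 1:
            leaks[key] = values.pop()
    return leaks

# Hypothetical, already-generalized records.
records = [
    {"zip": "981**", "age": "20-29", "diagnosis": "flu"},
    {"zip": "981**", "age": "20-29", "diagnosis": "asthma"},
    {"zip": "981**", "age": "30-39", "diagnosis": "flu"},
    {"zip": "981**", "age": "30-39", "diagnosis": "flu"},
    {"zip": "981**", "age": "30-39", "diagnosis": "flu"},
]

k = k_of(records, ["zip", "age"])
leaks = homogeneous_groups(records, ["zip", "age"], "diagnosis")
```

In this toy data the smallest group has two records, so the table is 2-anonymous, yet the 30-39 group still leaks its diagnosis because all three members share it.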

Background Knowledge Attack

An adversary combines released anonymized data with external, public, or otherwise known information to re-identify individuals and reveal sensitive data

Differential privacy

A compromise between data protection (providing plausible deniability to users) and utility of the data

What are "Neighboring Datasets" (the input part)?

Two datasets (D and D') that are identical except for the record of one single individual

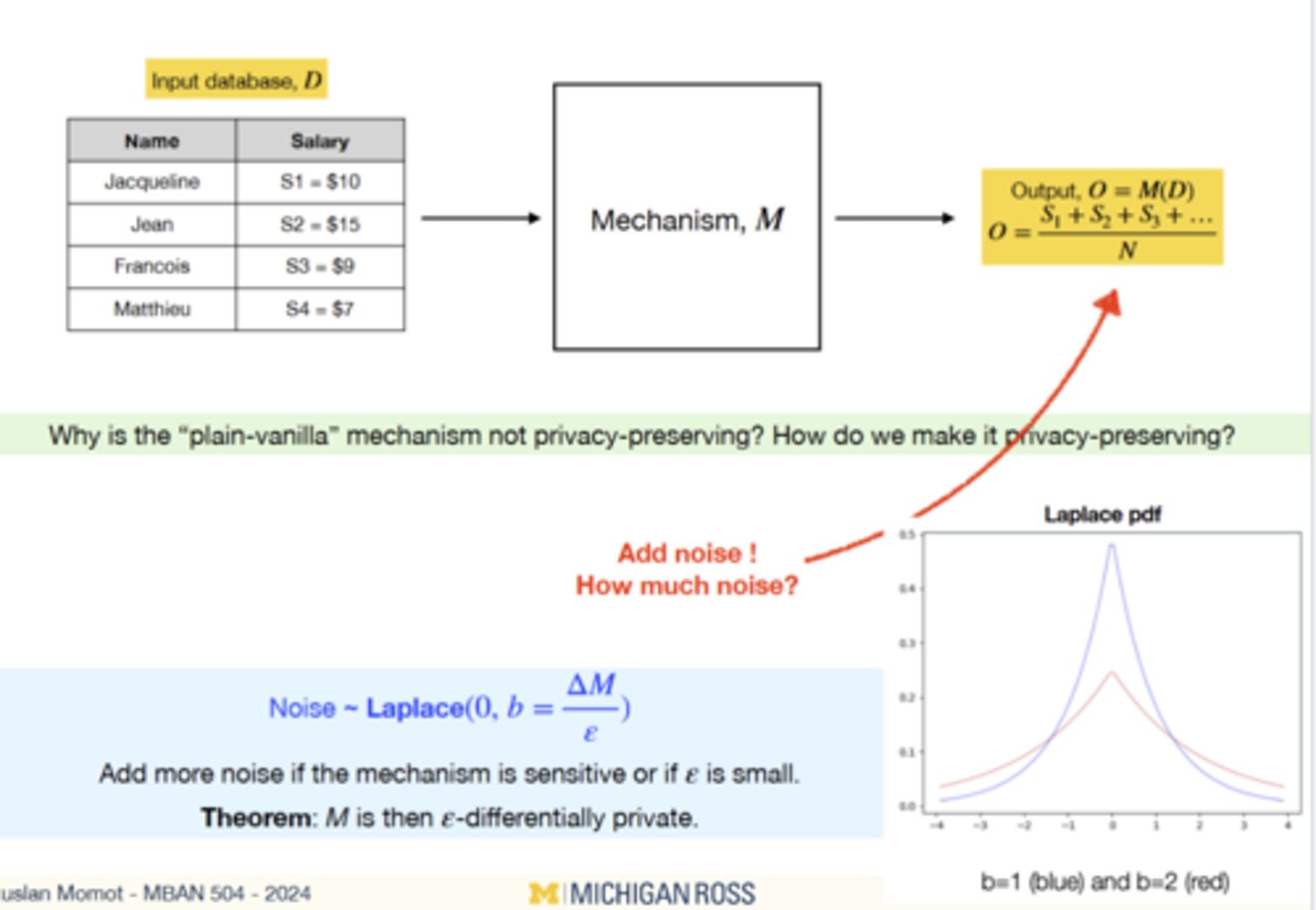

What is the "Mechanism" (M)?

A randomized algorithm that adds "noise" to the data (or to the result computed from it) before release, to protect privacy

Loose Definition of Differential Privacy

A mechanism is differentially private if including or excluding any one individual in the input dataset does not significantly change the output of the mechanism.

If M(D) ≈ M(D'), what does that imply?

An observer cannot determine whether a specific person was part of the original dataset or not

What does D'=D mean?

Dataset D' is dataset D with one individual's record removed

What is the trade off?

Privacy vs Utility. More noise means more privacy but less accurate results

Randomized response

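Ensures differential privacy for binary (yes/no) outcomes: each respondent reports the truth only with some probability, so any single answer is deniable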
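A minimal sketch of randomized response, assuming the common two-coin-flip variant (the notes do not say which variant the lecture used): on the first flip the respondent answers truthfully with probability 1/2, otherwise a second flip decides the answer. The function names and the simulated 30% "yes" rate are illustrative.

```python
import math
import random

def randomized_response(truth, rng):
    """First coin: heads -> tell the truth. Tails -> answer with a second coin."""
    if rng.random() < 0.5:
        return truth
    return rng.random() < 0.5

def estimate_true_rate(reports):
    """Undo the noise: P(report yes) = 0.5 * true_rate + 0.25."""
    observed_yes = sum(reports) / len(reports)
    return 2 * observed_yes - 0.5

rng = random.Random(0)
truths = [i < 3000 for i in range(10000)]  # simulated population, 30% "yes"
reports = [randomized_response(t, rng) for t in truths]
estimate = estimate_true_rate(reports)

# P(yes | truth is yes) = 0.75 and P(yes | truth is no) = 0.25,
# so this variant satisfies epsilon = ln(0.75 / 0.25) = ln 3.
epsilon = math.log(0.75 / 0.25)
```

No individual report can be trusted, but the aggregate rate is still recoverable, which is the privacy vs utility trade-off in miniature.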

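Epsilon = 0

Full privacy: $\Pr[M(D) \in O] \le \Pr[M(D') \in O]$ for every set of outputs $O$, so the output distribution is identical with or without the individual

Epsilon = 1

Moderate privacy: $\Pr[M(D) \in O] \le e^{1} \Pr[M(D') \in O] \approx 2.7 \Pr[M(D') \in O]$

Epsilon = ∞

No privacy: $\Pr[M(D) \in O] \le \infty$, so the bound places no constraint on the mechanism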

Laplace

A way to hide an individual's value by adding random noise, drawn from a Laplace distribution with scale sensitivity/epsilon, to the final answer

Increase Epsilon

The noise decreases. Larger epsilon means less privacy but more accuracy.

High sensitivity

The noise increases. High sensitivity requires more "fuzz" to hide an individual's impact.
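The three Laplace cards above can be checked numerically. The sketch below assumes the standard Laplace mechanism with noise scale sensitivity/epsilon and samples the noise as the difference of two exponential draws; the helper names and trial counts are illustrative choices.

```python
import random

def laplace_noise(scale, rng):
    """Laplace(0, scale) sample: difference of two exponentials with mean `scale`."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)

def laplace_mechanism(true_answer, sensitivity, epsilon, rng):
    """Release the answer plus noise scaled to sensitivity / epsilon."""
    return true_answer + laplace_noise(sensitivity / epsilon, rng)

def mean_abs_error(sensitivity, epsilon, trials=20000, seed=0):
    """Average size of the added noise over many simulated releases."""
    rng = random.Random(seed)
    total = sum(
        abs(laplace_mechanism(0.0, sensitivity, epsilon, rng))
        for _ in range(trials)
    )
    return total / trials

error_baseline = mean_abs_error(sensitivity=1.0, epsilon=1.0)
error_small_epsilon = mean_abs_error(sensitivity=1.0, epsilon=0.1)
error_high_sensitivity = mean_abs_error(sensitivity=10.0, epsilon=1.0)
```

Shrinking epsilon by a factor of ten and raising sensitivity by a factor of ten both make the noise roughly ten times larger, because only the ratio sensitivity/epsilon matters.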

Problems with differential privacy

If an adversary can query the mechanism many times, the average of the outputs converges to the true answer.
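A small simulation of this averaging attack, assuming each query is answered with fresh, independent Laplace noise (the true count of 42, epsilon of 0.5, and the function name are made up for the example):

```python
import random

def noisy_query(true_answer, epsilon, rng, sensitivity=1.0):
    """Answer one query with fresh Laplace noise of scale sensitivity / epsilon."""
    scale = sensitivity / epsilon
    return true_answer + rng.expovariate(1 / scale) - rng.expovariate(1 / scale)

rng = random.Random(1)
true_count = 42  # hypothetical secret statistic

# One answer is noisy, but the adversary simply repeats the query.
answers = [noisy_query(true_count, 0.5, rng) for _ in range(10000)]
averaged = sum(answers) / len(answers)
```

The zero-mean noise cancels out in the average, which is why the total number of queries (the privacy budget) has to be limited.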

Local DP

Noise is added to each user's raw data before it is collected; more private but less useful

Central DP

Noise is added to the output computed from the collected raw data; less private (the collector must be trusted) but more useful

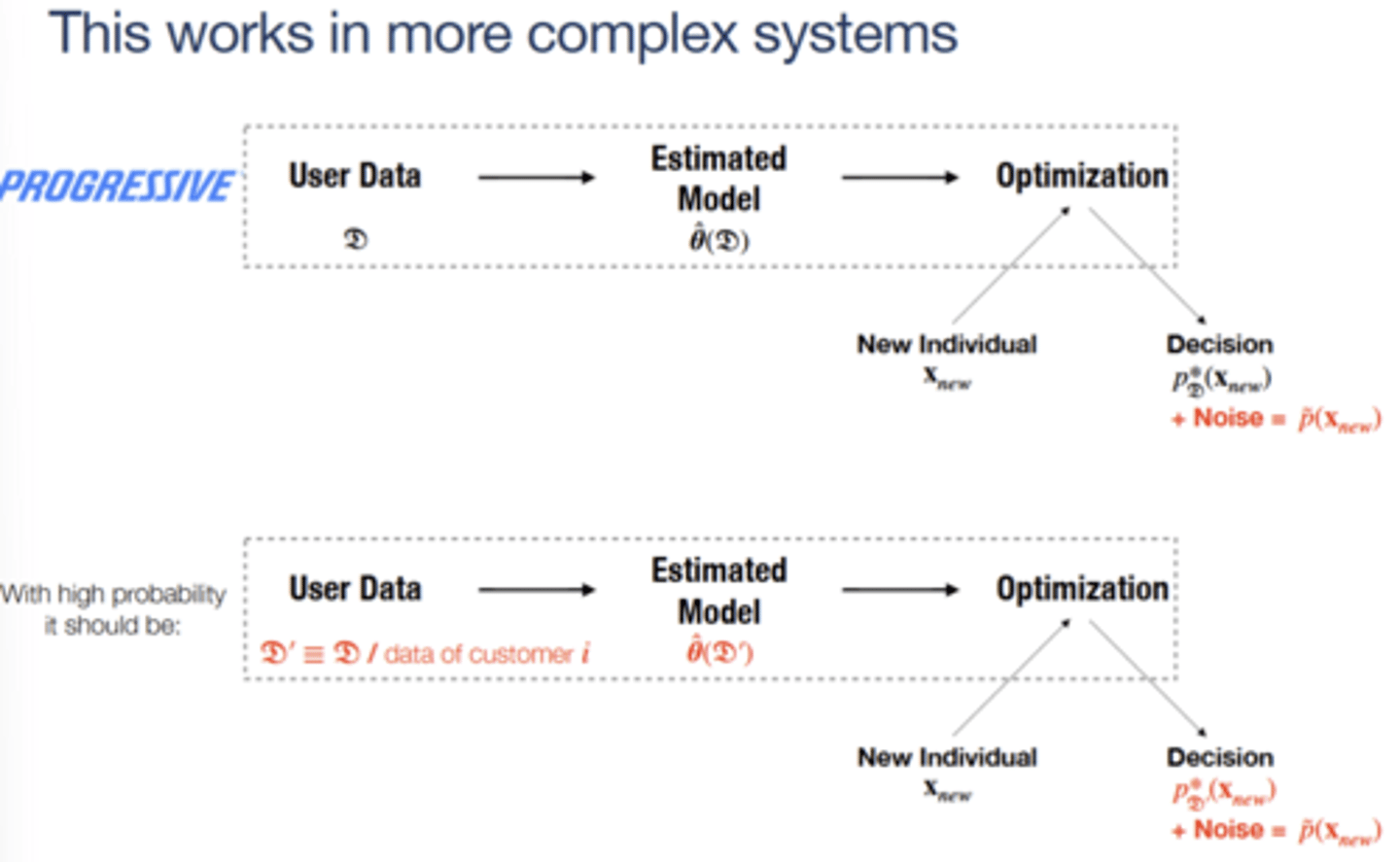
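The local vs central trade-off can be illustrated with a noisy sum over 0/1 values. This is a sketch under simple assumptions: Laplace noise with sensitivity 1, the same epsilon in both settings, and a made-up population of 1,000 users.

```python
import random

def laplace_noise(scale, rng):
    """Laplace(0, scale) sample: difference of two exponentials with mean `scale`."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)

def local_dp_sum(values, epsilon, rng):
    """Local DP: every user perturbs their own value before sending it."""
    return sum(v + laplace_noise(1 / epsilon, rng) for v in values)

def central_dp_sum(values, epsilon, rng):
    """Central DP: a trusted collector sums raw values, then adds noise once."""
    return sum(values) + laplace_noise(1 / epsilon, rng)

rng = random.Random(0)
values = [1] * 300 + [0] * 700  # hypothetical users, 300 of whom answer 1
true_sum = sum(values)
trials = 200

local_error = sum(
    abs(local_dp_sum(values, 1.0, rng) - true_sum) for _ in range(trials)
) / trials
central_error = sum(
    abs(central_dp_sum(values, 1.0, rng) - true_sum) for _ in range(trials)
) / trials
```

The local version accumulates one noise draw per user, so its error grows with the number of users, while the central version adds a single draw but requires trusting whoever holds the raw data.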

What does this progressive example show?

DP works for machine learning and optimization, not just averages

Why is traditional attribution a privacy issue?

Requires tracking a user’s exact path across multiple different apps and websites.

How does Pinterest protect privacy in attribution?

It uses Local Differential Privacy

How do we protect users' data if we have many devices, many datasets, etc. (i.e. if our system is complex)?

Layered defense

User Device

Local Differential Privacy; Prevents raw data from ever leaving the user

Data Transit

Federated Learning; Avoids the risk of "Massive Data Lakes" being hacked.

Data Analysis

Laplace Mechanism; Ensures internal employees can't accidentally see private info.

System Wide

Epsilon-composition; Prevents "linking" users across different datasets.
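One way to picture epsilon-composition is a system-wide privacy budget: under basic sequential composition, the epsilons of successive releases about the same users add up. The sketch below is a hypothetical accountant (the `PrivacyBudget` class and its numbers are illustrative, not something from the lecture) that refuses a query once the total would exceed the budget.

```python
class PrivacyBudget:
    """Tracks cumulative epsilon under basic sequential composition."""

    def __init__(self, total_epsilon):
        self.total_epsilon = total_epsilon
        self.spent = 0.0

    def charge(self, epsilon):
        """Spend epsilon if it fits in the remaining budget; report success."""
        if epsilon <= 0:
            raise ValueError("epsilon must be positive")
        # Small tolerance guards against floating-point rounding.
        if self.spent + epsilon > self.total_epsilon + 1e-12:
            return False
        self.spent += epsilon
        return True

budget = PrivacyBudget(total_epsilon=1.0)

# Three analyses each ask for epsilon = 0.4; the third would bring the
# total to 1.2, which is over the budget, so it is refused.
results = [budget.charge(0.4) for _ in range(3)]
```

Tracking spend across every dataset and team in one place is what keeps separate releases from being combined into a larger leak.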