Experimental Psych

122 Terms

science

helps build explanations that are predictive and consistent

based on facts, theory, hypotheses, scientific method

verifying information

cross check

contextualize- what does the author have to gain

empiricism

the use of verifiable evidence as the basis of conclusions

collecting data systematically and using it to develop, support, or challenge a theory

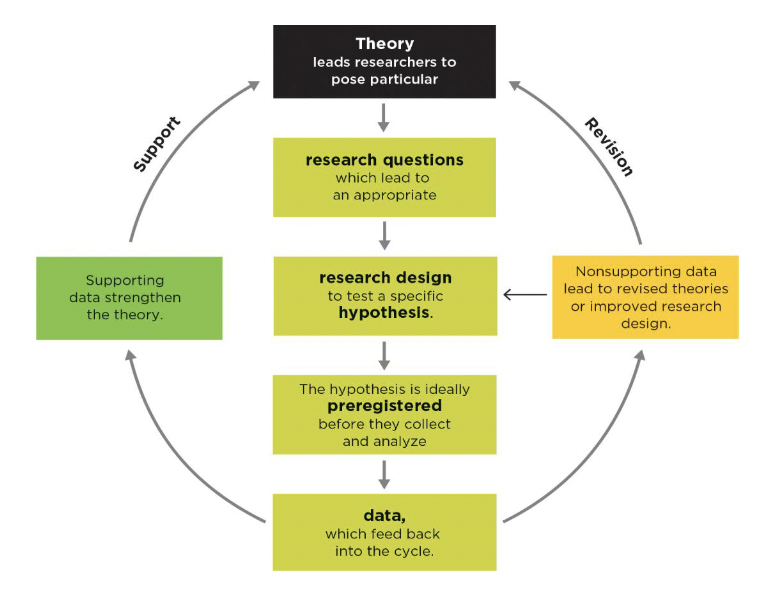

theory data cycle

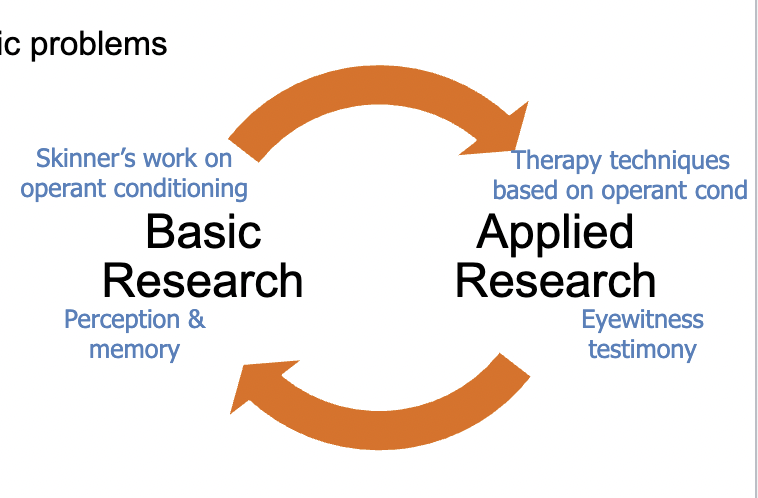

applied research

immediately applicable

basic research

fundamental questions

relationship between basic and applied research

cyclical

publication process

peer-review

replication ensures the finding is ‘real’

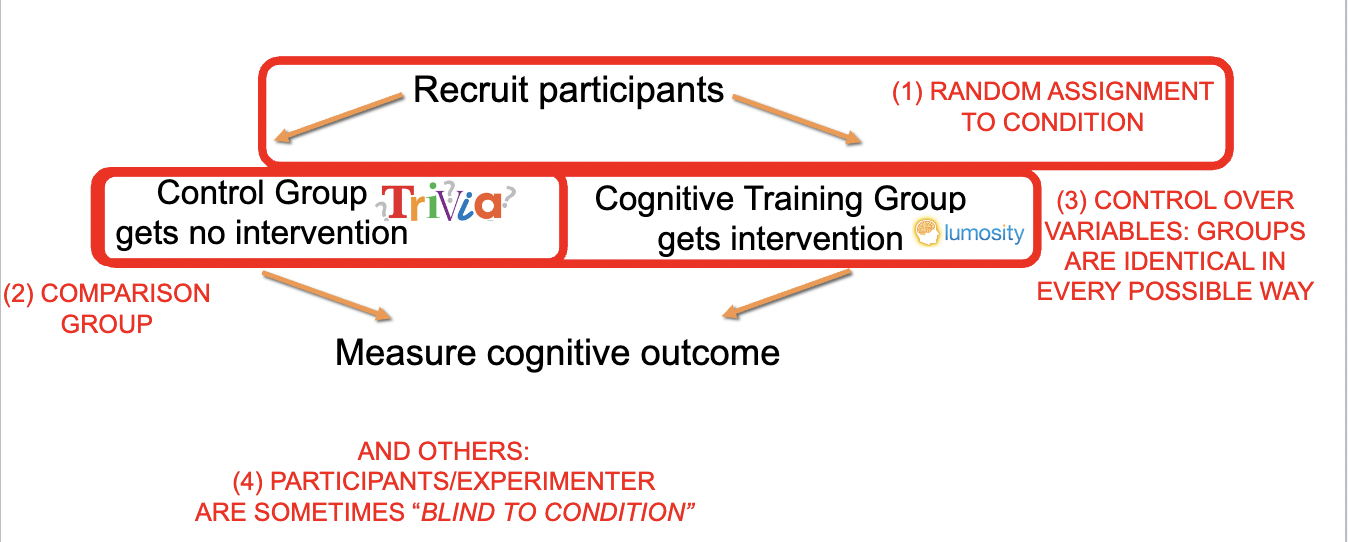

experiments

can isolate cause and effect

because manipulate IV and measure DV

can rule out potential alternative explanations for results

control variables

can show you what would have happened

comparison condition

goal- to manipulate IV so it is the only thing different between conditions, everything else is held constant

independent variable

gets manipulated, looking to cause a change

dependent variable

gets measured, looking for an effect in

special features of experiments

random assignment to condition

comparison group

control over variables- keep groups as similar as possible

(blind to condition)

theory

a systematic body of ideas about a particular topic/phenomenon

describes a relationship among variables

organizes/summarizes findings

describes, explains, predicts behavior

supported by data

falsifiable

(parsimonious)- simple

Occam’s Razor- simplest answer is usually the right one

NOT

a guess

necessarily complex

“proof”

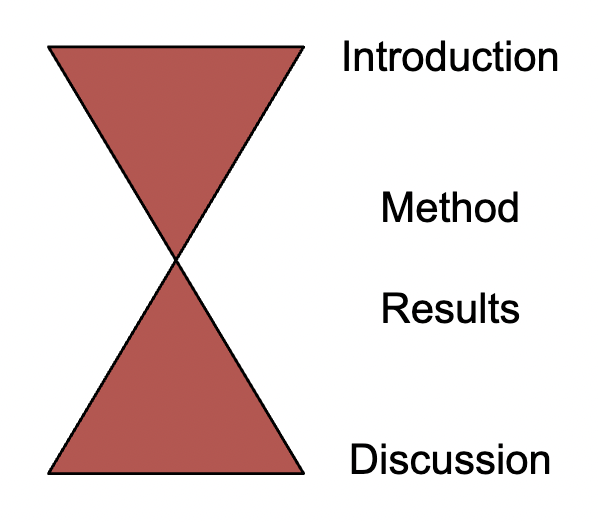

journal articles structure

referring to prior literature

Concept X (citation)

In citation, they…

abstract

brief summary of article’s content

introduction

introduces problem and explains why it is important

prior literature

end of intro- method, variables, hypothesis

method

how you conducted the study

reader should be able to directly replicate the study

sections: participants, materials, procedure

results

study’s numerical results: statistical tests, tables, and/or figures

discussion

summarize and explain results

describe how/if they support hypothesis

evaluate study, next steps

experience as a source, problems

no comparison group

confounds

probability- experience is not probabilistic

empirical research is…

probabilistic- describes majority of cases, uses samples >1

systematic- hold everything constant, change one thing at a time

using intuition as a source, problems

sometimes inconsistent

sometimes describe the past, not predictive

good story

availability heuristic

confirmation bias- seek disconfirming evidence!

bias blindspot

present-present bias- failing to think of what we can’t remember/see

variable

varies in a study, >= 2 levels

IV, DV

constant

could vary, but doesn’t in study

measured variables

observed and recorded as they occur naturally

manipulated variables

controlled by the experimenter

conceptual variable/construct

name for concept being studied

conceptual definition

abstract, general, theoretical definition

operational definition

concrete, a specific way to measure something

types of measures

self-report

observational or behavioral

physiological

none inherently better than another

levels of variable

nominal- categories, names

ordinal- rankings

scale

how you choose to operationalize a variable

frequency claims

one variable, measured rate or degree of that variable

association claims

2 variables are linked (correlated) typically both measured

causal claims

one variable causes change in the other, one must be manipulated

3 requirements for causal claim

covariance- did the IV seem to show a difference in the DV

temporal precedence- causal variable clearly comes first before the effect variable

internal validity- no alternate explanations for the results

similar people in each condition (random assignment)

holding everything possible constant except for IV

unsystematic variance, noise

makes it harder to find significant results but not a threat to internal validity

doesn’t vary with the IV

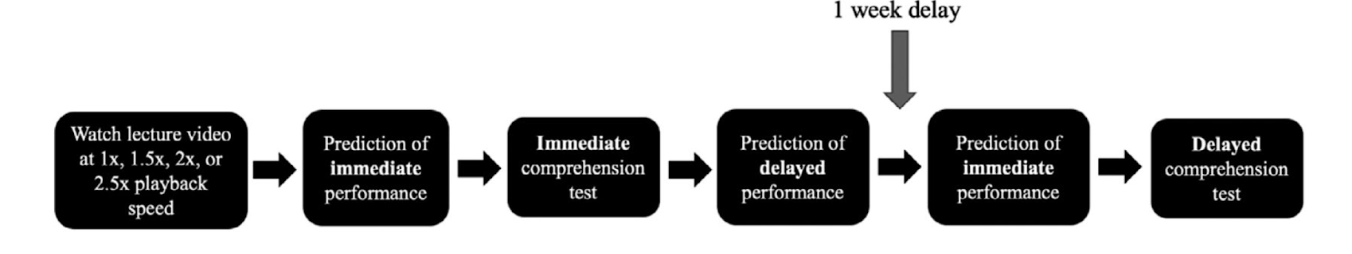

Murphy et al. IV and DV

IV: rate of information playback

DVs: comprehension and prediction

Murphy hypotheses: experiment 1

Participants' immediate comprehension will be preserved at faster video speeds

After a delay, increased video speeds may lead to poorer memory performance compared with normal speed

Participants will predict that both immediate and delayed retention would be minimally affected by video speed

Murphy Experiment 1

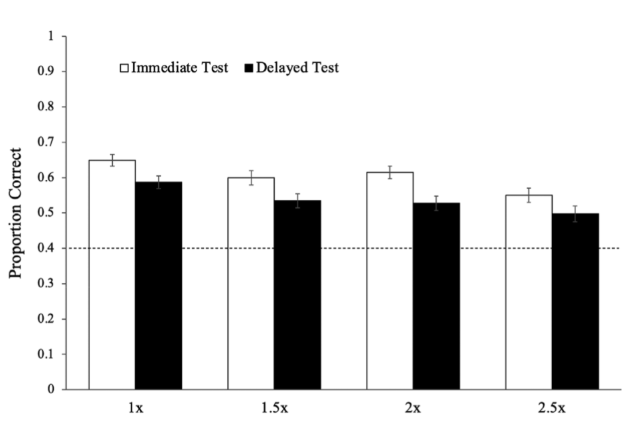

Murphy Experiment 1 primary findings

Main effect of test time: immediate better than delayed.

Main effect of video speed: little effect for normal, 1.5x, and 2x speed (nonsignificant); comprehension (and therefore performance) is only impaired at 2.5x speed (significant).

Murphy Experiment 2- purpose

to take advantage of/analyze benefits of repetition without additional time spent studying

in 2a, participants watched the 2x video twice in immediate succession; in 2b, they watched the 2x video once and then a second time immediately before the exam

a: Normal speed once vs. 2x speed twice (1x vs. 2x - 2x - test)

b: 1x vs. 2x-week delay-2x-test

Murphy Experiment 2 findings

Experiment 2a Results

-performance: 1x=2x

Experiment 2b Results- answer

-performance: 1x < 2x

Murphy experiment 3 findings

3a: slow - fast vs. fast-slow equal performance

3b: slow-week delay-fast vs. fast-week delay-slow equal performance

construct validity

quality of measures and manipulations

how good is the operationalization

how reliable are the measures

extra important for frequency claims

external validity

context of the study

to what extent can we generalize from the study

to other participants

to other settings

to other operationalizations of same conceptual variables

who we ask to be in sample

inclusion/exclusion criteria affect who we can draw inferences about

where do we conduct our study

lab vs. field

balance between minimizing variance and maximizing ability to generalize

statistical validity

how well do the numbers support the claim

ex- p-values, eta²

internal validity

no alternative causal explanations for the outcome

free of confounds

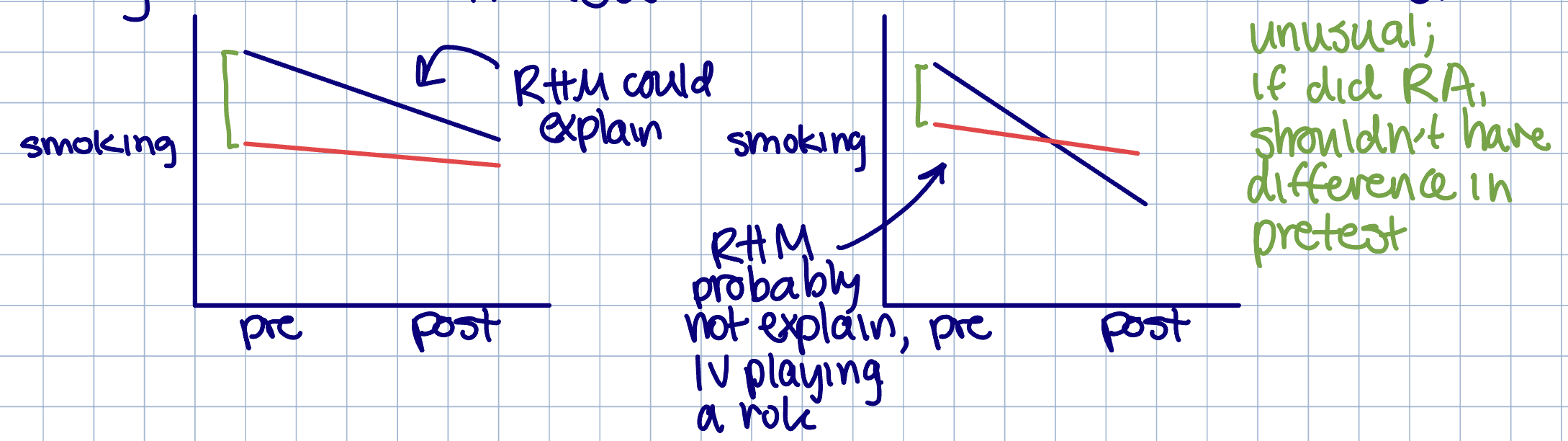

random assignment

strong control over variables

balancing validities: internal vs. external validity

internal- tightly-controlled. less variability

external- different people, settings

internal validity is prioritized first, external validity explored later

quiz 2

design confound

alternative explanation in the design of the study (varies with the IV, systematic)

selection effect

participants in different conditions are different

prevention- random assignment

matched groups- usually for smaller samples

first measure participants on variable that might matter to DV

match participants in pairs of similar trait and randomly assign each to condition
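The matching steps above can be sketched in a few lines of Python. This is only an illustration under assumed inputs: the function name `matched_pairs_assignment`, the condition labels "A" and "B", and the pretest scores are all hypothetical.

```python
import random

def matched_pairs_assignment(scores, seed=0):
    """Assign participants to two conditions using matched pairs.

    scores: dict mapping participant id -> score on the matching variable.
    Returns a dict mapping participant id -> "A" or "B".
    With an odd number of participants, the last one is left unassigned.
    """
    rng = random.Random(seed)  # fixed seed only so the sketch is reproducible
    # Step 1: rank participants on the variable that might matter to the DV.
    ranked = sorted(scores, key=scores.get)
    assignment = {}
    # Step 2: walk down the ranking two at a time so each pair is similar.
    for i in range(0, len(ranked) - 1, 2):
        pair = [ranked[i], ranked[i + 1]]
        # Step 3: randomly decide which member of the pair gets which condition.
        rng.shuffle(pair)
        assignment[pair[0]] = "A"
        assignment[pair[1]] = "B"
    return assignment

# Hypothetical pretest scores for eight participants.
pretest = {"p1": 12, "p2": 30, "p3": 14, "p4": 29,
           "p5": 21, "p6": 22, "p7": 8, "p8": 9}
groups = matched_pairs_assignment(pretest)
```

Each pair contributes one participant to each condition, so the two groups end up balanced on the matching variable while assignment within a pair stays random.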

manipulation check

ensure construct validity

collect more data with same participants to quantify how well manipulation worked

pilot study

ensure construct validity

smaller scale (often preliminary) study with a different group of participants completed to confirm the effectiveness of the manipulation, test DV measurement

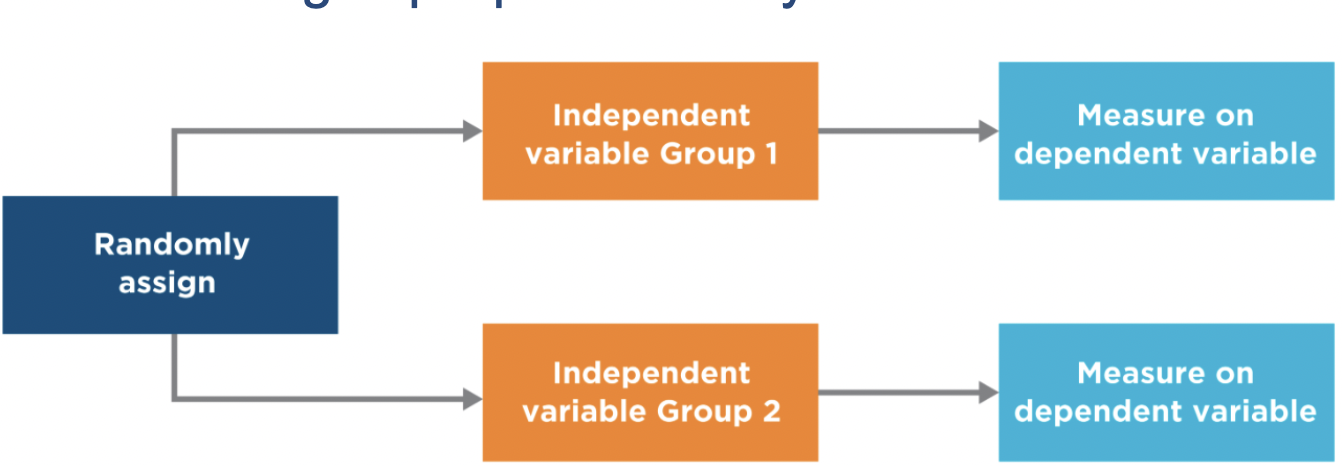

between-groups design

participants only get one level of the IV

pros- harder for participants to guess what is happening

cons- selection effects, more participants, less power

post-test only design

default

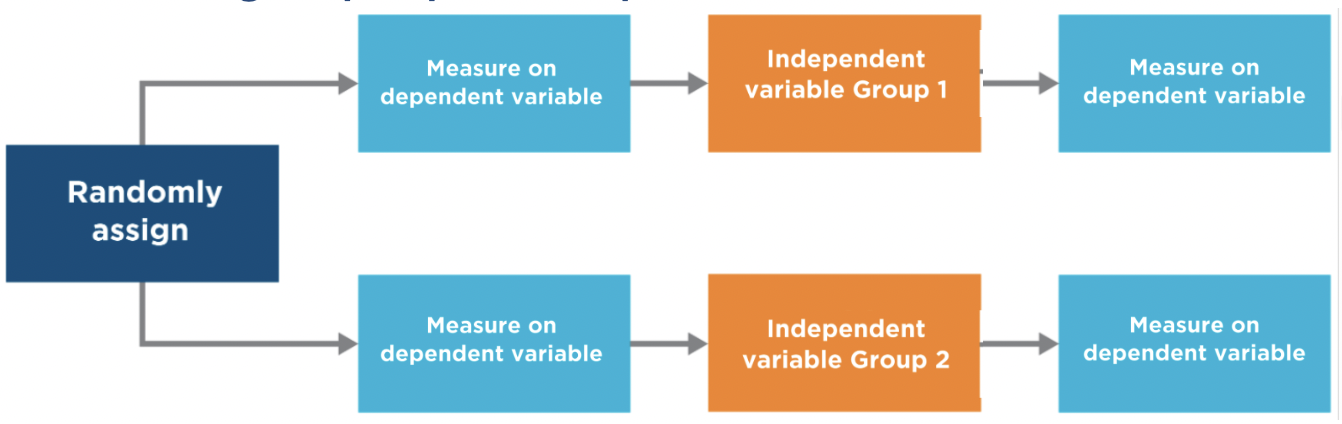

pretest/posttest design

why?

interested in change over time

check if groups are equivalent

sometimes not possible

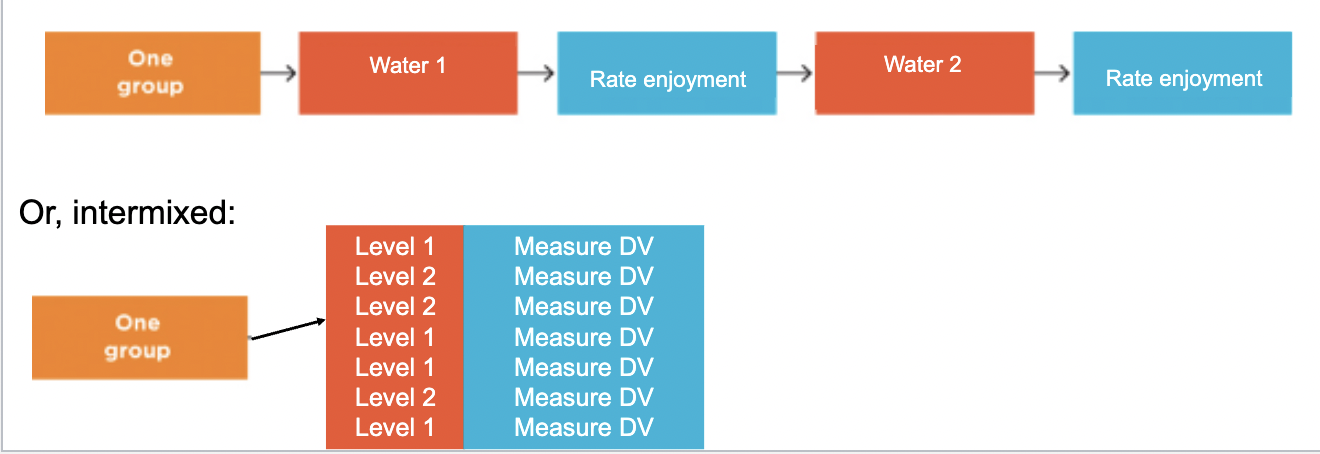

within-groups design

participants get all levels of the IV

pros- participants serve as own comparison, no selection effects, fewer participants, more power (ability to detect effect if it’s really there, eliminating one source of noise)

cons- easier for participants to figure out what is being studied

order effects

issue with within-groups design

exposure to one level of IV influences reaction to other level of IV

practice effects

issue with within-groups design

participants get better at a task

fatigue effects

issue with within-groups design

participants get worse at a task

carryover effects

issue with within-groups design

occur when a participant's experience in one condition influences their performance or behavior in subsequent conditions, confounding results

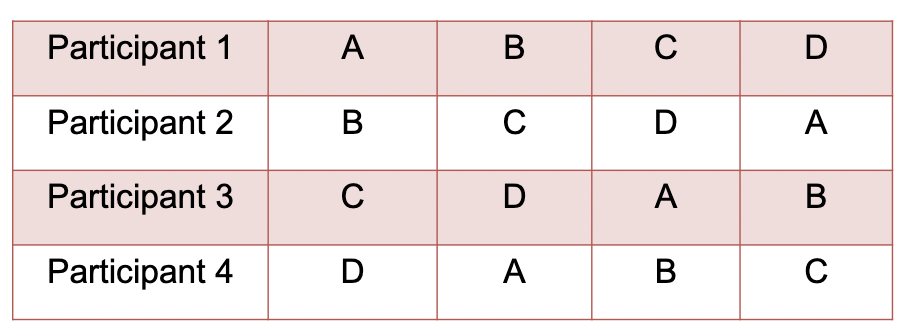

counterbalancing

solution to issues with within-groups design

presenting levels of IV in different sequences (ex- AB vs BA)

full- all possible orders

partial- present only some orders

Latin square- minimizes confounds, each level appears once in each order position, each level appears with nothing before and after it

but A still followed by B more often
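Full counterbalancing and a Latin square can be generated as below. This is a minimal sketch: the function names are hypothetical, and the Latin square uses a simple cyclic rotation, which is one common construction and not the only one.

```python
from itertools import permutations

def full_counterbalancing(levels):
    """Every possible order of the IV levels."""
    return [list(order) for order in permutations(levels)]

def latin_square(levels):
    """Cyclic Latin square: row i is the level list rotated left by i.

    Each level appears exactly once in each order position.
    """
    n = len(levels)
    return [[levels[(row + pos) % n] for pos in range(n)] for row in range(n)]

levels = ["A", "B", "C"]
all_orders = full_counterbalancing(levels)  # 3! = 6 orders
square = latin_square(levels)               # 3 orders: ABC, BCA, CAB
```

In the cyclic square, B comes right after A in two of the three orders (ABC and CAB) and A never comes right after B, which is the leftover sequence imbalance the card warns about.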

maturation

changes in behavior that emerge spontaneously over time

ex- children’s cognitive abilities naturally improve over time

prevention- use a comparison group

history

an outside event that affects the experiment but is not part of your manipulation

prevention- comparison group

regression to the mean

extreme scores become less extreme over time

*only an issue when people are selected for being extreme

any variable measurement = true score + error

prevention- comparison group
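A small simulation can make "measurement = true score + error" concrete. This is a sketch under assumed values (mean 100, standard deviations of 10, top 10% selected); none of the numbers come from the course material.

```python
import random

rng = random.Random(42)  # fixed seed so the sketch is reproducible

# Assumed values for illustration: true scores centered on 100.
true_scores = [rng.gauss(100, 10) for _ in range(10_000)]

def measure(true_score):
    """One measurement = true score + random error (error centered on 0)."""
    return true_score + rng.gauss(0, 10)

test1 = [measure(t) for t in true_scores]
test2 = [measure(t) for t in true_scores]  # same true scores, fresh error

# Select people only because their first score was extreme (top 10%).
cutoff = sorted(test1)[int(0.9 * len(test1))]
extreme = [i for i, score in enumerate(test1) if score >= cutoff]

def mean(values):
    return sum(values) / len(values)

extreme_test1_mean = mean([test1[i] for i in extreme])
extreme_test2_mean = mean([test2[i] for i in extreme])
overall_mean = mean(test1)
```

With no treatment at all, `extreme_test2_mean` should land between `overall_mean` and `extreme_test1_mean`: the lucky error that helped put people in the extreme group does not repeat on the second measurement. A comparison group selected the same way would show the same drop, which is why it helps rule this threat out.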

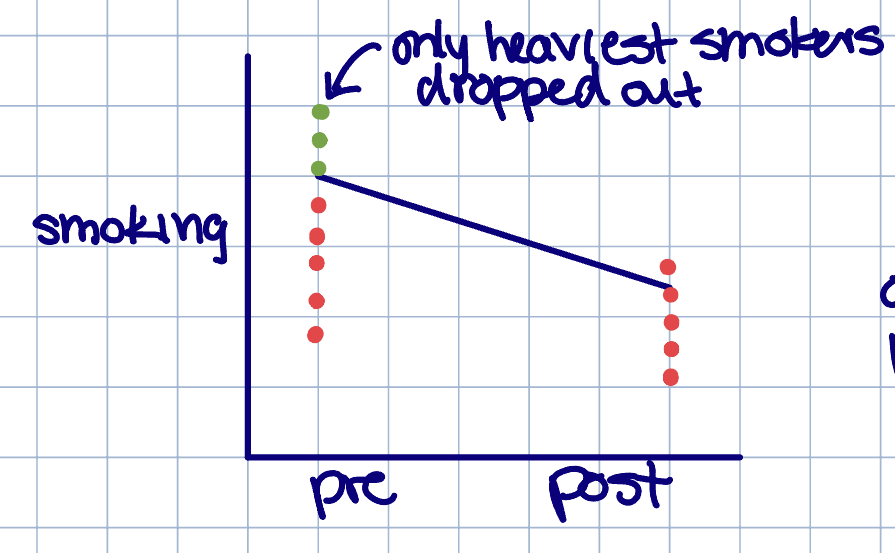

attrition/mortality

participant drop-out

even if you have 2 groups equal on a pretest, dropout can still make groups look unequal at posttest

prevention- comparison groups; exclude their data, but now potential threat to external validity

quiz 3

testing

change in participants as a result of experiencing the DV more than once

usually fatigue/boredom or practice

prevention- posttest only, comparison group

instrumentation

characteristics of the measurement change over time

measurement

observation (self or observer- more skillful, fatigued, desensitized, change standards)

tests- pre and post tests are unequal (eg- difficulty)

prevention- posttest only, comparison group

combined threats

can occur even if you have comparison group and random assignment

selection-history threat- an outside event systematically affects participants at one level of IV

selection-attrition threat- participants in one group experience more/less attrition

demand characteristic

participants figure out what study is about and change their behavior in the expected direction

prevention- run a (double-)blind study, between-groups design, try to convince participant study is about something else

placebo effects

people receive treatment and improve, but only because they believe they are receiving a valid or effective treatment

prevention ex- have a true therapy, placebo therapy, and no therapy groups (helps identify the placebo effect)

interrogating null effects

what if the IV doesn’t affect the DV?

t = between-condition difference / within-condition variability

F ratio = between-condition variance / within-condition variance

significance tests = between-condition variance / within-condition variance

need to maximize our ability to detect differences between our conditions

need to minimize within-condition variability (noise)
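The ratio idea can be checked with a worked example. The sketch below assumes an independent-samples t test with pooled variance (one common version of t); the function name and all scores are hypothetical.

```python
from math import sqrt
from statistics import mean, variance

def independent_t(group_a, group_b):
    """Independent-samples t: between-condition difference / within-condition noise."""
    n_a, n_b = len(group_a), len(group_b)
    between = mean(group_a) - mean(group_b)
    # Pooled variance combines the within-condition variability of both groups.
    pooled_var = ((n_a - 1) * variance(group_a) +
                  (n_b - 1) * variance(group_b)) / (n_a + n_b - 2)
    within = sqrt(pooled_var * (1 / n_a + 1 / n_b))
    return between / within

# Hypothetical scores: both comparisons have the same 2-point mean difference,
# but the "noisy" conditions have much more within-condition variability.
quiet_a = [10, 11, 12, 13, 14]
quiet_b = [8, 9, 10, 11, 12]
noisy_a = [4, 8, 12, 16, 20]
noisy_b = [2, 6, 10, 14, 18]

t_quiet = independent_t(quiet_a, quiet_b)  # about 2.0
t_noisy = independent_t(noisy_a, noisy_b)  # about 0.5
```

The numerator is identical in both comparisons; only the denominator changes. Reducing noise therefore raises t just as a bigger between-condition difference would.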

power

finding a statistically significant effect when the IV really has an effect

studies with a lot of power are more likely to detect true differences

maximizing between-group variance, beware of

weak manipulation

insensitive measures- obscures ability to see effects in DV

ceiling and floor effects- people performing too well or too poorly

design confounds

manipulation checks and pilot study can help

minimizing within-condition variability, beware of

measurement error → use reliable and precise measurements, establish construct validity, use established measures, measure more instances

individual differences → more homogenous sample, within-groups/matched-pairs design, more participants

situation noise → control experiment surroundings

increase power!

factorial design

interaction- when the effect of one IV on the DV depends on the level of the other IV

3 research questions

is there an effect of first IV?

is there an effect of second IV?

is there an interaction?

(need stats to answer, p < .05)

designs:

both IVs have a between-groups design

both IVs have a within-groups design

mixed design

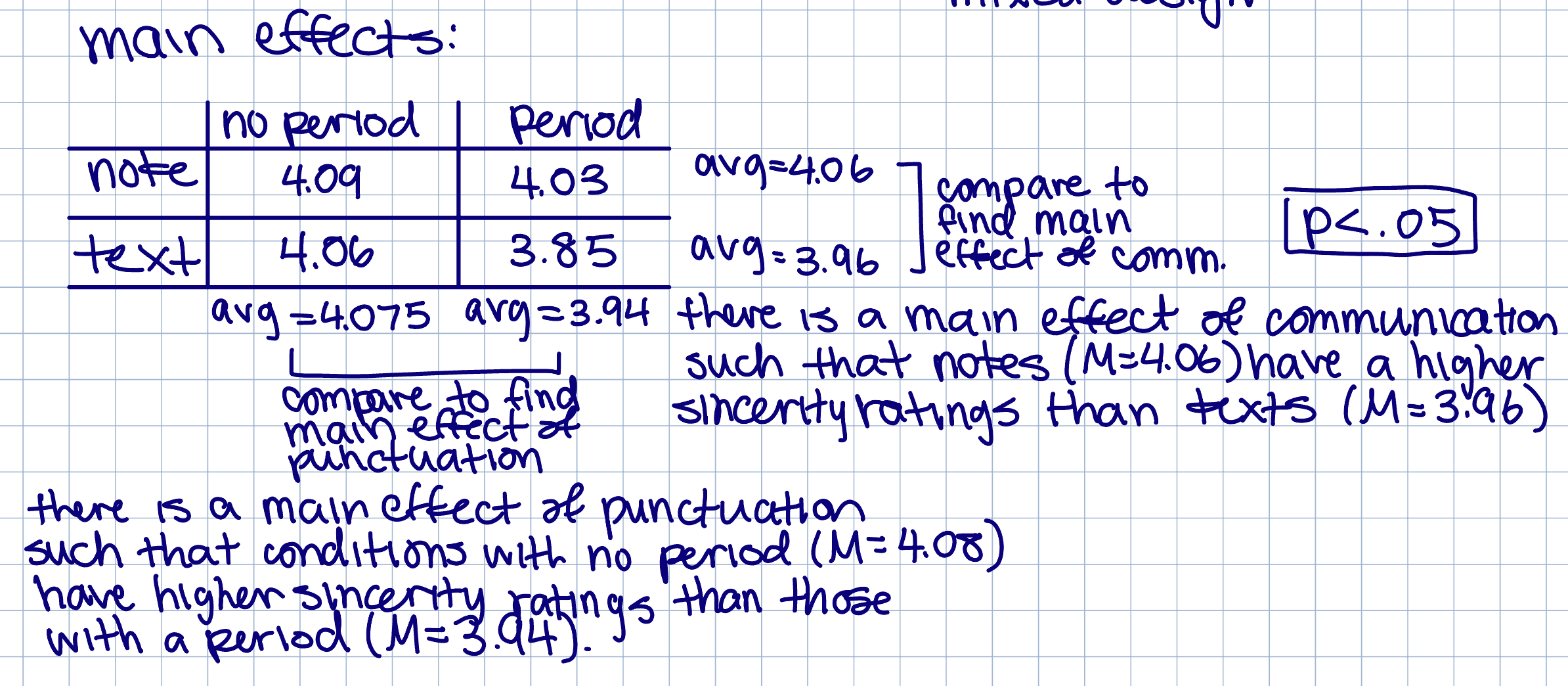

main effects

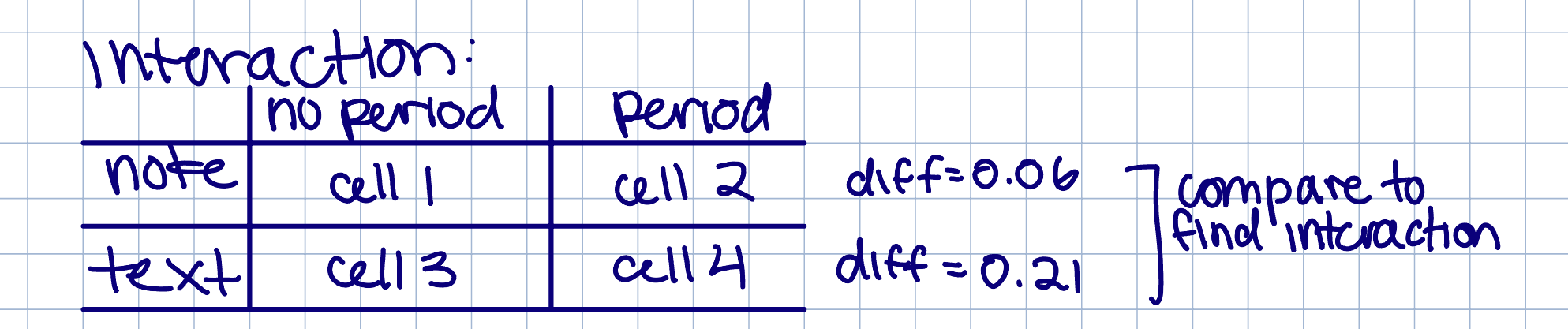

interaction

simple effect- relationship of IV1 and DV at one level of IV2

for notes, no period shows similar sincerity to period

for texts, no period shows higher sincerity than period
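An interaction can be read off cell means as a "difference of differences" between simple effects. The cell means below are made up to match the pattern described above; they are not data from any study.

```python
# Hypothetical cell means (sincerity ratings) for a 2 x 2 design.
# These numbers are invented for illustration only.
cell_means = {
    ("note", "no period"): 4.0,
    ("note", "period"): 4.0,
    ("text", "no period"): 4.5,
    ("text", "period"): 3.5,
}

def simple_effect(medium):
    """Effect of punctuation (no period minus period) at one level of medium."""
    return cell_means[(medium, "no period")] - cell_means[(medium, "period")]

note_effect = simple_effect("note")  # 0.0: similar sincerity
text_effect = simple_effect("text")  # 1.0: no period rated higher

# Difference of differences: a nonzero value means the punctuation
# effect depends on the medium, which is the pattern of an interaction.
interaction = text_effect - note_effect
```

This only describes the pattern in the means. Whether the interaction is statistically significant still requires an inferential test.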

why do we care about interactions

factorial designs can test for

boundary conditions (limits)- under what setting is something applicable

moderator- when, for whom, and under what conditions are two variables related, “it depends”

external validity- does this effect extend to other situations/groups

can be applied to both IVs

theories- what situations theories are operating in

describe # of IVs

“one-way” “two-way” etc

describe conditions within IV

__(# of levels)___ x __(# of levels)___

number of digits = number of IVs

post hoc tests

statistical analyses conducted after an ANOVA shows significant differences among three or more group means

3 IVs

*three-way interaction- when the two-way interaction between two of the IVs differs depending on the level of the third IV:

no interaction vs. interaction

interaction vs. different interaction

*most important to analyze

3 main effects

3 two-way interactions

quiz 4

correlation

a descriptive and inferential analysis we typically use to assess association claims

typically describe and predict how variables are naturally related in real world

coefficient r

-1 to +1, magnitude tells strength

measure of effect size
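For reference, r can be computed by hand as the summed products of deviations divided by the square root of the product of the summed squared deviations. The sketch below uses hypothetical data; the function name `pearson_r` is just a label for this example.

```python
from math import sqrt

def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length lists."""
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    # Sum of products of deviations: how much x and y vary together.
    sp = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    # Sums of squared deviations: how much each varies on its own.
    ss_x = sum((a - mean_x) ** 2 for a in x)
    ss_y = sum((b - mean_y) ** 2 for b in y)
    return sp / sqrt(ss_x * ss_y)

# Hypothetical data.
hours = [1, 2, 3, 4, 5]
r_perfect_pos = pearson_r(hours, [2, 4, 6, 8, 10])  # 1.0
r_perfect_neg = pearson_r(hours, [10, 8, 6, 4, 2])  # -1.0
r_moderate = pearson_r(hours, [3, 1, 4, 2, 5])      # 0.5
```

The sign gives the direction of the relationship and the magnitude gives its strength, so -1.0 is as strong an association as +1.0.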

construct and external validity- association claims

construct validity- quality of measures and manipulation

sometimes the factors that make it impossible to run an experiment also make construct validity high

external validity

sometimes the factors that make it impossible to run an experiment also make external validity high

moderator analysis

statistical validity- association claims

effect-size

precision (CI)- if the confidence interval contains 0, the result is not statistically significant

issues

outlier- can inflate (on trend line) or deflate (off trend line) a correlation

have larger impact on a small sample

non-linearity- curvilinear

establishing internal validity- non-experimental design

covariance- is there a correlation

*temporal precedence-

directionality problem- does method establish which variable came first in time

*internal validity-

third variable problem- is there a C variable that is associated with A and B independently

*limitations which make causal statements challenging

mediator

mechanism that explains a relationship

subjective ways to assess validity

face validity- does it accurately reflect the construct of interest

content validity- does it include all the important components of the construct

empirical ways to assess validity (self-report)

criterion validity- measure predicts real-world outcome

correlational or known-groups

convergent validity- measure should be more associated with a similar measure (can be negatively associated)

discriminant validity- measure is less associated with a dissimilar measure (not negatively associated)

test-retest reliability

first and second measurement should be similar, even when separated in time

relevant if expected to stay constant

interrater reliability

participants are rated similarly by two raters

relevant if >= 2 raters

internal reliability

participants give a consistent set of answers no matter how the questions have been phrased

items tapping the same theoretical variable correlate strongly with one another

AIC (average of the inter-item correlations in the whole scale) or Cronbach’s alpha (AIC accounting for # of items); usually want > .7/.8

relevant if survey/questions
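One way to see how alpha "accounts for # of items" is the standardized form of Cronbach's alpha, which is computed from the AIC and the number of items. The sketch below assumes that standardized form (alpha computed from raw item variances can differ somewhat), and the AIC of .30 is a hypothetical value.

```python
def standardized_alpha(n_items, aic):
    """Standardized Cronbach's alpha from the average inter-item correlation."""
    return (n_items * aic) / (1 + (n_items - 1) * aic)

# Hypothetical scale with an average inter-item correlation of .30.
alpha_5_items = standardized_alpha(5, 0.30)    # about .68
alpha_10_items = standardized_alpha(10, 0.30)  # about .81
```

With the same AIC, the 10-item scale clears the usual .7/.8 threshold while the 5-item scale does not, so a high alpha can partly reflect scale length and not only how strongly the items correlate.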

surveys and polls

helpful for

facts/demographics

attitudes beliefs

behavior

open-ended questions pros and cons

pros

rich information

cons

needs to be coded

difficult to interpret

forced-choice questions, pros and cons

Likert scale (1-5) or Likert-type scale

pros

easy to process

cons

limited info

making a survey: DONTS

make leading questions

make double-barreled questions (2 questions or 2 adjectives)

make negatively worded questions

provide too few (fewer than 5) or too many (more than 7) options