physics A michaelmas: experimental methods

29 Terms

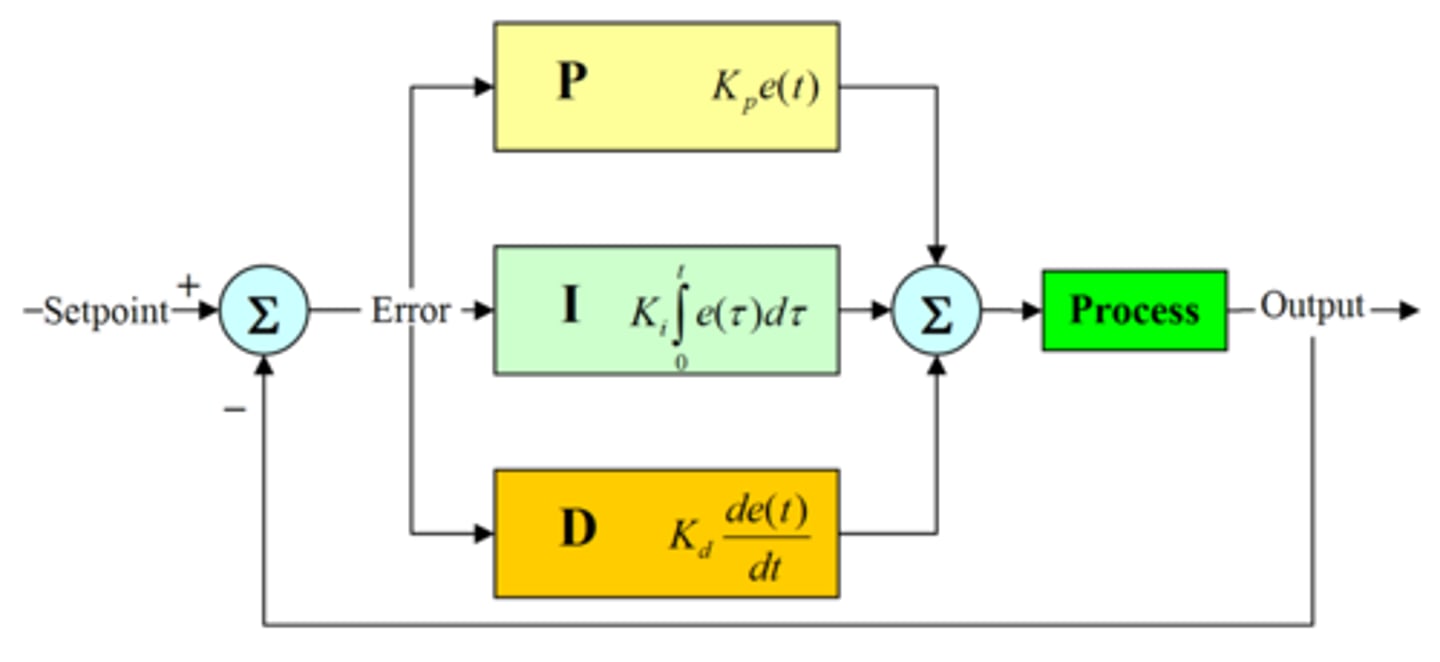

flow of a control loop, and formula for the output of each round in terms of K, G, and r

The reference r is compared with the fed-back output y to form the error e = r − y. The error goes to the controller K(ω), then the system G(ω), giving y = GKe.

Eliminating e: y = [GK / (1 + GK)]r

![Control loop block diagram](https://knowt-user-attachments.s3.amazonaws.com/243fe3ee-fa0b-416b-b8c0-31b890453e3a.jpg)
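As a rough illustration of the closed-loop formula, the sketch below evaluates y/r = GK / (1 + GK) at a few frequencies. The first-order low-pass system `plant` and the constant controller gain `K = 10` are assumptions made up for this example, not part of the card.

```python
def closed_loop(G: complex, K: complex) -> complex:
    """Closed-loop response y/r = GK / (1 + GK) for unity negative feedback."""
    loop_gain = G * K
    return loop_gain / (1 + loop_gain)


def plant(omega: float, tau: float = 1.0) -> complex:
    """Hypothetical first-order low-pass system, G(w) = 1 / (1 + i*w*tau)."""
    return 1 / (1 + 1j * omega * tau)


K = 10.0  # assumed frequency-independent (proportional) controller gain
for omega in (0.0, 1.0, 10.0, 100.0):
    T = closed_loop(plant(omega), K)
    print(f"omega = {omega:6.1f}   |y/r| = {abs(T):.3f}")
```

When the loop gain |GK| is large the output follows the reference closely (y/r approaches 1); when |GK| is small the output falls away from it.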

PID control: components, and the advantages and disadvantages of each

proportional: gain Kp = const (independent of ω). ✔ simple, cheap, fast response. ✘ finite gain as ω→0, so a steady-state error remains.

integral: gain ∝ 1/ω. ✔ eliminates steady-state error, excellent low-frequency tracking & disturbance rejection. ✘ slower response, can cause overshoot / instability.

derivative: gain ∝ ω. ✔ predictive, improves damping & transient response. ✘ amplifies high-frequency noise, rarely used alone.
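The steady-state behaviour of the proportional and integral terms can be sketched with a small time-domain simulation. The first-order system (dy/dt = (u − y)/τ), the gains, and the Euler time step below are all assumptions chosen for illustration.

```python
def simulate(kp, ki, kd=0.0, r=1.0, tau=1.0, dt=0.001, t_end=20.0):
    """Final output of an assumed first-order system under PID control.

    System: dy/dt = (u - y) / tau, integrated with simple Euler steps.
    """
    y = 0.0
    integral = 0.0
    prev_error = r - y
    for _ in range(int(t_end / dt)):
        error = r - y
        integral += error * dt                  # integral term accumulates error
        derivative = (error - prev_error) / dt  # derivative term reacts to change
        u = kp * error + ki * integral + kd * derivative
        y += dt * (u - y) / tau
        prev_error = error
    return y


print("P only :", round(simulate(kp=4.0, ki=0.0), 4))  # settles below r
print("P + I  :", round(simulate(kp=4.0, ki=2.0), 4))  # settles at r
```

With proportional control alone this system settles at Kp/(1 + Kp) of the reference, so a steady-state error remains; adding the integral term removes it.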

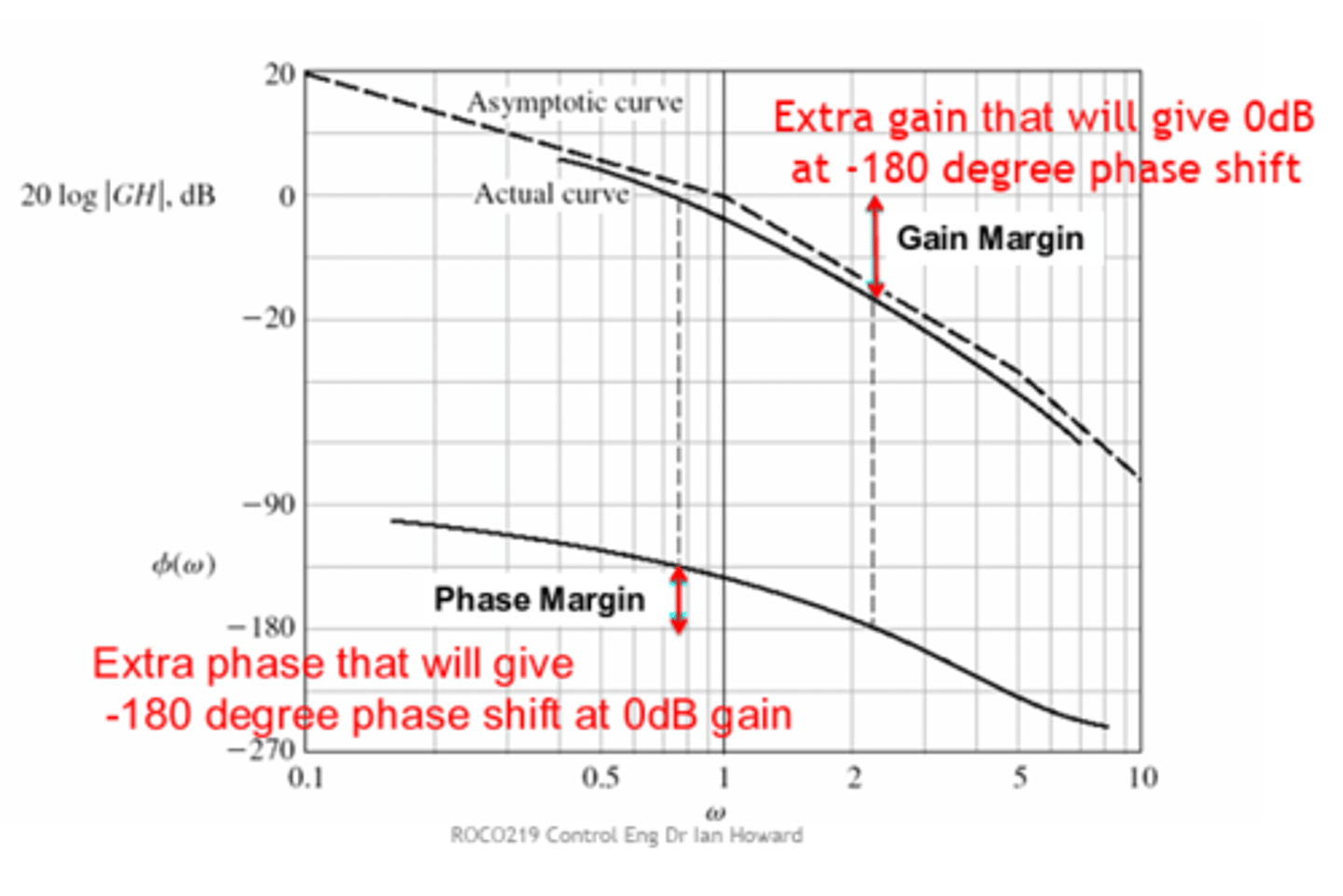

What does a Bode plot represent?

A log-log plot of gain and a lin-log plot of phase shift as a function of frequency.

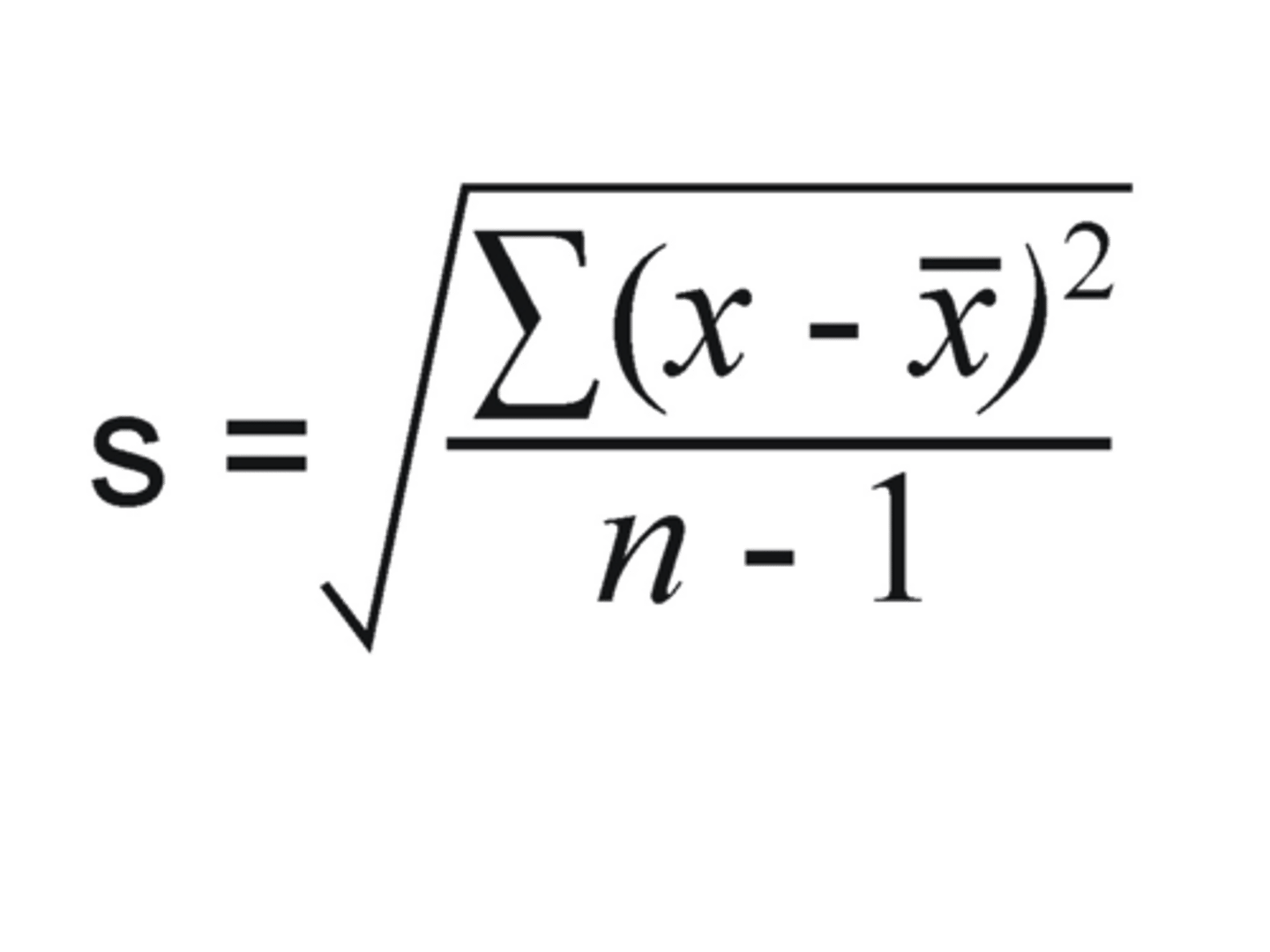

variance and standard deviation formula

σ² = ∑(xᵢ − x̄)²/(n − 1), where x̄ is the sample mean. The standard deviation is σ = √(σ²).

Why do we use N-1 instead of N when estimating random error in a single datum?

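Because the mean is itself estimated from the same data, which uses up one degree of freedom. Deviations from the sample mean are on average smaller than deviations from the true mean, so dividing by N would underestimate the variance.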

variance of a sum of independent quantities

The variance of the sum equals the sum of the variances.

standard error in the mean

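The standard deviation of the mean of N independent measurements: σ_mean = σ/√N. Averaging reduces the variance of the mean by a factor of N.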

standard error vs standard deviation

Standard deviation (the uncertainty, or error, on a single measurement) describes the spread within one sample, while standard error estimates how far the sample mean is likely to lie from the true population mean.
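A short numerical sketch of the last few cards, using Python's `statistics` module. The five measurements are made-up values for illustration only.

```python
import math
import statistics

data = [9.8, 10.1, 10.0, 9.9, 10.2]  # made-up repeated measurements

n = len(data)
mean = statistics.fmean(data)
var_unbiased = statistics.variance(data)  # divides by n - 1
var_biased = statistics.pvariance(data)   # divides by n (underestimates)
std = math.sqrt(var_unbiased)             # spread of a single measurement
std_error = std / math.sqrt(n)            # uncertainty on the mean

print(f"mean = {mean:.3f}")
print(f"variance (n-1) = {var_unbiased:.4f}, variance (n) = {var_biased:.4f}")
print(f"standard deviation = {std:.4f}, standard error = {std_error:.4f}")
```

The standard error is smaller than the standard deviation by a factor √n, which is why repeating a measurement improves the estimate of the mean.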

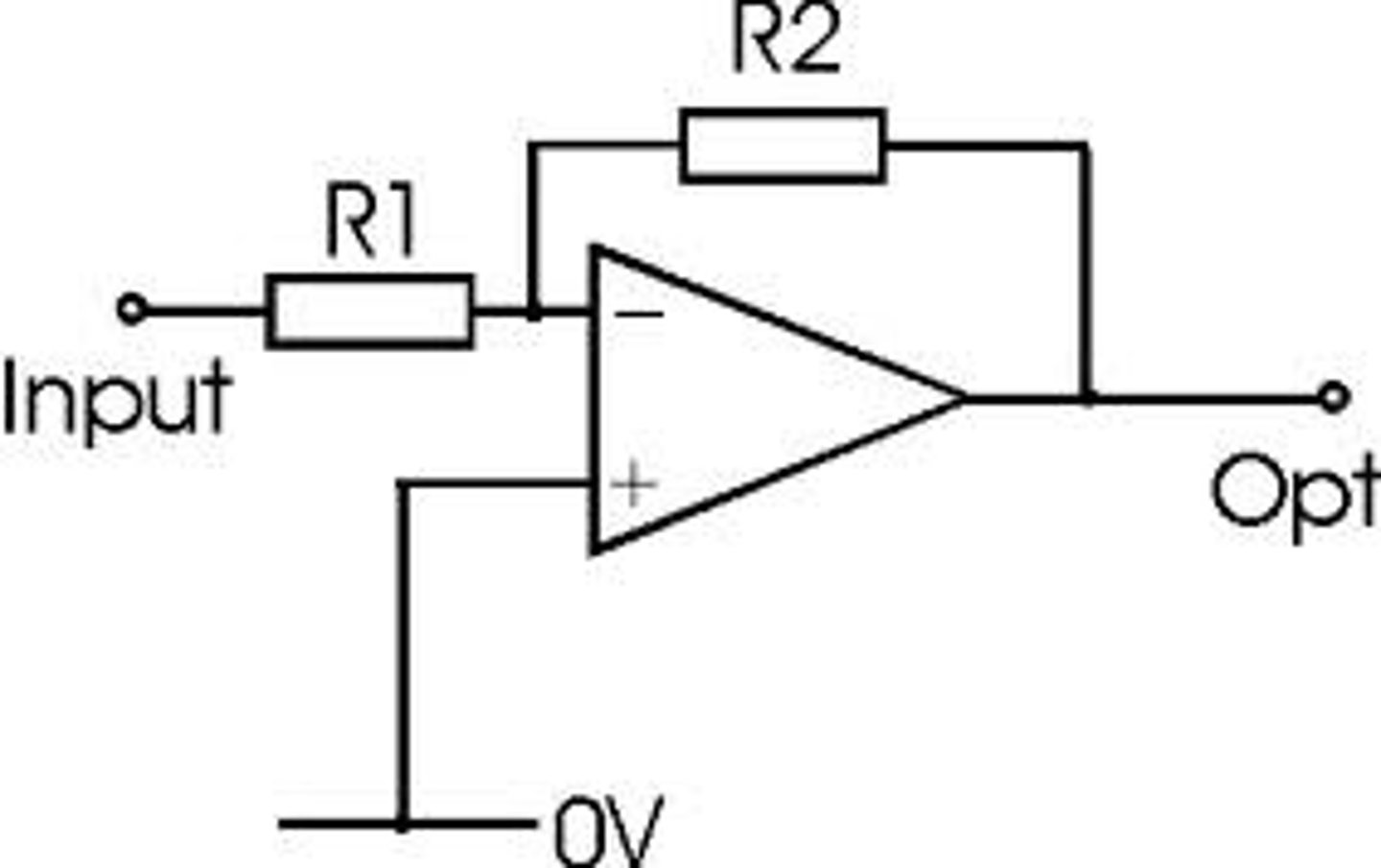

op amps:

Vout relation to A,

golden rules

Vout = A( V⁺ - V⁻)

The inputs draw no current.

With negative feedback, V⁺ = V⁻ unless the output is saturated (Vout ≈ Vsupply).

What is the output voltage equation for a simple inverting amplifier?

Vout = - (R2/R1) * Vin.
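The sketch below compares the ideal inverting gain with the gain for a finite open-loop gain A, obtained by solving Vout = A(V⁺ − V⁻) with no input current and V⁺ grounded. It assumes R1 is the input resistor and R2 the feedback resistor; the component values are made up.

```python
def inverting_gain_ideal(r1: float, r2: float) -> float:
    """Ideal inverting amplifier gain, Vout/Vin = -R2/R1."""
    return -r2 / r1


def inverting_gain_finite(r1: float, r2: float, a: float) -> float:
    """Gain for finite open-loop gain A (V+ grounded, inputs draw no current)."""
    ideal = -r2 / r1
    return ideal / (1 + (1 + r2 / r1) / a)


r1, r2 = 1e3, 10e3  # assumed values in ohms
print("ideal      :", inverting_gain_ideal(r1, r2))
print("A = 1e5    :", inverting_gain_finite(r1, r2, 1e5))
print("A = 1e2    :", inverting_gain_finite(r1, r2, 1e2))
```

For large A the finite-gain result approaches −R2/R1, which is the limit the golden rules describe.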

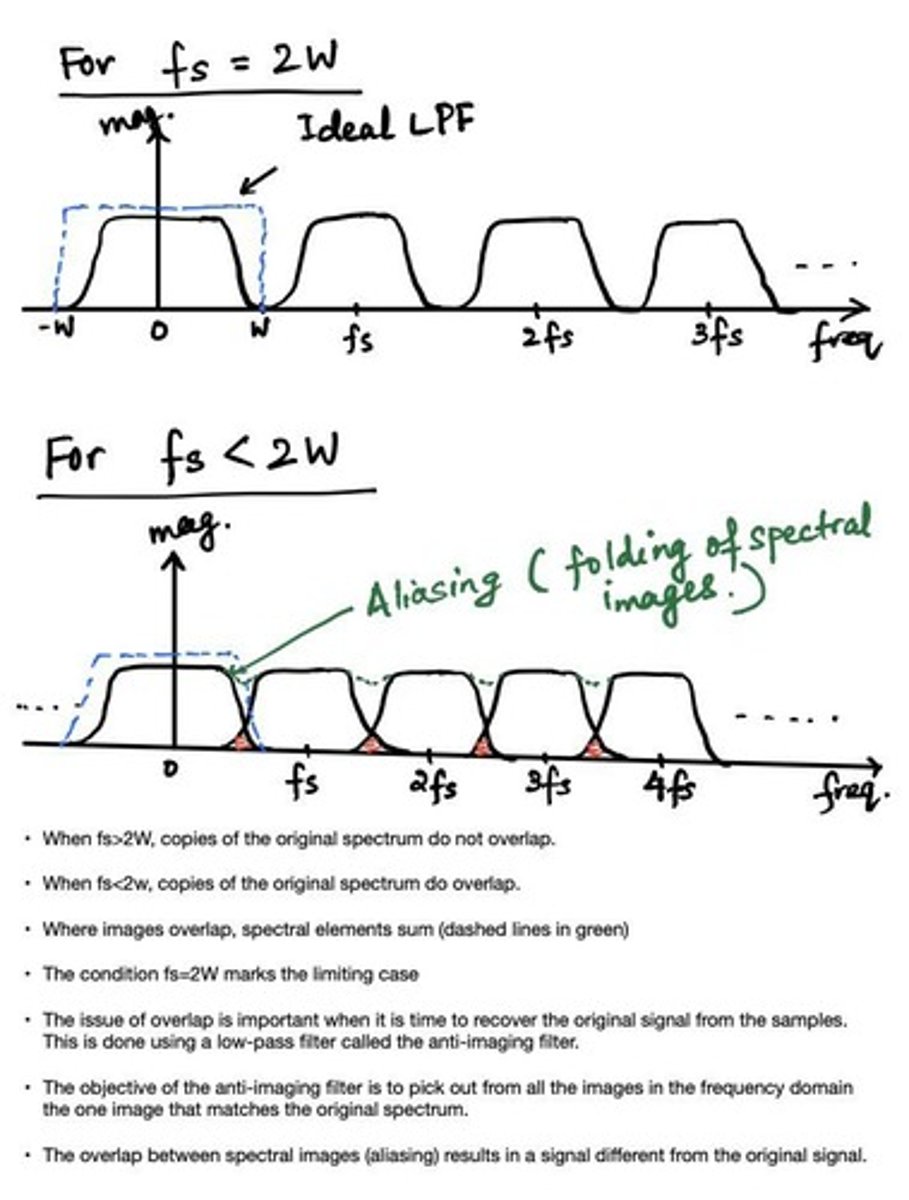

What is the Nyquist criterion?

The sampling frequency must be at least twice the highest frequency present in the signal (at least two samples per cycle) to avoid aliasing.

Convolution of two functions

h(z) = f*g = ∫f(t)g(z-t)dt from -inf to +inf.
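For sampled data the integral becomes a sum. A minimal discrete sketch, with made-up input sequences:

```python
def convolve(f, g):
    """Discrete convolution h[n] = sum over k of f[k] * g[n - k]."""
    h = [0.0] * (len(f) + len(g) - 1)
    for i, fi in enumerate(f):
        for j, gj in enumerate(g):
            h[i + j] += fi * gj  # f[i] * g[j] contributes to output index i + j
    return h


f = [1.0, 2.0, 3.0]
g = [0.0, 1.0, 0.5]
print(convolve(f, g))
```

Swapping the arguments gives the same result, reflecting f*g = g*f.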

What is aliasing in signal processing?

higher frequencies are misrepresented as lower frequencies due to insufficient sampling.
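A small sketch of aliasing: a 9 Hz sine sampled at 10 Hz produces exactly the same samples as an (inverted) 1 Hz sine. The frequencies and the helper `alias_frequency` are chosen for illustration.

```python
import math


def alias_frequency(f: float, fs: float) -> float:
    """Apparent frequency of a tone at f when sampled at rate fs."""
    return abs(f - fs * round(f / fs))


fs = 10.0      # sampling rate in Hz
f_true = 9.0   # signal frequency in Hz, above fs / 2
f_alias = alias_frequency(f_true, fs)

# Samples of the true tone and of the sign-flipped low-frequency alias
samples_true = [math.sin(2 * math.pi * f_true * n / fs) for n in range(20)]
samples_alias = [-math.sin(2 * math.pi * f_alias * n / fs) for n in range(20)]

print("apparent frequency:", f_alias, "Hz")
print("max sample difference:",
      max(abs(a - b) for a, b in zip(samples_true, samples_alias)))
```

A tone below fs/2, such as 4 Hz here, is returned unchanged by `alias_frequency`.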

autocorrelation function meaning and formula

Cff(𝜏) = ∫f(t)f(t+𝜏)dt

how similar a function remains to itself over time

Fourier transform

F[f(t)] = 1/√(2π) ∫f(t)exp(-iωt)dt

a continuous signal f(t) total energy given by Parseval's theorem

E = ∫|f(t)|²dt = ∫|g(ω)|²dω, where g(ω) is the Fourier transform of f(t)
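Parseval's theorem can be checked numerically with a discrete analogue. The sketch below uses a unitary DFT (normalised by 1/√N, mirroring the 1/√(2π) convention of the Fourier transform card) on a made-up Gaussian pulse.

```python
import cmath
import math


def unitary_dft(f):
    """Discrete Fourier transform with 1/sqrt(N) normalisation."""
    n = len(f)
    return [
        sum(f[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        / math.sqrt(n)
        for k in range(n)
    ]


# Made-up test signal: a Gaussian pulse sampled at 32 points
signal = [math.exp(-0.5 * ((t - 16) / 3.0) ** 2) for t in range(32)]
spectrum = unitary_dft(signal)

energy_time = sum(abs(x) ** 2 for x in signal)
energy_freq = sum(abs(X) ** 2 for X in spectrum)
print(energy_time, energy_freq)
```

The two sums agree to floating-point precision: the total energy is the same in the time and frequency domains.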

Oversampling meaning

If the signal contains random noise, sampling faster than strictly necessary gives more measurements, and averaging N samples improves the S/N by a factor of √N in amplitude (N in power). It also reduces the quantization error for a finite number of quantizing levels.
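A quick Monte Carlo sketch of the averaging effect: the scatter of a reading averaged over N noisy samples falls roughly as 1/√N in amplitude. The signal level, Gaussian noise model, and trial count are assumptions for this example.

```python
import random
import statistics

random.seed(0)  # fixed seed so the run is repeatable


def averaged_reading(n_samples, signal=1.0, noise_std=1.0):
    """Mean of n_samples noisy measurements of a constant signal."""
    readings = [signal + random.gauss(0.0, noise_std) for _ in range(n_samples)]
    return statistics.fmean(readings)


def scatter_of_mean(n_samples, trials=2000):
    """Standard deviation of the averaged reading over many repeated trials."""
    means = [averaged_reading(n_samples) for _ in range(trials)]
    return statistics.stdev(means)


for n in (1, 4, 16, 64):
    print(f"N = {n:3d}   scatter of average = {scatter_of_mean(n):.3f}")
```

Each fourfold increase in N roughly halves the scatter, consistent with a √N improvement in amplitude signal-to-noise.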

phase and gain margins meaning

gain margin: at the frequency where the phase reaches 180°, how far is the gain below 1?

phase margin: at the highest frequency with unity gain, how far is the phase from 180°?

noise types by power spectral density

White noise: psd = const. Fully uncorrelated.

Brownian noise: psd ∝ 1/ω². Results e.g. from temporal integration of white noise.

Pink noise: psd ∝ 1/ω. Arises e.g. from the movement of defects.

Johnson(-Nyquist) noise: white noise specifically due to thermal fluctuations. psd ∝ k_B T. Reduced by cooling.

Phase sensitive detection

Multiplies a sine wave by a square wave with a known phase difference (and identical frequency to the reference). This results in the wave being flipped for half of its period, with the phase difference determining the average of the resulting signal:

Vout = (Vs G/2) cos(φ).

Since noise generally has a very different frequency, after mixing it averages down to zero unless it is very close to ωr.
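A numerical sketch of the mixing-and-averaging step, taking G = 1 as an assumption. The averaged output is proportional to Vs cos(φ) in both cases shown, but the prefactor depends on the reference waveform: 1/2 for a unit-amplitude sine reference and 2/π for a ±1 square-wave reference.

```python
import math


def psd_output(v_s, phi, reference="square", m=20_000):
    """Average of signal * reference over one full period (gain G = 1 assumed)."""
    total = 0.0
    for k in range(m):
        theta = 2 * math.pi * (k + 0.5) / m  # midpoint samples over one period
        signal = v_s * math.sin(theta + phi)
        if reference == "square":
            ref = 1.0 if math.sin(theta) >= 0 else -1.0  # +/-1 square wave
        else:
            ref = math.sin(theta)                        # unit sine reference
        total += signal * ref
    return total / m


for phi_deg in (0, 60, 90):
    phi = math.radians(phi_deg)
    print(f"phi = {phi_deg:2d} deg   sine ref: {psd_output(1.0, phi, 'sine'):.4f}"
          f"   square ref: {psd_output(1.0, phi, 'square'):.4f}")
```

At φ = 90° the output vanishes for either reference, which is why the phase of the reference is normally adjusted to maximise the signal.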

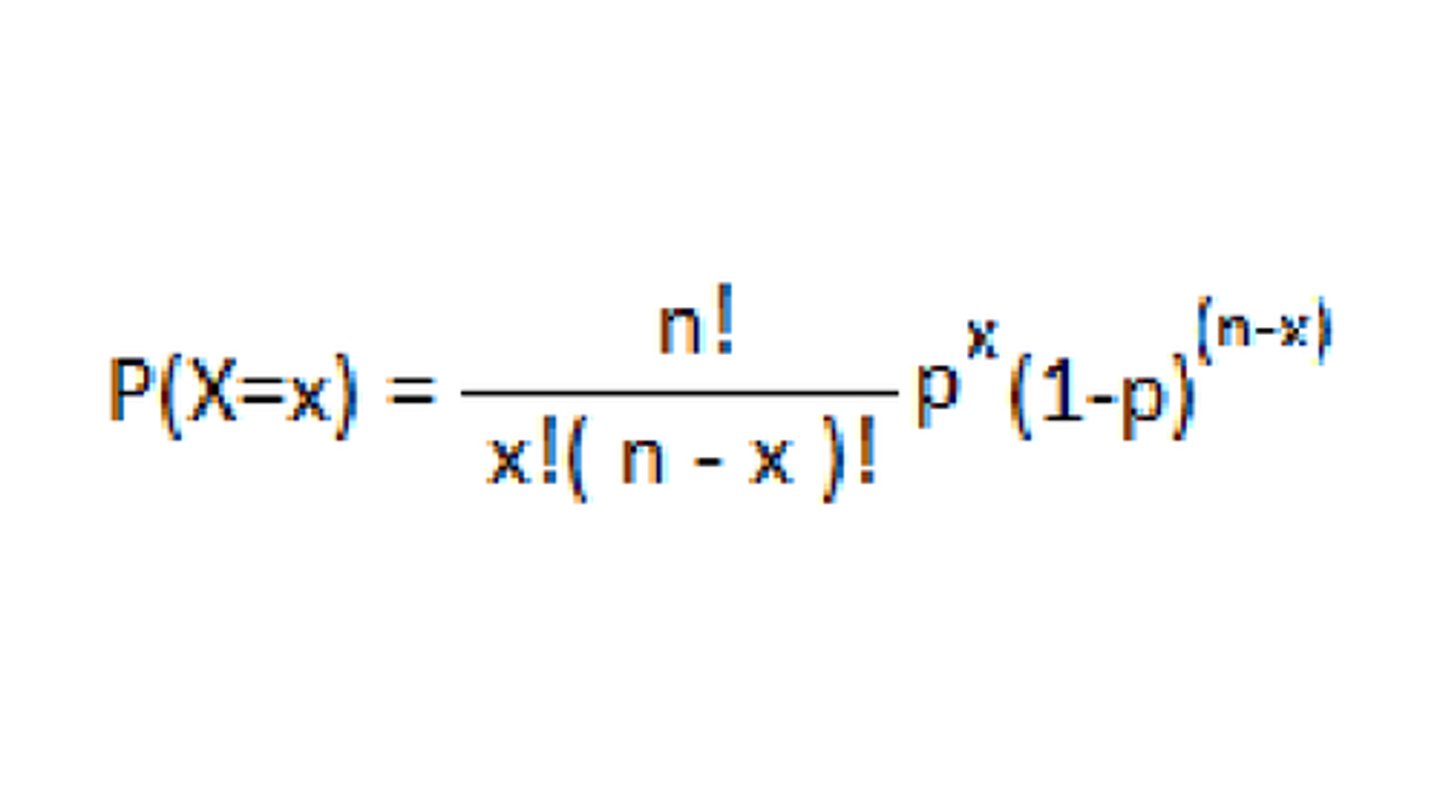

Binomial Probability Distribution: when to use

formula

mean

variance

identical trials with only 2 possible outcomes

(picture) with p = success probability per trial, N = number of trials, x = number of successes

mean(x) = Np

var(x) = Np(1 − p)
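A sketch that evaluates the standard binomial probability P(x) = C(N, x) pˣ (1 − p)^(N − x) and checks its normalisation, mean Np, and variance Np(1 − p) numerically. The values N = 10 and p = 0.3 are arbitrary.

```python
import math


def binomial_pmf(x: int, n: int, p: float) -> float:
    """Probability of x successes in n identical trials with success prob p."""
    return math.comb(n, x) * p**x * (1 - p) ** (n - x)


n, p = 10, 0.3  # arbitrary example values
probs = [binomial_pmf(x, n, p) for x in range(n + 1)]

total = sum(probs)
mean = sum(x * px for x, px in enumerate(probs))
variance = sum((x - mean) ** 2 * px for x, px in enumerate(probs))

print(f"sum = {total:.6f}, mean = {mean:.6f}, variance = {variance:.6f}")
```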

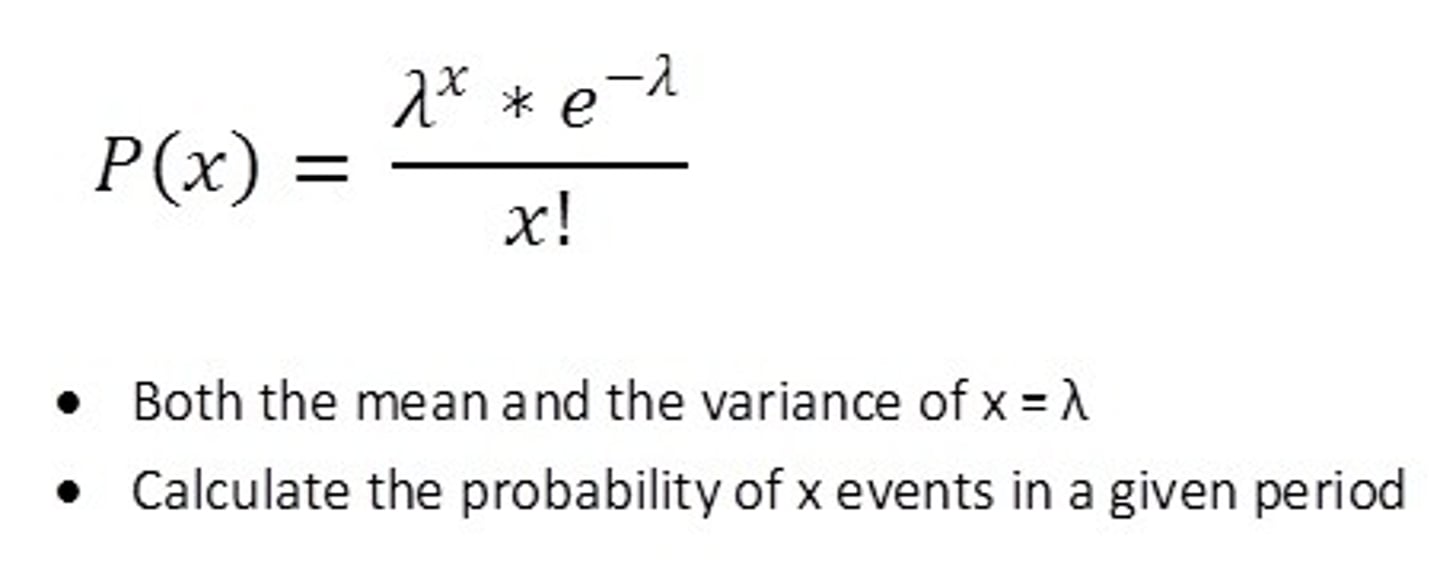

Poisson Probability Distribution:

when to use

formula

mean

variance

we know the number of outcomes, but not the number of trials (e.g. counting light flashes)

λ events/time interval,

x is the number of events
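A sketch using the standard Poisson form P(x) = λˣ e^(−λ) / x!, checking numerically that the mean and the variance both equal λ. The rate λ = 4 and the cut-off of 60 terms are choices made for this example.

```python
import math


def poisson_pmf(x: int, lam: float) -> float:
    """Probability of observing x events when lam are expected per interval."""
    return lam**x * math.exp(-lam) / math.factorial(x)


lam = 4.0  # arbitrary example rate
probs = [poisson_pmf(x, lam) for x in range(60)]  # tail beyond 60 is negligible

total = sum(probs)
mean = sum(x * px for x, px in enumerate(probs))
variance = sum((x - mean) ** 2 * px for x, px in enumerate(probs))

print(f"sum = {total:.6f}, mean = {mean:.6f}, variance = {variance:.6f}")
```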

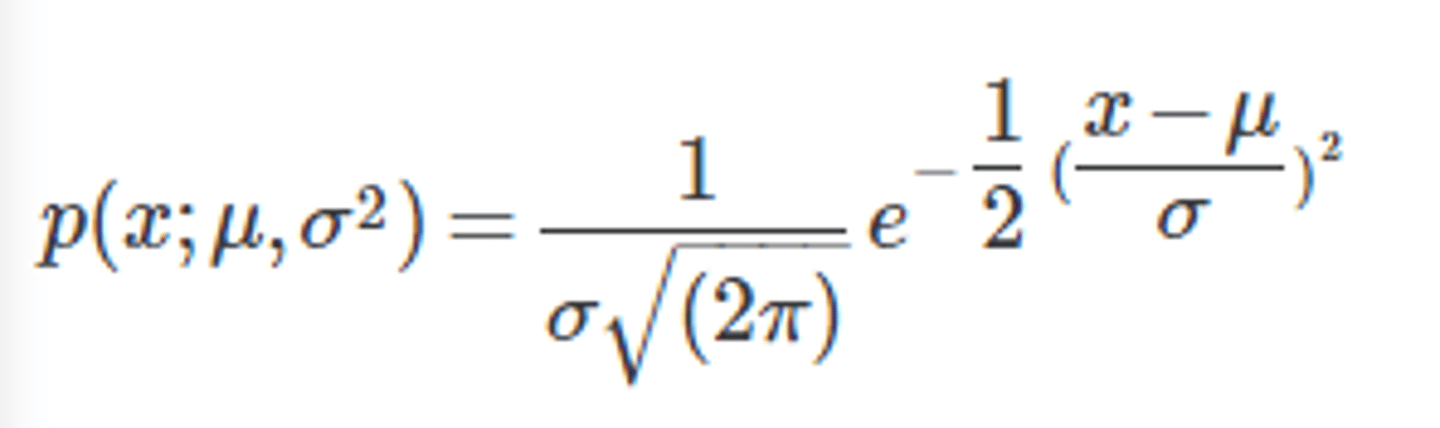

Gaussian:

when to use

formula

normalisation

mean

variance

∫exp(-ax²)dx

many random errors

∫Pdx =1 from -inf to +inf.

µ

σ²

∫exp(-ax²)dx = √(π/a)

expected value using probability density

Shot Noise meaning and Equation

Poisson statistics give the standard deviation of a current averaged over a time ∆t as ∆I = √N × e/∆t, where N is the number of electrons arriving in ∆t.
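A worked number for the shot-noise formula, assuming a 1 µA current averaged over 1 ms (values chosen for illustration). Since N = I∆t/e, the same result can be written as ∆I = √(eI/∆t).

```python
import math

E_CHARGE = 1.602176634e-19  # elementary charge in coulombs


def shot_noise(current: float, dt: float) -> float:
    """Standard deviation of a current averaged over dt, from Poisson counting."""
    n_electrons = current * dt / E_CHARGE   # mean number of electrons in dt
    return math.sqrt(n_electrons) * E_CHARGE / dt


current, dt = 1e-6, 1e-3  # assumed: 1 microamp averaged over 1 millisecond
delta_i = shot_noise(current, dt)
print(f"delta I = {delta_i:.3e} A  (relative: {delta_i / current:.1e})")
```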

square of residuals

SR(data,a) = Σ(y_i - f(x_i,a))²

where a = (m,c)

chi-squared

χ² = Σ[(y_i - f(x_i,a))/σ_i]²

for constant σ,

χ² = SR/σ²
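A minimal sketch computing the sum of squared residuals and chi-squared for a straight-line model f(x, a) = mx + c, and confirming that chi-squared reduces to SR/σ² when every point has the same uncertainty. The data, uncertainty, and trial parameters are made up.

```python
def model(x, a):
    """Straight-line model with parameters a = (m, c)."""
    m, c = a
    return m * x + c


def sum_squared_residuals(xs, ys, a):
    return sum((y - model(x, a)) ** 2 for x, y in zip(xs, ys))


def chi_squared(xs, ys, sigmas, a):
    return sum(((y - model(x, a)) / s) ** 2 for x, y, s in zip(xs, ys, sigmas))


xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.1, 2.9, 5.2, 6.8]   # made-up measurements
sigma = 0.2                 # same uncertainty on every point (assumed)
a = (2.0, 1.0)              # trial parameters (m, c)

sr = sum_squared_residuals(xs, ys, a)
chi2 = chi_squared(xs, ys, [sigma] * len(xs), a)
print(f"SR = {sr:.4f}, chi-squared = {chi2:.4f}, SR / sigma^2 = {sr / sigma**2:.4f}")
```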

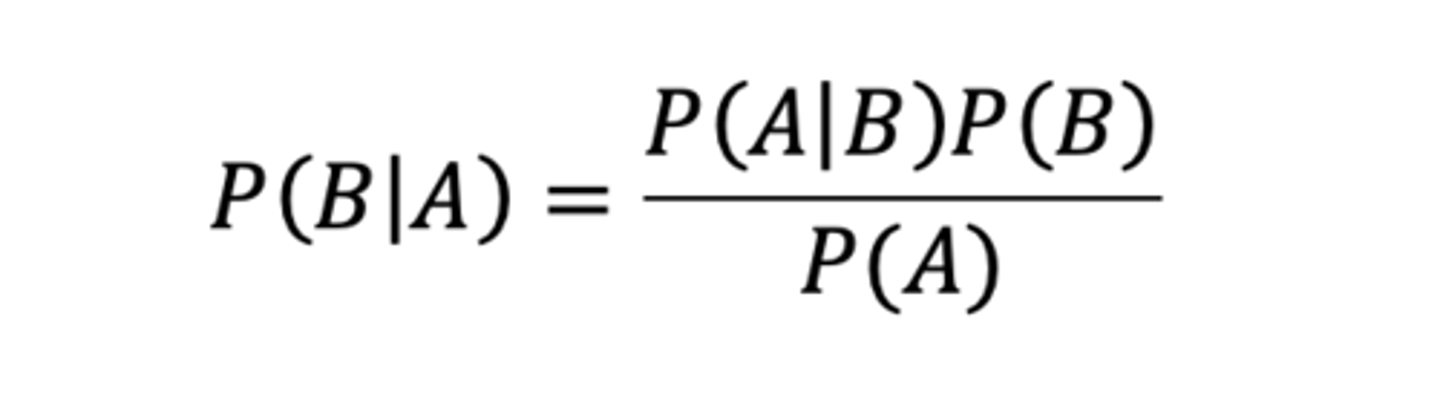

Bayes' theorem

sometimes they want you to make extra assumptions

fitted m and c of a line from data

m̂ = (⟨xy⟩ − ⟨x⟩⟨y⟩) / (⟨x²⟩ − ⟨x⟩²)

= Cov(x, y) / Var(x)

ĉ = (⟨x²⟩⟨y⟩ − ⟨x⟩⟨xy⟩) / (⟨x²⟩ − ⟨x⟩²)

= ⟨y⟩ − m̂⟨x⟩

where ⟨·⟩ denotes the mean over the data points
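The mean-based formulas above translate directly into code. A minimal sketch for equally weighted points, with made-up data lying exactly on y = 2x + 1 so the result is easy to check:

```python
def fit_line(xs, ys):
    """Least-squares slope and intercept from sample means (equal weights)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    mean_xy = sum(x * y for x, y in zip(xs, ys)) / n
    mean_xx = sum(x * x for x in xs) / n

    m = (mean_xy - mean_x * mean_y) / (mean_xx - mean_x**2)  # Cov(x, y) / Var(x)
    c = mean_y - m * mean_x
    return m, c


xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]  # made-up points on y = 2x + 1
m, c = fit_line(xs, ys)
print(f"m = {m}, c = {c}")
```

The fitted line always passes through the point of means, since c is defined as the mean of y minus m times the mean of x.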