Intro to AI 2 - Puzzles and Search

1/46

There's no tags or description

Looks like no tags are added yet.

Name | Mastery | Learn | Test | Matching | Spaced | Call with Kai |

|---|

No analytics yet

Send a link to your students to track their progress

47 Terms

What is necessary when defining a search problem?

state description: an abstract representation of the current state of the puzzle

goal function: checks whether a state description satisfies the goal

What is a state space, in the context of a search problem?

a collection of all state descriptions of a puzzle

What is a Simple Exhaustive Search? How does it work?

it means you try out every single possible state of a puzzle and check them all with the goal function. you don’t use heuristics, nor constraints to reduce the number of states.

you do this until you’ve found the solution or until all states have been checked (if all have been checked and non satisfy the goal function, then there is no solution to the puzzle).

What is a Local Search? How does it work?

heuristic method for solving hard computational problem: you choose one state and try to improve it by maximizing a heuristic evaluation. similar to solving a puzzle by hand.

Local search algorithms move from solution to solution in the space of candidate solutions (the search space) by applying local changes, until a solution deemed optimal is found or a time bound is elapsed.

if you keep track of the solutions you’ve tried, you will either find a solution or the certainty that there is not solution. without keeping track, if there is no solution, the program might run forever.

Heuristic = approach to problem solving that employs a pragmatic method that is not fully optimized, perfected, or rationalized, but is nevertheless "good enough" as an approximation or attribute substitution. Where finding an optimal solution is impossible or impractical, heuristic methods can be used to speed up the process of finding a satisfactory solution.

What are the advantages of the Local Search?

uses very little memory

quick

What are the disadvantages of the Local Search?

no guarantee for completeness (unless you keep track of the tried solutions)

no guarantee for optimality (that best/shortest solution is found)

What is the 8 Queen Problem?

it’s a puzzle where you need to put 8 queens on a chess board.

considering that a queen can move any number of tiles in horizontal, vertical and diagonal direction, all queens must be placed without them being in the hit-path of any of the other 7 queens.

What is an heuristic?

any approach to problem solving that employs a pragmatic method that is not fully optimized, perfected, or rationalized, but is nevertheless "good enough" as an approximation or attribute substitution.[6][7] Where finding an optimal solution is impossible or impractical, heuristic methods can be used to speed up the process of finding a satisfactory solution

comes from Greek. in search algorithms, it’s often a function that estimates how close to the goal a certain state is.

On the example of the 8-Queens-Problem, how does the basic idea of an heuristic work?

heuristic = the number of queens, that are in each others hit paths, is calculated for each state.

the algorithm follows the state where the heuristic is getting smaller until it reaches the goal (zero).

What is a different name for Hill-climbing Search?

Greedy Local Search

How does the Hill-Climbing Search work?

local optimization algorithm that continuously moves in the direction of increasing value (uphill) to find the best solution. Starting from a random solution, it evaluates neighboring states and moves to the best neighbor, repeating until no superior neighbors exist, indicating a local maximum

(starting with the current state, the algorithm calculates all possible states that can exist, if one move is made. from these possible states it chooses the one with the higher evaluation (the best one). it does this until all possible future states would be worse than the current one.)

What is the main problem of the Hill-Climbing Search?

it finds Local Optima (not Global): the algorithm stops as soon as it has reached the top of a hill, even if it isn’t the highest hill there is.

What is Random Restart Hill-Climbing?

its a randomized variant of the Hill-Climbing Search. once the algorithm has reached a top, it starts again with a different starting position. after x iterations of this, a solution should be found.

a meta-heuristic algorithm designed to overcome local optima in optimization problems by executing multiple, independent hill-climbing searches. Each run begins from a randomly generated initial state, and the best overall result among all trials is selected. This approach turns a local search into a global search strategy

What is Stochastic Hill-Climbing?

it is a randomized variant of the Hill-Climbing Search. Instead of always moving the the state with the best evaluation, you select a random state to move to.

a local search optimization algorithm that improves solutions by randomly selecting from available "uphill" moves (better neighbors) rather than always choosing the steepest ascent

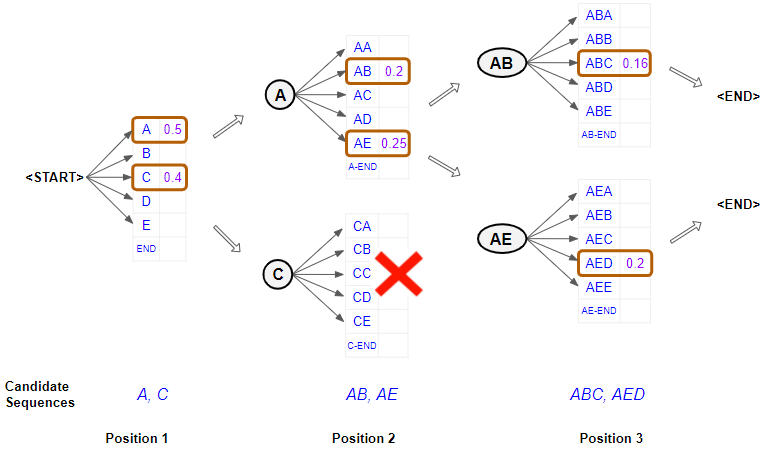

What is a Beam Search? How does it work?

a heuristic search algorithm that explores a graph by expanding the most promising node in a limited set. Beam search is a modification of best-first search that reduces its memory requirements.

it is similar to the Hill-Climbing Search, but instead of moving to the one best possible future state, the algorithm moves to the k best possible future states.

k = number of states it should move to = beam size

the information from different beams is combined.

What is a problem of the Beam Search?

it suffers from lack of diversity of the k-number of states.

means: if one state has better successors, it’s chosen more often than other states.

therefore, it is often no more effective than Hill-Climbing

What is Stochastic Beam Search?

randomized variant of the Beam Search. it chooses k-number of successors at random.

What does the term “Annealing” mean? Where does it come from?

it’s comes from metallurgy and material science. it’s a heat treatment of materials to change its properties like strength and hardness by heating and then cooling it very slowly.

What is Simulated Annealing Search? How does it work?

probabilistic, metaheuristic algorithm used to find the global optimum of a function in a large search space

The algorithm works by navigating a search space through a process of random moves that allow for both improvement and, less frequently, degradation.

Initialize: Start with an initial candidate solution and a high initial temperature T

Perturb: Make a small, random change (perturbation) to the current solution to create a neighbor.

Evaluate: Calculate the change in cost of the new solution compared to the old one.

Accept/Reject:

If the new solution is better, accept it.

If it is worse, accept it with a probability (Metropolis acceptance criterion).

Cooling: Reduce the temperature slowly based on a cooling schedule (e.g., linear or geometric).

Terminate: Repeat steps 2-5 until a maximum number of iterations is reached or the solution converges.

What is a Genetic Algorithm? How does it work?

In a genetic algorithm, a population of candidate solutions (called individuals, creatures, organisms, or phenotypes) to an optimization problem is evolved toward better solutions. Each candidate solution has a set of properties (its chromosomes or genotype) which can be mutated and altered; traditionally, solutions are represented in binary as strings of 0s and 1s, but other encodings are also possible

The evolution usually starts from a population of randomly generated individuals, and is an iterative process, with the population in each iteration called a generation. In each generation, the fitness of every individual in the population is evaluated; the fitness is usually the value of the objective function in the optimization problem being solved. The more fit individuals are stochastically selected from the current population, and each individual's genome is modified (recombined and possibly randomly mutated) to form a new generation. The new generation of candidate solutions is then used in the next iteration of the algorithm. Commonly, the algorithm terminates when either a maximum number of generations has been produced, or a satisfactory fitness level has been reached for the population.

In regards to Genetic Algorithms, what is Selection?

In Genetic Algorithms (GAs), Selection is the process of choosing individuals (candidate solutions) from the current population to serve as parents for the next generation. This operator simulates "survival of the fittest" in natural selection, aiming to improve the overall population fitness by favoring high-quality solutions

In regards of Genetic Algorithms, what is Mutation?

In Genetic Algorithms (GAs), mutation is a genetic operator used to maintain genetic diversity from one generation of a population to the next. It involves making small, random, and spontaneous alterations to one or more gene values in a chromosome (solution

In regards to Genetic Algorithms, what is Cross-Over?

a genetic operator used to combine the genetic information (genes/features) of two parent solutions to generate new offspring. It is the primary exploration operator in GAs, mimicking biological sexual reproduction to create new solutions that might inherit good traits from both parents, with the goal of finding a superior solution

Not all pairs are crossed over. The algorithm uses a probability rate (typically high, e.g., 0.7 to 0.9) to decide whether to perform crossover or simply clone the parents

What are the advantages of Genetic Algorithms?

genetic algorithms are better suited to finding the global best solution in large, complex search spaces

hey are highly efficient because they do not require derivative information and can handle parallel, discrete, and non-differentiable objective functions

Who popularized Genetic Programming?

John Koza

Who is John R Koza?

computer scientist known for pioneering genetic programming

What is Genetic Programming?

evolutionary algorithm, an artificial intelligence technique mimicking natural evolution, which operates on a population of programs. It applies the genetic operators selection according to a predefined fitness measure, mutation and crossover.

What are the Towers of Hanoi?

The Towers of Hanoi is a classic mathematical puzzle invented by Édouard Lucas in 1883, featuring three rods and multiple disks of different sizes. The objective is to move the entire stack from one rod to another, obeying three rules: only one disk moves at a time, only the top disk can be moved, and no larger disk can be placed on a smaller one

What is Divide-and-Conquer in regards to problem solving?

a fundamental problem-solving technique in computer science and mathematics that breaks a complex problem into smaller, manageable sub-problems. These sub-problems are solved recursively (conquered) and then combined to form the final solution, often leading to more efficient, scalable, and faster algorithms compared to brute-force approaches

What are Constraint Satisfaction Problems?

mathematical questions defined as a set of objects whose state must satisfy a number of constraints or limitations.

a mathematical framework for solving combinatorial problems by finding values for a set of variables, chosen from finite domains, that satisfy specific constraints. Defined as a triplet of variables, domains, and constraints V D C:

Variables

Domains = set of possible values for Variables

constraints = rules restricting the values variables can take simultaneously

Solution: complete assignment of values to Variables that satisfies Constraints

CSPs are central to artificial intelligence, used for scheduling, logistics, and planning

What are some examples of Constraint Satisfaction Problems in the real world?

finding values for variables (e.g., time slots, routes) that satisfy specific rules (e.g., no overlaps, capacity limits). Common examples include scheduling (exams, employees), logistics (vehicle routing), product configuration, map coloring, and puzzles like Sudoku

What is a Constraint Graph?

graphical representation of a Constraint Satisfaction Problem (CSP), where nodes represent variables and edges connect nodes that share a direct constraint.

In a map-coloring problem, each country is a node, and an edge connects countries that share a border, enforcing a constraint that they cannot share the same color.

What types of constraints are there for the Constraint Satisfaction Problem?

Unary Constraints: Involve a single variable, restricting its specific domain (e.g. A =/= Green).

Binary Constraints: Involve pairs of variables, establishing a relationship between them (e.g. A=/=B, A>B). These are often represented as a constraint graph.

Higher-Order/Non-binary Constraints: Involve three or more variables, often seen in complex, real-world problems (e.g.X1+X2+X3<X4).

Global Constraints: Involve an arbitrary number of variables, describing common patterns or relationships

Soft/Preference Constraints: Express preferences rather than strict requirements, used in optimization to define a "good" solution rather than just a valid one

By Structure&Domain:

Implicit/Explicit Constraints: Explicit constraints list all valid value combinations, while implicit constraints provide a functional relationship

Linear vs. Non-Linear: Constraints can be linear functions (simpler to solve) or non-linear.

Discrete/Finite Domains: Constraints applied to sets of finite possibilities (e.g., Map Coloring, N-Queens)

What are hard and soft constraints (in Constraint Satisfaction Problems)?

hard constraints are mandatory rules that must be satisfied for a solution to be valid. Soft constraints are preferences or desirable goals that should be met but can be violated at a cost, often used in optimization to find the best possible, rather than just any, solution

When solving Constraint Satisfaction Problems, how does the principle of Search work?

systematically exploring the space of possible variable assignments to find a combination that satisfies all constraints. Because CSPs are generally NP-hard, this process often combines systematic search (backtracking) to explore partial solutions with inference (constraint propagation) to prune the search space

Backtracking is the most common algorithm for solving CSPs. It is a form of depth-first search that incrementally builds candidates for solutions and abandons a candidate ("backtracks") as soon as it determines the candidate cannot lead to a valid solution

When solving Constraint Satisfaction Problems, how does the principle of Constraint Propagation work?

iteratively reducing the possible values (domains) of variables based on constraints, rather than relying solely on brute-force search. It works by enforcing local consistency—ensuring that a value in a variable's domain is compatible with at least one value in the domain of neighboring variables—effectively pruning the search space to eliminate values that cannot be part of a valid solution

Initialization: The algorithm starts with the full initial domain of possible values for every variable.

Filtering (Reducing): When a variable's value is assigned or restricted, the constraint propagation algorithm examines all connected constraints. It removes any values from neighboring variables' domains that violate those constraints.

Propagation (Iterative Updating): When a variable's domain is reduced, this change is communicated to all constraints involving that variable. If these constraints then reduce the domains of other variables, this process repeats, "propagating" the reductions throughout the network.

Stable State (Fixed Point): The process continues until no further reductions can be made (a stable state is reached) or an empty domain is found, indicating that the current branch is invalid and requires backtracking

How do you calculate the number of possibilities in Naive Search?

In the Naive String Search algorithm (also called the Brute Force approach), the number of possibilities—specifically the number of possible starting positions or "shifts"—is calculated based on the lengths of the text and the pattern.

1. Number of Possible Shifts (Alignments)

The algorithm slides the pattern over the text one character at a time. The number of valid starting positions where the entire pattern can fit within the text is:

n - m + 1

n: Length of the text (haystack).

m: Length of the pattern (needle)

How can the complexity of Naive Search be reduced?

by using specific algorithms to avoid redundant character comparisons (skip redundant comparisons - character by character, )

What is Backtracking Search?

a depth-first algorithm used to find solutions to constraint satisfaction problems (CSPs) by incrementally building candidates. It abandons a partial candidate (backtracks) as soon as it determines the candidate cannot lead to a valid solution, making it more efficient than brute-force

What types of heuristics are there for Constraint Satisfaction Problems?

essential for reducing the search space and speeding up the resolution of NP-complete problems

Variable ordering h (choosing which variable to assign next): These determine the order in which variables are instantiated in a backtracking search

Value ordering h (choosing which value to assign to a chosen variable):

What types of General-purpose heuristics are there?

1. Core Cognitive Heuristics

These mental shortcuts are often used to make judgments under uncertainty:

Availability: Judging probability based on how easily examples come to mind.

Representativeness: Evaluating situations based on how well they match a mental prototype.

Anchoring and Adjustment: Relying too heavily on an initial piece of information.

Affect, Familiarity, Scarcity, & Simulation: Using emotions, past experience, perceived rarity, or ease of imagination to guide decisions.

2. Fast and Frugal Heuristics

Popularized by Gerd Gigerenzer, these strategies are designed for accuracy in uncertain environments, often outperforming complex models:

Recognition: Choosing the option that is recognized over the unknown.

Take-the-Best: Making decisions based on the first key cue that differentiates options.

Satisficing: Selecting the first option that meets a minimum threshold, rather than searching for the absolute best.

Default & Social Proof: Relying on pre-set options or copying the behavior of others.

3. Problem-Solving Heuristics

Techniques used to navigate complex tasks:

Trial and Error: Testing various actions until success.

Working Backward & Means-Ends Analysis: Starting from the goal or breaking down large problems into smaller sub-goals

What is Constraint Propagation?

an AI technique that reduces the search space in constraint satisfaction problems (CSPs) by iteratively eliminating inconsistent values from variable domains based on constraints. When one variable is fixed, the algorithm propagates this information to connected variables, narrowing their possible values, which can trigger further reductions

What is Node Consistency?

a fundamental, preprocessing technique in Constraint Satisfaction Problems (CSPs) where all unary constraints on a variable are satisfied by every value in its domain. It ensures that each node (variable) in the constraint graph is valid on its own, reducing search space by removing inconsistent values

Global consistency ensures uniformity across the entire system, dataset, or brand, adhering to a single standard worldwide or across all data structures

What is Local Consistency?

Local consistency in constraint satisfaction problems (CSPs) is a technique that enforces constraints locally across subsets of variables, rather than globally, to reduce the search space and prune impossible values. It simplifies complex problems by iteratively removing inconsistent values from variable domains that cannot satisfy constraints, improving efficiency

Local consistency focuses on maintaining uniformity within small, specific subsets or neighborhoods (e.g., nearby data points or specific local markets), ensuring immediate, adjacent elements match

What is Forward Checking?

a constraint satisfaction problem (CSP) algorithm that enhances backtracking search by checking future variables whenever a new variable is assigned. It removes inconsistent values from the domains of unassigned variables that conflict with the current assignment, allowing for early detection of potential failures and reduced search space

Forward checking checks only the constraints between the current variable and the future variables

What is Arc Consistency?

examining the arcs between pairs of variables (x, y). It removes those values from the domain of x that aren't consistent with the constraints between x and y. The algorithm keeps a collection of arcs that are yet to be checked; when the domain of a variable has any values removed, all the arcs of constraints pointing to that pruned variable (except the arc of the current constraint) are added to the collection. Since the domains of the variables are finite and either one arc or at least one value are removed at each step, this algorithm is guaranteed to terminate.

a preprocessing or constraint propagation technique used to solve Constraint Satisfaction Problems (CSPs) by removing inconsistent values from variable domains. A directed arc (X, Y) is consistent if, for every value in variable X's domain, there exists at least one value in Y's domain that satisfies the binary constraint between them

(For illustration, here is an example of a very simple constraint problem: X (a variable) has the possible values {0, 1, 2, 3, 4, 5}—the set of these values are the domain of X, or D(X). The variable Y has the domain D(Y) = {0, 1, 2, 3, 4, 5, 6, 7, 8, 9}. Together with the constraints C1 = "X must be even" and C2 = "X + Y must equal 4" we have a CSP that AC-3 can solve. Notice that the actual constraint graph representing this problem must contain two edges between X and Y since C2 is undirected but the graph representation being used by AC-3 is directed.

AC-3 solves the problem by first removing the non-even values from of the domain of X as required by C1, leaving D(X) = { 0, 2, 4 }. It then examines the arcs between X and Y implied by C2. Only the pairs (X=0, Y=4), (X=2, Y=2), and (X=4, Y=0) match the constraint C2. AC-3 then terminates, with D(X) = {0, 2, 4} and D(Y) = {0, 2, 4}.

What is Min-Conflicts Heuristic?

a local search technique used to solve Constraint Satisfaction Problems (CSPs) by iteratively repairing an initial solution. It works by selecting a variable involved in a conflict (violated constraint) and reassigning it a value that minimizes the total number of conflicts. This method is highly effective for large-scale scheduling