Exam 3: PBSI 340

Instrumental Learning

synonymous with response-outcome learning

learning in which the probability of a given behavior is altered by its consequences

the behavior is a tool “instrument” in producing the outcome

Operant Learning/Conditioning

A form of instrumental learning in which the organism specifically learns to manipulate its environment to achieve a desired outcome.

Subset of instrumental learning

All OL is IL, but not vice versa

Instrumental Criteria (Same as before)

The behavior modification depends on a form of neural plasticity

The modification depends on the organism’s experiential history

The modification outlasts the environmental conditions and the experience has a lasting effect on performance

Imposing a temporal relationship between a response and an outcome alters the response

Additional Operant Criteria (new)

The nature of the behavioral change is not constrained (either an increase OR decrease in response can be established)

The nature of the reinforcer is not constrained (a variety of outcomes can be used to produce the behavioral change)

Discrete-Trial Method

A form of instrumental learning where an organism can perform the behavior to earn an outcome only at specific times.

→ Ex: iClicker quizzes

Free-Operant Method

A form of instrumental learning where an organism can perform the behavior to earn an outcome at any time.

→ Ex: Checking socials

The type of instrumental learning depends on…

Nature of the reinforcer (+) or (-)

Relation to the behavior

Reward Learning/Positive Reinforcement

Behavior leads to an appetitive outcome.

increases likelihood of behavior

Punishment Learning/Positive Punishment

Behavior leads to an aversive outcome

decreases likelihood of behavior

Omission Learning/Negative Punishment

Behavior removes an appetitive outcome.

Decreases likelihood of behavior.

Ex: press → no food

Escape/Avoidance Learning or Negative Reinforcement

Behavior removes an aversive outcome.

Ex: shuttle → no shock

Increases likelihood of behavior
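
The four procedures above form a 2x2 table: is the outcome appetitive or aversive, and does the behavior produce it or remove it? A minimal Python sketch of that table follows. The function name and argument names are illustrative assumptions, not course terminology.

```python
def classify_contingency(outcome_is_appetitive: bool, behavior_produces_outcome: bool):
    """Return (procedure name, effect on the behavior) for one cell of the 2x2 table."""
    if behavior_produces_outcome:
        if outcome_is_appetitive:
            # Behavior leads to an appetitive outcome (press -> food)
            return ("reward learning (positive reinforcement)", "increases")
        # Behavior leads to an aversive outcome (press -> shock)
        return ("punishment learning (positive punishment)", "decreases")
    if outcome_is_appetitive:
        # Behavior removes an appetitive outcome (press -> no food)
        return ("omission learning (negative punishment)", "decreases")
    # Behavior removes an aversive outcome (shuttle -> no shock)
    return ("escape/avoidance learning (negative reinforcement)", "increases")


print(classify_contingency(outcome_is_appetitive=False, behavior_produces_outcome=False))
```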

Skinner Box

Animal presses lever → reward or punishment

Used for:

Reward: bar press → food

Omission: bar press → no food

Punishment: bar press → shock

Shuttle box

Animal moves compartments to avoid shock

Used for: Escape/Avoidance learning

Cannot use a Skinner box for escape/avoidance learning because of biological constraints

Learning occurs in…

a heterogeneous behavioral substrate

some action-outcome relationships are learned more easily than others

Contingency in Instrumental learning

Extra US’s undermine Pavlovian conditioning

Extra outcomes disrupt instrumental conditioning

Temporal Relationship

the timing between response and outcome matters (instrumental)

the timing between CS and US matters (Pavlovian)

Continuous Reinforcement (CRF)

every response is rewarded

1:1 ratio of behavior to reinforcer

Behavior always produces the reinforcer

Fixed Ratio (FRX)

X responses → reward

Stable X:1 ratio of behavior to reinforcer

X number of responses always produces the reinforcer

Variable Ratio (VRX)

unpredictable number of responses

Average of X:1 ratio of behavior to reinforcer

the number of responses needed to produce the reinforcer will vary, but average out to X

Fixed Interval (FIX)

reward after a fixed time

stable interval separates rewardable behaviors

behavior will only produce reinforcer after a constant amount of time has passed

Variable Interval (VIX)

reward after variable time

average interval separates rewardable behaviors

behavior will only produce reinforcer after a variable amount of time has passed
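
The schedule rules above can be sketched as small Python functions that decide whether a given response earns the reinforcer. This is only an illustration: the function names are hypothetical, and drawing VR requirements uniformly from 1 to 2X-1 is an assumed way to get an average of X, not something the notes specify. A VI schedule would work the same way with time intervals in place of response counts.

```python
import random


def fixed_ratio(response_count: int, x: int) -> bool:
    """FR-X: every X-th response is reinforced (CRF is the special case X = 1)."""
    return response_count % x == 0


def fixed_interval(response_time: float, last_reinforcer_time: float, x: float) -> bool:
    """FI-X: a response is reinforced only if at least X time units
    have passed since the last reinforcer."""
    return response_time - last_reinforcer_time >= x


def variable_ratio_requirements(x: int, n: int, rng: random.Random) -> list:
    """VR-X: n response requirements that vary from reinforcer to reinforcer
    but average about X (uniform on 1..2X-1 is an assumption)."""
    return [rng.randint(1, 2 * x - 1) for _ in range(n)]


# 20 responses on FR5: count how many of them are reinforced
print(sum(fixed_ratio(i, 5) for i in range(1, 21)))
```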

Learned Helplessness

Idea that unavoidable aversive events impair performance on future avoidable aversive events

Uncontrollable events → impair future learning

failure to act even when escape is possible

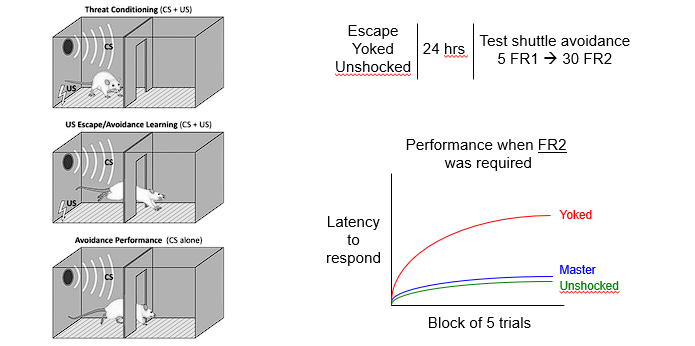

Learned Helplessness: Experiment (Maier, Overmier, Seligman)

Master (escapable shock)

Yoke (inescapable shock)

Unshocked

Learned Helplessness: Signal Active Avoidance

3 groups:

Escape → can escape

Yoked → cannot escape

Unshocked

Findings:

After 24 hrs all groups were allowed to move to the side with no shock

The yoked group took the longest to learn the second time

Learned helplessness hypothesis

Causes motivational and associative deficits

Related to Julian Rotter’s locus of control

→ Internal: “I believe that I can control my own future”

→ External: “My life is directed by forces outside of my control”

Contributes to common psychiatric disorders, including anxiety, depression and PTSD

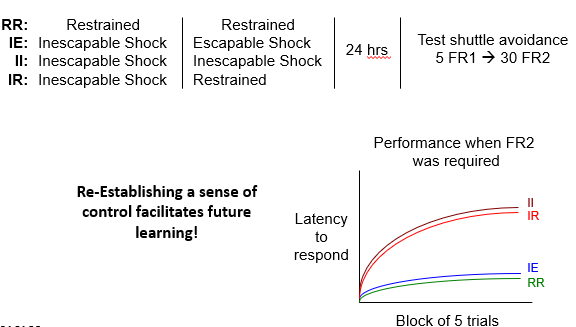

Learned Helplessness: Behavioral Therapy

Groups:

IR = Inescapable shock → then restrained

IE = Inescapable shock → then escapable

RR = Restrained → Restrained (control)

II= Inescapable → Inescapable

Key Points:

Animals that experience control again → start responding normally + escape faster + show recovery

LH is NOT permanent!

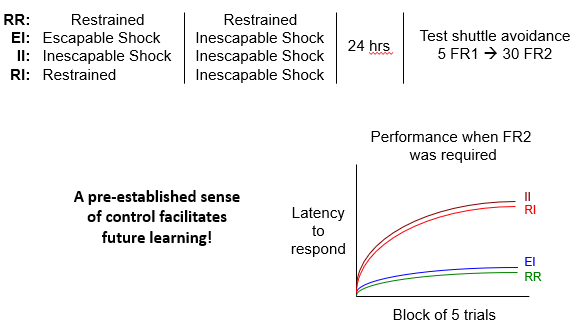

Learned Helplessness: Behavioral Immunization

Groups:

EI = Escapable → Inescapable

II = Inescapable only

RI = control

What happens:

Animal gets escapable shock (ES) → learns “I have control”

Later gets inescapable shock (IS) → no control is possible

Findings:

Animals with prior control still try to escape + resist helplessness

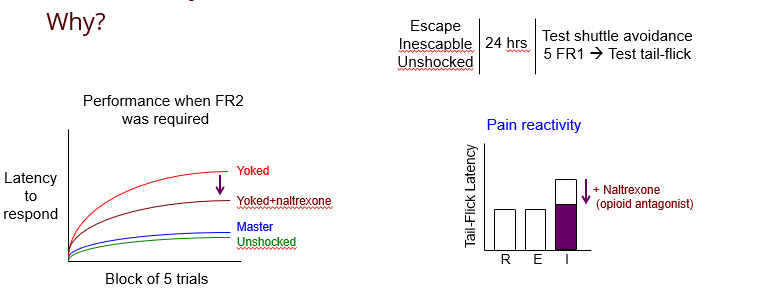

Learned Helplessness: Endogenous Opioids

Learned helplessness can be conditioned in most mammalian species

But Why? Experiment:

Groups:

Escapable shock → Master

Inescapable shock → Yoked

Unshocked control

Then, measure tail flick latency (pain sensitivity test)

Findings:

Normal Reaction: w/o Naltrexone

After inescapable shock → brain releases endogenous opioids

Animals feel less pain so less likely to learn.

Reaction with Naltrexone:

Naltrexone blocks the endogenous opioids

Pain is felt normally → more likely to learn.

Response-Outcome Contiguity is Key

Organisms are always behaving in some way!

Example: Dog training

Dog sits on command:

Receives treat immediately

→ Dog associates sitting with treat

Dog poops in house while owner is away:

Dog walks around, eats food, sleeps, goes on abt its day

Dog greets owner upon return, owner yells at dog about poop

→ Dog learns the wrong lesson - if there is a long delay, it becomes harder to identify which behavior is responsible

Exceptions to the Importance of Contiguity

Secondary (Conditional) Reinforcers

Marking Stimuli

Secondary (Conditional) Reinforcers

Something that is not inherently rewarding on its own becomes reinforcing through association with a primary reinforcer

ex: gold stars in classrooms

Why this matters for contiguity:

secondary reinforcers can be delivered immediately, even when the primary reward is delayed

child does something good → gold star → gold star gets exchanged for treasure chest

Gold star preserves the behavioral connection by bridging the time gap.

Marking Stimuli

A brief cue that identifies the target response from among the many behaviors occurring around it.

brief, auditory or visual

not necessarily valued

Ex: Shaping activity

When the organism emits a closer approximation to the desired behavior, the trainer may use a click or other brief marker. This helps distinguish the correct response from other recent actions.

Instrumental Behavior: Goal Directed or Habitual?

Typically begins at goal-directed and becomes habitual over time

Goal-Directed Behavior

A behavior is goal-directed if it is controlled by the current value of the outcome.

organism represents outcome

organism cares about whether that outcome is still desirable

organism uses that valuation to guide responding

Habitual Behavior

A behavior is habitual if it continues even when the outcome is no longer valued.

That means:

the response has become more automatic

the behavior is less sensitive to the current worth of the outcome

action may be controlled more by stimulus-response history than by outcome evaluation

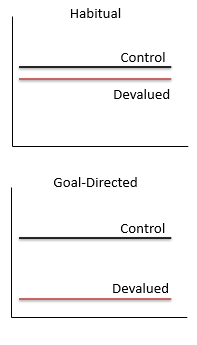

Reinforcer Devaluation

Similar to US devaluation

Procedure:

Establish an R-O contingency

→ ex: lever press → sucrose

Devalue the outcome

→ ex: sucrose paired with LiCl (illness/nausea) or sucrose left alone as control

Test whether animal still performs the response

Findings:

If the animal reduces lever pressing after sucrose has been devalued, the behavior was goal-directed.

If animal keeps pressing despite devaluation, the behavior is habitual.

Feedback Functions

A feedback function describes how changes in response rate affect the rate of reinforcement

In other words: if the organism responds faster, how much more reinforcement does it get?

Ratio Schedules: Feedback Functions

Ratio Schedules produce more responding than interval schedules

On a ratio schedule, reinforcement depends directly on the number of responses, so → more responses = more rewards

This means the extra effort is profitable

Interval schedules: Feedback Functions

Interval schedules reach an asymptote earlier than ratio feedback functions

On an interval schedule, reinforcement depends mainly on time passing. Once the interval has passed, the NEXT response may be rewarded, but responding faster during the interval does not increase the reward rate indefinitely.
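
The contrast between the two feedback functions can be sketched numerically. The Python example below is a simplified model under stated assumptions: rates are per minute, a ratio schedule pays one reinforcer per X responses, and on an interval schedule each reinforcer takes the interval plus the average wait for the next response (taken as 1 / response rate, which assumes randomly timed responses). The function names are hypothetical.

```python
def ratio_feedback(response_rate: float, x: float) -> float:
    """Reinforcers per minute on a ratio schedule that requires X responses each."""
    return response_rate / x


def interval_feedback(response_rate: float, interval: float) -> float:
    """Approximate reinforcers per minute on an interval schedule.
    Each reinforcer costs the interval plus the mean wait for the next response."""
    return 1.0 / (interval + 1.0 / response_rate)


# Ratio payoff keeps growing with response rate; interval payoff levels off
# below 1 / interval no matter how fast the organism responds.
for rate in (1, 10, 100):
    print(rate, ratio_feedback(rate, 5), round(interval_feedback(rate, 1.0), 3))
```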

Concurrent Schedules of Reinforcement

A concurrent schedule exists when the organism has 2 response options available at the same time, and each leads to reinforcement according to its own schedule.

Concurrent Schedules: Example

Lever A: gets a large pile of food, but on a 10-minute fixed interval schedule

Lever B: single pellet, but on a 5:1 fixed ratio schedule

What the organism must evaluate:

amount of reward

schedule structure

delay

work requirement

A bigger reward is not always better if it is available less often or less directly. A smaller reward may attract more responding if it is easier or more frequently obtainable.

The Matching Law

If:

the response alternatives require similar effort

switching between them is easy

Then:

the distribution of responses is directly proportional to the rate of reinforcement received for that behavior, esp when multiple options are available
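
For two options, the matching law is usually written as B1 / (B1 + B2) = R1 / (R1 + R2), where B is the response rate and R is the reinforcement rate on each option. A small Python sketch of that relation is below; the function name and the example numbers are illustrative assumptions.

```python
def predicted_response_share(r1: float, r2: float) -> float:
    """Strict matching: the share of responses on option 1 equals
    option 1's share of the reinforcers, R1 / (R1 + R2)."""
    return r1 / (r1 + r2)


# Option 1 pays 30 reinforcers per hour, option 2 pays 10 per hour
print(predicted_response_share(30, 10))
```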

Generalized Matching Law

OG matching law works only under ideal conditions — this is used when not under those conditions

The generalized matching law takes differences in effort, reinforcer strength, and the cost of switching from one response to the other into account
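
A common way to write the generalized matching law is B1 / B2 = b * (R1 / R2)^s, where b is a bias term (for example, one response takes less effort) and s is sensitivity to the reinforcement ratio. The Python sketch below assumes that form; the parameter names and values are illustrative, and b = 1 with s = 1 gives back the original matching law.

```python
def generalized_response_ratio(r1: float, r2: float,
                               bias: float = 1.0, sensitivity: float = 1.0) -> float:
    """Predicted ratio of responses B1 / B2 = bias * (R1 / R2) ** sensitivity."""
    return bias * (r1 / r2) ** sensitivity


# Ideal conditions (strict matching) vs. reduced sensitivity (undermatching)
print(generalized_response_ratio(40, 10))
print(generalized_response_ratio(40, 10, sensitivity=0.5))
```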

Concurrent Chain Schedules

Lock the organism’s initial choice in for the remainder of a trial

Choice Link: Organism can select either option

Terminal Link: Once choice is made, the choice is locked in + only the selected choice is available for the rest of the trial

Drive-Reduction Theory: Clark Hull

Reinforcers satisfy biological needs, and they do so by reducing internal drives.

Hull’s View

If an organism has a physiological deficit that creates a drive, a reinforcer works bc it reduces that drive

ex: food is reinforcing → reduces hunger, water → reduces thirst

Problems with Drive-Reduction Theory

Long and Implausible list of drives

DR can’t explain all of reinforcement → you’d have to invent drives for many behaviors

Ex: Monkeys working to watch a toy train → not reducing any real biological drive

Learning can occur without Drive Reduction

Ex: Rats learn to seek saccharin bc it’s sweet even tho no nutritional value

if reinforcement required reduction of physiological need, then this should not be a strong reinforcer bc it does not solve caloric deficiency

Premack Principle

When 2 behaviors are available, the more probable behavior can reinforce the less probable behavior, but the less probable behavior will not reinforce the more probable behavior.

Ex: First do your homework, then you get to watch TV

But a reinforcer is not universally reinforcing; its reinforcing power depends on the organism’s existing preference structure

Premack vs. Hull

Differences:

Premack didn’t designate a special class of events as reinforcing

Problems with Premack’s Approach

Difficult to quantify time spent performing individual behaviors

How to quantify drinking behavior?

How do we determine which behavior is probable?

Early View: NB Mechanisms of Instrumental learning

Believed that all learning occurred via common mechanism

Modern View: NB mechanism of Instrumental Learning

Multiple systems underlie different types of memory

Learning in a T-maze

Early in training: Place learning (spatial/explicit)

Lesioning HC disrupts place learning, facilitates habit learning

Late in training: Response learning (habit/implicit)

Lesioning the Basal ganglia disrupts habit learning

Basal Ganglia: Structures

Striatum and Substantia Nigra

Striatum: Structures

Globus Pallidus

Ventral Pallidum

Substantia Nigra: Structures

Subthalamic nucleus

Ventrolateral nucleus of the thalamus

Striatum: Function

involved in motivation, reinforcement + planning movements

it connects: reward → action

receives dopaminergic input from the substantia nigra

2 key receptors

Substantia Nigra: Function

Produces dopamine

Neurons synapse onto neurons in the striatum

Habit System

Amygdala

Basolateral nucleus encodes reward magnitude and valence

Striatum

Integrates positive and negative outcomes with behavior over multiple trials

Habit learning is incremental → more trials = stronger habit

Executive Control

Executive control is making choices after weighing things like immediate reward, future reward and punishment to make the best decision.

organisms need to do more than just represent the reward

Orbital Frontal Cortex

allows the organism to weigh the relative value of alternative outcomes

Value: Estimate of relative gain both now and in the future

Delay Discounting

Value decreases as delay increases

can be modeled in both animals and humans

Lesions of OFC → bias behavior to immediate reward (in rats)

Increased limbic activity → immediate reward (in humans)

OFC activity → delayed reward

People with substance-use disorders tend to be biased towards immediate rewards
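
One widely used way to model "value decreases as delay increases" is hyperbolic discounting, V = A / (1 + k * D), where A is the reward amount, D is the delay, and k is how steeply the individual discounts. The notes do not name a specific model, so the Python sketch below is an assumption-laden illustration; the function name, the amounts, and the k values are hypothetical.

```python
def discounted_value(amount: float, delay: float, k: float = 0.1) -> float:
    """Hyperbolic discounting: present value of a reward delivered after `delay`.
    A larger k means steeper discounting (stronger bias toward immediate reward)."""
    return amount / (1.0 + k * delay)


# Choice between 50 now and 100 after a delay of 30, for a shallow and a steep discounter
for k in (0.01, 0.5):
    now, later = discounted_value(50, 0, k), discounted_value(100, 30, k)
    print(k, "waits" if later > now else "takes immediate reward")
```

Under this sketch, a bias toward immediate reward corresponds to a larger k.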

Direct vs Indirect Pathways

Two classes of striatal projection neuron

distinguished by type of dopamine receptor (D1 v D2)

D1 vs D2

D1:

D1-receptor-expressing neurons form the direct pathway

involved in reward learning and initiating action

D2:

D2-receptor-expressing neurons form the indirect pathway

involved in punishment learning and inhibiting action

Direct v Indirect PW: Experiment

Set- Up:

2 groups of rats implanted w a mechanism to stimulate either (1) the direct pathway (D1 medium spiny neurons) or (2) the indirect pathway (D2 medium spiny neurons)

Both groups were presented with 2 levers:

Lever 1: engaged stimulation

Lever 2: no stimulation

Findings:

Direct pathway mice worked for stimulation

Indirect pathway mice avoided stimulation

Conclusion:

D → encodes reward

I → controls punishment

Both involve dopamine

Direct vs Indirect: CPP

Another experiment stimulated the direct and indirect pathways in a conditioned place preference (CPP) task:

Results:

Direct pathway rats preferred stimulation side

Indirect pathway rats avoided the stimulation side

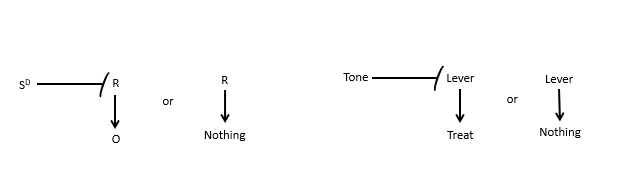

Discriminative Stimulus

indicates whether the R-O relation is in effect

Basically → a stimulus that signals whether a response will produce an outcome

SD kind of sets the conditions under which the response is effective

Ex:

Tone on → lever gives treat

Tone off → lever press gives nothing

Here the animal is not just learning Lever press → food

It is learning:

Tone present: lever press → food

Tone absent: lever press → nothing

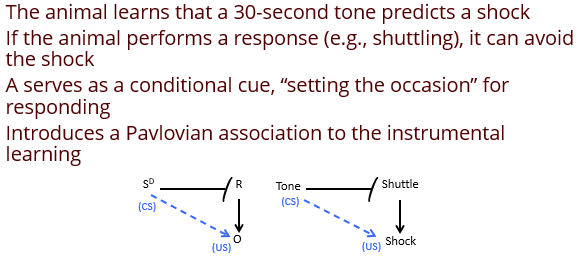

Avoidance learning (SD)

Avoidance learning is not purely instrumental and not purely Pavlovian. It is a hybrid:

The cue predicts danger, which is Pavlovian.

The cue signals when the instrumental response can successfully avoid the outcome.

Why do animals exhibit a conditional avoidance response?

Hypothesis: It is reinforced by the non-occurrence of shock.

Two-Factor Theory of Avoidance

Avoidance is explained by 2 learning processes, one pavlovian and one instrumental.

Factor 1: Pavlovian

Early in training:

Tone (CS) paired w shock (US)

Tone → fear

Animal learns:

Tone predicts shock

Tone becomes aversive bc it produces conditioned fear

Factor 2: Instrumental fear reduction

Later in training:

animal shuttles when tone comes on

shuttling turns off tone or prevents shock

reduces fear (reinforcer)

The reinforcer is no longer “non-occurrence of shock” in the abstract.

Instead, the theory says the true reinforcer is:

reduction of conditioned fear

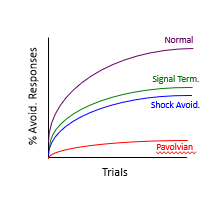

Problems with 2 factor theory: Kamin Experiment

Prediction of 2FT → if fear reduction is the reinforcer, then ending the tone should be enough

Experiment Conditions:

Normal Avoidance

Signal Termination only

Shock avoidance only

Pavlovian

Results:

Normal = best learning

Signal termination = weaker than expected

Shock avoidance = better than expected

Prediction does not always match reality

Other Problems with 2 Factor Theory

Presenting the CS alone should extinguish conditional fear

Animals show no true signs of fear to the CS under normal circumstances

→ Fear expression returns IF the animal is blocked from performing the avoidance response

A higher-magnitude shock should increase avoidance performance BUT it actually decreases performance

Solutions to 2 Factor Theory

View the avoidance response as a conditional inhibitor

Differentiate between different response patterns to aversive outcomes

Add a third phase to the two-factor theory

Low-Imminence threat

Pre- encounter behavior

Anxiety

environmental stimuli indicate a predator could be near

High-imminence threat

Post encounter behavior

Fear

Predator is near

Fight or Flight

Circa-Strike behavior

Panic

Predator is attacking

Two-Way Shuttle-Box Active Avoidance

Involves the animal learning to shuttle back and forth to avoid shock in response to a tone

Features:

Active → fear behaviors (defensive freezing) ineffective for successful avoidance

Two-Way → Neither side of the chamber is considered safe

One-Way Avoidance

Involves a shuttle box with 2 very different chambers. Measures latency to enter the other chamber

Features:

Can be active or passive:

Active → starts on dangerous side of chamber

Passive → starts on safe side of chamber

One Way → one side of the chamber is always safe

Platform Mediated Avoidance

Involves a food deprived animal given a lever to press to earn food pellets. Animal must learn to stop pressing for food and escape to safe platform when tone plays.

Features:

Active or Passive strat

Involves multiple schedules of reinforcement

Active avoidance learning involves suppressing …

freezing → primary defensive behavior in mammals

Freezing depends on…

2 regions of the Amygdala:

Basolateral Amygdala

involved in emotional associations (fear)

Central Amygdala

involved in fight or flight behaviors (freezing)

Avoidance depends on…

Basolateral Amygdala → emotional associations

PFC → suppressing freezing

Striatum → motivated action (avoidance)

Dorsal medial → Goal-directed avoidance

Dorsal lateral → habitual avoidance

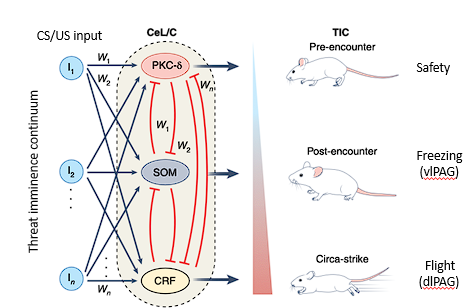

Basolateral Amygdala neurons..

Different types mediate diff levels of threat imminence

PKC → Pre-encounter → Safety

SOM → Post-encounter → Freezing

CRF → Circa-strike → Fight or flight

Stimulus Control

ability of a given stimulus to control behavior

Ex: Like a traffic light

Color: High Stimulus Control

Location/Shape: low stimulus control

Stimulus Dimensions

One aspect of a particular stimulus

color, location, shape, pitch, texture etc.

Stimulus Generalization

occurs when responding to 1 stimulus is also observed when a dif stimulus is presented

common when a stimulus dimension has low stimulus control

Stimulus Discrimination

occurs when the organism responds to 1 stimulus but not another

common when a stimulus dimension has high stimulus control

Differential Responding

a strategy where changes to 1 stimulus dimension are made, then you study the differences in responding

Stimulus Generalization Gradient

Extent to which responding changes across stimulus variations

Factors impacting stimulus control

Sensory capacity - can organism perceive stimulus?

Sensory orientation - is organism properly oriented to perceive stim?

Stimulus intensity - is stimulus bright/loud enough?

Motivation - is organism motivated to attend to the stimulus?

Stimulus Filter

bias toward visual or auditory cues during specific motivational states

Stimulus Discrimination Training