Theorems, Propositions, and Facts

60 Terms

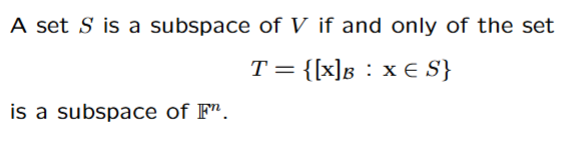

For any subspaces S and T of the same vector space V, S ∩ T is also a subspace of V.

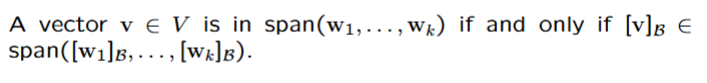

If v1, ..., vp are in a vector space V, then Span{v1, ..., vp} is a subspace of V.

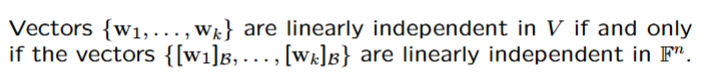

A set S of two or more vectors in a vector space V is linearly dependent if and only if at least one vector in S can be expressed as a linear combination of the other vectors in S.

A set containing the zero vector is linearly dependent.

A set containing just one vector v is linearly independent if and only if v does not equal 0.

A set of two vectors is linearly dependent if and only if one is a scalar multiple of the other.
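The dependence facts above can be checked numerically for vectors in R^n. The following is a minimal sketch, not a method from the course: it assumes the vectors are given as lists of integers or fractions, and the helper names `rank` and `is_linearly_dependent` are hypothetical. A set is dependent exactly when the rank of the matrix with those vectors as rows is smaller than the number of vectors.

```python
from fractions import Fraction


def rank(vectors):
    """Rank of the matrix whose rows are `vectors`, via exact row reduction."""
    rows = [[Fraction(x) for x in v] for v in vectors]
    ncols = len(rows[0]) if rows else 0
    r = 0  # number of pivot rows found so far
    for col in range(ncols):
        # Look for a row at or below position r with a nonzero entry in this column.
        pivot = next((i for i in range(r, len(rows)) if rows[i][col] != 0), None)
        if pivot is None:
            continue
        rows[r], rows[pivot] = rows[pivot], rows[r]
        # Clear the entries below the pivot.
        for i in range(r + 1, len(rows)):
            factor = rows[i][col] / rows[r][col]
            rows[i] = [a - factor * b for a, b in zip(rows[i], rows[r])]
        r += 1
    return r


def is_linearly_dependent(vectors):
    """True when the vectors (hypothetical helper, rows in R^n) are dependent."""
    return rank(vectors) < len(vectors)
```

For example, `is_linearly_dependent([[1, 2], [2, 4]])` is true because the second vector is twice the first, while `is_linearly_dependent([[3, 1]])` is false because a single nonzero vector is independent.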

Every vector space has a basis.

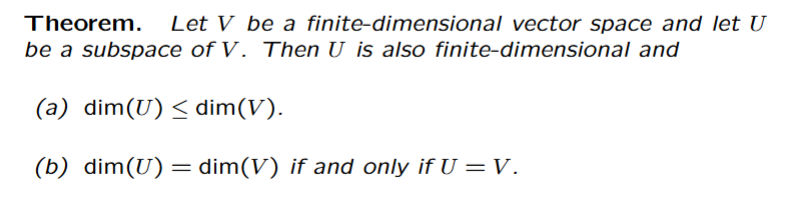

A basis of a vector space is not unique, but any two bases for V contain the same number of elements.

If that number of elements is a nonnegative integer n, then we say the dimension of V is n, denoted dim(V) = n, and that V is finite-dimensional.

If V does not have a finite spanning set, then V is infinite-dimensional.
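As an illustration of non-uniqueness (an example chosen here, not taken from the cards), R^2 has at least these two different bases, and both contain exactly two vectors:

```latex
\mathcal{B}_1 = \left\{ \begin{bmatrix} 1 \\ 0 \end{bmatrix},
                        \begin{bmatrix} 0 \\ 1 \end{bmatrix} \right\},
\qquad
\mathcal{B}_2 = \left\{ \begin{bmatrix} 1 \\ 1 \end{bmatrix},
                        \begin{bmatrix} 1 \\ -1 \end{bmatrix} \right\},
\qquad
\dim(\mathbb{R}^2) = 2 .
```

Neither vector in the second set is a scalar multiple of the other, so the set is linearly independent, and two independent vectors in R^2 span it.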

The empty set is a basis for the zero subspace.

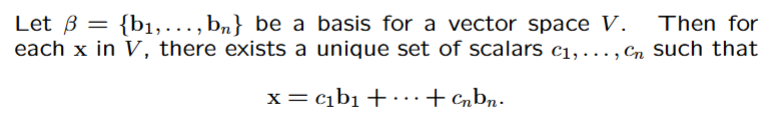

The Spanning Set Theorem

A finite basis can be constructed from a finite spanning set of vectors by discarding vectors which are linear combinations of preceding vectors in the set.
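The discarding procedure can be sketched in code for vectors in R^n. This is only an illustration under stated assumptions: vectors are lists of integers or fractions, and the names `rank` and `basis_from_spanning_set` are hypothetical. A vector is kept only if adding it raises the rank of the vectors kept so far, which is the same as saying it is not a linear combination of the preceding vectors.

```python
from fractions import Fraction


def rank(vectors):
    """Rank of the matrix whose rows are `vectors`, via exact row reduction."""
    rows = [[Fraction(x) for x in v] for v in vectors]
    ncols = len(rows[0]) if rows else 0
    r = 0  # number of pivot rows found so far
    for col in range(ncols):
        pivot = next((i for i in range(r, len(rows)) if rows[i][col] != 0), None)
        if pivot is None:
            continue
        rows[r], rows[pivot] = rows[pivot], rows[r]
        for i in range(r + 1, len(rows)):
            factor = rows[i][col] / rows[r][col]
            rows[i] = [a - factor * b for a, b in zip(rows[i], rows[r])]
        r += 1
    return r


def basis_from_spanning_set(vectors):
    """Walk the list in order, discarding any vector that is a linear
    combination of the vectors already kept (zero vectors are discarded too)."""
    basis = []
    for v in vectors:
        if rank(basis + [v]) > len(basis):
            basis.append(v)
    return basis


spanning = [[1, 0, 0], [2, 0, 0], [0, 1, 0], [1, 1, 0]]
basis = basis_from_spanning_set(spanning)
print(basis)  # [[1, 0, 0], [0, 1, 0]]
```

Here the second vector is a multiple of the first and the fourth is the sum of the first and third, so both are discarded. The result depends on the order of the list, which is consistent with bases not being unique.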

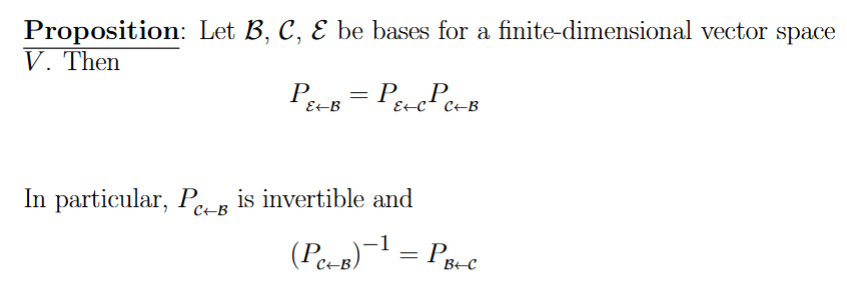

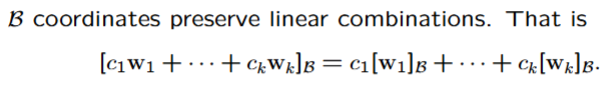

The Unique Representation Theorem

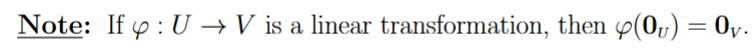

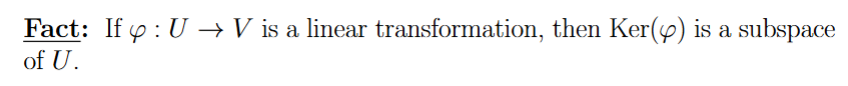

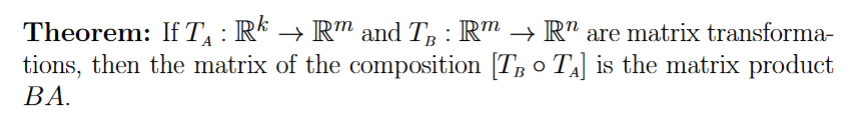

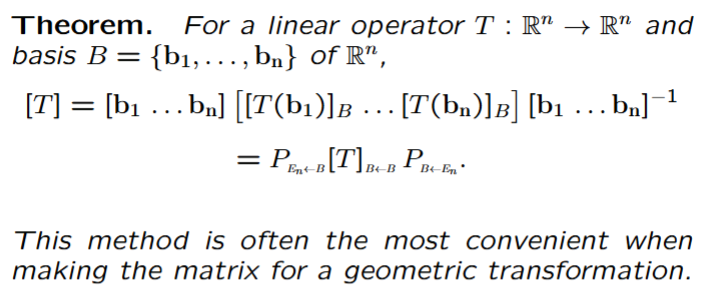

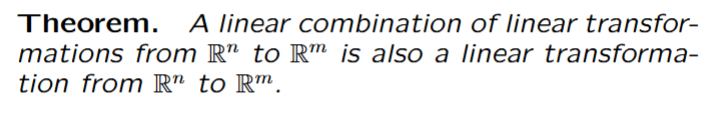

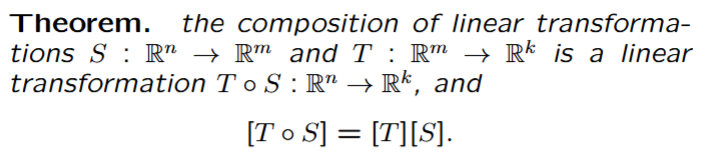

Combining Linear Transformations

Composition of Linear Transformations

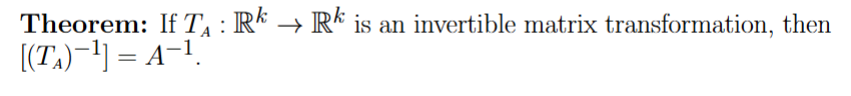

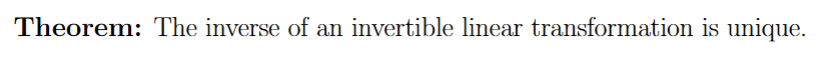

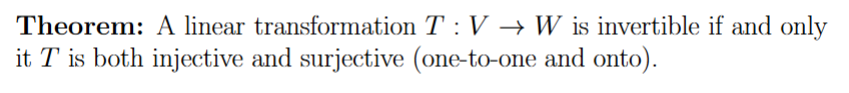

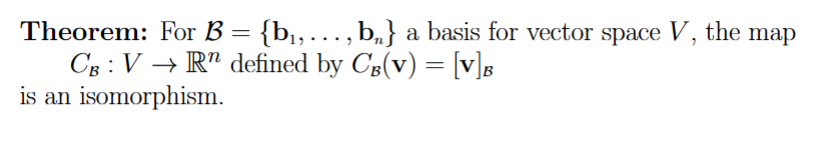

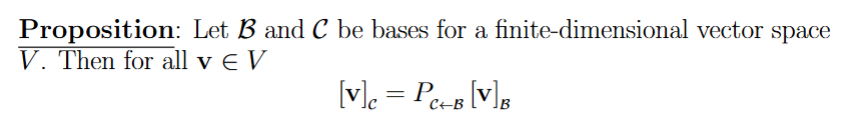

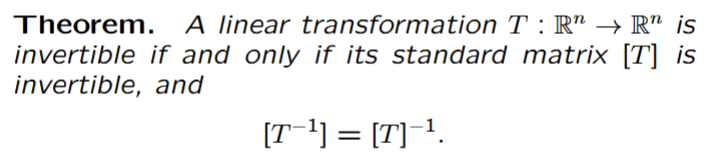

Invertible Linear Transformations

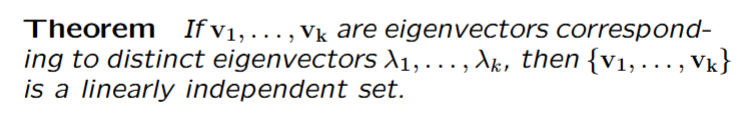

An eigenvector is, by definition, NONZERO.

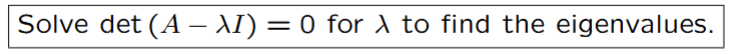

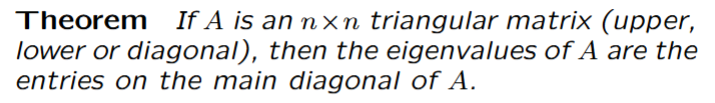

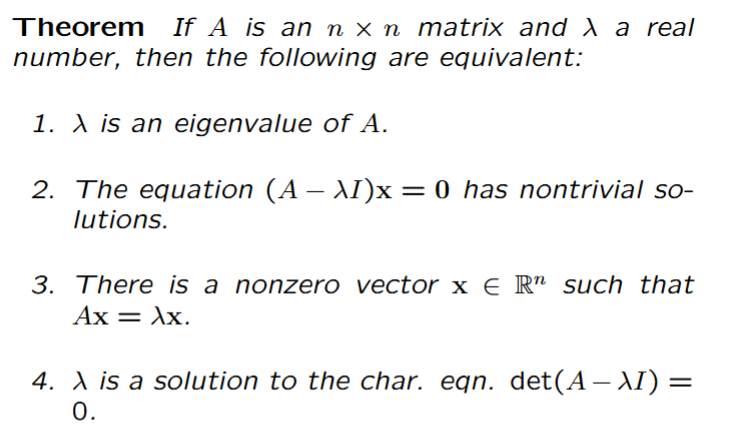

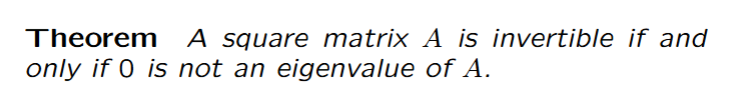

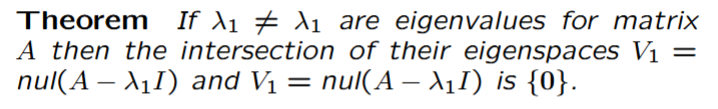

The roots of the characteristic equation of an n × n matrix are the eigenvalues of that matrix.

λ = 0 can be an eigenvalue of A, but the zero vector 0 ∈ R^n can never be an eigenvector of A!
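A small worked example may help with both points; the matrix below is chosen here for illustration and does not come from the cards. Its characteristic equation has the root 0, and the matching eigenvector is still a nonzero vector:

```latex
A = \begin{bmatrix} 1 & 2 \\ 2 & 4 \end{bmatrix},
\qquad
\det(A - \lambda I) = (1 - \lambda)(4 - \lambda) - 4
                    = \lambda^2 - 5\lambda
                    = \lambda(\lambda - 5) = 0
\;\Longrightarrow\; \lambda = 0 \ \text{or} \ \lambda = 5 .

\text{For } \lambda = 0: \quad
A \begin{bmatrix} 2 \\ -1 \end{bmatrix}
= \begin{bmatrix} 0 \\ 0 \end{bmatrix}
= 0 \cdot \begin{bmatrix} 2 \\ -1 \end{bmatrix} .
```

The eigenvalue is the scalar 0, while the eigenvector (2, -1) is nonzero. Having 0 as an eigenvalue is the same as A being singular.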

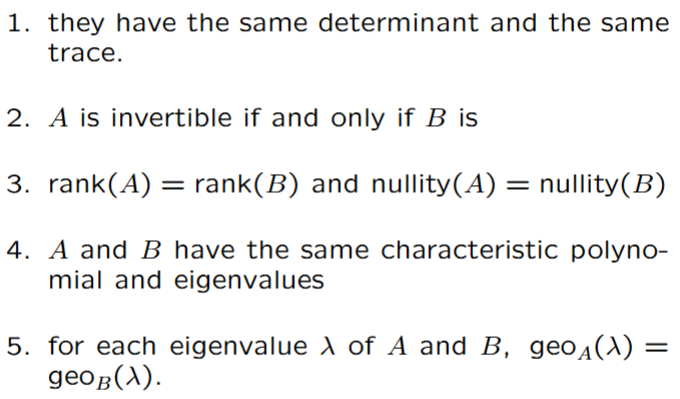

If A and B are similar matrices, then

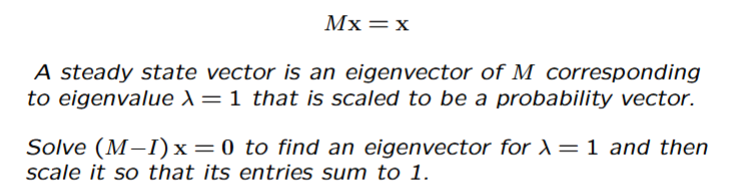

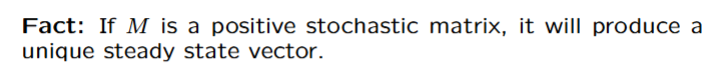

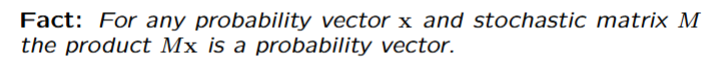

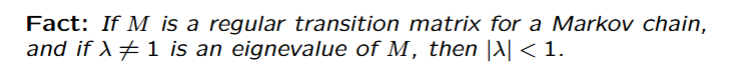

Finding a Steady State Vector