Lecture 19 - Spatial & Cognitive Maps I

What information do we use to navigate?

senses!

visual, auditory, sensory information, etc.

SENSORY INFO

prior experience, landmarks, maps

MAP

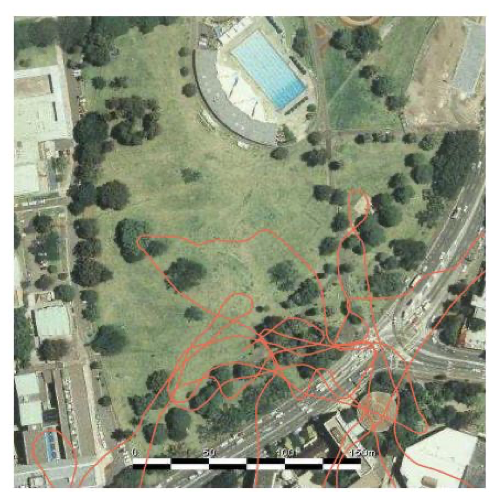

What were early robotics experiments that people did using motion data to estimate location?

simple robot that had to navigate a town park

it has a motor command to reach a new heading angle and move to reach a new location

robot has to do PATH INTEGRATION

it needs to integrate the signal over steps to estimate the trajectory

trying to estimate its location using only motion commands and internal sensors

Why is the path integration of the robot so inaccurate?

accumulating noise and errors will compound

Every step uses the previous (already slightly wrong) position and heading to compute the next step.

So error at time t → becomes larger error at time t+1 → becomes huge error by time t+1000

NO REFERENCE TO ANCHOR
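
The compounding of error can be illustrated with a minimal sketch of path integration. It assumes a hypothetical robot that receives (turn, distance) motor commands and that every executed turn and step carries a little Gaussian noise. The function name, noise levels and command sequence are illustrative assumptions, not details of the original experiment.

```python
import math
import random

def integrate_path(commands, heading_noise=0.0, step_noise=0.0, seed=0):
    """Dead-reckon a position from (turn, distance) motor commands.

    Noise is added to every turn and step, so each new estimate is built
    on an already slightly wrong position and heading.
    """
    rng = random.Random(seed)
    x, y, heading = 0.0, 0.0, 0.0
    path = [(x, y)]
    for turn, dist in commands:
        heading += turn + rng.gauss(0.0, heading_noise)  # heading error accumulates
        d = dist + rng.gauss(0.0, step_noise)            # step-length error
        x += d * math.cos(heading)
        y += d * math.sin(heading)
        path.append((x, y))
    return path

# Hypothetical command sequence: 1000 unit steps straight ahead.
commands = [(0.0, 1.0)] * 1000
true_path = integrate_path(commands)  # noise-free reference
noisy_path = integrate_path(commands, heading_noise=0.02, step_noise=0.05)

def error_at(step):
    """Distance between the reference and the noisy path at a given step."""
    (tx, ty), (nx, ny) = true_path[step], noisy_path[step]
    return math.hypot(tx - nx, ty - ny)

print(error_at(10), error_at(1000))
```

With these assumed noise levels the error after 1000 steps is far larger than after 10, because nothing in the loop ever anchors the estimate to an external reference.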

How can we reduce the amount of errors in the robot task?

use self-motion information and calibrate estimates to a global map of the environment

use landmarks! - measuring distance to landmarks

using visual landmarks to recalibrate yourself/map of environment allows for more precise navigation
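
A minimal 1-D sketch of landmark recalibration follows. It assumes a hypothetical systematic odometry bias per step as a stand-in for accumulated error, and an idealised, noise-free distance measurement to a landmark whose map position is known. All names and numbers are illustrative.

```python
def max_drift(n_steps, fix_every=None, bias=0.02):
    """Largest position error over a 1-D run.

    bias: hypothetical odometry error per step (e.g. wheel slip), standing in
    for the errors that accumulate during path integration.
    fix_every: if set, recalibrate against the landmark every that many steps.
    """
    landmark = 500.0          # landmark position on the global map (assumed known)
    true_x = est_x = 0.0
    worst = 0.0
    for t in range(1, n_steps + 1):
        true_x += 1.0         # actual movement
        est_x += 1.0 + bias   # self-motion estimate drifts a little each step
        if fix_every and t % fix_every == 0:
            offset = landmark - true_x   # measured distance to the landmark
            est_x = landmark - offset    # recalibrate the estimate to the map
        worst = max(worst, abs(est_x - true_x))
    return worst

print(max_drift(1000))                # self-motion only: error keeps growing
print(max_drift(1000, fix_every=20))  # with landmark fixes: error stays bounded
```

Without fixes the error grows with the number of steps. With a fix every 20 steps it can never exceed what accumulates between two fixes.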

What are the two types of information we use for accurate navigation?

self motion information

calibration estimates to a global map of the environment

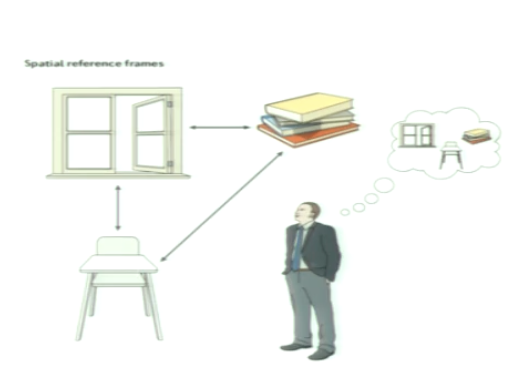

What are two types of space representations?

egocentric

allocentric

you need both for navigation!

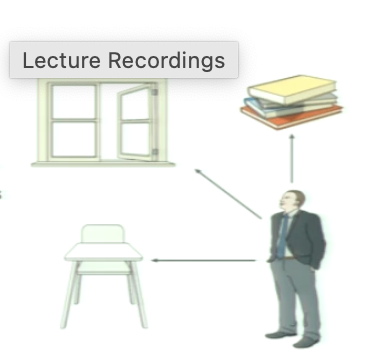

What are egocentric representations?

self-to-object

representation of space and objects relative to body position (self-centred)

What are allocentric representations?

object-to-object

representation of space and objects relative to location of other objects (world-centred)

you can move around the room and the distance between two objects doesn’t change

e.g. a map

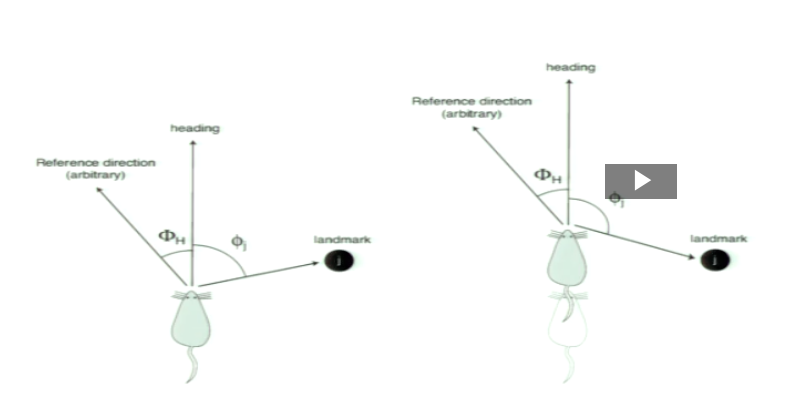

What is egocentric and allocentric bearing with respect to heading direction?

as rat moves with a constant heading, its egocentric bearing relative to a landmark changes while its allocentric bearing relative to a reference direction remains constant
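
The difference can be sketched numerically. The example assumes a hypothetical landmark at (5, 5), a rat walking along the x-axis, and angles measured in degrees from a reference direction along +x. The function names are illustrative.

```python
import math

def wrap(angle):
    """Wrap an angle in degrees to the range [-180, 180)."""
    return (angle + 180.0) % 360.0 - 180.0

def egocentric_bearing(pos, heading, landmark):
    """Direction to the landmark relative to where the head is pointing."""
    world_dir = math.degrees(math.atan2(landmark[1] - pos[1], landmark[0] - pos[0]))
    return wrap(world_dir - heading)

landmark = (5.0, 5.0)  # hypothetical landmark position
heading = 0.0          # constant allocentric heading (degrees from the reference direction)
track = [(0.0, 0.0), (5.0, 0.0), (10.0, 0.0)]

ego = [egocentric_bearing(p, heading, landmark) for p in track]
print(heading, ego)
```

The heading relative to the reference direction stays at 0° for the whole walk, while the egocentric bearing to the landmark sweeps from about 45° through 90° to about 135°.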

What type of representation is the tuning of V1 simple cells and their retinotopic organization relative to the viewer?

egocentric

it depends on where you are and which direction you are looking

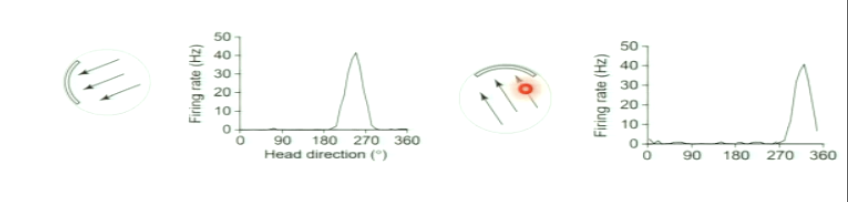

How did Taube et al. record head-direction cells in rats? What was the setup?

Rats freely explored a circular arena while researchers recorded neural activity from the postsubiculum

Visual cues on the arena walls served as stable reference landmarks

By combining neural recordings with the tracked head angle, researchers could determine which neurons fired at specific orientations

What did the experiment reveal about neural representation of orientation?

The recorded neurons fired only when the rat’s head was facing a specific direction (e.g., “north”), regardless of where the rat was in the arena

these “head-direction cells” function like an internal compass

can be in any location in box but facing a certain direction

they provide directional information independent of place or movement

What type of representation are the head direction cells?

allocentric

not dependent on specific location of animal

Where are head direction cells? What are they?

recorded in the postsubiculum

they fire as a function of the animal’s head direction in the horizontal plane

independent of animal’s behaviour, location or trunk position

high firing for an orientation

Gaussian tuning where HD cells prefer one direction

What does the Gaussian function of HD cells refer to?

the directional heading at which the peak of the Gaussian function is located is referred to as the cell’s preferred firing direction

This shows HD cells encode orientation, not position
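
A minimal sketch of such a tuning curve is shown here. The peak rate and tuning width are hypothetical values, and the function name is illustrative. The angular difference is wrapped so that 350° and 10° count as 20° apart.

```python
import math

def hd_firing_rate(head_dir, preferred_dir, peak_rate=40.0, width=20.0):
    """Gaussian tuning over the circular difference between two angles (degrees).

    peak_rate (spikes/s) and width (degrees) are illustrative assumptions.
    """
    diff = (head_dir - preferred_dir + 180.0) % 360.0 - 180.0  # wrap to [-180, 180)
    return peak_rate * math.exp(-0.5 * (diff / width) ** 2)

preferred = 10.0  # hypothetical preferred firing direction
for direction in (10.0, 30.0, 350.0, 190.0):
    print(direction, round(hd_firing_rate(direction, preferred), 2))
```

The function takes no position argument: in this sketch, as in the cards above, the rate depends only on head direction.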

What do HD cells show in terms of orientation tuning?

they have orientation tuning with respect to the environment

What happens to a head-direction cell’s preferred firing direction when a visual cue in the environment is rotated?

The preferred firing direction rotates by the same amount as the cue. This shows that HD cells anchor their directional tuning to external environmental landmarks

How was the experiment designed to test head direction (HD) cells in a visually ambiguous environment?

Circular chamber with alternating black and white stripes of identical width

Created a visually symmetric environment with no single landmark indicating direction

Rat normally started facing a fixed “east” stripe (start position 1)

In probe trials, the rat entered from different start positions (2, 3, 4)

Goal: test whether HD tuning depends on initial orientation when visual cues are ambiguous

allocentric representation that is relative to information you have in room

What were the results when the rat’s starting orientation was varied in this symmetric environment?

HD cell’s preferred firing direction rotated with the rat’s starting orientation

A shift of the start position by 90° produced a matching 90° shift in the tuning curve

Shows HD representation can anchor to initial orientation when visual cues can’t provide unique directional information

Head direction cells provide an allocentric representation of orientation but they are invariant to?

invariant to position/location

doesn’t matter where you are in the room, depends on orientation

What do head direction (HD) cells represent, and what are they not sensitive to?

represent allocentric head orientation (a specific direction in the environment)

invariant to translation (do not encode x–y location or physical position in space)

What are tetrode recordings and how do they work?

multi-electrode recordings

A tetrode has 4 closely spaced electrodes that record the same neurons across channels.

Each neuron produces a distinct amplitude pattern across the 4 channels.

These patterns can be clustered to identify which spikes come from which neuron (spike sorting).

Enables simultaneous recording of many neurons
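
The clustering step can be sketched with a toy example. It assumes two hypothetical neurons with made-up peak amplitudes on the four channels and uses a bare two-cluster k-means. Real spike sorting typically uses richer waveform features and more careful clustering, so this is an illustration only.

```python
import random

def dist2(a, b):
    """Squared Euclidean distance between two amplitude vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def mean(points):
    """Per-channel mean of a list of amplitude vectors."""
    return [sum(col) / len(points) for col in zip(*points)]

def kmeans2(points, n_iter=10):
    """Two-cluster k-means; returns a 0/1 label for every spike."""
    c0 = points[0]
    c1 = max(points, key=lambda p: dist2(p, c0))  # farthest spike seeds cluster 1
    labels = []
    for _ in range(n_iter):
        labels = [0 if dist2(p, c0) <= dist2(p, c1) else 1 for p in points]
        c0 = mean([p for p, lab in zip(points, labels) if lab == 0])
        c1 = mean([p for p, lab in zip(points, labels) if lab == 1])
    return labels

rng = random.Random(0)
# Hypothetical peak amplitudes (microvolts) of two neurons on the 4 channels.
templates = {"A": [100, 40, 20, 10], "B": [15, 30, 90, 60]}
truth = [rng.choice("AB") for _ in range(200)]
spikes = [[amp + rng.gauss(0, 3) for amp in templates[n]] for n in truth]

labels = kmeans2(spikes)
print(sorted(set(zip(truth, labels))))  # which label each neuron received
```

Because each neuron has its own amplitude pattern across the four channels, its spikes fall in a separate cluster even though the same electrodes record both neurons.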

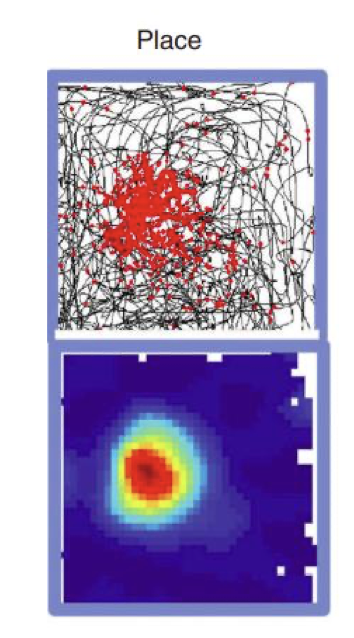

What are hippocampal place cells?

place cells fire selectively at one (or a few) locations in the environment

representation of a location in allocentric coordinates

anchoring to x-y location (does not matter what direction animal is facing!)

Are place cell representations specific to an environment?

yes they can be specific to an environment

remapped across context

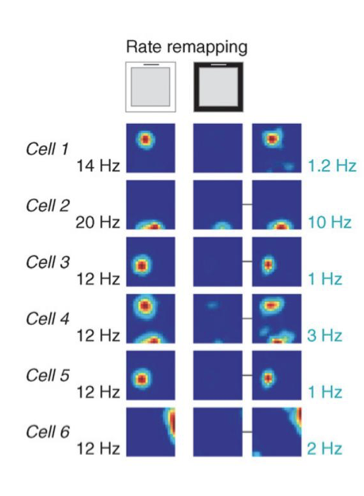

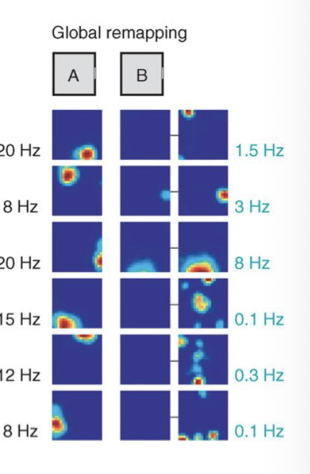

What does “remapping of place cells across environments” mean?

Place cells fire in specific locations, but their place fields depend on environmental context

When a key cue in the environment changes (e.g., white card → black card), a place cell may:

Shift its place field to a new location

Change its firing rate

Activate or deactivate entirely

This shows place cells form distinct spatial maps for different contexts, even in the same physical space (e.g. same dimensions)

What are the 2 types of remapping?

rate remapping

global remapping

What is rate remapping in place cells?

Place cell keeps the same place field (fires in the same location).

Only the firing rate changes (stronger/weaker) between contexts.

Happens when the environment stays the same, but non-spatial cues change (e.g., colour of cue card).

Indicates that the cell encodes contextual or sensory features without changing spatial selectivity

What is global remapping in place cells?

Place cell completely changes its place field:

Fires in a different location, or

Stops firing, or

Starts firing in a new place.

Happens when the environment itself changes (e.g., Room A vs Room B).

Indicates that the hippocampus forms distinct spatial maps for different environments.

Both firing location and rate are altered
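
The distinction between the two types can be written as a rough rule of thumb. The sketch assumes one cell's firing-rate map is given as a list of rates per spatial bin in each context. The thresholds `loc_tol` and `rate_tol` are hypothetical, not criteria taken from the lecture.

```python
def classify_remapping(map_a, map_b, loc_tol=1, rate_tol=0.2):
    """Compare one cell's rate maps (rate per spatial bin) across two contexts.

    loc_tol: largest peak shift (in bins) still counted as the same place field.
    rate_tol: largest relative change in peak rate still counted as unchanged.
    Both thresholds are illustrative assumptions.
    """
    peak_a, peak_b = max(map_a), max(map_b)
    if peak_a == 0 or peak_b == 0:
        # The cell is silent in at least one context: field switched on or off.
        return "global" if peak_a != peak_b else "none"
    loc_a, loc_b = map_a.index(peak_a), map_b.index(peak_b)
    if abs(loc_a - loc_b) > loc_tol:
        return "global"  # place field moved to a different location
    if abs(peak_a - peak_b) / max(peak_a, peak_b) > rate_tol:
        return "rate"    # same location, different firing rate
    return "none"

context_a = [0, 1, 8, 1, 0]
print(classify_remapping(context_a, [0, 0.5, 3, 0.5, 0]))  # same field, weaker firing
print(classify_remapping(context_a, [3, 0, 0, 0, 0]))      # field moved
print(classify_remapping(context_a, [0, 0, 0, 0, 0]))      # cell stopped firing
```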

How do place cells let us decode an animal’s location? Are they accurate to the animal’s actual position in space?

yes, the decoded position is usually accurate (when it does not match, the decoded position often lies where the animal goes next)

Each place cell fires in a specific location → together they form a spatial code

Record many cells (tetrode recording)→ match firing pattern to the most likely position

Output is a probability map showing where the animal is most likely located
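
One common way to turn firing patterns into a probability map is a Bayesian decoder. The sketch assumes a hypothetical 1-D track, Gaussian place fields, independent Poisson spiking and a flat prior over position. None of these specifics come from the lecture.

```python
import math

positions = [float(i) for i in range(101)]  # candidate positions in cm (hypothetical 1 m track)
centers = [10.0 * k for k in range(11)]     # place-field centres of 11 hypothetical cells

def expected_counts(x, peak=10.0, width=8.0, baseline=0.1):
    """Expected spike count of each cell in one time bin at position x."""
    return [baseline + peak * math.exp(-0.5 * ((x - c) / width) ** 2) for c in centers]

def decode(counts):
    """Posterior over position given spike counts (Poisson cells, flat prior)."""
    log_post = []
    for x in positions:
        rates = expected_counts(x)
        # Poisson log-likelihood, dropping terms that do not depend on x.
        log_post.append(sum(n * math.log(r) - r for n, r in zip(counts, rates)))
    top = max(log_post)
    post = [math.exp(lp - top) for lp in log_post]  # subtract the max for numerical stability
    total = sum(post)
    return [p / total for p in post]

counts = [round(c) for c in expected_counts(37.0)]  # idealised, noise-free counts at x = 37 cm
posterior = decode(counts)
best = positions[posterior.index(max(posterior))]
print(best)
```

The list `posterior` is the probability map over the track, and its peak is the most likely position.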

Recap: Spatial cells. What do they both provide?

HD cells: Fire when the animal’s head points in a specific direction (encode orientation)

Place cells: Fire when the animal is in a specific location in the environment (encode position)

together provide a ‘local’ map (current orientation or current location)

What do grid cells in the entorhinal cortex represent, and what pattern do they form?

Fire at multiple, equally spaced locations as the animal moves

Firing fields form a regular hexagonal (grid-like) lattice across the environment

GRID STRUCTURE

not random locations

MORE GLOBAL REPRESENTATION
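
A hexagonal firing pattern can be generated with a common idealisation: the sum of three cosine gratings oriented 60° apart. This is a descriptive toy model rather than a claim about how the entorhinal cortex produces the pattern. The spacing, orientation and phase values are hypothetical.

```python
import math

def grid_rate(x, y, spacing=30.0, orientation=0.0, phase=(0.0, 0.0)):
    """Normalised firing rate (0 to 1) of an idealised grid cell at (x, y).

    spacing: distance between neighbouring firing fields (same units as x, y).
    orientation: rotation of the lattice in radians.
    phase: (x, y) location of one firing field.
    """
    k = 4 * math.pi / (math.sqrt(3) * spacing)  # wave number that yields this field spacing
    total = 0.0
    for i in range(3):
        theta = orientation + i * math.pi / 3   # three gratings, 60 degrees apart
        dx, dy = x - phase[0], y - phase[1]
        total += math.cos(k * (dx * math.cos(theta) + dy * math.sin(theta)))
    return (total / 3 + 0.5) / 1.5              # rescale from [-0.5, 1] to [0, 1]

print(grid_rate(0.0, 0.0))    # centre of a firing field
print(grid_rate(0.0, 30.0))   # neighbouring field, one spacing away
print(grid_rate(0.0, 15.0))   # between fields
```

Changing `spacing` gives tighter or wider lattices, which corresponds to the different grid scales described in the next card.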

How does grid cell spacing vary across the entorhinal cortex? Are the scales discrete or continuous?

Grid cells have different lattice scales (spacing between firing fields)

Dorsal → small, tight grids (fine resolution)

Ventral → large, widely spaced grids (coarse resolution)

grid spacing is quantized: it takes certain discrete scales rather than varying continuously between grid fields

quite discrete

Are place cells context dependent?

yes!

place cells are not an absolute measure of location in space

they are also modulated by task context such as reward availability or goals

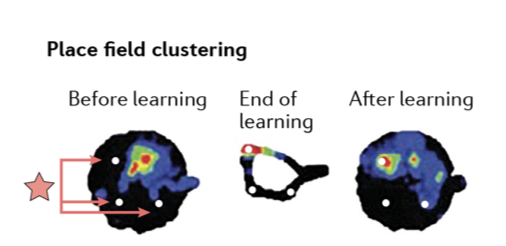

learning causes a shift in firing fields (the grid shifted after the animal learned where the rewards are in the environment)

Place cells form a flexible spatial map shaped by goals, expectations, and experience

Is the grid cell code context dependent?

yes!

grid cells are not an absolute measure of location in space

they are also modulated by task context such as reward availability or goals

the locations of place fields stay the same, but activity increases at the location of reward

How does the structure/geometry of the environment affect spatial representations (place/grid cells)?

The brain’s map is not fixed—its structure mirrors the structure of the world

→ the geometry of space influences the structure of the representation of space

What is the hippocampal zoo?

representations in hippocampus and entorhinal cortex go beyond these canonical cell classes

representations of several other variables that are important for navigation and particularly goal-directed navigation

together they represent key variables needed for building maps and navigating space