L08 - Artificial Neural Networks (ANN)

1. Introduction to ANN

2. Fundamentals of ANN

3. The training process

4. Learning rate and optimization

5. Applications and use cases

6. Types of neural networks

19 Terms

What is ANN?

ANN = Artificial Neural Network

Inspired by the human nervous system.

Can learn from data and store the learned knowledge in its weights.

Made of neurons (processing units) connected by synapses (weights).

ANN

Future Trends

Better explainability and interpretability (XAI).

Greater awareness of ethics, bias, and fairness.

Introduction to ANN

Potential application areas

Curve fitting (find relationships between variables).

Process control (quality, efficiency, safety).

Pattern recognition (images, speech, handwriting).

Data clustering (group similar data).

Prediction (time series, weather).

System optimization (minimize or maximize goals).

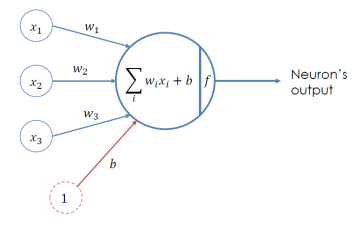

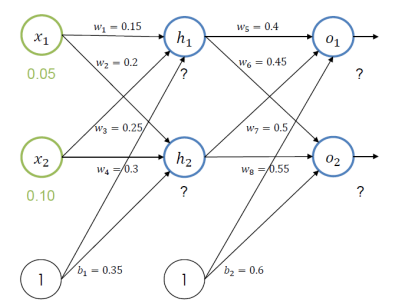

Artificial Neuron (Perceptron)

Inputs: x_1, x_2, ..., x_n

Weights: w_1, w_2, ..., w_n

Compute the weighted sum of the inputs plus the bias b: z = w_1·x_1 + w_2·x_2 + ... + w_n·x_n + b.

Apply an activation function f to this sum to get the output: y = f(z).
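A minimal Python sketch of a single neuron, assuming a step (threshold) activation, the classic perceptron choice. The function names and the AND-gate weights are hypothetical, picked only for illustration:

```python
def neuron(x, w, b, f):
    """Artificial neuron: weighted sum of inputs plus bias, passed through activation f."""
    z = sum(wi * xi for wi, xi in zip(w, x)) + b
    return f(z)

def step(z):
    """Threshold activation of the classic perceptron: fires (1) if z >= 0."""
    return 1.0 if z >= 0 else 0.0

# Hypothetical weights and bias that make the neuron behave like a logical AND
w, b = [1.0, 1.0], -1.5
for x in ([0, 0], [0, 1], [1, 0], [1, 1]):
    print(x, neuron(x, w, b, step))  # only [1, 1] gives 1.0
```

Swapping `step` for a smooth function such as the sigmoid gives the differentiable neuron used in gradient-based training.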

Activation Functions

Sigmoid: Outputs between 0 and 1, smooth.

tanh: Outputs between -1 and 1, centered at zero.

ReLU: Passes positive inputs through unchanged; negative inputs become 0.

Leaky ReLU: Like ReLU, but negative inputs are multiplied by a small slope instead of being set to 0, which avoids “dead” neurons.

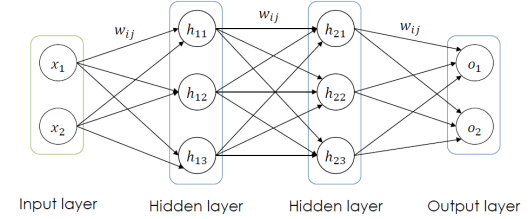
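The four activation functions as plain Python functions, a sketch for a single scalar input. The leaky-ReLU slope of 0.01 is an assumed value (a common default), not something the notes specify:

```python
import math

def sigmoid(z):
    # Output in (0, 1); split on sign so math.exp never overflows
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def tanh(z):
    return math.tanh(z)            # output in (-1, 1), centred at zero

def relu(z):
    return max(0.0, z)             # positive inputs pass, negatives become 0

def leaky_relu(z, slope=0.01):
    # slope for negative inputs is an assumed value (0.01 is a common default)
    return z if z > 0 else slope * z

for z in (-2.0, 0.0, 2.0):
    print(z, sigmoid(z), tanh(z), relu(z), leaky_relu(z))
```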

Architectures of ANN

Input layer: Passes the raw data into the network; performs no calculation.

1st hidden layer: Makes simple decisions by weighting inputs.

2nd hidden layer: Uses results from previous layer to make more complex decisions.

Output layer: Produces the final result.

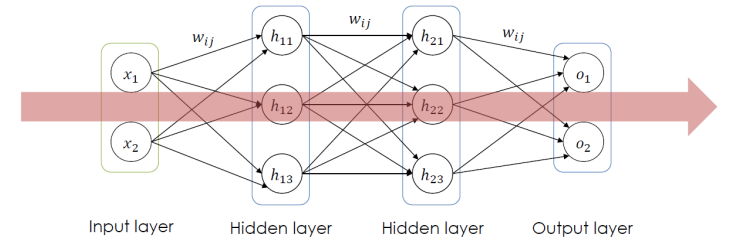

Feed Forward Process

Data flows from input → hidden layers → output.

Each layer: multiply inputs by weights, add bias, apply activation function.

Non-linear activation functions let the network learn complex patterns; without them, any stack of layers would reduce to a single linear transformation.

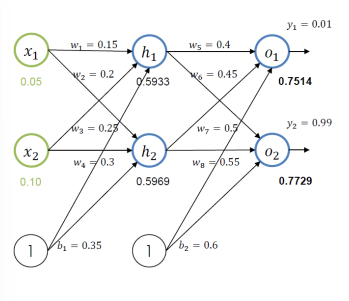
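The feed-forward rule (multiply by weights, add bias, apply activation) can be sketched in NumPy as a loop over layers. The 3 → 4 → 4 → 1 layer sizes, random weights, and the choice of ReLU in the hidden layers with a sigmoid output are all hypothetical:

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def relu(z):
    return np.maximum(0.0, z)

def forward(x, layers):
    """Feed forward: for each layer compute a = f(W @ a + b)."""
    a = x  # the input layer only passes the data on
    for W, b, f in layers:
        a = f(W @ a + b)
    return a

rng = np.random.default_rng(0)
# Hypothetical network: 3 inputs -> 4 hidden -> 4 hidden -> 1 output, random weights
layers = [
    (rng.normal(size=(4, 3)), np.zeros(4), relu),
    (rng.normal(size=(4, 4)), np.zeros(4), relu),
    (rng.normal(size=(1, 4)), np.zeros(1), sigmoid),
]
y = forward(np.array([0.5, -1.0, 2.0]), layers)
print(y)
```

Each weight matrix has shape (neurons in this layer, neurons in the previous layer), so `W @ a` produces one weighted sum per neuron.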

The feed forward process - example

How do we train the weights so that the outputs come closer to the desired outputs?

Step 1: Compare predicted output with the desired output → calculate the error.

Step 2: Find how much each weight contributed to the error (using backpropagation).

Step 3: Adjust weights slightly in the direction that reduces the error (using gradient descent).

Repeat this many times until the outputs are close to the targets.
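The three steps can be seen on the smallest possible network: one sigmoid neuron, one training example, squared error. This is a sketch under assumed values (input, target, starting weight, learning rate); step 2 is just the chain rule, which backpropagation applies layer by layer in larger networks:

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

# Hypothetical toy task: one input x, one target t, one sigmoid neuron
x, t = 1.0, 0.9
w, b, lr = 0.2, 0.0, 0.5  # assumed starting weight, bias and learning rate

for _ in range(1000):
    y = sigmoid(w * x + b)          # forward pass
    error = 0.5 * (t - y) ** 2      # Step 1: squared error
    dE_dz = -(t - y) * y * (1 - y)  # Step 2: chain rule, dE/dy * dy/dz
    w -= lr * dE_dz * x             # Step 3: step against the gradient
    b -= lr * dE_dz

print(sigmoid(w * x + b))  # close to the target 0.9
```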

The training process

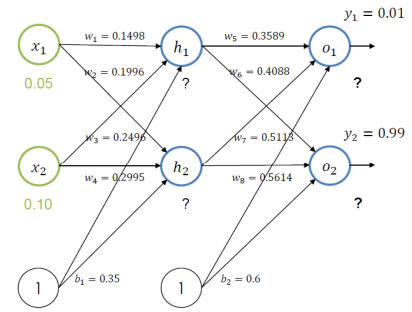

Backpropagation

After computing the output, we propagate the error backward through the network, layer by layer.

This helps us adjust the weights so the output gets closer to the desired value next time.

Backpropagation

Error calculation

Output

Hidden layer
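The formulas for these three steps are not in the text. Assuming squared-error loss and sigmoid activations (a common choice for this kind of worked example), the standard backpropagation equations are as follows, where t_o is the target of output neuron o, out_o its output, out_h the output of hidden neuron h, w_{ho} the weight from h to o, and w_{ih} the weight from input x_i to h:

```latex
E_{\text{total}} = \sum_{o} \tfrac{1}{2}\,(t_o - out_o)^2

\delta_o = -(t_o - out_o)\; out_o\,(1 - out_o), \qquad
\frac{\partial E_{\text{total}}}{\partial w_{ho}} = \delta_o \, out_h

\delta_h = \Big(\sum_{o} \delta_o \, w_{ho}\Big)\; out_h\,(1 - out_h), \qquad
\frac{\partial E_{\text{total}}}{\partial w_{ih}} = \delta_h \, x_i
```

The factor out(1 − out) is the derivative of the sigmoid; the hidden-layer delta collects the output deltas through the same weights that carried the signal forward.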

The training process

The trained forward pass

After the first round of backpropagation, the total error drops from 0.2984 to 0.291.

After repeating this step 10,000 times, the error is 0.000035.

O_1 = 0.0159 (target 0.01)

O_2 = 0.9841 (target 0.99)

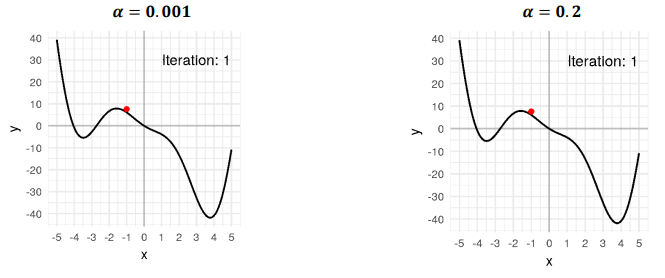
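The inputs and starting weights of this example are only in the figure. The sketch below assumes a 2-2-2 network with sigmoid activations, squared-error loss, inputs 0.05 and 0.10, the starting weights and biases shown in the code, and learning rate 0.5; all of these are assumptions, and only the targets 0.01 and 0.99 come from the card. With these values the first total error evaluates to about 0.2984, the same starting point as above. Biases are kept fixed, and all deltas in a round are computed from the old weights before any update:

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

# Assumed values; only the targets t come from the card
x = np.array([0.05, 0.10])
t = np.array([0.01, 0.99])
W1 = np.array([[0.15, 0.20], [0.25, 0.30]])  # row j: weights into hidden neuron j
W2 = np.array([[0.40, 0.45], [0.50, 0.55]])  # row k: weights into output neuron k
b1, b2, lr = 0.35, 0.60, 0.5                 # biases stay fixed; only weights train

def forward(W1, W2):
    h = sigmoid(W1 @ x + b1)
    o = sigmoid(W2 @ h + b2)
    return h, o

def total_error(o):
    return np.sum(0.5 * (t - o) ** 2)

errors = []
for _ in range(10_000):
    h, o = forward(W1, W2)
    errors.append(total_error(o))
    delta_o = -(t - o) * o * (1 - o)          # output-layer deltas
    delta_h = (W2.T @ delta_o) * h * (1 - h)  # hidden deltas, using the old W2
    W2 = W2 - lr * np.outer(delta_o, h)       # dE/dW2[k, j] = delta_o[k] * h[j]
    W1 = W1 - lr * np.outer(delta_h, x)       # dE/dW1[j, i] = delta_h[j] * x[i]

_, o = forward(W1, W2)
print(f"initial error: {errors[0]:.4f}")  # 0.2984
print(f"after 1 round: {errors[1]:.4f}")
print(f"after 10,000:  {total_error(o):.6f}, outputs: {o.round(4)}")
```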

Learning rate and optimization

Definition

Learning rate: A hyperparameter that controls how big the step is when updating the weights.

Small step → slow learning but more stable.

Large step → faster learning but may overshoot or never converge.

The direction of the step is given by the negative gradient of the loss function (the direction of steepest descent).
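In symbols, for plain gradient descent with learning rate α and loss E, every weight takes one step against its gradient:

```latex
w_{\text{new}} = w_{\text{old}} - \alpha \, \frac{\partial E}{\partial w}
```

The minus sign makes the step go downhill; α only scales its length.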

Learning rate and optimization

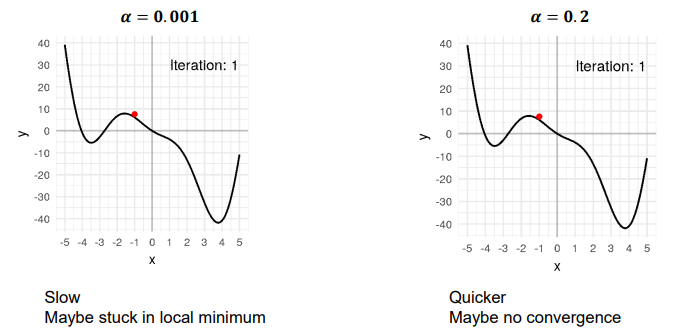

Comparison

α = 0.001 → Moves very slowly, may get stuck in a local minimum.

α = 0.2 → Moves faster, but might skip over the best point.
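The effect of α can be reproduced on an assumed toy loss f(w) = w², whose gradient is 2w and whose minimum is at w = 0. Whether a given α overshoots depends on the curvature of the loss; on this particular function the overshoot only appears above α = 0.5, so 0.8 and 1.1 are added to show it:

```python
def gradient_descent(alpha, w=5.0, steps=50):
    """Minimise the assumed toy loss f(w) = w**2 (gradient 2w), starting from w."""
    for _ in range(steps):
        w = w - alpha * 2 * w
    return w

# 0.001: barely moves in 50 steps
# 0.2:   converges smoothly
# 0.8:   overshoots 0 every step (sign flips) but still converges
# 1.1:   overshoots further each time and diverges
for alpha in (0.001, 0.2, 0.8, 1.1):
    print(alpha, gradient_descent(alpha))
```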

Learning rate and optimization

Schedule and adaptive learning rate

Learning rate schedule: Decrease learning rate over time (time-based, step-based, exponential).

Adaptive learning rate: The optimizer adapts the step size automatically, per parameter (Adagrad, RMSprop, Adam).
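A sketch of the three schedule types and of one adaptive method (Adam) on a single parameter. The decay constants, the epoch counter t, and Adam's lr = 0.1 are assumed values; beta1, beta2 and eps use the defaults from the Adam paper. The toy loss f(w) = w² is hypothetical:

```python
import math

# Schedules: lr0 is the initial rate, t the epoch counter (constants are assumed)
def time_based(lr0, t, k=0.01):
    return lr0 / (1 + k * t)

def step_based(lr0, t, drop=0.5, step_size=10):
    return lr0 * drop ** (t // step_size)  # halve every 10 epochs

def exponential(lr0, t, k=0.05):
    return lr0 * math.exp(-k * t)

for t in (0, 10, 20, 50):
    print(t, time_based(0.1, t), step_based(0.1, t), exponential(0.1, t))

# Adaptive: Adam scales each step by running averages of the gradient and its square
def adam_minimise(grad, w, lr=0.1, beta1=0.9, beta2=0.999, eps=1e-8, steps=500):
    m = v = 0.0
    for t in range(1, steps + 1):
        g = grad(w)
        m = beta1 * m + (1 - beta1) * g      # running mean of gradients
        v = beta2 * v + (1 - beta2) * g * g  # running mean of squared gradients
        m_hat = m / (1 - beta1 ** t)         # bias correction for the zero start
        v_hat = v / (1 - beta2 ** t)
        w -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return w

w_final = adam_minimise(lambda w: 2 * w, w=5.0)  # gradient of f(w) = w**2
print(w_final)
```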

Prognosis/Prediction

Prognosis/Prediction involves all steps necessary to predict an unknown value from known inputs.

Types of ANN

RNN, CNN

Recurrent Neural Networks (RNNs)

Purpose, Examples, Types

Purpose: Handle sequential data (data with order in time).

Enables reasoning over time.

Examples: Handwriting recognition, speech recognition.

Types:

Basic RNN

Long Short-Term Memory (LSTM)

Gated Recurrent Units (GRU)

Key idea: Output at each step depends on the current input and the previous hidden state.
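The key idea of a basic RNN fits in one update, h_t = tanh(W_x·x_t + W_h·h_(t-1) + b), applied in order along the sequence with the same weights at every step. A NumPy sketch with hypothetical sizes and random weights (LSTM and GRU add gates on top of this recurrence):

```python
import numpy as np

def rnn_forward(xs, Wx, Wh, b, h0):
    """Basic RNN: h_t = tanh(Wx @ x_t + Wh @ h_{t-1} + b), same weights at every step."""
    h = h0
    states = []
    for x_t in xs:  # process the sequence in order
        h = np.tanh(Wx @ x_t + Wh @ h + b)
        states.append(h)
    return np.stack(states)

rng = np.random.default_rng(0)
n_in, n_hidden, T = 3, 4, 5  # hypothetical input size, hidden size, sequence length
Wx = rng.normal(scale=0.5, size=(n_hidden, n_in))
Wh = rng.normal(scale=0.5, size=(n_hidden, n_hidden))
b = np.zeros(n_hidden)
xs = rng.normal(size=(T, n_in))  # a sequence of T input vectors
states = rnn_forward(xs, Wx, Wh, b, np.zeros(n_hidden))
print(states.shape)  # one hidden state per time step
```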

Convolutional Neural Networks (CNNs)

Purpose, Examples, Layers

Purpose: Process 2D or 3D data (like images or videos).

Examples: Image recognition, classification.

Layers:

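Convolution layer: Slides small learned filters over the input to detect local features (e.g., edges).

Pooling layer: Downsamples the feature maps (e.g., max pooling), reducing size and computation.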

Key idea: Automatically finds important patterns in spatial data.

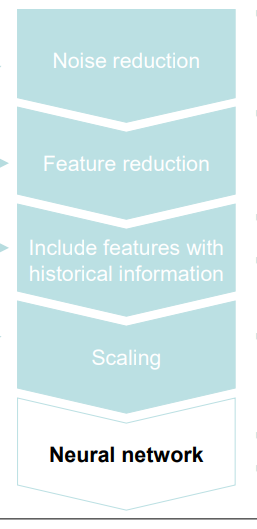
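Both layer types can be sketched in NumPy for a single-channel image. Like most CNN libraries, `conv2d` below computes cross-correlation (the kernel is not flipped) and still calls it convolution. The image, the edge kernel, and the 2×2 pooling size are hypothetical; in a real CNN the kernel values are learned:

```python
import numpy as np

def conv2d(image, kernel):
    """'Valid' 2D convolution (cross-correlation) of one channel with one kernel."""
    kh, kw = kernel.shape
    H, W = image.shape
    out = np.zeros((H - kh + 1, W - kw + 1))
    for i in range(out.shape[0]):
        for j in range(out.shape[1]):
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

def max_pool(fmap, size=2):
    """Non-overlapping max pooling; rows/cols that do not fill a window are dropped."""
    H2, W2 = fmap.shape[0] // size, fmap.shape[1] // size
    trimmed = fmap[:H2 * size, :W2 * size]
    return trimmed.reshape(H2, size, W2, size).max(axis=(1, 3))

# Hypothetical 6x6 image with a vertical edge: dark left half, bright right half
image = np.zeros((6, 6))
image[:, 3:] = 1.0
edge_kernel = np.array([[-1.0, 1.0], [-1.0, 1.0]])  # responds to dark-to-bright steps

fmap = conv2d(image, edge_kernel)  # strong response only in the column of the edge
pooled = max_pool(fmap)            # smaller map that still marks where the edge is
print(fmap)
print(pooled)
```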

Data preparation for Neural Network

From the linear regression pipeline:

1. Noise filtering (moving mean)

2. Feature reduction by filtering

3. Generation of additional features

a) Features including historical information

b) Polynomial features

4. Scaling

5. Dimensionality reduction (PCA)

6. Linear regression model
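The notes list the steps but not their parameters. The sketch below chains them in NumPy on hypothetical synthetic data. The window size, correlation threshold, single lag, degree-2 polynomial terms, and 95% PCA variance target are all assumptions, and "feature reduction by filtering" is read here as a correlation filter, which is only one possible interpretation:

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical raw data: 200 samples of 4 noisy input signals and a target
n, d = 200, 4
X_raw = rng.normal(size=(n, d))
y_raw = 2.0 * X_raw[:, 0] - X_raw[:, 1] + rng.normal(scale=0.5, size=n)

# 1. Noise filtering: moving mean over an assumed window of 5 samples
def moving_mean(a, window=5):
    kernel = np.ones(window) / window
    if a.ndim == 1:
        return np.convolve(a, kernel, mode="valid")
    return np.column_stack([np.convolve(col, kernel, mode="valid") for col in a.T])

X, y = moving_mean(X_raw), moving_mean(y_raw)

# 2. Feature reduction by filtering: keep features with |corr(x, y)| >= 0.3 (assumed)
corr = np.array([abs(np.corrcoef(X[:, j], y)[0, 1]) for j in range(X.shape[1])])
X = X[:, corr >= 0.3]

# 3a. Historical information: add each feature's value one step earlier (lag 1)
X = np.hstack([X[1:], X[:-1]])
y = y[1:]

# 3b. Polynomial features: add squared terms (degree 2, no interaction terms)
X = np.hstack([X, X ** 2])

# 4. Scaling: standardise each feature to zero mean and unit variance
X = (X - X.mean(axis=0)) / X.std(axis=0)

# 5. PCA via SVD: keep enough components to explain 95% of the variance
_, S, Vt = np.linalg.svd(X, full_matrices=False)
explained = S ** 2 / np.sum(S ** 2)
k = int(np.searchsorted(np.cumsum(explained), 0.95) + 1)
Z = X @ Vt[:k].T

# 6. Linear regression: least squares with an intercept column
A = np.column_stack([np.ones(len(Z)), Z])
coef, *_ = np.linalg.lstsq(A, y, rcond=None)
r2 = 1 - np.sum((y - A @ coef) ** 2) / np.sum((y - y.mean()) ** 2)
print(f"kept {k} principal components, R^2 = {r2:.3f}")
```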