high auditory processing



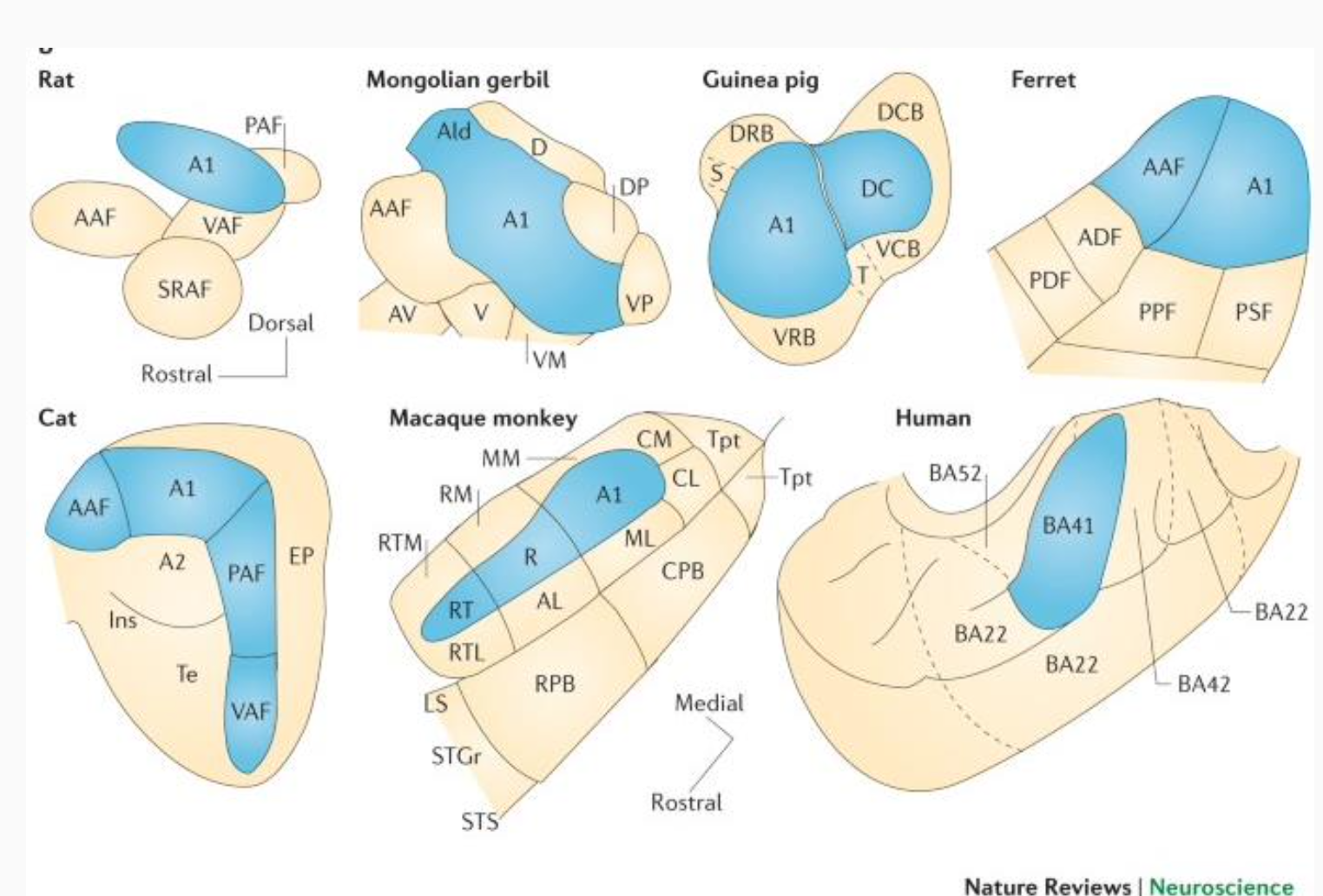

what is auditory cortex?

tonotopically organized

primary or core areas

secondary or belt areas

each of these animals has a whole bunch of different auditory cortical fields

blue = primary areas, which get input from the thalamus

unlike vision, hearing involves a lot of subcortical processing

map of frequency space → complex features of sound: The auditory system starts with a spatial map of sound frequencies, and this representation is progressively transformed into meaningful, complex perceptual features.

complex features of sound

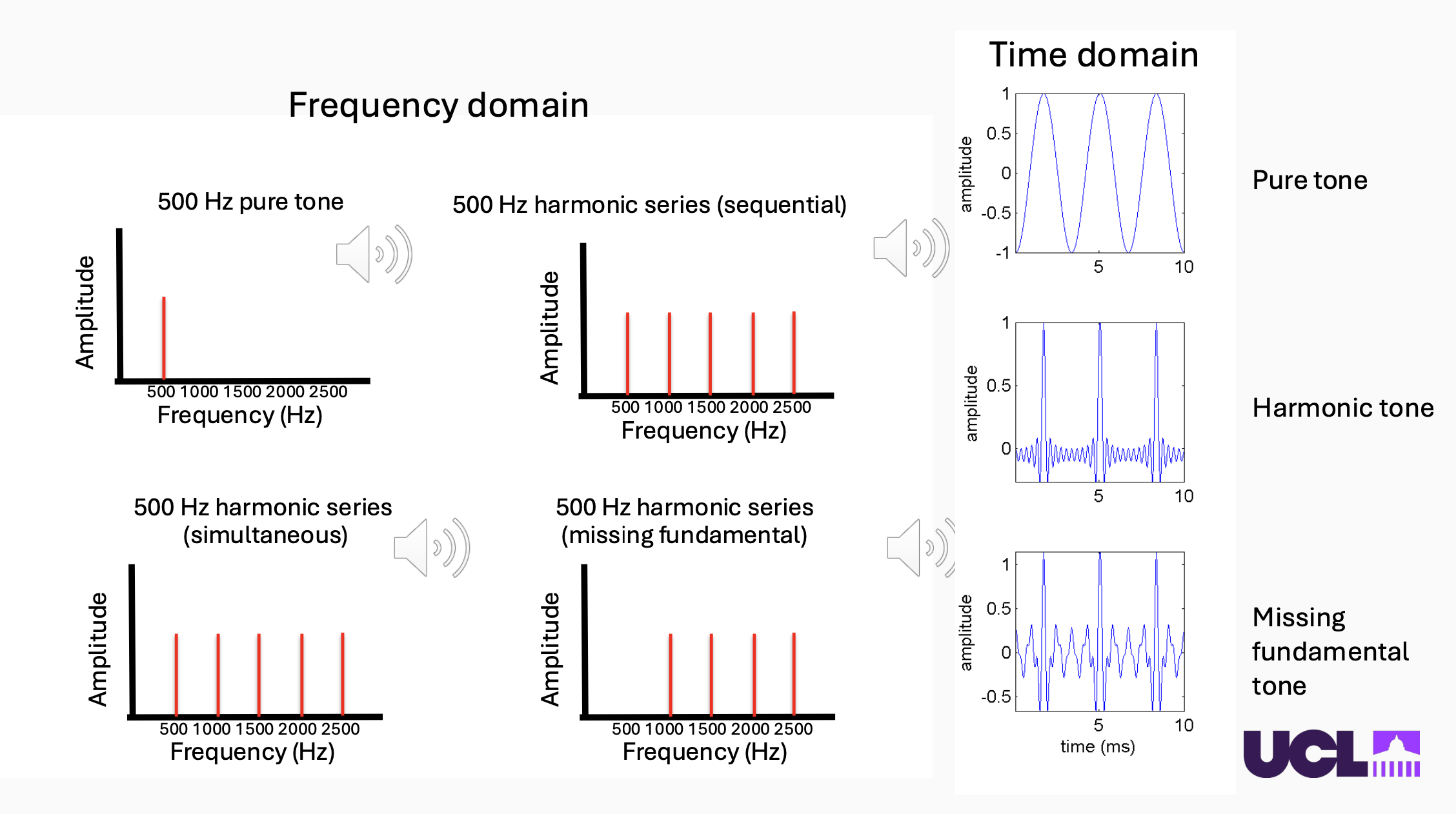

“complex” just means there is more than one frequency element. → harmonic structure

harmonic means that there are frequency elements at integer multiples of a “fundamental” frequency → the brain fuses them all together into one sound source
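To make "integer multiples of a fundamental" concrete, here is a minimal sketch that builds a harmonic complex tone. The 200 Hz fundamental, the five equal-amplitude harmonics, and the 8000 Hz sample rate are illustrative assumptions, not values from the lecture.

```python
import math

def harmonic_frequencies(f0, n_harmonics):
    """Frequency elements at integer multiples of the fundamental f0 (in Hz)."""
    return [k * f0 for k in range(1, n_harmonics + 1)]

def complex_tone(freqs, sample_rate=8000, n_samples=400):
    """Sum equal-amplitude sine waves at the given frequencies."""
    return [
        sum(math.sin(2 * math.pi * f * i / sample_rate) for f in freqs)
        for i in range(n_samples)
    ]

freqs = harmonic_frequencies(200.0, 5)
tone = complex_tone(freqs)
period = int(8000 / 200.0)  # samples in one cycle of the fundamental

print(freqs)  # [200.0, 400.0, 600.0, 800.0, 1000.0]
# Every harmonic completes a whole number of cycles in one fundamental period,
# so the summed waveform repeats at the fundamental's rate.
print(abs(tone[5] - tone[5 + period]) < 1e-9)
```

Because all components share one repetition period, the sum behaves like a single periodic sound, which fits the idea that the brain fuses the harmonics into one source.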

how do we differentiate sounds that share harmonic structure?

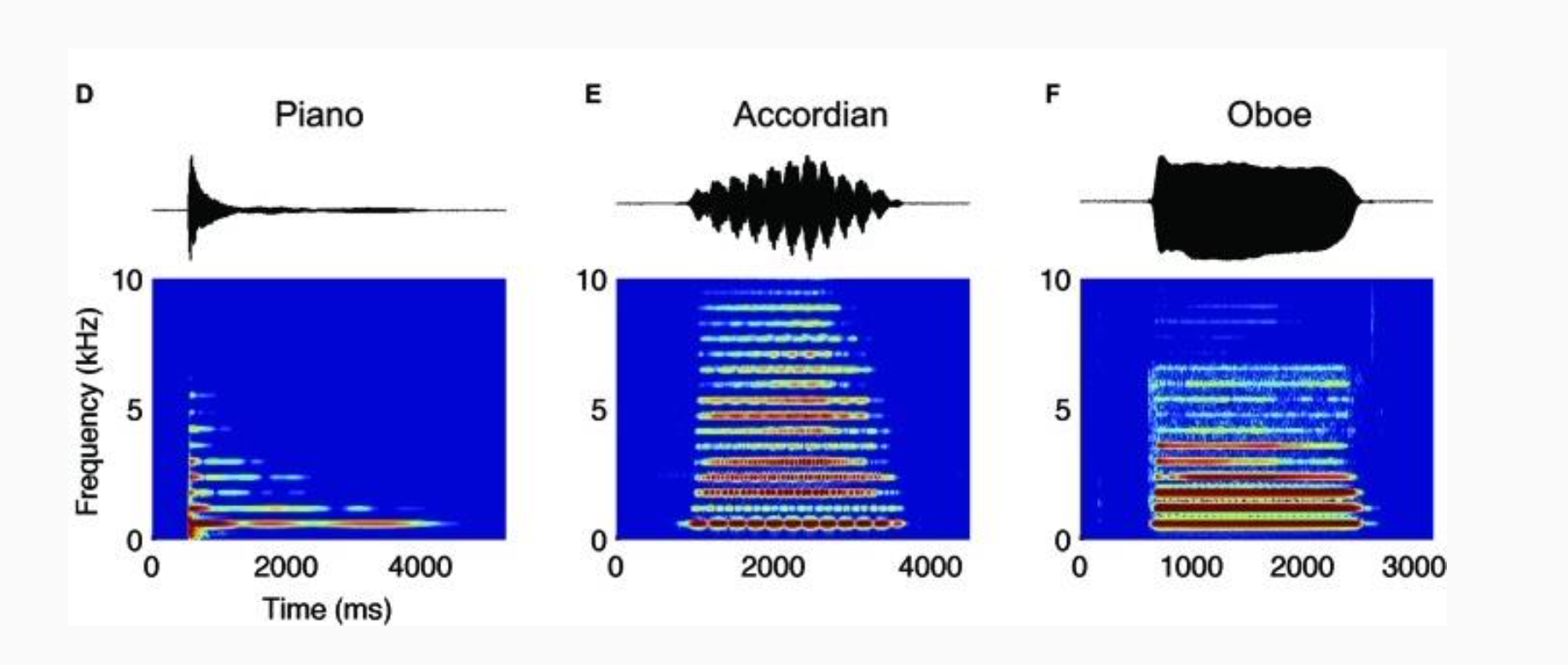

1) temporal envelope

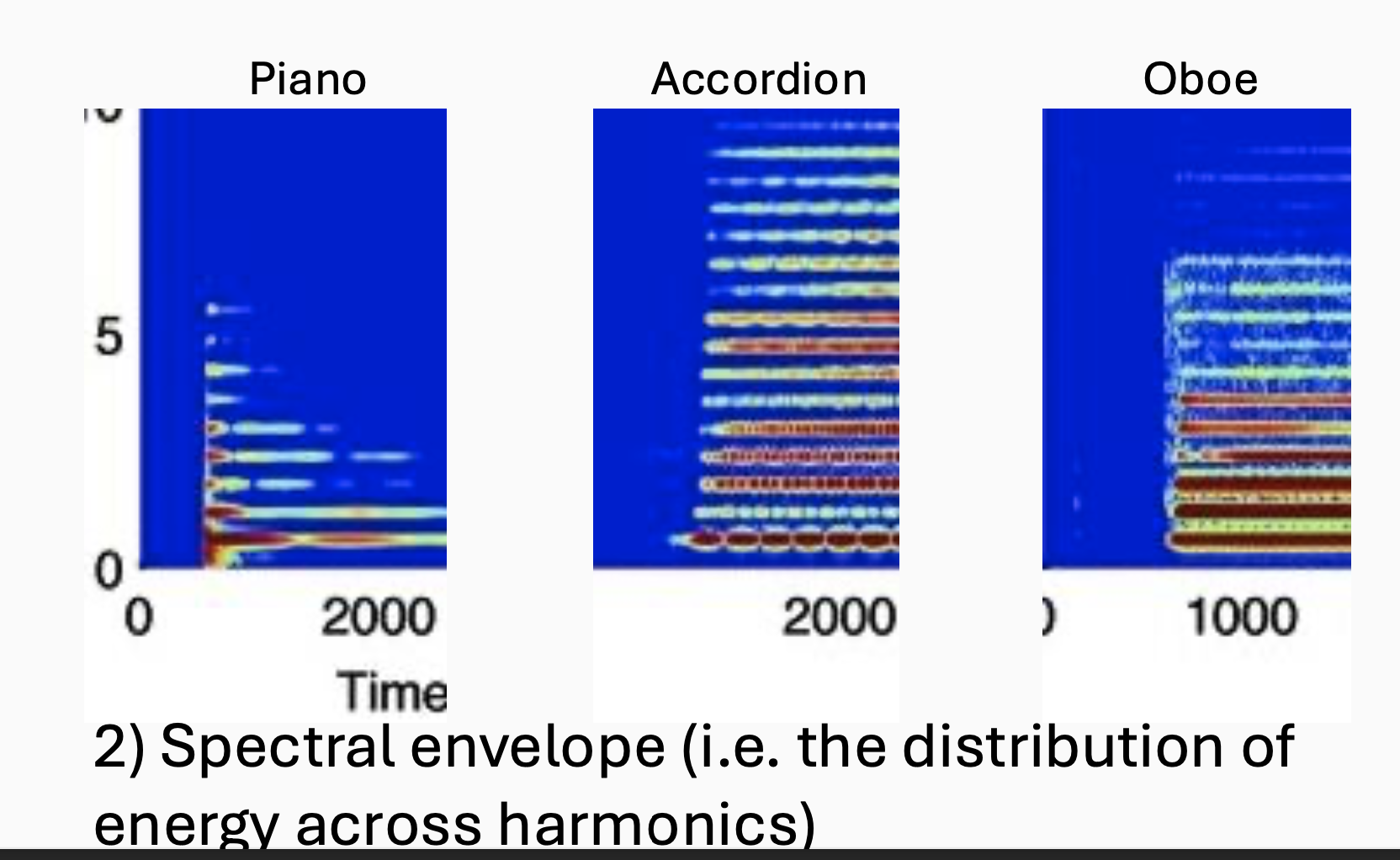

2) spectral envelope (i.e. the distribution of energy across harmonics)

temporal envelope

they've all got the same fundamental frequency and the same pitch.

you can also see that the way they unfold over time is very different → temporal envelope = the slow change in amplitude (loudness) of a sound over time

example: the piano has a sharp onset and a rapid decay. The accordion vibrates a lot. The oboe has a much more sustained profile.
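As a rough sketch of the idea, the same carrier tone (same fundamental, same pitch) can be given two different temporal envelopes. The envelope shapes and parameter values below are hypothetical stand-ins for "piano-like" and "oboe-like" profiles, not measurements of real instruments.

```python
import math

def carrier(f0=200.0, sample_rate=8000, n_samples=800):
    """A plain sine tone; both example sounds share this pitch."""
    return [math.sin(2 * math.pi * f0 * i / sample_rate) for i in range(n_samples)]

def sharp_onset_envelope(n_samples, decay=5.0):
    """Piano-like (assumed shape): maximal at onset, then exponential decay."""
    return [math.exp(-decay * i / n_samples) for i in range(n_samples)]

def sustained_envelope(n_samples, attack_fraction=0.1):
    """Oboe-like (assumed shape): short linear rise, then a steady level."""
    attack = max(1, int(attack_fraction * n_samples))
    return [min(1.0, i / attack) for i in range(n_samples)]

def apply_envelope(signal, envelope):
    """Scale each sample by the slowly varying amplitude envelope."""
    return [s * e for s, e in zip(signal, envelope)]

tone = carrier()
plucked = apply_envelope(tone, sharp_onset_envelope(len(tone)))
held = apply_envelope(tone, sustained_envelope(len(tone)))

# Compare how much amplitude is left in the final quarter of each sound.
tail = slice(3 * len(tone) // 4, None)
print(max(abs(x) for x in plucked[tail]) < max(abs(x) for x in held[tail]))
```

The fine structure (the 200 Hz oscillation) is identical in both sounds; only the slow amplitude contour differs.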

spectral envelope

if we zoom in on the onset, we see the distribution of energy across harmonics, which is what gives a sound its identity → spectral envelope = the overall shape of energy across frequencies in a sound spectrum

how can you tell what it is you're actually listening to?

there are two qualities that come together

1) Timbre

2) Pitch

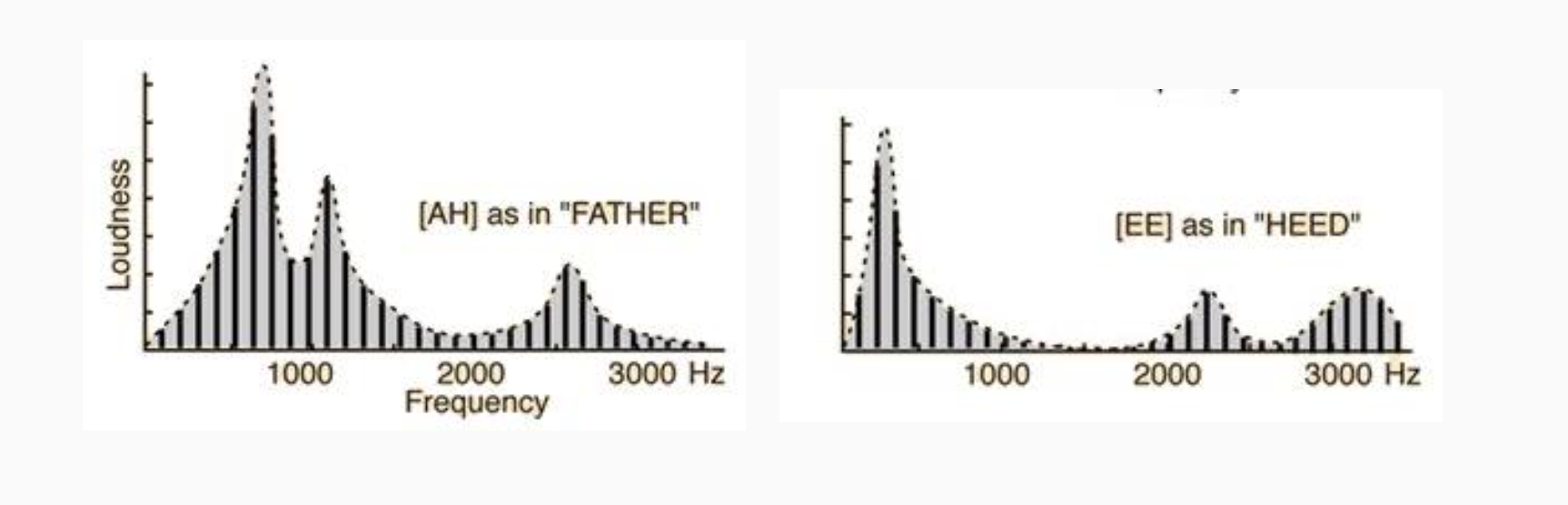

timbre

“attribute of auditory sensation in terms of which a listener can judge that two sounds similarly presented and having the same loudness and pitch are dissimilar”

→ anything about sound that isn’t loudness or pitch or position in space that makes the sound different

two different vowels produced at the same pitch → the vocal folds generate a pulse train with a constant pitch → the vocal tract filters that pulse train to give it a different frequency (spectral) envelope

the humps in the frequency domain are called formants → the positions of the first and second formants in particular are what allow you to identify different vowel sounds.

!! this ability to discriminate timbre based on spectral envelopes is really important for human speech.
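A minimal sketch of the pulse-train-plus-filter idea: the harmonics of a fixed fundamental are shaped by an envelope with one hump per formant. The formant frequencies, the Gaussian hump shape, and the 100 Hz bandwidth are rough illustrative assumptions, not values given in the notes.

```python
import math

def spectral_envelope(freq, formants, bandwidth=100.0):
    """Sum of Gaussian humps, one centred on each formant frequency (Hz)."""
    return sum(math.exp(-0.5 * ((freq - f) / bandwidth) ** 2) for f in formants)

def harmonic_amplitudes(f0, formants, n_harmonics=30):
    """Amplitude of each harmonic of f0 after shaping by the envelope."""
    return {
        k * f0: spectral_envelope(k * f0, formants)
        for k in range(1, n_harmonics + 1)
    }

def strongest_harmonics(amplitudes, threshold=0.9):
    """Harmonic frequencies whose amplitude sits at the top of a hump."""
    return sorted(f for f, a in amplitudes.items() if a > threshold)

# Hypothetical first and second formant positions for two vowels.
VOWEL_A = (700.0, 1200.0)
VOWEL_I = (300.0, 2300.0)

f0 = 100.0  # same fundamental, so same pitch, for both vowels
print(strongest_harmonics(harmonic_amplitudes(f0, VOWEL_A)))  # [700.0, 1200.0]
print(strongest_harmonics(harmonic_amplitudes(f0, VOWEL_I)))  # [300.0, 2300.0]
```

Both vowels contain exactly the same harmonic frequencies; only the distribution of energy across them changes, which is the timbre cue.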

pitch (harmonicity)

it is really important for: meaning (tonal languages), emotional content, identification (small vs big), sound segregation, etc.

example: pulling apart different people's voices. You can distinguish two speakers talking at the same time because their voices differ in pitch.

pitch is the auditory attribute of sound according to which sounds can be ordered on a scale from low to high (basically, what allows you to order notes on a musical scale). It's tempting, therefore, to think that you could read pitch directly out of the cochlea, and that pitch would be trivial for humans or other animals to process. But that's not true: it only works for pure tones (containing only one frequency), which you rarely come across in the real world (e.g. bird noise).

what really happens→

take the fundamental frequency away from a harmonic series → you get a missing-fundamental tone, which still has the same pitch as a pure tone at the (now missing) fundamental

Time domain:

What these signals have in common is a repetition rate: they all repeat over time. It is this periodicity (repetition) that gives natural sounds their pitch.
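The periodicity point can be sketched with a simple autocorrelation: find the time lag at which a signal best matches a shifted copy of itself. This is only an illustration of repetition rate, not a claim about how the brain computes pitch; the 200 Hz fundamental, the 8000 Hz sample rate, and the 120 to 500 Hz search range are assumptions.

```python
import math

SAMPLE_RATE = 8000

def harmonic_tone(f0, harmonic_numbers, n_samples=800):
    """Sum of equal-amplitude sines at the chosen multiples of f0."""
    return [
        sum(math.sin(2 * math.pi * k * f0 * i / SAMPLE_RATE) for k in harmonic_numbers)
        for i in range(n_samples)
    ]

def estimate_pitch(signal, min_hz=120.0, max_hz=500.0):
    """Repetition rate (Hz) at the lag with the largest autocorrelation."""
    min_lag = int(SAMPLE_RATE / max_hz)
    max_lag = int(SAMPLE_RATE / min_hz)
    window = len(signal) - max_lag  # same number of terms for every lag

    def autocorr(lag):
        return sum(signal[i] * signal[i + lag] for i in range(window))

    best_lag = max(range(min_lag, max_lag + 1), key=autocorr)
    return SAMPLE_RATE / best_lag

pure_tone = harmonic_tone(200.0, [1])                   # only the fundamental
full_complex = harmonic_tone(200.0, [1, 2, 3, 4, 5])    # fundamental + harmonics
missing_fund = harmonic_tone(200.0, [2, 3, 4, 5])       # no energy at 200 Hz

print(estimate_pitch(pure_tone))     # 200.0
print(estimate_pitch(full_complex))  # 200.0
print(estimate_pitch(missing_fund))  # 200.0
```

Even with no component at 200 Hz, the missing-fundamental tone still repeats every 1/200 s, so a periodicity-based estimate gives the same answer as for the pure tone.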

pitch processing in the brain

how pitch is processed in the brain is somewhat controversial.

there is evidence that there are dedicated sensors that extract the fundamental frequency of sounds.

They found a cluster of pitch-sensitive neurons at the point where two auditory fields meet at their low-frequency border in primary auditory cortex. There is similar evidence from functional imaging in humans, but there is also counter-evidence showing pitch sensitivity everywhere.

dual processing streams in audition

We have a somewhat similar dual processing system in audition to that seen in vision, although the evidence is not quite as robust.

Temporal lobe pathway

Running down the temporal lobe

Carries “what” information

Shows specialization for voices & voice-related processing

Located relatively low down, near face processing areas

Projects to the ventral prefrontal cortex

Caudal belt / dorsal pathway

Through caudal belt areas

Information projects to parietal cortex

this dual processing is seen in both the macaque brain and the human brain

Many parietal and frontal areas are multimodal; possibly specialized for different types of processing; relatively agnostic to the specific sensory modality coming in.

EVIDENCE: sound localization vs. sound identification deficit after stroke

Stroke patients: damage to temporal areas causes word deafness (cannot recognise speech/sounds; "what" stream damaged); damage to posterior/parietal areas causes sound localisation deficits ("where" stream damaged). Different patients are affected, which supports anatomically separate pathways.

Cat cooling experiment: silencing the posterior auditory field (PAF) → severe localisation deficit but intact pattern discrimination; silencing the anterior auditory field (AAF) → severe pattern discrimination deficit but intact localisation. Fully reversible when cooling is stopped.

summary:

Temporal lobe damage → word deafness (cannot recognise speech/sounds, "what" stream damaged). Posterior/parietal damage → sound localisation deficits ("where" stream damaged).

Different patients affected → separate anatomical pathways.

experiment: double dissociation of "what" and "where" functional specialisation (cooling tube + cats) → there are two processing pathways for more complex auditory processing

experiment: speech perception and production → distinct parts of the brain are associated with different communication disorders.

Broca’s area → speech production: patients can understand speech but can’t generate it

Wernicke’s area → speech perception: patients can’t understand speech but can generate it