matrix final

18 Terms

how to find eigenvalues

solve det(A-λI)=0 for λ - there can be more than one, of course
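
A quick worked example might help here. The matrix A below is made up for illustration (it is not from the original cards):

```latex
A = \begin{pmatrix} 2 & 1 \\ 1 & 2 \end{pmatrix}, \qquad
\det(A - \lambda I) = (2 - \lambda)^2 - 1 = (\lambda - 1)(\lambda - 3) = 0
\;\Rightarrow\; \lambda = 1 \ \text{or} \ \lambda = 3
```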

how to find eigenvectors

solve (A-λkI)x=0 - row reduce, then write the eigenvectors in terms of the free columns/parameters

where λk is one specific eigenvalue
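
As a rough sketch of the steps, take the made-up matrix A = [[2, 1], [1, 2]] (an assumption for illustration), which has λ = 3 as one of its eigenvalues:

```latex
A - 3I = \begin{pmatrix} -1 & 1 \\ 1 & -1 \end{pmatrix}
\;\longrightarrow\;
\begin{pmatrix} 1 & -1 \\ 0 & 0 \end{pmatrix}
\;\Rightarrow\; x_1 = x_2 = t, \qquad
x = t \begin{pmatrix} 1 \\ 1 \end{pmatrix}
```

Here x2 is the free variable, so every nonzero multiple of (1, 1) is an eigenvector for λ = 3. Quick check: A(1, 1) = (3, 3) = 3(1, 1).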

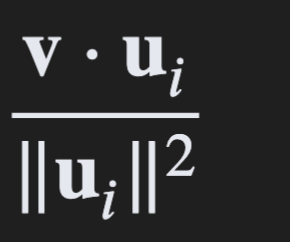

equation for finding coefficients of orthogonal basis

where ui is the ith basis vector and v is the vector you're finding the coefficients for
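
The formula itself lives in the image, so this example assumes it is the usual one for an orthogonal basis, c_i = (v · u_i) / (u_i · u_i). The vectors below are made up for illustration:

```latex
u_1 = (1, 1), \quad u_2 = (1, -1), \quad v = (3, 1)
\\[4pt]
c_1 = \frac{v \cdot u_1}{u_1 \cdot u_1} = \frac{4}{2} = 2, \qquad
c_2 = \frac{v \cdot u_2}{u_2 \cdot u_2} = \frac{2}{2} = 1
\\[4pt]
2\,u_1 + 1\,u_2 = (2, 2) + (1, -1) = (3, 1) = v
```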

is lambda=0 a valid eigenvalue?

yes! it just means the matrix is non-invertible

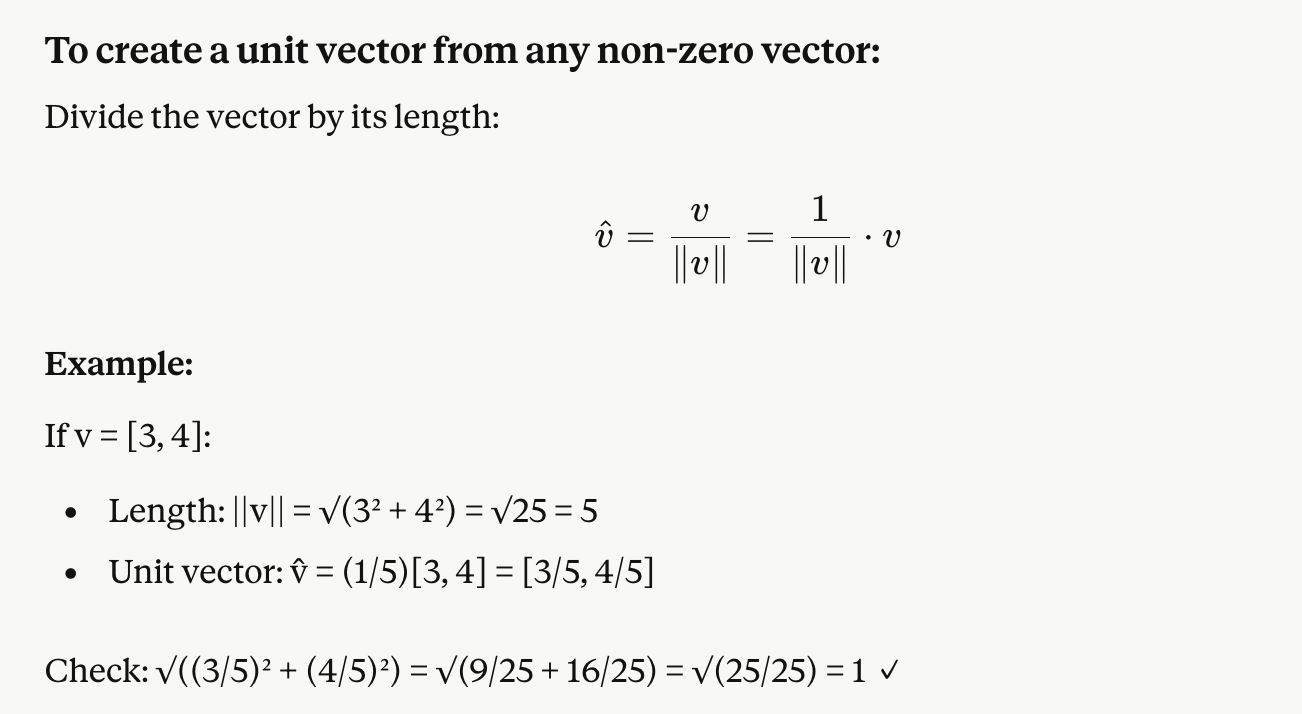

unit vector formula ( for orthonormal basis)

so here the components of the unit vector aren’t each 1, but when you take the magnitude of the whole vector it equals 1

rules of an orthogonal matrix

the cols of the matrix (or rows) are orthonormal

M^T = M^-1

all real eigenvalues are 1 or -1, and det(M) = -1 or 1 (since det(M^T)det(M) = det(I) = 1)

two orthogonal matrices multiplied together give an orthogonal matrix

because (AB)^T(AB) = B^T A^T A B = B^T B = I - and each entry of M^T M is a 1×n row times an n×1 column, i.e. the dot product of two columns of M
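
A minimal sketch for checking these rules numerically, in plain Python. The helper names (`transpose`, `matmul`, `is_orthogonal`) and the two example matrices are made up for illustration:

```python
def transpose(m):
    """Swap rows and columns."""
    return [list(row) for row in zip(*m)]


def matmul(a, b):
    """Each entry is a row of a dotted with a column of b."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)]
            for row in a]


def is_orthogonal(m, tol=1e-9):
    """True if M^T M equals the identity, up to a small tolerance."""
    n = len(m)
    prod = matmul(transpose(m), m)
    return all(abs(prod[i][j] - (1.0 if i == j else 0.0)) <= tol
               for i in range(n) for j in range(n))


R = [[0.0, -1.0], [1.0, 0.0]]   # rotation by 90 degrees
F = [[1.0, 0.0], [0.0, -1.0]]   # reflection across the x-axis

print(is_orthogonal(R), is_orthogonal(F))   # each one is orthogonal
print(is_orthogonal(matmul(R, F)))          # and so is their product
```

A matrix like [[1, 1], [0, 1]] fails the check, since its columns are not orthonormal.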

orthogonal complement of S (where S is a subspace)

the set of all vectors that are orthogonal to every vector in S

rules for orthogonal subspaces (S comp mainly)

If S is a k-dimensional subspace of R^n then…

S comp is a subspace of R^n

dim(S comp) = n-k

the only vector contained in both is the zero vector

putting together an orthogonal basis for S and one for S comp gives you an orthogonal basis for R^n

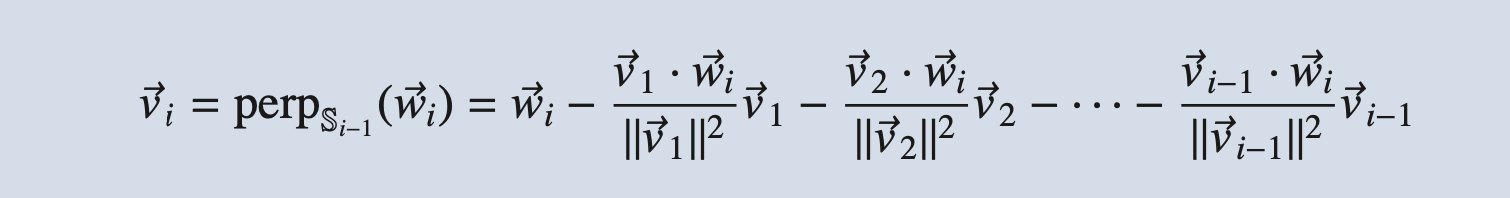

gram-schmidt process + important notes

if any v comes out equal to 0, that means the matching w is a linear combination of the earlier w's - and we can omit that w from the spanning set
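
The note about zero vectors can be seen in a small sketch. This assumes the pictured process is the standard one (subtract from each w its projections onto the earlier v's); the function name `gram_schmidt` and the sample vectors are made up for illustration:

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def gram_schmidt(ws, tol=1e-12):
    """Return an orthogonal (not normalized) basis for span(ws).

    Any w that is a linear combination of the earlier ones produces
    v = 0 and is dropped from the result.
    """
    vs = []
    for w in ws:
        v = list(w)
        for u in vs:
            c = dot(w, u) / dot(u, u)               # projection coefficient
            v = [vi - c * ui for vi, ui in zip(v, u)]
        if dot(v, v) > tol:                         # squared length; skip zero vectors
            vs.append(v)
    return vs


# The third vector is the sum of the first two, so it gets omitted.
ws = [[1.0, 1.0, 0.0], [1.0, 0.0, 1.0], [2.0, 1.0, 1.0]]
basis = gram_schmidt(ws)
print(basis)
```

Three vectors go in but only two come out, which matches the rule: the dependent w contributes nothing new to the span.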

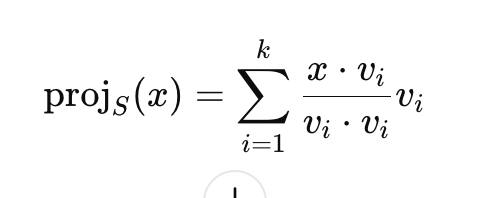

projection of x onto a subspace with an orthogonal basis

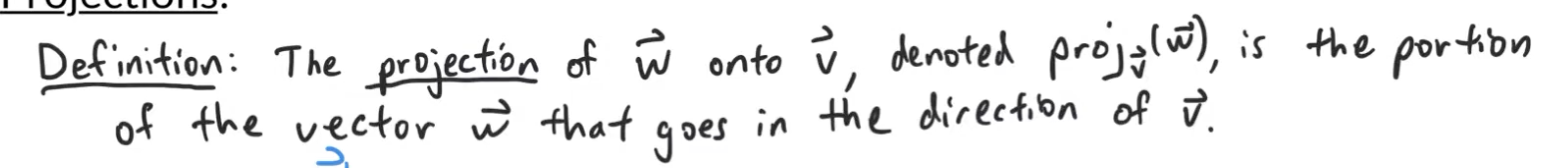

def of a projection

(or parallel to V, if it's easier to think of it that way)

Perpendicularity only applies to vectors, not points. Here’s why…

P lies on the line; the vector OP = (1,4) is:

* just a vector from the origin to that point

* not aligned with the line’s direction

By trying to find the normal by dotting it with point P, I’m treating P like a direction vector and not a point, which is incorrect: OP does not point in the same direction as the line, which is why this doesn’t work

elementary matrices notes

when writing row operations as a product of elementary matrices we reverse the order (the first row operation is the last, rightmost matrix) because you're basically doing function composition

E3(E2(E1(A)))

and since matrix multiplication isn't commutative, the order matters: E1 gets applied to A first even though it's on the right!

the product of these elementary matrices also gives you A^-1: together the row reductions take A to the identity matrix, so (E3E2E1)A = I and E3E2E1 = A^-1
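
A small worked example of the ordering, using a made-up 2×2 matrix (not from the original cards). The row operations, in order, are R2 → R2 - 3R1, then R2 → -½R2, then R1 → R1 - 2R2:

```latex
A = \begin{pmatrix} 1 & 2 \\ 3 & 4 \end{pmatrix}, \quad
E_1 = \begin{pmatrix} 1 & 0 \\ -3 & 1 \end{pmatrix}, \quad
E_2 = \begin{pmatrix} 1 & 0 \\ 0 & -\tfrac{1}{2} \end{pmatrix}, \quad
E_3 = \begin{pmatrix} 1 & -2 \\ 0 & 1 \end{pmatrix}
\\[6pt]
E_3 E_2 E_1 A = I, \qquad
E_3 E_2 E_1 = \begin{pmatrix} -2 & 1 \\ \tfrac{3}{2} & -\tfrac{1}{2} \end{pmatrix} = A^{-1}
```

The first operation (E1) sits furthest to the right, next to A, and the last one (E3) sits furthest to the left.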

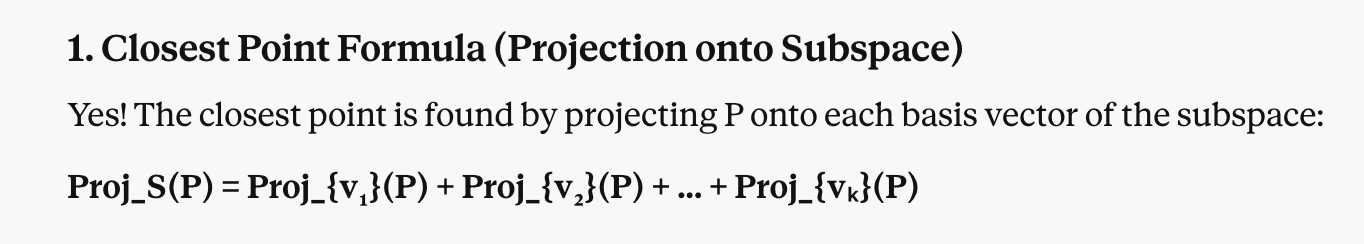

closest point in a subspace formula

solving for orthogonal vectors

don't forget you have 2 ways to do this

if you're finding a vector orthogonal to exactly 2 other vectors (in R^3), you can use the cross product

or if you have more vectors, you can solve the linear system where the first vector dot x equals 0, and the second, and the third, and so on, solving for x in terms of parameters
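
A minimal sketch of the cross-product route for two vectors in R^3, with a dot-product check at the end. The vectors `a` and `b` are made up for illustration:

```python
def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def cross(a, b):
    """Cross product of two 3-component vectors."""
    return [a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0]]


a = [1, 2, 3]
b = [4, 5, 6]
n = cross(a, b)

print(n)                      # [-3, 6, -3]
print(dot(n, a), dot(n, b))   # 0 0, so n is orthogonal to both
```

The second route is this same check run in reverse: set a · x = 0 and b · x = 0 and solve for x, which gives every multiple of n.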

Col(A) general eq + facts

Col(A) → all the vectors b for which Ax=b has a solution (A is m×n)

since it's about outputs, it is a subspace of R^m - each vector in the col space has m components

Null(A) general eq + facts

general eq → all the vectors x that solve Ax=0

since it's about inputs, it's a subspace of the domain R^n - each vector in the null space has n components
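
To see the two spaces living in different places, here is a worked example with a made-up 2×3 matrix (so m = 2 and n = 3):

```latex
A = \begin{pmatrix} 1 & 2 & 3 \\ 2 & 4 & 6 \end{pmatrix}
\\[6pt]
\operatorname{Col}(A) = \operatorname{span}\left\{ \begin{pmatrix} 1 \\ 2 \end{pmatrix} \right\} \subseteq \mathbb{R}^2
\\[6pt]
Ax = 0 \;\Rightarrow\; x_1 + 2x_2 + 3x_3 = 0 \;\Rightarrow\;
\operatorname{Null}(A) = \left\{ s \begin{pmatrix} -2 \\ 1 \\ 0 \end{pmatrix} + t \begin{pmatrix} -3 \\ 0 \\ 1 \end{pmatrix} \right\} \subseteq \mathbb{R}^3
```

The column-space vectors have 2 components (outputs) while the null-space vectors have 3 components (inputs).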