EECS 563 Midterm 2


What do transport services and protocols provide?

Provides logical communication between application processes running on different hosts.

What are two transport protocols available to internet applications?

TCP and UDP

What are the two transport protocol actions in end systems?

Sender and receiver

Transport layer sender

Passed an application layer message

Breaks app messages into segments

determines segment header fields values

creates segment

passes to network layer

Transport layer receiver

Receives segment from IP

Checks header values and extracts the application-layer message

Reassembles segments into messages

passes to application layer

TCP

Transmission Control Protocol

Reliable, in-order delivery

Congestion control

Flow control

connection setup

UDP

User Datagram Protocol

Unreliable, unordered delivery

No-frills extension of “best-effort” IP

Services not guaranteed by TCP and UDP

No delay guarantees

Cannot promise how fast your data will arrive

No bandwidth guarantees

Do not guarantee a fixed data rate

Transport vs Network layer

Transport layer: logical communication between processes

Network layer: logical communication between hosts

What possible problems come from the network layer?

It may:

Drop messages (datagrams)

re-order messages

deliver duplicate copies of a given message

limit messages to some finite size

deliver messages after long delays

Multiplexing at sender

Handle data from multiple sockets, add transport header (later used for demultiplexing)

Demultiplexing at receiver

Use header info to deliver received segments to correct socket

How does the server know which data belongs to which client when multiple clients send data?

By using multiplexing and demultiplexing

Connectionless (UDP) demultiplexing

When creating datagram to send into UDP socket, we must specify the destination IP address and destination port #

UDP datagrams with same dest. port #, but different source IP addresses and/or source port numbers will be directed to same socket at receiving host

Connection-oriented (TCP) demultiplexing

TCP socket is identified by 4-tuple

Demux: receiver uses all four values (4-tuple) to direct segment to appropriate socket

Server may support many simultaneous TCP sockets

TCP socket 4-tuple

Source IP address

Source port number

Dest IP address

Dest port number
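The difference between the two demultiplexing rules can be sketched with lookup tables. This is only an illustration: the table layout, the socket names, and the addresses are made up, and the UDP table is keyed on destination IP and port as an assumption about how a host might index its sockets.

```python
def demux_udp(table, src_ip, src_port, dst_ip, dst_port):
    """UDP: only the destination (IP, port) selects the socket."""
    return table.get((dst_ip, dst_port))

def demux_tcp(table, src_ip, src_port, dst_ip, dst_port):
    """TCP: all four values of the 4-tuple select the socket."""
    return table.get((src_ip, src_port, dst_ip, dst_port))

# Hypothetical sockets on a server at 10.0.0.1
udp_table = {("10.0.0.1", 53): "dns_socket"}
tcp_table = {
    ("10.0.0.2", 5000, "10.0.0.1", 80): "conn_A",
    ("10.0.0.3", 5000, "10.0.0.1", 80): "conn_B",
}

# Two different senders reach the same UDP socket...
print(demux_udp(udp_table, "10.0.0.2", 4000, "10.0.0.1", 53))
print(demux_udp(udp_table, "10.0.0.3", 4001, "10.0.0.1", 53))
# ...but two different TCP clients get separate sockets on the same port.
print(demux_tcp(tcp_table, "10.0.0.2", 5000, "10.0.0.1", 80))
print(demux_tcp(tcp_table, "10.0.0.3", 5000, "10.0.0.1", 80))
```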

Why is there a UDP?

No connection establishment (which can add RTT delay)

Simple (no connection state at sender, receiver)

Small header size (8 bytes vs 20 bytes for TCP)

No congestion control

UDP can blast away as fast as desired

Can function in the face of congestion

Use cases that primarily use UDP

DNS

SNMP (Simple Network Management Protocol)

Goal of UDP Checksum

Detect errors (flipped bits) in transmitted segment

UDP checksum: Sender

Treat contents of UDP segment as sequence of 16 bit integers

Add all the 16-bit integers using normal binary addition, wrapping any carry around

Take one’s complement

Checksum value put into UDP checksum field

What is a wraparound in checksum?

If addition produces a carry out of the leftmost bit (17th bit), add that carry back to the result

UDP checksum: receiver

Compute checksum of received segment

Check if computed checksum equals field value

not equal - error detected

equal - no error detected
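The sender and receiver steps can be sketched as below. This is a simplified illustration, not the full UDP algorithm: it assumes the segment is already split into 16-bit words and ignores the pseudo-header that real UDP also covers. The function names are made up for the example.

```python
def ones_complement_sum16(words):
    """Add 16-bit words, folding any carry out of the top bit back into the sum."""
    total = 0
    for w in words:
        total += w
        total = (total & 0xFFFF) + (total >> 16)  # wraparound carry
    return total

def udp_checksum(words):
    """Sender side: one's complement of the wrapped 16-bit sum."""
    return ~ones_complement_sum16(words) & 0xFFFF

def verify(words, checksum_field):
    """Receiver side: recompute the checksum and compare with the field value."""
    return udp_checksum(words) == checksum_field

segment = [0x6660, 0x5555, 0x8F0C]
checksum = udp_checksum(segment)
print(hex(checksum))                                 # 0xb53d
print(verify(segment, checksum))                     # True: no error detected
print(verify([0x6660, 0x5555, 0x8F0D], checksum))    # False: flipped bit detected
```

Adding the third word produces a carry out of the 16th bit, so the example also exercises the wraparound step.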

Goals of reliable data transfer (RDT)

Data integrity - no packet loss, no packet duplicated, in-order delivery

Flow control - avoid overflow on receiver’s buffer

What determines the complexity of a reliable data transfer protocol?

The characteristics of the unreliable channel.

More issues → more mechanisms needed → higher complexity

Why must sender and receiver communicate their state in reliable data transfer?

Because they cannot know each other’s state directly

they only learn via messages

rdt_send()

the function call the application layer uses to give data to the reliable data transfer (rdt) sender.

deliver_data()

when the transport layer receiver passes the correctly received data up to the application layer.

udt_send()

when the transport layer sends a packet into the unreliable channel (network).

rdt_rcv()

the event that occurs when a packet arrives from the unreliable network channel to the transport layer.

rdt1.0

Reliable transfer over a reliable channel

Underlying channel perfectly reliable

no bit errors, no loss of packets

Separate FSM for sender and receiver

rdt 2.0

Channel with bit errors

Underlying channel may flip bits in packet → packet error

How to recover from errors in rdt?

Acknowledgements (ACKs): receiver explicitly tells sender that pkt received OK

Negative acknowledgements (NAKs): receiver explicitly tells sender that pkt had errors

Sender retransmits pkt on receipt of NAK

Sender sends one packet, then waits for the receiver’s response (stop and wait)

What happens if ACK/NAK is corrupted?

Sender doesn’t know what happened at receiver

Can’t just retransmit: possible duplicate

What is rdt2.0’s fatal flaw?

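It cannot handle a corrupted ACK/NAK: the sender can’t tell what happened at the receiver, and blindly retransmitting may deliver a duplicate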

How does rdt2.1 manage duplicates?

Sender retransmits current pkt if ACK/NAK corrupted

Sender adds sequence number to each pkt

Receiver discards if sequence number is not what it is currently expecting

Difference between rdt2.0 and rdt2.1?

rdt2.0: corrupted ACK/NAK causes confusion

rdt2.1: uses sequence numbers so retransmissions do not cause duplicate delivery

rdt2.2

Same functionality as rdt2.1, using ACKs only, NO NAKs

Instead of NAK, receiver sends ACK for last pkt received OK

Receiver must explicitly include seq # of pkt being ACKed

Duplicate ACK at sender results in same action as NAK:

retransmit current pkt

What new problem does rdt3.0 solve?

Channels with errors and loss

Adds new channel assumption: underlying channel can also lose packets (data, ACKs)

what if a packet (or its ACK) is lost completely, not just corrupted?

rdt3.0 approach

Sender waits “reasonable” amount of time for ACK

retransmits if no ACK received in this time

if pkt (or ACK) just delayed (not lost)

retransmission will be duplicate, but seq # already handles this

receiver must specify seq # of packet being ACKed
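A receiver that tolerates duplicates by checking a 1-bit sequence number can be sketched as follows. The class and method names are hypothetical, and corruption is modelled with a simple flag rather than a real checksum.

```python
class AltBitReceiver:
    """Sketch of an ACK-only receiver using a 1-bit sequence number."""

    def __init__(self):
        self.expected = 0      # sequence number we are waiting for
        self.delivered = []    # data passed up to the application

    def on_packet(self, seq, data, corrupt=False):
        """Return the sequence number carried in the ACK we send back."""
        if not corrupt and seq == self.expected:
            self.delivered.append(data)   # deliver_data()
            self.expected ^= 1            # flip between 0 and 1
            return seq
        # Corrupt or duplicate packet: re-ACK the last packet received OK.
        return self.expected ^ 1

r = AltBitReceiver()
print(r.on_packet(0, "a"))   # 0: new packet, delivered
print(r.on_packet(0, "a"))   # 0: retransmitted duplicate, discarded and re-ACKed
print(r.on_packet(1, "b"))   # 1: next packet, delivered
print(r.delivered)           # ['a', 'b']
```

If the sender's timer fires too early, the retransmission arrives as a duplicate, and the sequence number check keeps it from being delivered twice.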

All the rdt protocols are forms of …?

Automatic Repeat Request (ARQ)

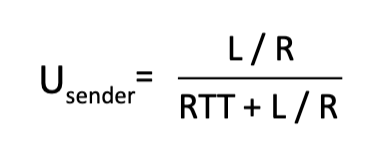

Usender: utilization

Fraction of time sender busy sending

Time to transmit packet into channel?

Length of packet/Rate

How can we increase channel utilization ?

Pipelining

Pipelining

Sender allows multiple, “in-flight”, yet-to-be-acknowledged packets

Range of sequence numbers must be increased

buffering at sender and/or receiver

Usender Formula
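For stop-and-wait, the standard expression is U_sender = (L/R) / (RTT + L/R), where L is the packet length in bits and R is the link rate. With N packets in flight the numerator becomes N·(L/R). The sketch below evaluates it; the function name and the example numbers (8000-bit packet, 1 Gbps link, 30 ms RTT) are chosen only for illustration.

```python
def sender_utilization(packet_bits, rate_bps, rtt_s, window=1):
    """Fraction of time the sender is busy sending, capped at 1."""
    transmit_time = packet_bits / rate_bps          # L / R
    return min(1.0, window * transmit_time / (rtt_s + transmit_time))

stop_and_wait = sender_utilization(8000, 1e9, 0.030)
pipelined = sender_utilization(8000, 1e9, 0.030, window=3)
print(f"{stop_and_wait:.5f}")   # 0.00027
print(f"{pipelined:.5f}")       # 0.00080
```

Three packets in flight triple the utilization, which is the motivation for pipelining.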

Go-back-N

Sender can have up to N unacked packets in pipeline

Receiver only sends cumulative ack

Sender has timer for oldest unacked packet

When timer expires, retransmit all unacked packets

Selective repeat

Sender can have up to N unacked packets in pipeline

Receiver sends individual ack for each packet

sender maintains timer for each unacked packet

When timer expires, retransmit only that unacked packet

What relationship is needed between sequence # size and window size to avoid the receiver mistaking a packet as a NEW packet vs a RETRANSMISSION of an old packet?

The sequence number space must be at least twice the window size

Suppose a receiver that has received all packets up to and including

sequence number 24 and next receives packet 27 and 28. In

response, what are the sequence numbers in the ACK(s) sent out by

the GBN and SR receiver respectively?

A. [27,28], [28]

B. [24,24], [27,28]

C. [27,28], [27,28]

D. [25], [25]

E. [nothing], [27, 28]

B
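The reasoning behind answer B can be checked with a small sketch. It assumes the GBN receiver discards out-of-order packets and re-ACKs the last in-order one, and that every arriving packet falls inside the SR receiver's window; the function names are made up.

```python
def gbn_acks(last_in_order, arrivals):
    """GBN receiver: cumulative ACK for the highest in-order packet so far."""
    acks = []
    for seq in arrivals:
        if seq == last_in_order + 1:
            last_in_order = seq      # in order: accept and advance
        acks.append(last_in_order)   # otherwise discard and re-ACK
    return acks

def sr_acks(arrivals):
    """SR receiver: individually ACK (and buffer) each packet received."""
    return list(arrivals)

print(gbn_acks(24, [27, 28]))   # [24, 24]
print(sr_acks([27, 28]))        # [27, 28]
```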

What do TCP sequence numbers represent?

They represent byte positions, not packets

What does the sequence number in a TCP segment indicate?

The number of the first byte in that segment

If a segment has a sequence number 1000, what does that mean?

The first byte in that segment is byte 1000

What does the TCP ACK number represent?

The next byte the receiver is expecting

What does ACK = X mean in TCP?

I have received all bytes up to X-1 and I expect byte X next

What type of ACK system does TCP use?

Cumulative ACKs

What does TCP do if it receives a byte out of order?

It stores it instead of throwing it away.

Maximum segment size (MSS)

The maximum number of bytes of application data in a single TCP segment (not including headers)

How to set TCP timeout value?

Longer than RTT, but RTT varies

What if the TCP timeout value is too short?

Premature timeout, unnecessary retransmissions

What if the TCP timeout value is too long?

Slow reaction to segment loss

How to estimate RTT?

Average across several recent SampleRTT measurements, not just current SampleRTT

SampleRTT

Measured time from segment transmission until ACK receipt

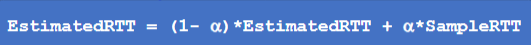

EstimatedRTT formula

influence of past sample decreases exponentially fast

typical value: alpha = 0.125

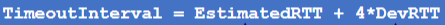

TimeoutInterval Formula

EstimatedRTT plus “safety margin”

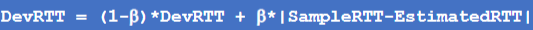

DevRTT formula

EWMA of SampleRTT deviation from EstimatedRTT

typically beta = 0.25
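The three formulas are usually written as EstimatedRTT = (1 − α)·EstimatedRTT + α·SampleRTT, DevRTT = (1 − β)·DevRTT + β·|SampleRTT − EstimatedRTT|, and TimeoutInterval = EstimatedRTT + 4·DevRTT. A minimal sketch of one update step follows. The function name is made up, and updating DevRTT before EstimatedRTT (so the deviation uses the old estimate) is an assumption that follows the order in RFC 6298.

```python
ALPHA = 0.125   # weight of the newest sample in EstimatedRTT
BETA = 0.25     # weight of the newest deviation in DevRTT

def update_rtt(estimated_rtt, dev_rtt, sample_rtt, alpha=ALPHA, beta=BETA):
    """Apply one SampleRTT and return (EstimatedRTT, DevRTT, TimeoutInterval)."""
    # Deviation first, measured against the estimate before this sample.
    dev_rtt = (1 - beta) * dev_rtt + beta * abs(sample_rtt - estimated_rtt)
    estimated_rtt = (1 - alpha) * estimated_rtt + alpha * sample_rtt
    timeout = estimated_rtt + 4 * dev_rtt   # estimate plus safety margin
    return estimated_rtt, dev_rtt, timeout

# Times in milliseconds: previous estimate 100, deviation 5, new sample 120.
print(update_rtt(100.0, 5.0, 120.0))   # (102.5, 8.75, 137.5)
```

A single slow sample moves the estimate only slightly but widens the safety margin noticeably.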

What does EWMA stand for?

Exponentially Weighted Moving Average

What does the TCP sender do when it receives data from the application?

Creates a segment with seq #

Seq # is byte-stream number of first data byte in segment

Start timer if not already running

What does the TCP sender do given there is a timeout?

Retransmit segment that caused timeout

Restart timer

What does the TCP sender do given ACK was received?

If ACK acknowledges previously unACKed segments

update what is known to be ACKed

start timer if there are unACKed segments

TCP event at receiver —> TCP receiver action?

arrival of in-order segment with expected seq #. All data up to expected seq # already ACKed

delayed ACK. Wait up to 500ms for next segment. If no next segment, send ACK

TCP event at receiver —> TCP receiver action?

arrival of in-order segment with expected seq #. One other segment has ACK pending

immediately send single cumulative ACK, ACKing both in-order segments

TCP event at receiver —> TCP receiver action?

arrival of out-of-order segment with higher-than-expected seq. #. Gap detected

immediately send duplicate ACK, indicating seq. # of next expected byte

TCP event at receiver —> TCP receiver action?

arrival of segment that partially or completely fills gap

immediately send ACK, provided that segment starts at lower end of gap

Delayed ACK

Receiver waits a short time before sending an ACK

TCP fast retransmit

If sender receives 3 additional ACKs for same data (“triple duplicate ACKs”), resend unACKed segment with smallest seq #

likely that unACKed segment lost, so don’t wait for timeout
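The duplicate-ACK counting can be sketched as below. The class name is hypothetical, and the sketch tracks only the counter, not timers or the send buffer.

```python
class FastRetransmitSender:
    """Counts duplicate ACKs and signals a retransmission on the third one."""

    def __init__(self):
        self.last_ack = None
        self.dup_count = 0

    def on_ack(self, ack_no):
        """Return the seq # to retransmit now, or None."""
        if ack_no == self.last_ack:
            self.dup_count += 1
            if self.dup_count == 3:
                # ACK number = next expected byte = smallest unACKed seq #
                return ack_no
        else:
            self.last_ack = ack_no   # new ACK: reset the counter
            self.dup_count = 0
        return None

s = FastRetransmitSender()
print([s.on_ack(a) for a in [100, 100, 100, 100]])   # [None, None, None, 100]
```

The first ACK for byte 100 is the original; the retransmission fires on the third additional copy.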

Flow control

Receiver controls sender, so sender won’t overflow receiver’s buffer by transmitting too much, too fast

How does TCP flow control prevent receiver overflow?

The receiver advertises available buffer space using the rwnd (receive window) field, and the sender limits how much unACKed data it sends to this value.

What is rwnd and how is it determined?

rwnd = available space in the receiver’s buffer (RcvBuffer), which is set via socket options and often automatically adjusted by the OS.
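The textbook bookkeeping is rwnd = RcvBuffer − (LastByteRcvd − LastByteRead), and the sender keeps LastByteSent − LastByteAcked ≤ rwnd. A small sketch with made-up numbers; the function names are hypothetical.

```python
def receive_window(rcv_buffer, last_byte_rcvd, last_byte_read):
    """Free space left in the receiver's buffer, advertised as rwnd."""
    return rcv_buffer - (last_byte_rcvd - last_byte_read)

def can_send(last_byte_sent, last_byte_acked, nbytes, rwnd):
    """Sender check: unACKed data plus the new bytes must fit in rwnd."""
    return (last_byte_sent - last_byte_acked) + nbytes <= rwnd

rwnd = receive_window(rcv_buffer=4096, last_byte_rcvd=3000, last_byte_read=1000)
print(rwnd)                                # 2096
print(can_send(5000, 4000, 1000, rwnd))    # True: 2000 bytes in flight fits
print(can_send(5000, 4000, 1100, rwnd))    # False: 2100 bytes would overflow
```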

Before exchanging data in TCP, what do the sender and receiver do?

handshake

agree to establish connection (each knowing the other willing to establish connection)

agree on connection parameters (e.g., starting seq #s)

What kind of handshake does TCP use to establish this connection?

3-way handshake
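The three segments and their sequence/ACK numbers can be sketched as follows. The segments are plain dictionaries for illustration only, and the initial sequence numbers are arbitrary example values.

```python
def three_way_handshake(client_isn, server_isn):
    """Return the three handshake segments as simple dictionaries."""
    syn = {"SYN": 1, "ACK": 0, "seq": client_isn}
    syn_ack = {"SYN": 1, "ACK": 1, "seq": server_isn, "ack": client_isn + 1}
    ack = {"SYN": 0, "ACK": 1, "seq": client_isn + 1, "ack": server_isn + 1}
    return [syn, syn_ack, ack]

for segment in three_way_handshake(client_isn=1000, server_isn=5000):
    print(segment)
```

Each side acknowledges the other's initial sequence number plus one, which is how both learn that the other is willing to connect and agree on starting sequence numbers.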

SYN bit

Synchronize

Start a connection and sync sequence numbers

Used in the handshake

ACK bit

ACK field is valid

Used in almost every TCP segment after connection starts

ACK = 1 → I’m acknowledging data

FIN bit

Finish bit

Client, server each close their side of connection

Send TCP segment with FIN bit = 1

Respond to received FIN with ACK

on receiving FIN, ACK can be combined with own FIN

Congestion

Informally: “too many sources sending too much data too fast for network to handle”

What are the effects of congestion?

Long delays (queuing in router buffers)

Packet loss (buffer overflow at routers)

Congestion control vs Flow control

Congestion control: too many senders, sending too fast (don’t overwhelm the network)

Flow control: One sender too fast for one receiver (don’t overwhelm the receiver)

What causes congestion in this scenario?

One router, infinite buffers

input, output link capacity: R

two flows

No retransmissions needed

Delay builds up with infinite buffer as queue grows larger and larger

What causes congestion in this scenario?

One router, finite buffers

Finite buffers cause packet loss and retransmissions

Congestion idealization: perfect knowledge

Sender sends only when router buffers available

Congestion idealization: some knowledge

Packets can be lost (dropped at router) due to full buffers

Sender knows (infers) when packet has been dropped: only resends packet known to be lost

Congestion idealization: some perfect knowledge

Packets can be dropped, but the sender knows exactly when a packet is lost and retransmits only lost packets, avoiding unnecessary duplicates.

Congestion realistic scenario: un-needed duplicates

Packets can be lost, dropped at router due to full buffers — requiring retransmissions

but sender can time out prematurely, sending two copies, both of which are delivered

Wasted capacity due to un-needed retransmissions

When sending at R/2, some packets are retransmissions, including needed and un-needed duplicates, that are delivered

Costs of congestion

More work (retransmission) for given receiver throughput

unneeded retransmissions: link carries multiple copies of a packet

decreasing maximum achievable throughput

when packets are dropped, any upstream transmission capacity and buffering used for that packet was wasted!

What causes congestion in this scenario?

four senders

multi-hop paths

timeout/retransmit

Multiple senders over multi-hop paths combined with timeout-based retransmissions, which increase traffic and overload shared router buffers.

End-end congestion control

No explicit feedback from network

congestion inferred from observed loss, delay

Approach taken by TCP

Network-assisted congestion control

Routers provide direct feedback to sending/receiving hosts with flows passing through congested router

may indicate congestion level or explicitly set sending rate

TCP congestion control: AIMD

Senders can increase sending rate until packet loss (congestion) occurs, then decrease sending rate on loss event

Additive increase multiplicative decrease

Additive Increase

Increase sending rate by 1 maximum segment size every RTT until loss detected

Multiplicative Decrease

Cut sending rate in half at each loss event

TCP slow start

When connection begins, increase rate exponentially until first loss event

Initial rate is slow, but ramps up exponentially fast
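Slow start followed by AIMD can be sketched as a per-RTT simulation of the congestion window. This is a simplified model under stated assumptions: the window is counted in whole MSS units, slow start ends at the first loss, every loss halves the window, and the function name and loss rounds are made up for the example.

```python
def simulate_cwnd(rounds, loss_rounds, mss=1):
    """Return the congestion window (in MSS) at the start of each RTT."""
    cwnd = mss
    in_slow_start = True
    history = []
    for rtt in range(rounds):
        history.append(cwnd)
        if rtt in loss_rounds:
            cwnd = max(cwnd // 2, mss)   # multiplicative decrease
            in_slow_start = False
        elif in_slow_start:
            cwnd *= 2                    # slow start: double every RTT
        else:
            cwnd += mss                  # additive increase: +1 MSS per RTT
    return history

print(simulate_cwnd(10, loss_rounds={4, 8}))
# [1, 2, 4, 8, 16, 8, 9, 10, 11, 5]
```

The output shows the exponential ramp-up, followed by the sawtooth pattern typical of AIMD.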