13-16 lecture slides

51 Terms

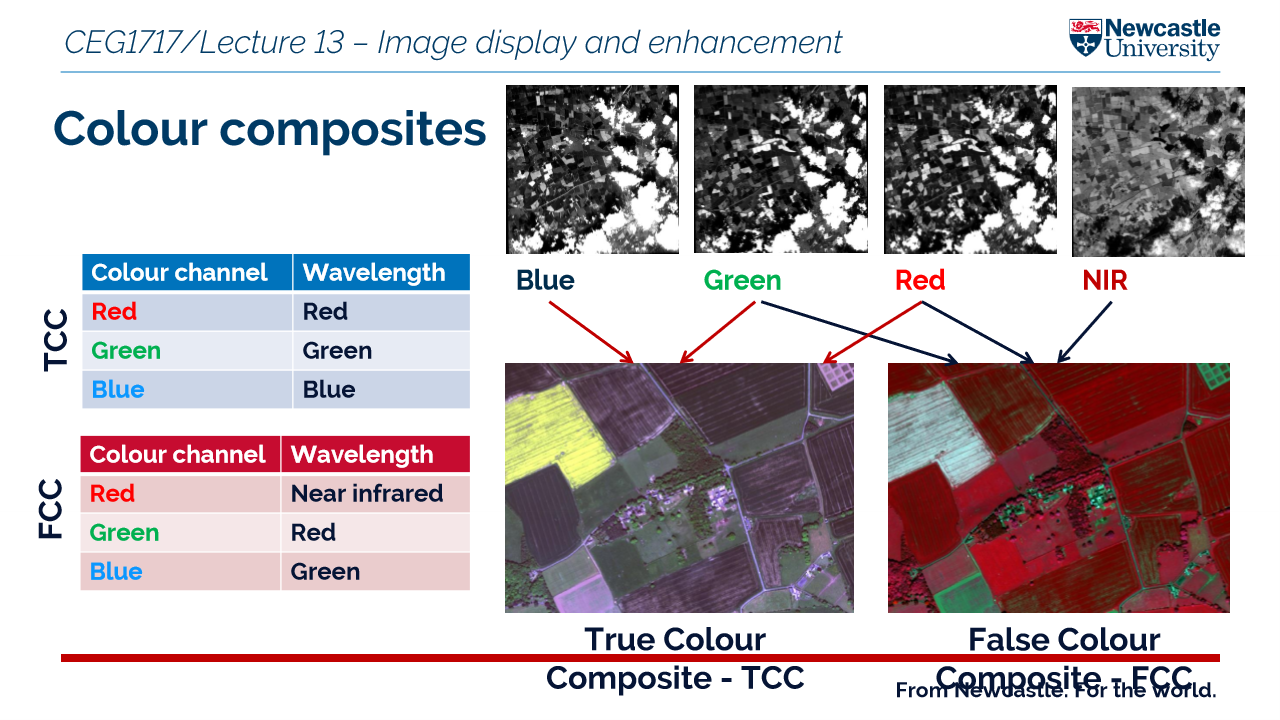

what is a false colour composite?

An image in which non-visible wavelengths such as infrared (IR) are displayed using visible colours, so the colours do not represent what the human eye sees

what is a spectral signature?

The unique reflectance or emittance pattern of an object across different wavelengths of the electromagnetic spectrum.

think of it as a fingerprint that helps satellites identify materials on earth

However, there are limitations, because the signature of the same material can vary with:

illumination conditions such as cloud cover

time of day, which can alter reflectance

season

why is spectral signature important?

Broadleaf and needleleaf trees look similar in the visible region but have different characteristics in the NIR band, so their spectral signatures can tell them apart. Spectral signatures are used to:

classify land cover

detect vegetation types

monitor water bodies

distinguish snow from clouds

assess soil moisture

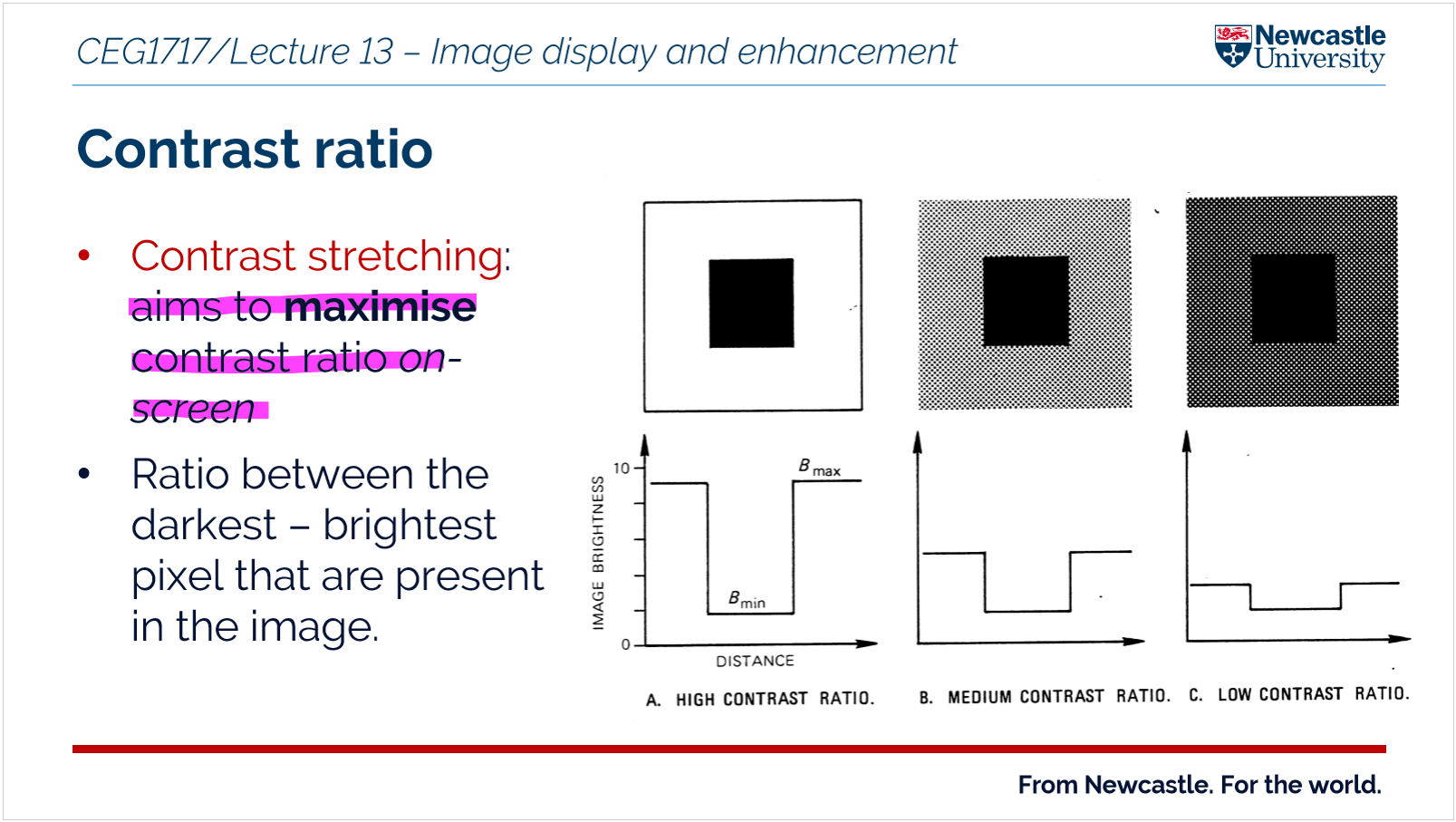

what are the reasons for applying contrast stretching to an image?

An image enhancement technique used in remote sensing to improve the visibility of features in a satellite image

Works by expanding the range of pixel brightness values so that differences between dark and bright areas become clearer

Make features clearer

contrast manipulations involve changing the range of values in an image in order to increase contrast

Main principles:

original pixel values are unchanged - does not change the original information

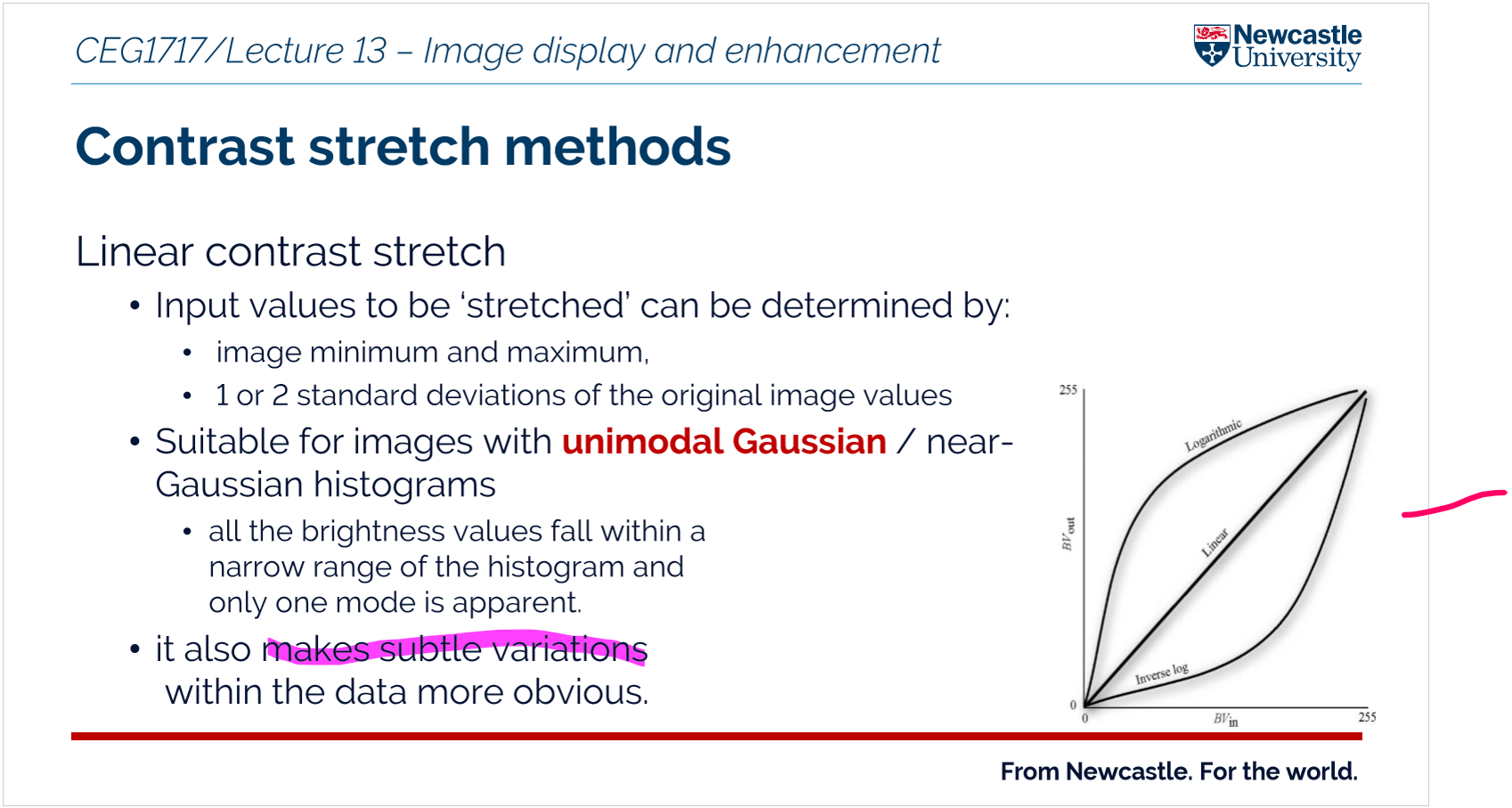

what are some of the common methods of contrast stretching?

Linear contrast stretch = expands the range of pixel brightness values linearly so that the image uses the full display range, which improves the visibility of features in the image

Non-linear contrast stretch = an image enhancement technique in which pixel values are redistributed using a non-linear transformation so that certain ranges of brightness are expanded more than others, improving the visibility of specific features in a digital image.
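
The linear stretch described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function name `linear_stretch`, the 0-255 display range, and the sample values are all assumptions made for the example.

```python
def linear_stretch(pixels, out_min=0, out_max=255):
    """Linearly rescale brightness values so they span out_min..out_max."""
    lo, hi = min(pixels), max(pixels)
    if hi == lo:
        # A flat image has no contrast to stretch; map everything to out_min.
        return [out_min for _ in pixels]
    span = out_max - out_min
    # Returns a new list, so the original pixel values are left unchanged.
    return [round(out_min + (p - lo) * span / (hi - lo)) for p in pixels]


# Hypothetical low-contrast band: values only span 50..101.
band = [50, 60, 80, 101]
stretched = linear_stretch(band)
print(stretched)  # [0, 50, 150, 255]
```

The darkest input value maps to 0 and the brightest to 255, with everything in between scaled proportionally.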

Linear contrast advantages and disadvantages

A

easy to apply in software

low computational cost

D

may not improve low contrast regions well - it cannot highlight subtle differences

Non linear advantages and disadvantages

A

enhances specific features and subtle details

D

more computationally complex

may alter the proportional relationship between pixel values, meaning the original radiance/reflectance relationships are not preserved.

selective enhancement - Certain intensity ranges are enhanced more than others, which can over-emphasise some features while suppressing others.

what is the purpose of visual enhancements such as density slicing?

to improve the quality and information content of original data before further processing

density slicing is used to improve the interpretability of images by grouping continuous pixel values into discrete classes, making specific features easier to identify and analyse.

what is image enhancement?

process of improving the visual appearance or interpretability of a digital image to make features easier to identify and analyse.

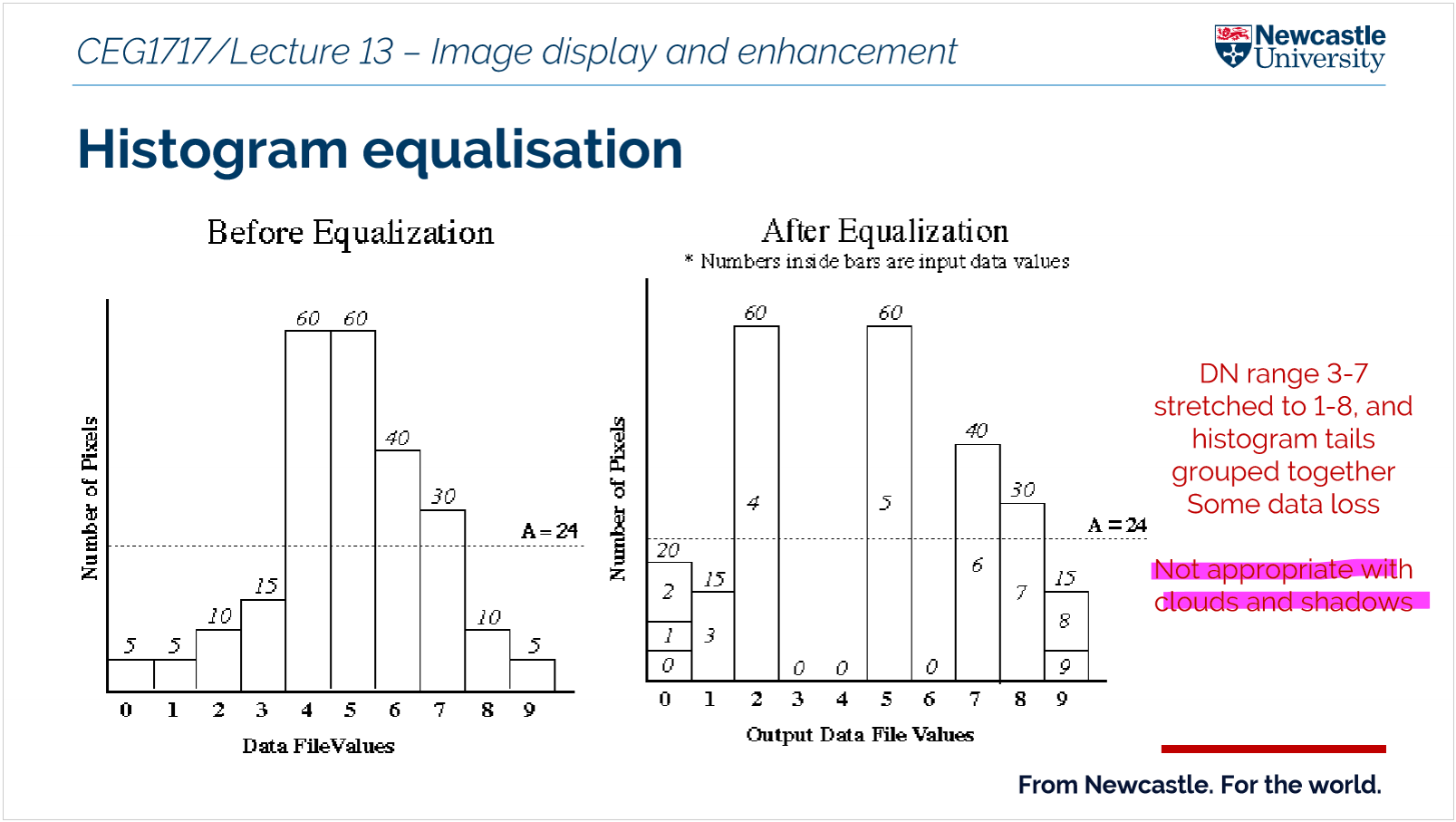

what is histogram equalisation?

an image enhancement technique that redistributes pixel intensity values so that they are spread more evenly across the available range, increasing contrast in an image.
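
As a rough sketch of how the redistribution works, the Python below uses the cumulative histogram to assign new values. The function name `equalise`, the 256 brightness levels, and the sample pixels are assumptions for illustration; real software may use a slightly different formula.

```python
def equalise(pixels, levels=256):
    """Histogram-equalise integer brightness values in the range 0..levels-1."""
    # Count how many pixels take each brightness value.
    hist = [0] * levels
    for p in pixels:
        hist[p] += 1

    # Cumulative histogram: number of pixels at or below each value.
    cdf = []
    running = 0
    for count in hist:
        running += count
        cdf.append(running)

    cdf_min = next(c for c in cdf if c > 0)
    total = len(pixels)
    if total == cdf_min:
        return list(pixels)  # single-valued image: nothing to redistribute

    return [round((cdf[p] - cdf_min) * (levels - 1) / (total - cdf_min))
            for p in pixels]


# Hypothetical band whose values are bunched between 52 and 55.
equalised = equalise([52, 52, 53, 54, 55])
print(equalised)  # [0, 0, 85, 170, 255]
```

Values that were crowded into a narrow range are spread across the full 0-255 range.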

what is density slicing?

All pixels within a slice are displayed with the same colour or shade

Way to turn continuous data into categories

The raster’s values (like elevation, temperature, or vegetation) are divided into ranges called “slices.”

Each slice is given a different color or shade.

This makes it easy to see patterns on the map.

The result is a crude classification map

an example:

0-0.2 = barren land

0.2-0.5 = sparse vegetation
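
The example slices can be turned into a small lookup, sketched below in Python. The two break values come from the example; the third label, the function name `density_slice`, and the choice to put a boundary value such as 0.2 into the higher slice are assumptions made for this sketch.

```python
from bisect import bisect_right

# Upper edges of the first two slices, taken from the example above.
BREAKS = [0.2, 0.5]
# The third label is a hypothetical catch-all for values of 0.5 and above.
LABELS = ["barren land", "sparse vegetation", "other (hypothetical)"]


def density_slice(value):
    """Return the label of the slice that a continuous value falls into."""
    return LABELS[bisect_right(BREAKS, value)]


for v in [0.05, 0.35, 0.8]:
    print(v, "->", density_slice(v))
```

Every pixel value inside a slice gets the same label, which is what turns the continuous raster into categories.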

Describe the stages of image pre processing?

DN (raw image)

Data Clean (defects removed)

at sensor radiance

TOA (at sensor reflectance)

BOA (at surface reflectance)

Geo (geometric correction / orthorectification)

Enhance (image processing)

Products (maps, indices, classification)

In summary:

1. data cleaning

2. radiometric correction - adjusts pixel brightness values to correct for sensor and illumination effects

3. atmospheric correction

4. geometric correction

5. image enhancement

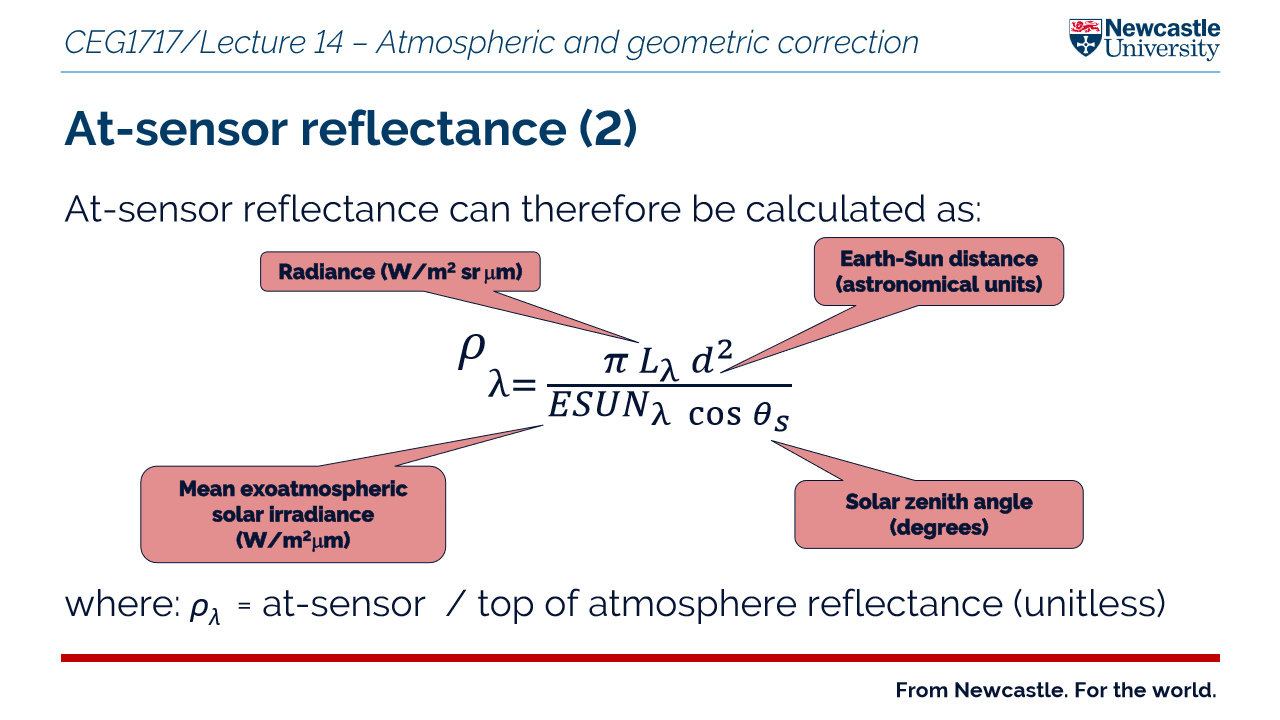

at sensor vs at sensor radiance vs at surface reflectance

At sensor = radiance or reflectance as measured at the sensor, i.e. the raw measurement including atmospheric effects

At sensor radiance = the intensity of electromagnetic radiation emitted or reflected from a surface, as measured by the sensor

At surface reflectance = the measurement we usually want - the reflectance of the ground surface with any atmospheric influence removed

what does offset mean from atmospheric effects?

Atmospheric scattering adds extra brightness (an offset) to the signal, which means that contrast is reduced - there is less difference between the lightest and darkest parts of an image

what is atmospheric correction?

Conversion of imagery to at-surface (BOA) reflectance

Atmospheric corrections correct for the effects of the atmosphere on the data collected by satellites or airborne sensors, which can include scattering, absorption, and emission of electromagnetic radiation. These corrections are necessary to remove or minimize the atmospheric effects, allowing for the estimation of the actual reflectance of the Earth's surface.

Why is atmospheric correction important?

Extracts true surface properties (e.g. NDVI)

Enables time-series comparison

Allows multi-sensor comparison

Key point - atmospheric correction can also sharpen images, improving the spatial definition of objects and edges

When is atmospheric correction NOT necessary?

Visual interpretation

Single image / single-date classification

What atmospheric effects must be corrected?

Scattering (additive, mainly visible wavelengths)

Absorption (subtractive, mainly NIR)

Key point - atmospheric effects either add energy to, or remove energy from, what the sensor measures

Why do we need atmospheric and geometric correction?

Atmospheric and geometric corrections are essential in remote sensing to ensure accurate data interpretation and analysis.

What is the process of dark object subtraction?

It is an image-based method that helps with atmospheric correction

Identify the darkest pixels in the image, which are usually found in areas that should reflect almost nothing, such as deep water or shadows. Any brightness recorded there is assumed to come from atmospheric scattering.

Subtract the darkest pixel value in each band from every pixel in that band to remove the atmospheric effect.

This method is particularly useful for correcting images that are hazy due to atmospheric scattering.

It is a simple and effective technique for removing atmospheric effects from remotely sensed data, making it a common choice for atmospheric correction in remote sensing applications.

It removes the estimated additive effects of scattering
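
The two steps above can be sketched for a single band as follows. The function name `dark_object_subtraction` and the DN values are made up for illustration, and the sketch assumes the darkest pixel really is a near-zero-reflectance target.

```python
def dark_object_subtraction(band):
    """Subtract the darkest value in a band from every pixel of that band."""
    dark_value = min(band)  # estimate of the additive scattering offset
    return [p - dark_value for p in band]


# Hypothetical DN values for one band; 12 stands in for a deep-water pixel.
blue_band = [12, 40, 57, 93]
corrected = dark_object_subtraction(blue_band)
print(corrected)  # [0, 28, 45, 81]
```

In a multi-band image the same step would be repeated per band, since scattering affects each wavelength differently.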

What is geometric distortion?

refers to any alteration or deformation of the shape, position, or proportions of objects in an image or a physical space compared to their true or intended geometry.

What are the key stages of geometric correction?

calculation of mathematical transformation

resampling

What is at sensor radiance and why does it need to be converted to at sensor reflectance?

At-sensor radiance is the measure of light received by a sensor from a target being observed.

It is influenced by factors such as the target's orientation, the path of light through the atmosphere, and the sensor's calibration. Converting radiance to at-sensor reflectance standardises the measurement for illumination differences, so that images from different times and locations can be compared.

Atmospheric correction then removes the effects of the atmosphere, providing a more accurate estimate of the true surface reflectance. Both steps are crucial for accurate remote sensing analysis and interpretation.

What is resampling?

It is the process of recalculating the pixel values of an image when it is geometrically transformed so that the corrected image aligns with the desired coordinate system and grid
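
One simple way to recalculate pixel values on a new grid is nearest-neighbour resampling, sketched below. The notes do not name a specific method, so the choice of nearest neighbour, the function name `resample_nearest`, and the tiny 2 x 2 image are assumptions for illustration only. The sketch only changes the grid size, whereas a real geometric correction would also apply the mathematical transformation.

```python
def resample_nearest(image, new_rows, new_cols):
    """Resample a 2-D grid to a new size using nearest-neighbour lookup."""
    old_rows, old_cols = len(image), len(image[0])
    out = []
    for r in range(new_rows):
        # Map the centre of each output cell back into the input grid.
        src_r = min(int((r + 0.5) * old_rows / new_rows), old_rows - 1)
        row = []
        for c in range(new_cols):
            src_c = min(int((c + 0.5) * old_cols / new_cols), old_cols - 1)
            row.append(image[src_r][src_c])
        out.append(row)
    return out


image = [[1, 2],
         [3, 4]]
resampled = resample_nearest(image, 4, 4)
for row in resampled:
    print(row)
```

Nearest neighbour copies existing values rather than averaging them, so no new brightness values are invented.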

What are the three types of geometric corrections?

image to ground data

image to map

image to image

What are the two types of geometric errors?

Internal geometric errors:

These originate within the imaging device or system. They are caused by imperfections in the equipment itself.

Examples:

Lens distortion

Manufacturing defects, like imperfections in the camera or scanner

Sensor misalignment

External geometric errors:

These originate from the position, orientation, or environment of the system, rather than the system itself.

They can be systematic (predictable) or non-systematic (random)

what is thematic mapping?

Type of map designed to show the distribution of a specific topic or variable across a geographic area

What is the difference between land cover and land use?

Land cover = what covers the surface of the earth - this is what remote sensing can see

Land use = how people use the land

what is image classification?

Labelling of pixels on the basis of their spectral similarity (how similar two objects are based on their reflectance values across different wavelength bands) - for example, water and vegetation have low spectral similarity

Important because images captured by sensors contain huge amounts of data - too much to analyse manually, so image classification is used to make sense of them

The aim is to make a meaningful digital thematic map from image data

What is the classification scheme?

A system for organizing and defining the information classes you want to map from an image

Helps link spectral classes to information classes

How can we classify an image to produce a thematic map?

An image is classified by grouping pixels based on spectral similarity using supervised or unsupervised methods. After pre-processing, pixels are assigned to classes such as vegetation or water, producing a thematic map.

Classify → Group pixels → Label classes → Map

what are the two types of class?

Information - categories the analyst is trying to identify in the imagery like crop type

Spectral - Is a group of pixels that have similar spectral properties

Objective is to match the spectral and information classes

What are traditional hard classifiers?

each pixel is assigned to a single class, no unclassified pixels

Supervised image classification vs unsupervised

Supervised = user manually identifies pixels of known cover types and a computer algorithm then allocates all other pixels to one of those classes

Unsupervised = also known as clustering. A computer algorithm groups pixels into spectral classes based on their spectral similarity, without any training data, and the user then labels the resulting clusters

A and D for both

Supervised

A

highly accurate and predictable

classes can be designed to fit into an existing land cover classification

D

data labeling requirement - time consuming

limited to labeled data - requires complete, good-quality labeled data

overlap in spectral signatures - misclassification

requires significant amounts of training data

Unsupervised

A

more flexible as classes are generated dynamically

simpler as it relies on clustering

signature overlap not a significant problem

can be used when the number and nature of classes is unknown

D

potential for misclassification

spectral classes may not relate to information classes of interest

complexity of interpretation - large number of pixels not easily categorised

what is unsupervised image classification and how does it work?

Unsupervised image classification is a machine learning approach that groups pixels or features in an image into clusters based on their inherent patterns, without requiring labeled training data. This method is particularly useful in scenarios where labeled datasets are unavailable or impractical to obtain.
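
To make the clustering idea concrete, here is a minimal k-means sketch. K-means is only one possible clustering algorithm and is not named in the notes, so treat the algorithm choice, the function name `kmeans`, the starting centres, and the (red, NIR) sample values as assumptions.

```python
def kmeans(pixels, centres, iterations=10):
    """Cluster pixels (tuples of band values) around the given starting centres."""
    centres = [tuple(c) for c in centres]
    labels = []
    for _ in range(iterations):
        # Assignment step: each pixel joins its nearest centre.
        labels = [
            min(range(len(centres)),
                key=lambda k: sum((a - b) ** 2 for a, b in zip(p, centres[k])))
            for p in pixels
        ]
        # Update step: move each centre to the mean of its members.
        new_centres = []
        for k, old in enumerate(centres):
            members = [p for p, lab in zip(pixels, labels) if lab == k]
            if members:
                new_centres.append(
                    tuple(sum(v) / len(members) for v in zip(*members)))
            else:
                new_centres.append(old)  # keep an empty cluster where it was
        if new_centres == centres:
            break
        centres = new_centres
    return labels, centres


# Hypothetical (red, NIR) reflectances: two water-like and two vegetation-like pixels.
pixels = [(0.05, 0.02), (0.06, 0.03), (0.04, 0.50), (0.05, 0.45)]
labels, centres = kmeans(pixels, centres=[pixels[0], pixels[2]])
print(labels)  # [0, 0, 1, 1]
```

The output is a set of spectral classes (cluster 0 and cluster 1); deciding that they mean "water" and "vegetation" is still the analyst's job.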

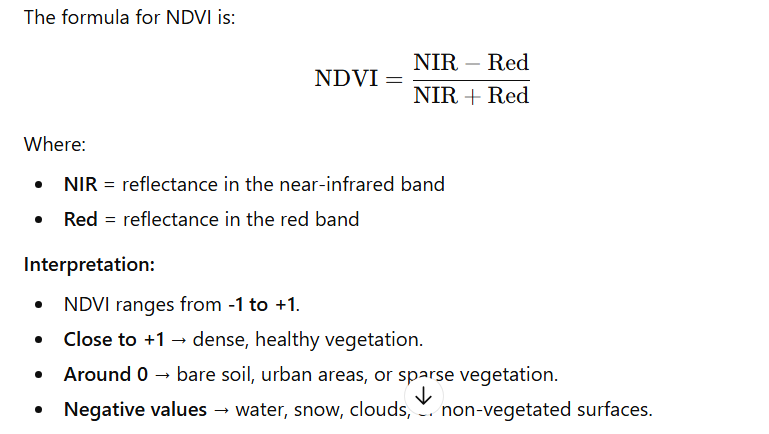

What are spectral indices and how to calculate NDVI?

simple formulas used in remote sensing to combine different wavelengths of light (captured by satellites or drones) to highlight specific features on Earth.

NDVI = (NIR - R) / (NIR + R)
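
The formula above translates directly into code. In this sketch the function name `ndvi`, the reflectance values, and the choice to return `None` when NIR + R is zero are assumptions made for illustration.

```python
def ndvi(nir, red):
    """Return (NIR - R) / (NIR + R), or None when the denominator is zero."""
    total = nir + red
    if total == 0:
        return None
    return (nir - red) / total


# Hypothetical reflectance values.
print(ndvi(0.50, 0.05))  # vegetation-like pixel: strongly positive
print(ndvi(0.25, 0.25))  # equal red and NIR reflectance: 0.0
```

Because the difference is divided by the sum, the result always falls between -1 and +1.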

What is the normalised difference vegetation index?

a widely used remote sensing index that measures vegetation health and density. It leverages the difference in how plants reflect red and near-infrared (NIR) light.

+1 = dense healthy vegetation

0 = bare soil, urban areas

What are applications of NDVI?

agricultural production

land cover change

forest monitoring

vegetation health monitoring

what should thematic maps be accompanied by?

accuracy assessment based on independent ground reference data collected using a valid sampling scheme

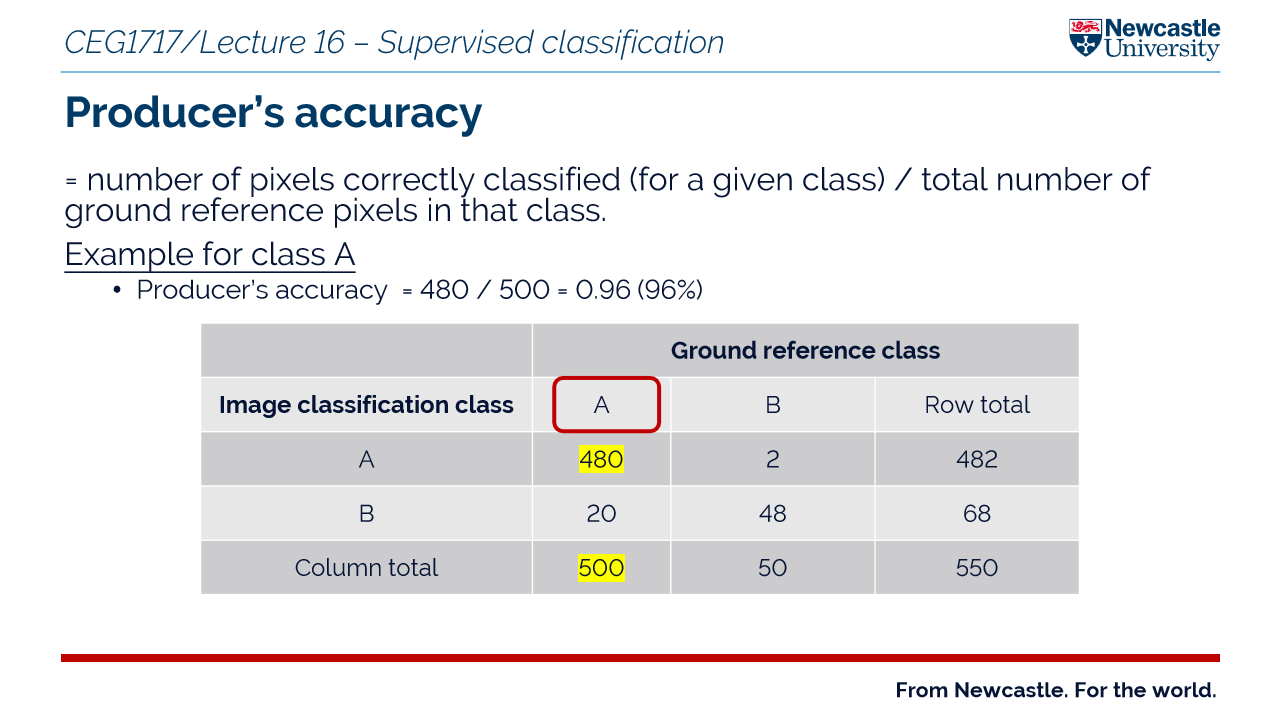

What are the uses of confusion matrices?

allow the calculation of the overall accuracy of thematic data products as well as indicating the accuracy of individual classes

compares classified pixels with reference (ground truth) pixels

for example, it shows when forest has been misclassified as grass
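
A confusion matrix is just a count of (reference class, classified class) pairs. The sketch below builds one and computes overall accuracy; the function names and the six sample pixels are hypothetical.

```python
from collections import Counter


def confusion_matrix(reference, classified):
    """Count how often each (reference class, classified class) pair occurs."""
    return Counter(zip(reference, classified))


def overall_accuracy(matrix):
    """Correctly classified samples divided by all samples."""
    correct = sum(n for (ref, cls), n in matrix.items() if ref == cls)
    return correct / sum(matrix.values())


# Hypothetical ground reference labels and map labels for six pixels.
reference  = ["forest", "forest", "forest", "grass", "grass", "water"]
classified = ["forest", "forest", "grass",  "grass", "grass", "water"]

matrix = confusion_matrix(reference, classified)
print(matrix[("forest", "grass")])   # 1 forest pixel was mapped as grass
print(overall_accuracy(matrix))      # 5 of 6 pixels are correct
```

The off-diagonal entries, such as ("forest", "grass"), are where the misclassifications show up.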

What is training data?

areas on an image where you already know what the land cover is

construction of training spectral signatures:

select training areas

extract spectral data

build signature database

classify the images - the algorithm compares unknown pixels to the trained signatures and assigns them to the closest matching class

What makes a good training site?

representative of entire spectral space of class

have minimal overlap with other signatures

What is minimum distance classifier?

classifies a pixel based on distance to class mean
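
A minimal sketch of the idea, assuming Euclidean distance in a two-band (red, NIR) space. The class means, the class names, and the function name `minimum_distance` are hypothetical values chosen for the example.

```python
import math

# Hypothetical class means in (red, NIR) reflectance space.
CLASS_MEANS = {
    "water": (0.05, 0.02),
    "vegetation": (0.05, 0.50),
    "bare soil": (0.25, 0.30),
}


def minimum_distance(pixel, class_means=CLASS_MEANS):
    """Assign the pixel to the class whose mean is closest (Euclidean distance)."""
    return min(class_means, key=lambda name: math.dist(pixel, class_means[name]))


print(minimum_distance((0.06, 0.45)))  # vegetation
print(minimum_distance((0.22, 0.28)))  # bare soil
```

Every pixel gets a class, however far it is from the nearest mean, which is why this is a hard classifier.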

What is parallelepiped classifier?

It classifies pixels in multispectral images by determining whether they fall within defined boundaries (parallelepipeds) around the mean values of each class in feature space.

It is the box rule
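
The box rule can be sketched as a set of per-band minimum and maximum limits. The limits, class names, and function name below are hypothetical. Returning "unclassified" for pixels outside every box, and letting the first matching box win when boxes overlap, are choices made for this sketch.

```python
# Hypothetical (min, max) limits per class for each band: (red, NIR).
BOXES = {
    "water": ((0.00, 0.10), (0.00, 0.10)),
    "vegetation": ((0.00, 0.10), (0.30, 0.60)),
}


def parallelepiped(pixel, boxes=BOXES):
    """Return the first class whose box contains the pixel in every band."""
    for name, limits in boxes.items():
        if all(lo <= value <= hi for value, (lo, hi) in zip(pixel, limits)):
            return name
    return "unclassified"


print(parallelepiped((0.05, 0.45)))  # vegetation
print(parallelepiped((0.05, 0.05)))  # water
print(parallelepiped((0.50, 0.50)))  # unclassified
```

In practice the box limits are usually derived from the training data, for example from the range or spread of each class around its mean.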

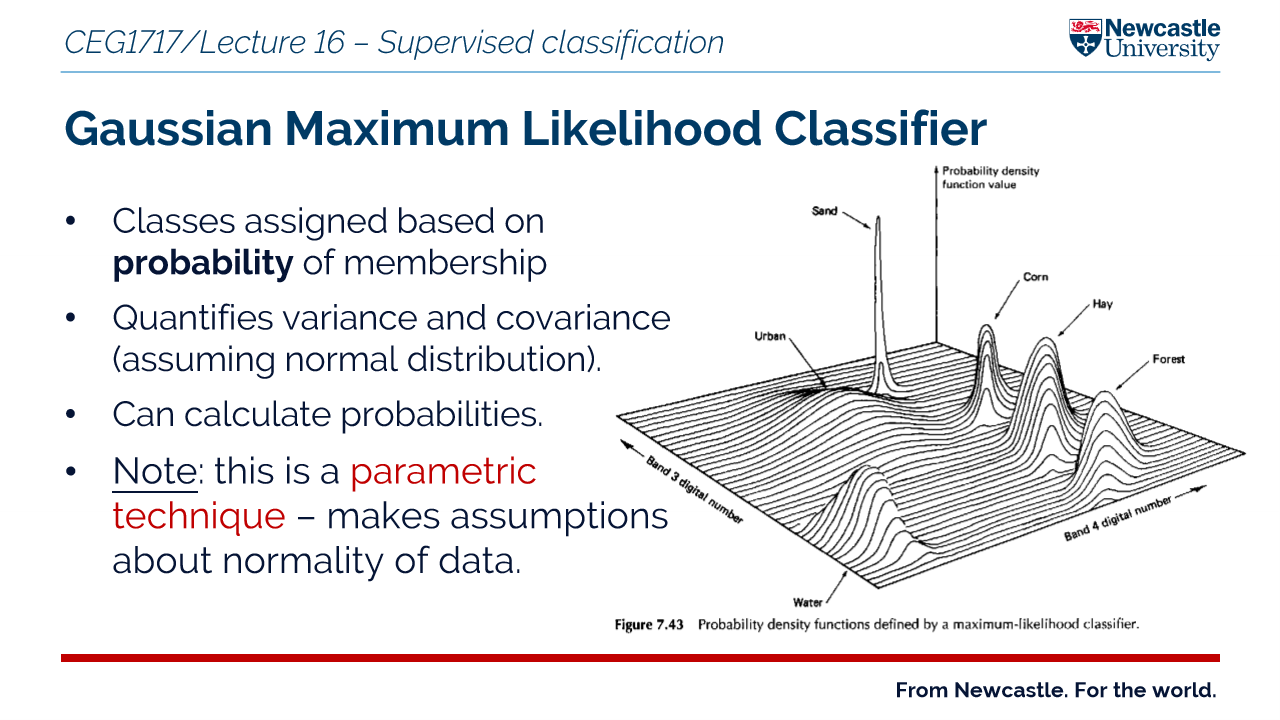

What is gaussian maximum likelihood classifier?

classes assigned based on probability of membership

It assigns a pixel to the class it is most likely to belong to (highest probability)

Height = likelihood of a pixel belonging to that class
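
The "height of the curve" idea can be sketched with one band and one Gaussian curve per class. This is a simplification: the class statistics and function names are hypothetical, all classes are assumed equally likely beforehand, and a full classifier would use several bands with their covariance.

```python
import math

# Hypothetical training statistics for a single band: (mean, standard deviation).
CLASS_STATS = {
    "water": (0.03, 0.01),
    "vegetation": (0.45, 0.05),
}


def gaussian_pdf(x, mean, std):
    """Height of the normal curve with the given mean and std at value x."""
    return math.exp(-0.5 * ((x - mean) / std) ** 2) / (std * math.sqrt(2 * math.pi))


def maximum_likelihood(value, class_stats=CLASS_STATS):
    """Assign the value to the class whose Gaussian curve is highest there."""
    return max(class_stats, key=lambda name: gaussian_pdf(value, *class_stats[name]))


print(maximum_likelihood(0.40))  # vegetation
print(maximum_likelihood(0.04))  # water
```

Unlike the minimum distance rule, this takes the spread of each class into account as well as its mean.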

What are the two types of error to look out for in classification?

errors of omission = a sample left out of the class it actually belongs to, like a forest pixel classified as urban

errors of commission = sample wrongly included in a class like a forest polygon is mapped in an area where there is actually no forest

Producer's accuracy vs user's (consumer's) accuracy

producer's accuracy = probability that a reference sample is correctly classified in the map = accounts for errors of omission

user's accuracy = probability that a pixel classified as a given class actually represents that class on the ground = accounts for errors of commission
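
Both measures can be read straight off a confusion matrix, as sketched below. The counts are hypothetical, and the layout `matrix[reference_class][mapped_class]` is an assumption of this example.

```python
# Hypothetical confusion matrix: matrix[reference_class][mapped_class] = pixel count.
matrix = {
    "forest": {"forest": 40, "urban": 10},
    "urban":  {"forest": 5,  "urban": 45},
}


def producers_accuracy(matrix, cls):
    """Correct pixels of cls divided by all reference pixels of cls."""
    return matrix[cls][cls] / sum(matrix[cls].values())


def users_accuracy(matrix, cls):
    """Correct pixels of cls divided by all pixels mapped as cls."""
    mapped_total = sum(row[cls] for row in matrix.values())
    return matrix[cls][cls] / mapped_total


print(producers_accuracy(matrix, "forest"))  # 40 / 50 = 0.8
print(users_accuracy(matrix, "forest"))      # 40 / 45
```

Here 10 reference forest pixels were omitted from the forest class, and 5 urban pixels were wrongly committed to it.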

What is the kappa statistic?

A measure used in remote sensing to evaluate classification accuracy - it goes further than overall accuracy

Helps assess how reliable your land cover classification really is

takes into account the number of reference points that could be correctly classified by chance
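
A common way to write this is kappa = (observed agreement - chance agreement) / (1 - chance agreement). The sketch below applies that formula to a hypothetical confusion matrix; the counts, the matrix layout, and the function name `kappa` are assumptions for illustration.

```python
# Hypothetical confusion matrix: matrix[reference_class][mapped_class] = pixel count.
matrix = {
    "forest": {"forest": 40, "urban": 10},
    "urban":  {"forest": 5,  "urban": 45},
}


def kappa(matrix):
    """Agreement between map and reference, adjusted for chance agreement."""
    classes = list(matrix)
    total = sum(sum(row.values()) for row in matrix.values())
    # Observed agreement: proportion of samples on the diagonal.
    observed = sum(matrix[c][c] for c in classes) / total
    # Chance agreement: row total x column total for each class, summed.
    expected = sum(
        sum(matrix[c].values()) * sum(matrix[r][c] for r in classes)
        for c in classes
    ) / total ** 2
    return (observed - expected) / (1 - expected)


print(round(kappa(matrix), 3))  # 0.7
```

Overall accuracy here is 0.85, but half of the agreement could arise by chance, so kappa is lower at 0.7.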