Biometry Midterm II

1/38

There's no tags or description

Looks like no tags are added yet.

Name | Mastery | Learn | Test | Matching | Spaced | Call with Kai |

|---|

No analytics yet

Send a link to your students to track their progress

39 Terms

Simple Linear Regression (Number of predictors)

Modelling the relationship between one independent variable and one dependent variable

One predictor variable

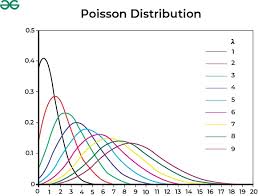

Poisson Distribution

A skewed, count data type that is a GLM.

log(y)

Multiple Linear Regression (Number of predictors)

Modelling the relationship between one dependent variable and 2+ predictor/independent variables.

Predicts outcomes through a line of best fit

The response variable in linear regression should be…

Continuous, and approximately normal

A random variable (x) is…

Some numerical outcome of a random process

Why is probability fundamental to statistics?

Probability can quantify uncertainty

How to interpret the p-value

The probability of getting our observed data assuming the null hypothesis is correct

Likelihood

Likelihood is the likelihood that observed probabilities happened under variable parameters

(Given chance, parameters that caused it are variable. Used for estimating parameters)

Probability

The chance that, under given parameters, random outcomes will happen

(Given parameters, find chance)

Probability distribution

Some representation of all probabilities of a given random variable

Numerical Discrete

Data that is counted, numerical data without decimals or fractions

Numerical Continuous

Data that is numerical and uses decimal points, fractions

Categorical nominal

Data that is NOT numerical, and has no order (favorite color, car model, etc.)

Categorical Ordinal

Data that is NOT numerical but IS ordered (gold, silver, bronze medals)

Categorical Binary

Data with only 2 possible options (yes/no, treatment/no treatment, etc.)

T-Test test statistic

(T)

ANOVE test statistic

(F)

Chi-Squared test statistic

(X²)

When to apply One-Sample t-test

When we have one group/sample, and we are measuring for some target value

One continuous variable

When to use a Two-Sample T-Test

When we have two different groups/samples, and we want to compare the means of the two.

Continuous outcome variable, and a categorical variable with 2 different groups

When to use Paired T-Test

When we have two means from the same group or matched pairs (pre- and post-treatment), usually a before and after

2 continuous measurements for each person

When to use ANOVA

When we are comparing the means for 3+ groups.

1 continuous variable and 1 categorical variable with 3+ groups

When to use MLR (Multiple Linear Regression)

When you want to predict some continuous outcome based on 2+ predictor variables.

1 Continuous outcome and 2+ predictor variables which can be categorical or continuous (Tomato plants grown with fertilizer, without, in shade, without, etc.)

When to use Generalized linear model

When our outcome is NOT normally distributed (binary data, count data, etc.)

Often with log() or with a Poisson distribution

When to use X² Test

When we want to see if the FREQUENCY of a categorical variable, proportion, matches what we expected.

When to use Non-Parametric Tests?

No assumption of normality/distribution, opting for a weaker, less powerful test if it’s your only option.

Parametric tests and their corresponding non-parametric tests

T-Test = Wilcoxon Test for One Sample

Paired T-Test = Wilcoxon Test

Unpaired/Two Sample T-Test = Mann-Whitney U Test

One Factorial ANOVA/Independent Samples = Kruskal-Wallis Test

Repeated Measures/Dependent Samples ANOVA = Friedman Test

Simple vs Multiple Linear Regression

Simple linear regression predicts a continuous variable by using one independent predictor variable

Multiple linear regression uses 2+ predictor variables to predict a single continuous variable

R² Value

R² represents what proportion of variance in our data can be explained through our model

If a predictor is non-significant, does that mean the model is also non-significant?

No, even if the predictor is non-significant, the overall model may still be significant

How to calculate predicted values from a regression equation

Plug in x to the given equation, typically just some variation of y = mx + b

When to use GLM’s (generalized linear models)

GLM’s are useful for when our data is not normally distributed, is non-linear, and/or has non-constant variance (skewed, count, binary data)

Random component of a GLM

The random component of a GLM is the outcome, the y in the y = mx + b equation. The distribution

Systematic Component of a GLM

The actual equation giving the outcome, the system. The mx + b in y = mx + b

Link Function of a GLM

Some function applied to the random variable that transforms the data into valid domains.

Coefficients put through a link scale must be transformed for interpretation

Binomial = log(y/y-1)

Poisson = log(y)

Gamma = 1/y

Log Link

A log link or log(y) is used to transform Poisson data, or skewed positive data (gamma)

Implies multiplicative effects

Reversed by exponentiating y

Logit Link

Used to transform binary data (yes/no, 0/1, etc.)

log(y/1-y)

Changes log-odds from 0 to 1 to negative infinity to positive infinity.

Remember! The slope of a logit function does not equate to an increased chance per unit of predictor variable, it equates to an increase in the Log-Odds

Interpreting GLM Coefficients

A change in a GLM graph with data that has been linked needs to be reversed before it can be interpreted. A change on the graph means a change on the link scale, not on the actual data.

Individual vs Model significance

Individual Significance (T-Test) - Evaluates if a specific predictor coefficient is significant, not 0

Model Significance (F-Test) - Evaluates the overall model, if the entire dataset is significant