Music Cog - ALL

611 Terms

what is the psychology of music concerned with?

the processes by which people perceive, respond to, and create music, and how they integrate it into their lives

makes important use of cognitive psychology, drawing on sensation, perception, etc.

acoustics

the science of the production, propagation, and reception of vibrations in the air that are relevant to hearing and music

the science of sound

neuroscience in music

surge of interest in neural underpinnings of human musicality, and how the neural activity may constrain or enhance our experience of music/music-making

musicology

the study of structure and history of human music

philosophy in music

brings out certain presuppositions in the practices by which psychologists try to reach an understanding of music as a human phenomenon

music education

music requires the development of a set of highly elaborated skills, and a growing base of knowledge that allows for the sensitive interpretation of music

ethnomusicology

the anthropological study of music, looking at the different musical cultures, and distinctness

range of research methods in the psychology of music

empirical studies

recording

physiological/brain imaging

motion capture

electromyography

qualitative/naturalistic observations

3 things we need to understand about how we use and process sound

what you don’t hear affects what you do hear

little details matter

context is vital

semiotic theory

indexicality, AKA a thing that represents a thing (like a footprint representing a foot)

affordance

the actionable possibilities that the environment provides an individual

We use context clues to understand how to use something

We need to think about a sound's features as context for what the sound comes from

feedback loops

sound gives us information about our performance

ex, a sound when we hit someone

major → _____ effect, and minor → _____ effect

positive

negative

causal influence

with sound we can make sense of vague visual stimuli

when we see sharper shapes we assign it with sharper words (Bouba and Kiki experiment)

organizing principles of sound (Gestalt principles)

Proximity → if close together, we assume the sound came from the same source

Similarity → if similar, we assume the sound came from the same source

Closure → we fill in the gaps of what we don’t hear from what we expect

Symmetry

Common Fate/Continuity → sound will come/stay in the same direction

Past Experience

scientific definition of perception

our internal experiences of the external world

sound becomes music only when it is perceived by listeners

long vs short percussion gesture test - methods

4 conditions, 2 are hybrid (audio and gesture are mismatched)

participants told they may be mismatched, and told to only rate auditory length

long vs short percussion gesture test - results

with audio alone, the sounds are not rated differently (despite coming from long and short gestures)

when we pair sounds with gestures, the ratings of the sound are affected by gesture length

our perception of the sound is longer when mixed with the visual, but solely the audio shows no difference

why do we get those results with the long vs short gesture test?

our brain is implicitly thinking for us

it uses shortcuts that lead to illusions

what are ancillary gestures

expressive body movements used by musicians during performance, that aren't directly needed

what do ancillary gestures do?

enhance communication, express emotion, and guide the listener’s interpretation

they are not prescribed in traditional music scores, or evident in audio recordings, so some assume they are not integral to formal musical analysis

growing evidence that ancillary movements alter an audience’s listening experience

effective vs ancillary gesture

effective → required for sound production

ancillary → not necessary for the creation of sound

ancillary commonly thought of as secondary, but that is naive!

visual information in music (Stravinsky’s piece)

judgements of tension were rated differently depending on whether the participant viewed the performance or simply heard it

emotions are communicated through these gestures on a number of instruments

subgenre of theatrical percussion

modern performers are giving instructions on the motions to be used while performing, many of which are ancillary

capitalizes on the relationship between gestures, music, and perception

more common now, but not a new concept

dance and ancillary gestures

dance → human movement that frequently occurs concurrently with acoustic information

if ancillary movements accompany the music without affecting its acoustic characteristics, it can technically be dance

music induced movement

dance movements made in response to music

involves reacting to the low-level temporal structure of the music but also reflects the rich hierarchy of temporal information

when asked to dance freely, movements of the extremities tend to synchronize with faster metric levels, whereas movements of the torso tend to synchronize with slower metric levels

ancillary gestures can be used to accomplish _____ what cannot be accomplished _______

perceptually

acoustically

they can create musical illusions

marimba and ancillary gestures

'when sharp wrist motions are used, the only possible results can be sounds of staccato nature, and when smooth, relaxed wrist movements are used, the player will then be able to feel and project a smoother, more legato-like style'

others argue that motion after impact is not directly relevant to the acoustic consequences of the preceding event

shaded circle illusions

we see bumps when dark on bottom, and indents when dark on top, due to our assumption that light is coming from above the top of our head and the darkness = shadows

McGurk effect

visual information categorically changes our perception of concurrent speech

Pairing one speech sound with the lip movement used to produce a different sound

The resulting percept is essentially the average between the two conflicting acoustic and visual components

The sound "ba" with the movements "ga" gives "da"

integration of visual-audio

In everyday perceiving we often experience a number of events occurring simultaneously

Visual-audio integration is a constant background process assisting with the organization of a chaotic stream of sights and sounds into the coherent perceptual experience of unified multi-modal events

one of the cues for discerning multi-modal relationships is causality

importance of causality and manipulation

The importance of causality in audio-visual integration is best illustrated by viewing the perceptual ramifications of its absence

Manipulations of weakening causal links diminish the strength of the illusion

Manipulations breaking it destroy it entirely

for example, sounds that could not be caused by impact gestures (human voices) fail to integrate with impact motions

but, some alternate sounds don’t fail to integrate

when paired with a visual impact gesture, other sounds caused by impact events may integrate to a certain degree

for example, a piano sound may integrate with an impact gesture

the illusion is contingent upon detection of congruity between the visual motion and the auditory timbre

3 audio-visual pairings marimba experiment

the note occurred slightly before (audio led the gesture)

the note occurred slightly after (audio lagged the gesture)

the note occurred at the same time

3 audio-visual pairings marimba experiment - results

the illusion was strongest in the synchrony condition

the gestures integrated with the marimba sound in the audio lag (2) condition, but not the audio lead (1) condition

demonstrates importance of causality (can’t integrate a motion to a sound that already happened, since sound moves slower than light)

which part of the impact controls the marimba illusion?

the magnitude of the illusion in the post-impact condition (showing only the motion concurrent with the sound) was similar to that of the full-gesture video

no illusion found in the pre-impact condition

point light vs edited videos when studying

edited videos lack ecological validity, so point light versions can be better representations of complex movements

we can use this to create hybrid gestures (mixing pre-impact of one and post-impact of another)

auditory signals offer important advantages:

can reach individuals not in the range of sight

faster response times

effective interfaces in saturated environments

easiest ways to classify alarm sounds

speech → easy to understand, direct

non-speech → universal, and dominates the ambient noise from other speech

medical device alarms - IEC

opted for message standardization → attempting to improve interoperability across locations

created a global standard (specific sounds)

short tone sequences signal key states, with 2 levels of urgency

individuals required to differentiate between similarly sounding alarms

issues with the current melodies of medical device alarms

they use identical rhythms, similar frequency ranges, and share a starting pitch

ensures uniformity, but poses barriers to the effectiveness due to frequency confusion/misidentification

very poor learnability

what is alarm masking

when alarms have similarities in acoustic structure, it increases the risk of simultaneous masking (concurrent alarms prevent one another from being heard)

risk further increased by similarities in the signals' temporal structure, pitch range, and timbre

alarm confusion in hospitals

terrible learnability (less than 30% can identify after training)

only 2/14 nurses could identify every alarm

the arrangements of sounds within the alarms are too similar to each other

what is timbre and how can we use it in hospital alarms

timbre provides rich acoustic information, allowing for the differentiation between sound sources, and facilitating recognition

we can use different timbres in different alarms

but, the universal standard mandates the same timbre throughout

musical sounds - complex or simple?

musical sounds from instruments are incredibly complex, making them enjoyable to listen to:

one note produces multiple tones over the fundamental frequency (overtones/harmonics)

the notes’ strength changes over time

hospital alarms - complex or simple?

temporally simplistic

the alarm tones contain multiple harmonics, but little temporal variation

alarms are made up of flat/simple tones

leads to uniformity, but this decision was driven by technical benefits rather than perceptual best practices

2 ways we can use music’s temporal complexity to improve alarm design

lowering annoyance

proven by experiments

improve recognition compared to current alarms

no differences in learnability proven by experiments

new 2020 standard for alarms

aligns with auditory principles and extensively validated

they are temporally simple and have few harmonics

fewer notes = less urgency

alarm annoyance not considered!

perceptually well-designed alarms were created. why were they rejected?

they sounded unpleasant (Patterson)

issues with alarms being annoying

contributes to alarm fatigue and staff turning off alarms

overall, what can we do to hospital alarms to make them less annoying?

add temporal variability!

two key concepts in this class

our brains think for us

music reflects the features of my mind

similarly to how a glove reflects the features of my hand

McGurk effect is the speech equivalent of the

marimba illusion

there is a sensory integration between the visual and acoustic information, creating a specific perceptual experience

unity assumption

some sight and sound go together

ventriloquist effect

our brain is always looking for sights and sounds that go together

our perception of music can be entirely changed by adding other _____

modalities, especially visual components

integration of impact gestures (marimba) and other sounds

y-axis is visual influence, long to short gestures

x-axis is: marimba, piano, horn, clarinet, voice, and white noise

important features

marimba the highest

piano about half of the marimba

horn, clarinet, voice, and white noise are all similar and close to 0

higher bars = higher binding between modalities (sense of unity)

difficult to separate these modalities

what dominates in a spatial vs temporal task?

visual system dominates for spatial, auditory system dominates for temporal tasks

in general, which modality dominates and why?

visual systems, simply due to their ease!

to see motion, we need at least 60 frames per second

to fool our ears, audio CDs need 44,100 samples per second

duration perception and modality integration

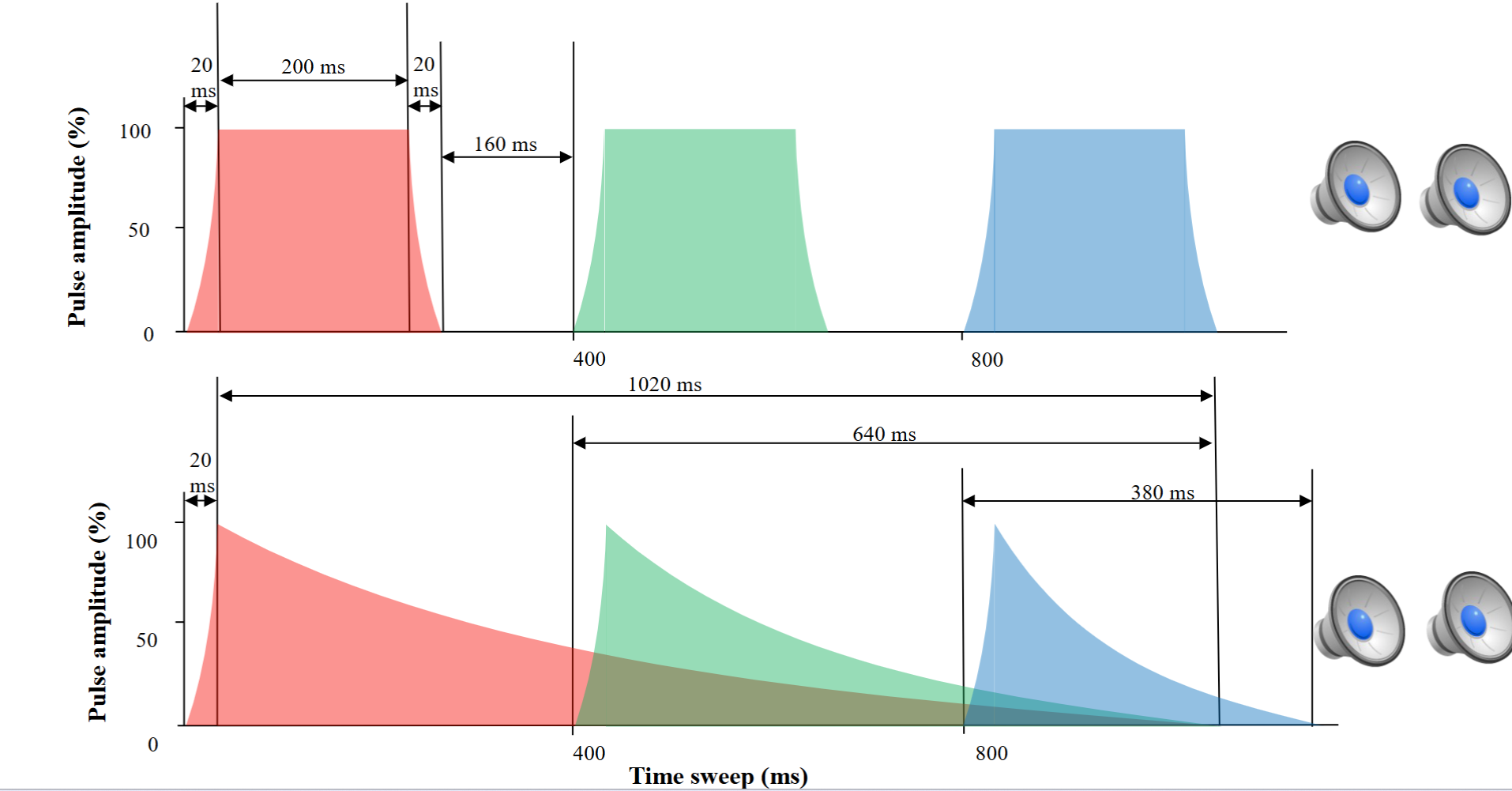

if we have a flat tone vs a percussive tone (with a spike and fall):

we see a stronger pattern of visual influence with percussive tones

likely due to the fact that the percussive tone has better ecological validity, making it easier to bind

how do musical tones differ from tone beeps?

tone beeps → unnaturally flat, with an instant start and instant end, and a constant pitch

musical tones → more variety, gradual, ebbs and flows, natural, overtones within the same note, temporal variation, etc.

in past experiments on auditory perception and visual system binding…

the majority is done with flat sounds (90% beeps), and only about 10% have temporal variation (percussive sounds)

in everyday listening, we have way more percussive sounds than simplistic ones

our everyday experiences show the opposite pattern to what is researched!

initial assumption about unity assumption in music

exp compared piano vs guitar, separated visual vs auditory

found no benefit of congruency (same instrument visual + auditory)

similar binding found in hybrid conditions

therefore, concluded there is no unity assumption in music

issue with using the guitar and piano when looking at unity assumptions

the amplitude envelopes of piano and guitar notes are pretty similar

so, failure of unity benefits may be due to the fact that some level of binding occurred in the hybrid conditions

if we retest the unity assumption with the cello and marimba, what do we find?

we find stronger binding with congruency, therefore unity assumption met

this succeeded because they have extremely different amplitude envelopes

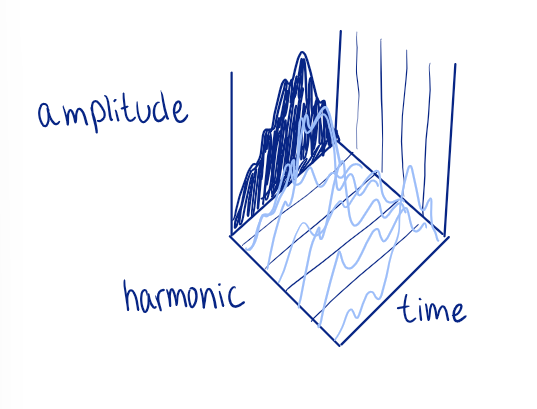

what are amplitude envelopes?

amount of energy (amplitude) over time

when we consider complex vs simple sounds, the biggest differences are in amplitude envelopes

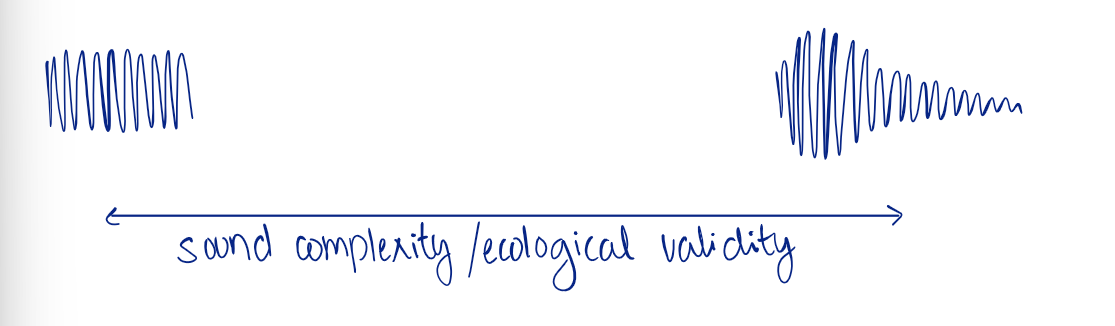
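A minimal sketch of the flat vs percussive contrast, assuming a percussive envelope can be approximated by an instant onset followed by exponential decay. The function names, the 440 Hz test tone, and the `decay_rate` value are illustrative assumptions, not values from the course.

```python
import math

def flat_envelope(n_samples):
    """Constant amplitude from onset to offset (a 'beep')."""
    return [1.0] * n_samples

def percussive_envelope(n_samples, decay_rate=5.0):
    """Instant onset, then exponential decay (assumed shape, hypothetical rate)."""
    return [math.exp(-decay_rate * i / n_samples) for i in range(n_samples)]

def apply_envelope(samples, envelope):
    """Scale each sample by the envelope value at the same moment in time."""
    return [s * e for s, e in zip(samples, envelope)]

sample_rate = 8000                      # samples per second (illustrative)
n = round(0.5 * sample_rate)            # half a second of sound
tone = [math.sin(2 * math.pi * 440 * i / sample_rate) for i in range(n)]

flat_tone = apply_envelope(tone, flat_envelope(n))
percussive_tone = apply_envelope(tone, percussive_envelope(n))
```

Both tones share the same frequency content at onset; only the amount of energy over time differs, which is the property the amplitude envelope describes.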

continuum of amplitude envelopes by ecological validity/sound complexity

as sound complexity increases, ecological validity increases (and vice versa)

interfaces

a device or program enabling a user to communicate with a computer (in this context, with sound)

interface may be a better term than ‘alarms’ when discussing hospital beeps

3 things to consider in alarms

learning/learnability → need to increase

memory → need to increase

annoyance → need to decrease

3 burning questions about creating new interfaces

why not add a visual interface?

why not add speech?

isn’t annoying good?

answers to the 3 burning questions

there are often times where visual attention is needed elsewhere

in a busy hospital this would be more annoying, no universality, and compromises shielding (don’t need to tell patient everything immediately)

not all alerts are alarms (these are interfaces) meaning they need not be annoying, can create fatigue

what slight change can we make to standard device sounds without changing the tones?

alter their amplitude envelopes to be more percussion-like

allows for decay and overlap

this allowed the tones to be perceived as less annoying

the benefits of this experiment are due to the temporal structure changes

stats of types of sounds in research on auditory perception

28% percussive

28% flat

1% click

8.5% other

33% undefined

many were not including the amplitude envelopes

huge issue! the experiments are impossible to recreate when the amplitude envelopes are not reported

other meta analyses found the undefined category to be the largest again

metaphor for the downfall of amplitude envelopes

they are like the outline/shadow of the person, rather than the entire person

we need to also consider the complexity in harmonics, not just amplitude across time

decreasing from 100% harmonic

with flat and percussive tones, decreasing from 100% harmonic (same the whole time) it becomes less annoying

percussive notes inherently less annoying too, and detection is easier

musical acoustics

focuses on the mechanisms of sound production by musical instruments, effects of reproduction processes or room design on music sound, and human perception of sound as music

3 physical characteristics of sound waves

frequency

amplitude

power spectrum

pressure wave

physical disturbances propagating through the air form a pressure wave

we need density in air molecules so sound can travel

tine movements on a tuning fork

Compression → as a tine moves in an outward direction, the air molecules adjacent to it cluster together

Expansion → then, as the tine moves back in, past its midpoint, the molecules spread apart

the compression and expansion of air molecules creates

an oscillation, leading to a sound wave

compression is the amplitude above 0, and expansion is the amplitude below 0

the movement of a sound wave is

longitudinal

the movement of the oscillation is parallel to the direction of movement overall

a sine wave is the equivalent of a ____ tone

pure/simple

its pattern can be described fully using one frequency of the vibration

takes a very smoothed pattern

most sounds do not take this pattern

2 parameters of sine waves:

frequency

amplitude
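As a rough illustration, a pure tone can be generated from just these two parameters. This is a sketch only: the function name `sine_wave`, the sample rate, and the example values are assumptions chosen for illustration.

```python
import math

def sine_wave(frequency_hz, amplitude, duration_s, sample_rate=44100):
    """Return samples of a pure tone described by one frequency and one amplitude."""
    n = round(duration_s * sample_rate)
    return [
        amplitude * math.sin(2 * math.pi * frequency_hz * i / sample_rate)
        for i in range(n)
    ]

# Fewer cycles per second and a small displacement: heard as low and quiet.
quiet_low = sine_wave(frequency_hz=220, amplitude=0.2, duration_s=0.01)

# More cycles per second and a large displacement: heard as high and loud.
loud_high = sine_wave(frequency_hz=880, amplitude=0.8, duration_s=0.01)

# The period is the time one full cycle takes, the inverse of the frequency.
period_s = 1 / 220
```

Changing `frequency_hz` alters how many cycles fit into a second, while changing `amplitude` alters only the maximum displacement from the resting state.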

frequency

how often a sine wave completes a full cycle (AKA a full period)

Hz → number of cycles per second

what can we use to control frequency?

tension: increasing it causes the sound frequency to increase, influencing pitch

pitch is a ____ variable

subjective

many cycles in a span of time is perceived as high

few cycles in a span of time is perceived as low

amplitude

the maximum displacement compared to the resting state

generally, the greater the amplitude of a wave = the more energy it transmits

more energy seems to = more loudness

amplitude is related to the percept of ____

loudness

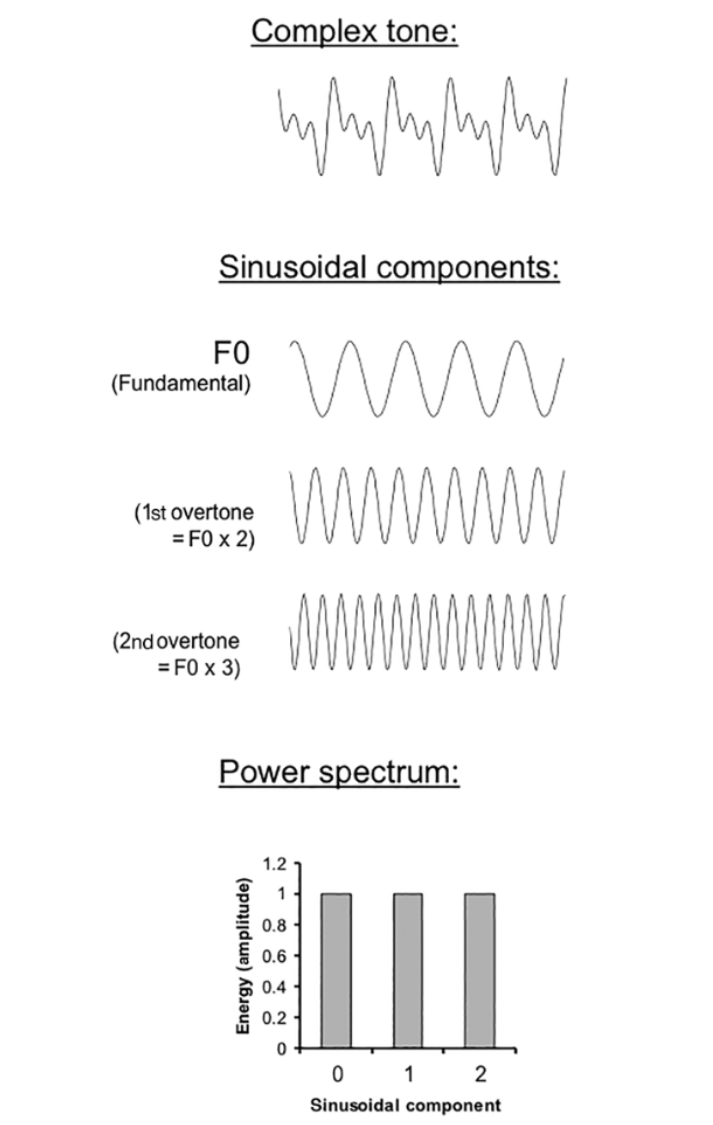

complex soundwaves

most sounds are best understood as a combination of many frequencies that occur simultaneously

any complex tone can be understood as a combination of sine waves

we can sum multiple sine waves/components to describe a complex tone
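The summing idea can be sketched directly: each component is a sine wave with its own frequency and amplitude, and the complex tone is their sample-by-sample sum. The helper name `complex_tone` and the three example components are hypothetical.

```python
import math

def complex_tone(components, duration_s=0.01, sample_rate=44100):
    """Sum sine components, given as (frequency_hz, amplitude) pairs."""
    n = round(duration_s * sample_rate)
    return [
        sum(a * math.sin(2 * math.pi * f * i / sample_rate) for f, a in components)
        for i in range(n)
    ]

# A 220 Hz component plus two weaker, higher-frequency components.
components = [(220, 1.0), (440, 0.5), (660, 0.25)]
tone = complex_tone(components)
```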

fourier analysis

the process by which a complex wave is decomposed into a set of component sinusoids
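A small sketch of the decomposition, using a plain discrete Fourier transform written out by hand (real analyses would normally use an FFT library). The test signal, the one-second length, and the 0.1 threshold are assumptions for illustration; with one second of signal, bin `k` corresponds to `k` Hz.

```python
import cmath
import math

def dft_magnitudes(samples):
    """Return the amplitude of each sinusoidal component up to half the sample rate."""
    n = len(samples)
    magnitudes = []
    for k in range(n // 2):
        coeff = sum(
            samples[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)
        )
        # Scale so a sine of amplitude A shows up as A in its bin (for k > 0).
        magnitudes.append(2 * abs(coeff) / n)
    return magnitudes

sample_rate = 400  # samples per second; one second of signal in total
signal = [
    1.0 * math.sin(2 * math.pi * 50 * t / sample_rate)
    + 0.5 * math.sin(2 * math.pi * 100 * t / sample_rate)
    for t in range(sample_rate)
]

mags = dft_magnitudes(signal)
# Frequencies (in Hz) whose recovered amplitude is clearly above zero.
peaks = [k for k, m in enumerate(mags) if m > 0.1]
```

Here the complex wave was built from a 50 Hz and a 100 Hz sinusoid, and the analysis recovers those two components along with their amplitudes.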

fundamental frequency

the component with the lowest frequency (longest wavelength)

often associated with the pitch of the complex tone

overtones

higher frequency components of the complex tones

the quality of sound formed by the pattern of the overtone series is

timbre

timbre helps us differentiate between different sounds

harmonic complex tones

the frequency of each overtone is an integer multiple of the fundamental frequency

the overtones are simply called ‘harmonics’ in these tones

for example, the first overtone (second harmonic) can be double the fundamental, the second overtone triple, the third quadruple, etc.

harmonic complex tones often convey a

clear pitch

how does the numbering convention for frequency components vary between harmonics vs overtones?

harmonics → the numbering includes the fundamental, starting at 1 (F1)

overtones → a general distinction between fundamental and overtones, so the fundamental starts at 0 (F0)
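The two numbering conventions can be laid side by side in a short sketch. The function name `harmonic_series` and the 110 Hz fundamental are illustrative assumptions.

```python
def harmonic_series(fundamental_hz, n_components):
    """List the components of a harmonic complex tone under both conventions."""
    rows = []
    for harmonic_number in range(1, n_components + 1):
        rows.append({
            # Each component is an integer multiple of the fundamental.
            "frequency_hz": fundamental_hz * harmonic_number,
            # Harmonic numbering includes the fundamental, starting at 1.
            "harmonic_number": harmonic_number,
            # Overtone numbering treats the fundamental as 0.
            "overtone_number": harmonic_number - 1,
        })
    return rows

series = harmonic_series(110, 4)
for row in series:
    print(row)
```

So the component at double the fundamental is the second harmonic but the first overtone.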

anharmonic complex tones

sounds with rough or noisy timbre, they have overtones that are not so simply related to the fundamental

white noise is an extreme example of this!

in real life, what do we normally hear? (with regard to harmonic and anharmonic complex tones)

the sounds we hear are almost never entirely one or the other

but, we still use the distinction to distinguish between different sorts of timbre

for ex, Michael Bublé’s voice is closer in structure to the sound wave of a harmonic complex tone

amplitude relationships in the overtone series

not all overtones emerge with the same intensity

overtone series determining timbre are based on numerical relationships among sound frequencies AND through amplitude associated with each frequency

power spectrum

plots the amount of energy (power) associated with each frequency component in a tone (expressed as amplitude)

can be thought of as showing the amplitude of sine waves in a complex tone

spectral centroid

how the amplitudes of different partials are distributed defines this part of timbre

spectral centroid reflects the center of gravity in the sound’s spectrum
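One common way to compute this center of gravity is an amplitude-weighted average of the component frequencies. The sketch below assumes that weighting (some definitions weight by power instead), and the two example spectra are made up for illustration.

```python
def spectral_centroid(components):
    """Amplitude-weighted mean frequency of (frequency_hz, amplitude) pairs."""
    total_amplitude = sum(a for _, a in components)
    if total_amplitude == 0:
        raise ValueError("spectrum has no energy")
    return sum(f * a for f, a in components) / total_amplitude

# Same frequencies, different amplitude distributions across the partials.
dull = [(220, 1.0), (440, 0.3), (660, 0.1)]    # energy concentrated low
bright = [(220, 1.0), (440, 0.9), (660, 0.8)]  # more energy in upper partials

dull_centroid = spectral_centroid(dull)
bright_centroid = spectral_centroid(bright)
```

Both spectra share a fundamental, so they would tend to be heard at the same pitch, but the second has a higher centroid because more of its energy sits in the upper partials.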