s&p exam 4 pt 2

60 Terms

Auditory localization

The ability to determine the location of a sound in space.

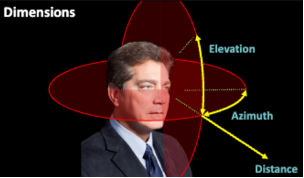

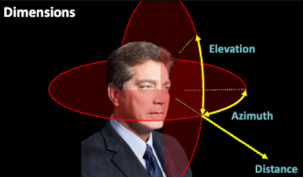

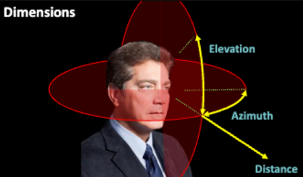

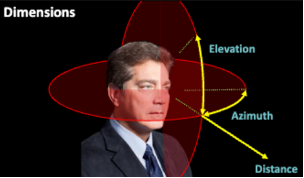

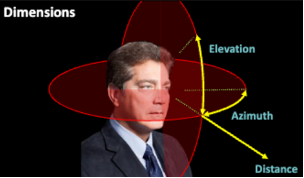

Dimensions

To figure out location

Azimuth

The left-right dimension that helps determine sound location.

goes all the way around your head

Elevation

The vertical (up-down) dimension that helps determine sound location.

Goes all the way around your head

If you know both the azimuth and the elevation, that covers every direction

Distance (in auditory localization)

How far away a sound source is.

If you know all three of these dimensions then you can pick out exactly where a sound is coming from

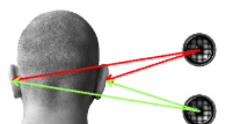

Binaural cues

Cues that use both ears at the same time to compare signals from one ear to another.

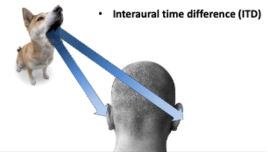

Interaural time difference (ITD)

The difference in timing of when sound reaches each ear.
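As a rough illustration of how the ITD depends on azimuth, here is a minimal sketch. It assumes a simplified straight-line path model, an ear spacing of 0.18 m, and a speed of sound of 343 m/s; none of these values come from the cards, and `itd_seconds` is a hypothetical helper name.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # assumed: air at roughly room temperature
EAR_SPACING_M = 0.18        # assumed: rough distance between the two ears


def itd_seconds(azimuth_deg: float) -> float:
    """Simplified ITD for a distant source.

    azimuth_deg: 0 = straight ahead, +90 = directly right, -90 = directly left.
    A positive result means the sound reaches the right ear first.
    """
    # Extra distance the sound travels to reach the far ear.
    extra_path_m = EAR_SPACING_M * math.sin(math.radians(azimuth_deg))
    return extra_path_m / SPEED_OF_SOUND_M_S


for angle in (0, 30, 90):
    print(angle, "degrees:", round(itd_seconds(angle) * 1e6), "microseconds")
```

In this sketch the ITD is zero for a sound straight ahead and largest for a sound directly to one side.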

Interaural level difference (ILD)

The difference in sound level reaching each ear.

It arises because the head blocks some of the sound on its way to the far ear

Your head tends to absorb or reflect high frequencies

Treble

Doesn’t travel to the other side, so the far ear receives less of it

Low frequencies tend to get past your head, so they produce little level difference

Example: hearing loud music playing in the room upstairs. What makes it down here is the bass / low sounds, because low frequencies get past obstacles more easily. In the same way, low frequencies get past your head
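One common way to see why the head affects treble more than bass is to compare the sound's wavelength with the width of the head. The sketch below does that and also shows how a level difference is usually expressed in decibels. The head width (0.18 m), the speed of sound (343 m/s), and both function names are assumptions for illustration.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # assumed
HEAD_WIDTH_M = 0.18         # assumed rough head width


def wavelength_m(frequency_hz: float) -> float:
    """Wavelength of a sound in air, in meters."""
    return SPEED_OF_SOUND_M_S / frequency_hz


def ild_db(near_ear_pressure: float, far_ear_pressure: float) -> float:
    """Level difference between the ears in dB, from two sound pressures."""
    return 20.0 * math.log10(near_ear_pressure / far_ear_pressure)


for freq in (200, 6000):
    shorter = wavelength_m(freq) < HEAD_WIDTH_M
    print(freq, "Hz wavelength shorter than the head:", shorter)

# A far-ear pressure half that of the near ear is an ILD of about 6 dB.
print(round(ild_db(1.0, 0.5), 1), "dB")
```

Short wavelengths (high frequencies) are smaller than the head, so the head can cast an acoustic shadow; long wavelengths (low frequencies) are much larger than the head and are barely affected.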

Acoustic shadow

The area on the far side of the head where sound is blocked by the head, resulting in lower sound intensity.

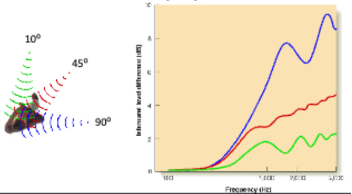

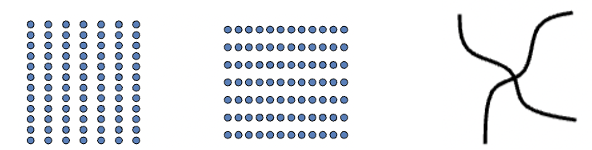

Cone of confusion

Locations in space that produce the same binaural cues, making it hard to determine sound source.

These locations give the same time and level differences; binaural cues can’t tell the difference between sounds that are a little above or below one another

Solution to this = spectral cues

The reason dogs tilt their heads: angling the ears helps localize sound in space

Owls have one ear higher than the other: helps locate sounds in space better

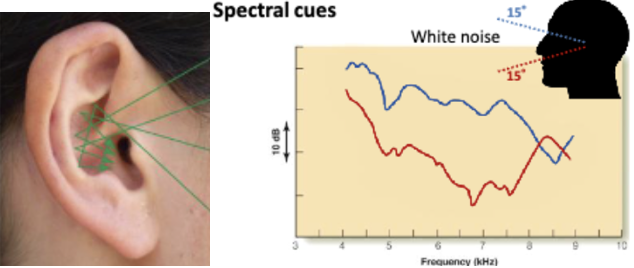

Spectral cues

Cues to sound location that come from how the folds of the pinna reflect sound differently depending on the direction it arrives from.

Folds in the ear bounce sounds around in different ways depending on where the sound is coming from

Humans use these as the solution to the cone of confusion

Over a lifetime, you learn which frequency patterns go with which locations in space, which lets you judge elevation.

Spectral cues experiment

A mold placed in the ear changes the shape of the pinna without blocking or clogging the ear

Spectral cues were ruined; this took away the ability to perceive elevation

Performance got better over a few weeks with the mold left in, since you learn a new way to perceive sound with your reshaped ear

When the mold was taken out, the participant was still just as good as they were originally

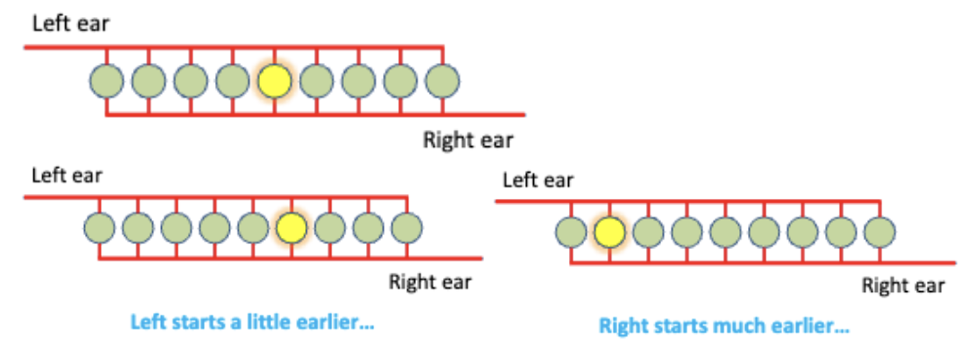

Jeffress Coincidence Model

A model explaining how neurons fire based on timing of sound signals from both ears.

Each neuron receives signals from both the left and right ears, but the two signals travel toward it from opposite directions.

To fire, a neuron has to receive the two signals at the same time

If the two signals meet in the middle and make the middle neuron fire, the sound reached both ears at the same time

Usually the sound does not reach both ears at the same time

The signal from the ear that is reached first travels farther before meeting the other signal, so the neuron that fires is over on the side opposite to where the sound started
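The coincidence idea described above can be sketched as a row of detector neurons fed from both ends. This is a toy illustration, not a physiological model: the number of neurons, the per-neuron travel delay, and the name `jeffress_winner` are all assumptions.

```python
def jeffress_winner(t_left: float, t_right: float,
                    n_neurons: int = 9, step: float = 1.0) -> int:
    """Index of the detector whose two inputs arrive closest together.

    Neuron 0 sits nearest the left ear and neuron n_neurons - 1 nearest the
    right ear. The left-ear signal takes `step` time units per neuron moving
    rightward; the right-ear signal takes `step` per neuron moving leftward.
    """
    def mismatch(i: int) -> float:
        left_arrival = t_left + i * step
        right_arrival = t_right + (n_neurons - 1 - i) * step
        return abs(left_arrival - right_arrival)

    # The neuron with the smallest arrival mismatch is the one that fires.
    return min(range(n_neurons), key=mismatch)


print(jeffress_winner(0, 0))  # both ears at once
print(jeffress_winner(0, 4))  # left ear first
print(jeffress_winner(4, 0))  # right ear first
```

With simultaneous arrival the middle detector wins; when the left ear leads, the winning detector shifts toward the right end of the row, and vice versa.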

Place code

The idea that the place of the neuron that fires corresponds to the actual location of the sound.

Evidence for this model comes from neurons in owls' brainstems

Their responses are what you would expect if the Jeffress model were operating in their brainstems

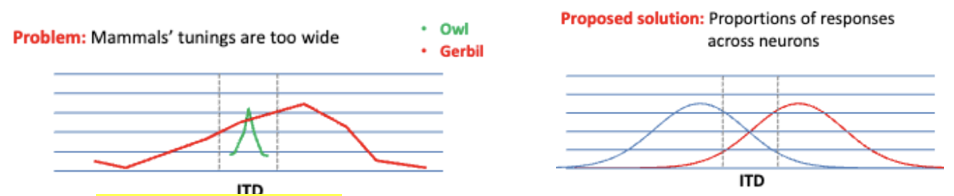

Problem: in mammals, unlike owls, a single neuron responds to sounds at many different locations, because the mammalian tuning curve is too wide to pinpoint one location

Example: a cell in monkey auditory cortex that responds to sounds at many different locations

In humans: compare how strongly the broadly tuned neurons are firing relative to one another to figure out where the sound is
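The idea of comparing firing strengths across broadly tuned neurons can be illustrated with two hypothetical channels, one preferring the left and one preferring the right. The sigmoid tuning shape, the slope value, and the function names are assumptions chosen to keep the arithmetic simple, not measured data.

```python
import math


def channel_responses(azimuth_deg: float, slope: float = 0.05):
    """Firing strength (0 to 1) of a left-preferring and a right-preferring channel.

    Negative azimuth = left of center, positive = right of center.
    """
    right = 1.0 / (1.0 + math.exp(-slope * azimuth_deg))
    left = 1.0 / (1.0 + math.exp(slope * azimuth_deg))
    return left, right


def estimate_azimuth(left: float, right: float, slope: float = 0.05) -> float:
    """Recover azimuth from the ratio of the two channels' firing strengths."""
    return math.log(right / left) / slope


left, right = channel_responses(30.0)
print(round(left, 3), round(right, 3), round(estimate_azimuth(left, right), 1))
```

Neither channel alone pinpoints the location, since each responds over a wide range of azimuths, but the ratio of the two does in this toy setup.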

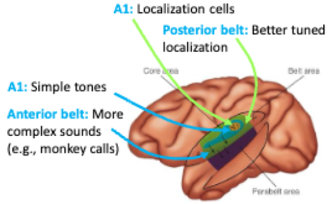

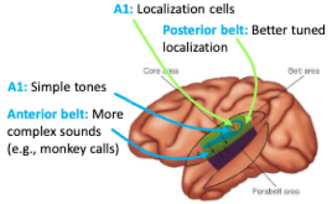

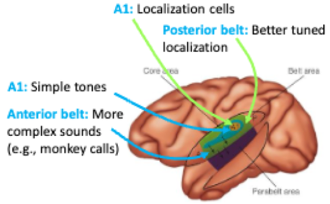

A1

Localization cells

not great but can generally sense location

simple tones

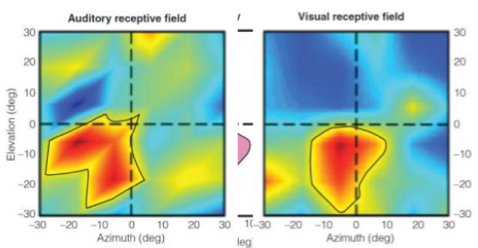

Posterior belt area

The region of the auditory cortex involved in processing complex sounds and spatial awareness.

better tuned localization

neurons tuned to more places in space and can pick out exactly where a sound is coming from

Anterior belt

The area of the auditory cortex that processes more complex auditory stimuli and contributes to sound perception and interpretation.

Responds to even more complex sounds than the posterior belt area; associated with identifying sounds rather than localizing them

Where pathway

The auditory pathway that helps determine the location of where the sound is in the environment.

What pathway

The auditory pathway that helps in identifying what the sound is and its specific characteristics, such as its identity and meaning.

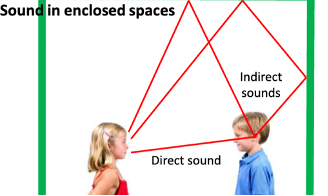

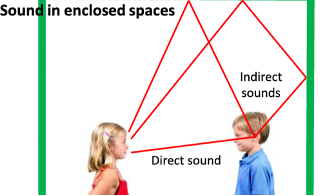

What happens to sound in enclosed spaces?

When you’re talking to someone, sound waves are going from you to their ear but also to the things around you

When in a room, some of the sound from your voice hits the walls, bounces off, and comes back to the ear

The same sound hits the ear over and over again because it’s arriving from different directions

Direct sound

The sound that travels directly from the source to the listener's ears without any reflections or modifications.

Indirect sounds

Sounds that reach your ears after bouncing off surfaces such as walls. They are there, but you usually aren’t perceiving them as separate sounds

Spaciousness

How much of the sound you hear is indirect.

More echoes = more spacious-sounding room.

part of how acoustics affect sound

Bass ratio

How much bass vs. treble reaches your ears.

Helps describe how a room affects sound.

How the different frequencies are bouncing around

The ratio between how much low-frequency sound you’re getting vs. how much high-frequency sound you’re getting

Part of how acoustics affect sound

Auditory scene analysis

The process of separating and distinguishing different sound sources.

Taking all the sound waves that are hitting your ear at once and splitting them up according to the source each one is coming from

Auditory stream segregation

The grouping of a series of sounds together based on various characteristics / techniques.

Auditory locations

We use ITD, ILD, and spectral cues to tell where sound comes from.

Auditory onset time

The temporal difference between the onset of two sounds, used to help determine whether they belong to the same auditory stream.

Example: notes come from the trumpet and the saxophone at two separate times, which helps you tell that they are coming from two different sources, even when you can’t notice the location difference

Timbre

The quality or color of a sound that distinguishes it from other sounds of the same pitch and volume, influenced by the harmonic content and envelope of the sound.

It allows listeners to identify different instruments or voices, even when they are playing the same note.

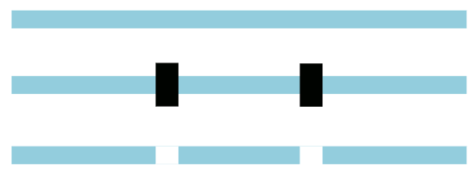

Proximity & good continuation in sounds

Proximity: grouping things together because they’re close together

Good continuation: you see something as two overlapping curved lines, not two V shapes

Precedence effect

The phenomenon of perceiving the first sound of multiple versions as the source.

You are always hearing multiple versions of the same sound, but you only perceive the first one (the direct sound), and that’s what you use to determine the location of the sound

Reverberation time

The time taken for sound to die out in intensity after the source stops.

Intimacy time

Time gap between direct and first reflected sound.

Shorter gap feels more "intimate" or close.

The lag between direct and first indirect sound.

How long is the gap between…
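As a worked example of the gap between the direct sound and the first reflection, the sketch below converts the two path lengths into a time difference. The speed of sound (343 m/s), the example distances, and the name `intimacy_time_ms` are assumptions for illustration only.

```python
SPEED_OF_SOUND_M_S = 343.0  # assumed


def intimacy_time_ms(direct_path_m: float, reflected_path_m: float) -> float:
    """Delay in milliseconds between the direct sound and the first reflection.

    direct_path_m: straight-line distance from source to listener.
    reflected_path_m: total distance for the shortest path via one surface.
    """
    extra_distance_m = reflected_path_m - direct_path_m
    return extra_distance_m / SPEED_OF_SOUND_M_S * 1000.0


# Hypothetical room: listener 10 m from the source, first reflection travels 16.86 m.
print(round(intimacy_time_ms(10.0, 16.86), 1), "ms")
```

The closer the reflecting surfaces are to the direct path, the smaller the extra distance and the shorter the gap.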

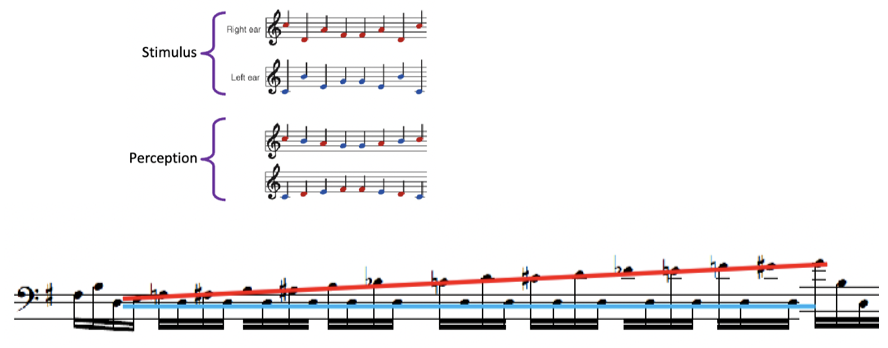

Scale illusion

Grouping notes together based on proximity and good continuation

Auditory continuity

Perceiving a sound as continuing in the background even when another sound briefly covers it.

Similar to vision where if you see something with something blocking it, you still perceive it as the same shape

Top down processing

Your prior experience is changing how you group sounds together.

Multisensory interactions

Vision and hearing are interacting and influencing the other.

When sound is added, it gives you extra information

Example: when two balls cross each other in an X with no sound, they seem to pass through each other; add a clink sound, and what you think you’re seeing changes to the two balls hitting in the middle and bouncing off each other

Vision receptive fields

A neuron responds to the same place in space no matter whether the stimulus is visual or auditory

Echolocation

The ability to locate objects using sound waves.

Bats use it but humans can also learn how to use the techniques

Universal characteristics of music

Perceptual

Similar pitches are grouped together

Octaves are perceived as similar

Physiological

Music elicits emotions

People move with music

Social

Performed in social contexts

Caregivers sing to infants

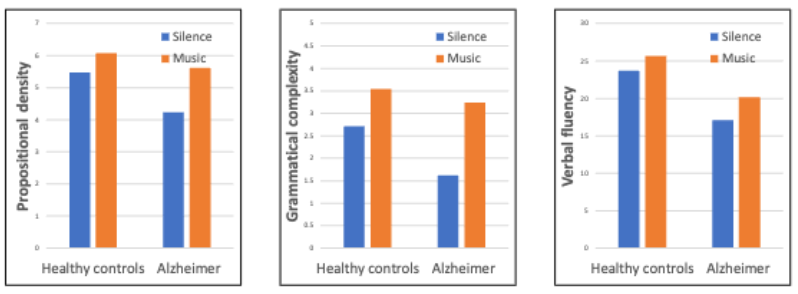

MEAMs (music-evoked autobiographical memories)

Memories that are triggered by music, often relating to personal experiences.

example: Alzheimer patients vs. healthy controls

“recount in detail an event in your life”

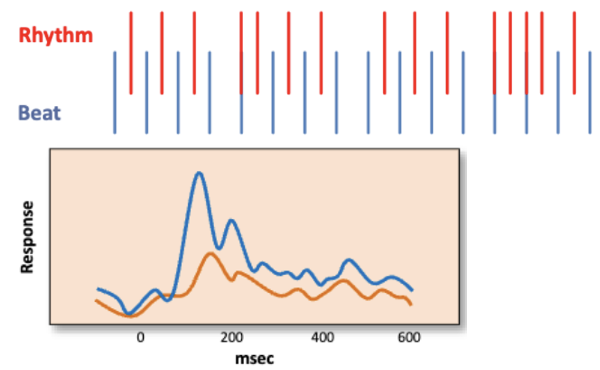

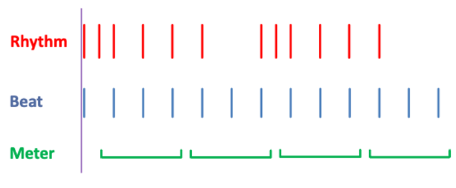

Beat (in music)

The steady pulse of music, guiding the rhythm.

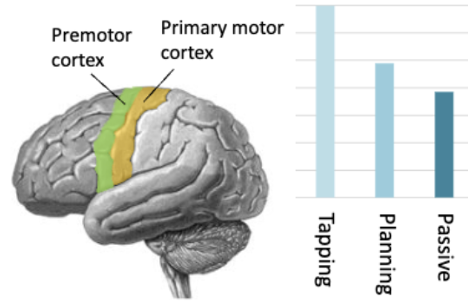

How the basal ganglia is involved with sounds

greater activation for beats

greater connectivity for beats

How the premotor cortex is involved with sounds

Strong activation for tapping

But also some just for listening

Rhythm (in music)

The arrangement of sounds in time, including onset times of notes.

Meter (in music)

The recurring pattern of beats in music, often grouped in twos or threes.

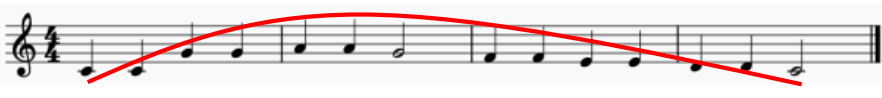

Melody

A series of tones arranged in a meaningful way that relate to each other.

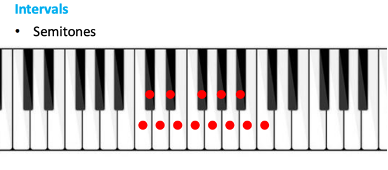

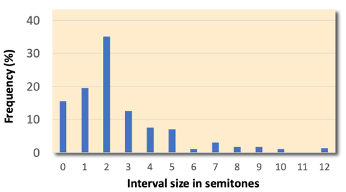

Intervals (in music)

The distance between two tones.

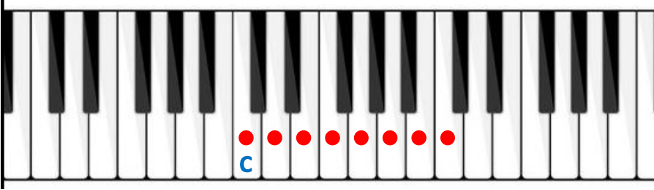

Semitones

The smallest interval used

Large intervals are rarer, but when they occur they are more likely to go up

Gap fill: Large intervals likely to be followed by motion in opposite direction
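To make the size of an interval concrete, the sketch below counts semitones between two frequencies. It assumes twelve-tone equal temperament, where each semitone multiplies frequency by the twelfth root of 2; the cards do not specify a tuning system, and the function names are hypothetical.

```python
import math


def semitones_between(f1_hz: float, f2_hz: float) -> float:
    """Signed interval from f1 to f2 in semitones (positive = upward)."""
    return 12.0 * math.log2(f2_hz / f1_hz)


def transpose(frequency_hz: float, semitones: float) -> float:
    """Frequency reached by moving the given number of semitones."""
    return frequency_hz * 2.0 ** (semitones / 12.0)


# An octave (a doubling of frequency) spans 12 semitones.
print(round(semitones_between(440.0, 880.0), 2))
# One semitone above 440 Hz.
print(round(transpose(440.0, 1), 2))
```

A downward interval comes out negative, which makes it easy to check patterns such as a large upward leap followed by motion in the opposite direction.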

Phrases

Smaller series of notes

Likely to have longer pauses at the end

Likely to be followed by a larger interval

Trajectories

Tonality

The organization of musical notes around a specific key.

How well do different notes fit into the key?

Amusia

A musical disorder characterized by difficulty in processing pitch and melody, often leading to problems with musical perception and performance.

They don’t recognize tones as tones, and therefore do not experience sequences of tones as music

The cognitivist approach

Proposes that listeners can perceive the emotional meaning of a piece of music, but that they don’t actually feel the emotions

The emotivist approach

Proposes that a listener’s emotional response to music involves actually feeling the emotions

Expectancy

The feeling that we know what’s coming up in music

an example of prediction

The beat creates a temporal expectation that says, “this is going to continue, with one beat following the other, so you know when to tap”

P600

P = positive

600 = it occurs about 600 milliseconds after the stimulus is presented.

It is a response to violations of syntax

Early right anterior negativity (ERAN)

Occurs in the right hemisphere, slightly earlier than the P600 response recorded by Patel

These electrical “surprise responses” are physiological signals linked to the surprise listeners experience at an unexpected sound.

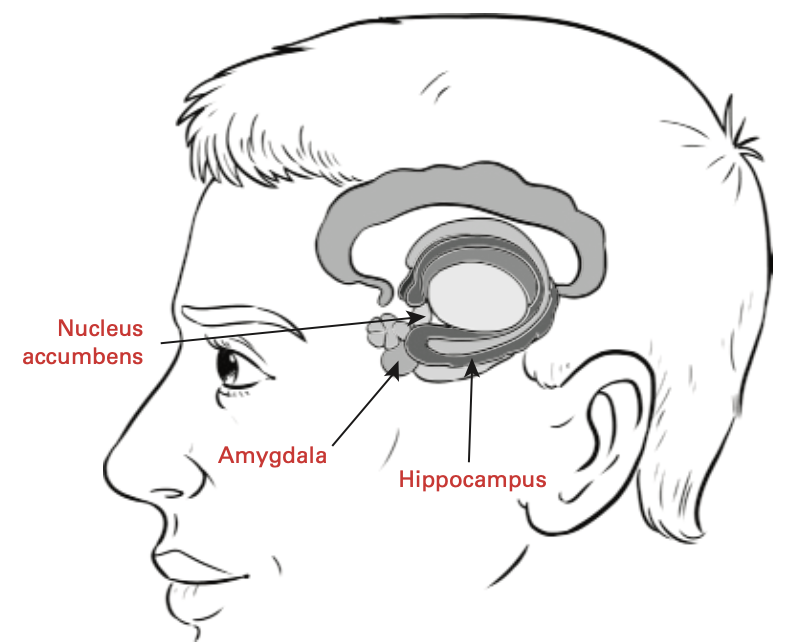

Brain regions associated with music emotions

The amygdala: also associated with the processing of non-musical emotions.

The nucleus accumbens: associated with pleasurable experiences, including musical “chills,” which often involve shaking and goosebumps.

The hippocampus: one of the central structures for the processing and storage of memories

The neurotransmitter dopamine

the nucleus accumbens (NAcc) is closely associated with the neurotransmitter dopamine, which is released into the NAcc in response to rewarding stimuli.

Opioid system (endorphins) = naltrexone

A study on the chemistry of musical emotions showed that emotional responses to music were reduced when participants were given the drug naltrexone, which counteracts the effect of pleasure-inducing opioids.

They concluded that the opioid system is one of the chemical systems responsible for positive and negative responses to music.

Infants' response to beat

Newborns (2–3 days)

Had neural “surprise” response to a missing beat

They have expectation for when next beat is going to come

Meter perception

Movement (bounced by parent)

Preferred meter that matched movement

Head-turning preference procedure

Measured movements of arms, legs, head, and torso

More movement to music than speech