Model Overfitting, Model Selection and Nearest Neighbor Classification


48 Terms

(T/F) Model overfitting leads to poor generalization performance

True

The ________ stays steady, but the __________ increases as the tree size increases

training error, testing error

Even when the model reaches the lowest error on the training set, it may have memorized the _______

noise or outliers

What causes the training error to stay steady while the testing error increases with tree size?

Limited training set size and high model complexity

Pre-pruning provides _________ and __________ the tree depth

early stopping (it stops expanding the tree), limits

What is the goal with the model selection use of a validation set?

Estimate a model's generalization error using “out-of-sample” data

It gives a better indication of real-world performance, provided the data split is carefully balanced to ensure both ___________ and ___________

robust model training, reliable error evaluation

Evaluates the model on a ____________ validation set that is ____________ from the training process

separate, excluded

What are the pruning stopping conditions (Pre-pruning)?

stop if all instances belong to the same class

stop if all attribute values are the same

stop if the number of instances is less than some user-specified threshold

stop if the class distribution of instances is independent of the available features, or if expanding the node does not improve impurity measures (e.g., Gini or information gain)

stop if the estimated generalization error falls below a certain threshold
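A minimal Python sketch of the first three pre-pruning checks, assuming each row is a list of attribute values and `labels` holds the class of each row. The function name `should_stop` and the default threshold `min_instances=5` are illustrative assumptions, not part of the original material.

```python
def should_stop(rows, labels, min_instances=5):
    """Return True if any of the first three pre-pruning conditions holds for a node."""
    if len(set(labels)) <= 1:             # all instances belong to the same class
        return True
    if len(set(map(tuple, rows))) <= 1:   # all attribute values are the same
        return True
    if len(rows) < min_instances:         # fewer instances than the user-specified threshold
        return True
    return False


# Hypothetical node: six distinct rows, two classes, threshold 5 -> keep expanding
print(should_stop([[i] for i in range(6)], ["a", "b"] * 3))
```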

Name a post pruning procedure

Subtree replacement

With subtree replacement, you trim the nodes of a decision tree in a _______________. If _____________ error improves after trimming, replace _________ by a __________ .

bottom-up fashion, generalization, sub-tree, leaf node

The class label of a leaf node is determined from the majority class of instances in the ______________

sub-tree
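A minimal sketch of bottom-up subtree replacement, assuming categorical attributes and a held-out validation set used as the estimate of generalization error. `Node`, `errors`, and `prune` are hypothetical names. Each node's `label` stores the majority class of the training instances that reached it, which becomes the leaf's class when its subtree is replaced; ties are resolved in favor of the simpler leaf, in the spirit of Occam's razor.

```python
class Node:
    """Decision-tree node; an internal node tests one categorical attribute."""

    def __init__(self, label, attribute=None, children=None):
        self.label = label              # majority class of training instances reaching this node
        self.attribute = attribute      # index of the tested attribute (None for a leaf)
        self.children = children or {}  # attribute value -> child Node

    def predict(self, x):
        if self.attribute is None:
            return self.label
        child = self.children.get(x[self.attribute])
        # Unseen attribute value: fall back to this node's majority class
        return child.predict(x) if child is not None else self.label


def errors(node, data):
    """Number of misclassified (x, y) pairs."""
    return sum(node.predict(x) != y for x, y in data)


def prune(node, validation):
    """Bottom-up subtree replacement, using validation error to estimate generalization error."""
    if node.attribute is None:
        return node
    # Prune the children first (bottom-up), passing each child only the records routed to it
    for value, child in list(node.children.items()):
        routed = [(x, y) for x, y in validation if x[node.attribute] == value]
        node.children[value] = prune(child, routed)
    leaf = Node(node.label)
    # Replace the subtree by a leaf if the validation error does not get worse
    return leaf if errors(leaf, validation) <= errors(node, validation) else node


# Hypothetical tree: the 'b' branch splits again, but validation data does not support it
tree = Node("yes", 0, {"a": Node("yes"),
                       "b": Node("yes", 1, {"x": Node("no"), "y": Node("yes")})})
val = [(("a", "x"), "yes"), (("b", "x"), "yes"), (("b", "y"), "yes")]
pruned = prune(tree, val)  # the whole tree collapses to a single 'yes' leaf
```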

Pros of a decision tree

versatile

extremely fast at classifying unknown records

relatively inexpensive to construct

robust to noise (especially when methods to avoid overfitting are employed)

can easily handle redundant attributes

can easily handle irrelevant attributes

Cons of a decision tree

interacting attributes: attributes that can distinguish between classes when used together but individually provide little or no information

due to the greedy nature of the splitting criteria in decision trees, such attributes could be passed over in favor of other attributes that are not as useful

Large decision trees are hard to interpret

Tree pruning is needed to tackle overfitting

Occam’s Razor

given two models of similar generalization errors, one should prefer the simpler model over the more complex model

A complex model has a greater chance of…

being fitted accidentally by errors (noise) in the data

Model Evaluation

estimates the performance of classifiers on previously unseen data

Hold-out

reserving k% of the data for training and (100 - k)% for testing
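A minimal hold-out sketch using only the standard library. The function name `holdout_split` and the fixed `seed` are illustrative assumptions; shuffling first keeps the split from depending on the original record order.

```python
import random


def holdout_split(records, k, seed=0):
    """Reserve k% of the records for training and the remaining (100 - k)% for testing."""
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)  # shuffle so the split is not order-dependent
    cut = round(len(shuffled) * k / 100)
    return shuffled[:cut], shuffled[cut:]


train, test = holdout_split(range(10), k=70)
print(len(train), len(test))  # 7 3
```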

Cross Validation (K-Fold)

is a type of repeated hold-out that partitions the data into k disjoint subsets, training on k - 1 partitions and testing on the remaining one
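A minimal k-fold sketch that works on record indices, assuming the records were shuffled beforehand. The round-robin fold assignment and the name `k_fold_splits` are illustrative choices; every index lands in exactly one test fold.

```python
def k_fold_splits(n, k):
    """Partition indices 0..n-1 into k disjoint folds and yield (train, test) index lists."""
    # Round-robin assignment keeps fold sizes within one element of each other
    folds = [list(range(i, n, k)) for i in range(k)]
    for i, test in enumerate(folds):
        # Train on the other k - 1 folds, test on fold i
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, test


for train_idx, test_idx in k_fold_splits(10, 5):
    print(test_idx)
```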

Model overfitting

The model memorizes the training data but performs poorly on unseen data

Class Imbalance

Many classification problems have skewed classes (more records from one class than another), which biases models toward the majority class

Evaluation measures such as accuracy are not well suited for …

imbalanced classes

Under ______________, accuracy can favor trivial models and fail to detect the rare classes, which are often the more interesting ones, such as:

frauds

intrusions

defects

class imbalance

Oversampling

replicating instances of the minority class

Downsampling

is when the frequency of the majority class is reduced to match the frequency of the minority class
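A minimal sketch of random oversampling and downsampling for a two-class problem. The function names, the `seed`, and the binary-class assumption are illustrative; both functions return new lists and leave the inputs untouched.

```python
import random


def oversample(records, labels, minority, seed=0):
    """Replicate random minority-class instances until both classes are equally frequent."""
    rng = random.Random(seed)
    minority_idx = [i for i, y in enumerate(labels) if y == minority]
    majority_count = len(labels) - len(minority_idx)
    extra = [rng.choice(minority_idx) for _ in range(majority_count - len(minority_idx))]
    keep = list(range(len(labels))) + extra
    return [records[i] for i in keep], [labels[i] for i in keep]


def downsample(records, labels, majority, seed=0):
    """Randomly drop majority-class instances until they match the minority count."""
    rng = random.Random(seed)
    majority_idx = [i for i, y in enumerate(labels) if y == majority]
    minority_idx = [i for i, y in enumerate(labels) if y != majority]
    kept = rng.sample(majority_idx, len(minority_idx)) + minority_idx
    return [records[i] for i in kept], [labels[i] for i in kept]


# Hypothetical skewed data: 8 negatives, 2 positives
X = list(range(10))
y = ["neg"] * 8 + ["pos"] * 2
X_over, y_over = oversample(X, y, minority="pos")
X_down, y_down = downsample(X, y, majority="neg")
```

Neither result reflects the real class distribution, which is exactly the caveat in the next card.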

Oversampling and downsampling do NOT…

reflect the real distribution of data and may lead to poor generalization

Precision

the fraction of the model's positive predictions that are correct (true positives out of all positive predictions)

True Positive Rate (sensitivity)

the fraction of positive examples predicted correctly by the model, out of all the positive examples

True Negative Rate (specificity)

the fraction of negative examples predicted correctly by the model, out of all the negative examples
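The three definitions above reduce to ratios of confusion-matrix counts (TP, FP, TN, FN). A small sketch; the function name `rates` and the example counts are hypothetical.

```python
def rates(tp, fp, tn, fn):
    """Precision, TPR (sensitivity) and TNR (specificity) from confusion-matrix counts."""
    precision = tp / (tp + fp)  # correct positives among all positive predictions
    tpr = tp / (tp + fn)        # correct positives among all actual positives
    tnr = tn / (tn + fp)        # correct negatives among all actual negatives
    return precision, tpr, tnr


# Hypothetical counts for 100 test records
precision, tpr, tnr = rates(tp=40, fp=10, tn=45, fn=5)
```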

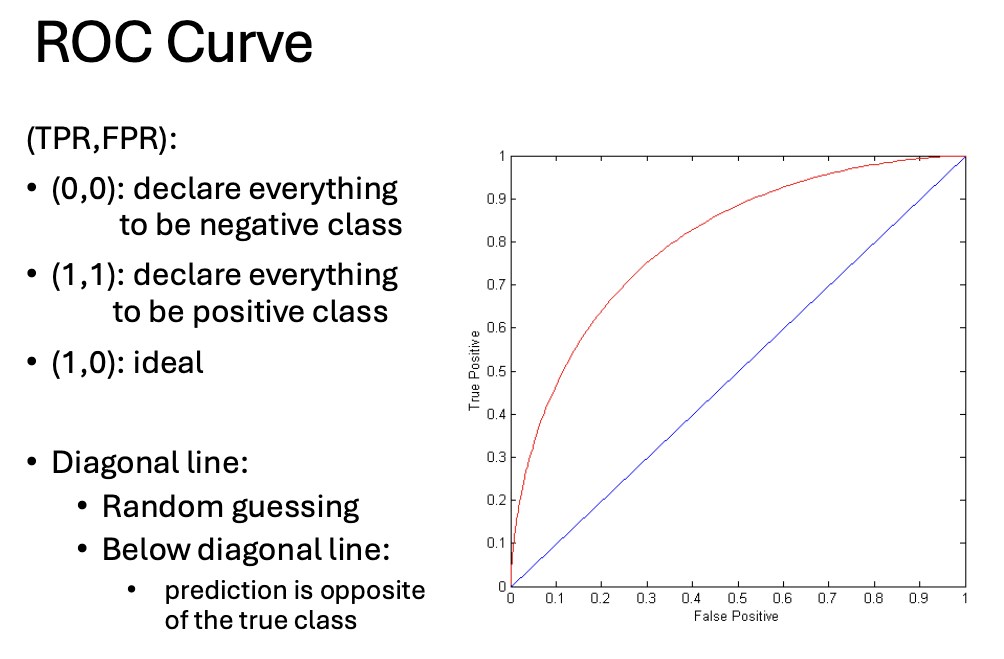

ROC (Receiver Operating Characteristics)

is a graphical approach for displaying the trade-off between detection rate and false alarm rate, plotting the TPR against the FPR

To draw an ROC curve, the classifier must produce ____________ output

continuous-valued

Nearest Neighbor classification is mainly used when all attribute values are _______________, although it can be modified to deal with _______________

continuous, categorical attributes

Nearest Neighbor Classification

estimates the classification of an unseen instance using the classification of the instance or instances that are the closest to it (lazy learner)

Pros of KNN

simple and intuitive

no training phase

versatile

adaptable to multi-class problems

Cons of KNN

computationally expensive

sensitive to feature scaling

sensitive to the choice of k and the distance metric

K-NN can struggle with imbalanced datasets

If k is too small, it can be …

sensitive to noise points

If k is too large…

the neighborhood may include points from other classes

A major problem when using the Euclidean distance formula (and many other distance measures) is that the __________ frequently swamp the ____________

larger values, smaller ones
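A sketch of the swamping effect and of min-max normalization, one common fix. The records (height in metres, income in dollars) and the name `min_max_scale` are hypothetical; `math.dist` (Python 3.8+) computes the Euclidean distance.

```python
import math


def min_max_scale(rows):
    """Rescale every attribute (column) to [0, 1] so that no attribute dominates the distance."""
    cols = list(zip(*rows))
    lows = [min(c) for c in cols]
    spans = [(max(c) - min(c)) or 1 for c in cols]  # constant attribute: avoid dividing by zero
    return [[(v - lo) / s for v, lo, s in zip(row, lows, spans)] for row in rows]


# Hypothetical records: [height in metres, annual income in dollars]
people = [[1.5, 20000], [1.9, 21000], [1.5, 90000]]
print(math.dist(people[0], people[1]))  # driven almost entirely by the $1,000 income gap
scaled = min_max_scale(people)
print(math.dist(scaled[0], scaled[1]))  # after scaling, the 0.4 m height gap is no longer swamped
```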

What proximity measure is the best for documents?

cosine similarity
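Cosine similarity compares the direction of two term-frequency vectors, so a document and a longer document with the same word proportions score 1. A minimal sketch; the vectors are hypothetical term counts over a shared three-word vocabulary.

```python
import math


def cosine_similarity(a, b):
    """cos(a, b) = (a . b) / (||a|| * ||b||); returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


doc1 = [3, 0, 2]
doc2 = [6, 0, 4]  # same proportions as doc1, twice as long
doc3 = [0, 5, 0]  # shares no terms with doc1
print(cosine_similarity(doc1, doc2), cosine_similarity(doc1, doc3))
```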

Class weighting is crucial in critical systems like:

spam filtering and cancer diagnosis
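The cards do not specify a weighting scheme, so this sketch assumes one common heuristic: weight each class inversely to its frequency, w_c = n / (C * n_c), with n records and C classes (scikit-learn calls this "balanced"). The labels and function names are hypothetical. A classifier that always predicts the majority class is 90% accurate here but has a weighted error of 0.5.

```python
from collections import Counter


def balanced_class_weights(labels):
    """Weight each class inversely to its frequency: w_c = n / (C * n_c)."""
    counts = Counter(labels)
    n, c = len(labels), len(counts)
    return {cls: n / (c * cnt) for cls, cnt in counts.items()}


def weighted_error(y_true, y_pred, weights):
    """Misclassification rate where each mistake costs the weight of its true class."""
    total = sum(weights[y] for y in y_true)
    wrong = sum(weights[t] for t, p in zip(y_true, y_pred) if t != p)
    return wrong / total


# Hypothetical diagnosis data: 9 healthy patients, 1 cancer case
y = ["healthy"] * 9 + ["cancer"]
w = balanced_class_weights(y)
print(weighted_error(y, ["healthy"] * 10, w))
```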

A nearest neighbor classifier represents each example as a _________ in a d-dimensional space, where d is the number of attributes

data point

Given a test instance, we compute its proximity to the _____________ according to one of the proximity measures

training instances

Find the k training instances that are ________ to the unseen instance. Take the ___________ classification for these k instances.

closest, most commonly occurring
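The three steps above (represent records as points, compute proximity, take the majority vote of the k closest) can be sketched as follows, assuming Euclidean distance and training data given as `(point, label)` pairs. The name `knn_predict` and the default `k=3` are illustrative.

```python
import math
from collections import Counter


def knn_predict(train, query, k=3):
    """Classify `query` by majority vote among its k nearest training instances."""
    # Sort training instances by Euclidean distance to the query point
    by_distance = sorted(train, key=lambda pair: math.dist(pair[0], query))
    nearest_labels = [label for _, label in by_distance[:k]]
    # Ties in the vote go to the label seen first, i.e. the one held by the closer neighbor
    return Counter(nearest_labels).most_common(1)[0][0]


# Hypothetical 2-D training data with two well-separated classes
train = [((0, 0), "A"), ((0, 1), "A"), ((1, 0), "A"),
         ((5, 5), "B"), ((5, 6), "B"), ((6, 5), "B")]
print(knn_predict(train, (0.5, 0.5)), knn_predict(train, (5.5, 5.5)))
```

There is no training phase: all the work (distance computations and sorting) happens at prediction time, which is why KNN is called a lazy learner and why it is computationally expensive on large datasets.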

The classifier's continuous-valued outputs are used to ____ test records, from the most likely positive class record to the least likely positive class record

rank

By using different thresholds on this value, we can create _________________ of the classifier with different TPR/FPR trade-offs

different variations

Many classifiers produce only ___________________

discrete outputs (i.e., predicted class)

How do you construct an ROC curve?

• Use a classifier that produces a continuous-valued score for each instance

• The more likely it is for the instance to be in the + class, the higher the score

• Sort the instances in decreasing order according to the score

• Apply a threshold at each unique value of the score

• Count the number of TP, FP, TN, FN at each threshold
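The construction steps above, sketched directly: sort the unique scores in decreasing order, treat each as a threshold (predict + when score >= threshold), count TP, FP, TN, FN, and record the (FPR, TPR) point. The function name and data are hypothetical; labels are assumed to be 1 for + and 0 for -, with at least one of each. This version is quadratic in the number of records, which is fine for illustration.

```python
def roc_points(scores, labels):
    """(FPR, TPR) points from thresholding at each unique score; labels: 1 = +, 0 = -."""
    points = [(0.0, 0.0)]  # threshold above every score: nothing is predicted +
    for t in sorted(set(scores), reverse=True):  # unique scores, highest first
        tp = fp = tn = fn = 0
        for s, y in zip(scores, labels):
            predicted_pos = s >= t
            if predicted_pos and y == 1:
                tp += 1
            elif predicted_pos:
                fp += 1
            elif y == 0:
                tn += 1
            else:
                fn += 1
        points.append((fp / (fp + tn), tp / (tp + fn)))
    return points


# Hypothetical scores and true labels for four test records
print(roc_points([0.9, 0.8, 0.7, 0.6], [1, 1, 0, 1]))
```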

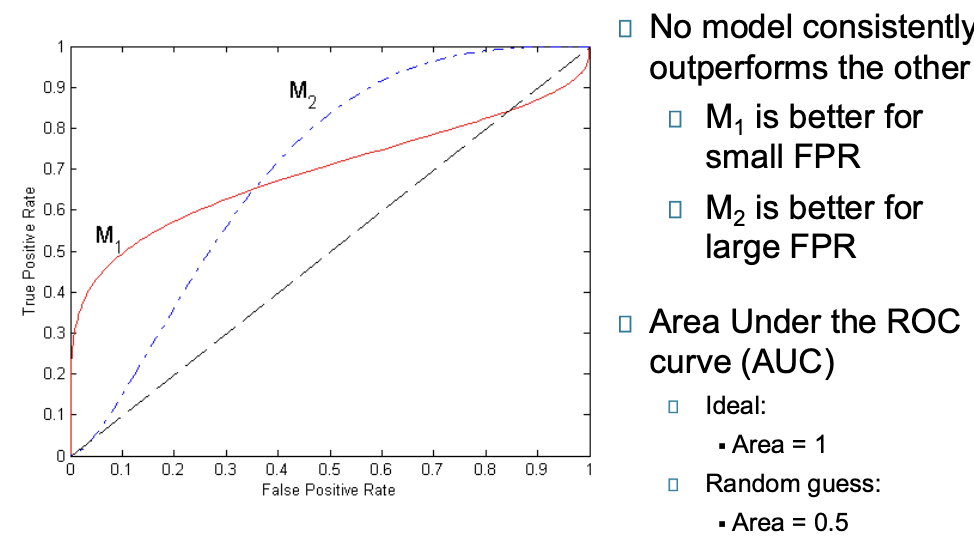

No model consistently ___________ the other

outperforms