Unit 10 - The Replication Crisis & Meta-analysis + Literature Reviews


The Replication Crisis

_____________________ - Began with a paper in medicine. John Ioannidis wrote an article arguing that “the majority of research claims are false findings” because…

Statistics -> blamed for the replication crisis

Studies w/ a small sample size: findings are less likely to be true

Studies with SMALL effects: more likely to fail to replicate

The more tests you run, the more likely it is that many of the results are false

The more flexibility in design and in how constructs are defined, the more likely the results are false

The greater the financial and other interests, the more likely the results are false: if something is funded very well, results may be shaped by those interests

Therefore, most research findings are false! We should look at fields other than medicine
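The "more tests" point can be made concrete with a little arithmetic. The sketch below assumes the tests are independent, every null hypothesis is true, and each test uses alpha = .05; the function name is made up for illustration.

```python
def familywise_error_rate(num_tests: int, alpha: float = 0.05) -> float:
    """Chance of at least one false positive across independent tests
    when every null hypothesis is actually true (assumed setup)."""
    if num_tests < 0:
        raise ValueError("num_tests must be non-negative")
    # P(no false positives) = (1 - alpha) ** num_tests, so take the complement.
    return 1.0 - (1.0 - alpha) ** num_tests


if __name__ == "__main__":
    for k in (1, 5, 20):
        print(k, "tests ->", round(familywise_error_rate(k), 3))
```

Under these assumptions, 20 tests already give roughly a 64% chance of at least one false positive.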

Replication crisis in Psych

____________________ - The crisis reached psychology through a number of events.

Daryl Bem

John Bargh

Diederik Stapel

A number of authors got together to see how reproducible psychological science is. Hundreds of studies from the best journals:

Between 1/3 and 1/2 replicated

Between 1/2 and 2/3 did NOT

Daryl Bem

____________________ - Argued for ESP (extrasensory perception) and got a paper published in JPSP arguing for it. He presented subjects with primes and showed they affected judgement even though the priming came AFTER the judgement, arguing that subjects knew what was coming. This created much controversy: either we accept that ESP exists or there’s something wrong with the way we do research

John Bargh

____________________ - Priming: participants primed with old/elderly words walked more slowly afterwards. But much of this could be explained by experimenter bias. Kahneman referred to priming as a dumpster fire: a popular area of research that failed to replicate

Diederik Stapel

____________________ - Data fraud/falsified research

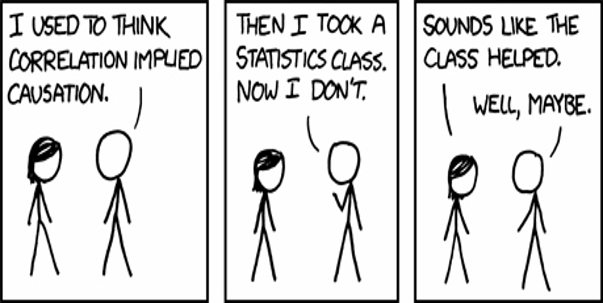

Statistical significance testing

Why is psych research failing to replicate?

____________________ - the argument that if your result is p < .05, it must be important, and if it is p > .05, it is NOT important.

ISSUE: If you run enough participants, you will eventually find a statistically significant result. That’s not the way science is supposed to work
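One reading of this issue is that a tiny effect becomes "significant" once the sample is large enough. The sketch below assumes a one-sample z-test on a standardized effect d (so z = d × √n); the function name and the example values are illustrative, not from the lecture.

```python
import math


def two_sided_p(effect_size_d: float, n: int) -> float:
    """Two-sided p-value for a one-sample z-test (assumed design)
    of a standardized mean difference d with n participants."""
    z = effect_size_d * math.sqrt(n)
    # For a standard normal, P(|Z| > |z|) = erfc(|z| / sqrt(2)).
    return math.erfc(abs(z) / math.sqrt(2))


if __name__ == "__main__":
    tiny_effect = 0.05  # hypothetical effect, far below Cohen's "small"
    for n in (100, 400, 1600, 10000):
        print(n, "participants -> p =", round(two_sided_p(tiny_effect, n), 4))
```

The effect size never changes here; only the sample size does, which is why p < .05 on its own says little about importance.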

Small sample sizes

Why is psych research failing to replicate?

___________________ - Small samples produce quirky results (small sample fallacy) which you cannot replicate. We can only get 60 participants for UR, for example

HARKing

Why is psych research failing to replicate?

__________________ - Hypothesizing After the Results are Known: the hypothesis is written AFTER seeing the results, but it is supposed to be stated BEFORE the study

Shrinking effect sizes

Why is psych research failing to replicate?

___________________ - The magnitude of an effect shrinks more and more, eventually disappearing. Could be the result of an effect that really isn’t there, where by chance you originally got a large effect

Researcher gets an interesting result, gets published, keeps trying to replicate it.

Questionable research practices

Why is psych research failing to replicate?

_____________________ - Not doing research the way it’s supposed to be done

Example: Wonder Woman “posing” example -> Didn’t get the results they wanted, so they added more participants, and more, and more, until there were enough to see the effect they wanted
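Why "adding more participants until it works" is a problem can be shown with a small simulation. This is a sketch under stated assumptions, not the original study's analysis: the true effect is zero, scores are standard normal, and a z-test with known SD is run at every look. All names and settings are hypothetical.

```python
import math
import random


def is_significant(sample) -> bool:
    """Two-sided z-test of mean 0 with known SD 1 at alpha = .05."""
    z = (sum(sample) / len(sample)) * math.sqrt(len(sample))
    return abs(z) > 1.96


def false_positive_rate(peek_every: int, max_n: int,
                        simulations: int, rng: random.Random) -> float:
    """Share of null studies (true effect = 0) declared significant
    at any look, stopping as soon as a look is significant."""
    hits = 0
    for _ in range(simulations):
        sample = []
        while len(sample) < max_n:
            # Add another batch of participants, then test again.
            sample.extend(rng.gauss(0.0, 1.0) for _ in range(peek_every))
            if is_significant(sample):
                hits += 1
                break
    return hits / simulations


if __name__ == "__main__":
    rng = random.Random(2024)
    one_look = false_positive_rate(100, 100, 2000, rng)
    many_looks = false_positive_rate(10, 100, 2000, rng)
    print("test once at n=100:", round(one_look, 3))
    print("test after every 10 participants:", round(many_looks, 3))
```

Testing once keeps the false positive rate near the nominal 5%; testing after every batch and stopping at the first significant look pushes it well above that, even though nothing is there.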

Publication bias

Why is psych research failing to replicate?

____________________ - Non-statistically significant research is not published, so in journals you ONLY see successes, not failures. Gives a false impression because we never get to see the failures
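A small simulation can show how publishing only significant results inflates the effect readers see (and why later replications look like "shrinking" effects). The sketch assumes a true effect of d = 0.2, 20 participants per group, and a normal approximation for the sampling error of d (SE ≈ √(2/n)); all numbers and names are hypothetical.

```python
import math
import random
import statistics


def simulate_observed_effects(true_d, n_per_group, studies, rng):
    """Draw one observed d per study using the approximation
    SE(d) ~ sqrt(2 / n_per_group) (an assumption of this sketch)."""
    se = math.sqrt(2.0 / n_per_group)
    return [rng.gauss(true_d, se) for _ in range(studies)], se


def published_only(effects, se):
    """Keep only studies significant at two-sided alpha = .05."""
    return [d for d in effects if abs(d / se) > 1.96]


if __name__ == "__main__":
    rng = random.Random(7)
    effects, se = simulate_observed_effects(0.2, 20, 5000, rng)
    published = published_only(effects, se)
    print("studies run:", len(effects), "published:", len(published))
    print("mean d, all studies:", round(statistics.mean(effects), 2))
    print("mean d, published only:", round(statistics.mean(published), 2))
```

The file drawer holds most of the studies, and the published average sits far above the true effect.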

Publish or perish

Why is psych research failing to replicate?

________________ - Pressure to produce results that are statistically significant so you can be published, get promotions, etc.

Could put people off doing important research that is just not statistically significant, or cause them to publish questionable results

Eye-catching results

Why is psych research failing to replicate?

____________________ - Looking for results that will bring us attention, but which could be one-off and unreplicable. We always look for counterintuitive results that no one would expect.

Research design 101

Why is psych research failing to replicate?

________________ - Just being sloppy in research design

Conflicts of interest

Why is psych research failing to replicate?

___________________ - personal interests that can impact judgement

If you get a big grant to develop a new therapy, will you publish a lot of research showing that it doesn’t work?

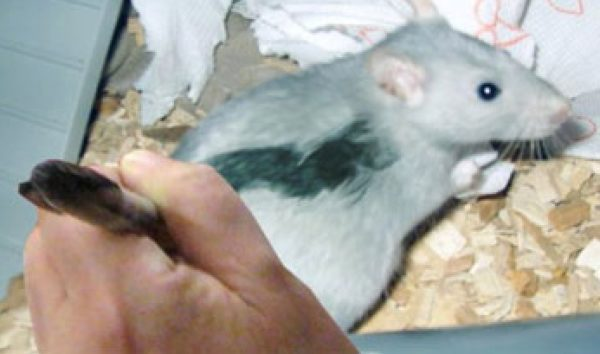

Fraud

Why is psych research failing to replicate?

________________ - deception for personal gain; fraudulent results fail to replicate (Stapel, painting the mice)

Not valuing replication studies

Why is psych research failing to replicate?

_______________ - journals are reluctant to publish studies that just replicate old ones. People want exciting new research.

It is not a tradition in psych research to reproduce old studies, whereas it may be in the hard sciences

Responses to the Crisis

_______________ - There were many different responses; some of the old-school psychologists were not happy

Susan Fiske

Roy Baumeister

Wolfgang Stroebe

Susan Fiske

_______________ - was furious and accused her peers of ‘methodological terrorism’. What she was talking about was that when she and others published research, “terrorists” appeared in blogs and criticized how the research was done.

She felt that’s not the way science should work or how colleagues should interact with each other. Told them to write up their criticism and publish it in a journal so there can be a debate.

Critics argued those journals are controlled by old-school researchers like her: “We often will not get our critiques published, so we’re forced to go to blogs.”

Roy Baumeister

_______________ - A better response: a paper arguing that replications fail because the replicating researchers are not as experienced as the original researchers.

Running a study is a skill, like plumbing: you don’t immediately know how to do it.

He makes an interesting argument for small sample sizes, suggesting it’s better to do a number of small studies honing your technique than one big super-size study which has lots of power but could be flawed

Wolfgang Stroebe

_______________ - Wrote “What can we learn from many labs replications?”, about studies done to try to replicate psychology research

Refers to attempts by multiple laboratories that failed to replicate original findings

Critics of “replication crisis” article

Stroebe says:

ISSUE: Would need a representative sample of ALL social psychology studies, not just handpicked studies to replicate

ISSUE: Given social psychology operates in a SOCIAL context, a failure to replicate a particular study tells us little (referenced Aronson & Mills)

SOLUTION: Replicating or failing to replicate a research result is a scientific finding and, as such, not final… It does not mean one is right and one is not; we just have 2 results

SOLUTION: Our focus should be on theories, and no one crucial experiment can determine the fate of a theory… Need multiple studies to see if a theory is supported or not

Aronson & Mills

_______________ - Women who went through an embarrassing initiation to join a group reported that the group was more interesting than those who didn’t.

A study involving sex done in the 1960s would be interpreted very differently in 2020 because attitudes toward sex have changed

General public think…

Lilienfeld argued the public is skeptical of psych for the following reasons… says it’s not thought of as a proper science

People think psych is just “common sense”…

“Psych doesn’t use the scientific method”

“Psychology cannot generalize because everyone is unique”

“Can’t always predict people’s behaviour”

“Psych is not useful to society”

“Psych results don’t replicate”

Ended the paper by saying there is some reason for the public to be skeptical. If you wander into Chapters and find the psychology section, you’ll find a self-help section that’s not scientific

Psych is just “common sense”

_______________ - a lot of results are obvious in hindsight. However, there are ones that run contrary to common sense. And even the obvious ones are valuable.

“Psychology does not use the scientific method”

_______________ - we do.

“Psychology cannot generalize because everyone is unique”

_______________ - The claim: if we do a study on this group, it cannot generalize to another. Both true and false: everyone who has cancer is unique, but that doesn’t mean you can’t make generalizations. You must also recognize they are unique.

“Psychology cannot make predictions”

_______________ - The claim: we can’t predict how people will behave in a particular situation. But a weather forecaster can’t predict weather beyond 3 days, and many sciences struggle to make predictions of all sorts (earthquakes). Psychology can make predictions; of course they’re limited

“Psychology not useful to society”

_______________ - it is! For instance, look at treatments for people with autism, psychological disorders, etc.

“Psychology’s results don’t replicate”

_______________ - there are cases that don’t, but if you look at the bulk, many replicate again and again

Dr. Phil

_______________ - If you ask the world who the most famous psychologist is, they’ll say __________. He endorsed polygraphs and EEG feedback for ADHD, yet the APA invited him to speak at their convention. Not a reputable psychologist anymore.

Ferguson

Wrote a response to Lilienfeld. Contrasted the roles of a lawyer and a scientist: the lawyer’s job is advocating for particular people, the scientist’s is advocating for the truth

Says recently we’ve begun to confuse science and advocacy: “The difficulty is that advocacy and science are diametrically opposed in method and aim…

Science is dedicated to a search for ‘truth’ even if that truth is undesired, inconvenient, unpalatable, or challenging to one’s personal or the public’s beliefs and goals…

Advocacy is concerned with constructing a particular message in pursuit of a predetermined goal that benefits oneself or others”

Where do we go from here?

___________________ - There have been many lessons learned. Crises come and go; the better we handle the current one, the better we will handle future crises

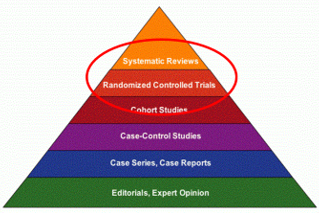

The Pyramid of Research Evidence

___________________ - When we think of this, the lowest level is editorials, then case studies, then case-control studies, etc. All are valuable. Randomized controlled trials were long thought to be at the top of the evidence pyramid, but these days there’s something higher: systematic reviews.

Literature reviews

___________________ - combine the results from many studies, giving greater power and value. Permit researchers to address big, broad questions. Baumeister said they are special!

The best form of research evidence

Three kinds:

Narrative reviews

Systematic reviews

Meta-analysis

Narrative reviews

Types of literature reviews 1/3

__________________ - examine the published literature non-systematically to address broad theoretical questions. Ex. a term paper

ISSUE: Authors often cherry-pick the info that suits them best

Systematic reviews

Types of literature reviews 2/3

_________________ - systematically review the literature so all of it is represented in some way— NOT a cherry-picked sample

Meta-analysis

Types of literature reviews 3/3

________________ - systematically review info from the literature statistically. ALL different types of studies conducted…

The term was created by Gene Glass. He did a large one asking “does psychotherapy work?”, concluding that it does.

Became popular in psychology, education, and medicine

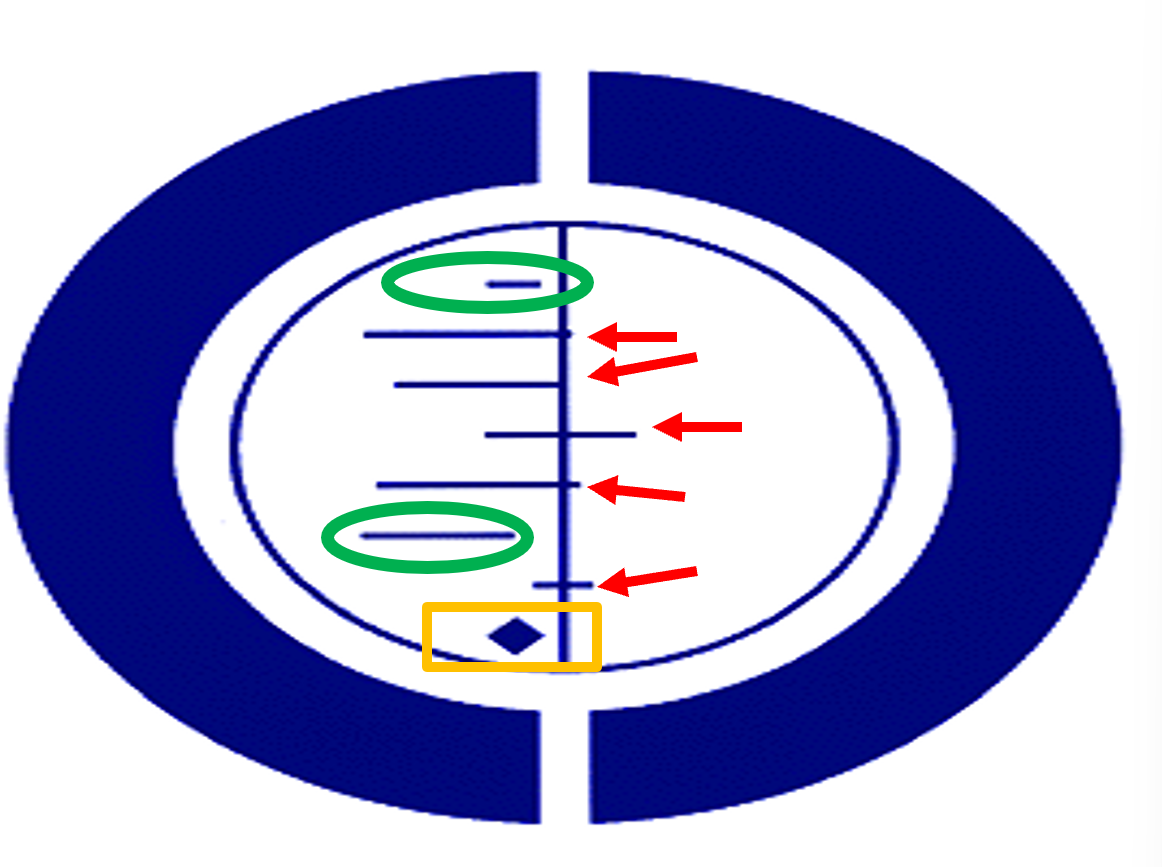

Meta-analysis EXAMPLE

The Cochrane Collaboration: does meta-analyses on topics related to medicine

Got its symbol from a study on premature babies suffering from lung issues. It was thought a treatment might be helpful.

Two studies suggested it was effective: the effect was different from 0 (green)

5 studies didn’t find any difference (red)

A meta-analysis combined the results from all 7. The combined result didn’t touch the 0 line, suggesting the drug was effective; many babies saved (yellow)
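How seven studies, most of them individually inconclusive, can combine into a clear result can be sketched with inverse-variance (fixed-effect) pooling. This is one common way to pool, assumed here for illustration; the seven (effect, standard error) pairs are invented and are NOT the data behind the Cochrane symbol.

```python
import math

# Hypothetical (effect, standard_error) pairs; positive = treatment helps.
STUDIES = [(0.60, 0.25), (0.55, 0.27), (0.30, 0.30), (0.25, 0.35),
           (0.40, 0.45), (0.10, 0.40), (0.35, 0.50)]


def confidence_interval(effect, se, z=1.96):
    """Approximate 95% confidence interval for one estimate."""
    return effect - z * se, effect + z * se


def excludes_zero(interval):
    """True when the interval does not touch the no-effect line at 0."""
    low, high = interval
    return low > 0 or high < 0


def pool_fixed_effect(studies):
    """Inverse-variance weighted mean effect and its standard error."""
    weights = [1.0 / se ** 2 for _, se in studies]
    total = sum(weights)
    pooled = sum(w * effect for w, (effect, _) in zip(weights, studies)) / total
    return pooled, math.sqrt(1.0 / total)


if __name__ == "__main__":
    for effect, se in STUDIES:
        ci = confidence_interval(effect, se)
        print(effect, "differs from 0" if excludes_zero(ci) else "touches 0")
    pooled, pooled_se = pool_fixed_effect(STUDIES)
    print("pooled:", round(pooled, 2), confidence_interval(pooled, pooled_se))
```

With these made-up numbers, two studies clear the zero line on their own and five do not, yet the pooled interval is narrow enough to exclude zero.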

Steps to conducting a meta-analysis

Pick a topic

Collect all relevant quantitative studies (a sufficient #, but not so many that it’s overwhelming)

Explicitly state exclusion/inclusion criteria for studies (which studies are in, which are out?). Example: a meta-analysis was done in Australia and researchers in Brazil started one on the same topic, but they had missed many important studies

Meta-analysis requires calculating effect sizes

IQ Scores

The average IQ in the population is μ=100

The standard deviation is σ=15: the range within 15 points above or below μ=100 captures most IQ scores (68%)

IQ scores are normally distributed; roughly 68% of people have scores between 85 and 115

A herbal supplement gives the treatment group an advantage of 3 IQ points over the control group (x̄=103)

d=(x̄-μ)/σ=(103-100)/15=0.20

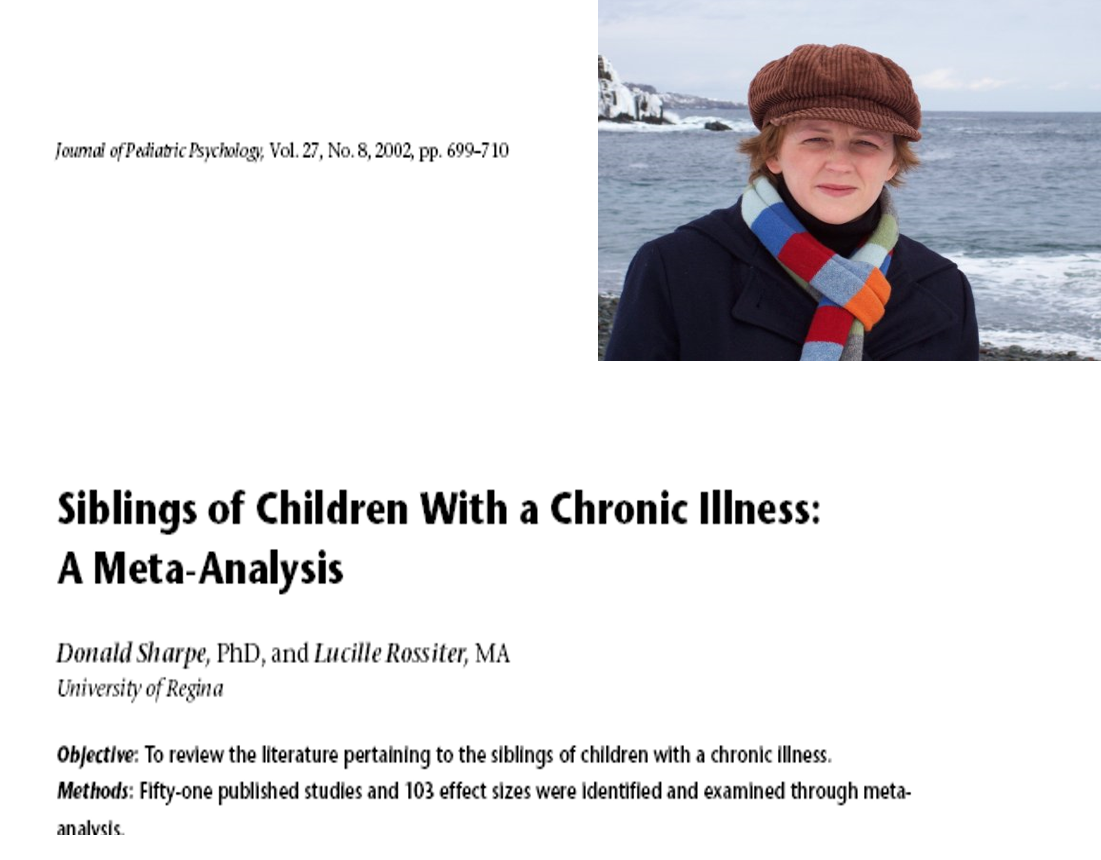
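The IQ numbers can be checked with Python's standard library. This is a minimal sketch: the function names are made up, and the sign convention (treatment mean minus population mean, so an advantage gives a positive d) is an assumption of the sketch.

```python
from statistics import NormalDist

IQ_MEAN = 100.0  # population mean (mu)
IQ_SD = 15.0     # population standard deviation (sigma)


def share_within_one_sd(mean: float, sd: float) -> float:
    """Proportion of a normal distribution within one SD of the mean."""
    dist = NormalDist(mu=mean, sigma=sd)
    return dist.cdf(mean + sd) - dist.cdf(mean - sd)


def cohens_d(group_mean: float, comparison_mean: float, sd: float) -> float:
    """Standardized mean difference: how many SDs apart the means are."""
    return (group_mean - comparison_mean) / sd


if __name__ == "__main__":
    print("share of IQ scores in 85-115:",
          round(share_within_one_sd(IQ_MEAN, IQ_SD), 3))
    print("d for a 3-point advantage:",
          round(cohens_d(103.0, IQ_MEAN, IQ_SD), 2))
```

A 3-point advantage is a fifth of a standard deviation, which is why it counts as a "small" effect.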

Cohen’s d

______________ - a popular measure of effect size

A d of .2 is a “small,” .5 a “medium,” and .8 a “large” effect size

Each study outcome is converted to an effect size

These effect sizes are then averaged (summed and divided by the number of effect sizes).
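The benchmarks and the averaging step can be sketched in a few lines. The effect sizes are invented, the function names are hypothetical, and the label "below small" for values under .2 is a choice made for this sketch, not one of Cohen's benchmarks.

```python
from statistics import mean


def label_effect_size(d: float) -> str:
    """Label d using the .2 / .5 / .8 benchmarks (sign is ignored)."""
    size = abs(d)
    if size >= 0.8:
        return "large"
    if size >= 0.5:
        return "medium"
    if size >= 0.2:
        return "small"
    return "below small"  # assumed label, not one of the benchmarks


def average_effect_size(effect_sizes) -> float:
    """Unweighted mean: sum the effect sizes and divide by their count."""
    return mean(effect_sizes)


if __name__ == "__main__":
    study_ds = [0.2, 0.5, 0.8]  # hypothetical effect sizes from three studies
    overall = average_effect_size(study_ds)
    print("mean d:", round(overall, 2), "->", label_effect_size(overall))
```

Published meta-analyses often weight studies (for example by sample size or precision) rather than taking a plain average; the unweighted mean is used here because it matches the description in the notes.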

Questions about effect size

Is there an effect? That is, is the average effect size different from zero?

Does the set of effect sizes vary?

Can we explain variability in effect sizes by moderator variables?

Effect sizes EXAMPLE

Meta-analysis requires calculating effect sizes

EXAMPLE: Having a sibling with a chronic illness may cause problems for the healthy sibling, because parents pay more attention to one kid than the other

Mean effect size d = -.20 (small and negative; 51 published studies, 103 effect sizes)

Effect sizes varied:

Reports from child (-.13) / parents (-.23)

What you were measuring: psych functioning (-.22), sibling relationship (+.12)

Chronic illness type: cancer (-.28) and cardiac (+.20)

Severity: Effects more likely when severity was LESS (-.26) compared to (-.17)
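A moderator analysis like this one boils down to grouping effect sizes by a study characteristic and comparing the group means. The sketch below uses four invented records, not the 103 effect sizes from the actual meta-analysis, and the field names are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def mean_effect_by_moderator(records, moderator):
    """Average the effect sizes ("d") within each level of a moderator."""
    groups = defaultdict(list)
    for record in records:
        groups[record[moderator]].append(record["d"])
    return {level: mean(values) for level, values in groups.items()}


if __name__ == "__main__":
    # Hypothetical effect sizes, each tagged with two moderators.
    records = [
        {"d": -0.30, "informant": "parent", "illness": "cancer"},
        {"d": -0.20, "informant": "parent", "illness": "cardiac"},
        {"d": -0.10, "informant": "child", "illness": "cancer"},
        {"d": -0.20, "informant": "child", "illness": "cardiac"},
    ]
    print(mean_effect_by_moderator(records, "informant"))
    print(mean_effect_by_moderator(records, "illness"))
```

If the group means differ clearly, the moderator helps explain why the effect sizes vary; a full analysis would also test whether that difference is larger than chance.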

Cumulative and meta-meta-analyses

_____________ - Take the results from multiple meta-analyses and put them together

Qualitative meta-analysis

_______________ - using qualitative research and combining it into a meta-analysis

Four conclusions from any literature review (Baumeister)

Hypothesis = correct

Hypothesis not proven but best guess

There is not enough evidence or the evidence is flawed!

HYPOTHESIS IS WRONG

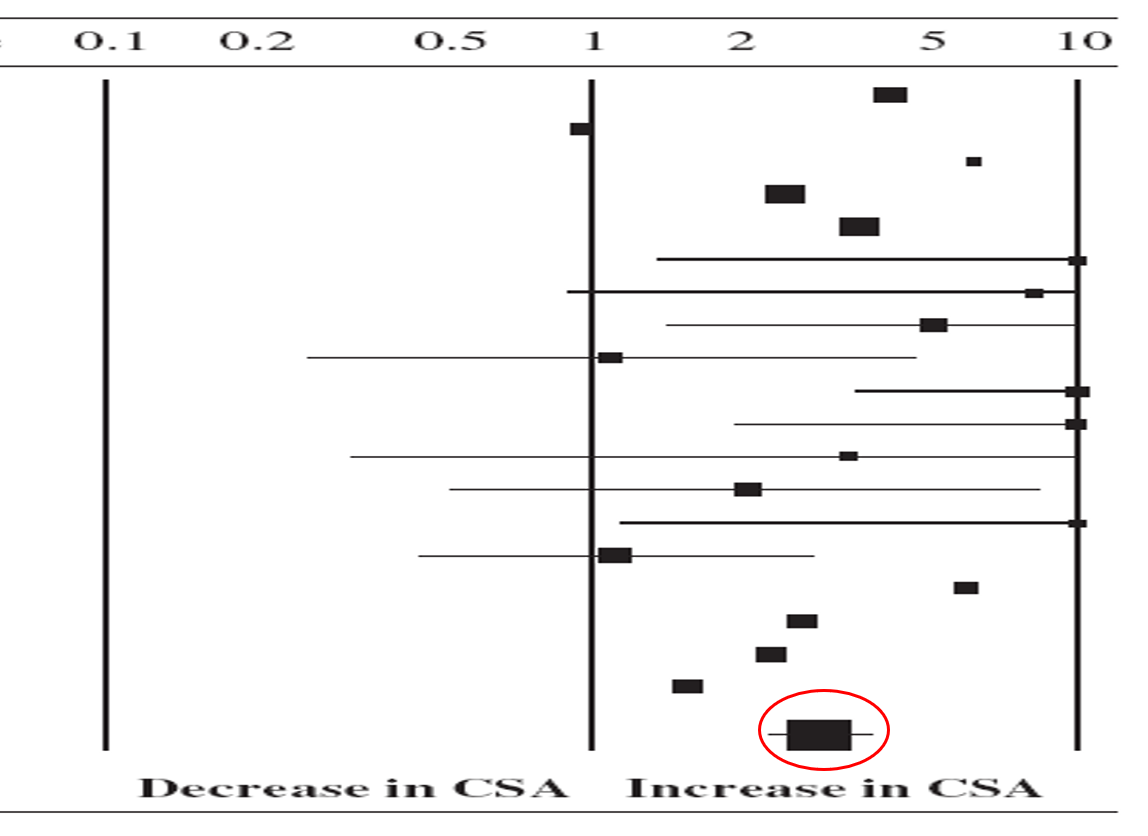

Blinding

Assumption that non-epileptic seizures (no changes to brainwaves) may relate to child sexual abuse (CSA). A meta-analysis by him and Cathy Faye in Australia seemed to support this: the effect size seemed quite large and substantial

BUT the studies were then divided into those with blinded and those with non-blinded researchers. Researchers who were not blinded were more likely to rate someone as having experienced CSA when they were searching for it

…the recommendation was that researchers MUST be blinded to avoid this

Hypothesis was WRONG or the evidence is flawed

3 Criticisms of Meta-Analysis (Eysenck)

_____________________ - They were controversial when they first appeared. Hans Eysenck said:

Apples & Oranges

Garbage in, garbage out

File Drawer

Apples and oranges

Three Criticisms of Meta-Analysis 1/3

_____________________ - combining results from studies that measure different things

There are all different types of outcomes and theories. How could you get to one answer when they are so different? (all kinds of therapies, all kinds of outcomes)

SOLUTION: important to define the collection of studies properly to avoid combining studies that measure different things

Garbage in, garbage out

Three Criticisms of Meta-Analysis 2/3

__________________ - combining low-quality studies and giving them the same weight as good studies. But defining “good quality” is an issue in and of itself

SOLUTION: Rate studies for quality and give more weight to those seen as good studies, but indicate how you’ve made those determinations

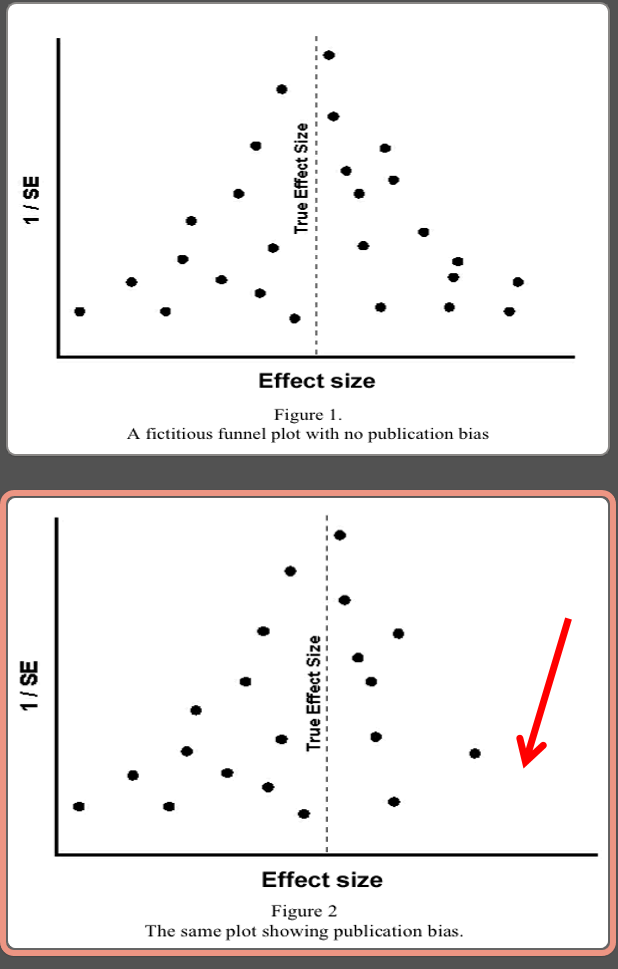

File drawer

Three Criticisms of Meta-Analysis 3/3

___________________ - the failure to obtain all or a representative sample of studies

If you look at the published literature, it is overwhelmingly statistically significant; you don’t find the studies that DON’T work

SOLUTION: Use funnel plots. With no bias, the spread of studies is symmetric (roughly normally distributed) and forms a “funnel shape”; if some studies are missing from the funnel, that could suggest publication bias.
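A funnel plot puts each study's effect size against its precision; when small, imprecise studies only show up with large effects, one side of the funnel is missing. The sketch below is a crude asymmetry check (the correlation between effect size and standard error), offered as an assumption-laden stand-in for eyeballing the plot, not a formal test. Both data sets are invented.

```python
import math


def pearson_r(xs, ys):
    """Plain Pearson correlation between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (spread_x * spread_y)


def funnel_asymmetry(studies):
    """Correlation between standard error and effect size.
    Near 0 = roughly symmetric funnel; strongly positive = imprecise
    studies report bigger effects, a possible sign of a file drawer."""
    effects = [effect for effect, _ in studies]
    errors = [se for _, se in studies]
    return pearson_r(errors, effects)


if __name__ == "__main__":
    # Hypothetical (effect, standard_error) pairs.
    symmetric = [(0.20, 0.05), (0.10, 0.20), (0.30, 0.20),
                 (0.00, 0.35), (0.40, 0.35)]
    lopsided = [(0.20, 0.05), (0.30, 0.20), (0.35, 0.20),
                (0.75, 0.35), (0.80, 0.35)]
    print("symmetric funnel:", round(funnel_asymmetry(symmetric), 2))
    print("lopsided funnel:", round(funnel_asymmetry(lopsided), 2))
```

In the lopsided set, the small studies with effects near zero are the ones that would sit in the file drawer.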

Psychotherapy and Meta-Analysis (Glass)

___________________ - I think Eysenck was motivated by his disagreement with the conclusion reached by Gene Glass. He was himself a behavioural therapist but didn’t believe all therapies were effective; to him, any suggestion that all therapies work was problematic.

He invoked the line “Everyone has won and all must have prizes” (Alice in Wonderland)

Ferguson Video game violence meta-analysis

_________________ - Small effect between video games and violence: a slight negative effect but ALSO a prosocial effect of about the same size

“Fatally flawed [meta-analysis]… should not have been published in this journal or any other journal”

![Ferguson video game violence meta-analysis](https://knowt-user-attachments.s3.amazonaws.com/cfa2b8d3-aae7-4505-91d5-807eb7b4bbb6.png)

Effects of CSA Meta-analysis

___________________ - Caused a big controversy. Looked at college students (a high-functioning group) who had a history of child sexual abuse and found the negative effects were neither common nor typically intense. Not so different from others’ conclusions.

A long paper; the hunch is that reviewers had so much to read through that they didn’t pay as much attention to what was said in the discussion. The disagreements were all related to the last few pages. Nobody really noticed them originally, but Dr. Laura said the paper was endorsing this, Congress got involved, etc.

The paper had said….

[For children] “A willing encounter with positive reactions would be labeled simply adult-child sex”

“Adolescents are different from children in that they…know whether they want a particular sexual encounter, and to resist an encounter that they do not want…adult-adolescent sex”

Meta-Analysis Today

___________________ - Meta-analysis remains a fairly controversial technique. Some of his most cited publications are his meta-analyses; you get more impact than from publishing a regular study. Literature reviews can be very influential.