Behavioral final cram


real incentives (experiment)

payment based on decisions/choices

flat fee (experiment)

$$ for participation

lab vs. field experiment

lab = controlled experiment

field=natural environment

between subject vs. within subject experiment

between subject: every subject/group of subjects has a different treatment

within subject: every subject is exposed to multiple treatments

deception vs no deception (experiment)

deception= don’t reveal the true purpose of the experiment

weak preference

>= ; “is weakly preferred to”

transitive

strict preference

> <

transitive

indifference

~

transitive

Transitivity

if x >= y & y>=z, then x>=z

strict, weak, & indifference preference relations are all transitive

completeness

either x >= y or y >= x (or both); if both hold, x ~ y (indifferent)

completeness + transitivity = weak order (*note: this doesn't mean weak preference; weak preference becomes a weak order once it's assumed to be complete & transitive)
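A minimal Python sketch (not from the course material) of checking the two weak-order conditions on a small, finite set of options. The option names, the utilities, and the cyclic relation are all hypothetical examples.

```python
from itertools import product

def is_complete(options, weakly_prefers):
    # Completeness: for every pair (x, y), x >= y or y >= x (or both)
    return all(weakly_prefers(x, y) or weakly_prefers(y, x)
               for x, y in product(options, repeat=2))

def is_transitive(options, weakly_prefers):
    # Transitivity: whenever x >= y and y >= z, we also need x >= z
    return all(weakly_prefers(x, z)
               for x, y, z in product(options, repeat=3)
               if weakly_prefers(x, y) and weakly_prefers(y, z))

def is_weak_order(options, weakly_prefers):
    return is_complete(options, weakly_prefers) and is_transitive(options, weakly_prefers)

# Hypothetical utilities; banana ~ cherry (indifference)
utility = {"apple": 3, "banana": 2, "cherry": 2}

def by_utility(x, y):
    return utility[x] >= utility[y]

# Hypothetical cyclic relation: a >= b, b >= c, c >= a (plus reflexivity)
cycle = {("a", "a"), ("b", "b"), ("c", "c"), ("a", "b"), ("b", "c"), ("c", "a")}

def cyclic(x, y):
    return (x, y) in cycle

print(is_weak_order(list(utility), by_utility))  # True
print(is_weak_order(["a", "b", "c"], cyclic))    # False: complete but not transitive
```

Any preference built from a utility ranking passes both checks; a cycle is complete but breaks transitivity.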

reflexivity

x >= x; every option x is at least as good as itself

almost always holds

symmetry

~ is symmetric, if x~y, y~x (consistency across equivalent or mirrored situations)

ordinal utility

can replace u by v with v(x) = f(u(x)) for all x, as long as f is an increasing function

prefer higher utility

utility differences have no meaning (can be 0.007 or 67)

cardinal utility

can replace u by v with v(x) = f(u(x)) for all x, as long as f is a positive linear (affine) function: v(x) = a*u(x) + b with a > 0

higher utility means preferred

a larger utility difference means a stronger preference
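A small Python sketch of the ordinal vs. cardinal distinction, using hypothetical utilities: an increasing transformation (sqrt) keeps the ranking but changes how utility differences compare, while a positive affine transformation (2u + 5) keeps both.

```python
import math

u = {"x": 1.0, "y": 4.0, "z": 9.0}  # hypothetical utilities

def ranking(util):
    # Options ordered from most to least preferred
    return sorted(util, key=util.get, reverse=True)

def diff_ratio(util):
    # How big is the z-vs-y difference relative to the y-vs-x difference?
    return (util["z"] - util["y"]) / (util["y"] - util["x"])

v_sqrt = {k: math.sqrt(val) for k, val in u.items()}  # increasing, not affine
v_affine = {k: 2 * val + 5 for k, val in u.items()}   # positive affine

print(ranking(u) == ranking(v_sqrt) == ranking(v_affine))  # True: ordinal info survives both
print(diff_ratio(u), diff_ratio(v_affine), diff_ratio(v_sqrt))
# u and v_affine give the same ratio (5/3); v_sqrt gives 1.0, so differences changed meaning
```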

(strong) pareto

1 policy is better if no one is worse off & at least 1 person is better off than before

utilitarianism

1 policy is better than another if it generates greater amt of social welfare (cardinal utility, sum of all utility of all ppl in society)

revealed preferences

people’s preferences are revealed through their choices

projection bias

people project their current preferences onto the future

people’s preferences depend too little on what they will be in the future & too much on the present

duration neglect

rankings of past experiences are insensitive to variations in duration; ppl mostly focus on peaks & ends

peak-end rule

ranking of past experiences based on their peaks & end only (ppl forget bad/boring time consuming parts in the middle)

diversification bias

people overestimate the degree to which they will like variety in the future (think they want more variety, but they would rlly choose the same thing over time)

risk vs. uncertainty

risk = known probabilities (you just don't know which outcome will occur)

uncertainty= unknown probabilities & unknown outcomes

expected value

EV(L) = p1*x1 + … + pn*xn

multiply each outcome by its probability & add them up

expected utility

EU(L) = p1*u(x1) + … + pn*u(xn)

certainty equivalent

the sure amount that gives the same utility as the lottery (you're indifferent between playing the lottery & receiving CE for sure): u(CE) = EU(L)

risk seeking

expected value is lower than CE

prefers risk/lottery to the expected value

convex utility function

risk averse

expected value is > CE

prefers receiving the expected value of a lottery for sure to the lottery itself

concave utility function

risk neutral

indifferent between risk or no risk

EV(L)~L

CE(L)=EV(L)

linear utility
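A minimal Python sketch tying EV, EU, and CE to the three risk attitudes. The 50/50 lottery over $0 and $100 and the three utility functions (sqrt, square, identity) are hypothetical choices for illustration.

```python
import math

def expected_value(lottery):
    # lottery: list of (probability, outcome) pairs
    return sum(p * x for p, x in lottery)

def expected_utility(lottery, u):
    return sum(p * u(x) for p, x in lottery)

def certainty_equivalent(lottery, u, u_inverse):
    # CE solves u(CE) = EU(L)
    return u_inverse(expected_utility(lottery, u))

L = [(0.5, 0.0), (0.5, 100.0)]  # hypothetical lottery
ev = expected_value(L)          # 50.0

ce_averse = certainty_equivalent(L, math.sqrt, lambda v: v ** 2)   # concave u: CE < EV
ce_seeking = certainty_equivalent(L, lambda x: x ** 2, math.sqrt)  # convex u: CE > EV
ce_neutral = certainty_equivalent(L, lambda x: x, lambda v: v)     # linear u: CE = EV

print(ev, ce_averse, ce_seeking, ce_neutral)  # 50.0, 25.0, about 70.71, 50.0
```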

Sure thing Principle

if 2 options share a common component x (the same outcome in the same state), it should not affect the preference between the 2

Asian disease problem

violation of expected utility

=the framing of the situation affects ppl’s choices (shouldn’t occur)

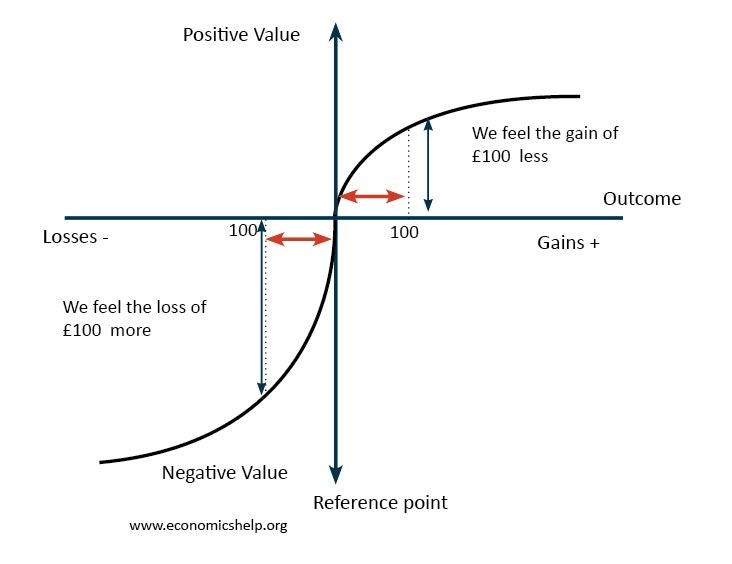

prospect theory

reference points

derive utility (utility seen as gains and losses, which have emotional attachment) from the payoff relative to a reference point (not in cumulative/absolute terms)

diminishing sensitivity & reflection effects

gains concave

losses convex

value function

loss aversion

losses hurt roughly 2x as much as equal-sized gains (the value function is steeper for losses than for gains)

probability weighting

diminishing marginal sensitivity

a change from 0-10 appears larger than a change from 100-110, both for gains & losses

utility=concave for gains & convex for losses

risk averse for gains, risk seeking for losses (reflection effect)
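A Python sketch of a prospect-theory-style value function. The power form and the parameters alpha = 0.88 and lambda = 2.25 are assumptions borrowed from commonly cited Tversky & Kahneman (1992) estimates, not values given in these notes; the reference point is taken to be 0.

```python
def value(x, alpha=0.88, lam=2.25):
    # Gains/losses relative to a reference point of 0 (assumed parameters)
    if x >= 0:
        return x ** alpha          # concave for gains (diminishing sensitivity)
    return -lam * (-x) ** alpha    # convex and steeper for losses (loss aversion)

# Diminishing sensitivity: 0 -> 10 feels bigger than 100 -> 110
print(value(10) - value(0) > value(110) - value(100))   # True
# Loss aversion: losing 10 hurts more than gaining 10 pleases
print(abs(value(-10)) > value(10))                       # True
# Reflection effect: sure +50 beats 50/50 of 0 or +100 (risk averse for gains) ...
print(value(50) > 0.5 * value(100))                      # True
# ... but 50/50 of 0 or -100 beats a sure -50 (risk seeking for losses)
print(0.5 * value(-100) > value(-50))                    # True
```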

Reflection Effect

risk attitudes for gains are opposite to those for losses:

risk averse for gains (concave)

risk-seeking for losses (convex)

St. Petersburg paradox

fair coin tossed repeatedly until heads is obtained, if it takes n tosses you earn $2^n. how much are you willing to pay to play?

EV = (1/2)*2 + (1/4)*4 + (1/8)*8 + … = 1 + 1 + 1 + … = infinite, so you "should" pay any amount, but nobody pays a large amount (with a concave utility function, EU is a small finite #)

Violation of expected value

explained by expected utility

*shows that expected utility describes ppl’s behavior better than expected value
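A Python sketch of the St. Petersburg game cut off after a finite number of tosses. Utility u(x) = ln(x) of the prize alone (ignoring initial wealth) is an assumption for illustration: the truncated EV grows without bound, while the log-utility EU converges to 2*ln(2), i.e. a certainty equivalent of about $4.

```python
import math

def truncated_ev(max_tosses):
    # Each term (1/2)^n * 2^n equals 1, so EV grows by 1 per extra toss allowed
    return sum((0.5 ** n) * (2 ** n) for n in range(1, max_tosses + 1))

def truncated_log_eu(max_tosses):
    # Expected utility with the assumed u(x) = ln(x)
    return sum((0.5 ** n) * math.log(2 ** n) for n in range(1, max_tosses + 1))

print(truncated_ev(10), truncated_ev(100))  # 10.0 100.0
eu = truncated_log_eu(200)                  # converges to 2 * ln(2)
print(math.exp(eu))                         # certainty equivalent, about 4
```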

Loss Aversion

ppl feel losses more strongly than equal-sized gains

around the origin/reference point, the utility function is steeper for losses than for gains (losses have a bigger impact)

Allais Paradox

replacing a common consequence shared by both options shouldn’t change people’s preferences

ex: in choice 1 both options have an 89% chance of 60; in choice 2 both options have an 89% chance of 0; ppl switch preferences between the 2 choices

violation of expected utility & sure thing principle

consistent with prospect theory

driven by the certainty effect: ppl overweight outcomes that are certain

maximin

choose option with greatest minimum utility payoff

maximax

choose option with greatest maximum utility payoff

minimax regret

choose option with lowest maximum regret

to calculate: for each state of the world, regret = (best utility achievable in that state) - (utility of the option you chose); the best option in a state has a regret of 0. then note each option's maximum regret

then compare the maximum regrets & choose the option with the lowest one
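A Python sketch of the three decision rules on a hypothetical payoff table (options and states invented for illustration): maximin and minimax regret pick the umbrella, maximax picks going without.

```python
# Hypothetical payoff table: utility of each option in each state of the world
payoffs = {
    "umbrella": {"rain": 5, "sun": 3},
    "no umbrella": {"rain": 0, "sun": 6},
}
states = ["rain", "sun"]

# Maximin: best worst case; maximax: best best case
maximin = max(payoffs, key=lambda a: min(payoffs[a].values()))
maximax = max(payoffs, key=lambda a: max(payoffs[a].values()))

# Regret in a state = best payoff achievable in that state - payoff actually received
best_in_state = {s: max(payoffs[a][s] for a in payoffs) for s in states}
max_regret = {a: max(best_in_state[s] - payoffs[a][s] for s in states) for a in payoffs}
minimax_regret = min(max_regret, key=max_regret.get)

print(maximin, maximax, max_regret, minimax_regret)
# umbrella, no umbrella, {'umbrella': 3, 'no umbrella': 5}, umbrella
```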

subjective expected utility model

assign subjective probabilities to different options & then use expected utility

different people can have different probabilities (unlike expected utility, where the probabilities are objective & the same for everyone)

still satisfies the sure thing principle

Ellsberg Paradox

consistent with ambiguity aversion; we dislike not knowing the probabilities

violation of expected utility

ppl prefer bets where they know the probability for certain (ex: 50 balls, 30 are blue & 20 are purple or yellow in an unknown split; ppl prefer betting on blue (30/50 known) over betting on purple (exact # unknown))

ppl act as if bets with known probabilities have a higher EU than bets with unknown probabilities

decision time, temporal distance, & consumption time

decision time = when you make the decision

temporal distance = distance between deciding & consumption (the longer this is = further in the future the consequences of our decisions)

consumption time = when the consequences of the decision occur

outcome stream

specifies what the consequences of our decision will be at every pt in time

transform outcomes into utility levels (turning it into a utility stream)

x = (x0, x1, …, xn)

utility stream:

u = (u0, u1, …, un), where ut = u(xt)

discounted utility (DU(x))

value of the utility stream

weighted utilities

further into the future= utility gets a lower weight

equation: DU(x) = u(x0) + D(1)u(x1) + … + D(n)u(xn)

impatience + DU = decreasing D(t): larger t (time) = lower discount weight D(t), so utility further in the future counts for less
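A Python sketch of discounted utility for short utility streams. The exponential discount function with delta = 0.9 is a hypothetical choice of decreasing D(t); the dentist stream shows why postponing an unpleasant event raises DU.

```python
def discounted_utility(utilities, D):
    # DU = u0 + D(1)*u1 + ... + D(n)*un, with D(0) = 1 for the present
    return sum(D(t) * u for t, u in enumerate(utilities))

def exp_discount(t, delta=0.9):
    # Hypothetical decreasing discount function
    return delta ** t

# Same total utility, different timing: the earlier gain is worth more
early_gain, late_gain = [10, 0, 0], [0, 0, 10]
print(discounted_utility(early_gain, exp_discount))  # 10.0
print(discounted_utility(late_gain, exp_discount))   # about 8.1

# Unpleasant event (dentist): postponing it gives it a lower weight
dentist_now, dentist_later = [-10, 0, 0], [0, 0, -10]
print(discounted_utility(dentist_later, exp_discount) > discounted_utility(dentist_now, exp_discount))  # True
```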

impatience

preference for positive utility sooner rather than later

impatience for unpleasant events (ex:dentist)

you prefer to postpone the event; it will hold a lower weight in the future bc of discounted utility

*note: duration neglect & diminishing marginal utility are unrelated to delaying negative utility!!!

reasons for impatience

market interest

risk & uncertainty

pure time preference

health behavior

occupational choice

behavior of children & adolescents

constant impatience

adding a common delay to all options will not change preferences

adding a delay of sigma to both = unchanged preferences

if (s:x) > (t:y), then (s+sigma:x) > (t+sigma:y) is still preferred

common time delay doesn’t affect preferences

time consistency

keep consumption time fixed, change decision time = preferences remain the same

decreasing impatience

time inconsistent; make plans & don’t stick to them

you’re more willing to wait for the better option (ex: get more money) if it's far in the future

if it's soon, you will be more impatient & choose whatever is closer in time

Exponential Discounting

D(t) = delta^t

delta = discount factor

(0<delta<1)

delta = 1/(1+r)

r=discount rate

therefore, larger discount factor = smaller discount rate

constant impatience & time-consistent behavior

Quasi-hyperbolic discounting

constant impatience when all outcomes are received in the future

decreasing impatience if possible to receive an outcome immediately

delta = discount factor, beta = present-bias parameter (0 < beta < 1): D(0) = 1 & D(t) = beta*delta^t for t >= 1

decision changes due to time = time inconsistent
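A Python sketch contrasting exponential and quasi-hyperbolic discounting on the same pair of choices, pushed 30 days into the future. Linear utility and the parameter values (delta = 0.99, beta = 0.7) are hypothetical. Exponential discounting gives the same answer both times (time consistent); quasi-hyperbolic reverses (decreasing impatience).

```python
def exponential(t, delta=0.99):
    return delta ** t

def quasi_hyperbolic(t, beta=0.7, delta=0.99):
    # D(0) = 1; D(t) = beta * delta**t for t >= 1
    return 1.0 if t == 0 else beta * delta ** t

def prefers_sooner(D, s, x, t, y):
    # Compare (s: x) with (t: y), assuming linear utility
    return D(s) * x > D(t) * y

for D in (exponential, quasi_hyperbolic):
    now = prefers_sooner(D, 0, 100, 1, 110)      # $100 today vs $110 tomorrow
    later = prefers_sooner(D, 30, 100, 31, 110)  # same pair, 30 days from now
    print(D.__name__, now, later)
# exponential False False       -> same choice both times (time consistent)
# quasi_hyperbolic True False   -> preference reversal (time inconsistent)
```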

rational discounting

perfectly rational economic agent should behave this way

time consistency

exponential discounting can be considered rational

self-commitment

committing now to a choice in the future (restricting your own future options) so you can't deviate from your plan later

2 challenges to assumption that discounted utility function is independent from outcomes & depends only on time

Magnitude effect=large outcomes are discounted @ slower rate than small ones

Sign effect= losses are discounted @ a lower rate than gains (losses hurt more than gains, value function, reflection effect, convexity of losses, etc)

impatience for gains, but not for losses

contradiction/violation of discounted utility; prefer to get unpleasant things “over with”

dentist today is preferred to dentist in 1 week, despite being unpleasant

going to dentist today reduces unpleasant anticipation

preference for variation

ppl choose variety (ex: 2 different restaurants rather than the same one 2 days in a row)

preference for improving profiles

choice between:

a. 50 (today), 100 (1 month), 150 (2 months)

b. 150 (today), 100 (1 month), 50 (2 months)

ppl choose A

possibly due to loss aversion, prefer to gain over time rather than decrease

loss aversion in B

preference for spread

ppl prefer to spread out/distribute things they enjoy over time (rather than all at once or asap)

game theory 2 types of games

simultaneous-move games

all players decide simultaneously; cannot first observe what others have done

Nash eq

sequential-move games

observe what others have done

subgame perfect eq

why people deviate from Nash eq/subgame perfect eq

limited strategic reasoning

either/both you yourself are limited or believe that others are

guessing game

utility depends on own payoff & payoff of others

“social preferences”; wellbeing depends on more than your own payoff

dictator game, ultimatum game, trust game

guessing game

state a # between [ , ], you win if you’re closest to 2/3 of the mean of all #s chosen.

playing 0 is a Nash EQ & there is no other Nash Eq

your best response is to guess 2/3 of what you expect the mean to be, which is always below the expected mean, so guesses get pushed down toward 0

other method for obtaining Nash Eq: iterated elimination of dominated strategies

formula: upper bound × p (p = the fraction given in the question, e.g., 2/3)

elimination of all 1st-order dominated strategies = eliminate all guesses greater than upper bound × p; repeat with the new upper bound
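A Python sketch of iterated elimination in the guessing game. The range of allowed guesses is not stated in these notes, so the upper bound of 100 is a hypothetical assumption; p = 2/3 comes from the game description. Each round keeps only guesses at or below p times the previous bound.

```python
def elimination_bounds(upper=100.0, p=2/3, rounds=5):
    # After each round, guesses above p * (current upper bound) are dominated
    bounds = [upper]
    for _ in range(rounds):
        bounds.append(bounds[-1] * p)
    return bounds

print([round(b, 2) for b in elimination_bounds()])
# [100.0, 66.67, 44.44, 29.63, 19.75, 13.17] -> keeps shrinking toward the Nash eq of 0
```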

Why don’t ppl play the Nash eq?

1. limited strategic reasoning

2. believe that others have limited strategic reasoning

ultimatum game

Steps:

proposer gets an amount S

proposer offers amount x to responder

responder can accept or reject the offer

accept = responder gets x & proposer gets S-x

reject= both get 0

EQ:

subgame perfect eq (if each player’s utility depends only on their own payoff):

2 subgame perfect eq

1. proposer offers 1 cent (the smallest positive amount); responder accepts all positive offers & rejects an offer of 0

2. proposer offers 0 & responder accepts bc they accept any offer, including 0

ultimatum game in practice

responders’ utilities cannot only depend on their own payoff (also depend on payoffs/intentions of other players too)

2 options for proposers utilities

they derive utility only from their own payoff & expect responders to reject small positive offers

they derive utility not only from own payoff (also depends on others)

Dictator Game

Steps:

proposer gets amount s

proposer offers x to responder but responder cannot do anything (has no role, cannot accept or reject)

responder gets x & proposer gets s-x

outcome prediction:

if proposer’s utility depends strictly on own payoff, then he will propose 0 (no risk of rejection)

if the proposer gives anything more than 0, then their utility must depend on more than their own payoff

Trust Game

Steps:

proposer gets amount s

proposer sends x to responder

experimenter increases x to (1+r)x

responder returns y to proposer

result (subgame perfect eq with selfish players): responder returns y = 0, so proposer sends x = 0

tragedy bc it's not pareto optimal: they could both get more than in eq if they could commit

public good

steps:

each player i starts with an endowment e

each player i contributes some amount xi to the public good

*if 1 player uses the public good, this doesn’t prevent other players from using it (all players benefit equally)

payoff for player i: πi = e - xi + m*(x1 + … + xn)

Nash eq = no one contributes (assuming m < 1, each unit you contribute returns only m to you, so contributing 0 is best whatever others do)

again results in a tragedy

IRL, ppl do contribute; driven by social preferences, ppl don’t want to be the free rider
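A Python sketch of the public good payoff πi = e - xi + m*(x1 + … + xn). The numbers (4 players, e = 20, m = 0.4, so m < 1 but 4*m > 1) are hypothetical. It shows the tragedy: everyone is better off if all contribute, yet each player gains by free riding.

```python
def payoff(i, contributions, e=20, m=0.4):
    # pi_i = e - x_i + m * (x_1 + ... + x_n); e and m are hypothetical defaults
    return e - contributions[i] + m * sum(contributions)

nobody = [0, 0, 0, 0]
everyone = [20, 20, 20, 20]
free_rider = [0, 20, 20, 20]  # player 0 contributes nothing

print(payoff(0, nobody))        # 20.0: Nash eq payoff
print(payoff(0, everyone))      # 32.0: better for everyone if all contribute
print(payoff(0, free_rider))    # 44.0: but free riding pays even more
print(payoff(0, [1, 0, 0, 0]))  # about 19.4: each unit given returns only m = 0.4
```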

Social Preferences

u(x,y) depends on x (own payoff) & y (others' payoff)

*standard preferences = u(x,y) only depends on x

Altruism

u(x,y) increases if y increases

envy

u(x,y) decreases if y increases

rawlsian

u(x,y) increases if the payoff of the worst off increases

inequality aversion

u(x,y) increases if inequality |y-x| decreases

reciprocity

reward players with good intentions & punish those with bad intentions

outcome fairness

derive utility from final allocation of payoffs, not only from our own payoffs

process fairness

derive utility from how we get to the final allocation of payoffs

opportunity cost

missed value of the BEST NOT CHOSEN ALTERNATIVE

sunk costs/sunk cost fallacy

a) costs that are beyond recovery @ time of a decision & should therefore have no effect on the decision

b) the idea that a person/company is more likely to continue w/ a project if they’ve already invested a lot of time/effort into it (even tho this may not be the best decision) (violation of standard economic theory)

why do ppl believe in the fallacy?

ppl feel a need to justify decisions made in the past

ppl tend to be risk seeking when it comes to losses

decoy effect/expansion condition

the introduction of an inferior product/irrelevant alternative should not change your mind

the decoy is strictly worse than the target in all dimensions but better than the competitor in at least 1 (no one should buy the decoy)

expansion condition: if you choose x from {x,y} & if you don’t prefer z over x or y, then you must also choose x from {x,y,z}

but some ppl fall for the fallacy & change their mind

compromise effect

ppl’s tendency to choose an alternative that represents a compromise/middle option in the menu

ex: choosing the middle-sized (1.5 litre) Coca-Cola bottle

endowment effect

an individual values something they already own more than in case they don’t yet own it (mugs ex)

*economic model predicts that losses & gains should be valued the same, but ppl value losses more than gains (losses loom larger than gains)

the gap between WTA & WTP is the endowment effect (in standard theory they should be about the same)

value function

used to describe the endowment effect, shows the larger magnitude (steeper line) of losses

important pt = reference pt

allows you to model if you’re disappointed or surprised by something (ex: firms make use of this by giving you a 30 day free trial)

side of losses is steeper than side of gains

Heuristic

shortcuts for the brain, a rule of thumb, can lead to predictable mistakes

when thinking of a problem, the brain uses shortcuts instead of computing probabilities & utilities

adjustment

subjects misestimate the product 1×2×3×4×5×6 vs 6×5×4×3×2×1 (they estimate the first as lower than the second; starting from small numbers, they don’t adjust upward enough)

ppl adjust wrongly, in a predictable manner

Anchoring

an anchor is an initial value or estimate

it can influence the person making the estimation

ex to test this:

give ppl an irrelevant #. ask them if their estimate is > or < the irrelevant number. ask them what their true estimate is

diminishing sensitivity to gains

with a concave utility function for gains, you prefer to segregate gains (aka experience them separately = gives u more utility)

diminishing sensitivity to losses

with a convex utility function for losses, you prefer to integrate losses (combine them to decrease the bad feeling/effect)
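A Python sketch of segregating gains vs. integrating losses. The square-root shape (concave for gains, convex for losses) is a hypothetical value function chosen for illustration.

```python
def v(x):
    # Hypothetical value function: concave for gains, convex for losses
    return x ** 0.5 if x >= 0 else -((-x) ** 0.5)

# Two gains of 50: experiencing them separately gives more value (about 14.14 vs 10)
print(v(50) + v(50) > v(100))     # True -> segregate gains
# Two losses of 50: combining them hurts less (-10 vs about -14.14)
print(v(-100) > v(-50) + v(-50))  # True -> integrate losses
```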

mental accounting

ppl categorize/put money in different categories/accounts in their mind

open mental account when a payment is incurred

close the account when the benefits arrive

*timing plays a role in opening & closing of the different accounts (should not happen)

*budgeting is also important bc money is reserved for different budgets & not used interchangeably

representativeness

estimating the probability that some outcome was the result of a process by reference to the degree to which the outcome is representative of that process (how similar the outcome is to ppl’s mental representation of that event)

Law of small numbers

ppl exaggerate how much small samples represent the population (could be outliers, etc)

gamblers fallacy

thinking that statistical outcomes are corrected in the short run

ex: thinking that throwing 6-6-6-6-6-6-6 is more unlikely than 5-3-4-5-2-5-1 (both sequences have the same probability, (1/6)^7)

regression to the mean

failing to see that extreme outcomes tend to be followed by outcomes closer to the average

an extreme result could be due to good/bad luck

base rate neglect

failing to take the base rate (overall frequency) of an event into account (judging by how well a description fits instead of how common the category is)

Ex: thinking someone works a specific job bc of personality characteristics, but irl need to consider the % of ppl with that type of job

Probability calculation: independent events

P(A and B) = P(A) * P(B)

Probability calculation: bayes Rule

P(B|A) = P(A|B)*P(B) / [P(A|B)*P(B) + P(A|not B)*P(not B)]

Probability calculation: dependent events

P(A and B) = P(A|B) * P(B)
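A Python sketch of Bayes' rule showing why base rates matter. The numbers (1% base rate, 90% chance of a positive test if sick, 9% if healthy) are hypothetical: even with an accurate-looking test, the chance of actually being sick after a positive result is only about 9%.

```python
def bayes(p_a_given_b, p_b, p_a_given_not_b):
    # P(B|A) = P(A|B)P(B) / [P(A|B)P(B) + P(A|not B)P(not B)]
    numerator = p_a_given_b * p_b
    return numerator / (numerator + p_a_given_not_b * (1 - p_b))

# Hypothetical: B = sick (base rate 1%), A = positive test
posterior = bayes(p_a_given_b=0.90, p_b=0.01, p_a_given_not_b=0.09)
print(round(posterior, 3))  # 0.092
```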

availability heuristic

assessing the probability of an event based on how easily examples of it come to mind (easier = higher probability given)

retrievability of instances

ppl are asked to come up with instances of an event & they place more weight on things that are more salient/that they remember more (ex: fearing death by shark attack more than by dog attack, even though dogs are statistically deadlier) MEMORY*

effectiveness of a search set

ppl search for sets in their mind (try to remember/based off memory)

ex: are there more words that start with the letter k or that have k as the 3rd letter? (ppl guess more start with k, but more actually have k as the 3rd letter) MEMORY

imaginability

ppl judge how frequent something is by how easily they can imagine/construct examples of it

ex: how many groups of 3 vs groups of 9 can be formed from the same set of ppl (ppl think there are more groups of 3, but the number is the same)
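A Python sketch of why the two counts match. The notes don't state the total number of people; 12 is a hypothetical choice (3 + 9 = 12), under which every group of 3 chosen leaves exactly one group of 9 behind, so C(12, 3) = C(12, 9).

```python
from math import comb

n = 12  # hypothetical total number of people
print(comb(n, 3), comb(n, 9))  # 220 220
```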

confirmation bias

ppl look for evidence confirming the bias in their mind already, instead of looking for or considering evidence that disproves it

tendency to interpret evidence as supporting prior beliefs to a greater extent than warranted

conjunction fallacy

overestimating the probability of a conjunction (string of events all of which must happen)

ppl tend to use the probability of one event as an anchor & adjust downward insufficiently

(ex: which is more probable: Linda works at a bank, or Linda works at a bank & is a feminist? "works at a bank" is always at least as probable, regardless of Linda’s personality or characteristics)

P(A and B) <= min(P(A), P(B))

disjunction fallacy

underestimating the probability of a disjunction (string of events, 1 of which has to happen)

P(A or B) >= max(P(A), P(B))

ppl tend to use the prob of 1 event as an anchor & adjust upward insufficiently

ex: the probability that at least 2 ppl in a group share a birthday is higher than you expect
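A Python sketch of the birthday example, assuming 365 equally likely birthdays (no leap days): the probability of a shared birthday passes 50% with just 23 people.

```python
def p_shared_birthday(k, days=365):
    # P(at least 2 of k people share a birthday) = 1 - P(all birthdays distinct)
    p_all_distinct = 1.0
    for i in range(k):
        p_all_distinct *= (days - i) / days
    return 1 - p_all_distinct

print(round(p_shared_birthday(23), 3))  # 0.507
```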