Test 1 Psych 20


191 Terms

Prototype theory

We have one (abstracted average) prototype of a category

Prototype

One idealized average of all the members of a category you have perceived

Exemplar theory

Representation corresponds to an actual category member

The best specific examples of a category you have actually perceived
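The contrast between prototype and exemplar theory can be sketched as two classification rules. This is a minimal Python illustration, not a model from the course. Each item is a hypothetical two-number feature vector (invented "size" and "roundness" values). Prototype theory compares a new item with each category's single average. Exemplar theory sums the item's similarity to every stored member, and the similarity falloff `sensitivity=10` is an arbitrary assumption.

```python
import math

def prototype_of(members):
    """Prototype theory: one abstracted average of all perceived members."""
    n = len(members)
    return tuple(sum(m[i] for m in members) / n for i in range(len(members[0])))

def classify_by_prototype(item, categories):
    """Choose the category whose single averaged prototype is closest to the item."""
    return min(categories, key=lambda c: math.dist(item, prototype_of(categories[c])))

def classify_by_exemplar(item, categories, sensitivity=10.0):
    """Choose the category whose stored members are most similar in total.
    Similarity decays exponentially with distance (sensitivity is an assumption)."""
    def summed_similarity(c):
        return sum(math.exp(-sensitivity * math.dist(item, m)) for m in categories[c])
    return max(categories, key=summed_similarity)

# Hypothetical (size, roundness) features, invented for illustration only.
categories = {
    "bird": [(0.2, 0.6), (0.3, 0.5), (0.9, 0.4)],  # the last one is a large, atypical bird
    "dog": [(0.6, 0.3), (0.7, 0.2), (0.65, 0.25)],
}

item = (0.88, 0.42)
print(classify_by_prototype(item, categories))  # dog
print(classify_by_exemplar(item, categories))   # bird
```

With these invented numbers, the item is closer to the dog average but sits right next to the one atypical stored bird. The prototype rule therefore answers "dog", and the exemplar rule answers "bird".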

Basic-level categories

The first term used to describe an object (ex: chair)

Superordinate category

A more general term than the basic-level term (ex: seat)

Subordinate category

A more specific term than the basic-level term (ex: office chair)

Group attribution error

When you apply the qualities (or perceived qualities) of a group to an individual

Leads to stereotyping and prejudice

Mental Representation

Some sort of internal model (or knowledge) that is linked to an external stimulus or information

2 possibilities: analog representation or propositional representation

Analog Representation

Mental representation in the form of sensory or perceptual experience

Ex: visual image, taste

Propositional Representation

Mental representation in the form of symbols: abstract assertions that preserve the relationships between referents

Ex: semantic language

Computer metaphor

Supported by the finding that, after seeing only one interpretation of an ambiguous image, people have a hard time switching to the other interpretation in their head, but they can switch when they actually look at the image again

Navigate the island

Participants study and memorize a simple map with a few landmarks → they push a button when an imagined dot moving from one landmark would reach another

Favors analog representation: the farther apart the two locations, the longer participants took to push the button

Mental rotation

Participants see images of cube shapes rotated by different amounts → measure how long it takes them to judge whether each shape is the same as or different from the original

Favors analog representation: the larger the angle of rotation, the longer the response time

Increased activation in the visual cortex alongside increased rotation

Visualization

Imagination to simulate a task

Mental vs. physical trombone practice: the group that only practiced mentally improved, but the group that practiced both mentally and physically improved the most

Mental muscle building: mental training groups improved strength over control (stimulating muscle growth) but less than actual movement groups

Aphantasia

Lack of willed vivid imagery

Difficult to study; self-reported using VVIQ

Vividness of Visual Imagery Questionnaire (VVIQ)

Standard test for aphantasia or extent of vivid imagery in general

Hyperphantasia

Extremely vivid mental imagery

Binocular rivalry task

Alternative method to study the vividness of imagery

Prime participants to visualize one interpretation of a binocular rivalry stimulus → those with aphantasia do not show the priming effect of imagery

Inner speech

Speaking to yourself; single words, ideas, etc that pop up

Inner monologue

Specifically the narration of own life; more grammatical and continuous

Associated with default mode network in brain

Measured with the VISQ-R

VISQ-R

Questionnaire on inner monologue/speech

No difference in cognitive ability between those with high/low scores

Frisson Response

“Aesthetic chills”

Skin tingling, goosebumps, shivers in response to musical aesthetics

Resembles a physical fear response, yet we feel comfortable afterward

Mozart effect

Study claiming that listening to Mozart before a spatial reasoning test improved scores → later work found the same effect with other types of music and even other stimuli

Default Mode Network (DMN)

High activity in people with frequent/persistent inner speech/monologue

Associated with tasks involving introspection, basically the “ego”

Mind wandering, introspection, prospective thinking

Reduction in DMN activity and connectivity associated with “living in the moment”, meditation, exercise

Internal Representation Questionnaire (IRQ)

Merges revised versions of the VVIQ and VISQ into a single, more comprehensive questionnaire

4 categories: visual, verbal, manipulation (spatial cog), orthographic (visualize written words)

Compressions (sound)

Areas of high density and pressure where particles are pushed together

Rarefactions (sound)

Areas of low density and pressure where particles are pulled apart

Sine wave (“pure tone”)

Simplest sound wave, only has one frequency (the fundamental)

Frequency

Measured in Hz; doubling the frequency → one octave up

Related to perceived pitch
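An octave corresponds to a doubling of frequency, so octave steps are powers of two. Here is a small Python sketch of that arithmetic. The 440 Hz reference (concert A) is just a convenient example value.

```python
import math

def octave_shift(freq_hz: float, octaves: int) -> float:
    """Shift by whole octaves: each octave up doubles the frequency, each octave down halves it."""
    return freq_hz * 2 ** octaves

def octaves_between(f1_hz: float, f2_hz: float) -> float:
    """Number of octaves separating two frequencies (log base 2 of their ratio)."""
    return math.log2(f2_hz / f1_hz)

print(octave_shift(440, 1))       # 880
print(octave_shift(440, -1))      # 220.0
print(octaves_between(220, 880))  # 2.0
```

Pitch tracks the frequency ratio rather than the difference. As a result, 220 → 440 Hz and 440 → 880 Hz both sound like one octave, even though the second step spans twice as many hertz.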

Amplitude

Measured in dB

Related to the perceived loudness (intensity)
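The decibel scale is logarithmic, so equal dB steps are equal ratios rather than equal amounts. The minimal sketch below uses the standard formulas. Sound pressure level is 20·log10(p / p_ref), with the conventional reference p_ref = 20 µPa. An intensity ratio in dB is 10·log10(I2 / I1).

```python
import math

P_REF = 20e-6  # 20 micropascals: conventional reference pressure for dB SPL in air

def spl_db(pressure_pa: float) -> float:
    """Sound pressure level in dB SPL: 20 * log10(p / p_ref)."""
    return 20 * math.log10(pressure_pa / P_REF)

def intensity_change_db(ratio: float) -> float:
    """Level change in dB for an intensity ratio I2 / I1: 10 * log10(ratio)."""
    return 10 * math.log10(ratio)

print(round(spl_db(20e-6), 1))   # 0.0  (the reference pressure itself)
print(round(spl_db(1.0), 1))     # 94.0 (1 pascal)
print(intensity_change_db(10))   # 10.0 (ten times the intensity)
```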

Harmonics

Integer multiples of the fundamental frequency → give a sound its “timbre” (the unique quality that distinguishes, for example, a voice from an instrument)

Change the quality of a sound without changing its pitch
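A complex tone can be built by adding sine waves at integer multiples of the fundamental, which shows why harmonics change timbre but not pitch. In this Python sketch, the 220 Hz fundamental and the harmonic weights are arbitrary illustration values. Both the pure tone and the richer tone repeat every 1/f0 seconds, so they share the same pitch. Their waveforms differ, which is the difference in timbre.

```python
import math

def complex_tone(t: float, f0: float, harmonic_amps: list[float]) -> float:
    """Sum of sine waves at f0, 2*f0, 3*f0, ... weighted by harmonic_amps.
    harmonic_amps[0] weights the fundamental; later entries shape the timbre."""
    return sum(a * math.sin(2 * math.pi * (k + 1) * f0 * t)
               for k, a in enumerate(harmonic_amps))

f0 = 220.0                 # fundamental frequency in Hz (illustration value)
pure = [1.0]               # sine wave: fundamental only
richer = [1.0, 0.5, 0.25]  # hypothetical weights for the 1st, 2nd, 3rd harmonics
period = 1 / f0

# Both waves repeat every 1/f0 seconds, so the repetition rate (pitch) is the same...
for amps in (pure, richer):
    assert math.isclose(complex_tone(0.001, f0, amps),
                        complex_tone(0.001 + period, f0, amps), abs_tol=1e-9)

# ...but at the same instant the two waveforms have different shapes (timbre).
print(complex_tone(0.001, f0, pure), complex_tone(0.001, f0, richer))
```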

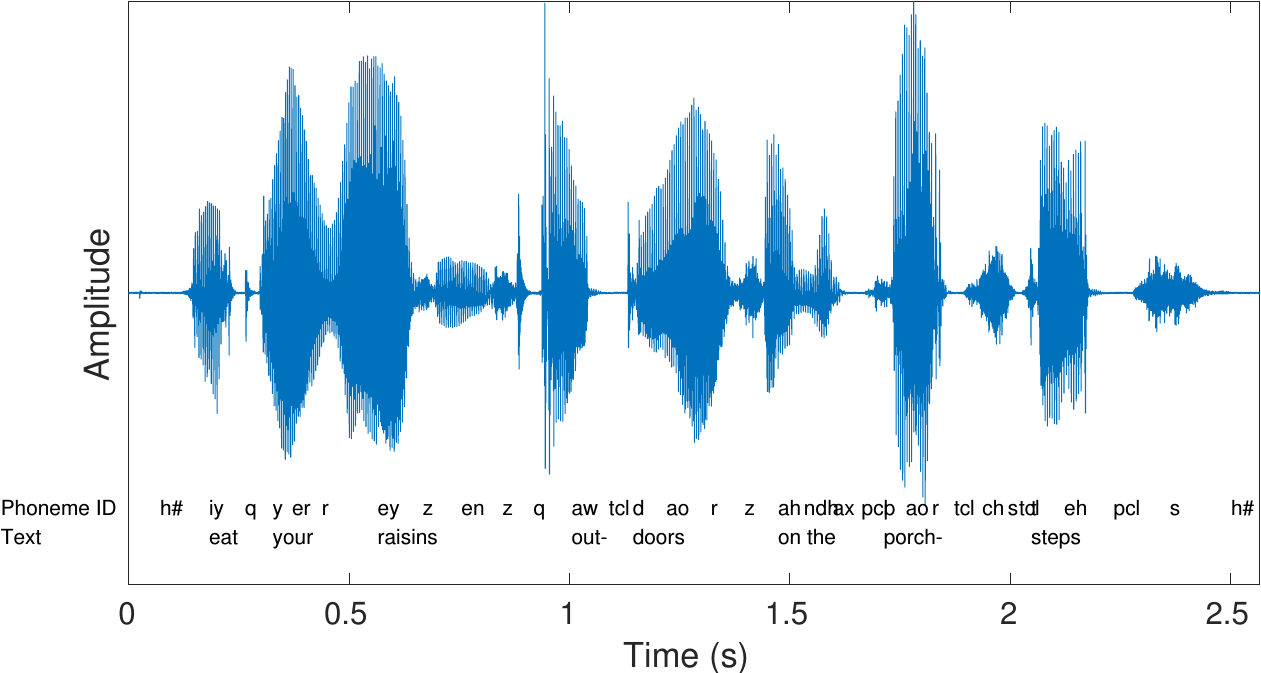

Waveform

Intensity over time

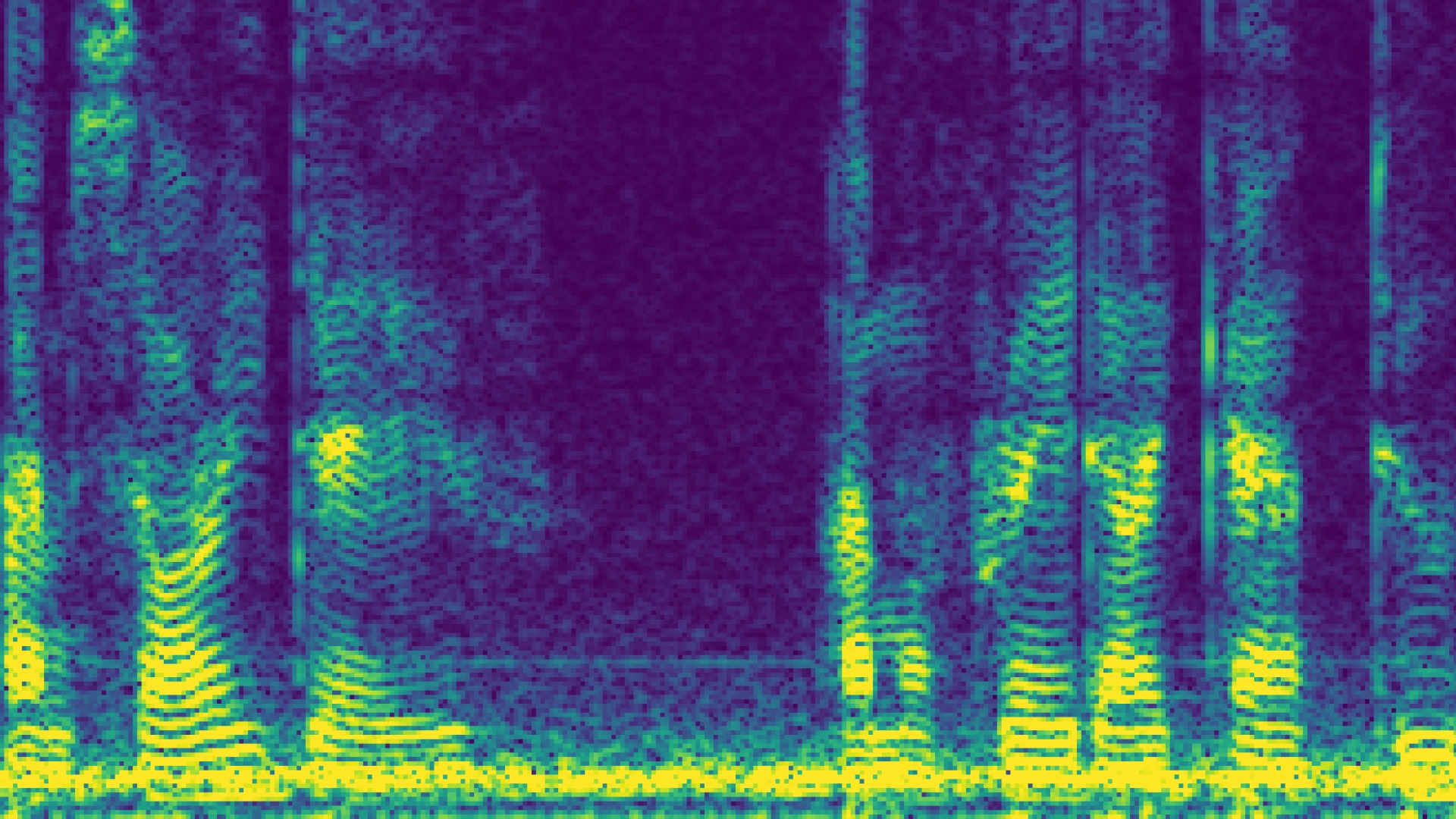

Spectrogram

Frequency and intensity over time

Pure tones = one frequency, one line

Complex tones = multiple frequencies at once, many lines
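Each column of a spectrogram is essentially a frequency analysis of one short window of sound. This sketch runs a naive discrete Fourier transform on a single 64-sample window, standing in for the windowed FFT that real spectrogram tools use. It also assumes a 64 Hz sample rate so that bin k equals k Hz, which keeps the numbers readable. The pure tone yields one line and the complex tone yields several.

```python
import cmath
import math

N = 64  # samples in one analysis window; with an assumed 64 Hz sample rate, bin k is k Hz

def dft_magnitudes(samples):
    """Naive discrete Fourier transform: magnitude of each frequency bin below Nyquist."""
    n = len(samples)
    return [abs(sum(x * cmath.exp(-2j * math.pi * k * i / n) for i, x in enumerate(samples)))
            for k in range(n // 2)]

def spectral_lines(samples, threshold=1e-6):
    """Bins with non-negligible energy: the 'lines' in one spectrogram column."""
    return [k for k, m in enumerate(dft_magnitudes(samples)) if m > threshold]

def sine(freq, amp=1.0):
    """One window of a sine wave completing `freq` cycles over N samples."""
    return [amp * math.sin(2 * math.pi * freq * i / N) for i in range(N)]

pure_tone = sine(4)
complex_tone = [a + b + c for a, b, c in zip(sine(4), sine(8, 0.5), sine(12, 0.25))]

print(spectral_lines(pure_tone))     # [4]
print(spectral_lines(complex_tone))  # [4, 8, 12]
```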

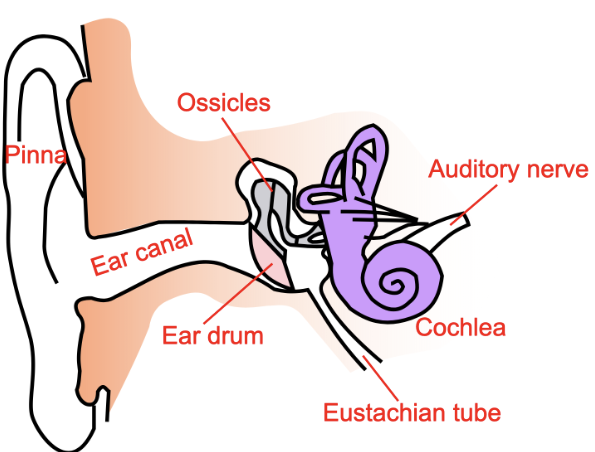

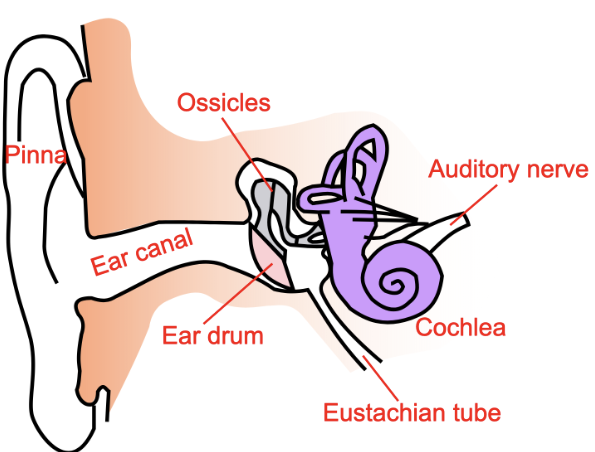

External auditory canal

Basilar membrane

Located within the cochlea; forms bulges due to sound —> pushes hair cells up

Hair cells

Specialized sensory receptor cells

Auditory equivalent of photoreceptors, but they detect mechanical energy (pressure bending the hairs) instead of light

How do hair cells work

Convert mechanical sound vibrations into electrical signals for the brain: sound makes the fluid in the cochlea vibrate, bending the hair-like stereocilia on top of these cells, which opens ion channels, depolarizes the cell, and releases neurotransmitter onto the auditory nerve

Sounds that are too loud → break the tips of the hair cells → permanent damage because hair cells cannot repair themselves

Cochlea (“acoustic prism”)

Physical structure mirrors spectrogram

High frequencies stimulate hair cells near base, low frequencies stimulate hair cells near apex
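The base-to-apex frequency map along the cochlea is often summarized with the Greenwood function, F = A(10^(a·x) − k). This sketch uses the commonly cited human parameter values A = 165.4, a = 2.1, and k = 0.88, where x is the fraction of cochlear length measured from the apex. Treat these values as an approximation rather than course material.

```python
def greenwood_frequency(x_from_apex: float) -> float:
    """Approximate characteristic frequency (Hz) at a point along the human cochlea.
    x_from_apex: 0.0 = apex (low frequencies), 1.0 = base (high frequencies)."""
    A, a, k = 165.4, 2.1, 0.88  # commonly cited human parameters (approximate)
    return A * (10 ** (a * x_from_apex) - k)

for x in (0.0, 0.25, 0.5, 0.75, 1.0):
    print(f"{x:.2f} of the way from apex to base: {greenwood_frequency(x):8.0f} Hz")
```

Evaluated at the two ends, the function gives roughly 20 Hz at the apex and about 20 kHz at the base, which brackets the range of human hearing.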

Transmits electrical impulses (via the auditory nerve) to the auditory cortex

Cochlear implant

Artificially produces electrical impulses

Can produce far fewer distinct variations of sound → much lower sound quality

Conductive hearing loss

Vibrations are blocked by earwax buildup, infection, or otosclerosis (abnormal bone growth that stiffens the ossicles)

Hearing loss due to physical obstructions in the outer or middle ear

Sensorineural hearing loss

Caused by damage to the inner ear’s hair cells or nerve pathway to the brain

Metabolic - can be caused by certain drugs (ototoxicity)

Sensory - caused by exposure to loud noises over long periods of time

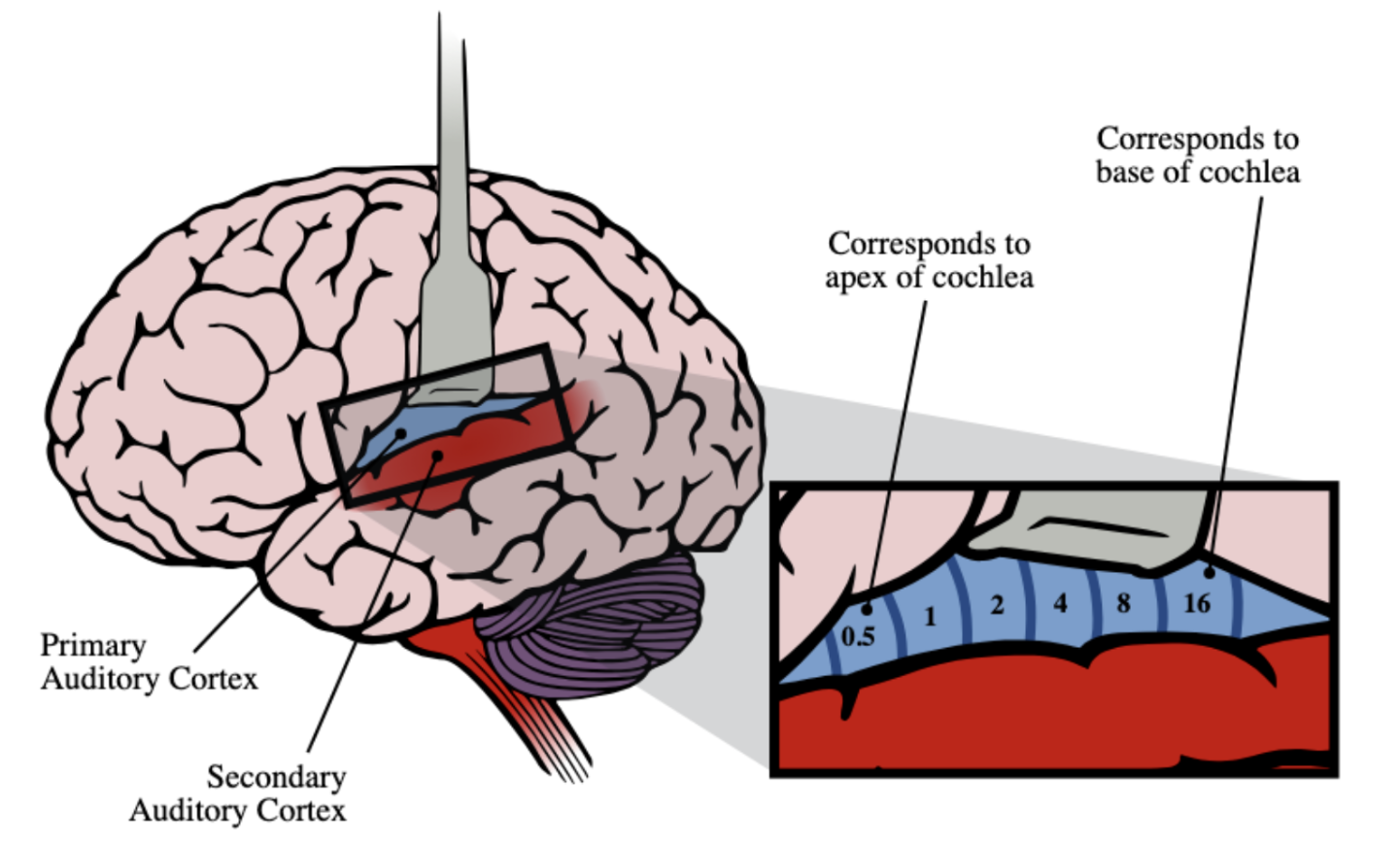

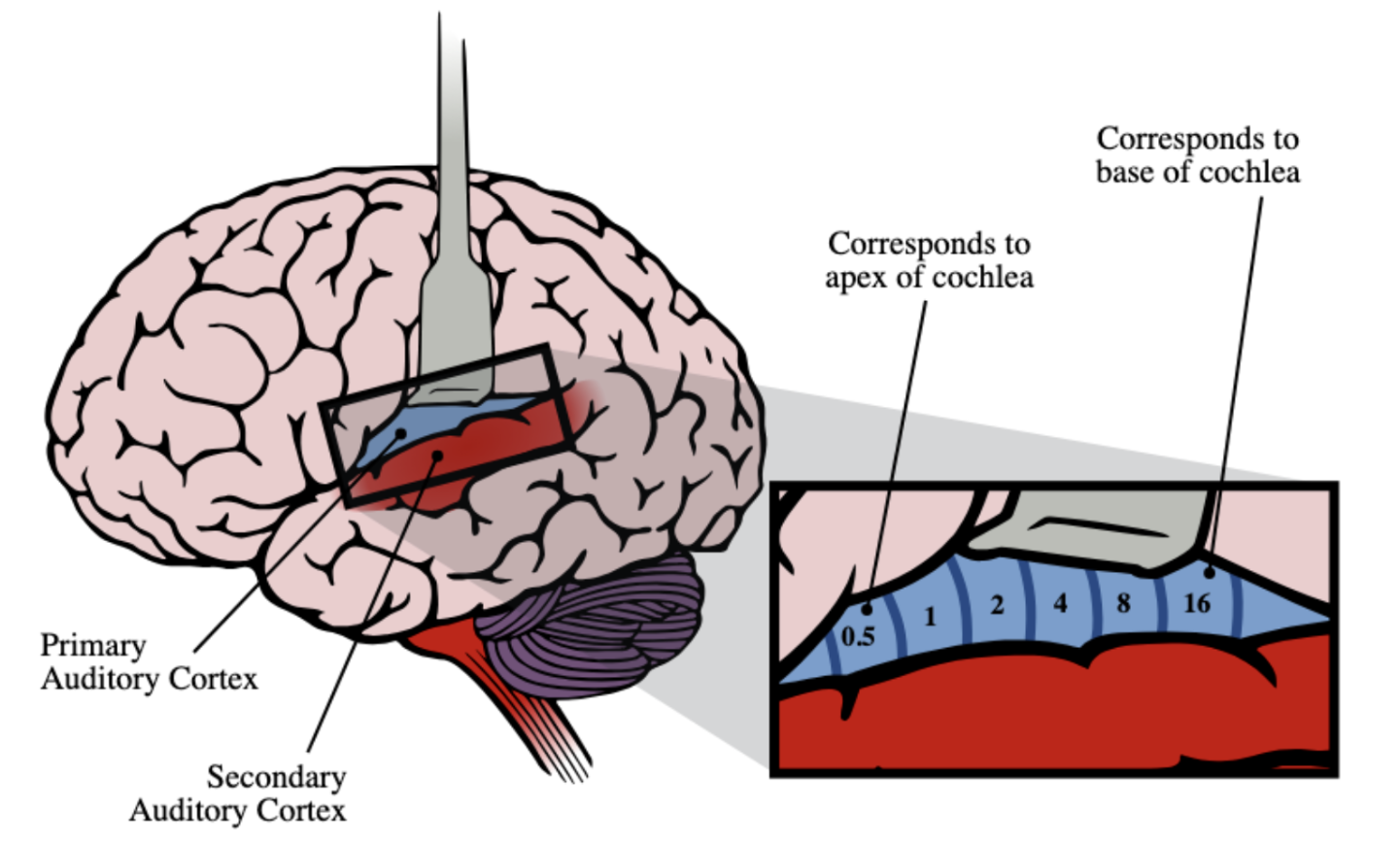

Auditory cortex

Primary auditory cortex (A1) in the temporal lobe

After A1, processing splits into a dorsal (where) and a ventral (what) stream, like vision!

A1 has tonotopic organization

Tonotopic organization

Neurons respond selectively to different pitches and are arranged spatially according to the pitch they prefer

Binaural cues

Sound localization technique using auditory signals from both ears

Ex: ITD & ILD

Interaural Time Difference (ITD)

Sound takes longer to reach the ear farther from the source

Interaural Level Difference (ILD)

Sound is quieter at the ear farther from the source (the head blocks part of it)
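A simplified way to estimate the ITD treats the extra distance to the far ear as d·sin(azimuth), divided by the speed of sound. In this sketch, the 0.20 m ear-to-ear distance is a rough assumption, and 343 m/s is the speed of sound in air at about 20 °C. Real heads add a detour around the skull, so actual ITDs are somewhat larger.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s in air at about 20 °C
EAR_DISTANCE = 0.20     # m; rough adult ear-to-ear distance (assumption)

def itd_seconds(azimuth_deg: float) -> float:
    """Simplified ITD: extra path length d*sin(azimuth) divided by the speed of sound.
    Azimuth 0 = straight ahead, 90 = directly to one side, 180 = straight behind."""
    return EAR_DISTANCE * math.sin(math.radians(azimuth_deg)) / SPEED_OF_SOUND

for az in (0, 30, 90, 150):
    print(f"{az:>3} deg: {itd_seconds(az) * 1000:.3f} ms")
```

In this model, sources at 30° and 150° (mirror positions in front and behind) produce the same ITD. This is one way to see the ambiguity behind the cone of confusion.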

Monaural cues

Sound localization technique using auditory signals from one single ear

Ex: pinna folds

Pinna folds

Folds of the outer ear; their shape filters incoming sound waves differently depending on where the sound comes from, which aids sound localization

Cone of confusion

Region where you can’t discriminate the location of a sound

ITD and ILD are ambiguous

Best way to resolve = moving the head around

Localizing distance (sound)

Distance judgments for sound are most accurate at about 1 meter

Inverse square law (intensity drops with the square of distance) → we underestimate long distances

We are good at telling if things are approaching or receding
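Under the inverse square law, intensity falls with the square of distance. In decibels, that works out to about −6 dB each time the distance doubles. The minimal sketch below assumes a point source in open space with no reverberation.

```python
import math

def relative_intensity(r1: float, r2: float) -> float:
    """Inverse square law: intensity at distance r2 relative to intensity at r1."""
    return (r1 / r2) ** 2

def level_change_db(r1: float, r2: float) -> float:
    """Change in sound level (dB) when moving from distance r1 to r2 (free field)."""
    return 10 * math.log10(relative_intensity(r1, r2))

print(relative_intensity(1, 2))          # 0.25  (twice as far: a quarter of the intensity)
print(round(level_change_db(1, 2), 2))   # -6.02 (per doubling of distance)
print(round(level_change_db(1, 10), 2))  # -20.0 (ten times as far)
```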

Reverberations

Sound bounces off surfaces

Cue for sound distance: if someone is far away in a room, much of the sound reaching you has bounced off surfaces; if someone is close, most of the sound is direct

Auditory Stream Segmentation

We need to segment one “stream” (one source) of sound from others in an environment where they’re all mixed together

Use auditory grouping principles

Auditory Grouping Principles

Proximity (in time) - sounds occurring close together in time are likely to be perceived as one stream

Size and pitch - bigger things (ex: animal vocal tracts) vibrate slower —> lower pitch

Timbre

Continuity

Cocktail party effect

Ability to focus attention on one speaker alone

Acoustic startle response

Very rapid motor response to a loud unexpected noise

Amusia

Inability to perceive or reproduce pitch (tone)

“tone deafness”

Music agnosia

Inability to hear music holistically

Can be selective to music; cannot recognize familiar songs

Motion adaptation effect

Stationary objects appear to move in the opposite direction after prolonged viewing of a moving stimulus

Apparent motion

Stationary objects, displayed in quick succession or viewed from a moving reference frame, are perceived as moving

Motion pareidolia

The brain can perceive coherent motion in random shifts if told that they form a certain motion

Works with random dots if you prime or direct people to see it

Motion parallax

As we move side to side, objects closer to us appear to move faster and objects farther away appear to move slower

Helps with depth perception

Temporal resolution (speed of sight)

Ability of the visual system to separate events over time

Ex: flicker fusion, visible persistence

Flicker fusion

Light flashing very fast looks steady → the flashes can’t be separated, so they “fuse” together

Speed of sight = ~30 ms

Happens because neural communication isn’t instantaneous

Visible persistence

The brain continues to perceive an image after the physical stimulus has disappeared

Produces perceived motion blur → exploited in film and animation

Global superiority effect

The largest (global) grouping is processed preferentially over smaller (local) groupings

Property of an object

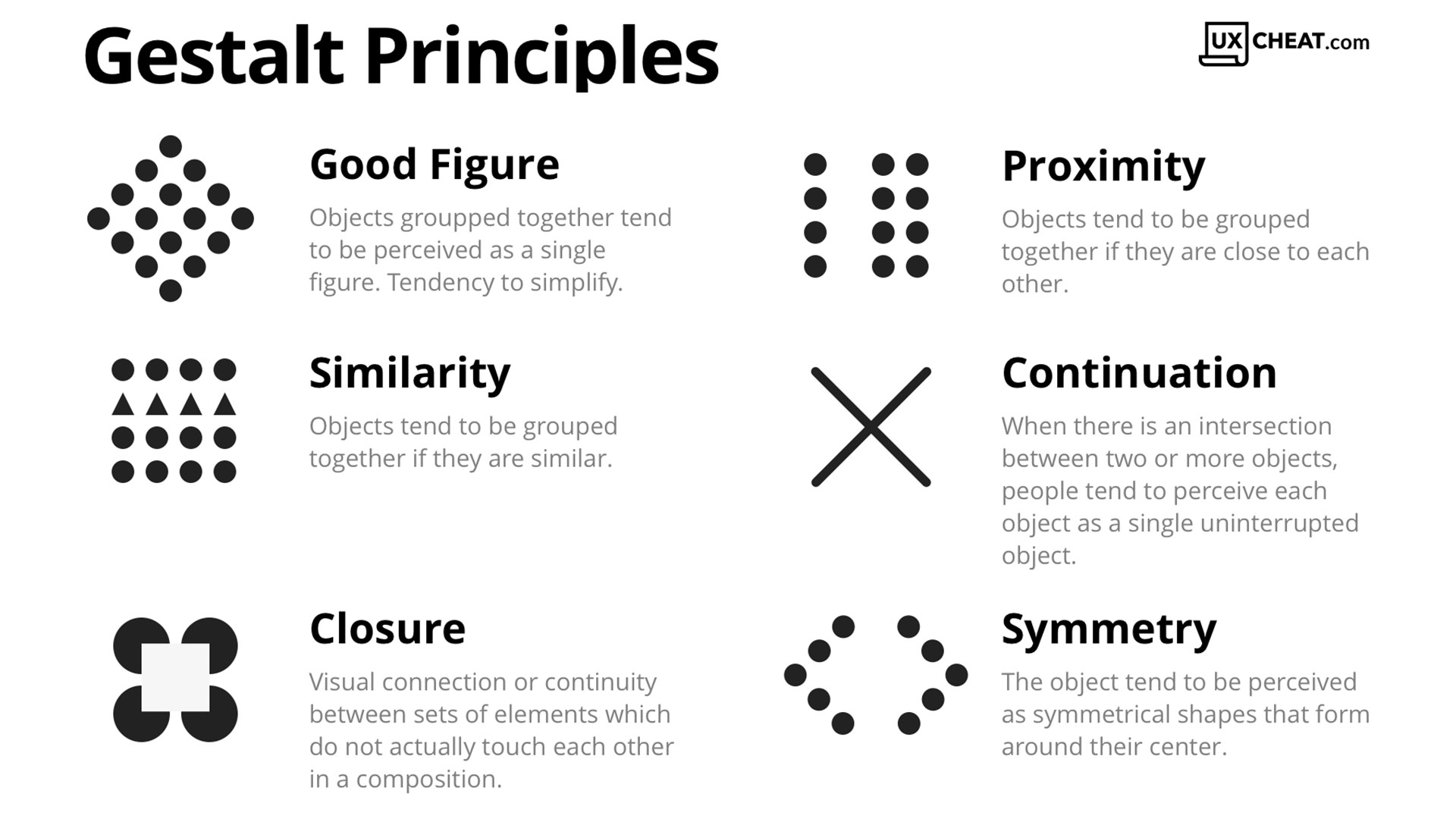

Gestalt grouping principles

Rules for organizing a messy visual world into discrete objects

Subjective edge

A visual phenomenon where the brain perceives a clear edge, border, or shape even though no physical contrast, color, or luminance change exists at that location

Continuation & Closure

Object properties

A singular level of hierarchical structure

Stable grouping of visual info (Gestalt)

Figure as opposed to ground

Shading

Canonical viewpoint

Most representative / “obvious” viewpoint

Accidental viewpoint

Rare angle of an object —> harder to identify

Two theories of Object Recognition

Distributed and Local

Distributed object recognition

Recognition by components; segments an object into geometrical components (geons)

Advantage: can be modeled computationally

Could better recognize entry-level categories (ex: bird, dog)

Local object recognition

Recognition by views; different views of the same object are stored in LTM (exemplars)

Disadvantage: computationally expensive because it uses much more storage

Could better recognize specific instances of object type (ex: my dog)

Object agnosia

Difficulty or inability to engage in object recognition; 2 forms

Apperceptive agnosia

Cannot identify objects by sight because they perceive only fragments

Can draw from memory but cannot copy new objects they are shown

Associative agnosia

Can see and even reproduce objects, but can’t recognize them as what they are (name, use, meaning)

Problem linking perception with memory (forming associations)

Visual indeterminacy

Damage to inferior temporal lobe

Visual indeterminacy

Before object recognition occurs, the object is “indeterminate”

Optic ataxia

Inability to guide hand or eye movements using visual info, despite having normal vision and motor strength

Can identify objects, but difficulty acting on them

Damage to posterior parietal lobe

Pareidolia

Perceiving patterns (objects or faces) in things that don’t actually contain them (houses, cars, clouds, etc.)

Prosopagnosia

Difficulty recognizing faces specifically

Fusiform Face Area (FFA)

Damage to this area located in the right temporal lobe —> prosopagnosia

Active when people recognize/discriminate specific types of things (cars, words)

Greebles

Highly homogeneous artificial stimuli used to study object classification that resembles face recognition

FFA is active in “experts” that can distinguish them

Thatcher effect

Inverted faces are less subject to holistic (global) processing, so local distortions (e.g., flipped eyes and mouth) are hard to notice until the face is upright

Flash face distortion effect

A visual illusion where rapidly alternating faces, viewed in the periphery, appear grotesquely deformed or caricatured

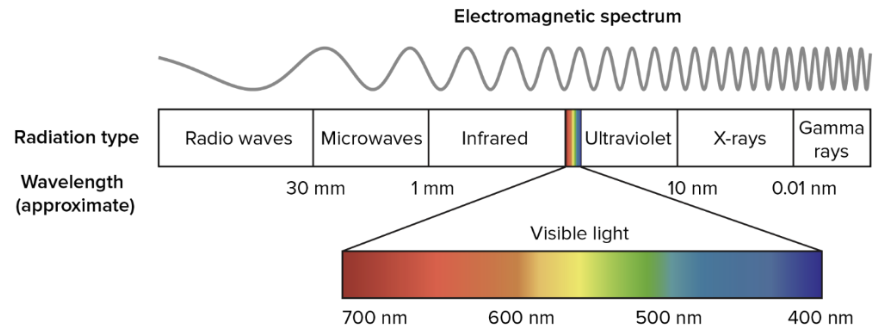

Light

Composed of photons; behaves as both particles and waves

Wavelength

Determines the color of light

Amplitude

Determines the brightness of light

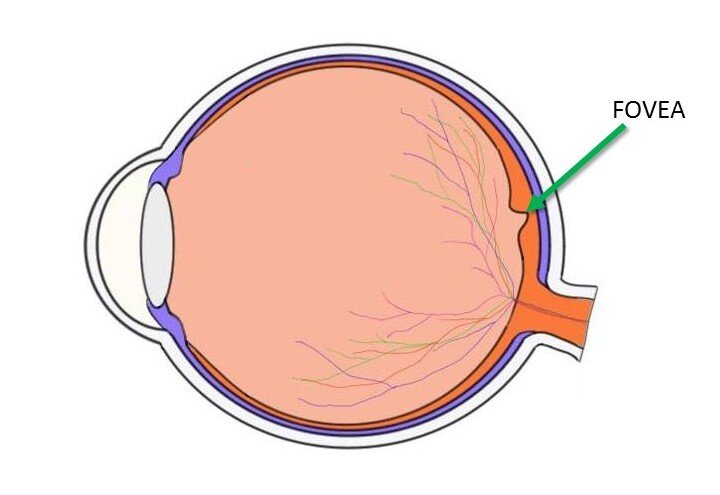

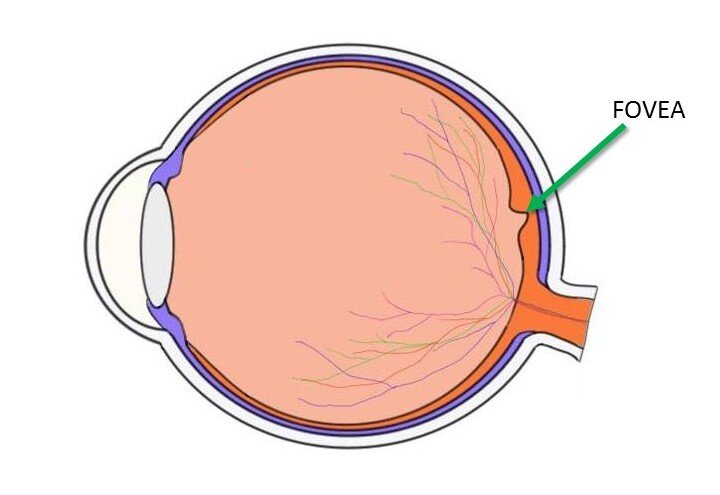

Fovea

Focus; exactly what you’re currently looking at

Sharpest, most detailed, color-sensitive

Retina

Converts light into electrical signals, enabling vision

At the back of the eye

Optic nerve

Bundle of nerve fibers that transmits visual data from the retina toward the occipital lobe

Rods

Low light vision (scotopic); night vision

Sensitive to fast motion

Low resolution; poor edge detection (high convergence)

Concentrated in the periphery of the retina

~120 million per eye

Cones

Requires bright light (photopic)

3 types: short, medium, long —> corresponds to the wavelength of light they’re most sensitive to (not physical length)

Allows us to perceive color

High resolution, good edge detection (low convergence)

Concentrated in the fovea

~6 million per eye

Neural convergence

Because cones converge less, each cone provides a much larger relative input to the visual cortex than each rod, even though rods outnumber cones

90% of the brain’s input originates from cones

Optic disk

“Blind spot” where the optic nerve leaves the retina

Optic chiasm

X-shaped structure where the optic nerves partially cross, so information is split between the two hemispheres

Lateral Geniculate Nucleus (LGN)

Structure in thalamus that routes information from the optic chiasm to the primary visual cortex

Primary visual cortex & area V1 (primary visual area)

Cortical area for processing visual information

At the back of the occipital lobe

Retinotopic organization

The precise, ordered mapping of the visual field from the retina onto the brain’s visual areas, specifically V1

Adjacent neurons respond to adjacent areas of the visual scene

Cortical magnification

Many more neurons process information from the fovea than from the periphery

2 Streams of information that flow from V1

Dorsal pathway & Ventral pathway

Dorsal pathway

Visual processing stream connecting the Occipital to Parietal lobe

Spatial location and action (“where”)