Biostats Exam 2

29 Terms

steps of hypothesis testing

state the hypothesis (both null and alternative)

conduct statistical test (such as chi square)

find p value

conclusion
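
The four steps above can be sketched with a chi-square goodness-of-fit test. This is only an illustration: the counts, the 1:2:1 expected ratio, and α = 0.05 are made-up assumptions, and the p-value shortcut used here is valid only when df = 2.

```python
import math

# Step 1: state the hypotheses.
# H0: observed frequencies match a 1:2:1 expected ratio. HA: they do not.
observed = [30, 50, 20]              # hypothetical counts
expected_ratio = [0.25, 0.50, 0.25]  # hypothetical model

# Step 2: conduct the statistical test (chi-square goodness of fit).
n = sum(observed)
expected = [n * r for r in expected_ratio]
chi2 = sum((o - e) ** 2 / e for o, e in zip(observed, expected))
df = len(observed) - 1

# Step 3: find the p-value.
# Assumption: this closed form, P(X >= chi2) = exp(-chi2 / 2), holds for df = 2 only.
assert df == 2
p_value = math.exp(-chi2 / 2)

# Step 4: conclusion.
alpha = 0.05
decision = "reject H0" if p_value < alpha else "fail to reject H0"
print(chi2, round(p_value, 4), decision)
```

For other degrees of freedom, the p-value would come from a chi-square table or a statistics library instead.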

when is Tcv positive vs. negative?

Positive when we expect t to be positive (the rejection region is in the right tail), negative when we expect t to be negative (the rejection region is in the left tail). This is because Tcv should have the same sign as we expect t to have.

What are the appropriate hypotheses for each statistical analysis?

z scores do not involve hypothesis testing. You only convert a value to a z-score to find a probability/area under curve

chi square gof: the null is that the observed frequencies match the expected frequencies.

chi square indep: the null is that the first categorical variable and second categorical variable are independent of each other.

one sample t test: is our sample actually a part of the population we are trying to measure? Null is that the mean of the population the sample came from equals the hypothesized population mean.

two sample t test: Compares the means of two independent groups. Null is that the population means of the two groups are the same.

paired t test: Compares the means of two related measurements. So the null is that the difference between the two means is zero.

Mann Whitney U: Non-parametrically compares two independent groups using ranks. Null is that the two groups are the same (not their means!!)

ANOVA: Compares the means of more than two independent groups. The null is that the means of each group are the same.

Tukey: run after a significant ANOVA. The null for each pairing of groups is that the two means are the same.

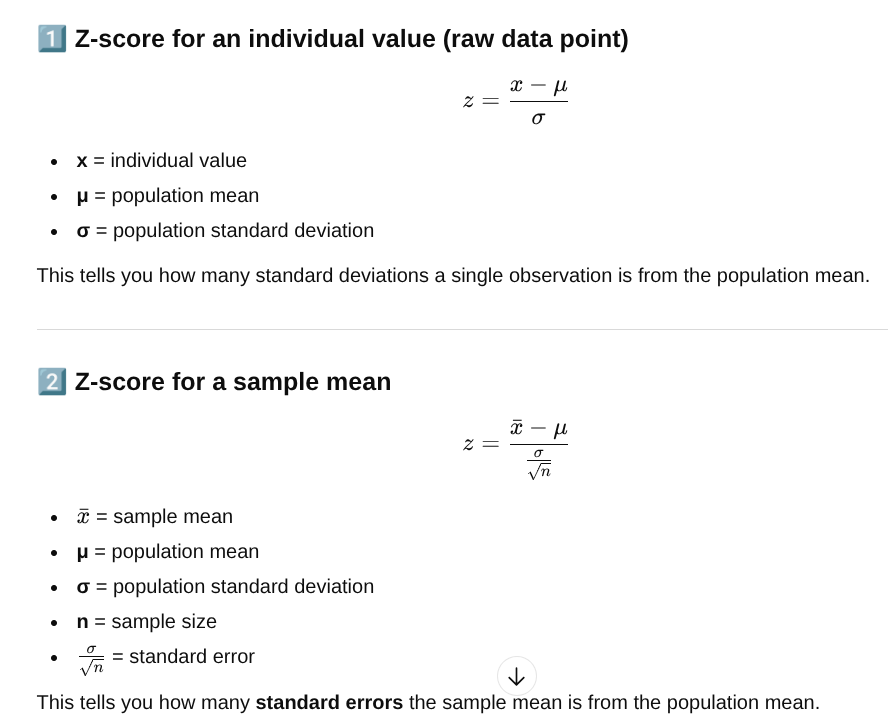

what exactly does the z score/distribution tell you?

It tells you how far a value (either a value obtained from an individual in the sample, or a sample mean) is from the mean, measured in standard deviations. From it you can obtain the probability of a value as extreme as or more extreme than z occurring, given that the null is true.

Every z score corresponds to a specific area under the curve (+ or -). The table will tell you the area under the curve to the extreme of the z score. So if you have a negative z score, the table tells you the area to the left of that. If you have a positive z score, the table tells you the area under the curve to the right of that.

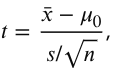
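
A minimal sketch of the z-score lookup described above, using Python's built-in `statistics.NormalDist` in place of a printed table. The population mean, standard deviation, and value are hypothetical numbers chosen for illustration.

```python
from statistics import NormalDist

mu, sigma = 100.0, 15.0   # hypothetical population mean and standard deviation
value = 130.0             # hypothetical observation

# z = how many standard deviations the value is from the mean
z = (value - mu) / sigma

std_normal = NormalDist()  # standard normal: mean 0, SD 1

# Area "to the extreme" of z: right tail for a positive z, left tail for a negative z
if z >= 0:
    tail_area = 1 - std_normal.cdf(z)
else:
    tail_area = std_normal.cdf(z)

print(z, round(tail_area, 4))
```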

what exactly does the t score/distribution tell you, how does it relate to p?

How many standard errors your sample statistic is away from another mean (population or other sample)

A t value measures how many standard errors the sample mean is away from the hypothesized population mean, or how far away it is from the null expectation. That t value is then used to calculate a p-value, which represents the probability of observing that t value or one more extreme given the null hypothesis is true. (asking: how surprising is it that we got this t value?)

one sample t test

Tells you whether the sample came from the population we are testing against. Null is that there is no difference between the mean of the population the sample came from and the hypothesized population mean, so the sample came from that population.

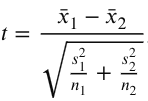
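
A rough sketch of a one-sample t calculation, assuming the usual form t = (ȳ − μ₀) / SEȳ with SEȳ = s/√n. The sample, the hypothesized mean `mu_0`, and the choice of a two-tailed test are made up for illustration. The critical value 2.365 is the standard table entry for α(2) = 0.05 with df = 7. An exact p-value would need a t table or a statistics library, so this sketch only compares t to the critical value.

```python
import math
from statistics import mean, stdev

sample = [2, 4, 4, 4, 5, 5, 7, 9]  # hypothetical measurements
mu_0 = 3.0                         # hypothesized population mean (assumption)

y_bar = mean(sample)
s = stdev(sample)                   # sample standard deviation (n - 1 denominator)
se = s / math.sqrt(len(sample))     # standard error of the mean
t = (y_bar - mu_0) / se             # standard errors between y_bar and mu_0
df = len(sample) - 1

t_cv = 2.365                        # table value for alpha(2) = 0.05, df = 7
reject_null = abs(t) > t_cv
print(round(t, 3), df, reject_null)
```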

two sample t test

Tests whether an experimental group represents a different population than the control, or whether two independent groups are different from each other (NO OVERLAP BETWEEN GROUPS). The null is that there is no difference between the two. (example: drug vs. control in different patients, male vs. female students)

Can't just add the two standard errors because that inflates variability. This formula helps avoid the assumption of equal variances between the two samples.

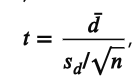
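
The formula in the image is not reproduced in the text, so the following is only a sketch under an assumption: it uses the common unequal-variance form SE = √(s₁²/n₁ + s₂²/n₂). Both groups are hypothetical data. It also shows that simply adding the two standard errors gives a larger (inflated) value.

```python
import math
from statistics import mean, variance

group_1 = [2, 4, 4, 4, 5, 5, 7, 9]  # hypothetical group (e.g. drug)
group_2 = [1, 2, 3, 4, 5]           # hypothetical independent group (e.g. control)

# Assumed formula: standard error of the difference between two independent means
se_diff = math.sqrt(variance(group_1) / len(group_1)
                    + variance(group_2) / len(group_2))

# Naive alternative: adding the two standard errors overstates the variability
se_1 = math.sqrt(variance(group_1) / len(group_1))
se_2 = math.sqrt(variance(group_2) / len(group_2))
naive_sum = se_1 + se_2

t = (mean(group_1) - mean(group_2)) / se_diff
print(round(se_diff, 4), round(naive_sum, 4), round(t, 4))
```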

paired t test

Compares whether two related/non-independent groups are significantly different from each other. Null is that there is no difference. (example: brain vs. body temp in the same animal, same patients before and after a drug, identical twins with one receiving drug a and one receiving drug b)

how to calculate different confidence intervals

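one sample: ȳ ± t(CV) × SEȳ

for t(CV), find the critical value using the df

two sample

(ȳ1 − ȳ2) ± t(CV) × SE(ȳ1 − ȳ2), where SE(ȳ1 − ȳ2) is the standard error of the difference between the two means (not SEȳ1 minus SEȳ2)

paired

same as one sample but with d̄ (the mean of the differences) in place of ȳ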
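
A small sketch of the one-sample interval, ȳ ± t(CV) × SEȳ. The sample is hypothetical, and 2.365 is the standard table value for α(2) = 0.05 with df = 7 (a 95% interval).

```python
import math
from statistics import mean, stdev

sample = [2, 4, 4, 4, 5, 5, 7, 9]  # hypothetical measurements
t_cv = 2.365                       # table value for alpha(2) = 0.05, df = n - 1 = 7

y_bar = mean(sample)
se = stdev(sample) / math.sqrt(len(sample))
margin = t_cv * se

ci_low, ci_high = y_bar - margin, y_bar + margin
print(round(ci_low, 3), round(ci_high, 3))
```

For a paired design, the same code would be run on the list of differences instead of the raw values.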

what exactly is the definition of a confidence interval

for estimating μ and making a confidence interval: we are 95% confident that μ lies between ȳ − 2SEȳ and ȳ + 2SEȳ

for sampling distribution/meta experiment: 95% of y bars in a sampling distribution lie between ±2SEy from Mu

for a population distribution: 95% of yi's in a population lie between ±2sigma of Mu

strategy of finding d in a paired t test??

It depends on what you are looking for in an experiment and what your null hypothesis is. You can technically subtract in either order (first − second or second − first); you just have to make sure your wording is consistent. You can set the t equation to give you a positive t, which means that the mean of the first group is HIGHER than that of the second group (aka there was a decrease from the first to second group).

You can also set the equation to give you a negative t, which means the mean of the first group is LOWER than the second group (aka there was an increase from the first to second group)
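
A sketch of how the subtraction order sets the sign of t in a paired test, assuming t = d̄ / SE of d̄. The before and after values are hypothetical, and `paired_t` is an illustrative helper, not a library function.

```python
import math
from statistics import mean, stdev

before = [10, 12, 9, 11]  # hypothetical first measurements
after = [8, 11, 7, 10]    # hypothetical second measurements on the same subjects

def paired_t(first, second):
    """t for paired data, with d defined as first minus second."""
    d = [a - b for a, b in zip(first, second)]
    d_bar = mean(d)
    se_d = stdev(d) / math.sqrt(len(d))
    return d_bar / se_d

t_first_minus_second = paired_t(before, after)  # positive: first group is higher
t_second_minus_first = paired_t(after, before)  # negative: same data, order flipped
print(round(t_first_minus_second, 3), round(t_second_minus_first, 3))
```

The magnitude is identical either way; only the sign and the wording of the conclusion change.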

critical value/how to interpret it

A critical value is a threshold that tells you how extreme a test statistic has to be to reject the null hypothesis.

when to use α(1) vs α(2) in a t-dist

Depends on where you want to load confidence. Use α(1) (one tail) when the hypothesis is directional: you want to see if a mean is less than, or more than, some value, rather than just different. Use α(2) (two tails) when the hypothesis is not directional and you want to test both extremes: is the sample mean unequal to the pop. mean, in either direction?

example: use α(1) when you want to test if the sample mean is larger than the population mean. All of α goes in the right tail (so the confidence area sits to its left), because you are looking for the probability of the sample mean falling in that upper tail, aka above the population mean.
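
A sketch of the decision rule for one-tailed versus two-tailed tests. `rejects_null` is a hypothetical helper written for this example, and the t value is made up. The critical values are the standard table entries for df = 7: 2.365 for α(2) = 0.05 and 1.895 for α(1) = 0.05.

```python
def rejects_null(t, t_cv, tails):
    """Return True if t falls in the rejection region.

    t_cv is the positive table value; tails is "two", "right", or "left".
    """
    if tails == "two":
        return abs(t) > t_cv
    if tails == "right":
        return t > t_cv
    if tails == "left":
        return t < -t_cv
    raise ValueError("tails must be 'two', 'right', or 'left'")

t = 2.0  # hypothetical test statistic with df = 7

two_tailed = rejects_null(t, 2.365, "two")      # alpha(2) = 0.05
right_tailed = rejects_null(t, 1.895, "right")  # alpha(1) = 0.05, expecting t > 0
left_tailed = rejects_null(t, 1.895, "left")    # alpha(1) = 0.05, expecting t < 0
print(two_tailed, right_tailed, left_tailed)
```

The same t can be significant in a one-tailed test and not in a two-tailed test, which is why the direction has to be chosen before looking at the data.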

making conclusions???

Include whether there is a significant difference between the two means. Then apply that if possible. What could be causing the absence/presence of this difference?

define a normal distribution and its equation

A continuous probability distribution with a bell-shaped curve that can be used to approximate frequency distributions.

Qualities of the normal distribution

has a function for a bell curve

mean=median=mode

empirical rules apply

no excess kurtosis (neither leptokurtic nor platykurtic)

(can be applied to the probability density function → probability of a variable falling into a specific range across the distribution; the prob. density is highest at the mean)
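
The standard equation for the normal probability density is f(y) = 1 / (σ√(2π)) × e^(−(y − μ)² / (2σ²)). A minimal sketch of it follows; `normal_pdf` is an illustrative name, and the result is checked against Python's built-in `statistics.NormalDist`.

```python
import math
from statistics import NormalDist

def normal_pdf(y, mu, sigma):
    """Probability density of a normal distribution at y."""
    exponent = -((y - mu) ** 2) / (2 * sigma ** 2)
    return math.exp(exponent) / (sigma * math.sqrt(2 * math.pi))

peak = normal_pdf(0.0, 0.0, 1.0)      # density at the mean of a standard normal
off_peak = normal_pdf(1.0, 0.0, 1.0)  # density one SD above the mean
builtin = NormalDist(0.0, 1.0).pdf(1.0)
print(round(peak, 4), round(off_peak, 4), round(builtin, 4))
```

The density is highest at the mean and falls off symmetrically on both sides.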

list the empirical rules in the context of a confidence interval and estimating mu

ȳ±1(SEȳ) →_a_<μ<_b_

I am 68% CONFIDENT that μ is between a and b.

ȳ±2(SEȳ) →_a_<μ<_b_

I am 95% CONFIDENT that μ is between a and b.

ȳ±3(SEȳ) →_a_<μ<_b_

I am 99.7% CONFIDENT that μ is between a and b.

list the empirical rules in the context of a sampling distribution

~68% of sample means (y-bars) should lie within ±1SE of µ

~95% of sample means (y-bars) should lie within ±2SE of µ

~99.7% of sample means (y-bars) should lie within ±3SE of µ

list the empirical rules in the context of a population distribution

~68% of values in the population (yi's) should lie within ±1σ of µ

~95% of values in the population (yi's) should lie within ±2σ of µ

~99.7% of values in the population (yi's) should lie within ±3σ of µ
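
The 68 / 95 / 99.7 figures can be checked against the standard normal curve. This sketch uses Python's built-in `statistics.NormalDist`; the same areas apply whether the unit is σ (population distribution) or SE (sampling distribution).

```python
from statistics import NormalDist

std_normal = NormalDist()  # mean 0, SD 1

# Area under the curve within k standard deviations of the mean
within = {k: std_normal.cdf(k) - std_normal.cdf(-k) for k in (1, 2, 3)}

for k, area in within.items():
    print(k, round(area, 4))
```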

The four moments

location, measured by the mean

spread, measured by variance and standard deviation

symmetry, measured by skewness, how close mean and median are.

positive skew means the outliers are high values (mean is bigger than the median)

negative skew means the outliers are low values (mean is smaller than the median)

peakedness, measured by kurtosis

can be leptokurtic (positive kurtosis, high peak)

platykurtic (negative kurtosis, flat curve)
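
A sketch of computing the four moments by hand. `four_moments` is a hypothetical helper, and the skewness and kurtosis formulas are assumptions: the simple moment-based versions (m₃ / m₂^1.5 and m₄ / m₂² − 3). Textbooks and software often apply small-sample corrections that give slightly different numbers.

```python
def four_moments(values):
    """Return mean, sample variance, skewness, and excess kurtosis."""
    n = len(values)
    mean = sum(values) / n
    # Central moments (divide by n)
    m2 = sum((v - mean) ** 2 for v in values) / n
    m3 = sum((v - mean) ** 3 for v in values) / n
    m4 = sum((v - mean) ** 4 for v in values) / n
    return {
        "mean": mean,                                             # location
        "variance": sum((v - mean) ** 2 for v in values) / (n - 1),  # spread
        "skewness": m3 / m2 ** 1.5,                               # symmetry
        "excess_kurtosis": m4 / m2 ** 2 - 3,                      # peakedness
    }

symmetric = four_moments([1, 2, 3, 4, 5])     # hypothetical symmetric data
right_skewed = four_moments([1, 1, 1, 2, 10]) # hypothetical data with a high outlier
print(symmetric)
print(right_skewed)
```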

Statistical errors

experimenter error (accumulating inaccuracies through carrying out an experiment, caused by the experimenter themselves)

chance error (error due to small sampling. Can be mitigated by increasing n. This decreases standard error because it equals s/√n)

statistical error (type I: aka alpha error, you reject the null but it was actually true

type II: aka beta error, you retain the null but it was actually false)

Power

the ability to reject the null when you should (that is, when the null is actually false); power = 1 − β

can be affected by

raising sample size (increases power)

increasing alpha (increases power)

increasing effect size (increases power, because the difference is larger relative to the standard error)

inherent variability (more variability decreases power, because it increases standard error)
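
A sketch of how each factor above moves power. To stay within the standard library it assumes a simplified setting: a right-tailed z test with known σ rather than a t test. `power_one_tailed_z` and all the numbers are hypothetical.

```python
import math
from statistics import NormalDist

def power_one_tailed_z(effect, sigma, n, alpha):
    """Power of a right-tailed z test when the true mean is mu_0 + effect."""
    std_normal = NormalDist()
    z_cv = std_normal.inv_cdf(1 - alpha)  # critical value for the chosen alpha
    se = sigma / math.sqrt(n)             # standard error of the mean
    # Probability that the test statistic lands beyond the critical value
    return 1 - std_normal.cdf(z_cv - effect / se)

baseline = power_one_tailed_z(effect=2, sigma=10, n=25, alpha=0.05)
bigger_n = power_one_tailed_z(effect=2, sigma=10, n=100, alpha=0.05)
bigger_alpha = power_one_tailed_z(effect=2, sigma=10, n=25, alpha=0.10)
bigger_effect = power_one_tailed_z(effect=4, sigma=10, n=25, alpha=0.05)
more_variability = power_one_tailed_z(effect=2, sigma=20, n=25, alpha=0.05)

print(round(baseline, 3), round(bigger_n, 3), round(bigger_alpha, 3),
      round(bigger_effect, 3), round(more_variability, 3))
```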

Definition of P-value

The probability of obtaining a test statistic as extreme or more extreme than the one obtained given that the null hypothesis is true

Why do we never use confidence intervals to test hypotheses?

A 95% CI is equivalent to a two-tailed test at α = 0.05. If your hypothesis is one-tailed, the CI doesn’t match your α correctly.

doesn’t give you an exact p-value

however, if t is more extreme than t(CV), the null is rejected at that α

What is standard deviation? What is standard error? What do they tell us about data?

s=typical distance of values from sample mean

SEy=standard error of the mean, measures how precisely sample mean represents population mean
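
A short sketch of the difference between s and SEȳ = s/√n. The sample is hypothetical, and the larger sample sizes assume, purely for illustration, that s stays the same as n grows.

```python
import math
from statistics import stdev

sample = [4, 6, 5, 7, 3]  # hypothetical measurements

s = stdev(sample)                    # typical distance of values from the sample mean
se = s / math.sqrt(len(sample))      # precision of the sample mean as an estimate of mu

# Holding s fixed (an assumption), the standard error shrinks as n increases
se_by_n = {n: s / math.sqrt(n) for n in (5, 20, 80)}
print(round(s, 4), round(se, 4), se_by_n)
```

Quadrupling n halves the standard error, while s itself describes the spread of the data and does not shrink just because more data are collected.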

chi square gof vs test for independence

χ² = Σ [(O − E)² / E], summed over every category (or cell)

goodness of fit: there is one nominal variable, tests against model for random chance

independence: you have two nominal variables, calculates expected frequency from the data we collect
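
A sketch of the test for independence, showing how the expected frequencies are calculated from the collected data (row total × column total / grand total). The 2 × 2 table is hypothetical, and the p-value shortcut with `math.erfc` is valid only for df = 1.

```python
import math

# Hypothetical 2 x 2 table of observed counts (rows: variable A, columns: variable B)
table = [[10, 20],
         [20, 10]]

row_totals = [sum(row) for row in table]
col_totals = [sum(col) for col in zip(*table)]
n = sum(row_totals)

# Expected count for each cell if the two variables were independent
expected = [[r * c / n for c in col_totals] for r in row_totals]

chi2 = sum((o - e) ** 2 / e
           for obs_row, exp_row in zip(table, expected)
           for o, e in zip(obs_row, exp_row))
df = (len(table) - 1) * (len(table[0]) - 1)

# Assumption: this closed form for the right-tail area holds for df = 1 only
assert df == 1
p_value = math.erfc(math.sqrt(chi2 / 2))
print(expected, round(chi2, 4), round(p_value, 4))
```

For a goodness-of-fit test, the same χ² sum is used, but the expected counts come from the model rather than from the row and column totals.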

4 assumptions of a t test

representative samples

each sample in the study comes from a normally distributed population

if more than 1 sample, the samples are independent (for a two sample t test, not paired)

if more than 1 sample, there are equal variances among samples

z sub .7 meaning

z score at which the area to the right is .7 (so it is asking for 30th percentile)
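
A quick check of this reading of z sub .7 with Python's built-in `statistics.NormalDist`: if the area to the right is 0.7, the area to the left is 0.3, so the z score is the 30th percentile of the standard normal.

```python
from statistics import NormalDist

area_right = 0.7
area_left = 1 - area_right               # 0.3, i.e. the 30th percentile

z = NormalDist().inv_cdf(area_left)      # z score with that much area to its left
print(round(z, 4))
```

The result is negative, as expected for any percentile below the 50th.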