Lecture 9


LLM Crohn’s Interviews: Evidence-based decision making

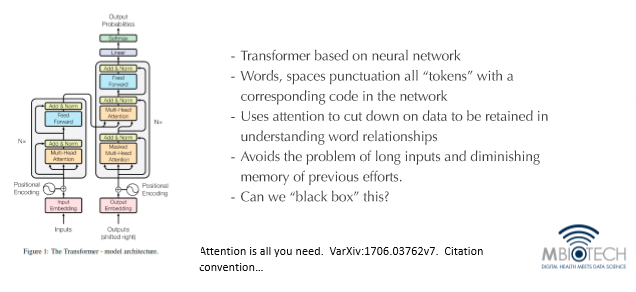

● Engine of large language models

● Fundamental contribution/invention of transformer model: attention

○ Past neural networks: long sentences are a problem

○ Now: system can handle an entire field

■ Focused on a few words at a time, carefully structured by “few other tools”

○ Transformer model sits on a foundation of a neural network

Strategy: Use Disease Expertise to interrogate LLM decision making

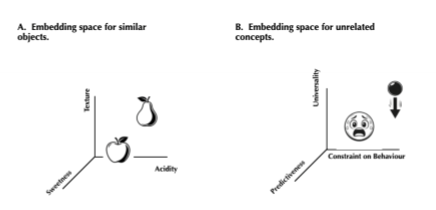

What is an Embedding Space

● Created dimensions to meaningfully distinguish a pear and an apple

○ To not confuse one with another

● Location of pear in coordinate space = embedding or vector

○ Vector - array of coordinates

● Embedding space - Assigning meaning to words or concepts

○ The closer things are, the more similar they are

○ Can be more than 3 dimensions

○ ChatGPT: ~2,000 dimensions

● Gravity and Fear (emotion) on Figure B

○ One embedding space can hold dissimilar concepts

○ Can work on concepts, not just objects
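A minimal sketch of "closer = more similar", assuming a made-up 3-dimensional embedding space. The coordinates and the names `EMBEDDINGS` and `distance` are invented for illustration; real models learn their coordinates and use far more dimensions.

```python
import math

# Hypothetical 3-D coordinates (invented values, not from any real model).
EMBEDDINGS = {
    "pear":  (0.9, 0.8, 0.1),
    "apple": (0.8, 0.9, 0.1),
    "car":   (0.1, 0.0, 0.9),
}

def distance(word_a, word_b):
    """Straight-line (Euclidean) distance between two embeddings."""
    return math.dist(EMBEDDINGS[word_a], EMBEDDINGS[word_b])

# A smaller distance means the two items are treated as more similar.
print("pear-apple:", round(distance("pear", "apple"), 3))
print("pear-car:  ", round(distance("pear", "car"), 3))
```

In this toy space the pear sits close to the apple and far from the car, but the two fruits still have different coordinates, so they are not confused with one another.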

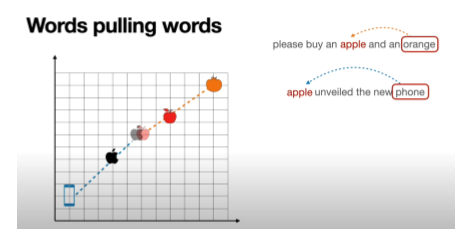

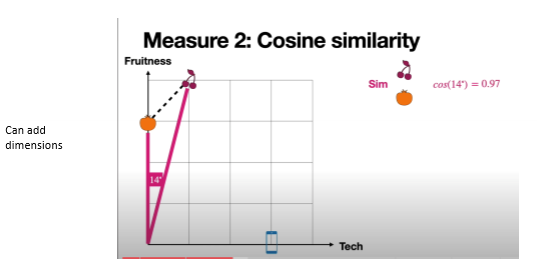

Embedding: Setting up similarity scores

● Apple the fruit or Apple the company?

○ Relate to other words in a sentence

Similarity Scoring

● Similarity expressed as an angle measured at the origin of the embedding space

○ Look at the angle between the 2 vectors pointing to the 2 points of reference (smaller angle = more similar)

● When in same sentence: how close they are in the embedding space
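Scoring by angle is commonly done with cosine similarity. The sketch below assumes that is the measure meant here; the 2-D vectors for "apple", "banana" and "laptop" are invented for illustration.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between vectors a and b, measured at the origin."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def angle_degrees(a, b):
    """Angle between a and b; the clamp guards against rounding error."""
    cos = max(-1.0, min(1.0, cosine_similarity(a, b)))
    return math.degrees(math.acos(cos))

# Hypothetical 2-D embeddings (invented values).
apple, banana, laptop = (0.7, 0.6), (0.9, 0.2), (0.1, 0.9)

print("apple vs banana:", round(angle_degrees(apple, banana), 1), "degrees")
print("apple vs laptop:", round(angle_degrees(apple, laptop), 1), "degrees")
```

A score of 1 means the vectors point the same way, 0 means they are at right angles. Only direction matters, not vector length.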

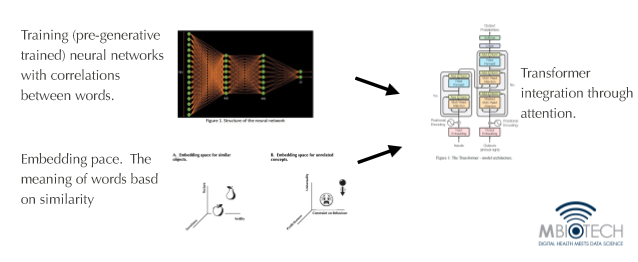

Transformer based large language models

● Most language models use transformer-based models

○ Transformer is the revolution

○ Transformer plays an integrative role between two areas:

■ Embedding space - helps in understanding meaning of concepts or words based on how similar they are in that space

■ Neural network - foundational core

● Pretraining is just training

○ Neural network is trained on large volume of data/literature from different disciplines

○ Develop correlations between words within this training

■ Apple associated with sweetness, but not typically associated with automotive

○ Transformer model integrates pretraining and embedding space through its attention mechanism to read a sentence

■ Sees apple = knows what the apple is referring to
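A toy sketch of how attention can use the other words in a sentence to settle what "apple" means. It is heavily simplified: the 2-D embeddings are invented, and the queries, keys and values are the raw embeddings, whereas a real transformer uses learned projections. The names `EMB`, `attend` and `contextual` are illustrative.

```python
import math

# Hypothetical 2-D embeddings: (food-ness, tech-ness). Invented values.
EMB = {
    "apple":  [1.0, 1.0],   # ambiguous on its own
    "pie":    [2.0, 0.0],
    "iphone": [0.0, 2.0],
}

def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    weights = softmax(scores)
    return [sum(w * v[i] for w, v in zip(weights, values)) for i in range(d)]

def contextual(word, sentence):
    """Representation of `word` after it attends to every word in `sentence`."""
    vectors = [EMB[w] for w in sentence]
    return attend(EMB[word], vectors, vectors)

in_food = contextual("apple", ["apple", "pie"])
in_tech = contextual("apple", ["apple", "iphone"])
print("apple next to pie:   ", in_food)
print("apple next to iphone:", in_tech)
```

The same starting vector for "apple" is pulled toward the food axis in one sentence and toward the tech axis in the other, which is the disambiguation described above.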

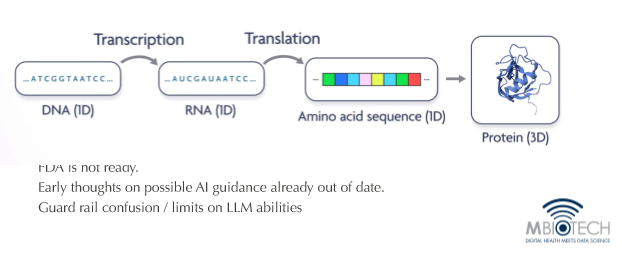

The problem in protein structure to be solved

● Challenge: Amino acid sequence and protein

○ How does the amino acid sequence give rise to 3d structure of protein?

○ Practical POV: DNA is relevant in its capacity to produce proteins

■ Proteins are the endgame

■ No point in discussing DNA if it does not affect proteins in any way

○ Better understand amino acid sequence

■ To create new 3d structures of proteins and modify existing 3d structures of proteins (a lot of work and expensive)

○ Protein reconstruction and development is nowhere near as easy as sequencing DNA

○ We need to understand this transition better

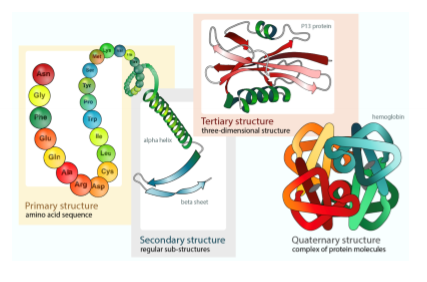

Protein folding (conformation) Review:

● AA sequence: primary structure

○ Can form secondary structures

● Main concern: tertiary structures ➡ conformation

○ Undergoing changes all the time, not static!

● Quaternary structure: assembly of protein using other proteins

○ Not really going through this

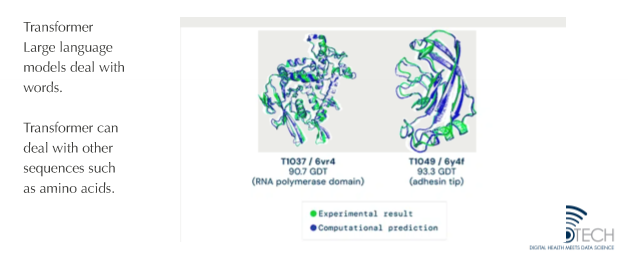

AlphaFold Evidence that AI can crack fundamental problems in protein folding

● First time a computer program successfully predicted the tertiary (3d) structure of a protein from its primary structure (breakthrough)

● LLM and Protein Structure

○ Concept: continuous information (one word follows another word follows another word)

○ Protein: one aa follows another aa follows another aa…

○ Repurposing LLM based on transformers, but talking about amino acids

■ Aa became the language of LLMs
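A minimal sketch of treating amino acids as the "words" of a language model: each of the 20 standard amino acids gets an integer id, just as a text model assigns ids to word pieces. The id ordering and the example peptide are arbitrary choices made for illustration, not AlphaFold's actual encoding.

```python
# One-letter codes of the 20 standard amino acids.
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

# Vocabulary: amino acid -> integer token id (the ordering is an arbitrary assumption).
TOKEN_ID = {aa: i for i, aa in enumerate(AMINO_ACIDS)}

def tokenize(sequence):
    """Convert a primary structure (string of one-letter codes) into token ids."""
    return [TOKEN_ID[aa] for aa in sequence.upper()]

peptide = "MKTAY"  # invented example sequence
print(tokenize(peptide))
```

Once the sequence is a list of ids, it can be fed to a transformer the same way a tokenized sentence would be.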

DeepMind (Google) new product

● AlphaFold: application of AI that allows us to predict how proteins will fold, allowing us to discover new proteins

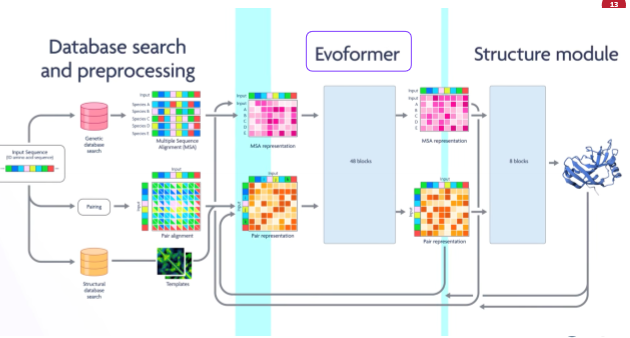

● What’s going on with AlphaFold

○ Integrates evolutionary information and information from known protein structures

○ Key: evoformer - use of a transformer for serial positioning of aa

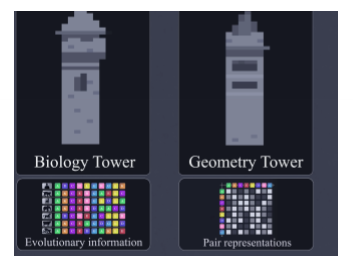

● Integration of information from biology AND pure geometry

AlphaFold

Workflow of AlphaFold

Integrates evolutionary information and information from known protein structures

Predicting Protein Conformation: Two data sources used by AlphaFold

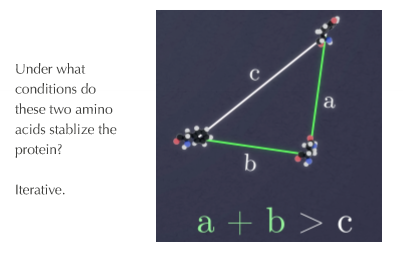

Geometry Tower: Triplet attention or the “Triangle Inequality”

● Comparing the locations of 2 aa = finding the configuration of the 2 aa, the way they interact, and how they stabilize each other

● Can be done with multiple aa at the same time
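The geometric constraint behind reasoning over triplets is usually stated as the triangle inequality: for any three residues i, j, k, the distance i-k cannot exceed distance i-j plus distance j-k. The sketch below only checks that constraint on toy numbers; it is not AlphaFold's attention code, and the coordinates are invented.

```python
import math

def pairwise(a, b, c):
    """Distances (a-b, b-c, a-c) between three 3-D points."""
    return math.dist(a, b), math.dist(b, c), math.dist(a, c)

def satisfies_triangle(d_ij, d_jk, d_ik, tol=1e-9):
    """True if three pairwise distances could belong to real points in space."""
    return (d_ik <= d_ij + d_jk + tol
            and d_ij <= d_ik + d_jk + tol
            and d_jk <= d_ij + d_ik + tol)

# Distances measured from actual coordinates are always consistent.
real = pairwise((0, 0, 0), (3, 0, 0), (3, 4, 0))
print(real, satisfies_triangle(*real))

# A set of independently guessed distances may not be.
guessed = (1.0, 1.0, 5.0)
print(guessed, satisfies_triangle(*guessed))
```

If two residues are each 1 unit from a third, they cannot be 5 units from each other, so looking at triplets lets a model rule out pairwise predictions that no 3d structure could satisfy.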

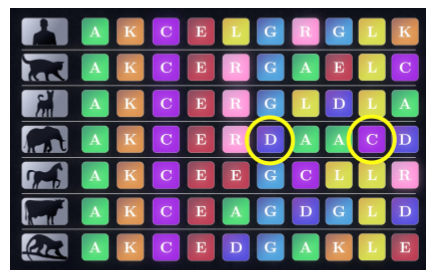

Using evolutionary history to add to Data about amino acid positions to predict protein structure

● Conserved when consistently found across species

● Stability of protein when found in a row
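One simple way to see conservation is to line up the same protein from several species and ask, position by position, how often the most common amino acid appears. The aligned sequences below are invented, and this per-column score is only an illustrative measure, not the evolutionary representation AlphaFold actually uses.

```python
from collections import Counter

def column_conservation(alignment):
    """Fraction of sequences sharing the most common residue at each position."""
    scores = []
    for column in zip(*alignment):  # one column = same position across species
        most_common_count = Counter(column).most_common(1)[0][1]
        scores.append(most_common_count / len(column))
    return scores

# Hypothetical aligned sequences of one protein from four species.
alignment = [
    "MKTAY",
    "MKSAY",
    "MRTAY",
    "MKTGY",
]

conservation = column_conservation(alignment)
for position, score in enumerate(conservation, start=1):
    print(position, score)
```

Positions that score 1.0 never change across the species in the alignment, which suggests they matter for the structure or function of the protein.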

AlphaFold: Seismic acceleration of protein structure discovery

Drawing on information on what amino acids are conserved

● What aa are conserved in context of other aa

● Range of aa combination in terms of sequence and 3d structure

● From ~150,000 known protein structures before to ~250 million predicted structures nowadays

● Sets a stage for practical application

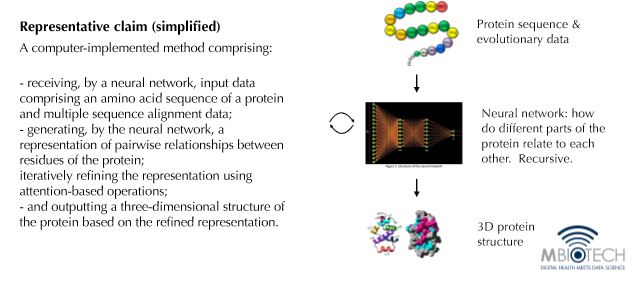

AI method claims for AlphaFold

Method Claims for AlphaFold (how it is patented)

● Limited patent: early stages were not patented

○ Publish first before patenting

■ Issues with prior art; 1 year grace period before deciding to patent in USA and Canada

● 1st claim (method claim): take primary structure of protein, combine with known evo data, and combine with neural network training

○ Primary sequence data + evo data + neural network = 3d protein structure

○ Not algorithmic; physical outcome

■ 3d protein structure AND primary structure of polypeptide

Creating Novel proteins: RFdiffusion

● Type in a description of the protein along with the properties it should have

○ “Generate protein”

● Creating novel functional proteins

Benefits of predicting protein tertiary structure

1. Discovering drugs

● Biologic - proteins

2. Effect of genetic variants

● What genetic variation means in terms of impact

3. Modeling protein-protein interactions

● Drug and binding site (back to biologics)

4. Engineering artificial proteins