L2: Rise of neural networks and deep learning

Training

Input data into neural networks

Choose algorithms

Feed data and adjust parameters

Evaluate generalisation to real data

Prediction

Receive data

Generate predictions based on patterns

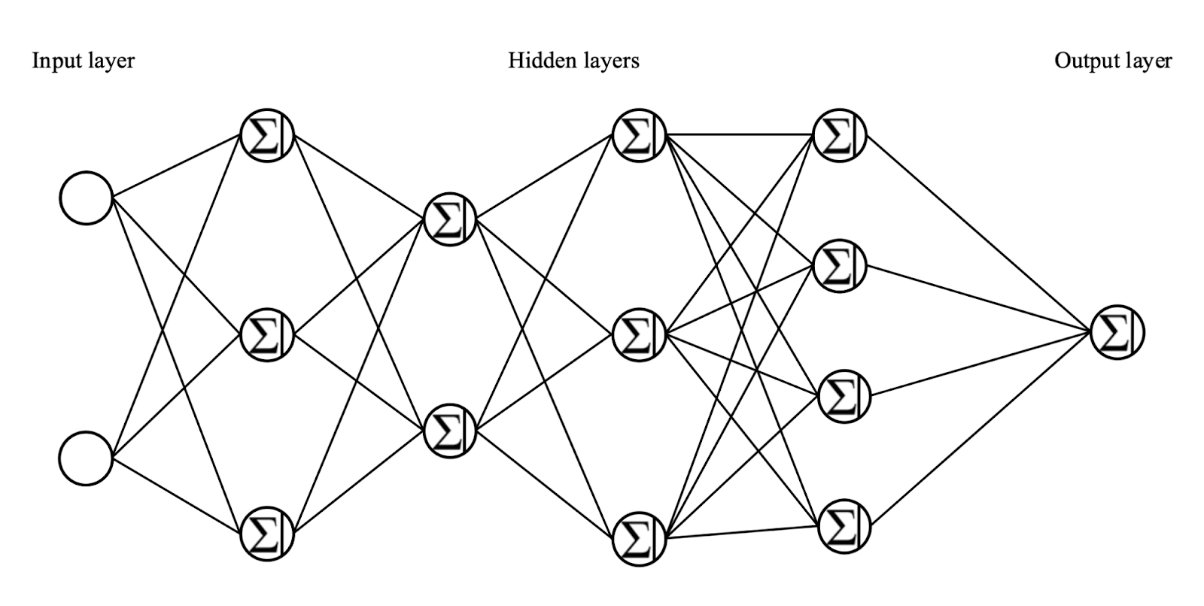

Structure of a deep network

Hidden layer: Column of neurons between the input and output layers

Deep network: Machine learning model with multiple hidden layers of interconnected nodes between input and output layers

Hyperparameters

Define the structure of the neural network

E.g. number of hidden layers, neurons per layer

Forward propagation

Synapse takes value from input and multiplies it by its weight

Neuron adds output of all synapses and applies activation function

Synapse weight → Connection strength
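
The forward step for one neuron can be sketched as below. This is a minimal illustration, not a full network: the sigmoid activation and the names `sigmoid` and `neuron_output` are assumptions chosen for the example, since the notes do not name a specific activation function.

```python
import math

def sigmoid(z):
    # Assumed activation function; the notes do not specify one.
    return 1.0 / (1.0 + math.exp(-z))

def neuron_output(inputs, weights, activation=sigmoid):
    # Each synapse multiplies its input value by its weight.
    synapse_outputs = [x * w for x, w in zip(inputs, weights)]
    # The neuron adds the outputs of all synapses and applies the activation.
    return activation(sum(synapse_outputs))

# 1.0 * 0.5 + 2.0 * (-0.25) = 0.0, and sigmoid(0.0) = 0.5
print(neuron_output([1.0, 2.0], [0.5, -0.25]))
```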

Trained network

Gives the correct output (score) for the training data

Weights = Trainable parameters

Neural network is like a machine with knobs (e.g. synthesizer)

Weights = knobs

Training = turning knobs until output sounds right

Large models can have 500B+ trainable parameters
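
To see how the parameter count grows with the hyperparameters, here is a rough sketch that counts weights only. It assumes a fully connected network in which every neuron links to every neuron in the next layer, and it leaves out bias terms because the notes do not mention them. `count_weights` is a hypothetical helper name.

```python
def count_weights(layer_sizes):
    # One weight per synapse between each pair of adjacent layers
    # (assumes fully connected layers, no bias terms).
    return sum(a * b for a, b in zip(layer_sizes, layer_sizes[1:]))

# 3 inputs, two hidden layers of 4 neurons, 1 output:
# 3*4 + 4*4 + 4*1 = 32 weights
print(count_weights([3, 4, 4, 1]))
```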

How do you train large neural networks?

Initialise with random weights

Measure the error

J = how wrong the model is

Minimise error → Adjust parameters so J gets smaller
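
One common way to define J is the mean squared error between predictions and targets. The notes do not say which error measure is used, so treat this as an assumed example.

```python
def mean_squared_error(predictions, targets):
    # Average of the squared differences: larger J means a more wrong model.
    squared = [(p - t) ** 2 for p, t in zip(predictions, targets)]
    return sum(squared) / len(targets)

# Differences are 0.0 and -2.0, so J = (0 + 4) / 2 = 2.0
print(mean_squared_error([1.0, 2.0], [1.0, 4.0]))
```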

Naive (Brute-force) approach

Try every knob position

Find where J is lowest

IMPOSSIBLE in practice: the number of combinations grows exponentially with the number of weights
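
A quick count shows why trying every knob position does not scale. The sketch assumes each weight is limited to a fixed number of candidate positions (10 here, an arbitrary choice). `brute_force_evaluations` is a hypothetical helper.

```python
def brute_force_evaluations(num_weights, positions_per_weight):
    # Every combination of knob positions needs its own evaluation of J.
    return positions_per_weight ** num_weights

for n in (1, 10, 100):
    # With 10 positions per weight, 100 weights already need 10**100 evaluations.
    print(n, brute_force_evaluations(n, 10))
```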

Minimisation: Backpropagation

Use backpropagation to compute gradients, then update weights with gradient descent

Works for deep networks

How to do backpropagation?

Randomly pick value for w

Pick two more values close to w, one on each side

Compute gradient (slope of error)

Update weights in downhill direction

Repeat until error stops decreasing (ideally near 0)
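
The steps above can be sketched for a single weight as follows. This is a simplified illustration under stated assumptions. The error curve `error` is made up for the example, with its minimum at w = 3. The slope is estimated numerically from two nearby points, as the steps describe. Real backpropagation computes the same slope analytically with the chain rule instead. The learning rate and step count are arbitrary.

```python
import random

def error(w):
    # Hypothetical error curve J(w) with its minimum at w = 3.
    return (w - 3.0) ** 2

def estimate_slope(f, w, h=1e-4):
    # Evaluate the error at two points around w and take the slope between them.
    return (f(w + h) - f(w - h)) / (2 * h)

def gradient_descent(f, w, learning_rate=0.1, steps=200):
    for _ in range(steps):
        # Move w in the downhill direction, against the slope.
        w -= learning_rate * estimate_slope(f, w)
    return w

random.seed(0)
w_start = random.uniform(-10.0, 10.0)  # randomly pick a starting value for w
w_final = gradient_descent(error, w_start)
print(round(w_final, 3))  # ends close to 3, the minimum of the assumed curve
```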

Prediction

Learning to predict the future from the past