CGS 2545 Final Exam Review

1/139

There's no tags or description

Looks like no tags are added yet.

Name | Mastery | Learn | Test | Matching | Spaced | Call with Kai |

|---|

No analytics yet

Send a link to your students to track their progress

140 Terms

How do you recognize a poor table structure and produce a good one?

normalization

Normalization

a process that assigns attributes to entities so that data redundancies are reduced or eliminated

determination

the state in which knowing the value of one attribute makes it possible to determine the value of another attribute

What are the stages of normal forms?

UNF, 1NF, 2NF, 3NF, BCNF, 4Nf

the higher the normal form

the more JOIN operators for a specified output, the more it will cost in resources to answer queries

denormalization

a process by which a table is changed from a higher level normal form to a lower level normal form, usually to increase processing speed

Improved performance comes with

more data anomalies

Reduced performance comes with

fewer data anomalies

prime attribute or key attribute

an attribute that is part of a key or is the whole key

non-prime attribute or non-key attribute

an attribute that is not part of any candidate key

normalization is used for 2 situations

designing a new database structure based on business requirements/ in conjunction with ERD diagrams

1 multiple choice option

update anomalies

insertion anomalies

deletion anomalies

what is the main objective of normalization?

ensure each table conforms to the concept of well-formed relations

What are the characteristics of proper normalization

each table represents a single subject

3 multiple choice options

what normal forms are mainly theoretical interests and not used in a business environment

5NF and domain key normal form (DKNF)

functional dependence

the value of one or more attributes determines the value of one or more other attributes

two functional dependencies that are of particular interest

partial and transitive

partial dependency

a condition in which an attribute is dependent on only a portion (subset) of the PK

transitive dependency

a condition in which an attribute is dependent on another attribute that is not part of the PK

repeating group

a characteristic describing a group of multiple entries of the same type for a single key occurrence

what are the three steps to 1NF?

1. Eliminate repeating groups 2. Identify the PK 3. Identify all dependencies

dependency diagram

a representation of all data dependencies (PK, partial, or transitive) within a table

Conversion from normalization to 2NF steps?

1. Make new tables to eliminate partial dependencies 2. Reassign corresponding dependent attributes

conversion to 2NF to 3NF steps?

1. Make new tables to eliminate transitive dependencies 2. Reassign corresponding dependent attributes

surrogate key

a system-assigned primary key, generally numeric and auto-incremented, and other tables can use the surrogate key as their FK

atomic attribute

an attribute that cannot be further subdivided to produce meaningful components. For example, a person's last name attribute cannot be meaningfully subdivided.

granularity

refers to the level of detail represented by values stored in a table's row

data stored at the lowest level

of granularity is said to be atomic data

view

a virtual table based on a SELECT query that is saved as an object in the database

base table

the table on which a view is based

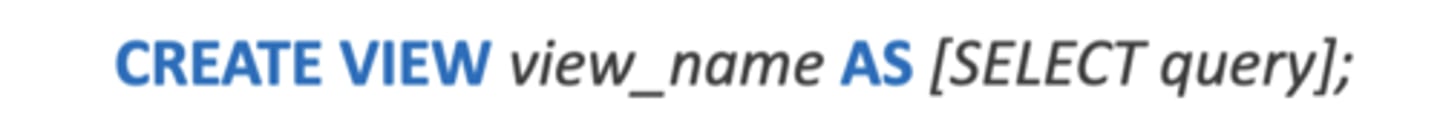

What command is used to make a view in DMBS

Create View

Batch update routine

a routine that pools transactions into a single group to update a master table in a single operation

updatable view

A view that can update attributes in base tables that are used in the view.

what are some restrictions for updatable views?

can't use GROUP BY or HAVING expressions or aggregate functions, can't use set operators such as UNION, INTERSECT, and MINUS, and for the base table to be updated must be key-preserved, meaning the values of the PK must till be unique in the view

additional updatable views restrictions

Can't use a Subquery in the Select list, Can't use a Subquery in the FROM clause

what did the SQL-99 standard define

the use of persistent stored modules, which are blocks of standard SQL statements and procedural extensions that are stored and executed at the DBMS server

PSM (persistent stored modules)

lets an admin assign specific access rights to a stored module to ensure only authorized users can use it

MS SQL Server uses what languages?

Transact-SQL and other extensions, including the .NET family

Oracle implements PSMs through

Procedural Language SQL

PSM's can be used for the following what

Triggers, Stored Procedures, Procedural SQL functions

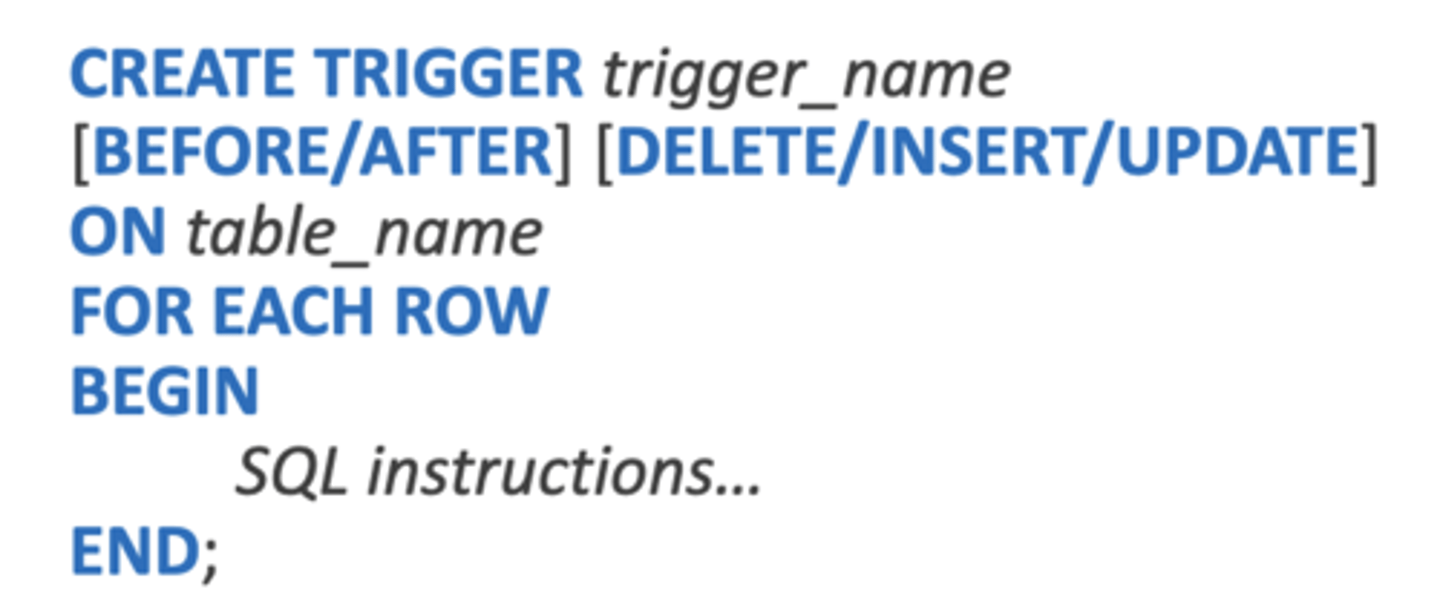

trigger

a procedural SQL code that is automatically invoked by the relational database management system when a data manipulation event occurs

key word to make a trigger in mySQL

create trigger

embedded SQL

sql statements contained in application programming languages such as C++, ASP.NET, Java

host language

any language that contained embedded SQL

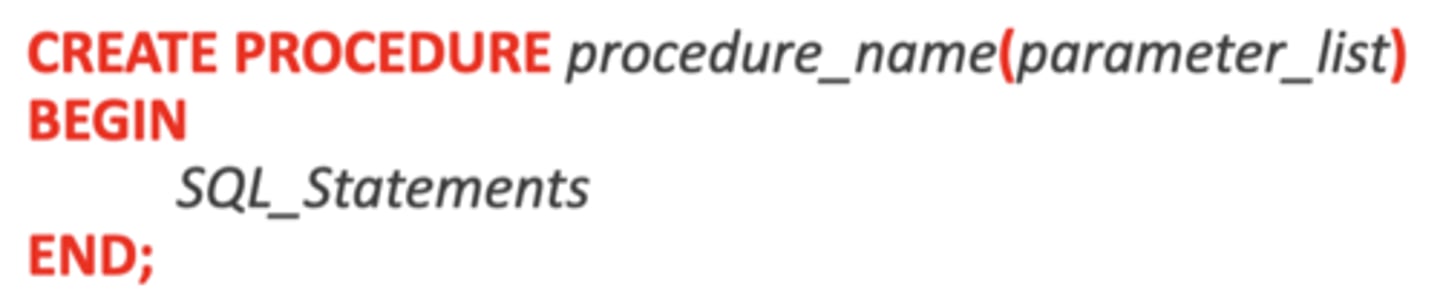

stored procedure

business logic stored on a server in the form of SQL code or another DBMS-specific procedural language

what are 2 advantages to using stored procedures

they reduce network traffic and increase performance, because the procedure is stored at the server and there is no transmission of SQL statements over the network

2 multiple choice options

Key work to make a stored procedure

create procedure

what is important to change when defining a stored procedure

the delimiter

transaction

a sequence of database requests that accesses the database; a logical unit of work that is either entirely completed or aborted

Suppose a sales rep sells a product to a customer. Suppose that the customer charges the purchase to their account. The rep will need to do these?

Write a new customer invoice, Reduce the QOH from the products inventory, Update the log of transactions, Update the customer's balance

To perform a transaction, what SQL operation would you need?

Insert a new record into an invoice table

3 multiple choice options

consistent database state

a state in which all data integrity constraints are satisfied

If any transactions fail, what will happen?

they will roll back to the original position

every transaction property has four properties, known as?

ACID (Atomicity, Consistency, Isolation, Durability)

atomiciy

all parts of a transaction are togther treated as a single, indivisible, logical unit of work

consistency

data integrity constraints are satisfied as part of the

transaction. Transactions must start and end in consistent states!

isolation

a data item used by one transaction is not available to

other transactions until the first one ends.

durability

once transaction changes are done and committed,

they cannot be undone or lost, even in the event of system failure

Serializability

the selected order of concurrent transaction operations creates the same final database state that would have been produced if the transaction had been executed in a serial fashion

transaction support is provided with 2 lines of what?

Commit; Rollback

Commit

changed made by the transaction are permanently recorded, and ended

if the end of the SQL command set is reached successfully,

all changes are permanently recorded within the database

COMMIT

if the SQL command set abnormally terminates, the

database changes are aborted and the database is reverted

back to its original state

ROLLBACK

how does a DBMS transaction keep track of the updated progress?

transaction log

concurrency control

a DBMS feature that coordinates

simultaneous execution of transactions in a multiprocessing

database system while preserving data integrity

why is it important to have a concurrency control?

because simultaneous execution of transactions over a shared database can create integrity and consistency problems, causing lost updates, uncommitted data, and inconsistent retrievals

lost update

A concurrency control problem in which a data update is lost during the concurrent execution of transactions.

uncommitted data

A concurrency control problem in which a transaction accesses uncommitted data from another transaction.

inconsistent retrieval

a concurrency control problem in

which a transaction uses an aggregate function on data

while other transactions are updating the data.

scheduler

a DBMS component that establishes the order

in which concurrent transaction operations are executed;

Serializable Schedule

a schedule of operations in which

the interleaved execution of the transactions yields the

same result as if they were executed in serial order.

lock

a device that guarantees unique use of a data item

in a particular transaction operation

Pessimistic Locking

the use of locks based on the

assumption that conflict between transactions will occur.

lock manager

a DBMS component that is responsible for

assigning and releasing locks

Lock Granularity

the level of lock use. Locking can occur

on one of many levels: database, table, page, row, or field.

Database-Level Lock

a type of lock that restricts database access to the owner of the lock and only allows one user at a time to access the database.

tabel level lock

a type of lock that allows only one

transaction at a time to access a table.

Page-Level Lock

a type of lock that restricts access to a

diskpage (a section of a disk).

row level lock

a less restrictive type of lock that allows

concurrent transactions to access different rows of the

same table, even if the rows are on the same page.

field level lock

a lock that allows concurrent transactions

to access the same row, but different fields; this lock is the

most flexible, but it incurs a significant overhead cost.

what are the different type of locks or modes

Binary Lock, Shared/Exclusive

Binary Lock

A lock that has only two states: locked and unlocked. If a data item is locked by a transaction, no other transaction can use that data item.

exclusive lock

a lock issued when a transaction requests

permission to update a data item and no locks are held on

that item by another transaction

shared lock

a lock issued when a transaction requests

permission to read data and no exclusive locks are held on the data by another transaction.

deadlock

a condition in which two or more transactions

wait indefinitely for the other to release the lock on a

previously locked data item. Also known as a deadly

embrace.

What are the three ways to prevent deadlocks?

Deadlock prevention, Deadlock detection, Deadlock Avoidance

deadlock prevention

a transaction requesting a lock is aborted if

there is a chance for deadlock.

deadlock detection

the DBMS periodically tests the database for

deadlocks; if one is found, one transaction is aborted.

deadlock avoidance

transactions are required obtain every lock it

will need before it can be executed.

big data

a movement to find new ways to manage large amounts of web-generated data and derive business insight from it, while providing high performance and scalability at a reasonable cost

What characteristics does big data display?

volume, velocity, and variety

volume

a characteristic of Big Data that describes the

quantity of data to be stored

scaling up

a method for dealing with data growth that involves migrating the same structure to more powerful

systems.

scaling out

a method for dealing with data growth that

involves distributing data storage structures across a cluster

of commodity servers.

velocity

a characteristic of Big Data that describes the

speed at which data enters the system and also the speed

at which it is processed

Velocity of processing can be divided into 2 categories:

stream processing, feedback loop processing

Stream Processing

the processing of data inputs in order

to make decisions about which data to keep and which data

to discard before storage

Feedback Loop Processing

Analyzing stored data to produce actionable results.