Lin Alg Midterm #2

33 Terms

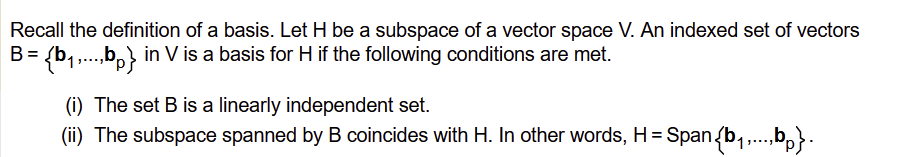

Basis

A set of vectors that serves as a fundamental building block for a vector space. To be a basis, the set must be linearly independent (no vector in the set is a linear combination of the others) and span the space (every vector in the space is a linear combination of the basis vectors).

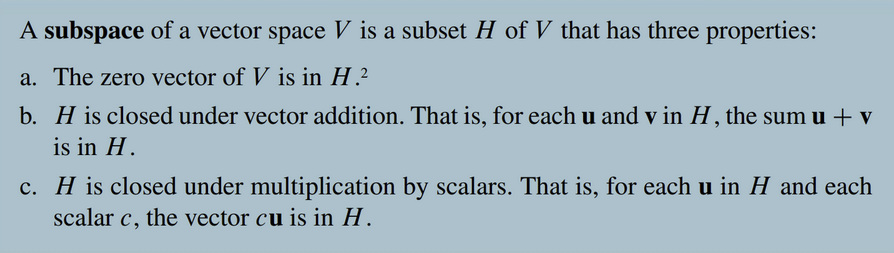

Subspace Definition

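A subspace is a subset of a vector space that is itself a vector space: it contains the zero vector and is closed under vector addition and scalar multiplication.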

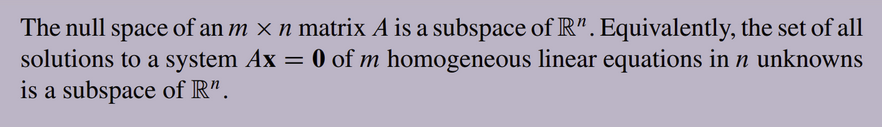

Relation between null space and subspace

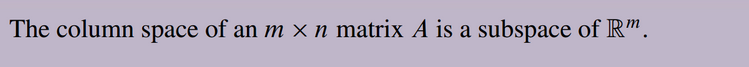

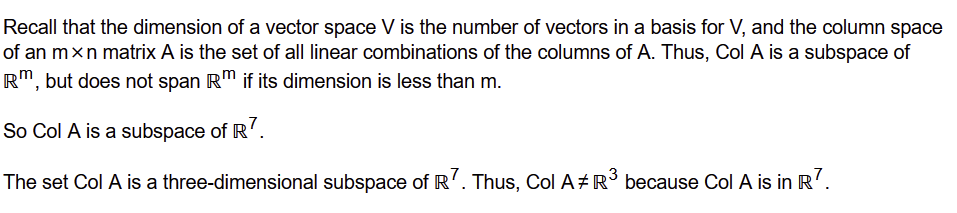

Relation between column space and subspace

Row space

The row space of a matrix is the set of all linear combinations of its row vectors, i.e. the span of its rows. For an m x n matrix it is a subspace of R^n and has the same dimension as the column space (the rank of the matrix). The nonzero rows of a row echelon form (REF) of the matrix form a basis for its row space.
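A minimal sketch of the row space recipe, using only the Python standard library. It row reduces all the way to reduced row echelon form (a special case of REF) and keeps the nonzero rows. The function names `rref` and `row_space_basis` and the example matrix are made up for illustration.

```python
from fractions import Fraction

def rref(rows):
    """Return the reduced row echelon form of a matrix given as a list of rows."""
    m = [[Fraction(x) for x in row] for row in rows]
    n_rows = len(m)
    n_cols = len(m[0]) if m else 0
    pivot_row = 0
    for col in range(n_cols):
        if pivot_row == n_rows:
            break
        # Find a row at or below pivot_row with a nonzero entry in this column.
        pivot = next((r for r in range(pivot_row, n_rows) if m[r][col] != 0), None)
        if pivot is None:
            continue
        m[pivot_row], m[pivot] = m[pivot], m[pivot_row]
        # Scale the pivot row so the pivot entry becomes 1.
        scale = m[pivot_row][col]
        m[pivot_row] = [x / scale for x in m[pivot_row]]
        # Clear this column in every other row.
        for r in range(n_rows):
            if r != pivot_row and m[r][col] != 0:
                factor = m[r][col]
                m[r] = [a - factor * b for a, b in zip(m[r], m[pivot_row])]
        pivot_row += 1
    return m

def row_space_basis(rows):
    """The nonzero rows of the echelon form are a basis for the row space."""
    return [row for row in rref(rows) if any(x != 0 for x in row)]

# Example matrix (illustrative): the second row is twice the first.
A = [[1, 2, 3],
     [2, 4, 6],
     [1, 0, 1]]
basis = row_space_basis(A)
rank = len(basis)  # dimension of the row space = rank of A
```

Exact fractions are used instead of floats so that "nonzero row" is not affected by rounding.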

The span of a matrix's columns is the same as its column space

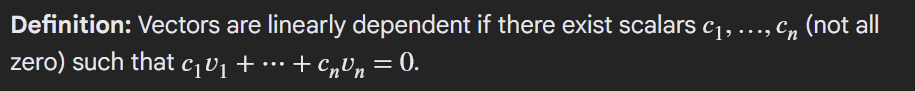

linear dependence relation

Dimension of a subspace

Number of vectors in the basis of the subspace

HW #21

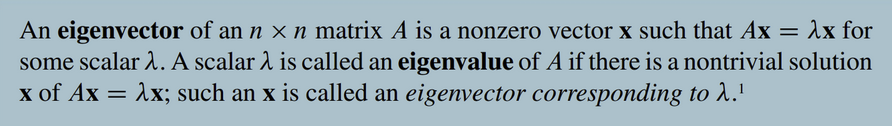

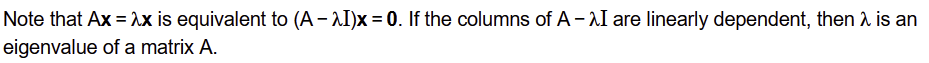

Eigenvector and eigenvalue

Eigenspace

The eigenspace for an eigenvalue λ is the set of all solutions x to the equation

(A - λI)x = 0

In other words, the eigenspace is the null space of (A - λI)

A basis for the eigenspace can be found by solving the equation, which gives a largest possible set of linearly independent eigenvectors for that eigenvalue
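A rough sketch of that computation in standard-library Python: form A - λI, row reduce it, and read one basis vector off each free variable. The helper names `null_space_basis` and `eigenspace_basis` and the example matrix are assumptions for illustration, not something from the course.

```python
from fractions import Fraction

def null_space_basis(rows):
    """Basis for {x : Mx = 0}, found by row reducing M and using the free variables."""
    m = [[Fraction(x) for x in row] for row in rows]
    n_rows, n_cols = len(m), len(m[0])
    pivot_cols = []
    pivot_row = 0
    for col in range(n_cols):
        if pivot_row == n_rows:
            break
        pivot = next((r for r in range(pivot_row, n_rows) if m[r][col] != 0), None)
        if pivot is None:
            continue  # no pivot here, so this column is a free variable
        m[pivot_row], m[pivot] = m[pivot], m[pivot_row]
        scale = m[pivot_row][col]
        m[pivot_row] = [x / scale for x in m[pivot_row]]
        for r in range(n_rows):
            if r != pivot_row and m[r][col] != 0:
                factor = m[r][col]
                m[r] = [a - factor * b for a, b in zip(m[r], m[pivot_row])]
        pivot_cols.append(col)
        pivot_row += 1
    basis = []
    for free in (c for c in range(n_cols) if c not in pivot_cols):
        # Set this free variable to 1, the other free variables to 0,
        # and solve for the pivot variables.
        vec = [Fraction(0)] * n_cols
        vec[free] = Fraction(1)
        for row_index, pc in enumerate(pivot_cols):
            vec[pc] = -m[row_index][free]
        basis.append(vec)
    return basis

def eigenspace_basis(A, lam):
    """Basis for the null space of (A - lam*I); empty if lam is not an eigenvalue."""
    n = len(A)
    shifted = [[Fraction(A[i][j]) - (lam if i == j else 0) for j in range(n)]
               for i in range(n)]
    return null_space_basis(shifted)

# Example matrix (illustrative) with eigenvalues 2 and 5.
A = [[4, 1],
     [2, 3]]
basis_for_5 = eigenspace_basis(A, 5)
```

The number of vectors returned is the dimension of the eigenspace. If the list is empty, the only solution is the zero vector, so the number tried was not an eigenvalue.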

Determine eigenvectors from eigenvalue

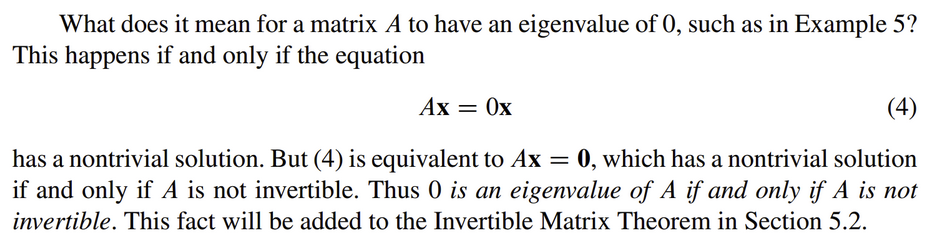

Conditions for eigenvalue of 0

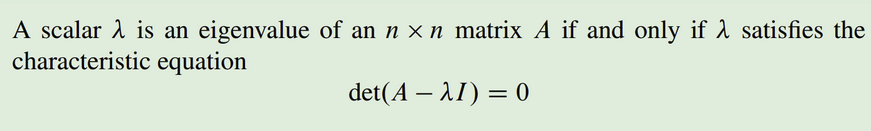

Characteristic equation

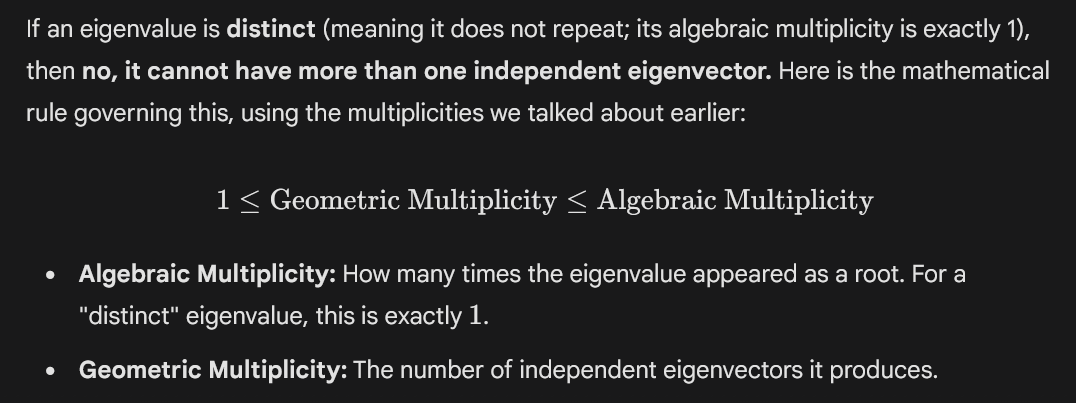

Multiplicity (algebraic) of an eigenvalue

The number of times the eigenvalue occurs as a factor in the characteristic polynomial.

(λ - 2)^2 means the eigenvalue 2 has multiplicity 2

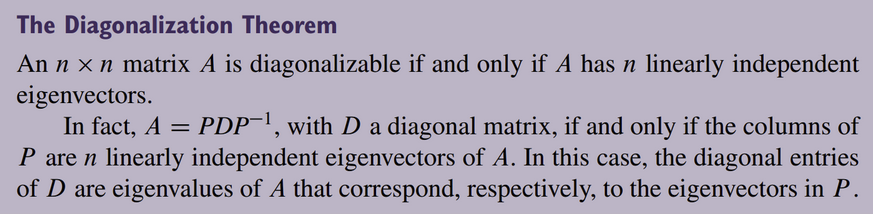

Diagonalization

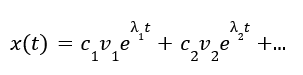

General solution of x’=Ax

Spanning Set

A collection of vectors that can generate any vector in a vector space (or a subspace) through linear combinations

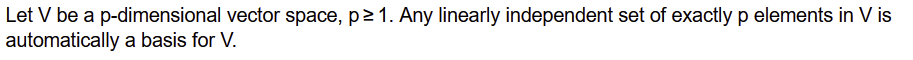

Fact about linearly independent set of vectors

Every linearly independent set of vectors in R^n consists of at most n vectors.

Explanation: “Think of R^n as a space with exactly n distinct dimensions. Every time you add a linearly independent vector to your set, you are pointing in a brand new, mathematically unique direction that cannot be reached by combining the previous vectors.”

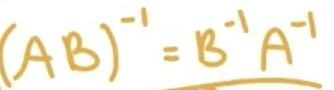

Similar matrices

Definition: A is similar to B if there exists an invertible matrix P such that A = PBP^-1 or equivalently B = P^-1AP.

Similar matrices have same eigenvalues and characteristic polynomial. Matrices with the same eigenvalues are not always similar.

Similar matrices generally do not have the same eigenvectors
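A short derivation of why the characteristic polynomials agree while the eigenvectors usually differ, using the same A, B and P as in the definition (x is an assumed name for an eigenvector of B):

```latex
\[
\det(A - \lambda I)
  = \det\!\big(PBP^{-1} - \lambda PP^{-1}\big)
  = \det\!\big(P(B - \lambda I)P^{-1}\big)
  = \det(P)\,\det(B - \lambda I)\,\det(P)^{-1}
  = \det(B - \lambda I)
\]
\[
Bx = \lambda x
  \;\Longrightarrow\;
A(Px) = PBP^{-1}Px = PBx = \lambda (Px)
\]
```

So an eigenvector x of B corresponds to the eigenvector Px of A: same eigenvalue, but generally a different vector.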

Similar matrices and diagonalizability

If A and B are similar, and A is diagonalizable, then B is also diagonalizable.
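One way to see this, assuming A = QDQ^-1 with D diagonal (Q and D are names introduced here) and B = P^-1AP:

```latex
\[
B = P^{-1}AP
  = P^{-1}\big(QDQ^{-1}\big)P
  = \big(P^{-1}Q\big)\,D\,\big(P^{-1}Q\big)^{-1}
\]
```

The matrix P^-1Q is invertible because it is a product of invertible matrices, so B is diagonalized by it with the same diagonal matrix D.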

Way to find general real solution

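Multiply the complex eigenvector by cos t + i sin t (from Euler's formula), then take the real and imaginary parts of the result as two real solutions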
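Written out in general form, as a sketch under the assumption that A is a real 2 x 2 matrix with a complex eigenvalue λ = a + bi (b ≠ 0) and a corresponding complex eigenvector v; the symbols a, b, v, y_1, y_2, c_1, c_2 are introduced here:

```latex
\[
x(t) = v\,e^{(a+bi)t} = e^{at}\big(\cos bt + i\sin bt\big)\,v
\]
\[
y_1(t) = \operatorname{Re} x(t) = e^{at}\big[(\operatorname{Re} v)\cos bt - (\operatorname{Im} v)\sin bt\big]
\]
\[
y_2(t) = \operatorname{Im} x(t) = e^{at}\big[(\operatorname{Re} v)\sin bt + (\operatorname{Im} v)\cos bt\big]
\]
\[
x_{\text{real}}(t) = c_1\,y_1(t) + c_2\,y_2(t)
\]
```

When a = 0 and b = 1 the factor is exactly cos t + i sin t.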

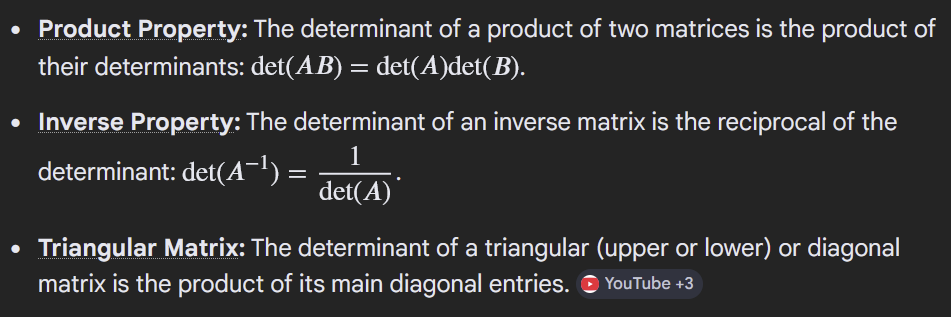

Properties of determinant

The determinant of a matrix A and its transpose A^T are equal

Zero vector in eigenspace and eigenvector

The zero vector technically satisfies Ax=λx for any matrix A and any λ, but it cannot be an eigenvector: eigenvectors are nonzero by definition (otherwise every number would be an eigenvalue).

The zero vector, however, IS in every eigenspace of a matrix.

An eigenspace is a subspace of the vector space on which the matrix A acts.

Algebraic Multiplicity and Geometric Multiplicity

How diagonalization relates to multiplicities

For a matrix to be diagonalizable, the algebraic multiplicity must equal the geometric multiplicity for every eigenvalue.

If an n x n matrix has n distinct eigenvalues, then it is diagonalizable.
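A small worked example of the multiplicity condition failing (the matrix is chosen here for illustration):

```latex
\[
A = \begin{pmatrix} 2 & 1 \\ 0 & 2 \end{pmatrix},
\qquad
\det(A - \lambda I) = (\lambda - 2)^2
\quad\Rightarrow\quad \text{algebraic multiplicity of } \lambda = 2 \text{ is } 2
\]
\[
A - 2I = \begin{pmatrix} 0 & 1 \\ 0 & 0 \end{pmatrix},
\qquad
\operatorname{Nul}(A - 2I) = \operatorname{span}\left\{ \begin{pmatrix} 1 \\ 0 \end{pmatrix} \right\}
\quad\Rightarrow\quad \text{geometric multiplicity is } 1
\]
```

Since 1 < 2, there are not enough linearly independent eigenvectors to build an invertible P, so this A is not diagonalizable.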

Transformation matrix relative to a basis

Spring 2023 Exam #2 Q8

Column space (Also called the range)

Range of a linear transformation

B-matrix of a linear transformation T (same as: Find a matrix for T relative to the basis { … })

Behavior at origin for differential equation problems

Real Eigenvalues (No imaginary part):

Repeller: Both eigenvalues are positive (pushing outward).

Attractor: Both eigenvalues are negative (pulling inward).

Saddle: One is positive, one is negative (pushing one way, pulling another).

Complex Eigenvalues (α ± βi):

Spiral away from origin: Real part (α) is positive.

Spiral toward origin: Real part (α) is negative.

Ellipses: Real part (α) is exactly 0.
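The rules above can be sketched as a small lookup function. This is only an illustration for a 2 x 2 system x' = Ax; the function name `classify_origin` is made up, and the zero-eigenvalue case, which these notes do not cover, is flagged rather than guessed.

```python
def classify_origin(lam1, lam2):
    """Classify the origin for x' = Ax (A is 2x2) from its two eigenvalues."""
    lam1, lam2 = complex(lam1), complex(lam2)

    # Complex eigenvalues alpha +/- beta*i: only the real part alpha matters.
    if lam1.imag != 0 or lam2.imag != 0:
        alpha = lam1.real
        if alpha > 0:
            return "spiral away from origin"
        if alpha < 0:
            return "spiral toward origin"
        return "ellipses"

    # Real eigenvalues: look at the signs.
    a, b = lam1.real, lam2.real
    if a > 0 and b > 0:
        return "repeller"
    if a < 0 and b < 0:
        return "attractor"
    if a * b < 0:
        return "saddle"
    return "not covered by these notes (zero eigenvalue)"

print(classify_origin(2, 3))
print(classify_origin(1, -1))
print(classify_origin(complex(-2, 3), complex(-2, -3)))
```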