Comp 210 Final UNC

1/72

There's no tags or description

Looks like no tags are added yet.

Name | Mastery | Learn | Test | Matching | Spaced | Call with Kai |

|---|

No analytics yet

Send a link to your students to track their progress

73 Terms

What is another name for a depth first traversal?

Pre-order traversal

What is the worst case time complexity of searching, inserting, or deleting* in a BST?

O(log n)

For deleting*, finding the element to replace it with (the smallest on right or largest on left) takes O(log n)

What traversals do you use for a stack?

Pre-order, post-order, and In-order traversals

What traversals do you use for a queue?

Breadth-first traversal

What is the complexity of quick-sort?

worst - O(n^2). When pivots aren't in the center (when it's already sorted) pivot is always in one extreme end so you are making it smaller by one only and are not dividing by 2 every time but only one

average - O(n log n)

best - O(n log n)

(b/c each iteration goes down by 1/2) so first is O(n) but next is 2 iterations of O(n/2) and then 4 of O(n/4)

stack space: O(log n)

Note: O(n log n) means the first iteration in O(n) but then the next ones are O(log n) b/c they are dividing by 2 for each iteration hence O(n log n)

What is a con of a hash table?

Takes up a lot of space

What is the average and worst case complexity of a search function on a hash table?

Avg - O(1)

Worst - O(n). Collisions = (in chaining have to search through linked list one by one) in linear probing, have to follow each one it checked before it found an empty index so also O(n).

What is the average and worst case complexity of an insert function on a hash table?

avg - O(1)

worst - O(n) (collisions)

If chaining is used, worst is O(1), because you can just insert at the beginning of the linked lists for collisions

In Linear probing, it's O(n)

What is the time complexity of inserting, searching, and *deleting for chaining and linear probing with a low probability of collisions (without resizing)?

Chaining: Insert = O(1), search = O(1), *delete = O(1)

Linear Probing: Insert = O(1), search = O(1), *delete = O(1)

What is the time complexity of inserting, searching, and *deleting for chaining and linear probing with a high probability of collisions (without resizing)?

Chaining: Insert = O(1) bc you can insert at beginning of linked list, search = O(n), *delete = O(1)

Linear Probing: Insert = O(m), search = O(m), *delete = O(1)

where m is the size of the array.

Chaining is O(n) for search because you are searching the n amount of elements in the array list as opposed to every single element in the array.

Note: make m (size of table/array) a prime number to minimize collisions

What does the performance of a hash table depend on?

Quality of the hash table

Load = # elements in table / table size =N/M

What is the average case time complexity of inserting, searching, and *deleting for chaining and probing with resizing?

O(1) for everything

What is the worst case time complexity of inserting, searching, and *deleting for chaining and probing with resizing?

Chaining and probing: Insert = O(n) bc it inserting it could make it necessary to resize, search = O(1) just trust, *delete = O(n) b/c deleting could also make it necessary to resize

What is the complexity of a delete* function on a hash table?

Avg and worst is O(1)

What are the solutions to collisions in hash tables/functions?

-Chaining. (uses linked lists for collisions)

-Linear Probing (when collision happens, goes down one at a time until it finds an empty index, then puts it in there).

How is chaining better than linear probing?

In an insert method, chaining is O(1), because you can just insert at the beginning of the linked lists for collisions

In Linear probing, the worst case is O(n) to insert for collisions bc it has to search for one by one for an open space.

Eventually, linear probing is going to run out of space but chaining can store excess in linked lists.

Double hashing consists of

Linear (goes up by one) and quadratic (goes up by i^2) probing

MaxHeap vs MinHeap

MaxHeap - parent is larger than its children

MinHeap - parent is smaller than its children

Understand both Djiksta's and Kuskal's algorithm

How do you enqueue in a Maxheap?

To Enqueue in a Maxheap: Insert in heap (tree) in last place (in breadth-first) and then just use bubbleup to get it in right place

How do you dequeue in a Maxheap?

To Dequeue in a Maxheap: takes last (in breadth-first) and the replace (the node you are gettin rid of) with last node and then bubbledown (to get in right place)

Complexity of Heap (enqueue/dequeue)

Worst:

Enqueue = O(h) [ which is O(log n) ]

Dequeue: O(h) [ which is O(log n) ]

unless you can't access last node (breadth-first) then it'd be O(n) bc you have to find that node

Consider the Queue ADT (Abstract Data Type), implemented as a Linked List, what is the worst-case time complexity of the Dequeue operation?

The element at the front of the queue (to be dequeued) is directly accessible via the head pointer. There's no need to search for it, so this step is O(1).

To actually dequeue, you just switch the pointers so obviously O(1) too.

In Kruskal's algorithm, In a spanning tree with n vertices, what are the amount of edges you need to connect? If 9 vertices, when could you stop?

(n-1)

When you get the 8th edge in your MST

Walk through a breadth-first traversal

Queue:

All you do dequeue (pop) then enqueue (push) the children, from left to right

Walk through a pre-order traversal

Start popping at root (bc before we went down)

- Pop first, then push children

Walk through a post-order traversal

(start popping at bottom) bc after we went down

- Push first, then when no children or children already popped, then you pop

(Basically you don't pop until the node does not have children or children already popped)

Walk through an Inorder traversal

It literally goes from the vertices from left to right

When you're pushing/enqueueing children, how do stacks and queues differ?

For a stack, the right child goes underneath and the left goes on top.

For a queue, the right child goes to the right and left to left

But for both, the left is child is always first

Pros and cons for ArrayList

Pros: conserve on space

Cons: Time complexity, every once in a while will have to make a new arrayList in the background

What would be the Time Complexity of Stack Push and Pop using ArrayLists?

Enqueue: Worst = O(n), Avg = O(1)

Dequeue: Worst = O(n), avg = O(1)

b/c of resizing

What are the conditions of a Heap?

-For maxHeap, parent bigger than children and for minHeap vice-versa

-The height of sub-trees below can differ by at most 1

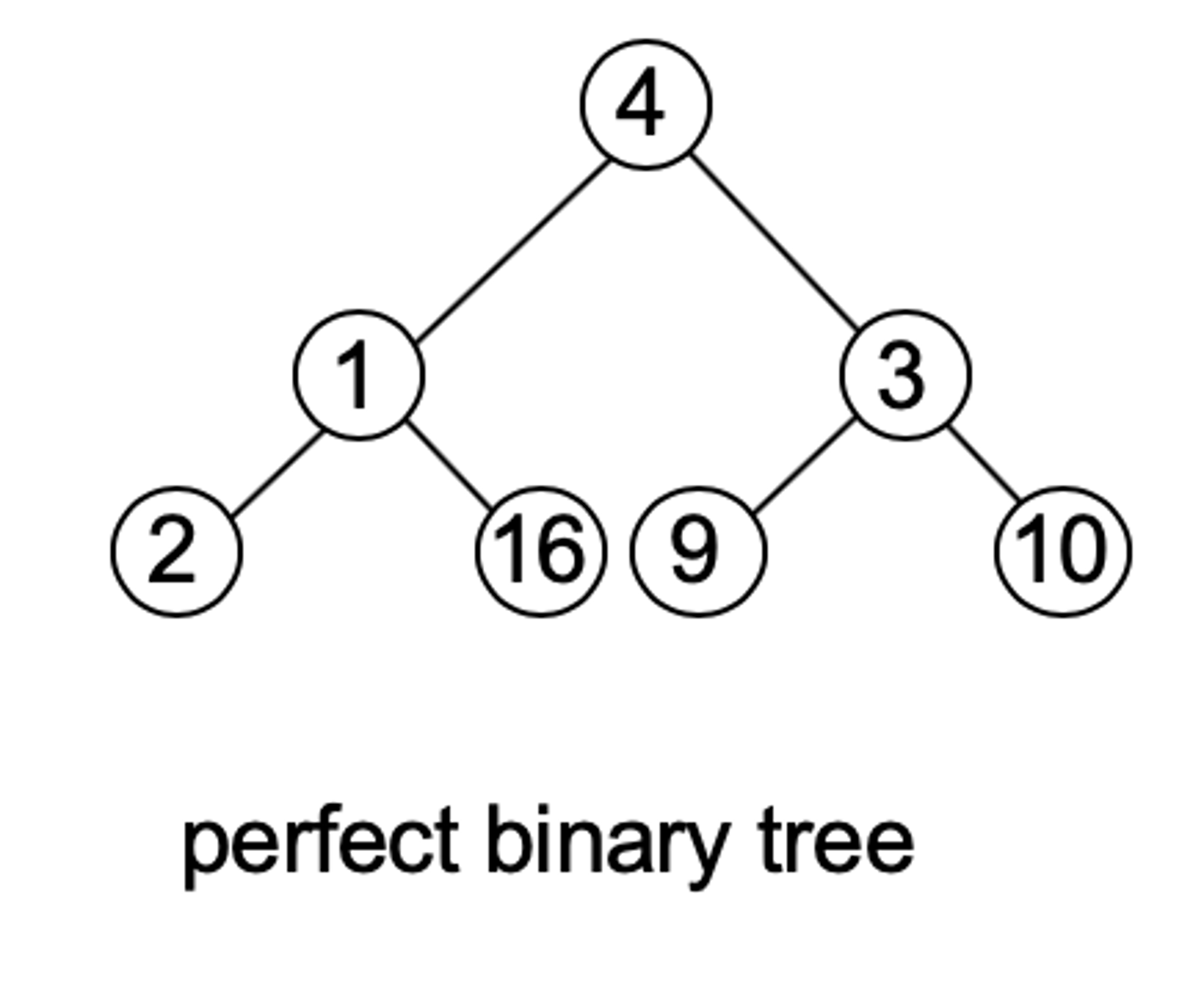

What is a perfect binary tree

(All leaf nodes are at same level; all other nodes have 2 children)

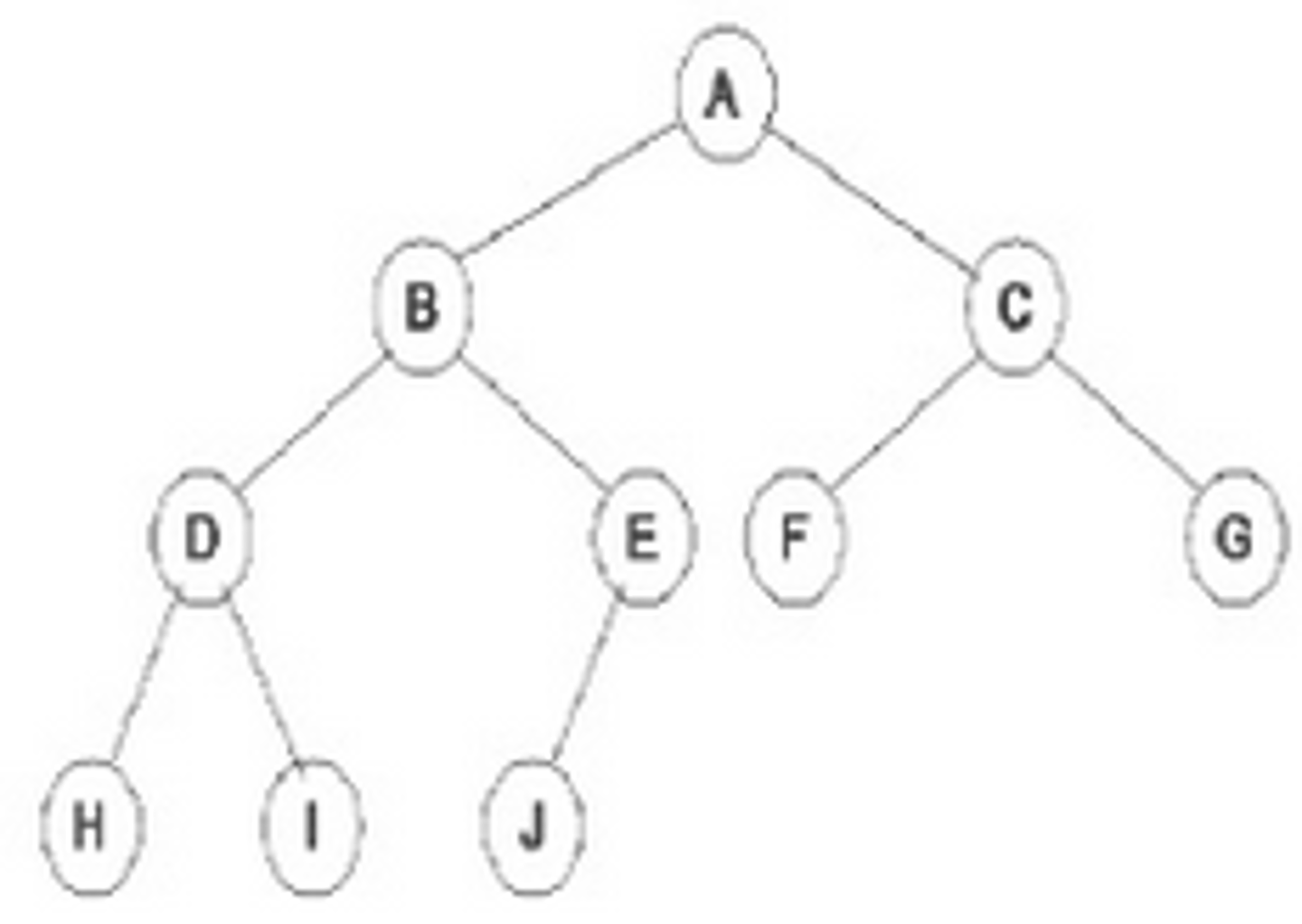

What is a full binary tree

(Every node has either 0 or 2 children)

What is a complete binary tree

(Every level, except possibly the last, is completely filled, and all nodes are as far left as possible)

What is the complexity of O(h) in a balanced binary tree?

order of height which is O(log n)

(in binary trees)

What is the worst case time complexity for an unbalanced binary tree?

It is O(h) and the worst case of O(h) is O(n)

(worst case would be if the tree takes the form of a linear chain of nodes)

What is the average time complexity of an insert operation in a binary heap that is implemented as an ArrayList of size N?

Would insert at end and then bubbleup to right position. Bubble up takes O(h) which equals

O(log n)

What is the worst-case complexity of an insert operation in a Heap with N elements that is stored as an ArrayList? Briefly explain why.

O(N) - since an insert may occasionally result in resizing of the ArrayList.

What would the average complexity of a search be in a Heap with N elements? Briefly explain your response?

O(N) - since a Heap is unordered. This would be regardless of whether the heap

used an ArrayList or a tree structure based on pointers.

How to find the left child, right child, and parent of node n with an equation in a binary heap

left child: 2( i ) + 1

right: 2( i ) + 2

parent: (i - 1) / 2

where i is the index of node n

What is the worst-case complexity of a search in a Binary Search Tree with N elements? Briefly explain why.

O(N) - since BSTs are not necessarily balanced and the worst case would be when the tree takes the form of a linear chain of nodes

In a heap, what do you do (what are the "commands")

Enqueue and dequeue

Like to use arrays or ArrayLists

WHen would you rather have a BST rather than a heap and vice-versa?

Heap: Enqueue and dequeue in O(log n)

BST: Can search in O(log n)

What are AVL trees?

They area type of self-balancing BSTs

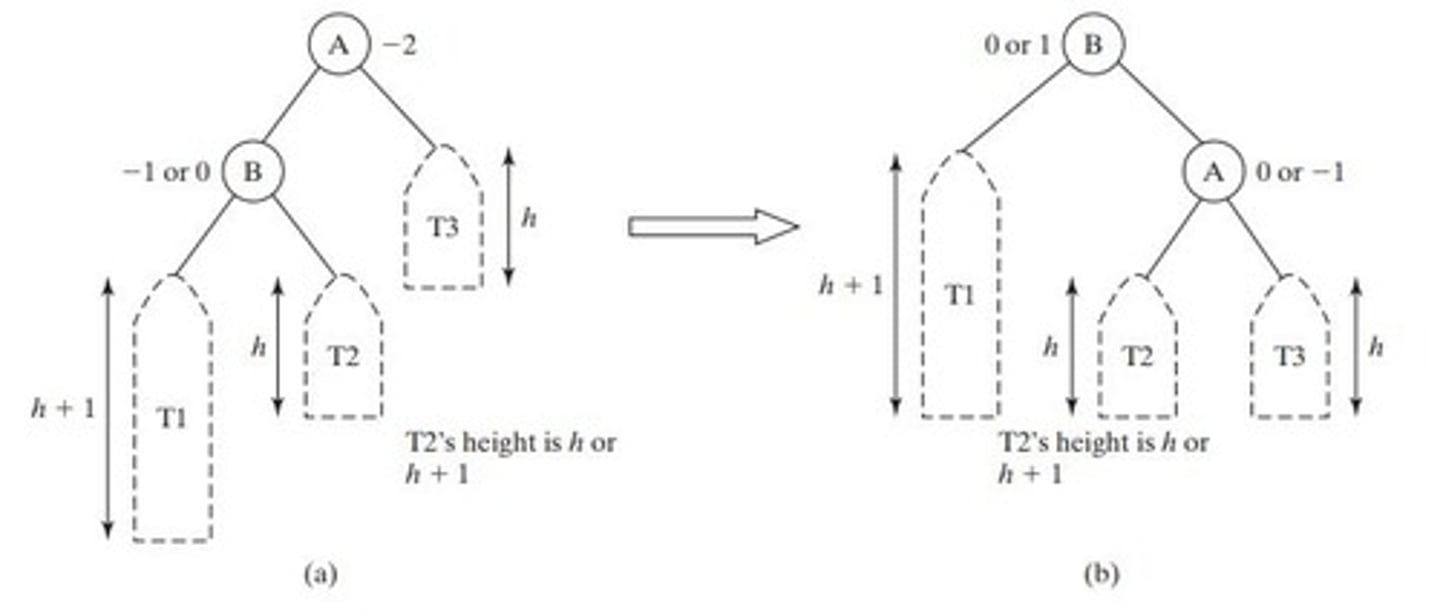

What do LL, RR, LR, and RL refer to in BSTs?

Refer to the two nodes underneath the node where the imbalance happens (on the side that is too long)

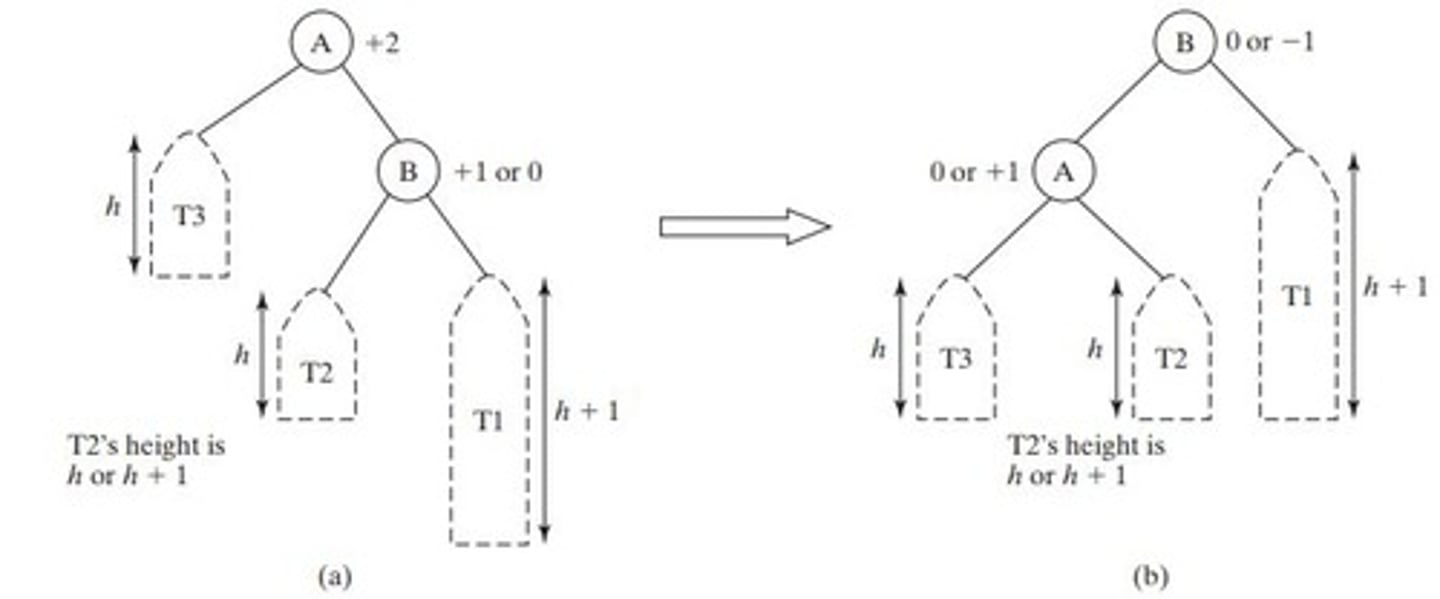

How do you solve a RR imbalance on an AVL?

Rotation to the left, and the orphaned node goes to the left side (right child)

How do you solve a LL imbalance on an AVL?

Rotation to the right, and the orphaned node goes to the right side (left child)

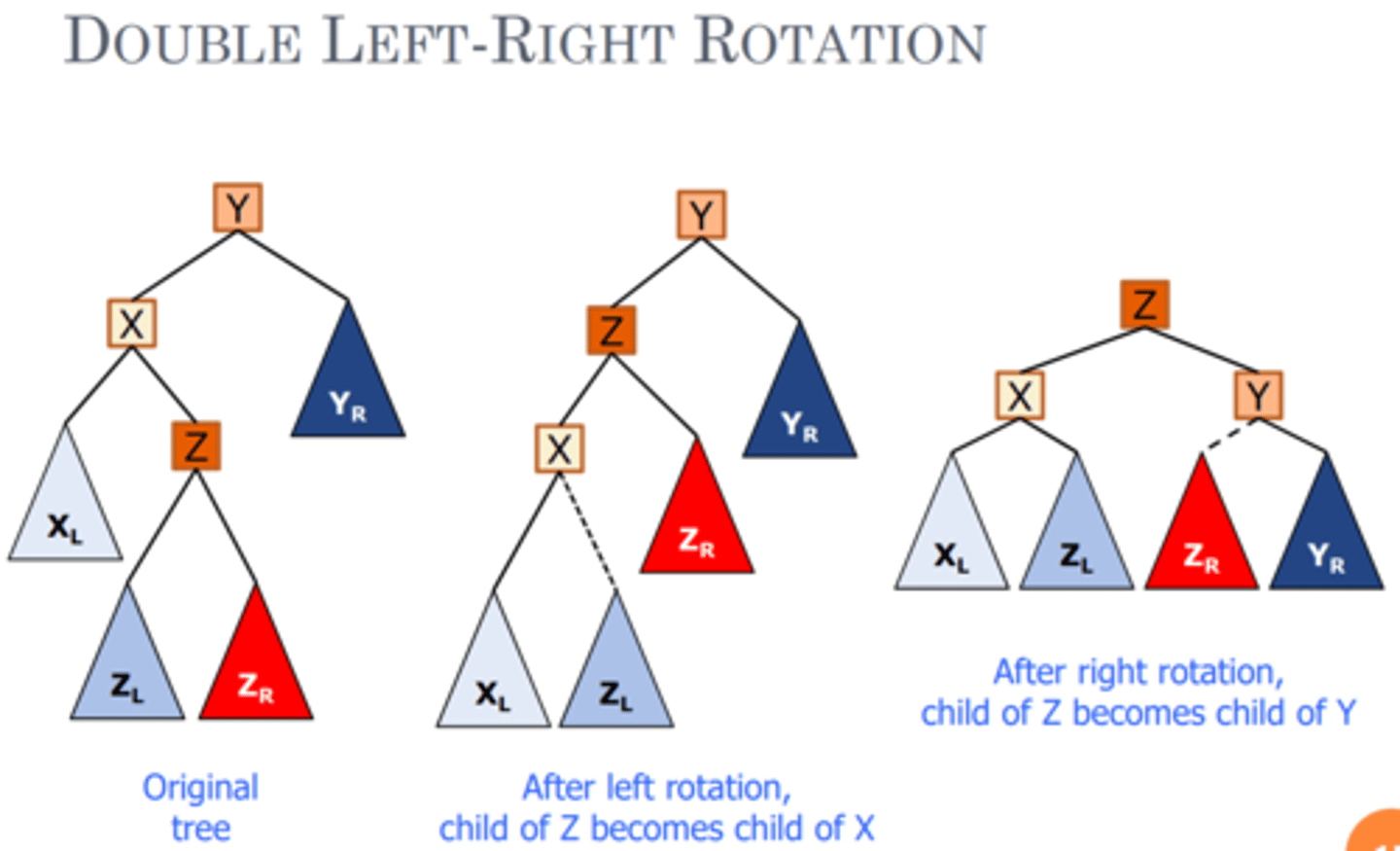

How do you solve a LR imbalance on an AVL?

- R node rotates left (L node goes down) and R's left child becomes L's right

- Then it's a LL imbalance which we know how to do (Rotation to the right at root, and the orphaned node goes to the right side (left child)

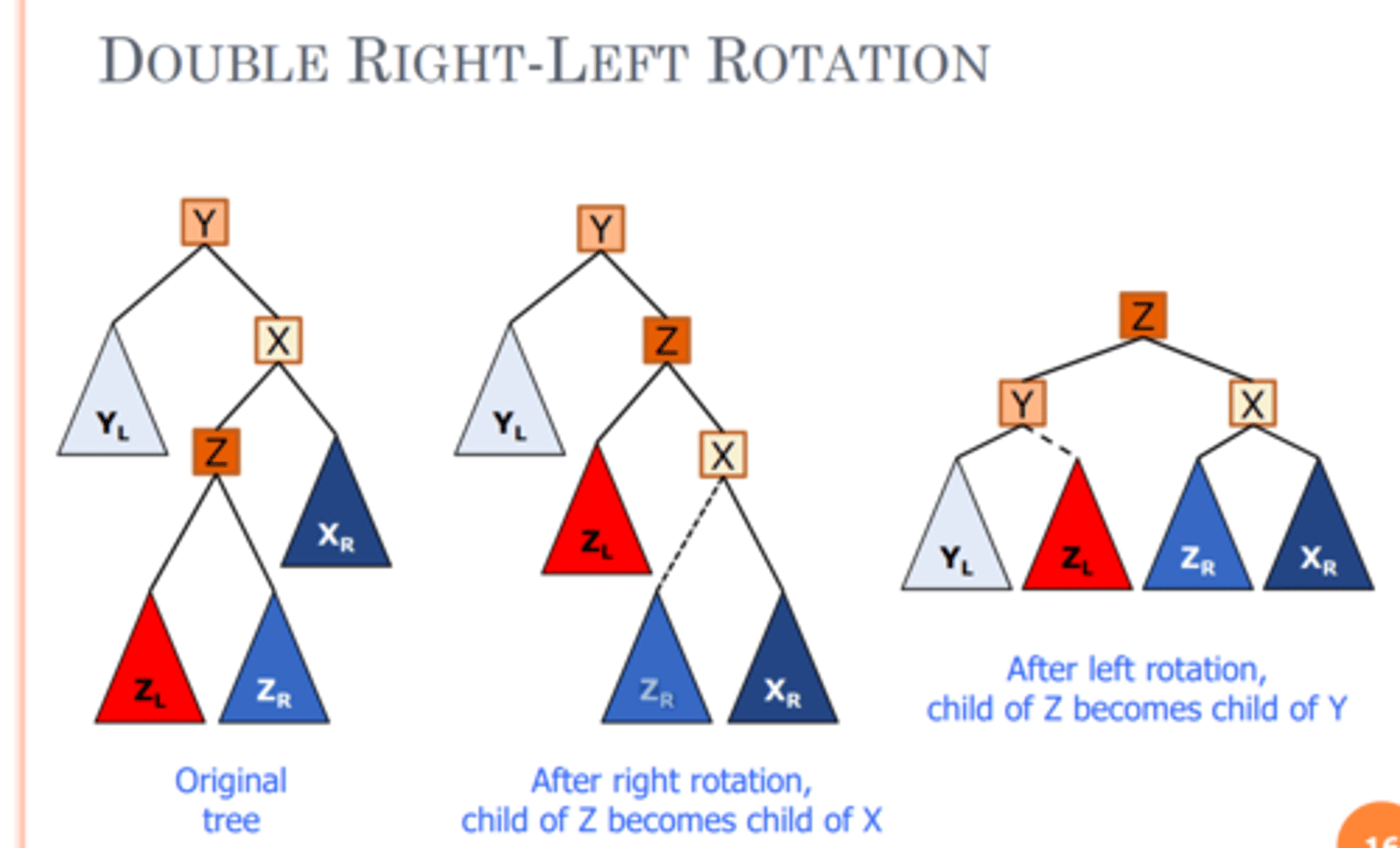

How do you solve a RL imbalance on an AVL?

L node rotates to the right (R goes down) and L's right child becomes R's left

- Then it's an RR imbalance which we know how to do

(Rotation to the right at root, and the orphaned node from the right goes

What is the AVL insertion complexity?

- Have to insert it in right place which is O(log n)

- Have to check the imbalances from bottom all the way up to the root (where insertion is taking place) and that is O(h).

O(h) and the height is always proportional to O(log n) for BSTs if the BST is balanced so O(log n)

- Then have to balance it which is only O(1)

So final answer is O(log n)

What would be the AVL deletion complexity?

- Have to search for it which is O(log n)

- Find successor which is O(log n)

- Replace which is O(1)

- Balance which is O(1)

So answer is O(log n)

What are heaps only applicable for?

For priority queues

What are RBTs

A type of self balancing BSTs

What are the conditions for RBTs?

- The root is always black

- Every path from the root to the bottom of the tree (null leaves) encounters the same number of black nodes.

- No adjacent (Parent/Child) red nodes

What are the rules/cases of insertion for RBTs?

- Rule 0: Insert new value as a red node

- Case 1: If the new node is the root, make it black

- Case 2: If parent of new node is black, no further steps

- Case 3: If both parent and pibling is red, make parent and pibling black

Make grandparent red

Then restart steps with grandparent being new node

- Case 4: If parent is red and pibling is black / empty, rotate (RR, LL, RL, or LR)

Color children red while new node is black.

Root of rotated subtree is new node

How do RBTs and AVLs compare?

- They both have O(log n) for insert, delete, search

- However, AVL trees must rebalance (i.e., rotate) more often

Walk through selection sort

Starts at beginning of a list of numbers and assigns that as the smallest number. Then goes through and reassigns the smallest number to the next and next number if it is smaller. Once it reaches the end and has the real smallest number, it swaps the first index with the smallest number. Then it assigns the smallest number to the next index and repeats the process.

Simpler: [It goes through a list of numbers (unsorted array) and finds the smallest element. Then it swaps the smallest element with leftmost unsorted position. Repeats process with remaining array.]

![<p>Starts at beginning of a list of numbers and assigns that as the smallest number. Then goes through and reassigns the smallest number to the next and next number if it is smaller. Once it reaches the end and has the real smallest number, it swaps the first index with the smallest number. Then it assigns the smallest number to the next index and repeats the process.</p><p>Simpler: [It goes through a list of numbers (unsorted array) and finds the smallest element. Then it swaps the smallest element with leftmost unsorted position. Repeats process with remaining array.]</p>](https://knowt-user-attachments.s3.amazonaws.com/56b6dfc8-34fa-49d2-af8f-e5ec6f7ec70d.jpg)

What is the best, avg, worst complexity of selection sort?

O(n^2)

[ The first line is O(n), then the next line is O(n-1), then the next O(n-2), etc. which comes out to O(n^2). ]

Best - even if it is already sorted, it still goes through the algorithm.

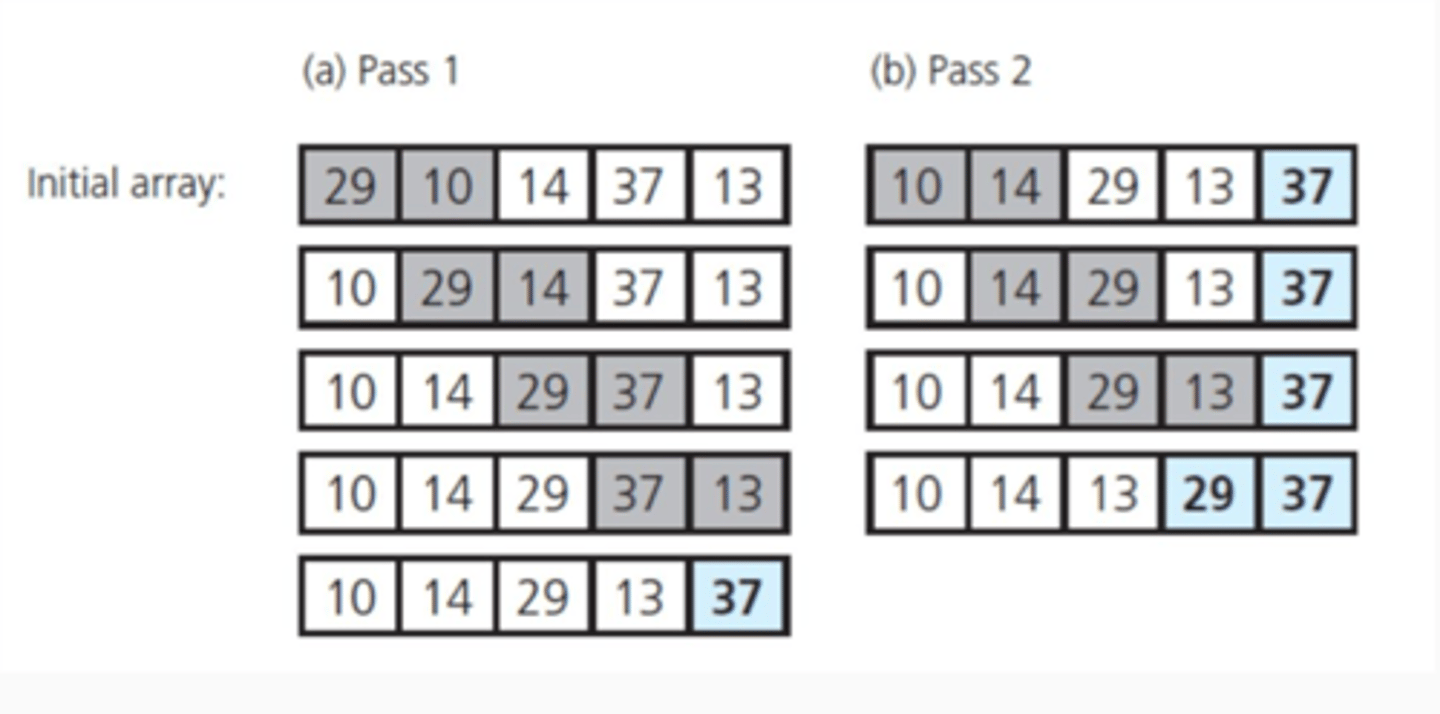

Walk through bubble sort

Start on left of an array. Checks to see if first index is greater than nxt. If so, swap. Keep going until it's not. Then repeat from beginning.

What is the best, avg, worst complexity of bubble sort?

O(n^2)

Best - O(n) if no swaps noted, then it is already sorted.

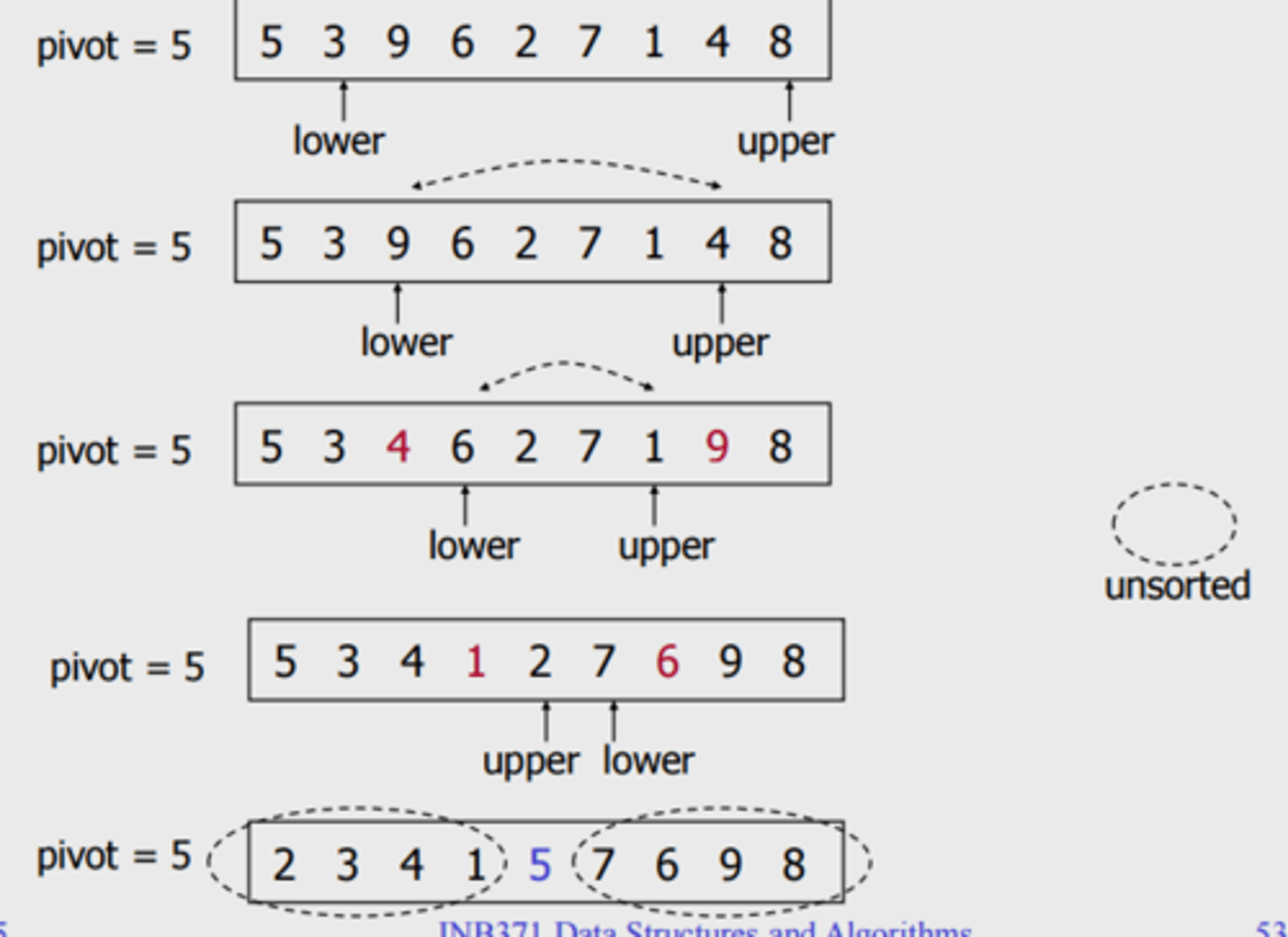

Walk through quick sort

- The 1st element is the Pivot

- Move all elements smaller than pivot to the left side by swapping (with the second and third and so on).

- Then swap Pivot farthest right element that was previously swapped (Now Pivot is in correct position)

- Apply same algorithm on each of the two halves

What is the best, avg, worst complexity of quick sort?

Best and avg = O(n log n) bc first iteration is O(n) but they are halving/splitting up into two different ones.

Worst case = O(n^2). The worst case would be it's already sorted and the pivot doesn't move. So you go through all the way the first time and nothing swapped ( O(n) ). Then pivot is next number and it goes through again ( O(n -1) ). It keeps going until the pivot is the last number in the sequence and with O(n) happening n times, it is O(n^2).

Pros and cons of quick sort

Avg case is quicker than bubble and selection

However, it takes up O(log n) space instead of O(1)

What is the complexity of building a heap vs a balanced BST?

Heap - O(n) bc of heapify

Bal. BST - O(n log n) bc Each insertion is O(log n) and there are O(n) elements to be inserted

Walk through Heap Sort

Maxheap: Replace A[1] (root) with A[size] (last in breadth first).

Size --

Then bubble down the root

Repeat

What is the best, avg, worst complexity of heap sort

O(n log n) for everything

Walk through Disjkstra's algorithm

Let PQ = minHeap Priority Queue of all vertices.

For each v in V, set dist(v) = infinity, except dist(s)=0; add v to PQ based on estimated distance dist(V).

While PQ is not empty:

i. u=dequeue(min Heap). (Root is swapped w last element and u (root) is off heap). Then bubbledown. Mark u as visited.

ii. For each unvisited neighbor v of u:

- Relax(u, v),

- Update dist(v) if required in PQ (Bubble up)

- and note u as the Previous Node(PN).

Note: relax(u, v) is when it compares the d(u) + the edge (distance) between u and v TO d(v).

Time complexity of Disjkstra's algorithm

O(m log n)

m being the edges

How do you build a heap?

Start with parent of last node. Bubble down. Then go to next's parent

What do collisions depend on?

Load factor

(N/M) where n is the number of elements and m is the size of the array

How many connected edges are there in an MST?

n-1. where n is the number of vertices

What is the maximum number of edges a dense graph could have?

n(n-1) / 2. where n is the number of vertices