[2] exam 3 Sensation and Perception - Hearing in the Environment


10 Terms

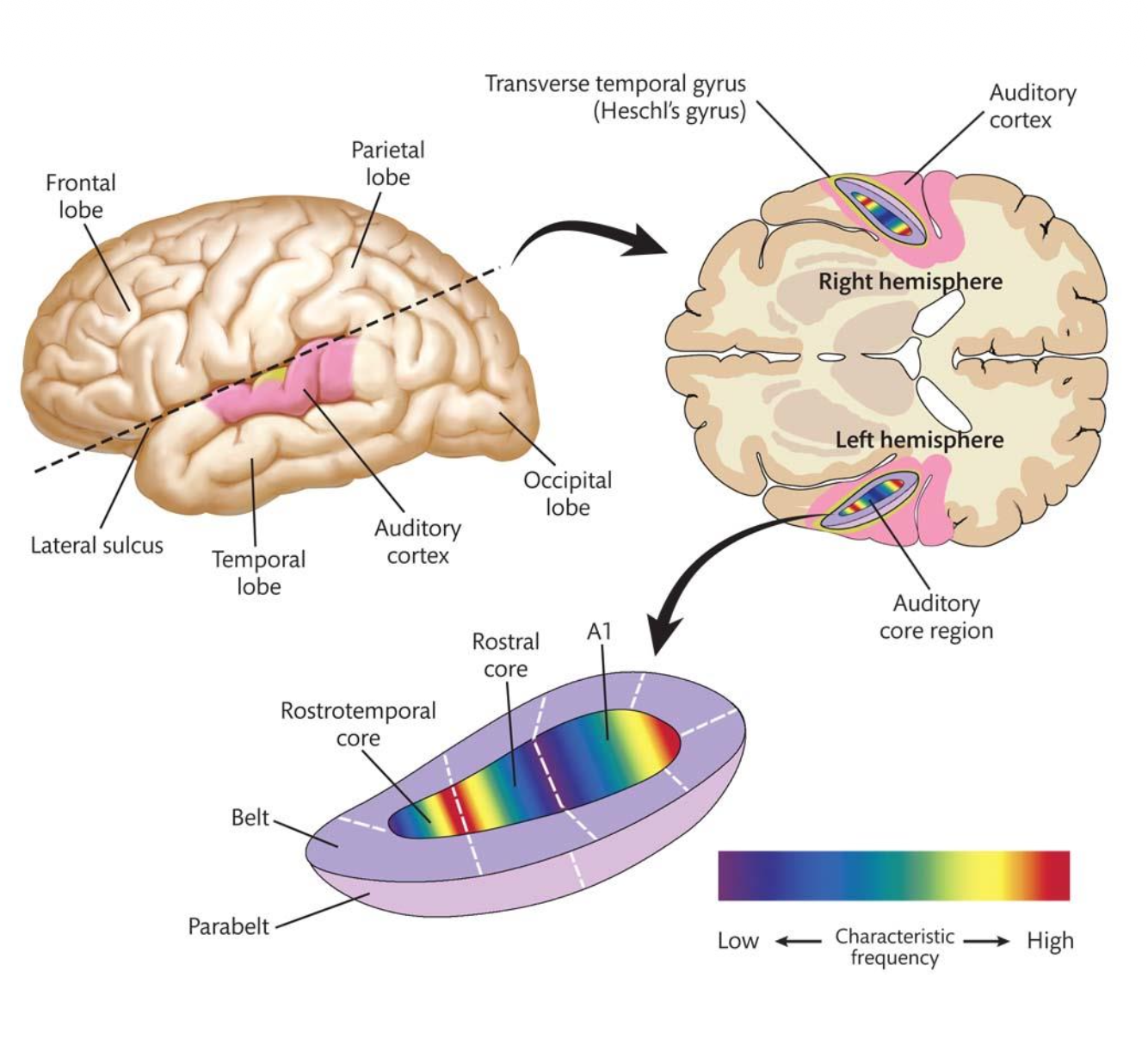

Physical Cortical Auditory Pathways – Review

Auditory Cortex has 3/4 Core Regions.

Located in a fissure atop the __.

Hierarchical Processing:

1. A1 – __ (pitch, timing, intensity).

2. Belt – Complex/Simple sounds (patterns, voices, rhythm).

3. Parabelt – __.

3.

temporal lobe.

Tonotopic map.

Complex. [Surrounds A1 and receives input from it. Handles more complex combinations of sounds. Processes: patterns of sound, voices (recognizing speech characteristics), rhythm and sequences].

Multisensory integration. [The outermost layer, farthest from A1. Integrates sound with other senses and higher-level meaning. Processes: multisensory integration (sound + vision, etc.), understanding context (e.g., matching a voice to a face), more abstract interpretation of sounds].


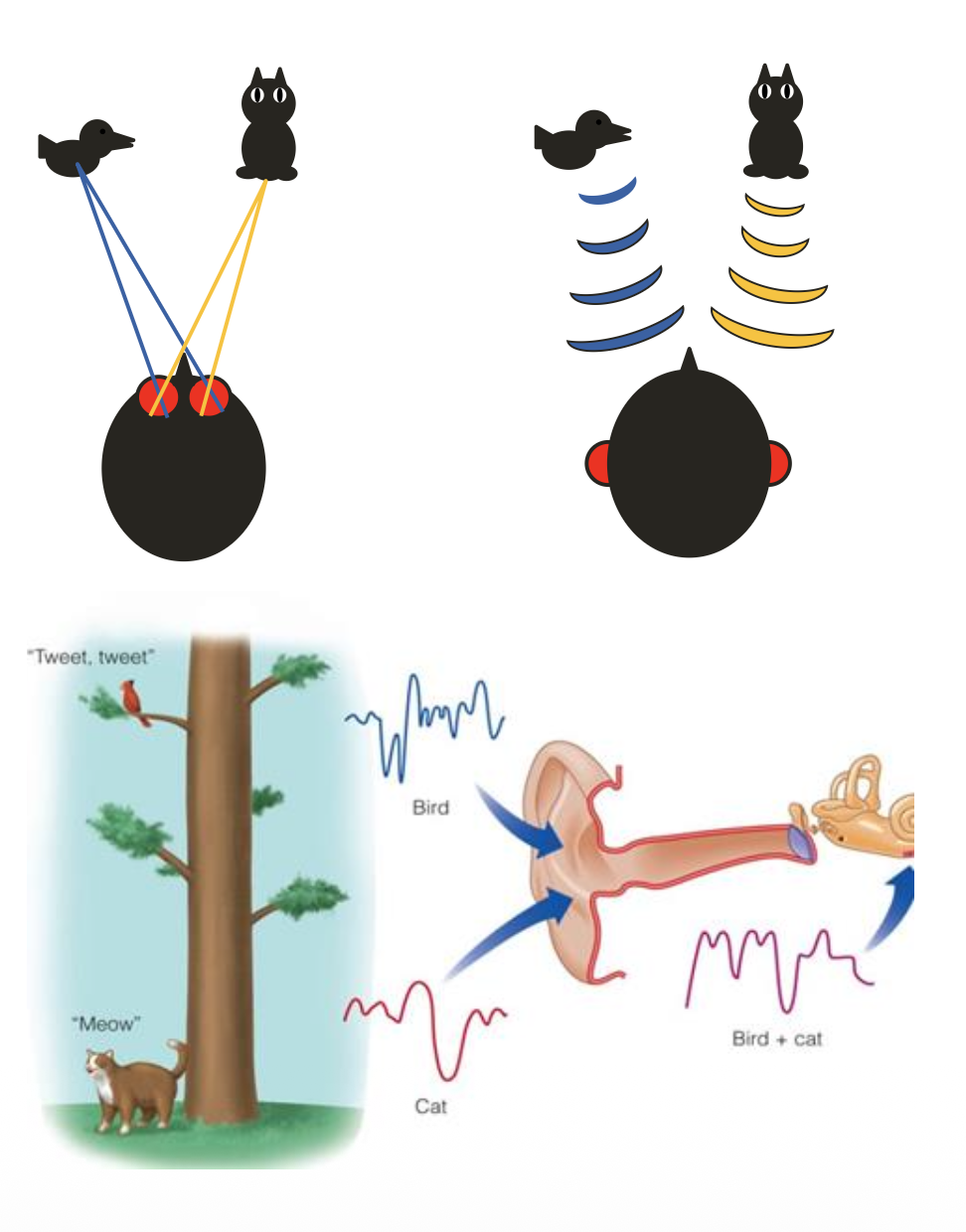

Parabelt: Multisensory Integration into What / Where

“What” & “Where” Regions

Like vision, hearing splits into “what” & “where” streams – in humans and monkeys – in Parabelt/Belt.

• Anterior Auditory Regions: ? [where/what]

• Posterior Auditory Regions: ? [where/what]

Parabelt.

identify what produced a sound.

locate where the sound came from.

Hearing in the Environment: Computation

Sound __ Requires Computation.

• No __ - brain must calculate location.

• Vision is spatiotopic/tonotopic, but hearing is spatiotopic/tonotopic.

↪ Ear receives mixed frequencies from all directions/one direction.

• Localization uses ? & ?.

↪ Most accurate in front & least accurate at the sides/behind.

Localization [Unlike vision, your auditory system doesn’t come with a built-in map of space. Instead, the brain figures it out].

spatial map in the cochlea.

spatiotopic. tonotopic [Vision is spatiotopic → the retina directly maps space (you “see” where things are). Hearing is tonotopic → maps sound frequency, not location].

all directions.

binaural cues (timing, intensity) & spectral cues (ear shape) [Binaural: sound reaches one ear slightly earlier than the other, and is louder in one ear than the other. Spectral: the outer ear (pinna) changes the sound depending on direction, so different angles produce slightly different frequency patterns. Spectral cues help especially with front vs. back and up vs. down].

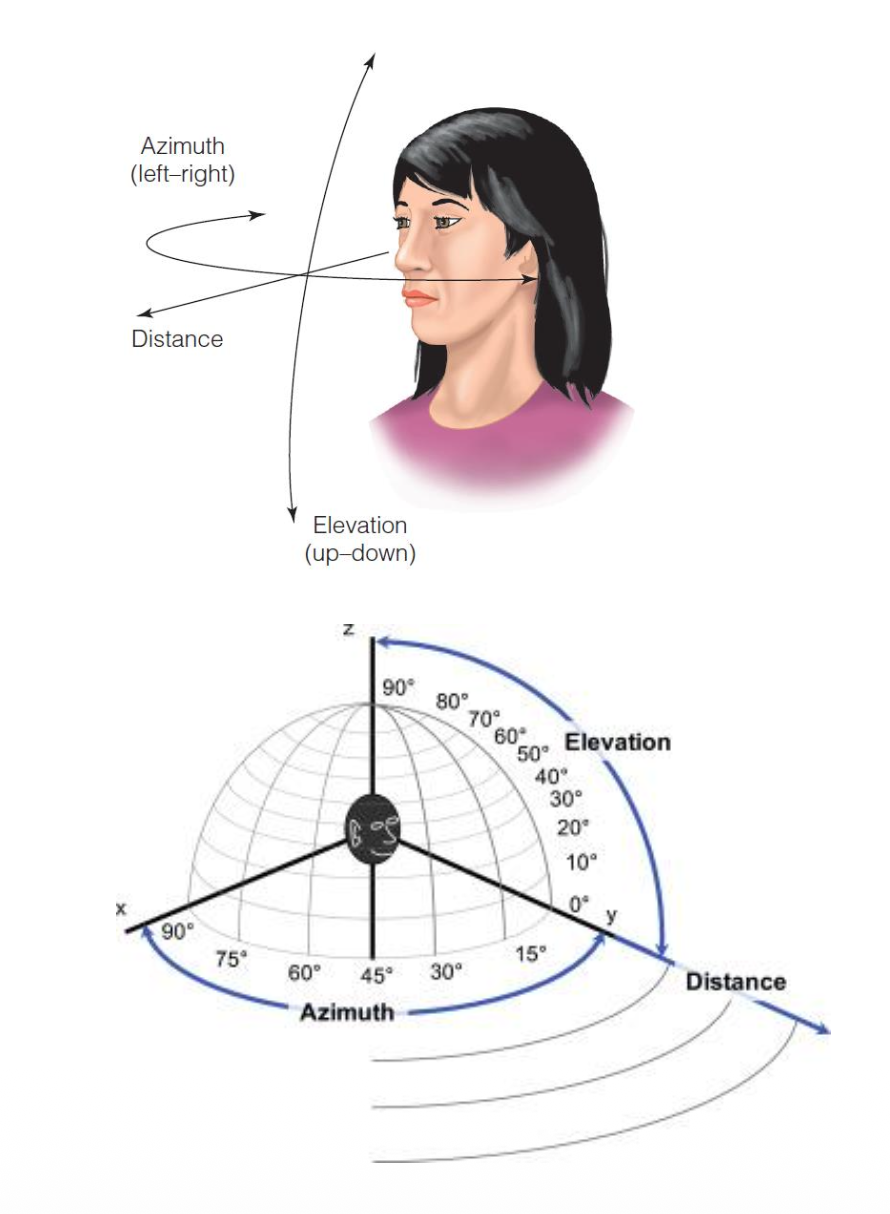

Polar Coordinate System

Brain uses __ centered on the head to locate sounds, along with ear shape & head position.

__: surrounds an observer and exists wherever there is sound.

• Azimuth coordinates: ?

• Elevation coordinates: ?

• Distance coordinates: ?

polar coordinate system.

Auditory space.

left to right (horizontal). Uses binaural cues [Horizontal direction (ear-to-ear axis). How the brain figures it out: binaural cues, i.e., timing differences (which ear hears it first) and loudness differences (which ear hears it louder). Example: sound reaches the left ear first → sound is on the left].

up & down (vertical). Uses pinna shape [Vertical direction (above vs. below you). How the brain figures it out: the shape of your outer ear (pinna) filters sound differently depending on angle. Example: a sound from above has a slightly different frequency pattern than one from below].

how far away sound is. Uses loudness, reflections, & experience [How far away the sound is. How the brain estimates it: Loudness (quieter = usually farther), Reflections/reverb (more echo = farther), Experience/expectation (you know how loud things should be). Example: A faint voice with echo → likely far away].
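As a rough illustration of the head-centred polar coordinate system, the sketch below converts an (azimuth, elevation, distance) triple into x/y/z positions. The axis convention (azimuth 0° straight ahead, +90° directly to the right; elevation 0° at ear level, +90° straight up) and the function name `auditory_to_cartesian` are assumptions chosen for illustration, not part of the course material.

```python
import math

def auditory_to_cartesian(azimuth_deg, elevation_deg, distance_m):
    """Convert head-centred polar coordinates to x, y, z in metres.

    Assumed (hypothetical) convention: origin at the centre of the head,
    azimuth 0 deg = straight ahead, +90 deg = directly to the right,
    elevation 0 deg = ear level, +90 deg = straight up.
    x points right, y points forward, z points up.
    """
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    horizontal = distance_m * math.cos(el)  # projection onto the horizontal plane
    x = horizontal * math.sin(az)           # left (-) / right (+): azimuth
    y = horizontal * math.cos(az)           # behind (-) / in front (+)
    z = distance_m * math.sin(el)           # below (-) / above (+): elevation
    return x, y, z

# A source 2 m away, directly to the right at ear level.
print([round(v, 3) for v in auditory_to_cartesian(90, 0, 2.0)])  # → [2.0, 0.0, 0.0]
```

Azimuth and elevation only set the direction; the distance coordinate scales the whole vector, which mirrors how the three cards above treat direction (binaural and pinna cues) separately from distance (loudness, reflections, experience).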

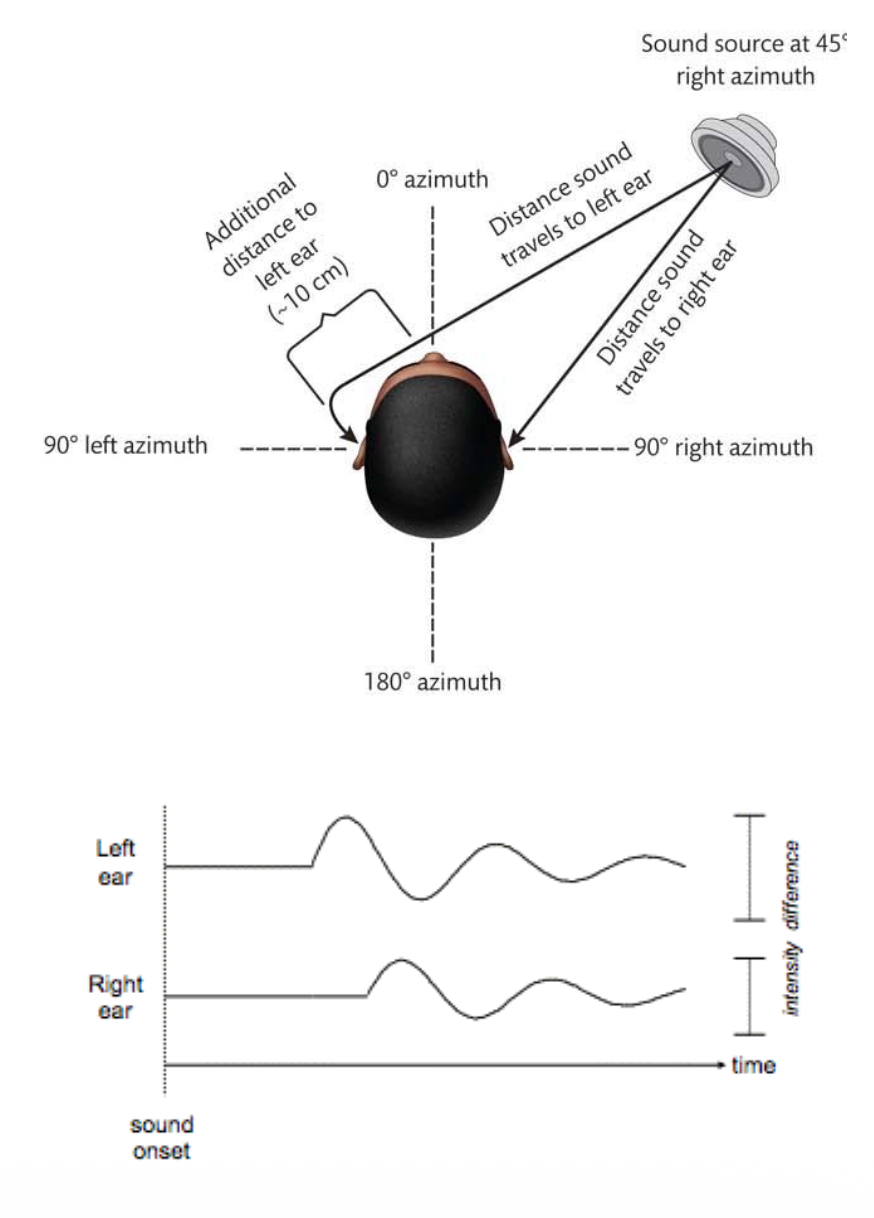

Azimuth: Binaural Cues

Binaural cues: ?

What are the 2 types of Binaural cues? What frequency (High/Low) is each cue good for?

ITD & ILD provide complementary info about the location of a sound on the horizontal plane (Azimuth)/Vertical plane.

location cues based on the comparison of the signals received by the left & right ears.

1. Interaural time difference (ITD): difference between the times that sounds reach the two ears. Good for low frequencies [Low-frequency waves are long → easier for the brain to detect timing differences. Example: a deep drum beat arrives earlier in your left ear → brain says “left side”].

2. Interaural level difference (ILD): difference in sound pressure level reaching the two ears. Good for high frequencies [High-frequency waves are short → easily blocked by your head → creates a bigger loudness difference. Example: a high-pitched beep is louder in your right ear → brain says “right side”].
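One way to see why ILD works best for high frequencies is to compare each tone's wavelength with the width of the head: long waves bend around the head (little shadow), short waves are blocked by it (strong shadow). A minimal sketch, assuming a speed of sound of 343 m/s and an ear-to-ear head width of about 17.5 cm (both assumed round numbers); the helper names are hypothetical.

```python
SPEED_OF_SOUND = 343.0  # m/s, air at roughly room temperature (assumed)
HEAD_WIDTH = 0.175      # m, assumed typical adult ear-to-ear width

def wavelength_m(freq_hz):
    """Wavelength in metres: lambda = c / f."""
    return SPEED_OF_SOUND / freq_hz

def wavelength_to_head_ratio(freq_hz):
    """Ratio > 1: wave is longer than the head and bends around it (weak shadow, small ILD).
    Ratio < 1: wave is shorter than the head, which blocks it (strong shadow, larger ILD)."""
    return wavelength_m(freq_hz) / HEAD_WIDTH

for f in (200, 1000, 6000):
    print(f"{f} Hz: wavelength {wavelength_m(f):.3f} m, ratio {wavelength_to_head_ratio(f):.2f}")
```

The ratio is only a qualitative guide: real head shadow grows gradually with frequency rather than switching on at a single cutoff.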

horizontal plane (Azimuth).

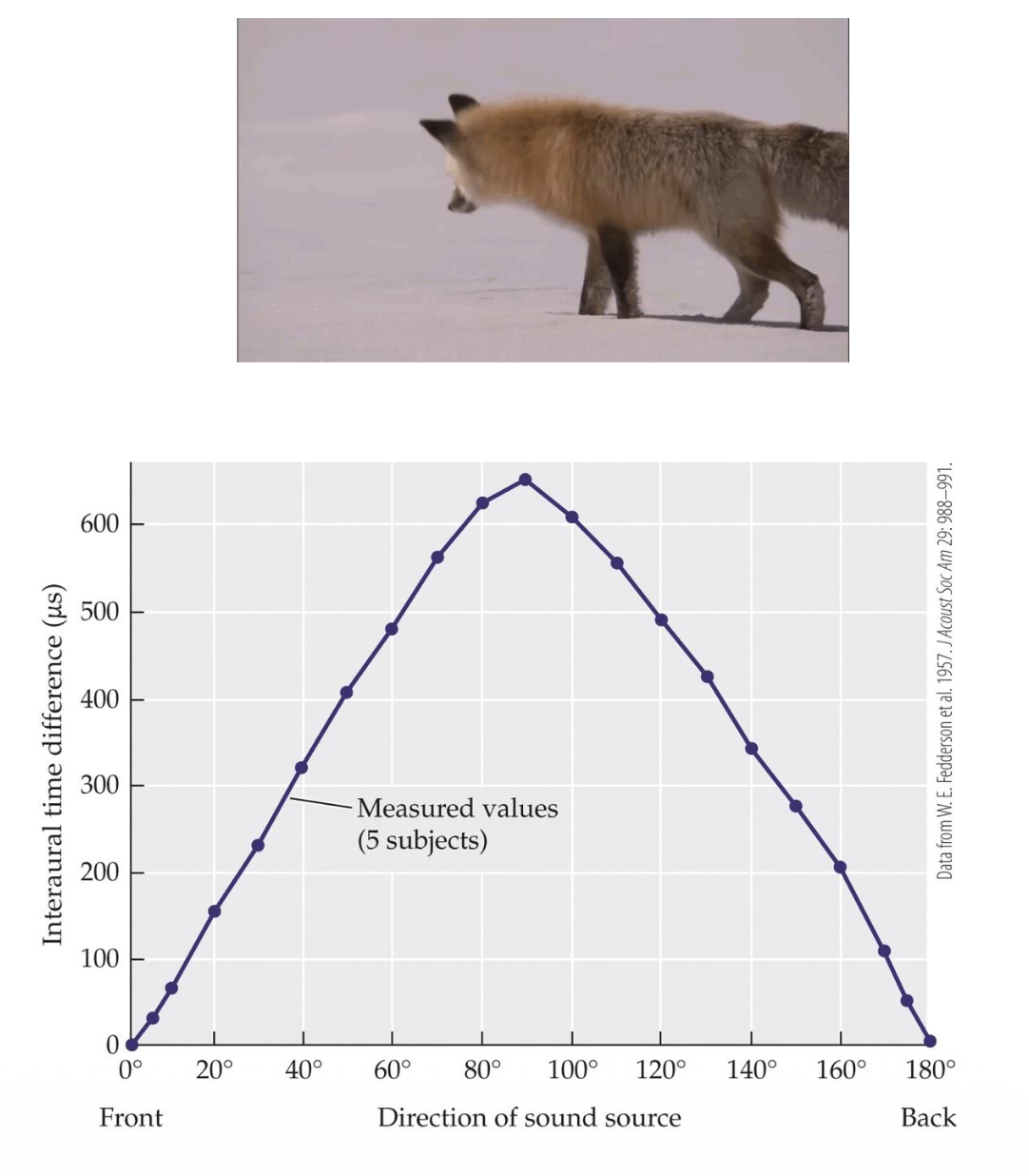

Azimuth Localization – Interaural Time Differences

1. Interaural Time Difference (ITD): __ difference in sound arriving at one ear versus the other.

• T/F: When the source is to the side of the observer, the times will differ.

↪ Max ITD at 90°/190° azimuth ≈ 600 µs (microseconds).

↪ Threshold for detection ≈ __.

↪ Most ITDs (due to head width) are above/below threshold.

• Enables localization within ?°.

time.

TRUE.

90°.

10 µs (microseconds) [This is the smallest time difference your brain can notice. Meaning: your brain can detect extremely tiny delays].

above [Because of the size of your head, typical delays are big enough to detect. So ITD is a reliable cue in everyday life].

1 [Humans can localize sounds to about 1° of accuracy using ITDs. That’s extremely precise: Like pointing almost exactly where a sound is coming from].
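The ~600 µs maximum ITD can be roughly reproduced with Woodworth's spherical-head approximation, ITD ≈ (r / c)(θ + sin θ), for a distant source. This is a textbook simplification swapped in for illustration, not the course's own model; the head radius (8.75 cm) and speed of sound (343 m/s) are assumed values. With these numbers it gives about 656 µs at 90° and only about 9 µs for a 1° shift away from straight ahead, which shows how small the timing differences behind ~1° accuracy are.

```python
import math

HEAD_RADIUS = 0.0875    # m, assumed average adult head radius
SPEED_OF_SOUND = 343.0  # m/s, assumed

def itd_woodworth(azimuth_deg):
    """Interaural time difference in microseconds for a distant source,
    using Woodworth's spherical-head approximation ITD = (r / c) * (theta + sin theta).
    Intended for azimuths from 0 deg (straight ahead) to 90 deg (directly to one side)."""
    theta = math.radians(azimuth_deg)
    return (HEAD_RADIUS / SPEED_OF_SOUND) * (theta + math.sin(theta)) * 1e6

print(round(itd_woodworth(90)))     # ITD with the source directly to the side (≈ 656)
print(round(itd_woodworth(1), 1))   # ITD for a 1 deg shift from straight ahead (≈ 8.9)
```

The ITD is zero straight ahead and largest at 90°, matching the card's “max ITD at 90°”.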

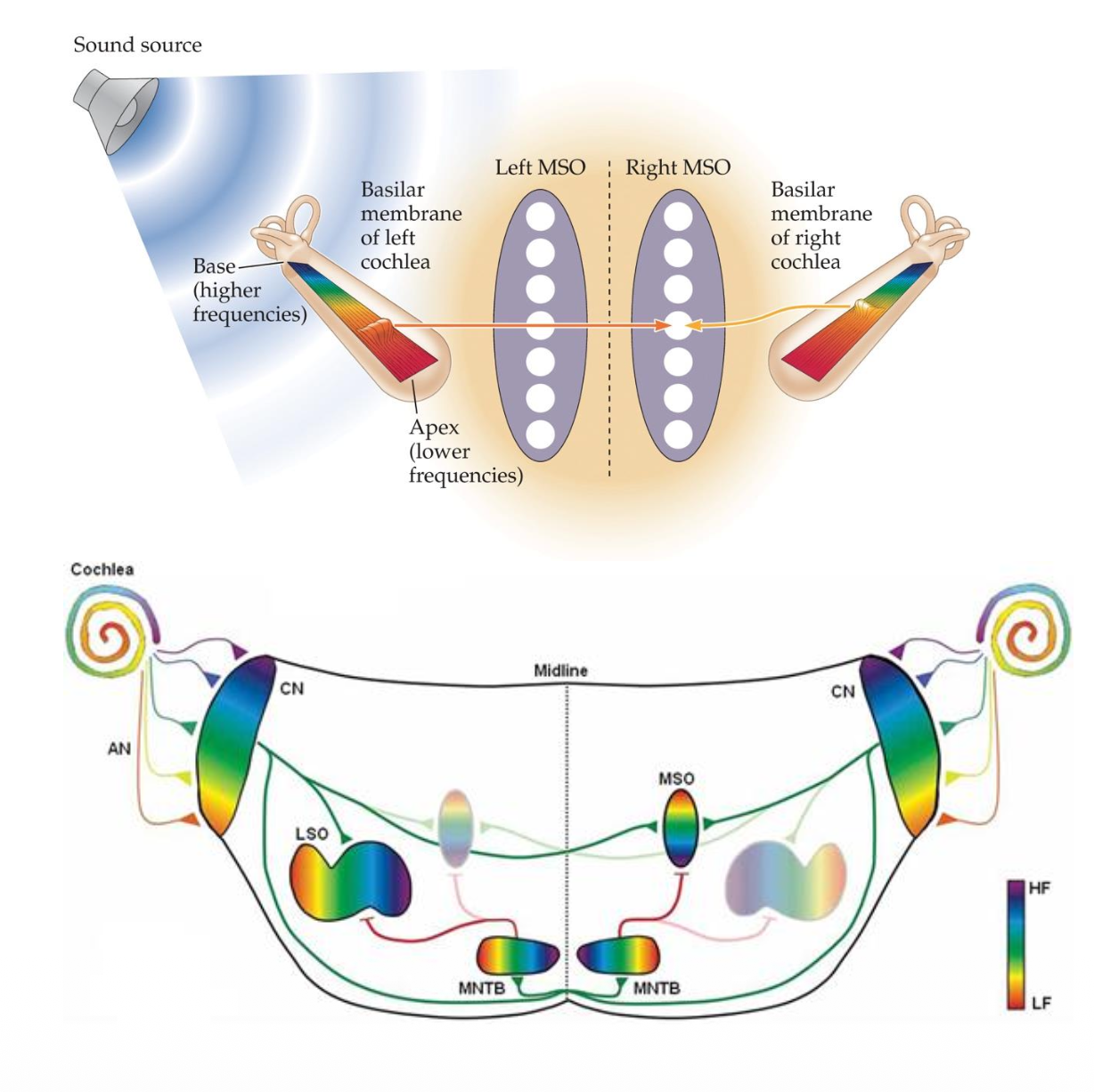

Azimuth Localization – ITD Pathways & MSO

Physiology of ITD

Signals travel from ? → ? → ?.

• MSO compares __ from both ears to detect ITDs.

↪ Especially tuned for low-frequency/high-frequency sounds.

• Neural circuits for ITD form early in life/later in life.

cochlea. cochlear nucleus (CN). medial superior olive (MSO).

timing.

low-frequency. [The MSO compares when the signal from the left ear arrives with when the signal from the right ear arrives, and fires most strongly when the two signals arrive with a specific time difference. Why this works → different MSO neurons are tuned to different delays: some fire when the sound is slightly left, others when it is slightly right, and the pattern of activity tells the brain the azimuth (left–right position). Why low frequencies → low-frequency waves have longer cycles, so timing differences are easier to track; high frequencies cycle too fast, so timing becomes ambiguous].

early in life.

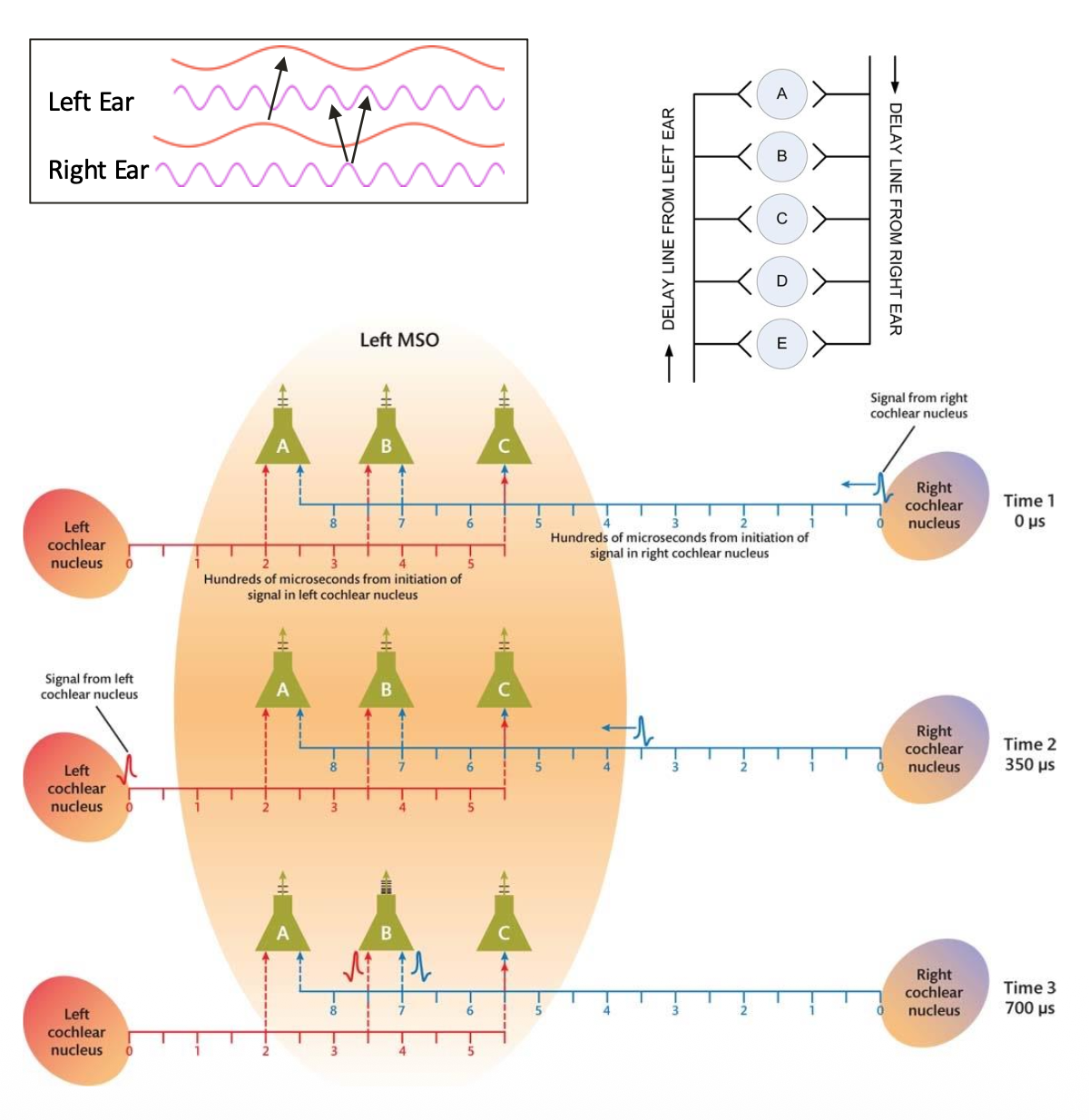

ITD Detection & Limits: Jeffress Model

? Model – ITD Detection & Limits

• Jeffress Model (1948) explains MSO ITD detection via __.

- Neurons fire only when input from __ arrives simultaneously.

↪ Matches __ of sine waveforms.

• Fails at low/high frequencies: hard to match peaks.

Jeffress.

coincidence detection [Neurons in the MSO fire only when signals from both ears arrive at the same time. So the brain delays the signal from each ear slightly (via neural pathways) and lines them up so they arrive simultaneously at specific neurons].

both ears.

peaks [Sounds can be thought of as waves with peaks and troughs. The Jeffress model assumes the brain aligns the peaks of the waves from both ears; when the peaks match → the neuron fires. This works well when wave patterns are clear and spaced out].

high [High-frequency sounds have very short wavelengths, so their peaks occur very close together. This creates ambiguity: multiple peaks could line up, so it is unclear which one matches. Result: the brain can’t reliably use this “peak matching” method, and ITD detection becomes inaccurate for high frequencies].
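A toy version of the Jeffress idea: an array of hypothetical MSO “detectors”, each with its own internal delay on the left-ear input, responds most when the delayed left input lines up with the right input. As an assumption, each detector's average output is modelled as the mean product of its two inputs (a cross-correlation, a common rate-based simplification of coincidence detection, not Jeffress's original wording); the sampling rate, the ±600 µs delay range and all names are illustrative. For a 500 Hz tone only one delay matches, but for a 2000 Hz tone, whose 500 µs period is shorter than the delay range, a second delay also lines up peak-to-peak, which is the high-frequency ambiguity described above.

```python
import math

SAMPLE_RATE = 100_000                        # samples per second (10 µs per sample)
CANDIDATE_DELAYS_US = range(-600, 601, 10)   # internal delays of hypothetical MSO detectors

def tone(freq_hz, delay_us, n_samples):
    """Steady sine tone, shifted later in time by delay_us microseconds."""
    return [math.sin(2 * math.pi * freq_hz * (i / SAMPLE_RATE - delay_us * 1e-6))
            for i in range(n_samples)]

def best_delays(freq_hz, itd_us, n_samples=2000):
    """Return the internal delays whose detectors respond maximally.

    itd_us > 0 means the right ear hears the tone itd_us later (source on the left).
    """
    right = tone(freq_hz, itd_us, n_samples)
    responses = {}
    for d in CANDIDATE_DELAYS_US:
        # The left-ear input reaches this detector through a delay line of d µs.
        left_delayed = tone(freq_hz, d, n_samples)
        # Coincidence proxy: mean product of the two inputs (largest when peaks align).
        responses[d] = sum(a * b for a, b in zip(left_delayed, right)) / n_samples
    peak = max(responses.values())
    return [d for d, r in responses.items() if peak - r < 1e-6]

print(best_delays(500, 300))    # → [300]: one unambiguous match
print(best_delays(2000, 300))   # → [-200, 300]: peaks 500 µs apart also line up
```

The winning detector's internal delay equals the ITD, so which detector fires encodes azimuth; at high frequencies two detectors tie and the code alone cannot say which one is correct.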

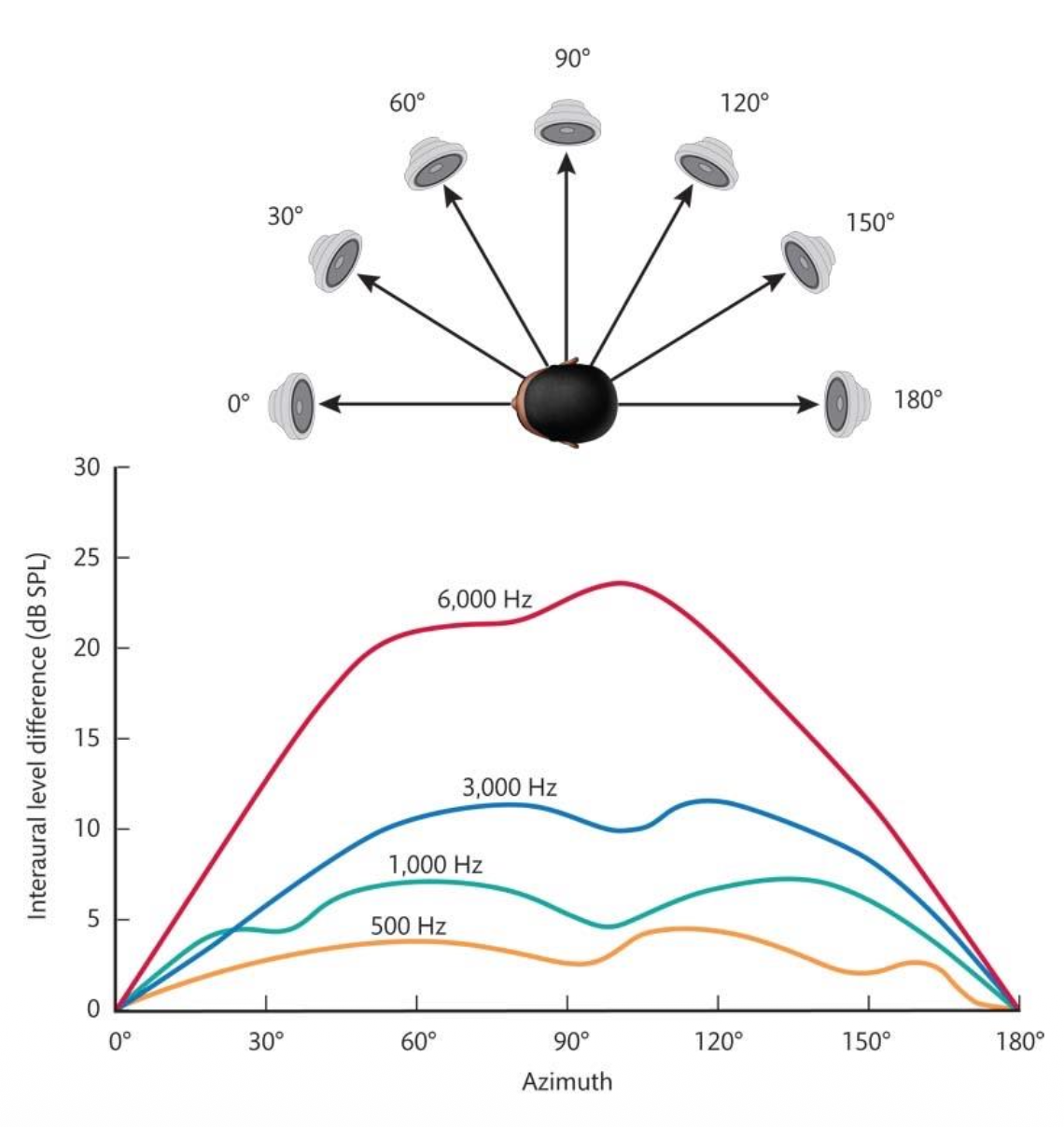

Azimuth Sound Localization – Interaural Level Difference

2. Interaural Level Difference (ILD): __ difference between one ear versus the other.

• Caused by ? + ?.

• ILDs help localize lateral sounds, especially above 1000 Hz.

↪ ILD is stronger at high frequencies (>1000 Hz).

↪ Max ILD at ±90°; none at 0° or 180°.

sound pressure level (intensity).

distance (inverse square law) + acoustic shadow (the head reduces the level at the ear opposite the sound) [Distance (inverse square law) → sound gets weaker as it travels farther, so the ear closer to the source gets a stronger signal. Acoustic shadow (head blocking sound) → your head acts as a barrier that blocks or reduces the sound reaching the far ear, creating a “shadow” of lower intensity on the opposite side].
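The distance (inverse-square) part of the ILD can be estimated on its own: intensity falls as 1/r², so the level difference between two distances is 20·log10(r_far / r_near) dB, about 6 dB per doubling of distance. A minimal sketch, assuming a point source directly to one side and treating the far ear as simply 17.5 cm farther away (ignoring the curved path around the head and the head shadow itself); `level_difference_db` is a hypothetical helper. Under these assumptions the distance term is about 8.8 dB for a source 10 cm away but only about 1.4 dB at 1 m and 0.15 dB at 10 m, suggesting the acoustic shadow does most of the work for sources that are not very close.

```python
import math

EAR_SEPARATION = 0.175  # m, assumed extra distance to the far ear (straight-line simplification)

def level_difference_db(near_m, far_m):
    """Level difference in dB between two distances from a point source.
    Intensity ~ 1/r^2, so 10*log10((far/near)^2) = 20*log10(far/near)."""
    return 20 * math.log10(far_m / near_m)

for source_distance in (0.1, 1.0, 10.0):
    near = source_distance                   # near ear faces the source
    far = source_distance + EAR_SEPARATION   # far ear is one head-width farther away
    print(f"{source_distance} m: {level_difference_db(near, far):.2f} dB")
```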