psych1b Research Methods


empiricism?

approach to understanding the world that lets us understand it through our senses, or we rely on tools to make systematic observations about the world

qual vs quan

reflexivity?

scientists self-consciously consider how their background or privilege shapes what questions they ask and what interpretations they make

producer vs consumer of research?

steps for testing theories?

1) research question

2) literature review

3) form a hypothesis- statement of how variables relate to one another

4) design a study

5) conduct it

6) analyse the data

7) report the results

what are good scientific theories?

1) are falsifiable

2) make predictions

3) are parsimonious - Occam's razor (prefer the simplest explanation that fits the evidence)

pre-registering data?

idea that psychologists should publicly post their hypotheses, methods etc before conducting a study

as at the moment there is a tendency to run a study and then present whatever was found as the hypothesis all along (HARKing: hypothesising after the results are known)

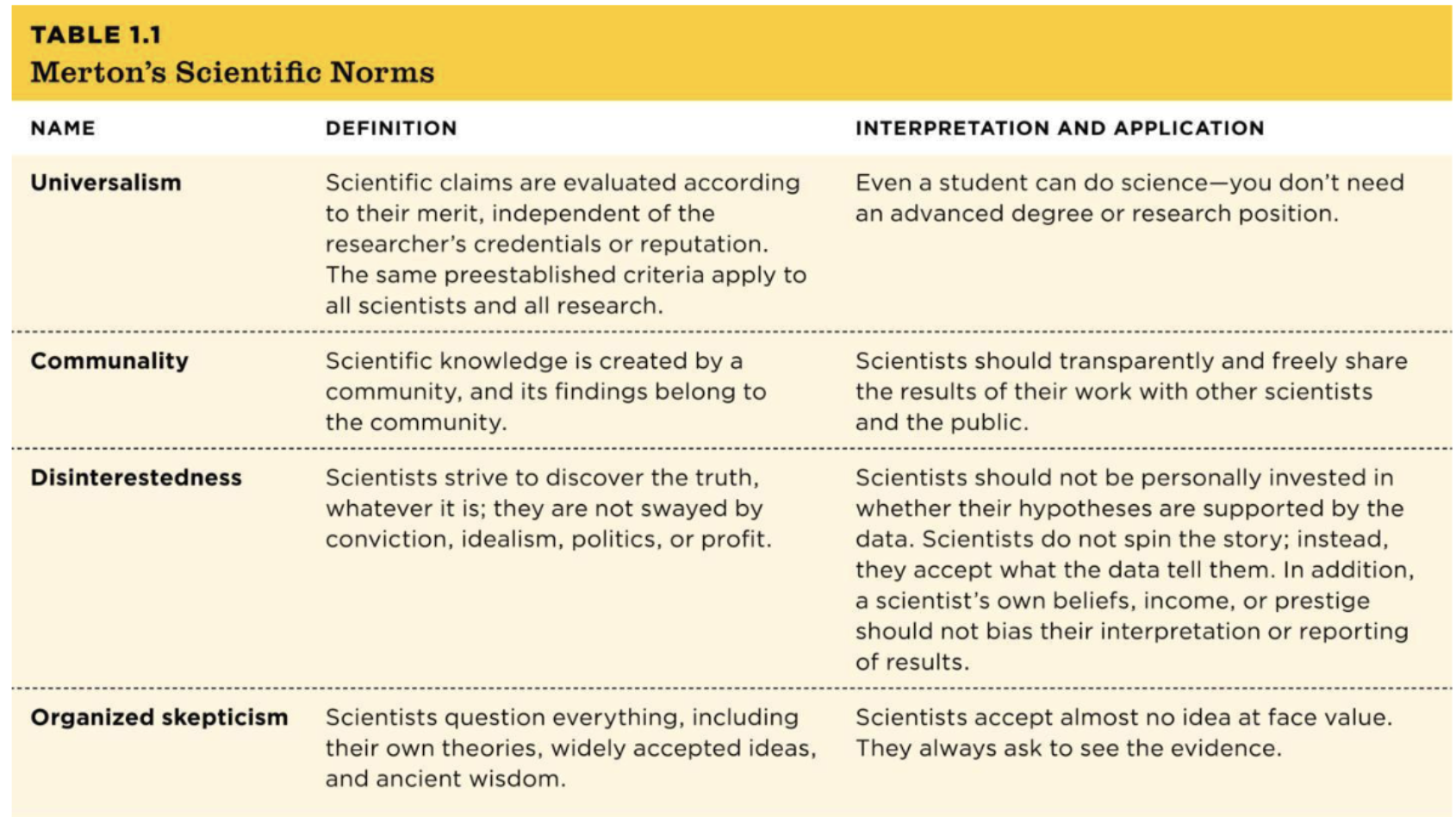

what are Merton's scientific norms?

universalism- scientific claims are judged on their merits, no matter who made them

communality- science should be shared

disinterestedness- no bias

organised skepticism- shouldn't take anything at face value

basic research?

understand a topic at its most basic level, eg investigate limitations of infant attachment

feeds back into the research we already have

applied research?

seeking to improve something; conducted to solve practical problems, with findings applied directly to a real world problem

eg which method is better for EWT

translational research?

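bridge between basic and applied: uses findings from basic research to develop and test applications, eg in a lab study, can meditation lessons improve college students’ GRE scores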

most research at edi is basic/translational

qualitative research

about understanding, not generalising, make sense of psychological phenomenon

interviews, focus groups, observations, naturally occurring data (eg comment thread)

“how and why”

rich detailed data

small carefully chosen samples

interpret and understand meaning

“some ppants talked about growth and reframing, versus sadness and depression post breakup”

why? help explain complex experiences, recognise overlooked group, explore new phenomena

helpful when little is known about a topic, when experiences vary, allow us to generate new theories

quantitative methods

seeks to predict, measure, establish causes and correlations

what and how many

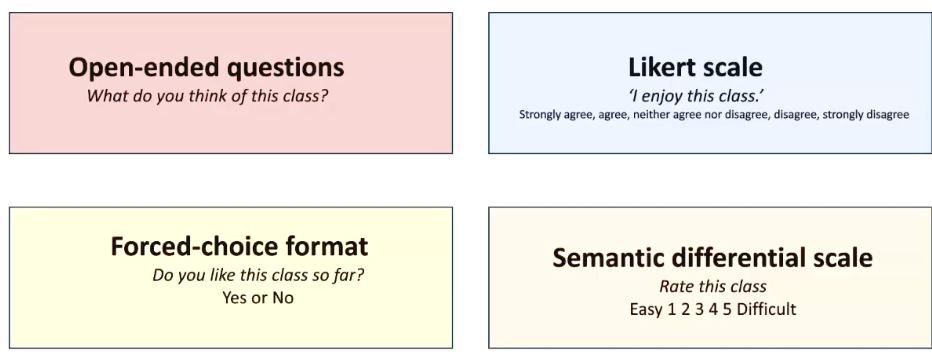

likert scales etc

big, broad samples so that results are more representative

“62% sample showed distress post breakup”

naturally occurring data versus researcher generated

letting convo unfold naturally, facebook chains, video audio recordings, versus structuring responses

nonrepresentative sampling?

not worrying about it being generalisable to whole population

also: snowball sampling, purposive sampling (try and find that subset of football fans)

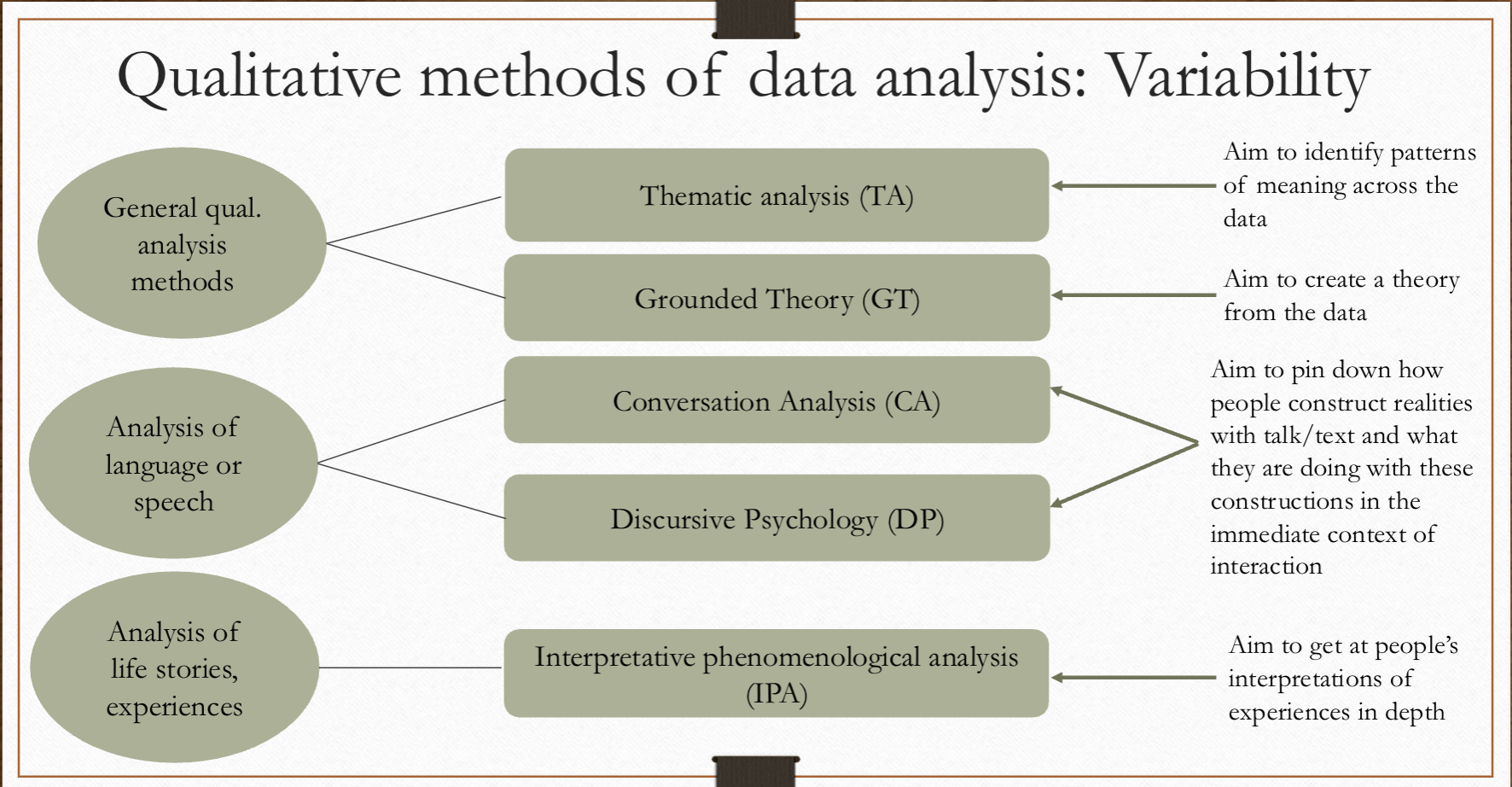

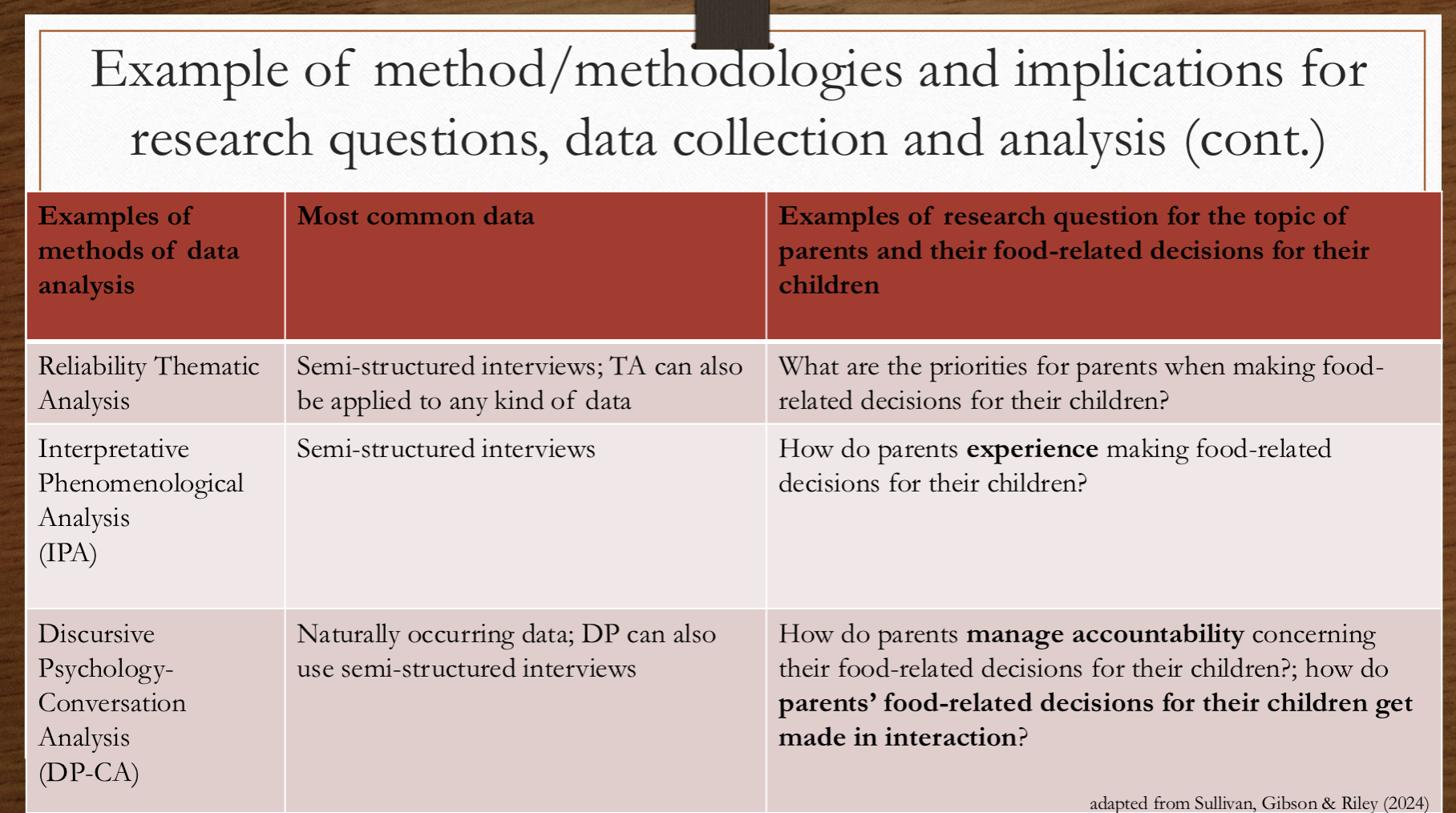

main qualitative analysis methods?

thematic analysis- very fluid, have multiple different approaches and assumptions

grounded theory- generate a new kind of theory

conversation and discursive analysis- language and speech

IPA (interpretative phenomenological analysis)- analysis of life stories and experiences

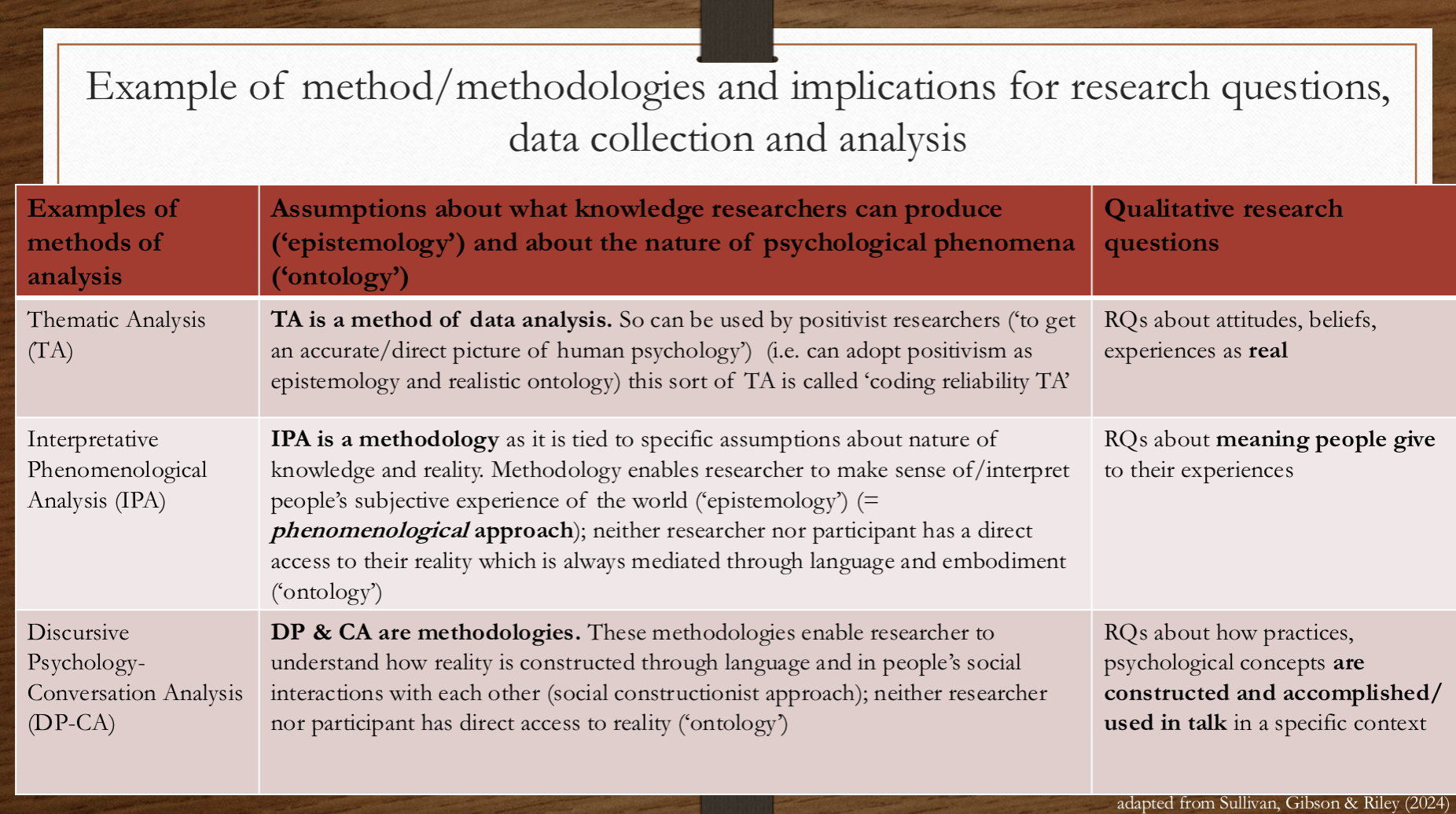

ONLY THEMATIC ANALYSIS IS A METHOD OF DATA ANALYSIS, REST ARE METHODOLOGIES

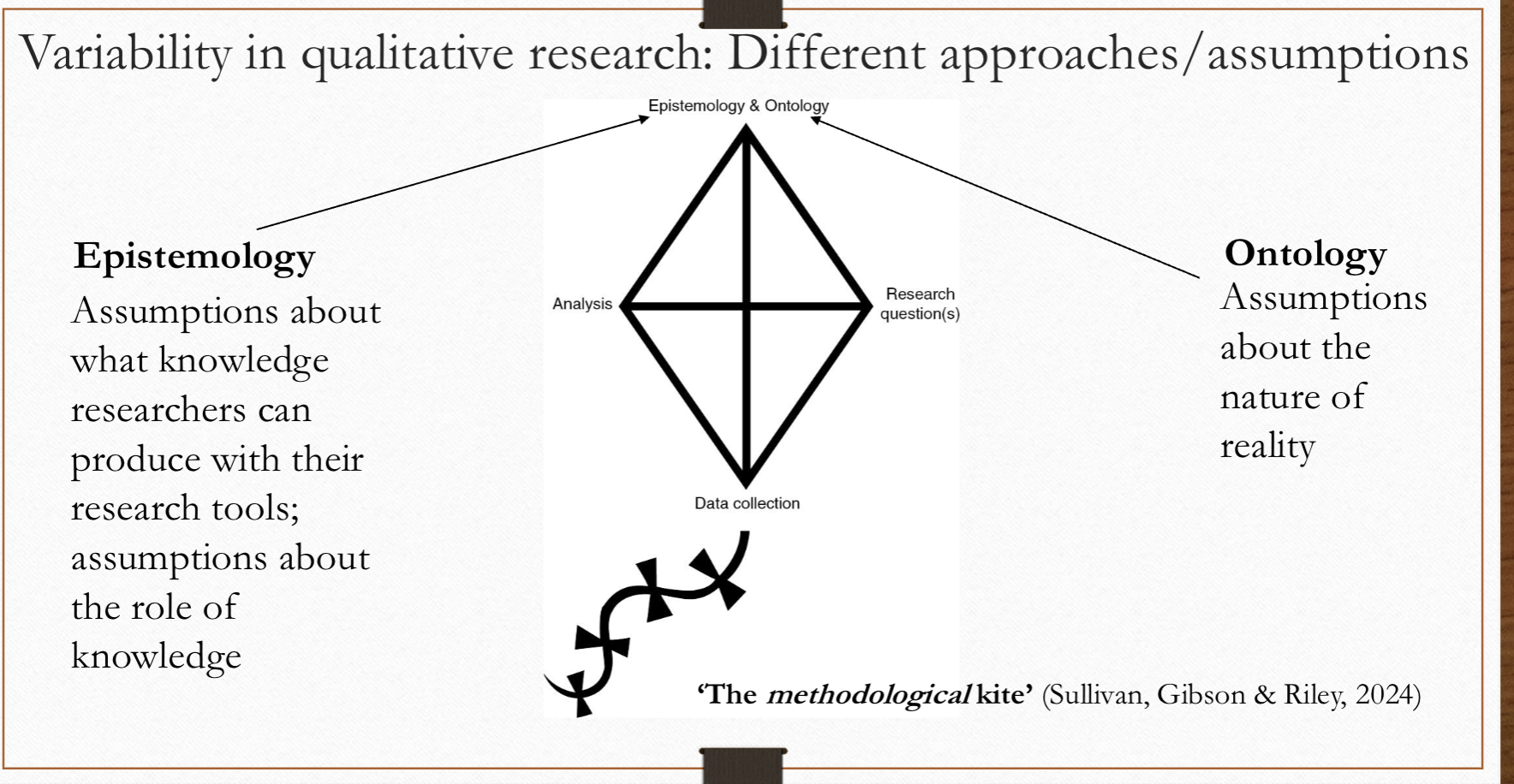

epistemology versus ontology

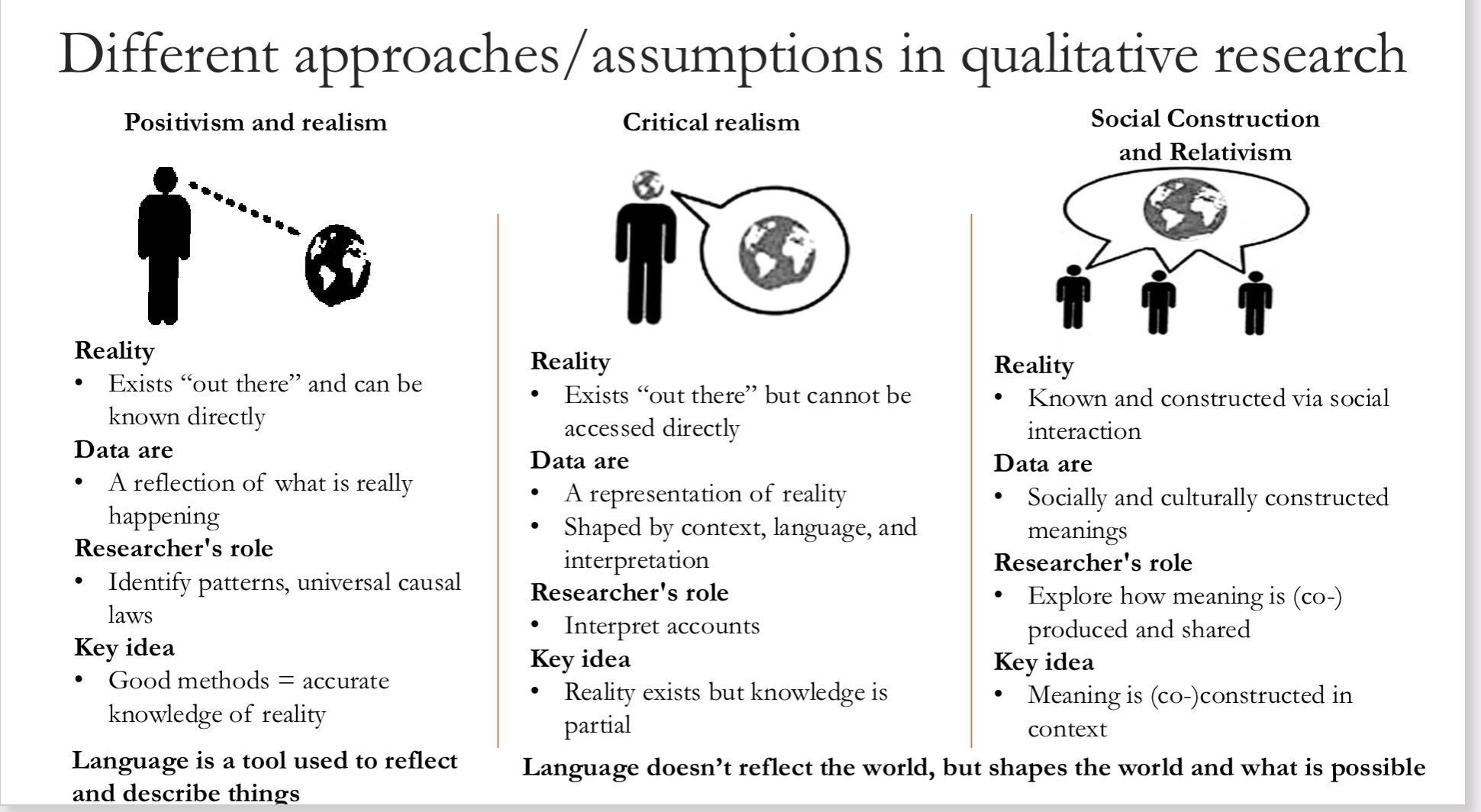

3 main approaches in qualitative research? positivism and realism, critical realism, social construction and relativism

positivism- there is a real, true reality out there; with good enough methods we can understand psychology; assumes language is a tool that reflects reality

critical realism- there is a reality, but our ability to capture it is mediated by our biases, so we can only access one version of it; language creates reality rather than reflecting it

social construction- reality is always constructed and can't be pinned down as something out there; language shapes everything we understand to be true; this is how knowledge is co-constructed etc

EXAMPLE OF METHOD AND IMPLICATIONS FOR RQs

thematic analysis- code all of the data (attach labels that capture meaning), then generate common themes (eg source of rumours = negative)

discursive- focuses on how it is said, not what is said: discursive constructions (features) and what they achieve, eg what does “i don’t care” suggest

what is coding reliability TA?

multiple researchers code same thing, then their codes get compared

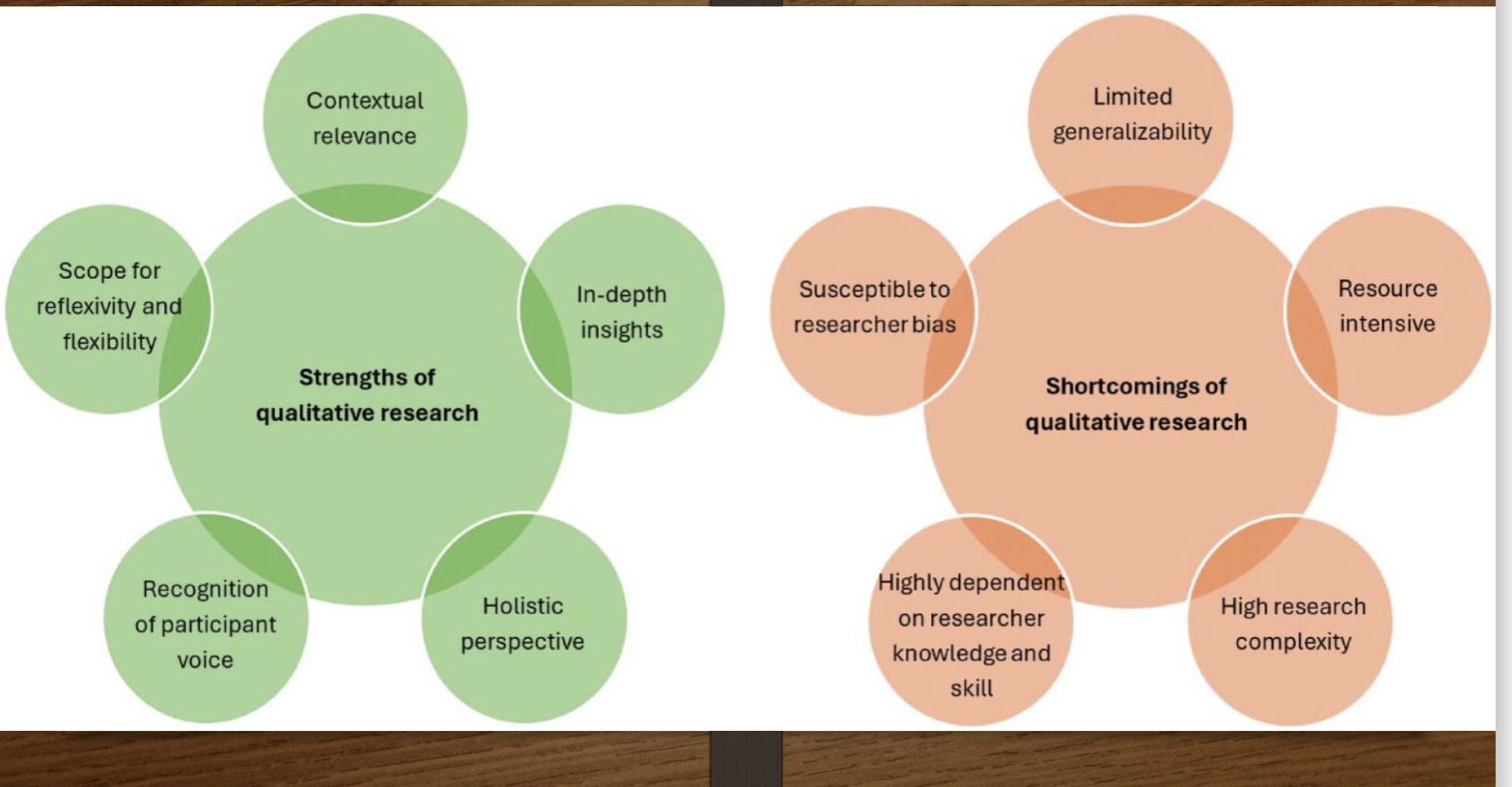
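One way to put a number on "codes get compared" is sketched below. The notes do not say which statistic the course uses; percent agreement and Cohen's kappa are assumed here as common choices, and the codes and extracts are made up.

```python
from collections import Counter

def percent_agreement(coder_a, coder_b):
    """Share of extracts that both coders labelled with the same code."""
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return matches / len(coder_a)

def cohens_kappa(coder_a, coder_b):
    """Agreement between two coders, corrected for agreement expected by chance."""
    n = len(coder_a)
    observed = percent_agreement(coder_a, coder_b)
    counts_a = Counter(coder_a)
    counts_b = Counter(coder_b)
    # Chance agreement: probability that both coders pick the same code independently
    expected = sum(counts_a[code] * counts_b[code] for code in counts_a) / (n * n)
    return (observed - expected) / (1 - expected)

# Hypothetical codes applied by two researchers to the same six extracts
coder_a = ["growth", "sadness", "growth", "reframing", "sadness", "growth"]
coder_b = ["growth", "sadness", "reframing", "reframing", "sadness", "growth"]

print(percent_agreement(coder_a, coder_b))
print(cohens_kappa(coder_a, coder_b))
```

Kappa comes out lower than raw agreement because some matches would happen by chance alone.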

strengths and limitations of qualitative research?

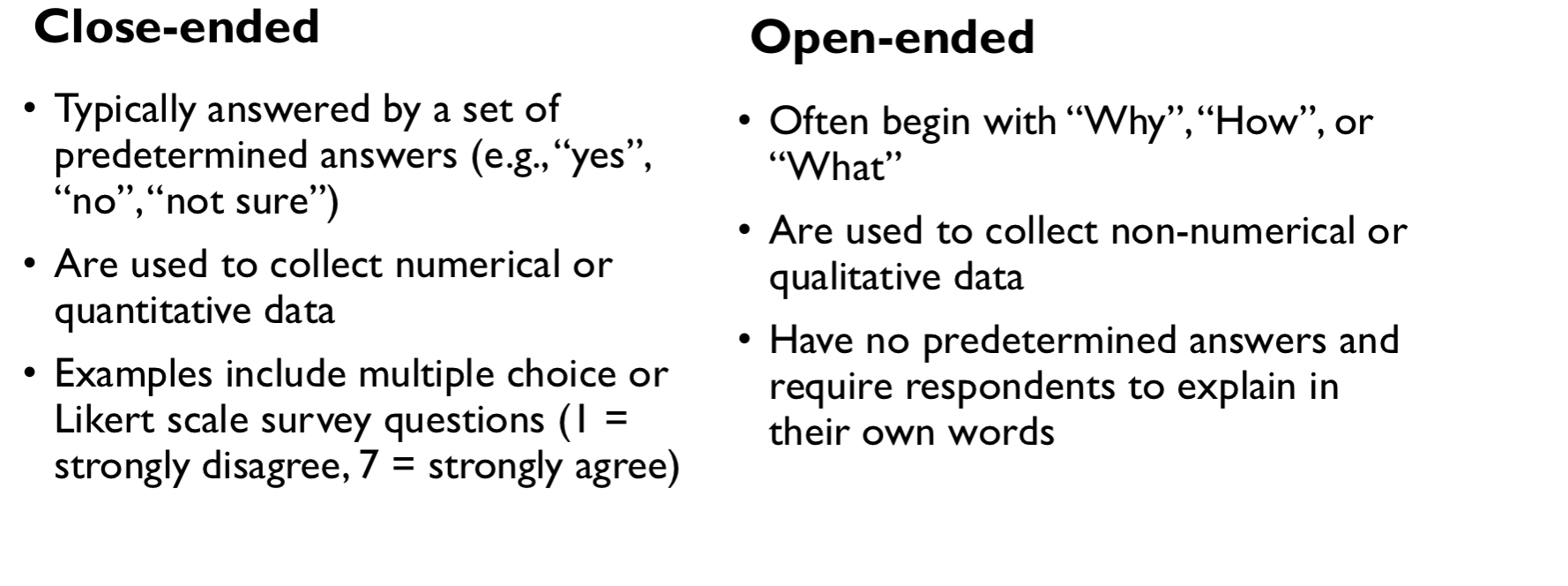

close ended versus open ended questions

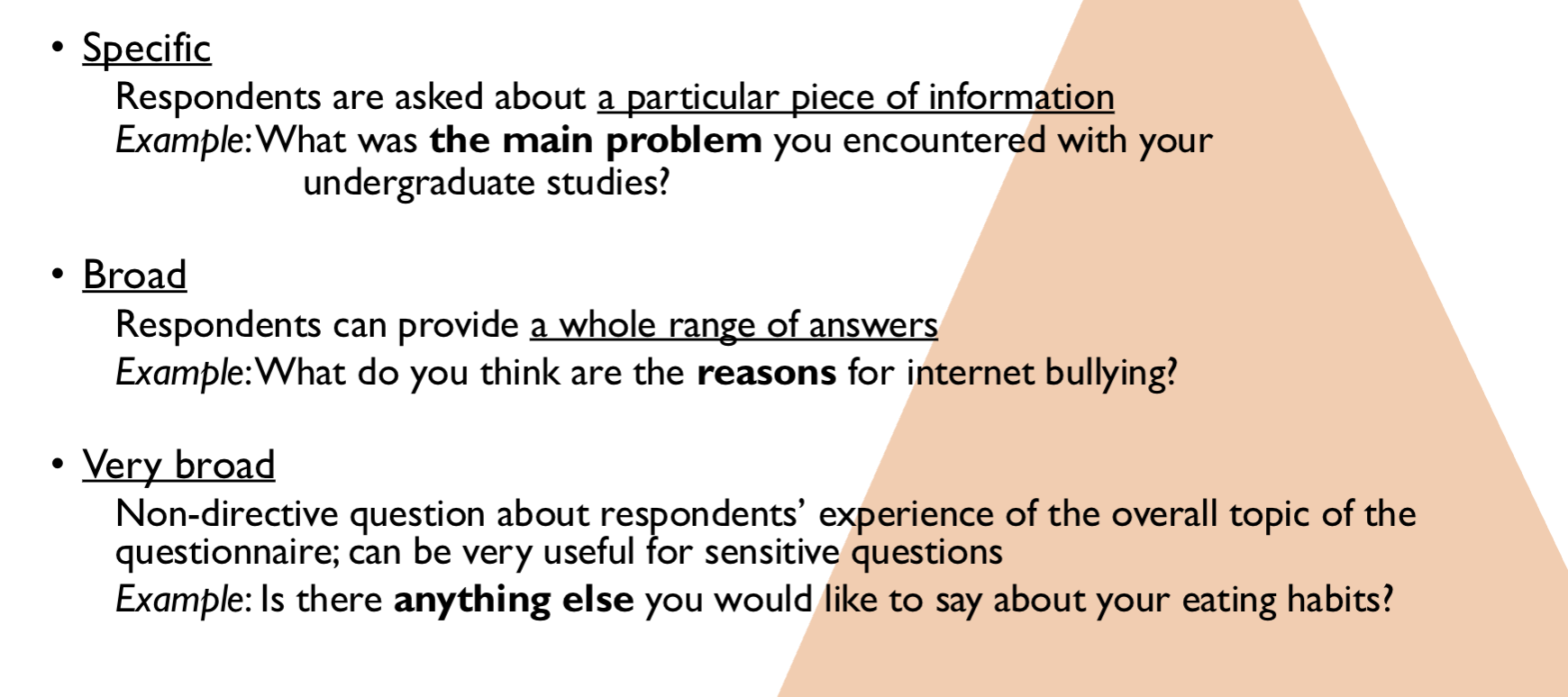

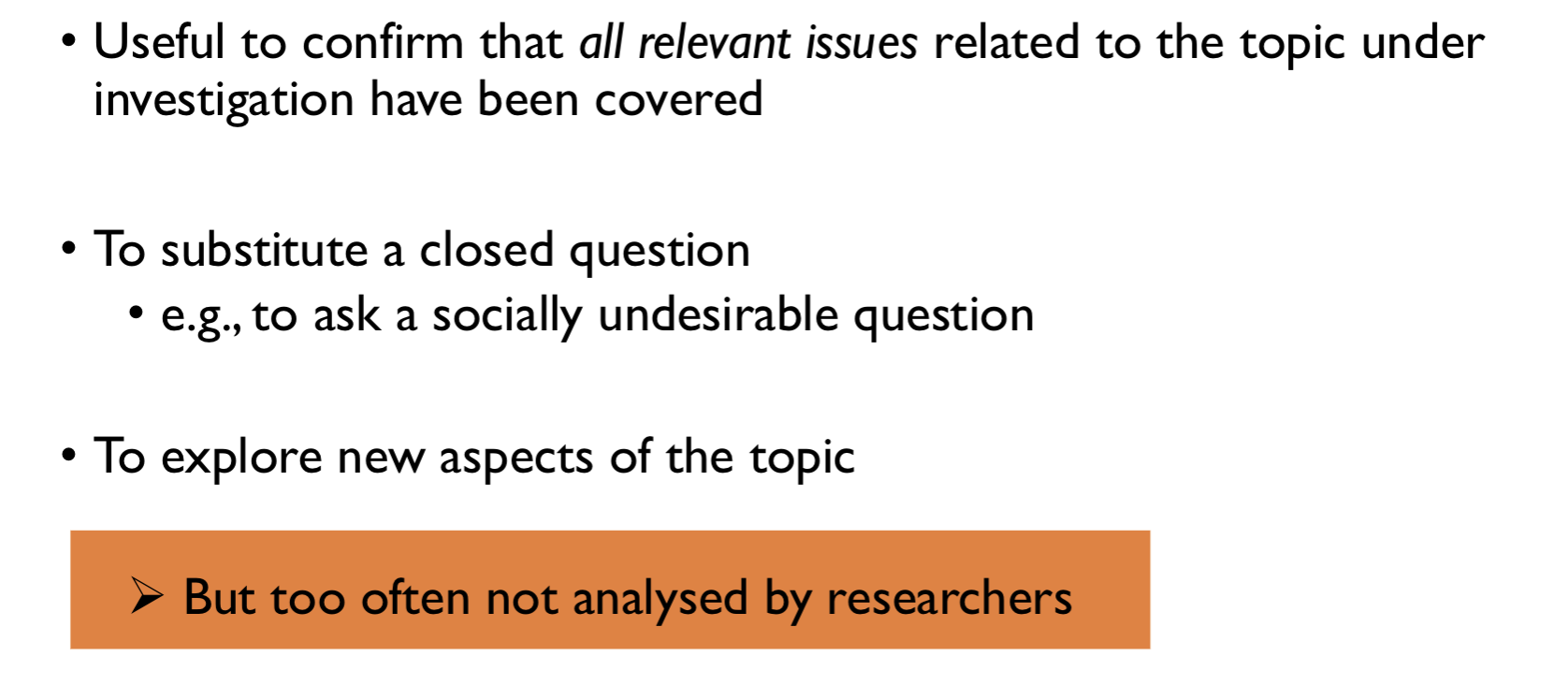

types of open ended questions + why

what is thematic analysis?

super popular and flexible

can be used with open ended questions

small and large data sets

tool for searching, identifying and analysing relevant patterns in qualitative data (themes)

can have RQs about attitudes and beliefs, about experiences, about social constructions of particular topic or phenomenon

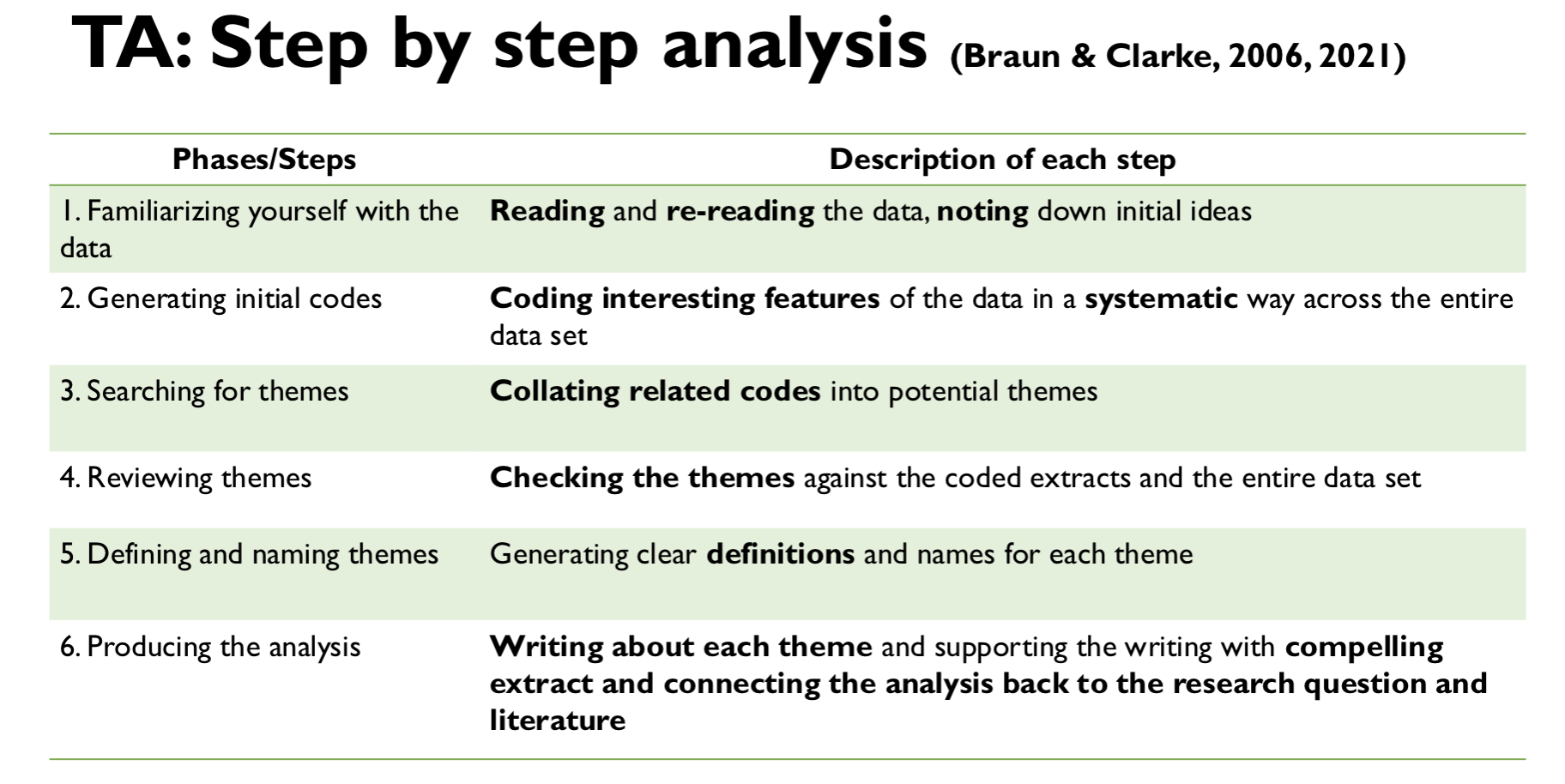

what are the 6 steps of thematic analysis?

inductive versus deductive codes(or both)

deductive- codes reflect pre-existing theories or findings - Sherlock Holmes has a preconceived theory

inductive- generated from the data and aim to stay as close as possible to the meaning in the data - think induction hob: bottom up, as the heat comes from the data and rises into the theory

latent versus manifest codes

manifest/explicit codes- explore meaning at the surface of the data; descriptive; capture explicitly expressed meaning; stay close to ppant language and the overt meanings of the data (minimal interpretation)

latent/implicit codes- conceptual; focus on a deeper, more implicit or conceptual level of meaning (eg assumptions, values, world views that underlie the data) - sometimes quite abstracted from the obvious content of the data

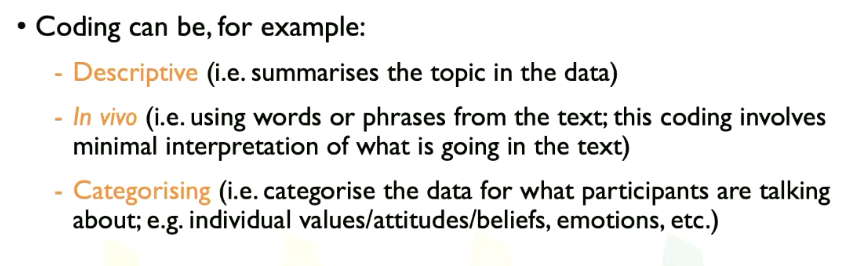

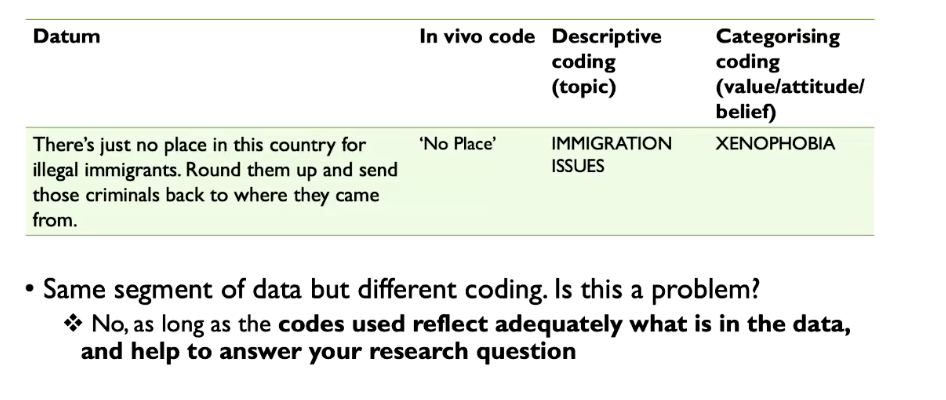

descriptive coding, in vivo, categorising codes:

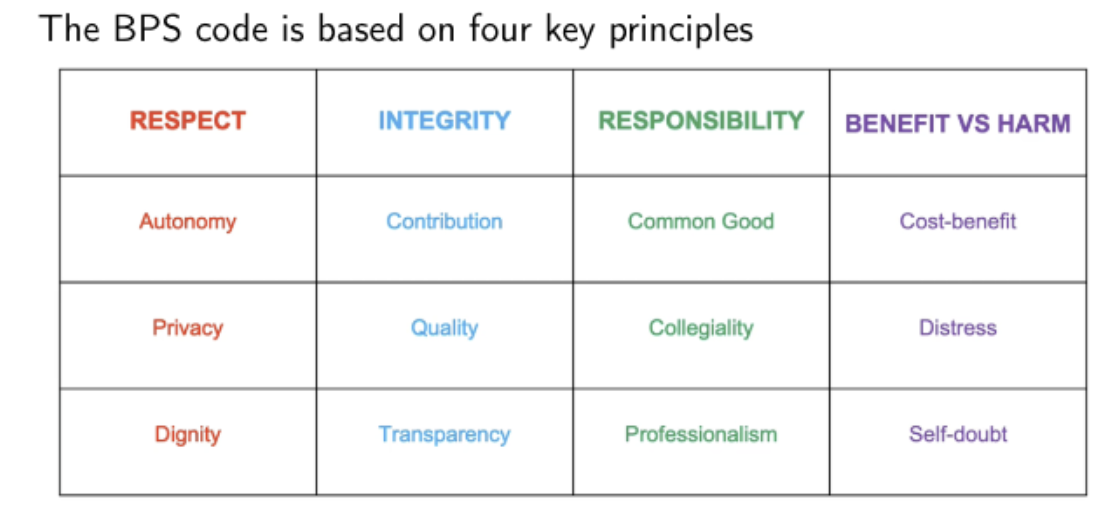

ethics and BPS principles?

moral principles that govern research

examples of risk in ethics

research involving vulnerable groups

research involving sensitive topics

deception

access to confidential info

psychological stress

invasive interventions-eg taking of blood samples

informed consent?

consent must be based on sufficient info

right to withdraw consent during data collection

data can be deleted anytime even after study

identifiable vs unidentifiable data

identifiable- can recognise the person (eg they're on video, audio recording, unusual circumstance)

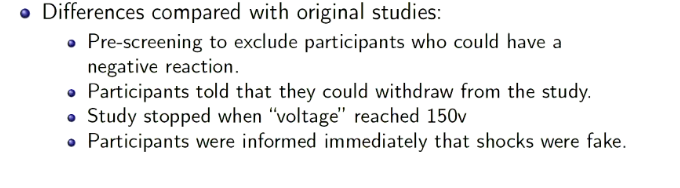

how did Burger replicate Milgram's research in 2009?

peer review

peer review happens with journals etc; anonymous peer reviewers increase the likelihood of honest feedback

what are confounding variables?

different factors confound results

what other factors could explain the observation you have

how did Bushman study catharsis and aggression?

ppants write an essay

“Steve” says it is the worst essay ever

ppants are then angry

3 conditions

1) imagine Steve's face on a punch bag and punch it

2) no instructions, just hit the punch bag

3) sit quietly for 2 mins

then play a computer game against Steve in which they can blast him with white noise - ppants who punched Steve's face on the punch bag used far more white noise - the least angry were in condition 3

probabilistic?

findings are not expected to explain all the cases all the time

there are exceptions to psych research results

ways intuition is biased?

1) availability heuristic- things that come to mind easily feel more common - think about media coverage: 30% of stories about terrorism, but only 1% of deaths

2) confirmation bias- ppants do questionnaire, then are told they are either depressed or not, people put into high depression group sought info that said the tool wasn’t valid, low depression group sought info it was valid

components of an empirical journal article?

abstract

introduction

method

results

discussion

references

operationalisation?

the process through which constructs which can’t be directly measured are redefined in a way that is measurable

specify scale you are using

do a lit review

common types of measurement

self report examples

observational examples - number of posts on social media, delayed gratification (marshmallows), student marks on an exam

physiological- EEG signals, blood pressure, cortisol levels, height

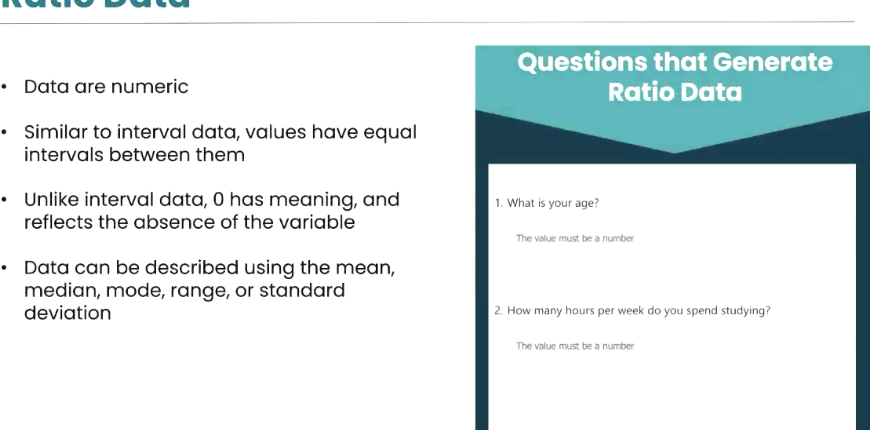

scales of measurement

categorical versus quantitative

discrete- nominal and ordinal

continuous- interval and ratio

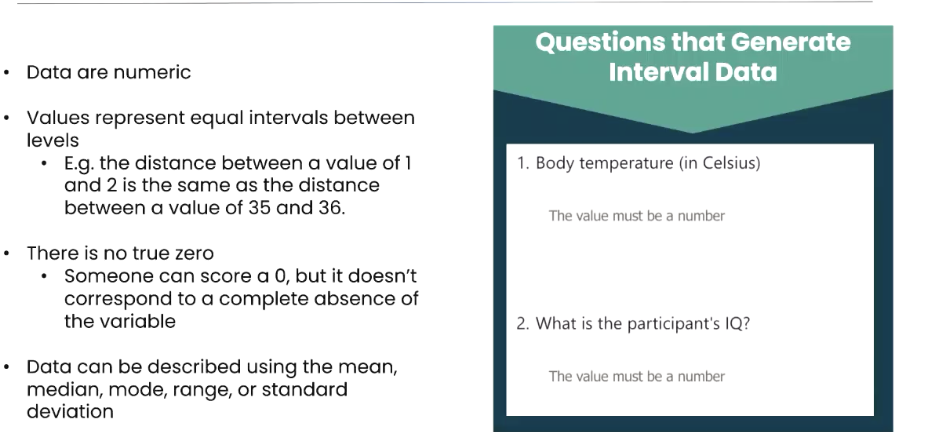

interval and ratio data

3 types of reliability

1) test-retest reliability - expect good agreement between measurements at time 1 and time 2

2) interrater reliability - how closely 2 observers' ratings of the same thing agree

3) internal reliability - use Cronbach's alpha, which shows how well the items relate to each other and to the tool as a whole - look for an alpha of around 0.8 or above
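A minimal sketch of how Cronbach's alpha can be computed by hand, using the standard formula alpha = k/(k-1) × (1 − sum of item variances / variance of total scores). The function name and the questionnaire data are hypothetical; in practice a stats package would do this for you.

```python
from statistics import variance

def cronbach_alpha(responses):
    """responses: one list per participant, one score per questionnaire item."""
    k = len(responses[0])                 # number of items
    items = list(zip(*responses))         # regroup the scores item by item
    sum_item_variances = sum(variance(item) for item in items)
    total_variance = variance([sum(person) for person in responses])
    return (k / (k - 1)) * (1 - sum_item_variances / total_variance)

# Hypothetical 1-5 Likert answers: 5 participants x 3 items
responses = [
    [4, 5, 4],
    [2, 2, 3],
    [5, 4, 5],
    [1, 2, 1],
    [3, 3, 3],
]

alpha = cronbach_alpha(responses)
print(round(alpha, 2))
```

With this made-up data the items rise and fall together across participants, so alpha lands above the 0.8 rule of thumb.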

reliability versus validity

sameness (repeatable) versus accuracy

for something to be valid it must be reliable

but being reliable doesn’t make it valid

scatterplots?

used often for evaluating reliability

correlation coefficient- r value
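As a rough illustration of the r value, here is Pearson's correlation computed from scratch for a test-retest check. The scores are invented and `pearson_r` is a hypothetical helper name.

```python
from math import sqrt

def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length lists of scores."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    # How much the two sets of scores vary together
    co_deviation = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    ss_x = sum((a - mean_x) ** 2 for a in x)
    ss_y = sum((b - mean_y) ** 2 for b in y)
    return co_deviation / sqrt(ss_x * ss_y)

# Hypothetical scores for the same five ppants at time 1 and time 2
time1 = [10, 12, 15, 18, 20]
time2 = [11, 12, 14, 19, 21]

print(round(pearson_r(time1, time2), 2))
```

An r close to 1 means the points on the scatterplot sit near a straight upward line, which is what good test-retest reliability looks like.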

what is criterion validity?

are values produced by your measure associated with other behaviours related to the construct

can be assessed through known-group paradigms, allows you to test validity using groups in which the construct of interest has already been established

convergent validity?

your tool corresponds with other tools

discriminant validity?

ensures that a test or measure designed to assess a specific construct (e.g., intelligence) does not unintentionally correlate with measures of conceptually distinct constructs (e.g., personality).

for which type of validity do we need to collect empirical evidence?

criterion validity- check that what we are measuring is associated with behaviours related to the construct

check questions 9th march

what is a variable?

something that varies (has at least two levels or values)

measured variable (DV) and manipulated variable (IV)

conceptual variable to operational definition (what's being measured/manipulated here)

three types of claims that can be made

frequency claim- eg a percentage - describes a particular level or degree of a single variable - ONLY ONE MEASURED VARIABLE

association- eg playing a musical instrument is linked to better cognition - TWO OR MORE MEASURED VARIABLES - argues one level of a variable is likely to be associated with a particular level of another variable - VERBS LIKE ASSOCIATE AND CORRELATE

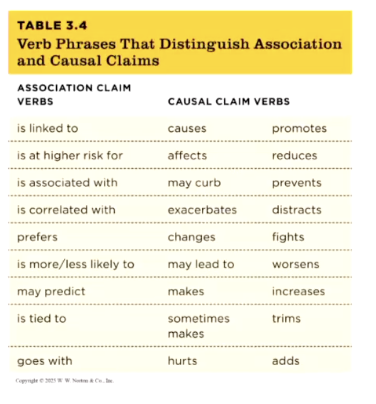

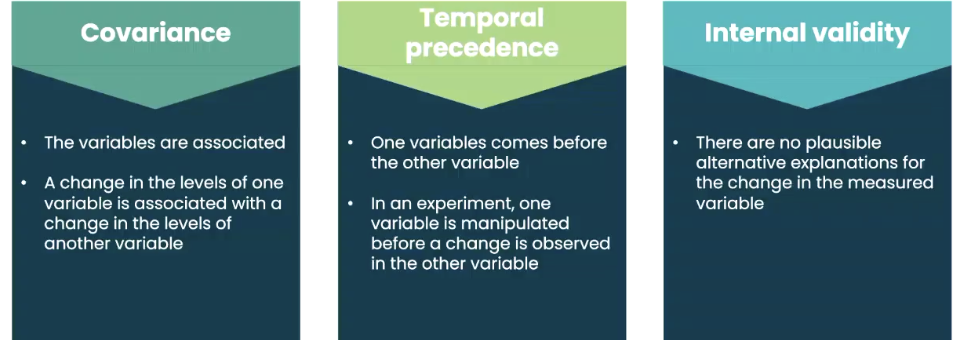

causal- one variable causes another to change, eg family meals curb eating disorders - SUPPORTED BY EXPERIMENTS- HAS A MANIPULATED VARIABLE AND A MEASURED VARIABLE

verbs for causal claims

27:42 march 9th

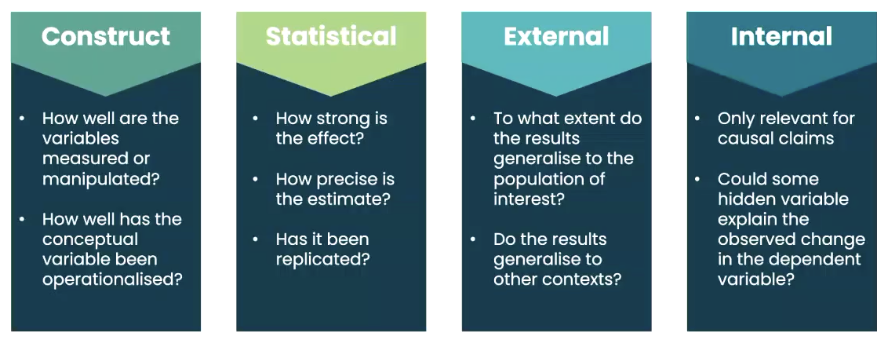

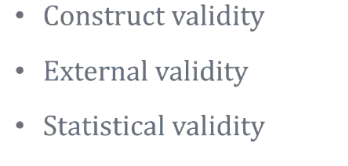

what are the big four validities?

interrogating frequency claims

construct validity

external validity / generalisability

statistical validity

interrogating association claims

construct validity

statistical validity

external validity

looking at precision of the estimate, the correlation coefficient and generalisability

interrogating causal claims

Experiments can support causal claims!

when are causal claims a mistake? (example)

what are the other validities to interrogate in causal claims?

interrogating the 3 types of claim using the big 4 validities

which type of validity is most important?

experiment- internal validity >

association claim - external validity <

whats the validity most important to interrogate for every study?

construct

what sort of tests can we do to increase construct validity?

eg to see how much sleep people get, compare when the phone was put down at night with when it was first picked up in the morning

how do we check external validity?

representative sample- is sample unrepresentative or biased

does point estimate generalise to whole pop?

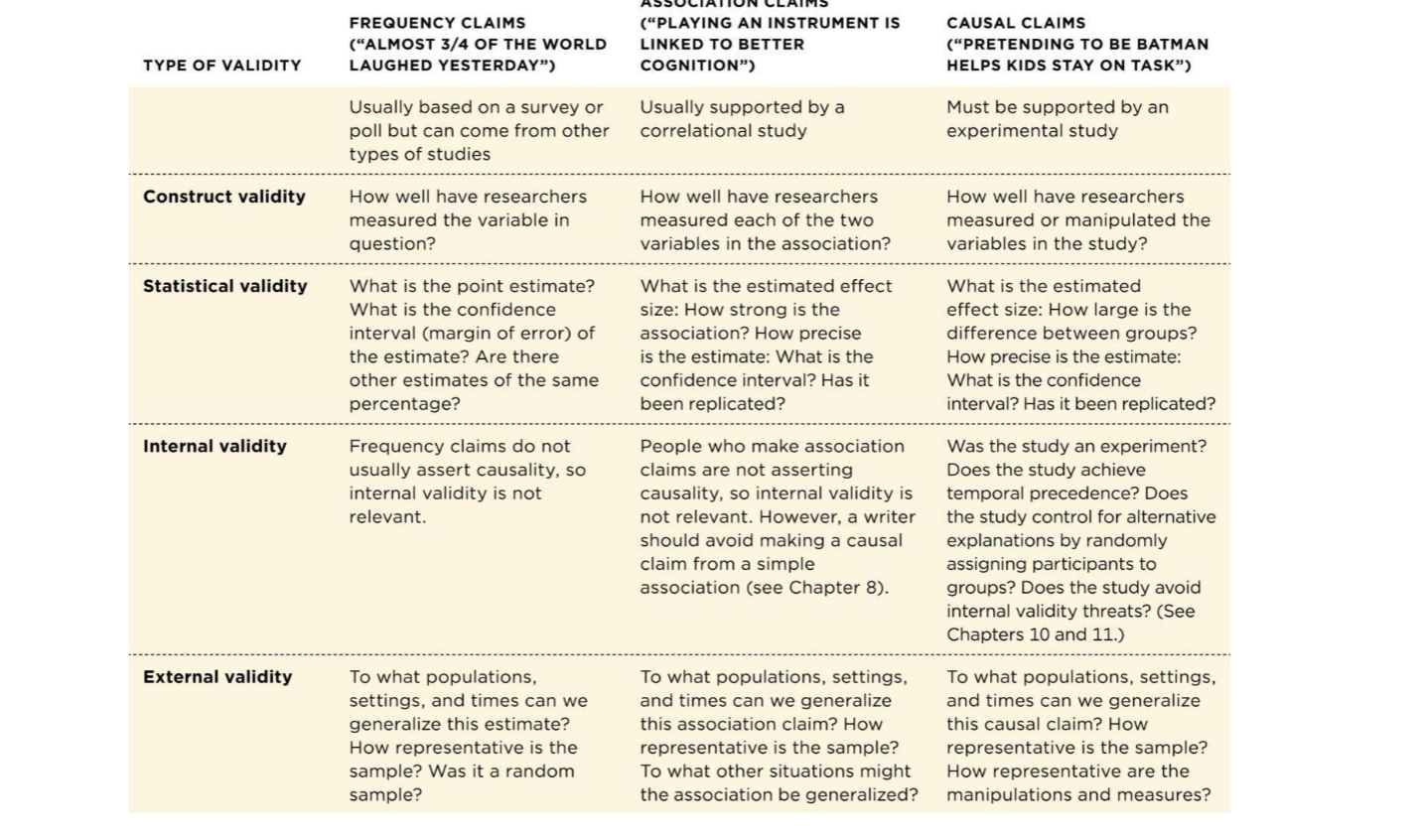

different question formats

how to write a well worded question

be careful of leading people on (good thing to investigate is the survey used!)

double barrelled questions

don’t use negatively worded questions - write them as simply and clearly as possible (eg the confusing double-negative poll question about the Nazis)

priming participants - order that you give people questions may prime ppants to diff answers

what are 3 different types of shortcuts ppants use when bored to answer questions

response sets (nondifferentiation)- answering every question the same way, just hitting buttons

acquiescence (bias to agree)- agreeing with everything because bored

fence sitting - hitting the middle option lots

they're a threat to construct validity, as the survey may not measure the variable of interest

what might affect accurate responses?

social desirability bias- make responses anonymous

sometimes we don’t know why we do these things

self-reporting memories of events

rating consumer products - price and prestige are a factor

2 example of observational research

mehl - recorded 30 second sample every 12.5 minutes

no sex difference in words spoken per day

campos- observed families in evening to measure emotional tone and what parents and children talked about at dinner

parents talk about health benefits, children talk about how much they disliked the food

example of observer bias

when researchers see what they expect to see

Langer and Abelson asked therapists to watch a man talking about his feelings and work experiences

analytic therapists who were told the man was a job applicant rated him as attractive and innovative

analytic therapists who were told the man was a patient rated him as defensive and frightened

HOWEVER BEHAVIOUR THERAPISTS WERE UNAFFECTED BY THE LABEL

construct validity of observations?

threatened by observer bias, observer effects and reactivity

observer effects?

observers inadvertently change the behaviour of those they are observing to match their expectations

students given rats, half told the rats are smart, half told the rats are just average

the rats the observers thought were smarter learnt the maze quicker

whats blinding

experimenters don't know who is in which condition

whats reactivity?

people behave differently when they know they are being watched

solution 1- blend in

solution 2- wait it out (eg Jane Goodall)

3- measure the consequences of behaviour

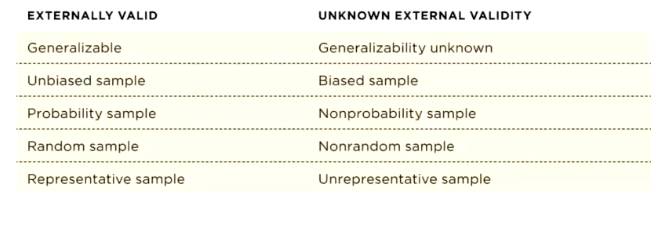

externally valid versus unknown external validity

also for y2, it doesn’t matter if not generalisable to EVERYONE, pop of interest is more important

biased sample vs representative sample

biased- whenever we don't use a probability sampling technique; may be caused by convenience sampling (people who are easy to contact) or self-selection (volunteer sampling)

representative sampling:

simple random sampling (make a list of everyone in the population, then select ppants with random sampling software)

systematic sampling (every nth person)

cluster sampling (groups of people already exist in your population, eg randomly select a number of prisons and include everyone in them)

multistage sampling (randomly sample prisons, then randomly sample prisoners from those prisons)

stratified random sampling (represent each group in proportion to its real-life size)

oversampling (eg there are very small numbers of trans people, so deliberately sample more of them than their share of the population, then weight the results back to the true proportions)
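A small sketch of three of the probability sampling techniques, assuming a made-up sampling frame of 100 people in two groups. The function names and the frame are hypothetical; the point is only how the selection rule differs between techniques.

```python
import random

rng = random.Random(42)  # fixed seed so the sketch is repeatable

# Hypothetical sampling frame: 100 people, 80 in group "A" and 20 in group "B"
population = [{"id": i, "group": "A" if i < 80 else "B"} for i in range(100)]

def simple_random_sample(frame, n):
    """Every person in the frame has an equal chance of being selected."""
    return rng.sample(frame, n)

def systematic_sample(frame, step):
    """Pick a random starting point, then take every step-th person."""
    start = rng.randrange(step)
    return frame[start::step]

def stratified_sample(frame, n):
    """Randomly sample within each group, in proportion to the group's size."""
    sample = []
    for group in sorted({person["group"] for person in frame}):
        members = [person for person in frame if person["group"] == group]
        quota = round(n * len(members) / len(frame))
        sample.extend(rng.sample(members, quota))
    return sample

print(len(simple_random_sample(population, 10)))
print([p["id"] for p in systematic_sample(population, 10)])
print([p["group"] for p in stratified_sample(population, 10)])
```

The stratified sample always contains 8 people from group A and 2 from group B here, whereas a simple random sample of 10 only matches those proportions on average. With group sizes that do not divide evenly, the rounded quotas may not add up to exactly n.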

random sampling versus random assignment

random sampling, increases external validity

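random assignment, increases internal validity as ppants are placed into conditions at random, so the groups are comparable at the start (ABBA orders are counterbalancing, a separate technique)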
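Random assignment can be sketched as shuffling the ppants you already have and dealing them into conditions. The helper name, participant IDs and condition labels below are all hypothetical.

```python
import random

def randomly_assign(participants, conditions, seed=None):
    """Shuffle the participants, then deal them into the conditions in turn."""
    rng = random.Random(seed)
    shuffled = list(participants)   # copy so the original list is left untouched
    rng.shuffle(shuffled)
    groups = {condition: [] for condition in conditions}
    for i, person in enumerate(shuffled):
        groups[conditions[i % len(conditions)]].append(person)
    return groups

# Hypothetical ppants and three made-up condition labels
participants = [f"P{i}" for i in range(1, 13)]
conditions = ["punch_with_face", "punch_only", "sit_quietly"]

groups = randomly_assign(participants, conditions, seed=1)
for condition, members in groups.items():
    print(condition, members)
```

Random sampling decides who gets into the study; random assignment, as here, decides which condition each person ends up in once they are in it.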

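unrepresentative (non-probability) sampling techniques?

convenience sampling- whoever is available

purposive sampling- only recruit certain kinds of people (snowball sampling is a variant where ppants recommend other ppants)

quota sampling - set a target number for each group (eg X men and X women) and fill it non-randomly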

when is external validity not a priority?

nonprobability samples in the real world - ask whether the way the sample is biased is relevant to what is being measured

what does a larger sample increase?

statistical validity
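One way to see why a larger sample helps: the margin of error around an estimate shrinks as n grows. This sketch uses the "62% showed distress" figure from the notes as the point estimate and assumes the usual normal approximation for a 95% interval; the sample sizes are made up.

```python
from math import sqrt

def margin_of_error(proportion, n, z=1.96):
    """Approximate 95% margin of error for a sample proportion (normal approximation)."""
    return z * sqrt(proportion * (1 - proportion) / n)

# Same point estimate (62%), three hypothetical sample sizes
for n in (50, 200, 800):
    print(n, round(margin_of_error(0.62, n), 3))
```

Quadrupling the sample size halves the margin of error, so the estimate becomes more precise but with diminishing returns.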