Exam 2 - Module 7 (Autoencoders, Dimension Reduction, Clustering)

10 Terms

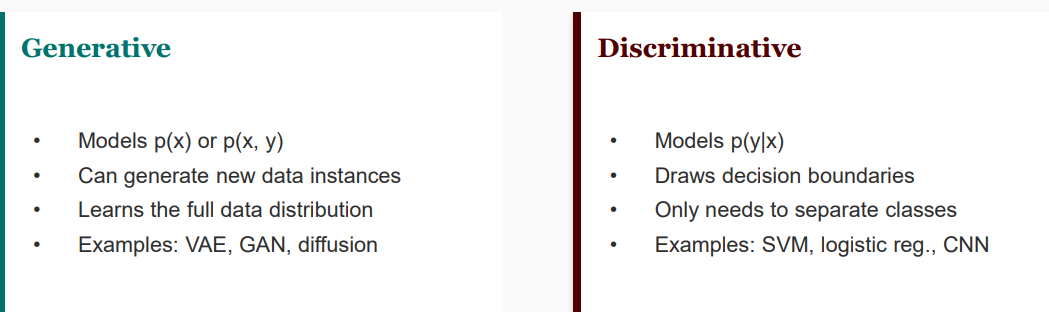

Generative vs Discriminative

Autoencoders

unsupervised neural network that learns to compress data (encoder) into a latent representation and then reconstruct it (decoder) back to its original form
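A minimal sketch of the encoder/decoder idea, assuming a purely linear autoencoder trained with plain gradient descent in NumPy. The toy data, the names `W_enc` and `W_dec`, and the hyperparameters are illustrative assumptions, not part of the card.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy data (assumed): 200 samples in 8 dimensions driven by 2 hidden factors.
factors = rng.normal(size=(200, 2))
mixing = rng.normal(size=(2, 8))
X = factors @ mixing + 0.05 * rng.normal(size=(200, 8))
X = (X - X.mean(axis=0)) / X.std(axis=0)  # standardize each feature

n_features, latent_dim = X.shape[1], 2
W_enc = 0.1 * rng.normal(size=(n_features, latent_dim))  # encoder weights
W_dec = 0.1 * rng.normal(size=(latent_dim, n_features))  # decoder weights

learning_rate = 0.05
losses = []
for step in range(2000):
    Z = X @ W_enc        # encode: compress into the latent representation
    X_hat = Z @ W_dec    # decode: reconstruct the input
    residual = X_hat - X
    losses.append(np.mean(residual ** 2))  # reconstruction (MSE) loss

    # Gradients of the MSE loss with respect to both weight matrices.
    grad_out = 2.0 * residual / residual.size
    grad_dec = Z.T @ grad_out
    grad_enc = X.T @ (grad_out @ W_dec.T)
    W_dec -= learning_rate * grad_dec
    W_enc -= learning_rate * grad_enc

print(f"latent shape: {Z.shape}, first loss: {losses[0]:.3f}, last loss: {losses[-1]:.3f}")
```

No labels are used anywhere: the input itself is the training target, which is why the method counts as unsupervised. Real autoencoders usually add nonlinear layers on both sides.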

Latent space

lower-dimensional representation of the input data

A good latent space and AE Tradeoff

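A good latent space is compressed (latent dimension d ≪ input dimension n) and smooth. Standard AEs can have "gaps" in the latent space that make it difficult to sample new data

The latent dimension size controls the information-detail tradeoff: a smaller d compresses more but loses detail, while a larger d preserves more detail but compresses less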
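The tradeoff can be illustrated by sweeping the latent size d and measuring reconstruction error. This sketch swaps in PCA (truncated SVD) as a stand-in for a linear autoencoder, since it gives the best rank-d linear reconstruction without any training. The random data and the helper `reconstruction_error` are assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(1)

# Toy data (assumed): 100 samples with n = 6 correlated features, centered.
X = rng.normal(size=(100, 6)) @ rng.normal(size=(6, 6))
X = X - X.mean(axis=0)

# SVD of the centered data; keeping the top d components is PCA.
U, S, Vt = np.linalg.svd(X, full_matrices=False)

def reconstruction_error(d):
    """Mean squared error after compressing to d dimensions and decoding."""
    X_hat = (U[:, :d] * S[:d]) @ Vt[:d]
    return float(np.mean((X - X_hat) ** 2))

errors = {d: reconstruction_error(d) for d in range(1, X.shape[1] + 1)}
for d, err in errors.items():
    print(f"d = {d}: reconstruction MSE = {err:.4f}")
```

The error never increases as d grows and reaches (numerically) zero at d = n, where nothing is compressed at all.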

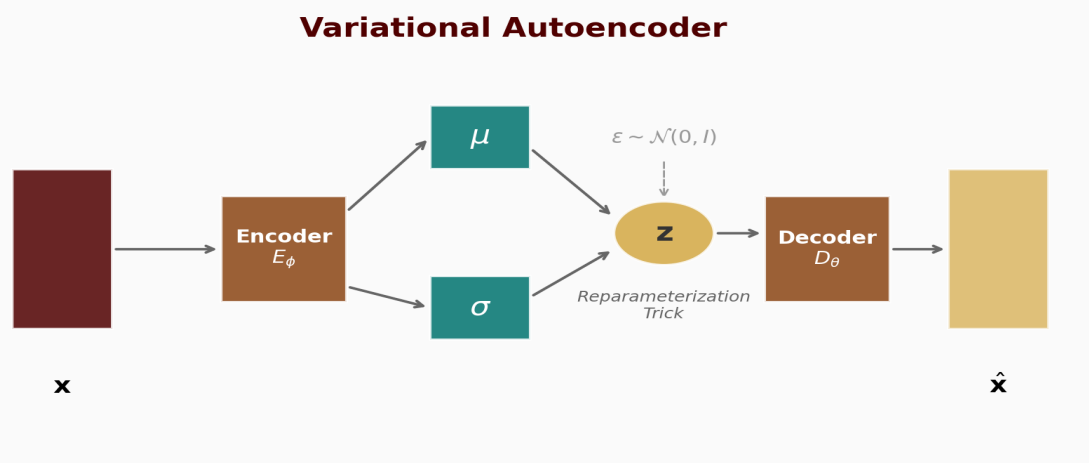

Variational Autoencoders (VAE)

Instead of mapping each input to a single point, the encoder maps it to a distribution (a region) in latent space, which makes the latent space smooth and continuous

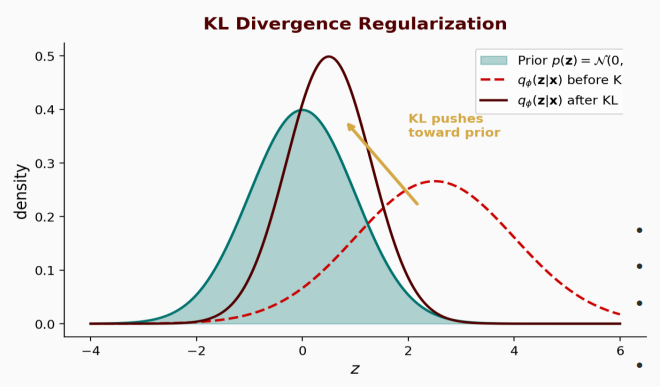

VAE Loss Function

balances reconstruction with regularization (pushing the latent distribution toward a simple prior like N(0,I))
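A hedged sketch of how the two terms might be computed, assuming a squared-error reconstruction term and a diagonal Gaussian encoder with prior N(0, I), for which the KL term has a closed form. The function name `vae_loss` and the example arrays are hypothetical.

```python
import numpy as np

def vae_loss(x, x_hat, mu, log_var):
    """Return (total, reconstruction, kl), each averaged over the batch."""
    # Reconstruction term: squared error summed over features.
    recon = np.mean(np.sum((x - x_hat) ** 2, axis=1))
    # Regularization term: closed-form KL( N(mu, diag(exp(log_var))) || N(0, I) ).
    kl = np.mean(-0.5 * np.sum(1.0 + log_var - mu ** 2 - np.exp(log_var), axis=1))
    return recon + kl, recon, kl

x = np.array([[1.0, 2.0], [0.0, -1.0]])
x_hat = np.array([[0.5, 2.0], [0.0, -0.5]])
mu = np.zeros((2, 3))       # encoder means for a 3-dimensional latent space
log_var = np.zeros((2, 3))  # log-variances; 0 means unit variance

total, recon, kl = vae_loss(x, x_hat, mu, log_var)
print(total, recon, kl)
```

When the encoder output already matches the prior (mean 0, variance 1), the KL term vanishes and only the reconstruction error remains. Moving `mu` away from 0 raises the KL penalty.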

KL Divergence

quantifies the "information loss" and is a measure of how one probability distribution differs from a reference probability distribution
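For discrete distributions the definition is KL(P‖Q) = Σ p_i · log(p_i / q_i). A small sketch, assuming both inputs are valid probability vectors and that q is nonzero wherever p is; the function name `kl_divergence` is illustrative.

```python
import numpy as np

def kl_divergence(p, q):
    """KL(P || Q) in nats for two discrete distributions."""
    p = np.asarray(p, dtype=float)
    q = np.asarray(q, dtype=float)
    mask = p > 0  # terms with p_i = 0 contribute nothing to the sum
    return float(np.sum(p[mask] * np.log(p[mask] / q[mask])))

p = [0.5, 0.5]
q = [0.9, 0.1]
print(kl_divergence(p, p))  # identical distributions
print(kl_divergence(p, q))  # P measured against reference Q
print(kl_divergence(q, p))  # roles swapped
```

The divergence is zero only when the distributions match, and swapping the arguments gives a different value, so KL divergence is not a symmetric distance.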

Reparameterization Trick
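The card gives no text for this term, so the following is a sketch of the standard formulation rather than the course's own wording: sample noise ε ~ N(0, I) separately and compute z = μ + σ · ε, so the randomness sits outside the path from the encoder outputs to z and gradients can flow through μ and σ. The function name and test values are assumptions.

```python
import numpy as np

def reparameterize(mu, log_var, rng):
    """Draw z ~ N(mu, sigma^2) as a deterministic function of mu, log_var, and noise."""
    eps = rng.standard_normal(mu.shape)  # noise independent of the encoder
    sigma = np.exp(0.5 * log_var)        # convert log-variance to standard deviation
    return mu + sigma * eps

rng = np.random.default_rng(0)
mu = np.full((10000, 2), 3.0)
log_var = np.full((10000, 2), np.log(0.25))  # variance 0.25, so sigma = 0.5

z = reparameterize(mu, log_var, rng)
print(z.mean(axis=0), z.std(axis=0))
```

The sample mean and standard deviation should land close to 3.0 and 0.5, confirming that the shifted and scaled noise follows the intended distribution.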

VAE Loss Tradeoff

Stronger regularization creates highly disentangled (interpretable) features but results in blurrier reconstructions
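One common way to write this tradeoff is with a weight β on the KL term (the β-VAE formulation, named here as an assumption since the card does not state it). The symbols q(z|x) for the encoder, p(x|z) for the decoder, and p(z) for the prior are standard notation rather than the card's own.

```latex
\mathcal{L}_{\beta}(x)
  = \underbrace{\mathbb{E}_{q(z \mid x)}\big[-\log p(x \mid z)\big]}_{\text{reconstruction}}
  + \beta \, \underbrace{D_{\mathrm{KL}}\big(q(z \mid x) \,\|\, p(z)\big)}_{\text{regularization}},
  \qquad p(z) = \mathcal{N}(0, I)
```

With β = 1 this reduces to the usual VAE loss. Raising β pushes the latent distribution harder toward the prior, which tends to favor disentangled features at the cost of reconstruction sharpness.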

Generative Adversarial Networks (GANs)