Module 6 - Ethical Decision Making



Rational Decision Making

logical, step-by-step process to choose the highest-value option using all available information

the "ideal" approach.

2 elements:

positive valence

a formula for best choice

a systematic process

steps:

(1) Identify problem/opportunity

(2) Choose best decision process

(3) Develop possible choices

(4) Select highest-value option

(5) Implement

(6) Evaluate.
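Step 4 ("select highest-value option") can be sketched as an expected-value comparison. This is only an illustration: it assumes the "formula for best choice" means weighting each outcome's payoff by its probability, and the alternatives, probabilities, and payoffs below are hypothetical.

```python
# Hypothetical alternatives: each maps to a list of (probability, payoff) outcomes.
alternatives = {
    "expand_product_line": [(0.6, 120), (0.4, -40)],
    "enter_new_market": [(0.3, 300), (0.7, -60)],
    "keep_current_plan": [(1.0, 40)],
}


def expected_value(outcomes):
    """Sum of probability-weighted payoffs for one alternative."""
    return sum(probability * payoff for probability, payoff in outcomes)


def select_highest_value(options):
    """Return the name of the alternative with the highest expected value."""
    return max(options, key=lambda name: expected_value(options[name]))


best = select_highest_value(alternatives)
print(best, expected_value(alternatives[best]))
```

In practice the probabilities and payoffs are rarely known this precisely, which is the gap between theory and reality that the next card describes.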

Theory vs. Reality in Rational Decision Making

Theory:

goals are clear

alternatives can be calculated

decision-maker picks maximum-payoff option

Reality:

goals conflict

info-processing is limited

alternatives evaluated one-by-one

decisions anchor to an implicit favourite

unconsciously selected early

using it as a benchmark against other options

Environment changes fast so info goes stale

Time pressure prevents thorough analysis.

Bounded Rationality

People want to be rational but cognitive limits get in the way

can only interpret and act on a fraction of available information

Leads to satisficing

"good enough" option rather than the optimal

Example: Job hunting

accept first reasonable offer instead of comparing every possible job on the market
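The job-hunting example can be sketched in code to contrast satisficing with optimizing. The offers, salaries, and the "good enough" threshold are hypothetical, and salary stands in for overall value only to keep the sketch short.

```python
# Hypothetical job offers in the order they arrive: (employer, annual salary).
offers = [("Firm A", 52000), ("Firm B", 61000), ("Firm C", 75000), ("Firm D", 68000)]


def satisfice(offers, good_enough):
    """Accept the first offer that meets the threshold, then stop searching."""
    for employer, salary in offers:
        if salary >= good_enough:
            return employer
    return None  # no offer was acceptable


def optimize(offers):
    """Compare every offer and return the best one (the 'ideal' rational approach)."""
    return max(offers, key=lambda offer: offer[1])[0]


print(satisfice(offers, good_enough=60000))  # stops at the first acceptable offer
print(optimize(offers))                      # requires seeing every offer first
```

The satisficer never sees the later, better offer, but also spends far less effort searching.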

What is Intuitive Decision Making?

non-conscious, fast

built from distilled experience

Holistic

whole picture at once

Emotionally charged ("gut feeling")

Uses action scripts

pattern recognition from past experience. Not all emotional signals are intuition; some are just reactions.

What are System 1 and System 2 thinking? (Kahneman, 2011)

System 1 (Fast):

Automatic, unconscious, effortless, emotional

Storyteller: creates coherence and suppresses ambiguity

Focuses on concrete, immediate payoffs

Used for ~95% of decisions

Resistant to change regardless of evidence

System 2 (Slow):

Conscious, effortful, logical, deliberate

Steps: get facts → weigh & decide → act → follow up

Acts as a "spokesman" that endorses System 1 outputs

Scarce cognitive resource that depletes with use

Example: Employees know they should save for retirement (System 2 knows) but rarely sign up for an RRSP (System 1 wins).

How should System 1 and System 2 work together in decision making?

System 1 emotional responses are inputs, not final answers — System 2 should weigh them against other factors. Example: An investment opportunity triggers fear (System 1 signal). System 2 should examine whether the risk is real, while also considering long-term strategic value that System 1 might undervalue. Neither system is inherently superior — good decisions use both.

What is the Social Intuitionist Model? (Jonathan Haidt)

Challenges purely cognitive approaches to ethics. We make quick moral judgments first, then use logic to justify them after. Intuition and social norms drive ethical determinations, not deliberate reasoning. The Elephant Metaphor: Rider = rational mind (System 2), Elephant = emotional mind (System 1), Path = environmental factors. The rider looks in charge, but when elephant and rider disagree — the elephant wins. Implication: You can't reason someone out of a moral position they didn't reason themselves into.

What is Bounded Ethicality? (Bazerman, HBR 2020)

Systematic cognitive barriers that prevent us from being as ethical as we wish to be. Just as bounded rationality limits smart decisions, bounded ethicality limits moral ones. Goal: aim for maximum sustainable goodness — the realistic level of value creation we can achieve. Establish a "North Star" — we'll never reach it, but it guides us toward creating more good.

Dual Process Approach to Ethical Decision Making (Johnson, 2018)

Neuroimaging shows ethics involves multiple brain regions — not just one. Ethical thinking activates both cognitive and emotional brain areas. Both logic and emotion are essential to good ethical choices. Strategy 1 (engages System 2): Compare options side-by-side instead of evaluating one at a time — reduces bias. Strategy 2 — Rawls' Veil of Ignorance: Make decisions as if you don't know where you'd land in the outcome — forces fairness. Example: cutting a pizza when you're last to take a slice — you'll cut equal slices.
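The pizza example can be sketched as a "maximize the worst outcome" rule. This assumes the veil of ignorance is formalized as a maximin choice, which is one common reading and not the only one. The candidate splits are hypothetical.

```python
# Hypothetical ways to split one pizza among three people (shares sum to 1).
splits = {
    "equal": [1 / 3, 1 / 3, 1 / 3],
    "one_big_slice": [0.60, 0.25, 0.15],
    "two_big_slices": [0.45, 0.45, 0.10],
}


def worst_share(shares):
    """The share you end up with if you are last to pick."""
    return min(shares)


def choose_behind_veil(options):
    """Pick the split whose worst share is largest (maximin rule)."""
    return max(options, key=lambda name: worst_share(options[name]))


print(choose_behind_veil(splits))
```

Because the cutter may end up with the smallest slice, the equal split is the safest choice under this rule.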

What is the core argument of 'Leaders as Decision Architects'? (HBR, 2015)

People make poor decisions not because they're dumb but because of how the brain is wired — cognitive biases. Two root causes: (1) Insufficient ability/motivation — failure to take action at all. (2) Cognitive biases — taking action but introducing systematic errors. Examples of bias effects: underestimating project timelines, overconfidence in strategy, not choosing optimal retirement benefits. Solution: don't try to rewire the brain — change the environment in which decisions are made.

What is Choice Architecture and what are Nudges? (HBR, 2015)

Choice Architecture: carefully structuring how information and options are presented to improve decisions without removing freedom.

Nudges: small environmental changes that guide people toward better choices.

Set defaults: opt-out vs. opt-in (e.g., auto-enrolling employees in retirement plans at 6%)

Arouse emotions: new employees reflect on strengths on day 1 → emotional bond → better retention

Simplify processes: centralized hospital records → doctors more motivated to update info

Harness biases: "man on the bench effect", where a strong talent pipeline reminds underperformers they could lose their jobs → motivates improvement (loss aversion)

Google cafeteria nudge: signs about plate size → proportion using small plates increased 50%

How can leaders engage System 2 thinking in organizations? (HBR, 2015)

Use joint evaluations: compare candidates/options against each other, not separately — reduces bias. Create reflection opportunities: workers who spent 15 min writing what they learned performed 22.8% better; those who also explained to a peer performed 25% better. Use planning prompts: structured prompts before acting. Inspire broader thinking and consideration of disconfirming evidence.

What is the Status-Quo Trap and how do you counter it? (Hammond et al., 1998)

Trap: Strong bias toward doing nothing and sticking with the current situation. The status quo feels psychologically safer, with less risk of blame. More choices = stronger pull of the status quo. "I'll rethink it later", but later never comes.

Counters:

Ask whether you'd choose this option if it weren't already the status quo.

Don't treat the status quo as your only alternative.

Don't exaggerate the cost of switching.

Remember that the desirability of the status quo changes over time.

What is the Confirming-Evidence (Confirmation) Bias and how do you counter it? (Hammond et al., 1998)

Trap: Seeking and over-weighting info that supports what you already believe; dismissing contradictory info. Affects both where we gather evidence and how we interpret it. We fail to search impartially for evidence.

Counters:

Examine all evidence with equal rigor.

Assign a devil's advocate.

Don't ask leading questions of advisers.

If an adviser always agrees with you, find a new adviser.

Be honest about your own motives.

What is the Anchoring Bias and how do you counter it? (Hammond et al., 1998)

Trap: Over-relying on the first piece of information received, so that all subsequent judgments are pulled toward that anchor. Initial impressions, estimates, or data dominate thinking. Used strategically by negotiators.

Example: A job candidate anchors a salary negotiation by naming a high initial number, and the employer's counteroffer is pulled upward.

Counters:

View problems from multiple perspectives before settling.

Think independently before consulting others.

Seek diverse opinions.

Avoid anchoring your own advisers: don't share your estimate with them before they give theirs.

Start estimates by considering the extreme values (the high and low ends of the range).

What is the Sunk-Cost Trap (Escalation of Commitment) and how do you counter it? (Hammond et al., 1998)

Trap: Continuing to invest in a losing decision because of resources already spent ("throwing good money after bad"). Unwillingness to admit a mistake drives escalation. Four causes: self-justification, self-enhancement, prospect theory, sunk-cost effect.

Example: A bank keeps lending to a struggling business because of prior loans; a new banker with no prior involvement can evaluate the decision more objectively.

Counters:

Bring in someone uninvolved with earlier decisions.

Create a stop-loss in advance.

Seek factual and social feedback.

Don't cultivate a failure-fearing culture, because it makes people double down on bad decisions.
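The stop-loss counter can be sketched as a rule fixed before the project starts. This is a minimal illustration under stated assumptions: the function name, parameters, and figures are hypothetical, and the stop-loss is modelled as a simple cap on total money already spent.

```python
def should_continue(spent_so_far, stop_loss_limit, expected_future_benefit, expected_future_cost):
    """Decide whether to keep investing in a project.

    spent_so_far is checked only against the limit agreed in advance.
    It plays no part in the forward-looking comparison, because money
    already spent cannot be recovered by either choice.
    """
    if spent_so_far >= stop_loss_limit:
        return False  # pre-committed exit point reached
    return expected_future_benefit > expected_future_cost


# Hypothetical figures (in thousands of dollars).
print(should_continue(500, 400, 120, 80))  # limit already exceeded
print(should_continue(200, 400, 50, 80))   # future costs outweigh future benefits
print(should_continue(200, 400, 120, 80))  # still worth continuing
```

Setting the limit before any money is spent is the point of the counter: it is agreed while nobody yet has a past decision to justify.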

What is the Framing Trap and how do you counter it? (Hammond et al., 1998)

Trap: The way a problem is worded fundamentally changes the choice made. People are risk-averse when a problem is framed around gains and risk-seeking when it is framed around avoiding losses. A frame can also introduce anchors or status-quo biases.

Example: "90% fat-free" vs. "10% fat" state the same fact, but the first framing is perceived as healthier.

Counters:

Never accept the initial frame; restate the problem multiple ways.

Frame problems in neutral terms combining both gains and losses.

Challenge advisers' frames.

What is the Overconfidence Bias and the Dunning-Kruger Effect?

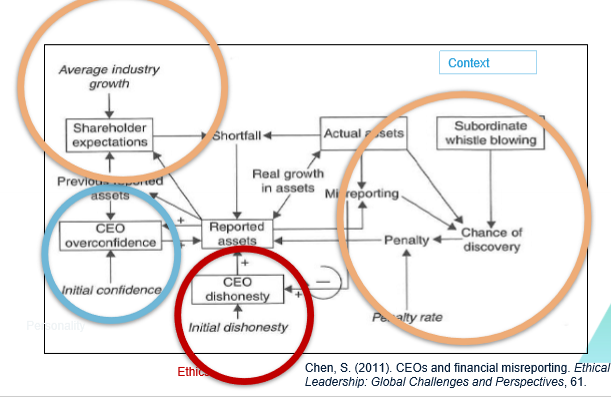

Overconfidence Bias: Believing too much in our own decision-making ability, especially outside our expertise. We overestimate our skill relative to others. We take credit for positive outcomes without acknowledging chance. Dunning-Kruger Effect: People with limited knowledge in a domain greatly overestimate their competence. Experts tend to underestimate themselves; novices overestimate. Example (CEO misreporting): Overconfident CEOs inflate reported assets expecting performance will catch up — interacts with initial dishonesty and chance of discovery (Chen, 2011). Counter: Always consider extreme values (high/low range) before settling on an estimate.

What is Availability Bias (Recency Error)?

Trap: Overweighting information that is most easily recalled. Recent events feel more common or likely than they are. Vivid/intense events are recalled more easily and weighted more heavily. Example: After seeing a plane crash in the news, people fear flying even though statistics haven't changed. Or a manager rates an employee based on their last two weeks, ignoring consistent performance all year. Counter: Ask "Is this actually common, or just memorable?"

How should leaders make decisions when the future is unpredictable? (Empson & Howard-Grenville, HBR 2024)

Context: Organizations disrupted by politics, climate, AI, hybrid work. Clarify what matters most — provide clarity of purpose. Question old assumptions — use disruption to reevaluate whether current values/practices still serve you. Introspect and reflect — experiment, challenge assumptions that no longer work. "Hold fast and stay true" — nautical command during a storm: grip something secure, watch the compass. In chaos, focus on what stays constant. Collaborate for collective capacity. All storms eventually pass — don't feel lost at sea.

What are Keystone Habits and why do they matter? (Duhigg, 2012; Paul O'Neill / Alcoa)

A keystone habit is a foundational behavior that triggers a cascade of other positive changes. Once established, it alters how you think, feel, and act — spilling over into other areas. Operates through System 1: becomes automatic and cue-triggered over time. Alcoa case (Paul O'Neill): O'Neill made worker safety the single keystone habit. Every unit had to report injuries within 24 hrs with a prevention plan. This forced communication, accountability, and process improvement company-wide. Result: safety improved dramatically and stock performance surged. Examples: Regular exercise → better eating, sleep, stress management. Making the bed → order, discipline, productivity. Team check-ins → trust, accountability, open communication.

How do automatic habits relate to System 1 thinking? (Wood & Rünger, 2016)

Habit formation transfers effortful System 2 actions into efficient System 1 routines — freeing mental resources. Brain links cues (time, place, emotion) to responses through repetition. Control shifts from prefrontal cortex to basal ganglia (automaticity). Risk: habits can perpetuate poor routines if left unchecked. Organizational example: Routinely debriefing after failure (rather than assigning blame) becomes a default cultural response — embedded through habit, not policy.

Why don't we think deeply about everything? + The Unconscious Mind

Deep thinking is cognitively expensive and unpleasant. Brain studies: electrical patterns during deep thinking were most similar to when subjects held their hand in ice water. We physically cannot think deeply about everything — System 1 handles most decisions automatically. The Unconscious Mind (Rebel Brown, The Influential Leader): Over 95% of decisions are driven by the unconscious mind. Unconscious mind processes 11 million bits/sec; only ~126 bits/sec reach consciousness. Our beliefs filter what data reaches conscious awareness — change your beliefs, change your decisions.

How do you increase motivation to make ethical decisions?

Create ethically rewarding environments: raises, promotions, recognition for ethical behavior. Reduce the cost of moral action: policies that make it easy to report unethical behavior (psychological safety). Tap into moral emotions: sympathy, disgust, and guilt prompt action. Positive emotions (joy, happiness) make people more optimistic and more likely to help others and act on their moral values.