Data Science Underfitting & Overfitting

What does underfitting mean?

The model is too simple to learn the real pattern in the data, so it performs poorly on both the training set and the test set

Signs of underfitting:

training accuracy is low

test accuracy is low

training & test accuracy are usually close together

Causes of underfitting:

poor-quality data

the model has too few hidden layers or neurons

not enough epochs

How to fix underfitting:

add more hidden layers/neurons

add more epochs

dataset cleaning, scaling, feature selection
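
As one illustration of the "scaling" fix above, here is a minimal sketch of standardizing a single feature column to zero mean and unit variance. The helper name `standardize` is hypothetical, and standardization is only one of several common scaling choices.

```python
from statistics import mean, pstdev

def standardize(column):
    """Rescale a list of numbers to mean 0 and standard deviation 1."""
    mu = mean(column)
    sigma = pstdev(column)  # population standard deviation
    if sigma == 0:
        # A constant feature carries no information; map it to zeros.
        return [0.0 for _ in column]
    return [(x - mu) / sigma for x in column]

scaled = standardize([10.0, 20.0, 30.0])
print(scaled)
```

The middle value maps to 0 because it equals the mean; the other two land symmetrically on either side.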

What does overfitting mean?

The model is too complex and learns the training data too well, including noise and unimportant details, so it performs well on the training set but poorly on the test set

Signs of overfitting:

training accuracy is very high

test accuracy is much lower

there is a large gap between training and test accuracy
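
The signs listed for underfitting and overfitting can be turned into a rough check. This is only a sketch: the function name `diagnose_fit` and the thresholds `low` and `gap` are illustrative assumptions, not standard values.

```python
def diagnose_fit(train_acc, test_acc, low=0.70, gap=0.10):
    """Label a model's fit from its training and test accuracy.

    `low` and `gap` are assumed cut-offs chosen for illustration only.
    """
    if train_acc - test_acc > gap:
        return "overfitting"    # training accuracy is much higher than test accuracy
    if train_acc < low and test_acc < low:
        return "underfitting"   # both accuracies are low and close together
    return "reasonable fit"

print(diagnose_fit(0.99, 0.72))  # overfitting
print(diagnose_fit(0.61, 0.59))  # underfitting
print(diagnose_fit(0.91, 0.89))  # reasonable fit
```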

Causes of overfitting:

the dataset wasn’t randomized

the dataset was too small

too many epochs

the number of neurons was too large with too many layers
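
The first cause above, a dataset that wasn't randomized, can usually be avoided by shuffling before splitting into training and test sets. A minimal sketch, where `shuffled_split` and its parameters are hypothetical names chosen for this example:

```python
import random

def shuffled_split(rows, test_fraction=0.2, seed=0):
    """Shuffle the rows, then split them into (train, test) lists."""
    rows = list(rows)                   # copy so the caller's data is untouched
    random.Random(seed).shuffle(rows)   # seeded for reproducibility
    n_test = int(len(rows) * test_fraction)
    return rows[n_test:], rows[:n_test]

train, test = shuffled_split(range(10), test_fraction=0.2)
print(len(train), len(test))  # 8 2
```

Without the shuffle, a dataset sorted by class or by date would give the model a training set that looks different from the test set.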

How to fix overfitting:

reduce the number of neurons and hidden layers

generate more data

use dropout

What does early stopping do?

after each epoch, the model checks the validation loss. if the validation loss stops improving for several epochs, training stops and the model reverts to the best version seen so far
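
The early stopping rule can be sketched over a list of per-epoch validation losses. The function name `early_stopping` and the `patience` parameter (how many epochs without improvement are tolerated) are assumptions for this example; real training loops would also save the model weights at the best epoch.

```python
def early_stopping(val_losses, patience=3):
    """Return (stop_epoch, best_epoch) for a sequence of validation losses."""
    best_loss = float("inf")
    best_epoch = -1
    waited = 0  # epochs since the last improvement
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss, best_epoch, waited = loss, epoch, 0
        else:
            waited += 1
            if waited >= patience:
                return epoch, best_epoch  # stop and revert to best_epoch
    return len(val_losses) - 1, best_epoch  # never triggered; ran to the end

losses = [0.90, 0.70, 0.60, 0.62, 0.61, 0.65, 0.50]
print(early_stopping(losses, patience=3))  # (5, 2)
```

Training stops at epoch 5 and reverts to epoch 2, so the later loss of 0.50 is never reached. That is the trade-off of a small `patience`.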

What does dropout do?

during training, some neurons are randomly turned off to force the network to not depend too much on any single neuron

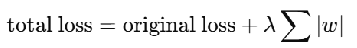
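
A minimal sketch of dropout on a list of activations. It uses the common "inverted dropout" variant, which also scales the surviving activations by 1/(1 - rate); that scaling is an assumption beyond the definition above. The name `dropout` and its parameters are illustrative.

```python
import random

def dropout(activations, rate=0.5, training=True, rng=random):
    """Zero each activation with probability `rate` during training.

    Survivors are scaled by 1 / (1 - rate) so the expected value is unchanged.
    At inference time (training=False) the activations pass through untouched.
    """
    if not 0.0 <= rate < 1.0:
        raise ValueError("rate must be in [0, 1)")
    if not training or rate == 0.0:
        return list(activations)
    keep = 1.0 - rate
    return [a / keep if rng.random() < keep else 0.0 for a in activations]

rng = random.Random(0)  # seeded so the run is repeatable
print(dropout([1.0, 2.0, 3.0, 4.0], rate=0.5, rng=rng))
print(dropout([1.0, 2.0], training=False))  # [1.0, 2.0]
```

Because a different random subset is turned off on every call, no single neuron can be relied on during training.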

What does L1 regularization do?

it penalizes the absolute value of weights, which pushes some weights to exactly zero and can make the model more sparse

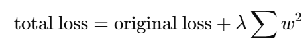
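
A small sketch of the L1 penalty, plus one common way it produces exact zeros. The soft-thresholding step is an assumption about how the penalty is applied, not something stated above, and `l1_penalty`, `soft_threshold`, `lam`, and `t` are illustrative names.

```python
def l1_penalty(weights, lam=0.01):
    """L1 term added to the loss: lam * sum of |w|."""
    return lam * sum(abs(w) for w in weights)

def soft_threshold(w, t):
    """Shrink w toward zero by t, snapping to exactly 0.0 when |w| <= t."""
    if w > t:
        return w - t
    if w < -t:
        return w + t
    return 0.0

weights = [0.5, -0.03, 2.0, 0.0]
print(l1_penalty(weights, lam=0.1))
print([soft_threshold(w, t=0.05) for w in weights])
```

The small weight -0.03 becomes exactly 0.0, while the larger weights only shrink by a fixed amount. This is the sparsity effect.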

What does L2 regularization do?

it penalizes the square of weights, so very large weights are punished more strongly
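
A minimal sketch of the L2 penalty and of one gradient step on the penalty alone, to show why large weights are punished more. The names `l2_penalty`, `l2_shrink`, `lam`, and `lr` are assumptions for this example.

```python
def l2_penalty(weights, lam=0.01):
    """L2 term added to the loss: lam * sum of w squared."""
    return lam * sum(w * w for w in weights)

def l2_shrink(w, lam, lr):
    """One gradient step on the penalty only: d/dw (lam * w^2) = 2 * lam * w."""
    return w - lr * 2.0 * lam * w

small, large = 0.1, 10.0
# The large weight is 100x bigger but its penalty is 10,000x bigger.
print(l2_penalty([small], lam=1.0), l2_penalty([large], lam=1.0))
# The shrink is proportional to the weight, so the large weight moves far more.
print(l2_shrink(small, lam=0.1, lr=0.5), l2_shrink(large, lam=0.1, lr=0.5))
```

Because the shrink is proportional to the weight itself, small weights are nudged only slightly and rarely reach exactly zero, unlike with L1.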