Regression Analysis

MKTG 3260

21 Terms

Regression Analysis

technique in which one or more variables are used to predict the level of another by use of the straight-line formula

Bivariate Regression

only two variables are being analyzed; researchers sometimes refer to this case as “simple regression”

Multiple Regression

more than one IV is used to predict a single DV

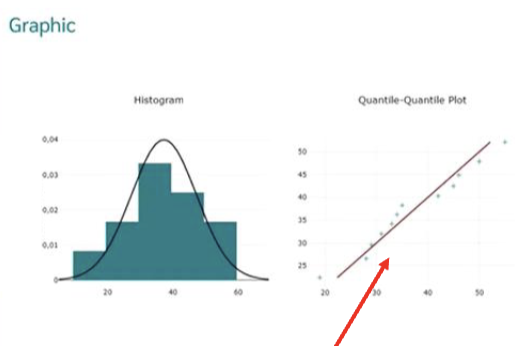

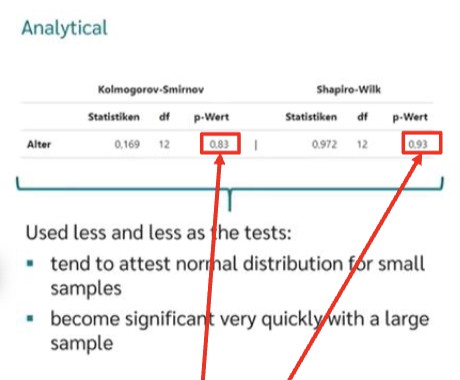

The closer the points are to the line…

the more closely the data follow a normal distribution

If the p-value is greater than 0.05, then…

there is no statistically significant deviation from the normal distribution

Intercept

the constant, or a, in the straight-line relationship; it is the value of y when x equals 0

Slope

the b, or the amount of change in y for a one-unit change in x

DV

y, the variable that is being estimated by the x(s) or IVs

IV

the x variable(s) used in the straight-line equation to estimate y
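
The four cards above (intercept, slope, DV, IV) fit together in the straight-line formula y = a + bx. A minimal sketch follows; the function name `predict` and the numbers are made up purely for illustration.

```python
def predict(a, b, x):
    """Straight-line formula: estimated y (DV) = intercept a + slope b * x (IV)."""
    return a + b * x

# Hypothetical values: intercept a = 2.0, slope b = 0.5
a, b = 2.0, 0.5
y_at_zero = predict(a, b, 0)    # equals the intercept, since x = 0
y_at_ten = predict(a, b, 10)
y_at_eleven = predict(a, b, 11)  # one unit more of x adds b to y
```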

Least squares criterion

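a procedure that assures that the computed regression equation is the best one possible for the data being used, in the sense that it minimizes the sum of squared vertical distances between the data points and the line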
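
For the bivariate case, the least squares line can be computed by hand. This is a sketch under stated assumptions: the helper names `fit_line` and `sse` and the five data points are invented for illustration, not taken from the course material.

```python
def fit_line(xs, ys):
    """Return (a, b) for the least squares line y = a + b*x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    b = sxy / sxx              # slope
    a = mean_y - b * mean_x    # intercept
    return a, b

def sse(a, b, xs, ys):
    """Sum of squared errors: the quantity least squares minimizes."""
    return sum((y - (a + b * x)) ** 2 for x, y in zip(xs, ys))

# Made-up data
xs = [1, 2, 3, 4, 5]
ys = [2, 4, 5, 4, 5]
a, b = fit_line(xs, ys)
best = sse(a, b, xs, ys)
```

Nudging either `a` or `b` away from the fitted values should only increase `sse`, which is what "best one possible" means here.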

R square

a number ranging from 0 to 1 that reveals how well the straight-line model fits the scatter of data points; the higher, the better. As a rule of thumb, below .25 is bad
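
One common way to compute R square is 1 minus the ratio of unexplained variation to total variation. The sketch below assumes a bivariate least squares fit; `r_square` and the data are hypothetical.

```python
def r_square(xs, ys):
    """R square for the least squares line of ys on xs."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    b = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
         / sum((x - mean_x) ** 2 for x in xs))
    a = mean_y - b * mean_x
    ss_error = sum((y - (a + b * x)) ** 2 for x, y in zip(xs, ys))  # unexplained
    ss_total = sum((y - mean_y) ** 2 for y in ys)                   # total
    return 1 - ss_error / ss_total

# Made-up data
r2 = r_square([1, 2, 3, 4, 5], [2, 4, 5, 4, 5])
```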

Adjusted R Squared

R square adjusted for the number of IVs and the sample size; the higher, the better
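
The usual adjustment formula is 1 - (1 - R²)(n - 1)/(n - k - 1), where n is the sample size and k is the number of IVs. A small sketch, with the function name and inputs chosen only for illustration:

```python
def adjusted_r_square(r2, n, k):
    """Adjust R square for sample size n and number of IVs k."""
    return 1 - (1 - r2) * (n - 1) / (n - k - 1)

# Hypothetical model: R square of 0.40 from 50 respondents and 3 IVs
adj = adjusted_r_square(0.40, n=50, k=3)

# Same R square with more IVs is penalized more heavily
adj_more_ivs = adjusted_r_square(0.40, n=50, k=10)
```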

Independence assumption

a statistical requirement that when more than one x variable is used, no pair of x variables has a high correlation

Multicollinearity

two or more predictors correlate strongly with each other (a violation of the independence assumption that causes regression results to be in error)

Variance Inflation Factor (VIF)

identifies which x variables contribute to multicollinearity and should be removed from the analysis to eliminate it. Any variable with a ____ of 10 or greater should be removed
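
For each x variable, the statistic is 1/(1 - R²), where R² comes from regressing that x on the other x variables. With exactly two predictors this reduces to 1/(1 - r²), with r the correlation between them. The sketch below covers only that two-predictor case; the names and data are assumptions made for illustration.

```python
def pearson_r(xs, ys):
    """Pearson correlation between two lists of numbers."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / (sxx * syy) ** 0.5

def vif_two_predictors(x1, x2):
    """Two-predictor special case: 1 / (1 - r^2)."""
    r = pearson_r(x1, x2)
    return 1 / (1 - r ** 2)

# Made-up predictors
x1 = [1, 2, 3, 4, 5]
x2 = [2, 1, 4, 3, 5]
vif = vif_two_predictors(x1, x2)
should_remove = vif >= 10   # the cutoff given on the card
```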

Multiple R

also called the multiple correlation coefficient (its square, R square, is the coefficient of determination), a number that ranges from 0 to 1 that indicates the strength of the overall linear relationship in a multiple regression; the higher, the better

Trimming

process of iteratively removing x variables in multiple regression which are not statistically significant, rerunning the regression, and repeating until all the remaining x variables are significant

Beta coefficients and standardized beta coefficients

Beta coefficients are the slopes (b values) determined by multiple regression for each IV x. Standardized beta coefficients are these slopes rescaled to a common, unit-free scale (in absolute value typically from .00 to .99), so they can be compared directly to determine their relative importance in y's prediction
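
One standard way to standardize a slope is to multiply it by the ratio of the IV's standard deviation to the DV's standard deviation. The sketch below shows this for a single IV with made-up data; `standardized_beta` is a hypothetical helper, and in a multiple regression the same rescaling would be applied to each IV's b value.

```python
import statistics

def standardized_beta(b, xs, ys):
    """Rescale slope b by sd(x) / sd(y) to make it unit-free."""
    return b * statistics.stdev(xs) / statistics.stdev(ys)

# Made-up data; 0.6 is the least squares slope of ys on xs
xs = [1, 2, 3, 4, 5]
ys = [2, 4, 5, 4, 5]
b = 0.6
beta = standardized_beta(b, xs, ys)
```

With only one IV, the standardized beta equals the correlation between x and y.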

Dummy Variable

an x variable that has a 0/1 or similar nominal coding
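
A minimal sketch of 0/1 coding, assuming a hypothetical yes/no survey answer; the variable and function names are invented for illustration.

```python
def dummy_code(values, one_label):
    """Code each value as 1 if it matches one_label, otherwise 0."""
    return [1 if v == one_label else 0 for v in values]

# Hypothetical nominal answers from four respondents
owns_car = ["yes", "no", "yes", "no"]
x_dummy = dummy_code(owns_car, "yes")
```

The resulting list can be used as an x variable in the regression like any other IV.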

Stepwise multiple regression

appropriate when there is a large number of IVs that need to be trimmed down to a small, significant set and the researcher wishes the statistical program to do this automatically

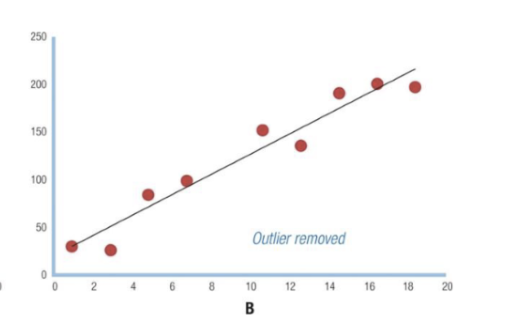

Removing outliers…

can improve regression results. Outliers are often identified as values more than 2 standard deviations above or below the mean
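
The mean ± 2 s.d. rule can be sketched as a simple filter. The data and the helper name `remove_outliers` are assumptions for illustration; the sample standard deviation is used here, which is one of several reasonable choices.

```python
import statistics

def remove_outliers(values, n_sd=2):
    """Keep only values within n_sd standard deviations of the mean."""
    mean = statistics.mean(values)
    sd = statistics.stdev(values)   # sample standard deviation
    low, high = mean - n_sd * sd, mean + n_sd * sd
    return [v for v in values if low <= v <= high]

# Made-up data with one extreme value
data = [10, 12, 11, 13, 12, 11, 10, 12, 11, 50]
kept = remove_outliers(data)
```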