Summary_Psychodiagnostics_GGZ2030.docx

Summary Psychodiagnostics GGZ2030

Psychological test = a standardised measure of a sample of behaviour that establishes norms and uses important test items that correspond to what the test is to discover about the test-taker. Is also based on uniformity of procedures in administering and scoring tests.

The standardised measure of a sample of behaviour = an established reference point that a test scorer can use to evaluate, judge, measure against, and compare.

- We get to this reference point by establishing norms 🡪 rely on the number of test-takers who take a given test, to establish what is normal in the group.

- Then scorers can determine where an individual falls within that group 🡪 that is why the larger the sample the better.

- Norms: to compare and give meaning to results.

Test items = the questions that a test-taker is asked on any given test.

- They must be relevant to what the test is trying to measure.

- They must have large sets to establish a proper measurement 🡪 it needs to have a lot of questions. More questions equal more chances of establishing what a test-taker knows and does not know to find their norm.

Uniformity of procedures in administering and scoring of tests = refers to administrators presenting the test in the same way, test-takers taking the test in the same way, and scorers scoring the test the same way every given time that this test is given, taken, and scored 🡪 this helps with:

- Validity = testing exactly what one is trying to discover.

- Reliability = being able to recreate the same or similar outcome every time the test is taken. There could have been emotional stress or other factors that may have made the individual score slightly lower or higher, but in general the score remains in the same scoring range 🡪 that establishes what the norm is, namely in the middle of this scoring range.

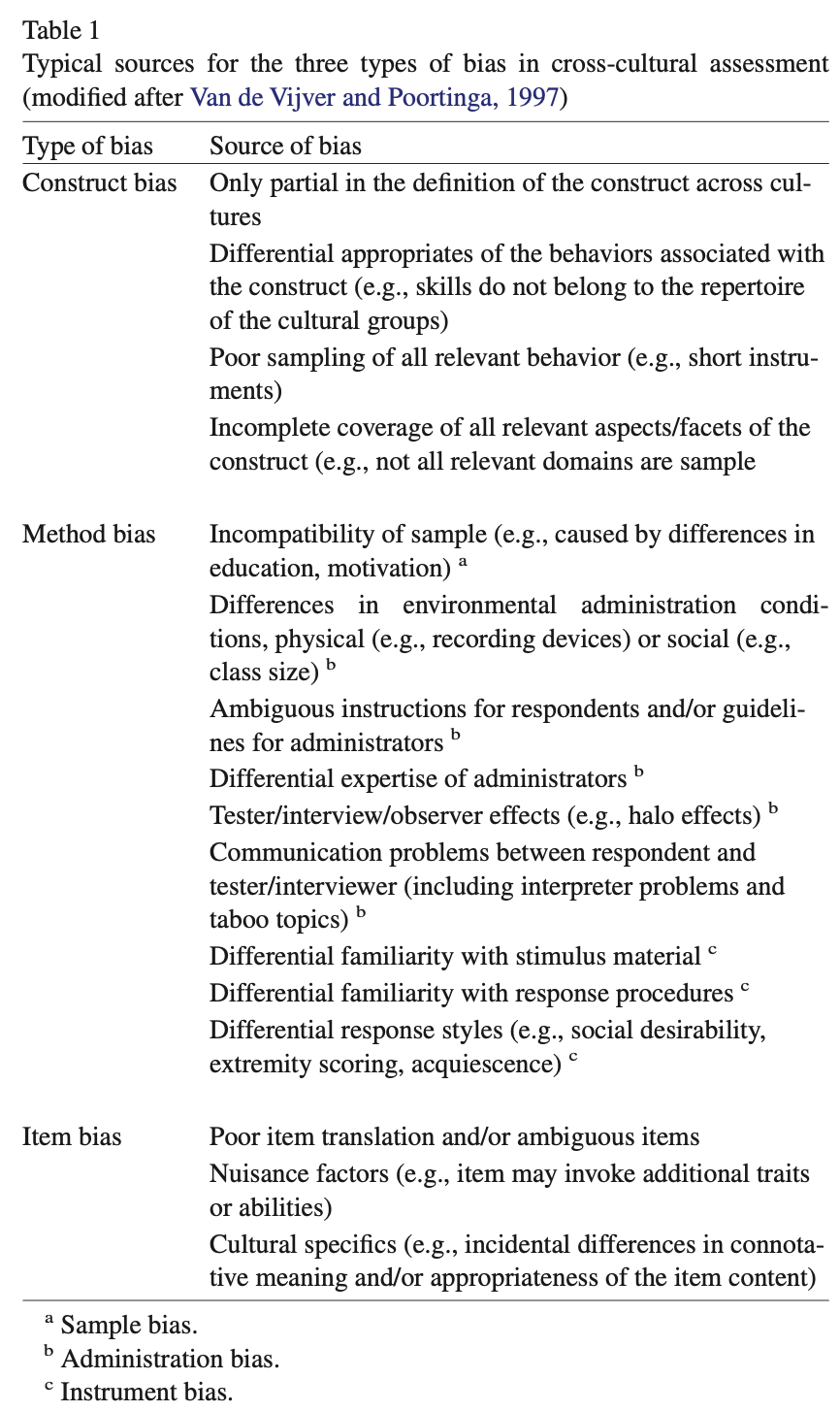

Reliability | Validity | |

|---|---|---|

What does it tell you? | The extent to which the results can be reproduced when the research is repeated under the same conditions. | The extent to which the results really measure what they are supposed to measure. |

How is it assessed? | By checking the consistency of results across time, across different observers, and across parts of the test itself. | By checking how well the results correspond to established theories and other measures of the same concept. |

How do they relate? | A reliable measurement is not always valid: the results might be reproducible but they’re not necessarily correct. | A valid measurement is generally reliable: if a test produces accurate results, they should be reproducible. |

Some of the primary purposes of psychological assessment are to:

- Describe current functioning, including cognitive abilities, severity of

disturbance, and capacity for independent living.

- Confirm, refute, or modify the impressions formed by clinicians through their less structured interactions with patients.

- Identify therapeutic needs, highlight issues likely to emerge in treatment,

recommend forms of intervention and offer guidance about likely outcomes.

- Aid in the differential diagnosis of emotional, behavioural, and cognitive disorders.

- Monitor treatment over time to evaluate the success of interventions or to

identify new issues that may require attention as original concerns are

resolved.

- Manage risk, including minimization of potential legal liabilities and

identification of untoward treatment reactions.

- Provide skilled, empathic assessment feedback as a therapeutic intervention

in itself.

Although specific rules cannot be developed, provisional guidelines for when assessments are likely to have the greatest utility in general clinical practice can be offered.

- In pre-treatment evaluation:

- Goal: to describe current functioning, confirm or refute clinical impressions, identify treatment needs, suggest appropriate interventions, or aid in differential diagnosis.

- Assessment is then likely to yield the greatest overall utility when:

- The treating clinician or patient has salient questions.

- There are a variety of treatment approaches from which to choose and a body of knowledge linking treatment methods to patient characteristics.

- The patient has had little success in prior treatment.

- The patient has complex problems and treatment goals must be prioritised.

3 readily accessible but inappropriate benchmarks can lead to unrealistically high expectations about effect magnitudes:

- It is easy to recall a perfect association. However, perfect associations are never encountered in applied psychological research.

- It is easy to implicitly compare validity correlations with reliability coefficients because the latter are frequently reported in the literature. However, reliability coefficients evaluate only the correspondence between a variable and itself, so they cannot provide a reasonable standard for evaluating the association between 2 distinct real-world variables.

- Monomethod validity coefficients are presented everywhere in the psychological literature (e.g., self-reports are compared with self-reports). Because the systematic error of method variance is aligned in such studies, the results are inflated and do not provide a reasonable benchmark for considering the real-world associations between 2 independently measured variables.

Instead of relying on unrealistic benchmarks to evaluate findings 🡪 psychologists should be satisfied when they can identify replicated univariate correlations among independently measured constructs.

Therapeutic impact is likely to be greatest when:

- Initial treatment efforts have failed

- Clients are curious about themselves and motivated to participate

- Collaborative procedures are used to engage the client

- Family and allied health service providers are invited to furnish input

- Patients and relevant others are given detailed feedback about results

Monomethod validity coefficients = are obtained whenever numerical values on a predictor and criterion are completely or largely derived from the same source of information.

- E.g., a self-report scale that is validated by correlating it with a conceptually similar scale that is also derived from self-report.

Distinctions between psychological testing and psychological assessment:

- Psychological testing = a relatively straightforward process wherein a particular scale is administered to obtain a specific score 🡪 the score has 1 meaning.

- Psychological assessment = concerned with the clinician who takes a variety of test scores, generally obtained from multiple test methods, and considers the data in the context of history, referral information, and observed behaviour to understand the person being evaluated, to answer the referral questions, and then to communicate findings to the patient, his or her significant others, and referral sources.

- Scores can have different meanings 🡪 after considering all relevant information

- Assessment uses test-derived sources of information in combination with historical data, presenting complaints, observations, interview results, and information from 3rd parties to disentangle the competing possibilities.

Distinctions between formal assessment and other sources of clinical information:

- Psychological assessments generally measure many personality, cognitive, or neuropsychological characteristics simultaneously 🡪 they are inclusive and often cover a range of functional domains, many of which might be overlooked during less formal evaluation procedures.

- Psychological tests provide empirically quantified information, allowing for more precise measurement of patient characteristics than is usually obtained from interviews.

- Psychological tests have standardised administration and scoring procedures 🡪 in less formal assessments, standardisation is lacking.

- Psychological tests are normed 🡪 permitting each client to be compared with a relevant group of peers 🡪 allows the clinician to formulate refined inferences about strengths and limitations.

- Research on the reliability and validity of individual test scales sets formal assessment apart from other sources of clinical information. Without this, practitioners have little ability to measure the accuracy of the data they process when making judgments.

- The use of test batteries in psychological assessment 🡪 in a battery, psychologists generally employ a range of methods to obtain information and cross-check hypotheses. These methods include self-reports, performance tasks, observations, and information derived from behavioural or functional assessment strategies.

Assessment methods:

- Unstructured interviews

- Elicit information relevant to thematic life narratives, though they are constrained by the range of topics considered and ambiguities inherent when interpreting this information.

- Structured interviews and self-report instruments

- Elicit details concerning patients’ conscious understanding of themselves and overtly experienced symptomatology, though they are limited by the patient’s motivation to communicate frankly and their ability to make accurate judgements.

- Performance-based personality tests

- Elicit data about behaviour in unstructured settings or implicit dynamics and underlying templates of perception and motivation, though they are constrained by task engagement and the nature of the stimulus materials.

- Performance-based cognitive tasks

- Elicit findings about problem solving and functional capacities, though they are limited by motivation, task engagement, and setting.

- Observer rating scales

- Elicit an informant’s perception of the patient, though they are constrained by the parameters of a particular type of relationship and the setting in which the observations transpire.

Clinicians and researchers should recognize the unique strengths and limitations of various assessment methods and harness these qualities to select methods that help them more fully understand the complexity of the individual being evaluated.

Low cross-method correspondence can indicate problems with one or both methods. Cross-method correlations cannot reveal what makes a test distinctive or unique, and they also cannot reveal how good a test is in any specific sense.

Clinicians must not rely on 1 method.

One cannot derive unequivocal clinical conclusions from test scores considered in isolation.

Because most research studies do not use the same type of data that clinicians do when performing an individualised assessment, the validity coefficients from testing research may underestimate the validity of test findings when they are integrated into a systematic and individualised psychological assessment.

Contextual factors play a very large role in determining the final scores obtained on psychological tests, so contextual factors must therefore be considered 🡪 contribute to method variance. However, trying to document the validity of individualised, contextually included conclusions is very complex.

The validity of psychological tests is comparable to the validity of medical tests.

Distinct assessment methods provide unique sources of data 🡪 sole reliance on a clinical interview often leads to an incomplete understanding of patients.

It is argued that optimal knowledge in clinical practice/research is obtained from the sophisticated integration of information derived from a multimethod assessment battery.

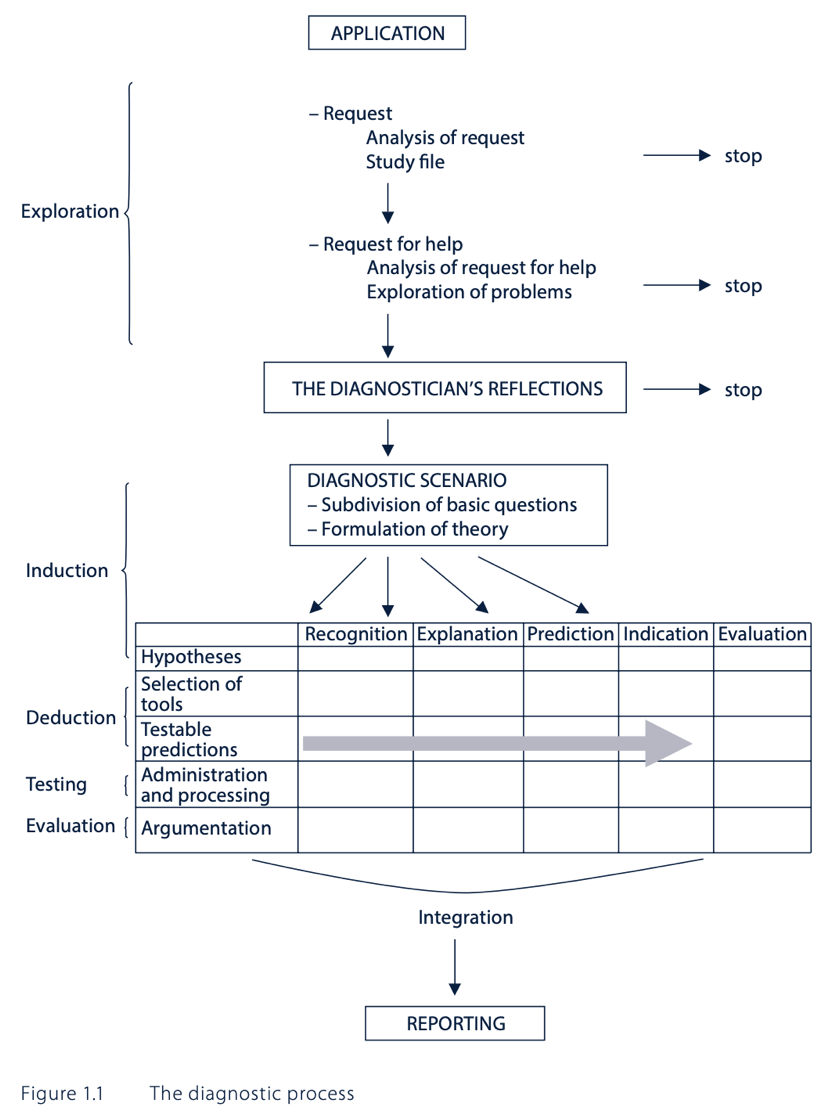

Clinical diagnostics is based on 3 elements:

- Theory development of the problems/complaints and problematic behaviour

- Operationalization and its subsequent measurement

- The application of relevant diagnostic methods

Testing a diagnostic theory requires 5 diagnostic measures:

- Converting the provisional theory into the concrete hypotheses

- Selecting a specific set of research tools, which can either support or reject the

formulated hypotheses

- Making predictions about the results or outcomes from this set of tools, in

order to give a clear indication as to when the hypotheses should be accepted

or rejected

- Applying and processing instruments

- Based on the results that have been obtained, giving reasons for why

the hypotheses have either been accepted or rejected

This results in the diagnostic conclusion.

5 basic questions in clinical psychodiagnostics – classification of requests:

- Recognition = what are the problems? What works and what does not?

- Explanation = why do certain problems exist and what perpetuates them?

- Prediction = how will the client’s problems subsequently develop in the future?

- Indication = how can the problems be resolved?

- Evaluation = have the problems been adequately resolved because of the intervention?

The quantity and type of basic questions to be addressed depends on the questions that were discussed during the application phase. Most requests contain 3 basic questions: recognition, explanation, and indication. In practice, all the basic questions chosen are often examined simultaneously.

Recognition:

- Identifying both the complaints and adequate behaviour of the client and/or his environment to obtain a better understanding of the client’s problem.

- Recognition may occur because of:

- Comparison to a predefined standard = criterion-oriented measurement

- Comparison to a representative comparison group = normative measurement

- Comparison to the individual him-/herself = ipsative measurement

- Classification (“labelling”) 🡪 the clinical picture is assigned to a class of problems.

- All-or-nothing principle = DSM-categories

- More-or-less principle = dimensions of a test or questionnaire

- Diagnostic formulation = focuses on the individual and his/her own unique clinical picture 🡪 holistic theory.

- Allows for the uniqueness of the individual, based on a description of the client and its context 🡪 helps the therapy planning, but there is an occasional lack of empirical support.

- Diagnostic formulation usually involves simultaneous recognition and explanation.

Explanation:

- Answers the question of why there is a problem or a behavioural problem.

- It includes:

- The main problem or problem component

- The conditions that explain the problem’s occurrence

- The causal relationship between points 1 and 2.

- Explanations may be classified according to:

- The locus = the person or the situation

- Person-oriented = explanatory factor lies in the person him-/herself; the behaviour is viewed separately from the context.

- Situation-oriented = explanatory factor lies in the event of a well-known context. Explanatory events may precede the behaviour that it is to be explained or follow it.

- The nature of control

- Cause = determines behaviour by previous conditions

- Reason = explanatory factor determined by a voluntary or intentional choice

- Causes explain behaviour while reasons make behaviour understandable.

- Synchronous and diachronous explanatory conditions

- Synchronous = coincide with the behaviour that is to be explained at the time

- Diachronous = precede this behaviour (prior to the behaviour)

- Induced and persistent conditions

- Induced = give rise to a behaviour problem (produce)

- Persistent = perpetuate the behavioural problem (maintain)

- When treating a problem, it is best to search for factors in the current situation that perpetuate the problem, because we can exert an influence on these.

- The locus = the person or the situation

Prediction:

- Involves making a statement about the problem behaviour in the future.

- It is a chance statement 🡪 this chance plays a part in determining the treatment proposal (e.g., long- versus short-term, admission or ambulant treatment, etc.).

- Prediction pertains to a relation between a predictor and a criterion:

- The predictor is the present behaviour

- The criterion is the future behaviour.

- We can only determine the chance that behaviours will collectively occur in a particular population (and not in a certain client) = risk assessment.

Indication:

- Focuses on the question of whether the client requires treatment and, if so, which caregiver and assistance are the most suitable for this client and problem.

- The indication is a search process, carried out by a therapist.

- Before the indication can start, the steps for explanation and prediction must be completed.

- There are 3 additional elements:

- Knowledge of treatments and therapists

- Knowledge of the relative usefulness of treatments

- Knowledge of the client’s acceptance of the indication 🡪 e.g., do not want to follow the treatment

- Indication strategy which takes the client’s preference into account:

- The client’s perspective is examined and explicated

- The diagnostician provides the client with information about the courses of treatment, processes, and therapists.

- The client’s expectations and preferences are compared to those that the diagnostician deems to be suitable and useful, and during a mutual consultation, several possible treatments, which are acceptable to both parties, are formulated.

- The client selects a therapist and a treatment.

Evaluation:

- Evaluation of the assertions about diagnosis and/or intervention takes place based on both the progress of the therapeutic process and the results of the treatment.

- This establishes:

- Whether the therapy took account of the diagnosis and treatment proposal 🡪 if this was not the case, the diagnostic process was unnecessary

- Whether the process and the treatment have brought about change in the client’s behaviour and experience

Diagnostic cycle = a model for answering questions in a scientifically justified manner:

- Observation = collecting and classifying empirical materials, which provide the basis for forming thoughts about the creation and persistence of problem behaviour.

- Induction = the formulation of theory and hypotheses about the behaviour.

- Deduction = testable predictions are derived from these hypotheses.

- Testing = new materials are used to determine whether the predictions are correct or incorrect.

- Evaluation

The diagnostic cycle is a basic diagram for scientific research, not for psychodiagnostic practice.

The diagnostic process:

- Application:

- The diagnostician’s first task is to analyse the request for help, results in:

- Information about the referrer:

- It is important to understand the referrer’s frame of reference.

- The analysis of the request results in clarification of the relationship between the diagnostician and the referrer.

- It is important to make a distinction between the referrer in name (🡪 requires the examination to be carried out) and the actual referrer (🡪 initiative taker).

- Referrers differ from each other in terms of nature and extent of the powers which are available to them, e.g., psychiatrist versus in-taker.

- Details about the type and content of the request:

- Open-ended format = the referrer will not formulate any hypotheses about the problem.

- Closed format = the referrer formulates hypotheses about the problem.

- The content of a request is partially connected to the setting from which the request originates.

- Information about the referrer:

- Analysis of the request includes exploration of the client’s mindset.

- The diagnostician’s first task is to analyse the request for help, results in:

- The diagnostician’s reflections:

- Due weight is given to each of the carious pieces of information.

- This reflection phase requires insight into the diagnostician’s position and professional and task-oriented practices.

- The diagnostician should be aware of his potential biases in both general clinical judgement and towards clients.

- The diagnostic scenario:

- The diagnostician organises all the requester’s and client’s questions from the application phase, all the questions that have occurred to him and his knowledge of the problem.

- Based on this information, he proposes an initial, tentative theory about the client’s problematic behaviour.

- The basic questions are asked.

- The diagnostic examination:

- Hypothesis formulation

- The diagnostician formulates several hypotheses based on the diagnostic scenario.

- In the context of the recognition question, the hypotheses centre on the presence of psychopathology or a differential diagnosis.

- In the context of the explanation question, the hypotheses require a list of explanatory factors and their predisposing or perpetuating roles.

- In the context of the prediction question hypotheses are based on empirical knowledge of successful predictors.

- In the context of the indication question, hypotheses are assumptions about which treatment and which therapist(s) are best suited to a client with a specific problem.

- These hypotheses are founded on the conclusions that have been drawn from recognition, explanation, and prediction, but are also based on:

- Theory of illness = how the client formulates his/her problem, how he/she views his/her complaints and what the disease attributes are.

- Theory of healing = which type of help he/she expects to receive and the way he/she expects to receive it.

- Theory of health = what he/she hopes to achieve with the treatment.

- Selection of examination tools

- Formulation of testable predictions

- Administration and scoring

- Argumentation

- Report

- Hypothesis formulation

Critical comments on DTCs:

- Many patients have more than 1 complaint/problem 🡪 difficult to choose a department.

- Some clients do not have clearly defined complaints/problems

- Within departments that address certain complaints/problems, there is a strong focus on the reported complaints/problems. Due to this, there is a danger that other, even more important existing disorders may be overlooked.

- DTCs assume an interaction between a specific diagnosis and a type of treatment.

- When working with DTCs, there is often insufficient time to carry out a comprehensive diagnostic examination of the causes of the complaint(s)/problem(s).

BDI-II:

- You can only use this for screening not for classification.

- It is not a diagnostic tool 🡪 it is for finding out the severity of depression.

- Cut points are used to classify patients (versus non-patients):

- A score of 13 or higher indicates depression in healthy (and somatic/primary care) populations.

- A score of 19 or higher indicates depression in psychiatric settings.

- Cut points that are optimal within a specific sample do not necessarily emerge as optimal in other samples from the same population or generalise well to the population at large.

- 1 explanation for the need for higher cut points in psychiatric patients is that many symptoms which are assessed as part of the BDI-II (sleeplessness, trouble with concentration) are also affected by other psychiatric diseases or side effects of psychopharmaceuticals, rather than specific symptoms of depression.

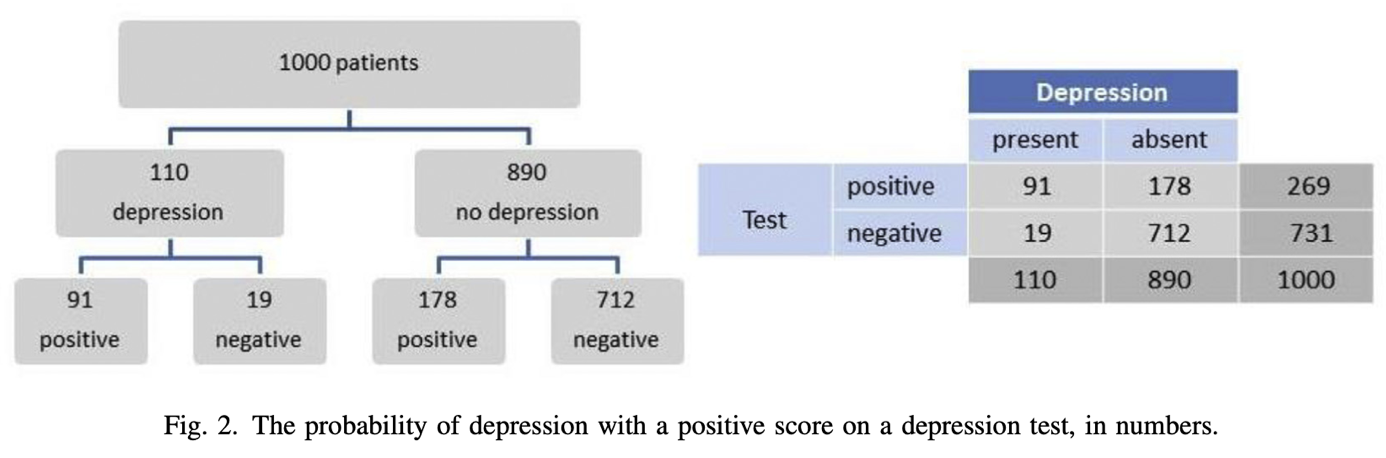

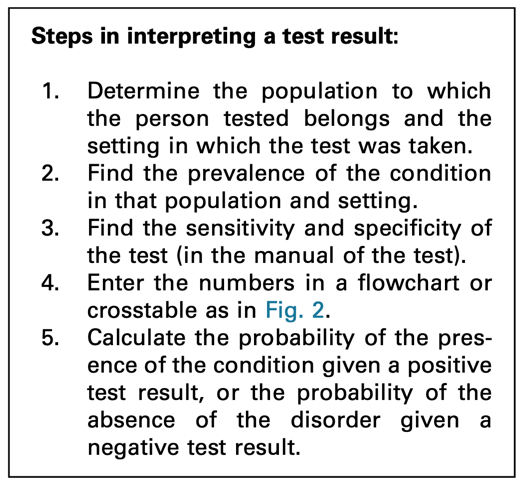

Sensitivity = the number of individuals we can correctly diagnose with depression 🡪 from the 100 people receiving a diagnosis, 86 would be diagnosed with depression correctly 🡪 the other 14 individuals are false negatives.

Specificity = the number of individuals we can correctly diagnose as healthy 🡪 from the 100 people receiving a diagnosis, 78 would be diagnosed healthy correctly 🡪 the other 22 individuals are false positives.

The Youden criterion (= sensitivity + specificity) is not always correct 🡪 it may give counterintuitive results in some circumstances.

- The depression cut point should be higher than the non-depression cut point.

- But if the non-depression cut point is 21 (in total of 40) the optimal cut point of depression (according to Youden criterion) would be 19.

- However, the results show an optimal cut point of 28 for depressed patients.

Based on BDI-II scores alone:

- Misses between 15-20% of persons with depression 🡪 false negatives

- Wrongfully classify 20-25% as having depression even though they don’t 🡪 false positives

The Narcissistic Personality Inventory is the same as the Self Confidence Test. Narcissists could underreport on psychological tests as they want to do good and won’t show they are suffering from a disease.

- Do not rely on 1 test but use different tests to get a differential diagnosis.

- It is generally useful to use BDI-II in this case.

Kohut’s self-psychology approach offers the deficit model of narcissism, which asserts that pathological narcissism originates in childhood because of the failure of parents to empathise with their child.

Kernberg’s object relations approach emphasises aggression and conflict in the psychological development of narcissism, focusing on the patient’s aggression towards and envy of others 🡪 conflict model.

Social critical theory (Wolfe) 🡪 narcissism was a result of the collective ego’s defensive response to industrialisation and the changing economic and social structure of society.

Narcissistic personality disorder (NPD), DSM-V criteria:

- Grandiosity and self-importance

- Persistent fantasies of success, power, attractiveness, intellectual superiority

or ideal love

- Sense of superiority and specialness

- Wish to be admired

- Strong sense of entitlement

- Manipulates and exploits others

- Lack of empathy

- Believes others are envious of him/her and envy of others

- Arrogant and contemptuous attitudes and behaviours

DSM-IV mainly focused on the disorder’s grandiose features and did not adequately capture the underlying vulnerability that is evident in many narcissistic individuals.

At least 2 subtypes or phenotypic presentations of pathological narcissism can be differentiated:

- Grandiose or overt narcissism = may appear arrogant, pretentious, dominant, self-assured, exhibitionist or aggressive 🡪 thick-skinned

- Vulnerable or covert narcissism = may appear overly sensitive, insecure,

defensive and anxious about an underlying sense of shame and inadequacy 🡪 thin-skinned

The former defending the latter: grandiosity conceals underlying vulnerabilities

Both individuals with grandiose and those with vulnerable narcissism share a preoccupation with satisfying their own needs at the expense of the consideration of others.

Pathological narcissism is defined by a fragility in self-regulation, self-esteem, and sense of agency, accompanied by self-protective reactivity and emotional dysregulation.

Grandiose and self-serving behaviours may be understood as enhancing an underlying depleted sense of self and are part of a self-regulatory spectrum of narcissistic personality functioning.

Psychodynamic approaches:

- Psychodynamic psychotherapy is grounded in psychoanalytic principles, but it is based on a broader theoretical framework capturing relational, interpersonal, intersubjective, and embodied experiences of both the social world and the internal world.

- Transference-focused psychotherapy = based on the principles of psychoanalytic object relations theory, originally for borderline patients. It’s aimed at the active exploration of the patient’s aggression, envy, grandiosity, and defensiveness. Interpretations are targeted towards uncovering the negative transference, challenging the patient’s pathological grandiose defences, and exploring their sensitivity to shame and humiliation.

- Mentalisation-based treatment = this group and individual therapy is based on attachment theory, and it integrates psychodynamic, cognitive, and relational components, originally for borderline patients. It focuses on enhancing mentalisation = the ability to reflect on one’s own and others’ states of mind and link these to actions and behaviour.

Cognitive-behavioural approaches:

- In the cognitive-behavioural framework therapists and researchers have adapted Beck’s cognitive therapy model to treat narcissistic thoughts and behaviours. Cognitive techniques such as cognitive reframing, problem-solving and altering dysfunctional thoughts, coupled with behavioural modification techniques such as impulse control, maintaining eye contact, and reducing grandiosity, have been demonstrated in narcissistic patients to strengthen the therapeutic alliance and increase adherence to therapy and therapeutic goals.

- Schema-focused therapy = an integrative psychotherapy that expands strategies from cognitive-behavioural therapy, but also includes elements from object relations, psychodynamic and gestalt therapeutic models. No clinical trials of schema-focused therapy have been conducted for NPD, but clinical reports suggest that it may be effective for the disorder. It focuses on challenging early maladaptive schemas regarding relationships to self and others and on promoting a healthier “adult mode” of functioning. The therapist uses a process of “re-parenting”, encouraging the patient to better regulate narcissistic fluctuations in emotional reactivity and to develop empathy for and achieve emotional intimacy with others.

- Dialectical behaviour therapy = combines individual and group therapy sessions and incorporates cognitive-behavioural principles with acceptance and mindfulness-based skills. Group skills-training sessions are used to promote mindfulness, emotion regulation, distress tolerance and interpersonal effectiveness. It reduces the feelings of shame and self-criticism.

- Meta-cognitive interpersonal therapy = manualized step-by-step treatment particularly focusing on perfectionism. It is aimed at dismantling narcissistic processes, with shared understanding of the patient’s problems in their autobiographical context, progressing to recognition of maladaptive schemas and interpersonal functioning, and finally to promoting change through identification of grandiosity, distancing from old behaviour, reality- and perspective-taking and building more healthy schemas.

Treatment challenges:

- If the diagnosis is made, patients often reject it as it challenges their sense of

specialness and/or may accentuate feelings of low self-worth, shame and

humiliation.

- Patients may feel criticised or unfairly treated by the clinician.

- Patients may resent the perceived power or expertise of the clinician and reject any treatment offered.

- Patients may also wish to please the therapist and be their favourite patient.

- People with NPD may report being particularly sensitive to the side-effects of medication, particularly those that affect their sexual function or intellectual capacity.

- Patients may also resent the idea that they might be dependent on

pharmacological interventions.

The mainstay of treatment for NPD is psychological therapy.

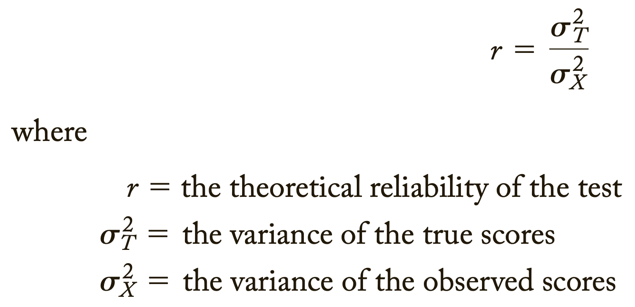

Cronbach’s Alpha = a psychometric statistic

- Introduced by Cronbach in 1952

Before Cronbach’s Alpha:

- Split-half reliability 🡪 was actually very similar to Cronbach’s Alpha but was limited in that you had to choose what split-half 🡪 different split-halves give different estimates of internal consistency.

- Kuder-Richardson 🡪 it was used exclusively for items that were scored dichotomously

Cronbach’s Alpha is much more general than these two 🡪 it represents the average of all possible split-halves. In addition, it can be used for both dichotomous and continuously scored data/variables.

Coefficient Alpha = Cronbach' Alpha

What is it?:

- It is an estimate of reliability 🡪 an estimate of internal consistency reliability.

- Cronbach’s alpha is an indicator of consistency, just like all the other reliability estimates.

- It’s not a measure of homogeneity and it’s not a measure of unidimensionality 🡪 scale.

- Consistency in measurement is good.

Cronbach’s Alpha is a coefficient, and it can range from 0.00 to 1.00.

- Technically you can have a negative reliability estimate in the context of Cronbach’s Alpha, but this doesn’t happen often 🡪 it is a very bad sign.

- 0.00 = no consistency in measurement

- 1.00 = perfect consistency in measurement

- 0.70 means that 70% of the variance in the scores is reliable variance.

- Therefore, 30% is error variance 🡪 we want this to be low.

Internal consistency reliability is relevant to composite scores = the sum (or average) of 2 or more scores.

- E.g., items in a test: item 1 + item 2 + item 3, etc.

- Internal consistency is not relevant to the individual item scores.

Cronbach’s Alpha measures internal consistency between items on a scale.

- Internal means that we are looking at how a participant is responding across all items 🡪 so if a participant is responding on the upper end of the scale like neutral, agree or strongly agree, we would expect them to be responding that way for each item in the scale.

- We don’t want to see that they are responding like strongly agree for some items and then strongly disagree for other items 🡪 that is inconsistent.

- If you have a mix of positive and negatively worded questions 🡪 your Cronbach’s Alpha will be negative 🡪 you need to make sure to reverse code your variables first 🡪 they all need to go in the same direction (does not matter if this is positive or negative).

- You do not do Cronbach’s Alpha for your entire questionnaire because people are going to be answering differently across scales and across items 🡪 it is just a Cronbach’s Alpha for each scale.

The steps needed to generate the relevant output in SPSS:

- Analyze

- Scale

- Reliability analysis

- Select the items and move them over with the arrow 🡪 you must do the Cronbach’s Alpha for each scale separately 🡪 you cannot do it for your whole questionnaire at once 🡪 people answer differently across scales and items

- Select “Alpha” in model

- Label your scale to name the output (not compulsory, but helpful)

- Statistics tab: choose:

- Descriptives for 🡪 “item”, “scale”, and “scale if item deleted”

- Summaries 🡪 “means” and “correlations”

- Inter-item 🡪 “correlation”

- Continue

- OK

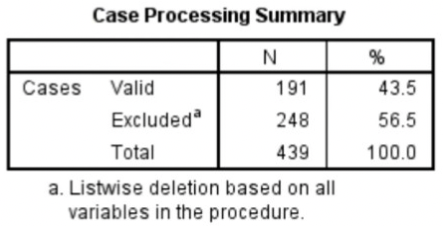

Interpreting Cronbach’s Alpha output from SPSS:

- Case processing summary

- Listwise deletion = if a participant had one missing value, that participant was omitted from the Cronbach’s Alpha calculation 🡪 excluded for all items when one item was missing.

- Listwise deletion = if a participant had one missing value, that participant was omitted from the Cronbach’s Alpha calculation 🡪 excluded for all items when one item was missing.

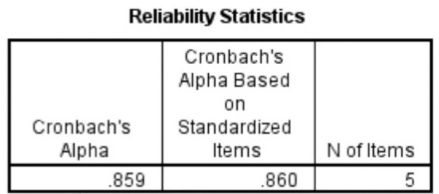

- Reliability statistics

- Cronbach’s Alpha should be .70 or higher

- If less than 10 items, it should be higher than .50 🡪 hard to get a high Cronbach’s Alpha with few items.

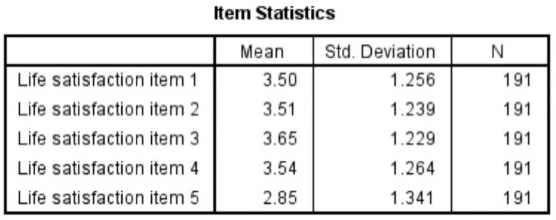

- Item statistics

- Mean, standard deviation, and sample size for each of the items on the scale.

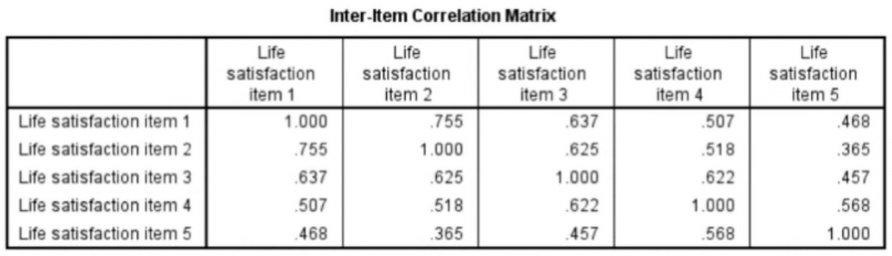

- Inter-item correlation matrix

- The correlation of every item in the scale with every other item.

- You would expect all these correlations to be positive because all your questions should be worded in the same way 🡪 all in the same direction.

- The larger the value is closer to one, the stronger the relationship between the responses.

- 1.000 in the table when an item is correlated with itself 🡪 perfect correlation.

- It is symmetric: values are mentioned twice

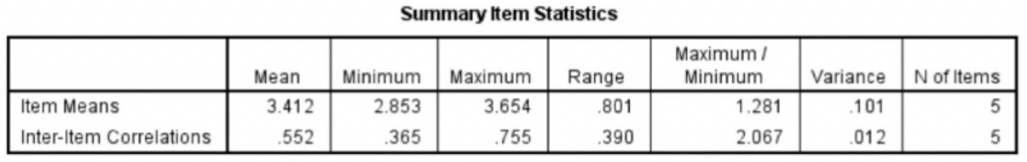

- Summary item statistics

- Item means and inter-item correlations

- Range = maximum value – minimum value

- If you have a low Cronbach’s Alpha because you have few items in your scale, report the inter-item correlation (mean).

- If you have a high Cronbach’s Alpha, you do not need to worry about it.

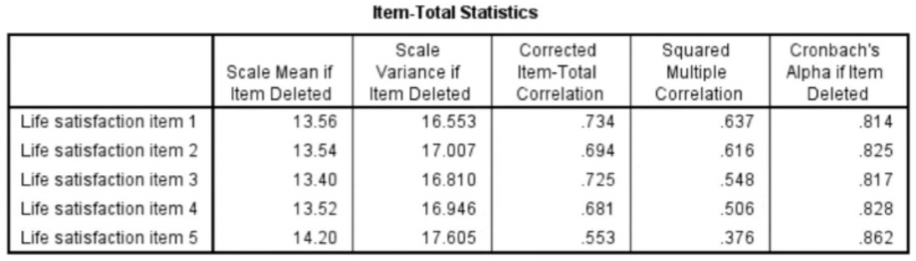

- Item-total statistics:

- Corrected item-total correlation = the correlation of each item with all other items combined.

- Should be higher than .40

- Cronbach’s Alpha if item deleted = if item is deleted from the scale

- You can look at this when you have a low Cronbach’s Alpha 🡪 you can see what happens when you delete a particular item 🡪 will it increase?

- Corrected item-total correlation = the correlation of each item with all other items combined.

- Scale statistics

High Cronbach’s Alpha: suggests that a questionnaire might contain unnecessary duplication of content.

Reliability = how consistently a method measures something.

- When you apply the same method to the same sample under the same conditions, you should get the same results 🡪 if not, the method of measurement may be unreliable.

There are 4 main types of reliability 🡪 each can be estimated by comparing different sets of results produced by the same method.

Test-retest reliability:

- Measures the consistency of results when you repeat the same test on the same sample at a different point in time.

- You use it when you are measuring something that you expect to stay constant in your sample.

- Why it’s important:

- Many factors can influence your results at different points in time 🡪 e.g., respondents might experience different moods, or external conditions might affect their ability to respond adequately.

- Test-retest reliability can be used to assess how well a method resists these factors over time 🡪 the smaller the difference between the 2 sets of results, the higher the test-retest reliability.

- How to measure it:

- To measure test-retest reliability, you conduct the same test on the same group of people at 2 different points in time.

- Then you calculate the correlation between the 2 sets of results.

- Improving test-retest reliability:

- When designing tests or questionnaires, try to formulate questions, statements and tasks in a way that won’t be influenced by the mood or concentration of participants.

- When planning your methods of data collection, try to minimise the influence of external factors, and make sure all samples are tested under the same conditions.

- Remember that changes can be expected to occur in the participants over time and take these into account.

Interrater reliability/interobserver reliability:

- Measures the degree of agreement between different people observing or assessing the same thing.

- You use it when data is collected by researchers assigning ratings, scores, or categories to one or more variables.

- Why it’s important:

- People are subjective, so different observers’ perceptions of situations and phenomena naturally differ 🡪 reliable research aims to minimise subjectivity as much as possible 🡪 so that a different researcher could replicate the same results.

- When designing the scale and criteria for data collection, it’s important to make sure that different people will rate the same variable consistently with minimal bias 🡪 this is especially important when there are multiple researchers involved in data collection or analysis.

- How to measure it:

- To measure interrater reliability, different researchers conduct the same measurement or observation on the same sample.

- Then you calculate the correlation between their different sets of results.

- If all the researchers give similar ratings, the test has high interrater reliability.

- Improving interrater reliability:

- Clearly define your variables and the methods that will be used to measure them.

- Develop detailed, objective criteria for how the variables will be rated, counted, or categorised.

- If multiple researchers are involved, ensure that they all have the same information and training.

Parallel forms reliability:

- Measures the correlation between 2 equivalent versions of a test.

- You use it when you have 2 different assessment tools or sets of questions designed to measure the same thing.

- Why it’s important:

- If you want to use multiple different versions of a test (e.g., to avoid respondents repeating the same answers from memory), you first need to make sure that all the sets of questions or measurements give reliable results.

- How to measure it:

- The most common way to measure parallel forms reliability is to produce a large set of questions to evaluate the same thing, then divide these randomly into 2 question sets.

- The same group of respondents answers both sets, and you calculate the correlation between the results 🡪 high correlation between the 2 sets indicates high parallel forms reliability.

- Improving parallel forms reliability:

- Ensure that all questions or test items are based on the same theory and formulated to measure the same thing.

Internal consistency:

- Assesses the correlation between multiple items in a test that are intended to measure the same construct.

- You can calculate internal consistency without repeating the test or involving other researchers 🡪 so it’s a good way of assessing reliability when you only have one data set.

- Why it’s important:

- When you devise a set of questions or ratings that will be combined into an overall score, you must make sure that all of the items really do reflect the same thing 🡪 if responses to different items contradict one another, the test might be unreliable.

- E.g., internal consistency tells you whether the statements are all reliable indicators of customer satisfaction.

- How to measure it:

- 2 common methods are used to measure internal consistency:

- Average inter-item correlation = for a set of measures designed to assess the same construct, you calculate the correlation between the results of all possible pairs of items and then calculate the average.

- Split-half method = you randomly split a set of measures into 2 sets. After testing the entire set on the respondents, you calculate the correlation between the 2 sets of responses.

- E.g., the correlation is calculated between all the responses to the “optimistic” statements, but the correlation is very weak. This suggests that the test has low internal consistency.

- 2 common methods are used to measure internal consistency:

- Improving internal consistency:

- Take care when devising questions or measures: those intended to reflect the same concept should be based on the same theory and carefully formulated.

Which type of reliability applies to my research?:

- It’s important to consider reliability when planning your research design, collecting, and analysing your data, and writing up your research.

- The type of reliability you should calculate depends on the type of research and your methodology.

- If possible and relevant, you should statistically calculate reliability and state this alongside your results.

Summary types of reliability:

Test-retest reliability The same test over time Measuring a property that you expect to stay the same over time | You devise a questionnaire to measure the IQ of a group of participants (a property that is unlikely to change significantly over time). You administer the test 2 months apart to the same group of people, but the results are significantly different, so the test-retest reliability of the IQ questionnaire is low. |

|---|---|

Interrater reliability/interobserver reliability The same test conducted by different people Multiple researchers making observations or ratings about the same topic | A team of researchers observe the progress of wound healing in patients. To record the stages of healing, rating scales are used, with a set of criteria to assess various aspects of wounds. The results of different researchers assessing the same set of patients are compared, and there is a strong correlation between all sets of results, so the test has high interrater reliability. |

Parallel forms reliability Different versions of a test which are designed to be equivalent Using 2 different tests to measure the same thing | A set of questions is formulated to measure financial risk aversion in a group of respondents. The questions are randomly divided into 2 sets, and the respondents are randomly divided into 2 groups. Both groups take both tests: group A takes test A first, and group B takes test B first. The results of the 2 tests are compared, and the results are almost identical, indicating high parallel forms reliability. |

Internal consistency The individual items of a test Using a multi-item test where all the items are intended to measure the same variable | A group of respondents are presented with a set of statements designed to measure optimistic and pessimistic mindsets. They must rate their agreement with each statement on a scale from 1 to 5. If the test is internally consistent, an optimistic respondent should generally give high ratings to optimism indicators and low ratings to pessimism indicators. The correlation is calculated between all the responses to the “optimistic” statements, but the correlation is very weak. This suggests that the test has low internal consistency. |

Errors of measurement = discrepancies between true ability and measurement of ability

- Error implies that there will always be some inaccuracy in our measurements 🡪 we need to minimise errors

Tests that are relatively free of measurement error: reliable

Tests that have “too much” measurement error: unreliable

Classical test score theory = assumes that each person has a true score that would be obtained if there were no errors in measurement.

- Because measuring instruments are imperfect, the score observed for each person almost always differs from the person’s true ability or characteristic.

- Measurement error = the difference between the true score and the observed score

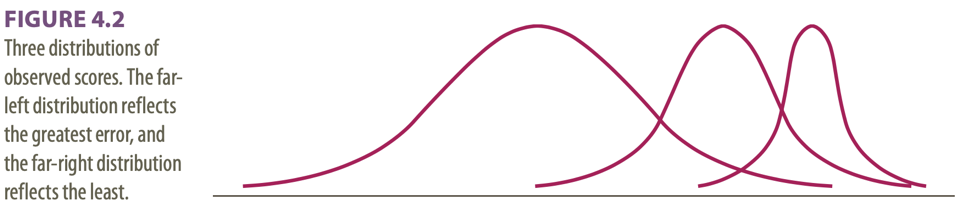

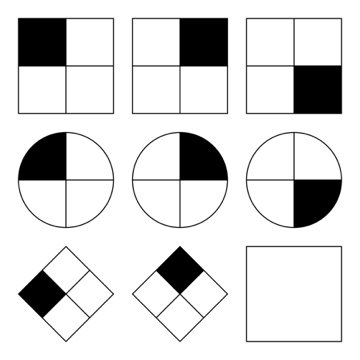

3 different distributions shown:

- Far left distribution: great dispersion around the true score 🡪 you might not want to depend on a single observation because it might fall far from the true score.

- Far right distribution: tiny dispersion around the true score 🡪 most of the observations are extremely close to the true score 🡪 drawing conclusions based on fewer observations will likely produce fewer errors than it will for the far-left curve.

- In the middle

Dispersions around the true score: tell us how much error there is in the measure

- A major assumption in classical test theory = errors of measurement are random

- If errors of measurement are not random 🡪 scores will not have a normal distribution

Classical test theory assumes that the true score for an individual will not change with repeated applications of the same test 🡪 because of random error, repeated applications of the same test can produce different scores 🡪 random error is responsible for the distribution of scores.

The standard deviation of the distribution of errors for each person tells us about the magnitude of measurement error.

- We usually assume that the distribution of random errors will be the same for all people 🡪 classical test theory uses the standard deviation of errors as the basic measure of error = standard error of measurement (σmeas)

- Tells us, on the average, how much a score varies from the true score.

- In practice, the standard deviation of the observed score and the reliability of the test are used to estimate the standard error of measurement.

Domain sampling model = considers the problems created by using a limited number of items to represent a larger and more complicated construct 🡪 another central concept in classical test theory.

- Conceptualizes reliability as the ratio of the variance of the observed score on the shorter test and the variance of the long-run true score.

- The measurement considered in the domain sampling model is the error introduced by using a sample of items rather than the entire domain.

- Sample = shorter test 🡪 e.g., with measuring spelling ability

- The task in reliability analysis is to estimate how much error we would make by using the score from the shorter test as an estimate of true ability.

As the sample gets larger, it represents the domain more and more accurately 🡪 the greater the number of items 🡪 the higher the reliability

Because of sampling error, different random samples of items might give different estimates of the true score: true scores are not available so need to be estimated.

- The distribution of these estimates should be random and normally distributed.

Turning away from classical test theory because it requires that the same test items be administered to each person 🡪 low reliability when few items.

Item response theory (IRT) = the computer is used to focus on the range of item difficulty that helps assess an individual’s ability level.

- E.g., if the person gets several easy items correct, the computer might quickly move to more difficult items 🡪 then this level of ability is tensely sampled.

- The overall result: a more reliable estimate of ability is obtained using a shorter test with fewer items.

Difficulties of IRT: requires a bank of items that have been systematically evaluated for level of difficulty 🡪 complex computer software is required and much effort in test development.

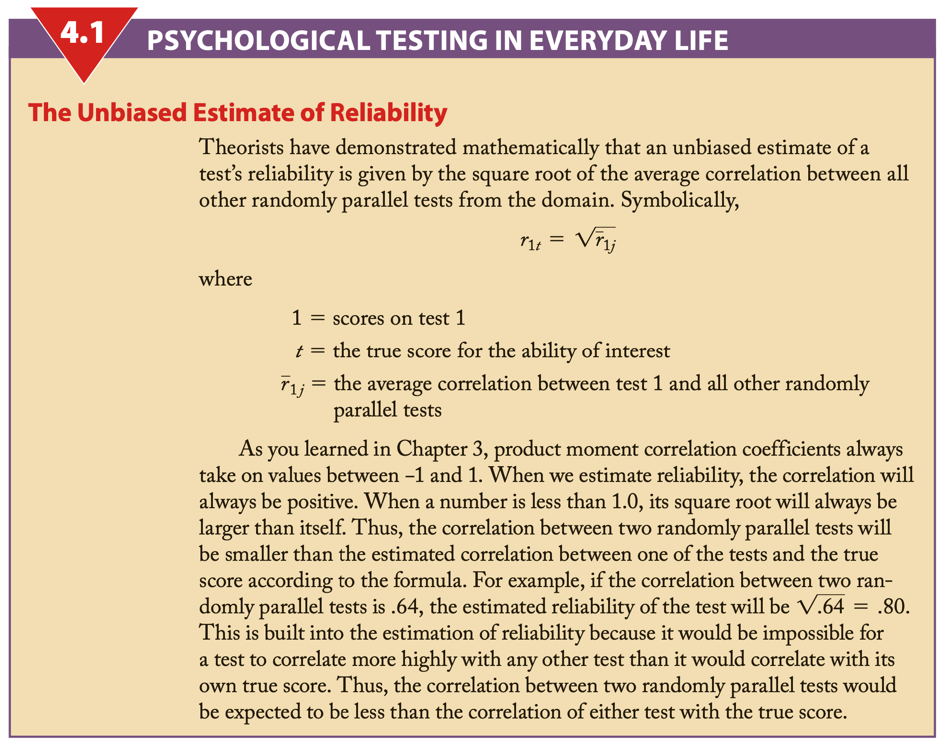

Reliability coefficient = the ratio of the variance of the true scores on a test to the variance of the observed scores.

σ2 = describes theoretical values in a population

S2 = values obtained from a sample

If r = .40: 40% of the variation or difference among the people will be explained by real differences among people, and 60% must be ascribed to random or chance factors.

An observed score may differ from a true score for many reasons.

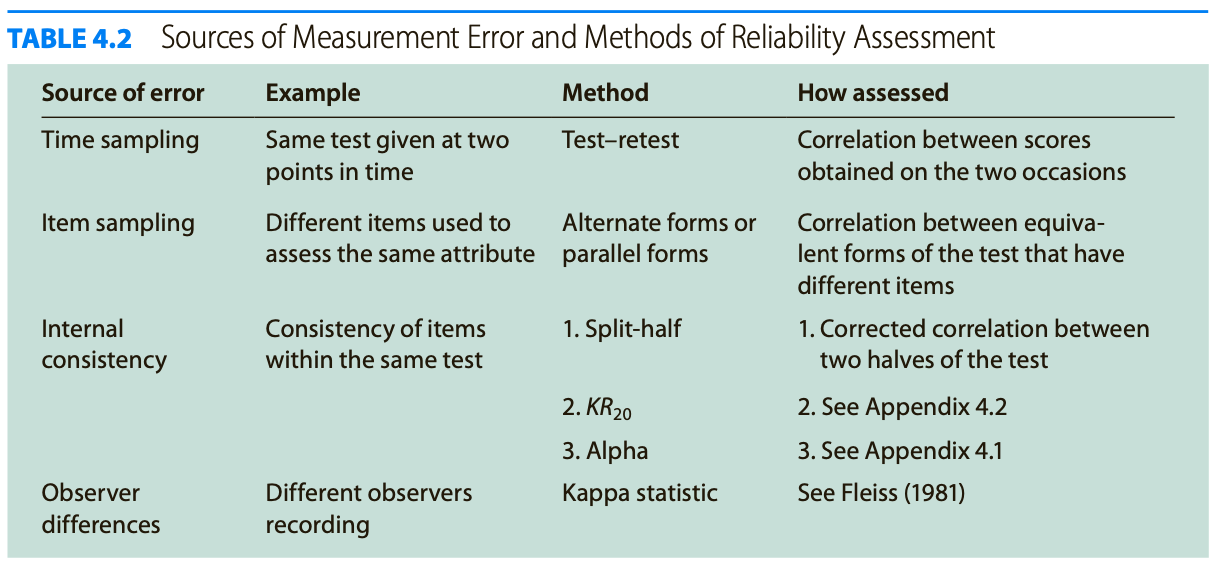

Test reliability is usually estimated in 1 of 3 ways:

- Test-retest method = we consider the consistency of the test results when the test is administered on different occasions.

- Parallel forms = we evaluate the test across different forms of the test.

- Internal consistency = we examine how people perform on similar subsets of items selected from the same form of the measure.

Test-retest method:

- Time sampling

- Evaluation of the error associated with administering the test at 2 different times

- Only when we measure “traits” or characteristics that do not change over time

- Easy to evaluate: find the correlation between scores from the 2 administrations

- Carryover effect = occurs when the first testing session influences scores from the second session 🡪 e.g., remembering answers

- Due to this, the test-retest correlation usually overestimates the true reliability

- One type of carryover effect = practice effects = some skills improve with practice

- Systematic carryover does not harm the reliability 🡪 when everyone’s score improves 5 points 🡪 no new/low reliability

- Random carryover effects 🡪 when something affects some but not all takers of the test 🡪 new reliability/low reliability

- The time interval between testing sessions must be selected and evaluated carefully

- Too close in time: high risk of carryover (and practice) effects

- Too far apart: many other factors can intervene to affect scores, e.g., change of a characteristic

Parallel forms:

- Item sampling

- To determine the error variance that is attributable to the selection of 1 set of items

- Compares 2 equivalent forms of a test that measure the same attribute

- The 2 forms use different items 🡪 however, the rules used to select items of a particular difficulty level are the same

- Equivalent forms reliability = when 2 forms of the test are available, one can compare performance on one form versus the other

Internal consistency:

- All the measures of internal consistency evaluate the extent to which the different items on a test measure the same ability or trait 🡪 low estimates of reliability if the test is designed to measure several traits

- Split-half method

- A test is given and divided into halves that are scored separately

- The results of one half of the test are then compared with the results of the other half

- If a test is long: best method is to divide the items randomly into 2 halves

- Some people prefer to calculate a score for the first half of the items and another score for the second half = easier

- However, can cause problems: if the second half of the items is more difficult than the first half

- If the items get progressively more difficult, then you might be better advised to use the odd-even system = one sub score for the odd-numbered items of the test and one for the even-numbered items

- However, can cause problems: if the second half of the items is more difficult than the first half

- Half-length of the test: has a lower reliability value than the complete test 🡪 test scores gain reliability as the number of items increases

- To correct for this: Spear-Brown formula = allows you to estimate what the correlation between the 2 halves would have been if each half had been the length of the whole test

- Increases the estimate of reliability 🡪 estimate of reliability based on 2 half-tests would be deflated because each half would be less reliable than the whole test

- To correct for this: Spear-Brown formula = allows you to estimate what the correlation between the 2 halves would have been if each half had been the length of the whole test

- No Spear-Brown formula when: 2 halves have unequal variances 🡪 Cronbach’s Alpha

- Provides the lowest estimate of reliability that one can expect

- If alpha is high, then you might assume that the reliability of the test is acceptable because the lowest boundary of reliability is still high 🡪 the reliability will not drop below alpha

- A low alpha level gives you less information 🡪 the actual reliability may still be high

- Can confirm that a test has substantial reliability, but cannot tell you that a test is unreliable

- Kuder-Richardson technique

- Addition to split-half technique: also estimating internal consistency

- No problems with unequal variances

- Considers simultaneously all possible ways of splitting the items

- Formula is for calculating the reliability of a test in which the items are dichotomous 🡪 scored 0 or 1 (usually right or wrong)

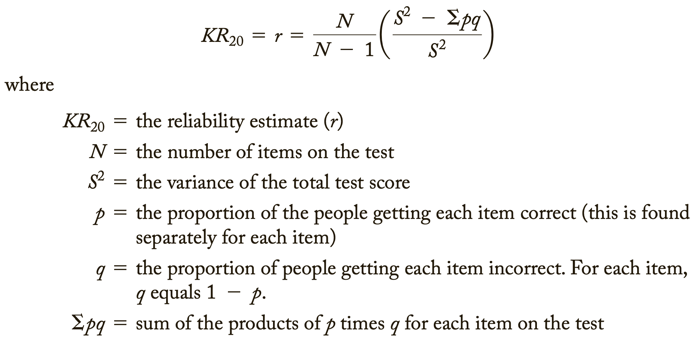

- Kuder-Richardson 20, KR20 or KR20

- pq = variance for an individual item/Σpq = the sum of the individual item variances

- Adjustment for the number of items in the test 🡪 will allow an adjustment for the greater error associated withs shorter tests

- Because the Kuder-Richardson procedure is general, it is usually more valuable than a split-half estimate of internal consistency 🡪 it evaluates reliability within a single test administration

- Covariance = occurs when the items are correlated with each other 🡪 they can be assumed to measure the same general trait, and the reliability of the test will be high

- Only situation that will make the sum of the item variance less than the total test score variance

- Difficulty = the percentage of test takers who pass the item

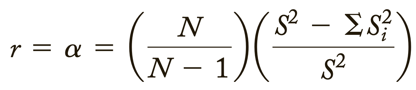

- Coefficient/Cronbach’s Alpha

- Kuder-Richardson technique requires that you find the proportion of people who got each item “correct” 🡪 many types of tests have no right or wrong answers, e.g., when Likert scales are used

- A more general reliability estimate: estimates the internal consistency of tests in which the items are not scored as 0 or 1 (right or wrong)

- Only difference with above is that Σpq has been replaced by ΣSi2

- = is for the variance of the individual items (i)

- Si2 can describe the variance of items whether or not they are in a right-wrong format

- Thus, Coefficient Alpha is the most general method of finding estimates of reliability through internal consistency

Factor analysis = popular method for dealing with the situation in which a test apparently measures several different characteristics.

- Can be used to divide the items into subgroups: each internally consistent

- The subgroups of items will not be related to one another

- Is of great value in the process of test construction: can help a test constructor build a test that has sub measures for several different traits 🡪 these subsets will then be internally consistent

Difference score = created by subtracting one test from another

- The difference between performances at 2 points in time

- The difference between measures of 2 different abilities (standardized units needed, Z-scores)

- In a difference score, the error (E) is expected to be larger than either the observed score (X) or the true score (T) 🡪 E absorbs error from both scores used to create the difference score

- Furthermore, T might be expected to be smaller than E because whatever is common to both measures is cancelled out when the difference score is created.

- As a result, the reliability of a difference score is expected to be lower than the reliability of either score on which it is based.

Psychologists with behavioural orientations usually prefer not to use psychological tests 🡪 direct observation of behaviour.

- Psychologists cannot always monitor behaviour continuously 🡪 they often take samples of behaviours at certain time intervals

- In practice, behavioural observation systems are frequently unreliable because of discrepancies between true scores and the scores recorded by the observer.

- Interrater-/interscorer-/interobserver-/interjudge reliability = estimating the reliability of the observers = assessing the problem of error associated with different observers

- Consider the consistency among different judges who are evaluating the same behaviour

2 of 3 ways of measuring this type of reliability:

- Record the percentage of items that 2 or more observers agree upon 🡪 most common, but not good, e.g., not considering chance

- Kappa statistic = measure of agreement between judges who use nominal scales to rate

- Indicates the actual agreement as a proportion of the potential agreement following correction for chance agreement 🡪 best method

- Values of Kappa:

- 1 = perfect agreement

- -1 = less agreement than can be expected based on chance alone

- Higher than .75 = excellent agreement

- .4-.75 = fair to good/satisfactory agreement

- Less than .4 = poor agreement

Standard reliability of a test depends on the situation in which the test will be used:

- Sometimes bringing a test up to an exceptionally high level of reliability may not be worth the extra time and money.

- On the other hand, strict standards for reliability are required for a test used to make decisions that will affect people’s lives.

When a test has an unacceptable low reliability 2 approaches are:

- Increase the length of the test 🡪 the number of items/questions

- The larger the sample, the more likely that the test will represent the true characteristic 🡪 according to the domain sampling model: each item in a test is an independent sample of the trait or ability being measured.

- The reliability of a test increases as the number of items increases.

- By using the Spearman-Brown formula, one can estimate how many items will have to be added to bring a test to an acceptable level of reliability 🡪 so the test will not be too long or costly 🡪 = prophecy formula

- The larger the sample, the more likely that the test will represent the true characteristic 🡪 according to the domain sampling model: each item in a test is an independent sample of the trait or ability being measured.

- Throw out items that run down the reliability

2 approaches to ensure that items measure the same thing:

- Perform factor analysis

- Tests are most reliable if they are unidimensional = one factor should account for considerably more of the variance than any other factor 🡪 items that do not load on this factor might be best omitted.

- To divide the test into homogeneous subgroups of items.

- Discriminability analysis = examine the correlation between each item and the total score of the test

- When the correlation between the performance on a single item and the total test score is low, this item is probably measuring something different from the other items on the test.

- It also might mean that the item is so easy or so hard that people do not differ in their response to it.

- In either case: the low correlation indicates that the item drags down the estimate of reliability and should be excluded.

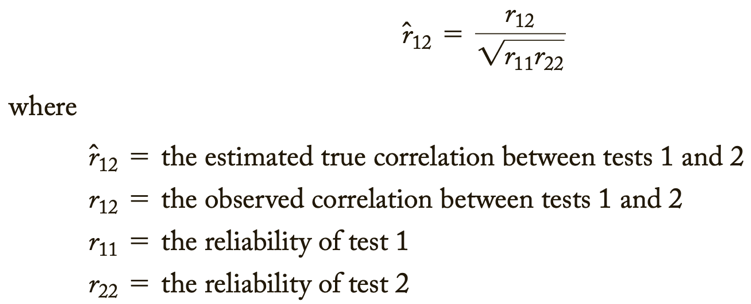

Low reliability 🡪 findings/correlation not significant 🡪 potential correlations are attenuated, or diminished, by measurement error 🡪 obtained information has no value

- Correction for attenuation = dealing with the problem of low reliability by estimating what the correlation between tests would have been if there had been no measurement error 🡪 “correct” for the attenuation in the correlations caused by the measurement error.

- For this, one needs to know only the reliability of 2 tests and the correlation between them.

Adding more questions to heighten the reliability can be dangerous: it can impact the validity and it takes more time to take the test.

Too high reliability 🡪 remove redundant items/items that do not add anything 🡪 we want some error

Great instruction goes together with a high reliability.

For research rules are less strict than for individual assessment.

The Dutch Committee on Testing (COTAN) of the Dutch Psychological Association (NIP) publishes a book containing ratings of the quality of psychological tests.

- As a result of the growing awareness of the importance “to promote the better use of better tests” the NIP founded the COTAN.

The COTAN adopts the information approach, which entails the policy of improving test use and test quality by informing test constructors, test users, and test publishers about the availability, the content, and the quality of tests.

2 key instruments used are:

- Documentation of Tests and Test Research

- Contains a description of all tests available in The Netherlands (650+)

- Contains excerpts of research conducted with these tests

- A criterion for being listed in the book is that at least some research in Dutch samples on norms, reliability, or validity has been published.

- The descriptions have a fixed format and concern 8 characteristics:

- Target group(s)

- Construct(s) to be measured

- Availability/publisher

- Administration procedure

- Time needed for administration

- Content (e.g., number and type of items)

- Scoring (way of scoring, available norm groups, type of norms)

- References

- Dutch Rating System for Test Quality

- Evaluates the quality of a test on 7 criteria

- Rating each criterion: insufficient, sufficient, or good

- The 2009 revision:

- Similarities: the general structure and the assessment criteria

- Changes:

- Texts of all criteria were adapted to apply to both paper-and-pencil tests and computer-based tests

- For each criterion the items and recommendations were extended to include new developments (or revived old techniques)

The 7 criteria of the Dutch Rating System for Test Quality:

- Theoretical basis of the test

- Should enable the prospective test user to judge whether the test is suitable for his/her purposes.

- 3 items that deal with the theoretical basis and the logic of the test development procedure.

- Key item:

- Asks whether the manual clarifies the construct that the test purports to measure, the groups for which the test is meant, and the application of the test.

- Quality of the test materials

- The new rating system contains separate items for paper-and-pencil tests and computer-based tests.

- Both sets contain 8 items of which 3 are key items.

- Key items:

- The standardization of the test content

- The objectivity of the scoring system

- The presence of unnecessary culture-bound words or content that may be offensive to specific ethnic, gender, or other groups.

- Comprehensiveness of the manual

- Evaluates the comprehensiveness of the information the manual provides to the test user to enable the well-founded and responsible use of the test.

- Contains 7 items of which 1 is a key item.

- Key item:

- Ask whether there is a manual at all.

- For computer-based tests 3 extra items are available 🡪 about installation of the software, etc.

- Norms

- Scoring a test usually results in a raw score 🡪 partly determined by characteristics of the test, such as number of items, time limits, item difficulty, and test conditions 🡪 raw scores are difficult to interpret and unsuited for practical use.

- To give meaning to a raw score 2 ways of scaling or categorizing raw scores can be distinguished:

- Norm-referenced interpretation = a set of scaled scores or norms may be derived from the distribution of raw scores of a reference group.

- Domain-referenced interpretation = standards may be derived from a domain of skills or subject matter to be mastered, or criterion-referenced interpretation = cut scores may be derived from the results of empirical validity research.

- Raw scores will be categorized in 2 (e.g., “pass” or “fail) or more different score ranges.

- The provision of norms, standards, or cut scores is a basic requirement for the practical use of most tests, but there are exceptions.

- Criterion is assessed using 2 key items and 3 separate sections on norm-referenced, domain-referenced, and criterion-referenced interpretation.

- Key items:

- Checks whether norms, standards, or cut scores are provided

- Asks in which year or period the data were collected

- Reliability

- Reliability results should be evaluated from the perspective of the test’s application 🡪 different estimation methods may produce different reliability estimates, and in different groups the test score may have different reliabilities.

- The objective of the reliability analysis is to estimate the degree to which test-score variance is due to true-score variance.

- 3 items, of which 1 is a key item.

- Key item:

- Checks whether any reliability results are provided at all.

- Construct validity

- Validity = extent to which a test fulfils its purpose

- When the purpose is description, other validity information is required than whether the purpose is prediction of classification.

- Construct validity is required for almost all tests, whatever the purpose of test use.

- Construct-related evidence should support the claim that the test measures the intended trait or ability.

- The structure of the items with respect to construct validity is the same as for reliability.

- First, the provision of results is ascertained by means of a key item.

- Criterion validity

- Will not be required for tests that are not intended for prediction.

- Demonstrates that a test score is a good predictor of non-test behaviour or outcome criteria.

- Prediction can focus on:

- Past = retrospective validity

- Same moment in time = concurrent validity

- Future = predictive validity

- The structure of the items with respect to criterion validity is the same as for construct validity.

Quality of Dutch tests:

- For 5 of the 7 criteria, the quality of a considerable majority of the test (about 2/3 or more) is at least “sufficient”.

- However, for 2 criteria (norms and criterion validity) the quality of most tests is “insufficient”.

Overall picture is positive showing that the quality of the test repertory is gradually improving.

Standards for educational and psychological testing; 3 sections:

- Foundations = focuses on basic psychometric concepts such as validity and reliability

- Operations = considers how tests are designed and built and how they are administered, scored, and reported. It also reviews standards for test manuals and other documentation.

- Application = takes on a wide range of issues, ranging from training required to administer and interpret tests.

Validity = does the test measure what it is supposed to measure?

- Evidence of validity of a test establishes the meaning of a test.

- The agreement between a test score or measure and the quality it is believed to measure.

Validity is the evidence for inferences made about a test score.

3 types of evidence:

- Construct related

- Criterion related

- Content related

Every time we claim that a test score means something different from before, we need a new validity study 🡪 ongoing process.

- Validity really refers to evidence supporting what can be said on the basis of the test scores and not to the tests themselves.

Subtypes of validity:

- Face validity

- Measures whether a test looks like it tests what it is supposed to test.

- Face validity if the items seem to be reasonably related to the perceived purpose of the test.

- Not technically a form of validity

- Can motivate test takers 🡪 the test appears relevant

- Content validity

- Considers the adequacy of representation of the conceptual domain the test is designed to cover.

- Based on the correspondence between the item content and the domain the items represent 🡪 items are rated in terms of relevance to the content.

- Of greatest concern in educational testing

- Logical rather than statistical

- Threats to content validity:

- Construct underrepresentation = the failure to capture important components of a construct 🡪 the assessment is “too small”

- Construct-irrelevant variance = occurs when scores are influenced by factors irrelevant to the construct 🡪 the assessment is “too big”

- Criterion validity

- Tells us how well a test corresponds with a particular criterion = the standard against which the test is compared.

- Evidence is provided by high correlations between a test and a well-defined criterion measure.

- Types of criterion validity: past present or future

- Retrospective validity = the extent to which an instrument that purports to measure a particular behaviour can be shown to correlate with past occurrences that demonstrate this behaviour. Not used very often past (you already know what the diagnosis contains so there is bias with answering)

- Predictive validity = evidence that a test score or other measurement correlates with a variable that can only be assessed at some point after the test had been administered or the measurement made. future (take a test to see if therapy will work then you give therapy and see if the test predicted the outcome)

- Comes from studies that use a test to forecast performance on a criterion that is measured at some point in the future.

- The test itself is the predictor variable and the outcome is the criterion.

- Many tests do not have exceptional predictive validity.

- Concurrent validity = the degree to which the measures gathered from one tool agree with the measures gathered from other assessment techniques. present (test and clinician gives diagnosis and you see if they give the same answer)

- Obtained from correlations between the test and a criterion when both are measured at the same point in time.

- Another use: when a person does not know how he or she will respond to the criterion measure.

- Criterion-referenced tests = participants are evaluated against the specific criterion instead of how they performed relative to other participants. These tests have items that are designed to match certain specific instructional objectives.

- A style of test which uses test scores to generate a statement about the behaviour that can be expected of a person with that score.

- Construct validity

- Construct = something built by mental synthesis (e.g., intelligence).

- Is used when a specific criterion is not well-defined.

- Is used to determine how well a test measures what it is supposed to measure.

- Is established through a series of activities in which a researcher simultaneously defines some construct and develops the instrumentation to measure it.

- Required when: “no criterion or universe of content is accepted as entirely adequate to define the quality to be measured”

- It involves assembling evidence about what a test means. Done by showing the relationship between a test and other tests and measures.

- Types of construct validity:

- Convergent validity = when a measure correlates well with other tests believed to measure the same construct. Obtained in one of 2 ways:

- We show that a test measures the same things as other tests used for the same purpose.

- We demonstrate specific relationships that we can expect if the test is really doing its job.

- Discriminant validity = proof that the test measures something unique/distinct from other tests.

- Indicates that the measure does not represent a construct other than the one for which it was devised.

- To demonstrate this, a test should have low correlations with measures of unrelated constructs or with what the test does not measure.

- By providing evidence that a test measures something different from other tests, we also provide evidence that we are measuring a unique construct.

- Convergent validity = when a measure correlates well with other tests believed to measure the same construct. Obtained in one of 2 ways:

Examples of the subtypes of validity:

Face validity Does the content of the test appear to be suitable to its aims? | You create a survey to measure the regularity of people’s dietary habits. You review the survey items, which ask questions about every meal of the day and snacks eaten in between for every day of the week. On its surface, the survey seems like a good representation of what you want to test, so you consider it to have high face validity. |

|---|---|

Content validity Is the test fully representative of what it aims to measure? | A mathematics teacher develops an end-of-semester algebra test for her class. The test should cover every form of algebra that was taught in the class. If some types of algebra are left out, then the results may not be an accurate indication of student’s understanding of the subject. Similarly, if she includes questions that are not related to algebra, the results are no longer a valid measure of algebra knowledge. |

Criterion validity Do the results accurately measure the concrete outcome that they are designed to measure? | A university professor creates a new test to measure applicants’ English writing ability. To assess how well the test really does measure students’ writing ability, she finds an existing test that is considered a valid measurement of English writing ability and compares the results when the same group of students take both tests. If the outcomes are very similar, the new test has high criterion validity. |

Construct validity Does the test measure the concept that it’s intended to measure? | There is no objective, observable entity called “depression” that we can measure directly. But based on existing psychological research and theory, we can measure depression based on a collection of symptoms and indicators, such as low self-confidence and low energy levels. |

Validity coefficient = correlation that describes the relationship between a test and a criterion 🡪 tells the extent to which the test is valid for making statements about the criterion.

- Between .30-.40 are commonly considered adequate.

- Larger than .60 rarely found in practice.

- Is statistically significant if the chances of obtaining its value by chance alone are quite small 🡪 usually less than 5 in 100.

- A score tells us more than we would know by chance.

- The validity coefficient squared = the percentage of variation in the criterion that we can expect to know in advance because of our knowledge of the test scores.

- E.g., we will know .40 squared, or 16%, of the variation in college performance because of the information we have from the SAT test.

- In many circumstances, using a test is not worth the effort because it contributes only a few percentage points to the understanding of variation in a criterion.

- However, low validity coefficients (.30 to .40) can sometimes be especially useful even though they may explain only 10% of the variation in the criterion.

- In some circumstances, though, a validity coefficient of .30 or .40 means almost nothing.

Because not all validity coefficients of .40 have the same meaning, you should watch for several things in evaluating such information 🡪 evaluating validity coefficients:

- Look for changes in the cause of relationships (between test and criterion)

- Be aware that the conditions of a validity study are never exactly reproduced.

- What does the criterion mean?

- Criterion-related studies mean nothing at all unless the criterion is valid and reliable.

- Some test constructors attempt to correlate their tests with other tests that have unknown validity 🡪 a meaningless group of items that correlates well with another meaningless group of items remains meaningless.

- The criterion should relate specifically to the use of the test.

- E.g., because the SAT attempts to predict performance in college, the appropriate criterion is GPA (a measure of college performance). Any other inferences made based on the SAT require additional evidence.

- Review the subject population in the validity study

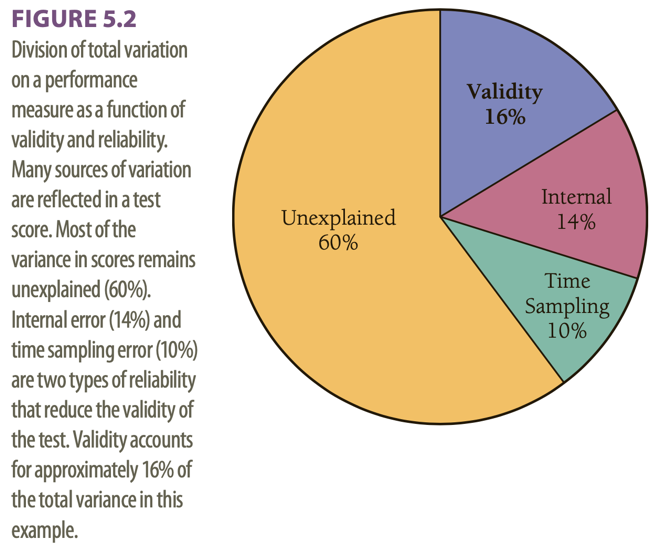

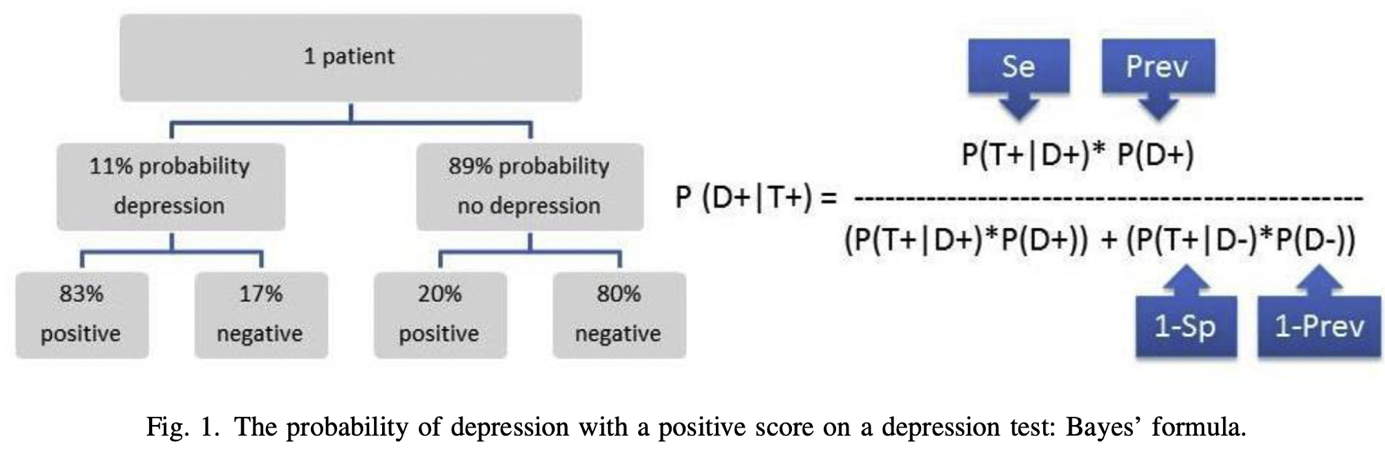

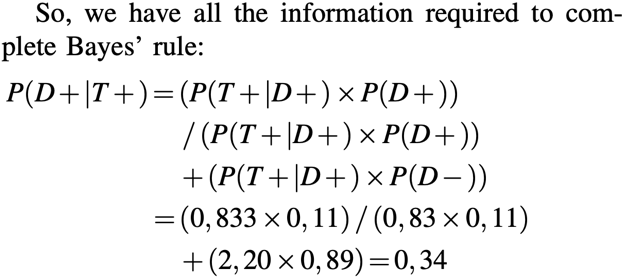

- The validity study might have been done on a population that does not represent the group to which inferences will be made.