Intro to AI

What is supervised learning (learn by example)?

model is trained on labeled data - learns a mapping from input to labeled output.

the labeled data acts as a teacher → goal is to train the model or machine to make predictions and decisions on new data based on the examples it was given.

What is classification?

sorts data into predefined classes by applying characteristics learned from labeled examples.

predicts discrete label or category for given input

Ex: Spam detection, disease detection, image classification, bank loan prediction
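
A minimal sketch of classification, assuming a toy spam dataset and a 1-nearest-neighbor rule (just one of many possible classifiers). The two features, the data values, and the name `predict_label` are made up for illustration.

```python
# Toy labeled data: (num_links, num_exclamation_marks) -> label (made-up values)
TRAIN = [
    ((0, 0), "ham"),
    ((1, 0), "ham"),
    ((0, 1), "ham"),
    ((7, 5), "spam"),
    ((9, 8), "spam"),
    ((6, 9), "spam"),
]

def predict_label(features, train=TRAIN):
    """Return the label of the closest training example (1-nearest neighbor)."""
    def sq_dist(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))
    _, label = min(train, key=lambda item: sq_dist(item[0], features))
    return label

print(predict_label((8, 7)))  # closest to the spam examples
print(predict_label((1, 1)))  # closest to the ham examples
```

The output is always one of the predefined classes, which is what makes this classification rather than regression.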

What is regression?

used to predict a continuous numerical value for a given input

Ex: house price, stock price
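
A minimal sketch of regression, assuming a straight-line model fitted by ordinary least squares. The house sizes and prices are made-up numbers and `fit_line` is an illustrative name.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Made-up data: house size (m^2) -> price (thousands); here price = 3 * size
sizes = [50, 70, 90, 110]
prices = [150, 210, 270, 330]
slope, intercept = fit_line(sizes, prices)

# Predict a continuous value for an unseen input
predicted = slope * 100 + intercept
print(slope, intercept, predicted)
```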

What is unsupervised learning (learn by observation)?

trained on unlabeled data

model learns by finding patterns - model learns through observations and finds structure in the data.

What is clustering?

groups data points into clusters so that points in the same cluster are similar to each other

Ex: search engine, face recognition, targeted marketing, recommender system
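
A minimal sketch of clustering, assuming k-means (Lloyd's algorithm) on 1-D data with hand-picked starting centers. The notes do not name a specific algorithm, so k-means is an assumption, and the data is made up.

```python
def k_means_1d(points, centers, iterations=10):
    """Lloyd's algorithm on 1-D data, starting from the given centers."""
    centers = list(centers)
    for _ in range(iterations):
        # Assignment step: each point joins its nearest center
        clusters = [[] for _ in centers]
        for p in points:
            nearest = min(range(len(centers)), key=lambda i: abs(p - centers[i]))
            clusters[nearest].append(p)
        # Update step: move each center to the mean of its cluster
        centers = [
            sum(c) / len(c) if c else centers[i]
            for i, c in enumerate(clusters)
        ]
    return centers, clusters

points = [1.0, 1.5, 2.0, 10.0, 10.5, 11.0]   # unlabeled data
centers, clusters = k_means_1d(points, centers=[0.0, 5.0])
print(centers)
print(clusters)
```

No labels are used anywhere: the two groups come only from how close the points are to each other.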

What is Association Rule Mining?

used to discover interesting relationships or patterns between variables in large datasets (often in the form of if-then rules)

Ex: Market basket analysis (identifying which products are frequently purchased together), recommendation system, census data, medical diagnosis
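
A minimal sketch of how an if-then rule can be scored, assuming the usual support and confidence measures (not named in the notes) and a made-up list of shopping baskets.

```python
# Made-up transactions: each set is one shopping basket
BASKETS = [
    {"bread", "butter", "milk"},
    {"bread", "butter"},
    {"bread", "jam"},
    {"milk", "eggs"},
    {"bread", "butter", "jam"},
]

def support(itemset, baskets=BASKETS):
    """Fraction of baskets that contain every item in `itemset`."""
    return sum(itemset <= b for b in baskets) / len(baskets)

def confidence(antecedent, consequent, baskets=BASKETS):
    """Estimated P(consequent | antecedent) for the rule antecedent -> consequent."""
    return support(antecedent | consequent, baskets) / support(antecedent, baskets)

print(support({"bread", "butter"}))       # 3 of the 5 baskets
print(confidence({"bread"}, {"butter"}))  # 3 of the 4 baskets that contain bread
```

A rule such as "if bread then butter" would be kept only if both numbers clear thresholds chosen by the analyst.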

What is reinforcement learning (learn from mistakes)?

agent is rewarded or penalized based on correct or wrong actions - the agent interacts with the environment and finds the best outcome, leading to fewer mistakes over time

model trains itself based on the reward points it gets
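
A minimal sketch of the reward/penalty loop, assuming tabular Q-learning with epsilon-greedy exploration (one common method, not named in the notes). The 5-cell corridor environment, the reward values, and all hyperparameters are made up for illustration.

```python
import random

N_STATES = 5                 # positions 0..4 in a corridor; position 4 is the goal
ACTIONS = ["left", "right"]

def step(state, action):
    """Return (next_state, reward, done) for the toy corridor."""
    if action == "right":
        nxt = state + 1
        if nxt == N_STATES - 1:
            return nxt, 1.0, True       # reward for reaching the goal
        return nxt, 0.0, False
    if state == 0:
        return 0, -1.0, False           # penalty for walking into the wall
    return state - 1, 0.0, False

def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        state = 0
        for _ in range(100):            # cap on episode length
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)                      # explore
            else:
                best = max(q[(state, a)] for a in ACTIONS)
                ties = [a for a in ACTIONS if q[(state, a)] == best]
                action = rng.choice(ties)                         # exploit
            nxt, reward, done = step(state, action)
            future = 0.0 if done else gamma * max(q[(nxt, a)] for a in ACTIONS)
            # Move the estimate toward reward + discounted future value
            q[(state, action)] += alpha * (reward + future - q[(state, action)])
            state = nxt
            if done:
                break
    return q

q = train()
policy = [max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(N_STATES - 1)]
print(policy)
```

The learned policy is read off by taking the highest-valued action in each state.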

What are the 3 steps for ML?

Train - train model with data

Validate - evaluate the model’s performance on a separate data set and fine-tune the hyperparameters without touching the test set (so the final result is not affected)

Test - final test, use on test set (not involved in training or validating)

we test on held-out data to detect overfitting

overfitting: model performs well on the specific training set but not on unseen data (too specific to that set)

the model should provide generalization

should be applicable to different data

retrain if model does not work anymore
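
A minimal sketch of preparing the data for the three steps above, assuming a random shuffle and a 70/15/15 split. The fractions and the name `split_dataset` are illustrative choices, not from the notes.

```python
import random

def split_dataset(rows, train_frac=0.7, val_frac=0.15, seed=0):
    """Shuffle rows and cut them into train / validation / test lists."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    n_train = round(len(shuffled) * train_frac)
    n_val = round(len(shuffled) * val_frac)
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]      # everything left over
    return train, val, test

train, val, test = split_dataset(range(100))
print(len(train), len(val), len(test))
```

Each row ends up in exactly one set, so the test rows are never seen during training or validation.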

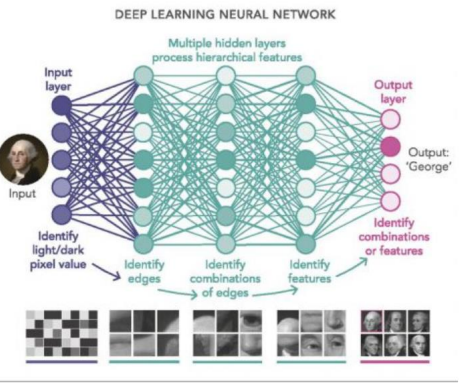

What are neural networks?

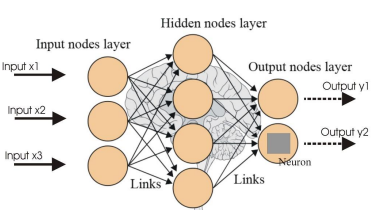

aims to mimic how the human brain works + its structure

consists of layers of interconnected nodes (neurons) that signal each other

good for complex tasks like facial recognition, NLP, speech recognition

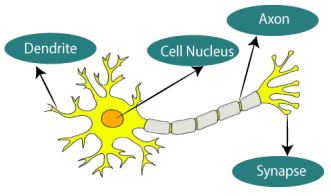

In human brain, dendrites: input, cell body: processor, synapse: link, axon: output

Neural Network:

Input Layer: takes in raw data as input - node represents feature of data

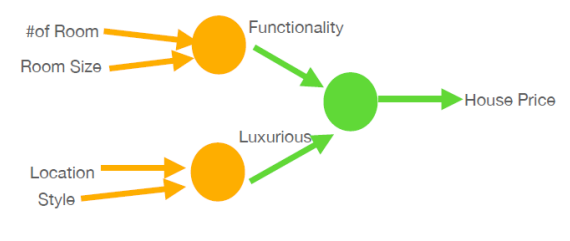

Hidden Layer: processes input through a series of transformations

they learn and extract patterns - no. of hidden nodes represents the model’s complexity

Output Layer: produces final result

e.g. a class label for classification or a number for regression

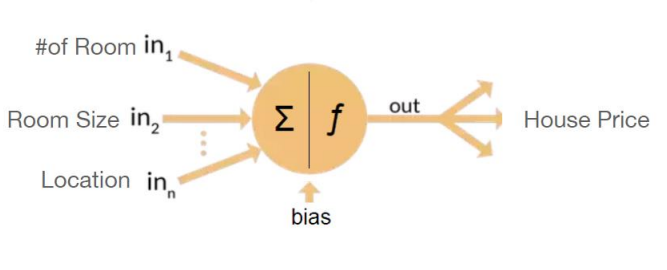

Simple neural network with bias calculation and no hidden layers

more complex with hidden layers
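
A minimal sketch of the forward pass in both cases, assuming a sigmoid activation and hand-picked (not learned) weights and biases. The helper name `dense` and all numbers are made up for illustration.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def dense(inputs, weights, biases):
    """One fully connected layer: each output is sigmoid(weighted sum of inputs + bias)."""
    return [
        sigmoid(sum(x * w for x, w in zip(inputs, row)) + b)
        for row, b in zip(weights, biases)
    ]

x = [1.0, 2.0]    # two input features, one per input node

# No hidden layer: the inputs feed a single output neuron directly
simple_out = dense(x, weights=[[0.5, -0.25]], biases=[0.0])

# One hidden layer with two nodes, followed by the output neuron
hidden = dense(x, weights=[[0.5, -0.25], [-1.0, 1.0]], biases=[0.0, -1.0])
deep_out = dense(hidden, weights=[[1.0, 1.0]], biases=[-1.0])

print(simple_out, hidden, deep_out)
```

Training would adjust the weights and biases; here they are fixed so the arithmetic is easy to follow by hand.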

In facial recognition, features are transformed into matrix of numbers that ML models can use to identify faces

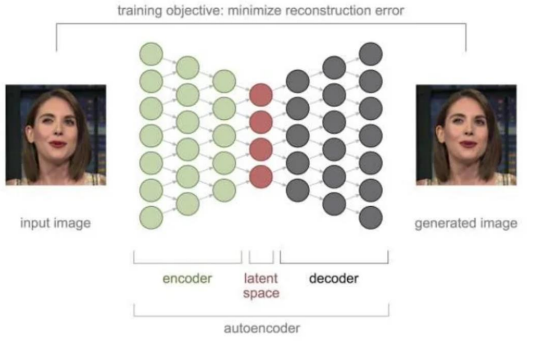

Autoencoders: NN that encodes input data into a lower-dimensional representation and converts it back to the original dimension

dimension reduction, anomaly detection

learn compressed rep. (encoding) while preserving important features
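
A minimal sketch of the encode/decode idea, assuming a linear encoder and decoder with hand-set weights instead of a trained network. It compresses 2-D points to a 1-D code and uses the reconstruction error as an anomaly score; the direction vector and the points are made up.

```python
import math

# Hand-set weights (not learned): project 2-D points onto the direction (1, 1) / sqrt(2)
DIRECTION = (1 / math.sqrt(2), 1 / math.sqrt(2))

def encode(point):
    """2-D input -> 1-D code (the compressed representation)."""
    return point[0] * DIRECTION[0] + point[1] * DIRECTION[1]

def decode(code):
    """1-D code -> 2-D reconstruction."""
    return (code * DIRECTION[0], code * DIRECTION[1])

def reconstruction_error(point):
    """Distance between the input and its reconstruction."""
    return math.dist(point, decode(encode(point)))

typical = (2.0, 2.1)     # close to the line y = x that the code can represent
unusual = (3.0, -3.0)    # far from that line

print(reconstruction_error(typical))   # small
print(reconstruction_error(unusual))   # large, so it could be flagged as an anomaly
```

A real autoencoder learns its encoder and decoder weights from data, usually with nonlinear layers.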

How do you evaluate the performance of your model?

Split data into 3 sets (when the dataset is not too small)

1. Training Set: contains majority of data used to teach/train models to recognize patterns

Ex: in an MLP, training learns the weights between neurons that best fit the data

2. Validation Set: tune hyperparameters and avoid overfitting

Finding the right hyperparameters (fine-tuning)

Ex: best number of hidden units in MLP

3. Test set: evaluate model’s performance on completely unseen data

used after training and validation process is complete

parameters are fixed

output is an evaluation metric: accuracy, precision, recall, MSE
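
A minimal sketch of how the validation and test sets are used differently, assuming a k-nearest-neighbor regressor where k is the hyperparameter. The model choice, the candidate values of k, and the data are made up for illustration.

```python
def knn_predict(train, x, k):
    """Average the targets of the k training points closest to x."""
    nearest = sorted(train, key=lambda row: abs(row[0] - x))[:k]
    return sum(y for _, y in nearest) / k

def mse(train, rows, k):
    """Mean squared error of the k-NN predictions on `rows`."""
    return sum((knn_predict(train, x, k) - y) ** 2 for x, y in rows) / len(rows)

# Made-up (x, y) rows, already split into the three sets
train_set = [(0, 0.1), (1, 0.9), (2, 2.2), (3, 2.8), (4, 4.1), (5, 5.0)]
val_set = [(1.5, 1.5), (3.5, 3.4)]
test_set = [(2.5, 2.6), (4.5, 4.4)]

# Validation: pick the hyperparameter k with the lowest validation error
best_k = min([1, 2, 4], key=lambda k: mse(train_set, val_set, k))

# Test: k is now fixed; report the error on data not used so far
test_mse = mse(train_set, test_set, best_k)
print(best_k, test_mse)
```

The test set is touched only once, after k has been chosen, so the reported error is not biased by the tuning.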

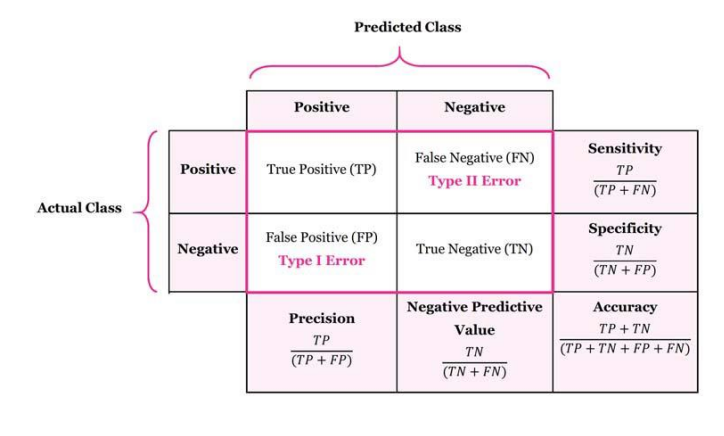

How can we tell how well our machine works in supervised learning?

Regression: how closely the predictions match the original data

Classification: how often the predicted class matches the actual outcome

What is the accuracy rate?

ratio of correctly predicted instances to total instances

(TP + TN) / Total

best when data is balanced
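
A minimal sketch of why accuracy is most trustworthy on balanced data, assuming made-up labels with 95 negatives and 5 positives and a "model" that always predicts negative.

```python
def accuracy(y_true, y_pred):
    """(TP + TN) / total: the share of predictions that match the labels."""
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)

# Imbalanced made-up labels: 95 negatives (0) and 5 positives (1)
y_true = [0] * 95 + [1] * 5
always_negative = [0] * 100          # never predicts the positive class

print(accuracy(y_true, always_negative))   # high, yet every positive is missed
```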

What is recall/sensitivity?

correctly predicted positive instances over the actual total positive instances

TP/(TP + FN)

how well model identifies actual positives

What is precision?

correctly predicted positive instances/ total predicted positive instances

Of all the predicted positives, which of them are actually positive?

how accurate is the model when predicting positives

Ex: in medical diagnosis, high precision minimizes false alarms

TP/ (TP + FP)
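
A minimal sketch computing precision and recall from predictions, assuming binary labels where 1 is positive and 0 is negative. The label lists are made up.

```python
def confusion_counts(y_true, y_pred):
    """Count TP, FP, FN, TN for binary labels (1 = positive, 0 = negative)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == 1 and p == 1 for t, p in pairs)
    fp = sum(t == 0 and p == 1 for t, p in pairs)
    fn = sum(t == 1 and p == 0 for t, p in pairs)
    tn = sum(t == 0 and p == 0 for t, p in pairs)
    return tp, fp, fn, tn

y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 0, 1, 0, 0, 0, 0, 0]
tp, fp, fn, tn = confusion_counts(y_true, y_pred)

precision = tp / (tp + fp)   # of the predicted positives, how many are actually positive
recall = tp / (tp + fn)      # of the actual positives, how many were found
print(tp, fp, fn, tn, precision, recall)
```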

What are all the rates?

TPR: TP/(TP + FN)

TNR: TN/(TN + FP)

FPR: FP/(FP + TN)

FNR: FN/(TP + FN)

What is specificity?

correctly predicted negative instances / total actual negative instances

TN/ (TN + FP)

how well model identifies actual negative value
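
A minimal sketch of the four rates from one set of confusion-matrix counts, using made-up counts. It also shows that the rates pair up: the two rates over actual positives sum to 1, and so do the two over actual negatives.

```python
def rates(tp, fp, fn, tn):
    """Return the four rates computed from confusion-matrix counts."""
    return {
        "TPR": tp / (tp + fn),   # recall / sensitivity
        "FNR": fn / (tp + fn),
        "TNR": tn / (tn + fp),   # specificity
        "FPR": fp / (fp + tn),
    }

r = rates(tp=40, fp=5, fn=10, tn=45)   # made-up counts
print(r)
print(r["TPR"] + r["FNR"], r["TNR"] + r["FPR"])
```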

What are some applications of generative AI?

Image/ Video generation

Music/sound generation

text generation and copywriting

healthcare: personalized treatment plans

gaming: character and environmental creation

customer service and chatbots: virtual assistants

What is an ROC curve?

plots TPR (y-axis) against FPR (x-axis) at different classification thresholds; the closer the curve is to the top-left corner (TPR = 1, FPR = 0), the better

the bigger the area under the curve, the better the classification

a good classifier can properly distinguish between positives and negatives
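
A minimal sketch of building ROC points and the area under the curve, assuming the model outputs a score per instance and that no two scores are tied. The scores and labels are made up, and the trapezoid rule is one simple way to get the area.

```python
def roc_points(y_true, scores):
    """Return (FPR, TPR) pairs obtained by lowering the threshold one score at a time."""
    positives = sum(y_true)
    negatives = len(y_true) - positives
    ranked = sorted(zip(scores, y_true), reverse=True)
    points, tp, fp = [(0.0, 0.0)], 0, 0
    for _, label in ranked:
        if label == 1:
            tp += 1
        else:
            fp += 1
        points.append((fp / negatives, tp / positives))
    return points

def auc(points):
    """Area under the curve by the trapezoid rule."""
    return sum((x2 - x1) * (y1 + y2) / 2
               for (x1, y1), (x2, y2) in zip(points, points[1:]))

y_true = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.2]   # made-up model scores, no ties

points = roc_points(y_true, scores)
print(points)
print(auc(points))
```

An area of 1.0 means every positive is scored above every negative; 0.5 is what random scoring gives on average.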

What is a chatbot?

computer program designed to simulate conversations with human users

What is natural language understanding?

uses computer software to understand text/speech input

Parsing: breaks text into structured format that computers can understand

allows computers to respond to human text instead of relying on computer language syntax

makes it possible to carry out dialogue with computers with human language (since human language is complex and ambiguous)

What are the key components of NLU?

Intent Recognition:

identifying the user’s objective

establish meaning of text

Entity Recognition:

identifies things in the message and extracts details about those entities

Ex: Name: John (person, organization, etc… different groups of entities)
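
A toy sketch of the two NLU components, assuming a rule-based approach with keyword matching for intents and regular expressions for entities. Real systems typically use trained models; the intent names, keywords, and patterns here are hypothetical.

```python
import re

# Hypothetical intents and trigger keywords, chosen only for illustration
INTENT_KEYWORDS = {
    "book_flight": {"book", "flight", "fly"},
    "check_weather": {"weather", "forecast", "rain"},
}

def recognize_intent(text):
    """Pick the intent whose keywords overlap most with the message."""
    words = set(re.findall(r"[a-z]+", text.lower()))
    best = max(INTENT_KEYWORDS, key=lambda intent: len(words & INTENT_KEYWORDS[intent]))
    return best if words & INTENT_KEYWORDS[best] else "unknown"

def recognize_entities(text):
    """Extract a person name and a destination with two simple patterns."""
    entities = {}
    name = re.search(r"\b[Mm]y name is ([A-Z][a-z]+)", text)
    place = re.search(r"\bto ([A-Z][a-z]+)", text)
    if name:
        entities["person"] = name.group(1)
    if place:
        entities["location"] = place.group(1)
    return entities

message = "My name is John and I want to book a flight to Paris"
print(recognize_intent(message))
print(recognize_entities(message))
```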

What is the main goal of NLP?

enable computers to understand, interpret and generate human language in a way that is meaningful and relevant.

What are the phases of NLP?

Lexical Analysis:

breaking text into smaller units/ tokens

identifying words, numbers, punctuation

removing spaces and formatting (unnecessary)

Syntactic Analysis:

examines sentence structure

parts of speech

determines how words relate to each other

Semantic Analysis:

understand literal meaning of words and phrases

identify relationships between words

synonyms, antonyms

interpret figurative language

Discourse Analysis:

understand relationships between sentences

identify main topic and theme

recognize overall structure

track reference (like pronouns) across the text

Pragmatic Analysis:

interprets intent behind words

considers real-world context

understands implied meaning

shift from literal meaning to what text implies in the specific context
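
A minimal sketch of the first phase only (lexical analysis), assuming a regular-expression tokenizer. The token categories `NUMBER`, `WORD`, and `PUNCT` and the pattern itself are illustrative choices.

```python
import re

# Numbers (with optional decimals), then words, then single punctuation marks
TOKEN_PATTERN = re.compile(r"\d+(?:\.\d+)?|\w+|[^\w\s]")

def tokenize(text):
    """Split text into number, word, and punctuation tokens; whitespace is dropped."""
    tokens = []
    for match in TOKEN_PATTERN.finditer(text):
        tok = match.group()
        if tok[0].isdigit():
            kind = "NUMBER"
        elif tok[0].isalnum() or tok[0] == "_":
            kind = "WORD"
        else:
            kind = "PUNCT"
        tokens.append((kind, tok))
    return tokens

print(tokenize("Pay  $3.50 now!"))
```

The later phases (syntactic, semantic, discourse, pragmatic) would work on these tokens rather than on raw characters.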

What are some examples of NLP?

Googling: Q & A

Summarization: text analysis and generation

Machine Translation: translate from one language to another (combines fragments of translations together)

What is generative AI?

AI that generates new content like images, sounds, text

Ex: ChatGPT, DALL-E (image generation), deepfake

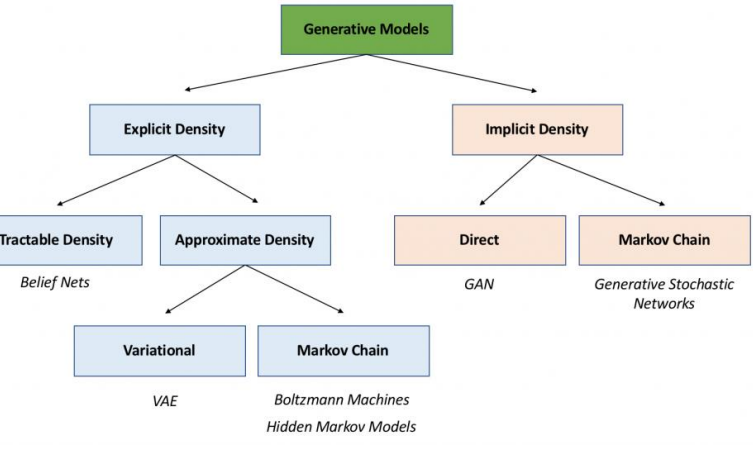

What are the types of generative models?

Explicit Density Models

Explicitly define a PDF, i.e. a probability density function (can compute the likelihood of data points and generate samples from it)

Ex: PixelCNN, autoregressive models → put together pixel by pixel

Implicit Density Models

Do not define a PDF (generate samples directly and learn to approximate the data distribution without an explicit likelihood)

GANs
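
A minimal sketch of an explicit density model, assuming a character-level bigram model as a stand-in for autoregressive models such as PixelCNN. It can both score a sequence (explicit likelihood) and generate one symbol at a time. The `^` and `$` start/end markers and the training strings are made up.

```python
import math
import random
from collections import Counter, defaultdict

def fit_bigram(sequences):
    """Estimate P(next symbol | current symbol) by counting."""
    counts = defaultdict(Counter)
    for seq in sequences:
        padded = "^" + seq + "$"            # hypothetical start/end markers
        for cur, nxt in zip(padded, padded[1:]):
            counts[cur][nxt] += 1
    return {
        cur: {nxt: c / sum(ctr.values()) for nxt, c in ctr.items()}
        for cur, ctr in counts.items()
    }

def log_likelihood(model, seq):
    """Explicit density: log P(seq) is the sum of log P(symbol | previous symbol)."""
    padded = "^" + seq + "$"
    return sum(math.log(model[cur][nxt]) for cur, nxt in zip(padded, padded[1:]))

def sample(model, rng):
    """Generate a sequence one symbol at a time until the end marker is drawn."""
    out, cur = "", "^"
    while True:
        symbols, probs = zip(*model[cur].items())
        cur = rng.choices(symbols, weights=probs)[0]
        if cur == "$":
            return out
        out += cur

model = fit_bigram(["ab", "ab", "aab"])
print(math.exp(log_likelihood(model, "ab")))
print(sample(model, random.Random(0)))
```

An implicit model such as a GAN offers only the sampling side: it has no function like `log_likelihood`. Note that this sketch raises `KeyError` for transitions it never saw in training.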

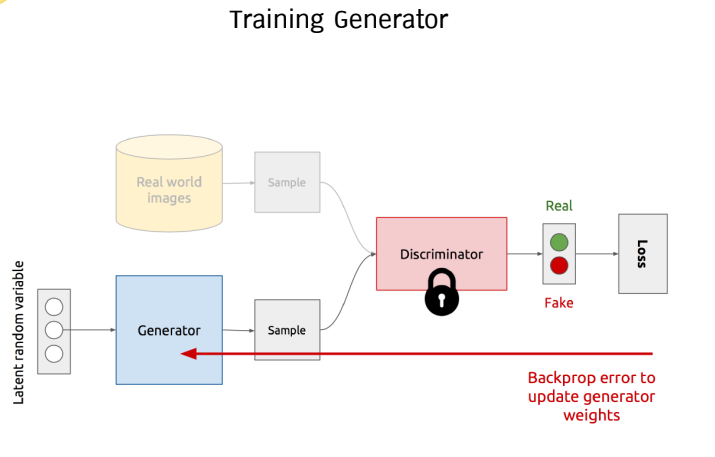

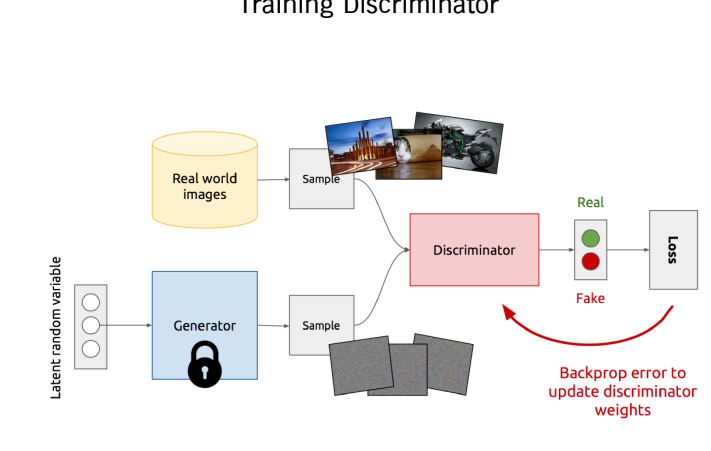

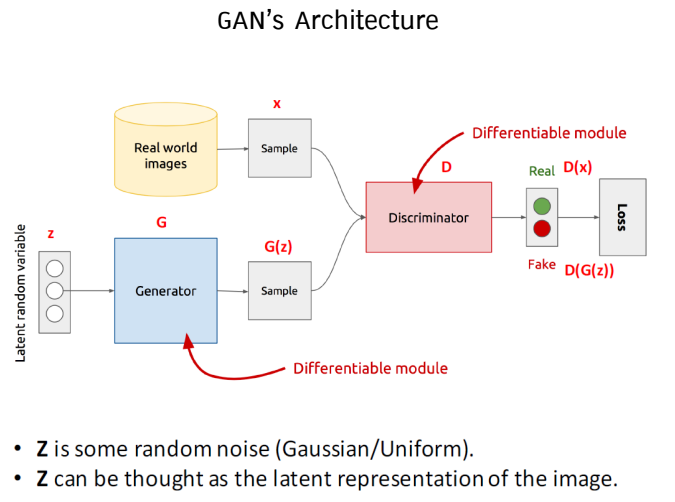

What are GANs?

Generative Adversarial Networks: 2-part model

Generator: creates data

Trains by backpropagating the discriminator’s feedback to the generator

Discriminator: evaluates authenticity (generated vs. real)

Trains by backpropagating its classification error on real and generated images to adjust its weights

What are the steps to training GANs?

Collect and preprocess dataset

train generator and discriminator iteratively

evaluate and fine-tune model
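
A minimal sketch of the alternating training loop, assuming a 1-D toy problem with hand-derived gradients instead of a deep learning library. The generator is just a learnable shift `b`, the discriminator is a single logistic unit, and the "real" data is drawn from N(4, 1); all of these are made-up simplifications. GAN training can oscillate, so this shows the update structure rather than guaranteed convergence.

```python
import math
import random

def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def train_gan(steps=2000, lr=0.05, seed=0):
    rng = random.Random(seed)
    w, c = 0.1, 0.0      # discriminator D(x) = sigmoid(w * x + c)
    b = 0.0              # generator G(z) = z + b, with noise z ~ N(0, 1)
    for _ in range(steps):
        real = rng.gauss(4.0, 1.0)            # made-up "real" data
        fake = rng.gauss(0.0, 1.0) + b        # generated sample

        # Discriminator step: minimize -[log D(real) + log(1 - D(fake))]
        d_real = sigmoid(w * real + c)
        d_fake = sigmoid(w * fake + c)
        grad_w = -(1 - d_real) * real + d_fake * fake
        grad_c = -(1 - d_real) + d_fake
        w, c = w - lr * grad_w, c - lr * grad_c

        # Generator step: minimize -log D(G(z)) using the updated discriminator
        fake = rng.gauss(0.0, 1.0) + b
        d_fake = sigmoid(w * fake + c)
        grad_b = -(1 - d_fake) * w            # gradient flows through D into G
        b = b - lr * grad_b
    return w, c, b

w, c, b = train_gan()
print(w, c, b)
```

The generator never sees real data directly; it only receives the gradient that comes back through the discriminator.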

What are deepfakes?

synthetic media where a person’s likeness, actions, or voice is replaced with another’s using models like GANs

Ex: Entertainment: movies, dubbing, Education: re-enactments, Creative Arts

What are some ethical issues concerning deepfakes?

privacy

fraud

damage to reputation

misinformation

manipulation

copyright

How are deepfakes made?

Training data: collecting images and videos

AI techniques: GANs

Tools: software like DeepFaceLab

What are autoencoders?

deep neural network (DNN) used for generating deepfakes

Encoder: maps input data to a lower dimension (captures only the important details)

Decoder: reconstructs the original as closely as possible

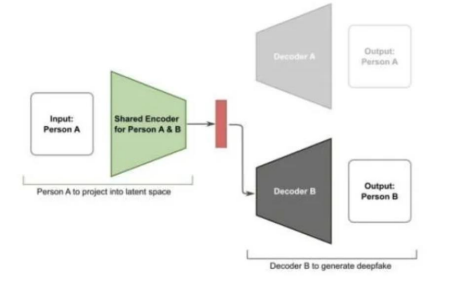

How do shared encoders help in deepfakes?

using shared encoders will produce overlapping latent space

can exhibit same emotion, head posture

What is deep learning?

uses neural networks to mimic the way the brain works

effective for complex tasks

What is computer vision?

enables computers to derive information from images, videos, and other visual inputs

What are some things we can see in the future with AI?

Customized Chatbots: users create their own mini chatbots catering to specific needs

Science: researchers can analyze massive amounts of data, discover complex relationships, and uncover patterns

Foreign Policy: accelerate AI development to maintain competitiveness

Climate Crisis: optimizing energy consumption, predicting natural disasters