Virtual memory

Transparency- Each process runs as if it owns all of memory

Efficiency- Minimal overhead in time and space

Protection- One proc can’t read/modify another program or the kernel’s memory

Went from whole process swapping to on-demand paging

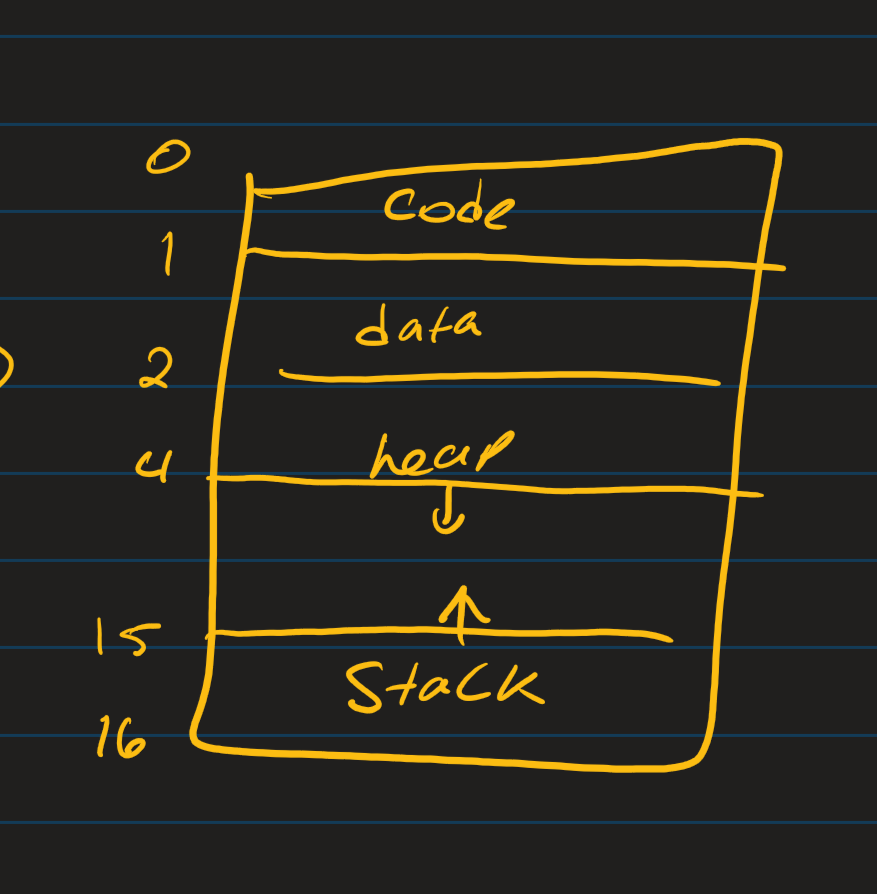

An address space is the process’s private view of memory

Within the chunk of memory for process A, the heap grows up as we request dynamic memory, and the stack grows downward as frames are pushed on calls

The illusion is contiguous memory, but physically, each region may be scattered across frames

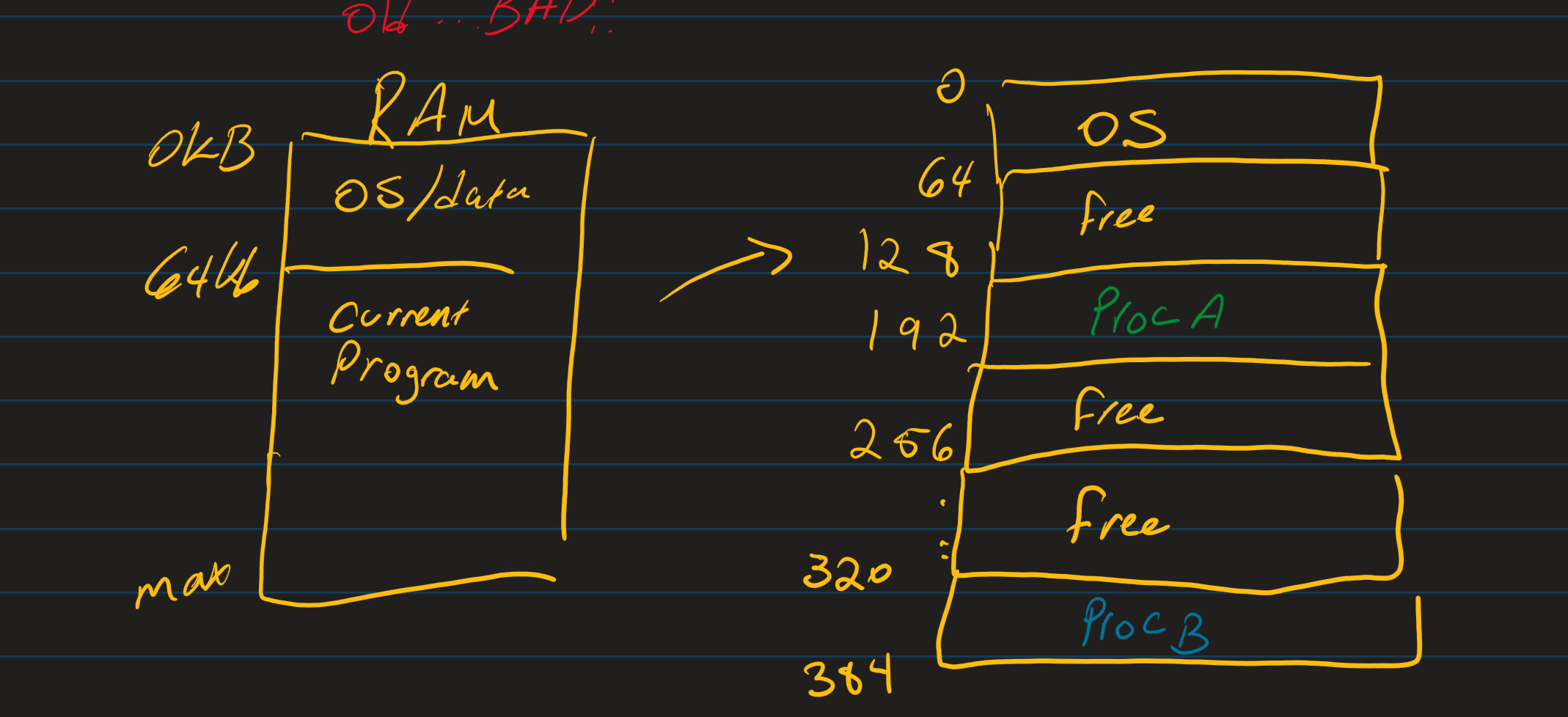

Mechanisms and Address Translation

Base and bounds

The CPU adds a base register to every logical address to produce a physical address and checks a bound register to ensure the address is in range.

eg) base = 1000, bound = 500 → Legal physical addresses (PA) are 1000 to 1499

eg) base = 2000, bound = 300 → Legal PAs are 2000 to 2299
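
A minimal sketch of the base-and-bounds check, assuming the bound is the size of the region and logical addresses start at 0. The function name `translate_base_bounds` is made up for illustration; real hardware does this in the MMU, not in software.

```python
def translate_base_bounds(logical_addr: int, base: int, bound: int) -> int:
    """Return the physical address, or raise if the logical address is out of range."""
    # Bounds check first: legal logical addresses are 0 .. bound-1.
    if not 0 <= logical_addr < bound:
        raise MemoryError(f"logical address {logical_addr} is outside bound {bound}")
    # Relocation: add the base register to get the physical address.
    return base + logical_addr


# First example from the notes: base = 1000, bound = 500.
print(translate_base_bounds(0, 1000, 500))    # 1000
print(translate_base_bounds(499, 1000, 500))  # 1499
```

Logical address 500 would raise here, since it maps to PA 1500, one past the legal range.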

Pros: Simple protection and relocation

Cons: External fragmentation over time; free regions become split into small holes that are hard to use

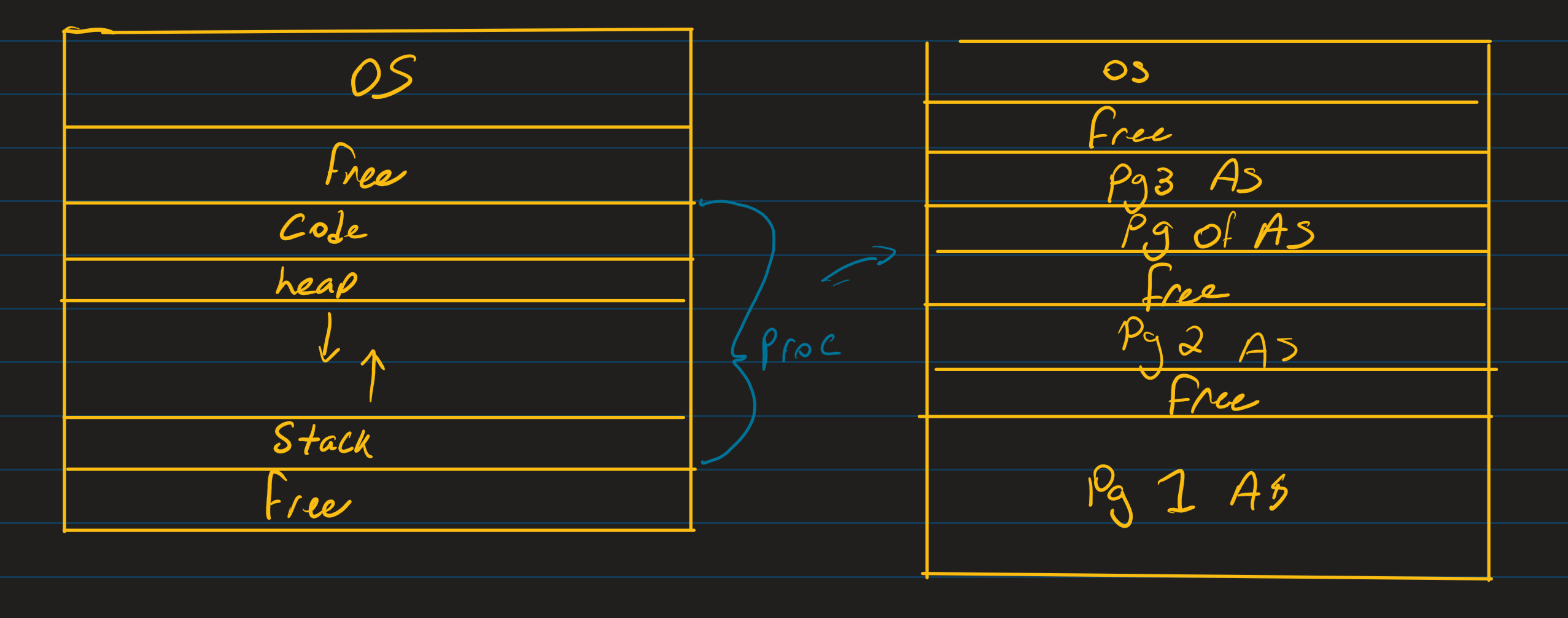

Paging

Paging fixes this by using fixed-size chunks. A process’s virtual address space (VA) is divided into pages. Physical memory is divided into frames of the same size.

Page table: Maps from virtual page numbers (VPN) to physical frame numbers (PFNs)

Fixed size eliminates external fragments, but we can still have internal fragmentation (When we don’t use a full page)

Sharing becomes easier: 2 processes can map the same physical frame read-only for shared libraries (.so, .dll)

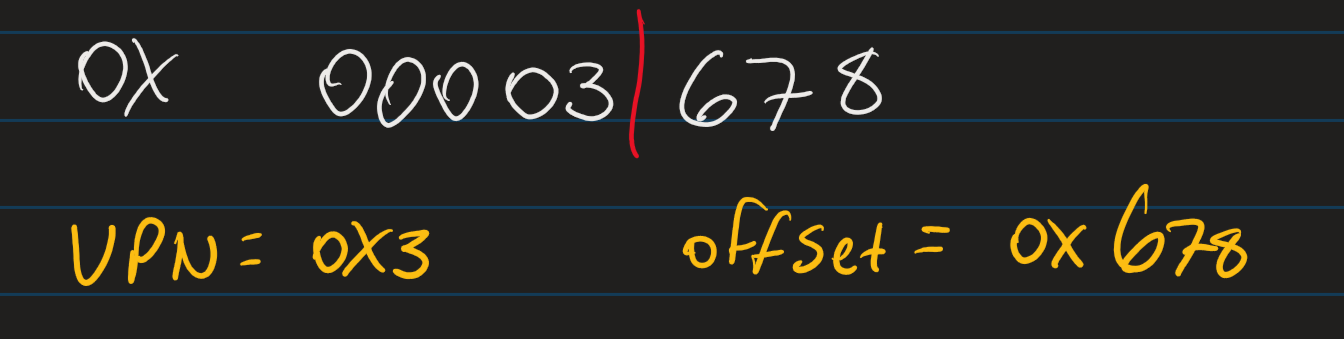

Eg)

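Page size = 4KiB → 12 offset bits; 32-bit VA → 20 bits for the VPN

Page table lookup on the VPN gives the physical frame number (For example, PFN is 0x15)

PA = (0x15 << 12) | 0x678 = 0x15678, where 0x678 is the page offset copied unchanged from the VA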
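
The same arithmetic as a small sketch. The page table is modeled as a plain dict, and the full virtual address `0x12345678` (VPN `0x12345`) is an assumed value chosen so the offset comes out as 0x678; only the PFN 0x15 and the offset are from the example above.

```python
OFFSET_BITS = 12                      # 4 KiB pages -> 12 offset bits
OFFSET_MASK = (1 << OFFSET_BITS) - 1  # 0xFFF


def split_va(va: int) -> tuple[int, int]:
    """Split a virtual address into (VPN, offset)."""
    return va >> OFFSET_BITS, va & OFFSET_MASK


def translate_paged(va: int, page_table: dict[int, int]) -> int:
    """Translate a VA using a dict that maps VPN -> PFN."""
    vpn, offset = split_va(va)
    pfn = page_table[vpn]             # a KeyError here stands in for a page fault
    return (pfn << OFFSET_BITS) | offset


page_table = {0x12345: 0x15}          # hypothetical mapping for illustration
print(hex(translate_paged(0x12345678, page_table)))  # 0x15678
```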

TLB and Page Table Walk

Translation Lookaside Buffer = Small, fast cache of VPN→PFN and Permissions

On a hit → immediate translation

On miss→ HW walks page table to find a PTE, then fills the TLB

If the TLB is full, evict an entry based on an eviction policy like random (cheap to implement in hardware) or an approximation of LRU
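
A toy model of the hit/miss/fill/evict flow, assuming a fully associative TLB with random eviction. The class `ToyTLB` and its fields are made up for illustration; the dict lookup on a miss stands in for the hardware page table walk.

```python
import random


class ToyTLB:
    def __init__(self, capacity: int, page_table: dict[int, int], seed: int = 0):
        self.capacity = capacity
        self.page_table = page_table      # VPN -> PFN, stands in for the in-RAM table
        self.entries: dict[int, int] = {}  # cached VPN -> PFN translations
        self.hits = 0
        self.misses = 0
        self._rng = random.Random(seed)

    def lookup(self, vpn: int) -> int:
        if vpn in self.entries:           # hit: immediate translation
            self.hits += 1
            return self.entries[vpn]
        self.misses += 1
        pfn = self.page_table[vpn]        # miss: "walk" the page table
        if len(self.entries) >= self.capacity:
            victim = self._rng.choice(list(self.entries))  # random eviction
            del self.entries[victim]
        self.entries[vpn] = pfn           # fill the TLB
        return pfn


tlb = ToyTLB(capacity=2, page_table={1: 10, 2: 20, 3: 30})
for vpn in [1, 1, 2, 1, 3]:
    tlb.lookup(vpn)
print(tlb.hits, tlb.misses)  # 2 3
```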

PTE Fields:

Valid (V): Mapping exists and the page is in memory

R/W/X: Read/Write/Execute permissions

User/Supervisor (U/S): Whether user-mode code may access the page or only the kernel

Accessed/Dirty (A/D): Reference and write tracking
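
One way to picture these fields is as bit flags checked on every access. The bit positions below are invented for illustration and do not match any particular architecture; `check_access` is a hypothetical helper that returns which kind of fault, if any, the access would raise.

```python
from enum import IntFlag


class PTEFlags(IntFlag):
    # Bit positions are assumptions, not a real hardware layout.
    VALID = 1 << 0
    READ = 1 << 1
    WRITE = 1 << 2
    EXEC = 1 << 3
    USER = 1 << 4
    ACCESSED = 1 << 5
    DIRTY = 1 << 6


def check_access(flags: PTEFlags, *, write=False, execute=False, user_mode=True) -> str:
    """Return 'ok', 'not-present', or 'protection' for one access attempt."""
    if not flags & PTEFlags.VALID:
        return "not-present"
    if user_mode and not flags & PTEFlags.USER:
        return "protection"               # user code touching a kernel-only page
    needed = PTEFlags.EXEC if execute else PTEFlags.WRITE if write else PTEFlags.READ
    if not flags & needed:
        return "protection"
    return "ok"


code_page = PTEFlags.VALID | PTEFlags.READ | PTEFlags.EXEC | PTEFlags.USER
print(check_access(code_page))              # ok
print(check_access(code_page, write=True))  # protection
```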

Page tables live in RAM → they themselves consume memory

Flat table for a 32-bit VA with 4KiB pages: 2^20 (1M) PTEs × 4B each → About 4MiB per proc

Hence multi-level page tables: allocate lower levels on demand, so space scales with the virtual regions actually used
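
A rough size comparison, assuming a two-level table with a 10/10/12 bit split (the classic 32-bit layout, but an assumption here) and 4-byte entries at both levels. The three touched addresses are made-up stand-ins for code, heap, and stack regions.

```python
OFFSET_BITS = 12
LEVEL_BITS = 10          # assumed: 10 bits per level, 1024 entries per table
PTE_SIZE = 4             # bytes per entry

# Flat table: one PTE for every possible virtual page.
flat_bytes = (1 << (32 - OFFSET_BITS)) * PTE_SIZE
print(flat_bytes // 1024, "KiB")  # 4096 KiB, i.e. 4 MiB


def indices(va: int) -> tuple[int, int, int]:
    """Split a 32-bit VA into (top-level index, second-level index, offset)."""
    l1 = va >> (OFFSET_BITS + LEVEL_BITS)
    l2 = (va >> OFFSET_BITS) & ((1 << LEVEL_BITS) - 1)
    return l1, l2, va & ((1 << OFFSET_BITS) - 1)


def two_level_bytes(touched_vas: list[int]) -> int:
    """Top-level table plus one second-level table per distinct top-level index."""
    table_bytes = (1 << LEVEL_BITS) * PTE_SIZE   # 4 KiB per table
    used_l1 = {indices(va)[0] for va in touched_vas}
    return table_bytes * (1 + len(used_l1))


touched = [0x00400000, 0x08000000, 0xBFFFF000]   # hypothetical code, heap, stack
print(two_level_bytes(touched) // 1024, "KiB")   # 16 KiB
```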

Page Faults :/

Page Fault types:

Not present: PTE invalid or page absent. OS must bring the required page into memory from disk, or allocate a new page, to resolve the fault

Protection Violation: PTE present, but perms deny access

Often results in a kill (segfault) unless the OS can adjust (eg. copy-on-write, COW)

Fault Handling:

Trap to kernel, save context

Inspect Faulting VA and cause (not present vs protection)

If not present, choose free frame or a victim (replacement)

If evicting a victim and it’s dirty: write back to disk

Read required page (or allocate a new one) into a frame

Update PTE; Flush/Adjust TLB as needed

Resume at faulting instruction
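
The not-present path of the steps above can be sketched as a small simulation. Everything here is a simplification under stated assumptions: `ToyPager` is a made-up class, the victim is chosen FIFO, disk reads and writes are only counted, and protection faults and the TLB are not modeled.

```python
from collections import deque


class ToyPager:
    def __init__(self, num_frames: int):
        self.free_frames = deque(range(num_frames))
        self.page_table = {}      # vpn -> {"frame": int, "dirty": bool}; absent = not present
        self.resident = deque()   # resident VPNs in load order (FIFO victim choice)
        self.faults = 0
        self.writebacks = 0

    def access(self, vpn: int, write: bool = False) -> int:
        if vpn not in self.page_table:    # PTE not present -> page fault
            self._handle_fault(vpn)
        if write:
            self.page_table[vpn]["dirty"] = True
        return self.page_table[vpn]["frame"]

    def _handle_fault(self, vpn: int) -> None:
        self.faults += 1
        if self.free_frames:              # prefer a free frame
            frame = self.free_frames.popleft()
        else:                             # otherwise evict a victim
            victim = self.resident.popleft()
            entry = self.page_table.pop(victim)  # invalidate the victim's PTE
            if entry["dirty"]:
                self.writebacks += 1      # stands in for writing the page to disk
            frame = entry["frame"]
        # Reading the page from disk (or zero-filling a new one) would happen here.
        self.page_table[vpn] = {"frame": frame, "dirty": False}
        self.resident.append(vpn)


pager = ToyPager(num_frames=2)
pager.access(1, write=True)
pager.access(2)
pager.access(3)                           # evicts dirty page 1
print(pager.faults, pager.writebacks)     # 3 1
```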

Replacement policies

FIFO- First in First out, Evict oldest

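LRU- Evict least recently used. Exploits locality better than FIFO, but needs to track when each page was last used (often approximated with a reference bit that is cleared on a timer)

OPT- Evict the page whose next use is farthest in the future. Needs future knowledge, so it serves only as a benchmark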
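
To compare the three policies, a sketch that counts page faults over a reference string. The reference string and the frame count of 3 are a commonly used textbook example, not something from these notes; the function names are made up.

```python
from collections import OrderedDict, deque


def fifo_faults(refs: list[int], num_frames: int) -> int:
    frames, order, faults = set(), deque(), 0
    for page in refs:
        if page in frames:
            continue
        faults += 1
        if len(frames) == num_frames:
            frames.remove(order.popleft())   # evict the oldest loaded page
        frames.add(page)
        order.append(page)
    return faults


def lru_faults(refs: list[int], num_frames: int) -> int:
    frames, faults = OrderedDict(), 0        # keys ordered from least to most recent
    for page in refs:
        if page in frames:
            frames.move_to_end(page)         # mark as most recently used
            continue
        faults += 1
        if len(frames) == num_frames:
            frames.popitem(last=False)       # evict the least recently used
        frames[page] = True
    return faults


def opt_faults(refs: list[int], num_frames: int) -> int:
    frames, faults = set(), 0
    for i, page in enumerate(refs):
        if page in frames:
            continue
        faults += 1
        if len(frames) == num_frames:
            future = refs[i + 1:]
            # Distance to next use; pages never used again sort as farthest.
            next_use = lambda p: future.index(p) if p in future else float("inf")
            frames.remove(max(frames, key=next_use))
        frames.add(page)
    return faults


refs = [7, 0, 1, 2, 0, 3, 0, 4, 2, 3, 0, 3, 2, 1, 2, 0, 1, 7, 0, 1]
print(fifo_faults(refs, 3), lru_faults(refs, 3), opt_faults(refs, 3))  # 15 12 9
```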

Performance Implications

TLB hit rate dominates: hits are ~1 CPU cycle; misses add a multi-level page table walk in RAM.

Page Size: Larger pages → Fewer PTEs and fewer TLB misses, BUT more internal fragmentation and potentially more I/O per fault

Memory overhead: Page tables and TLB shootdowns matter at scale

Disk I/O: Page faults to disk are orders of magnitude slower; avoiding them is critical
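
To see why the TLB hit rate matters so much, a back-of-the-envelope effective access time (EAT) calculation. The cost model and all numbers (1 time unit per TLB lookup, 100 per RAM access, a 2-level walk) are assumptions for illustration, and disk-backed page faults are left out.

```python
def effective_access_time(hit_rate: float, tlb_time: float,
                          mem_time: float, walk_levels: int) -> float:
    """Average cost of one memory access under a simple TLB cost model."""
    hit_cost = tlb_time + mem_time                            # TLB lookup + the access itself
    miss_cost = tlb_time + walk_levels * mem_time + mem_time  # plus one RAM read per level
    return hit_rate * hit_cost + (1 - hit_rate) * miss_cost


for rate in (0.90, 0.99, 0.999):
    eat = effective_access_time(rate, tlb_time=1, mem_time=100, walk_levels=2)
    print(f"hit rate {rate}: EAT = {eat:.1f}")
```

Under these assumed numbers a miss costs about three times as much as a hit, so each point of hit rate lost adds roughly 2 time units to the average access.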