Chapter 6 - Instrumental/Operant Conditioning

Instrumental Conditioning Background

Classical Conditioning vs. Instrumental Conditioning

Here are the basic structures of classical conditioning (CC) and instrumental conditioning (IC):

CC: Stimulus + Stimulus = Conditioned Reflexive Response

An example is footsteps + food = salivation to footsteps

IC: Voluntary Response/Behaviour + Consequence = Change in Frequency of Voluntary Behaviour

An example is biting one’s nails + punishment = no more biting of nails

An important difference between CC and IC is that the resulting response in CC is reflexive, whereas the resulting response in IC is voluntary. For instance, Pavlov’s dog does not consciously choose to drool in response to the bell; it is a reflexive behaviour. Conversely, if I bite my nails and someone makes a mean comment about them, I might voluntarily choose not to bite my nails anymore. This would be an instance of instrumental conditioning.

The Basic Procedure of Instrumental Conditioning

In IC, voluntary responses are modified:

STEP I: The organism ‘reacts or behaves’

Example: A dog sits

Experimental Example: A starved rat gets a basketball in a mini basket

STEP II: A behaviour modification technique is applied

Example: A treat is given to the dog

Experimental Example: The rat is given food pellets

CONSEQUENCE: The reaction or behaviour either occurs more frequently or is reduced/stopped

Example: The dog sits to receive a treat

Experimental Example: The rat dunks the basketball to get food

Note that IC can also be used to produce complex behaviours.

Instrumental Conditioning Definitions

Basically, IC is a type of learning in which the consequences of behaviour tend to modify that behaviour in the future. Essentially, behaviour that is rewarded or reinforced tends to be repeated, whereas behaviour that is ignored or punished is less likely to be repeated.

Instrumental behaviour — Behaviour that occurs because it was previously needed for producing certain consequences.

Examples include…

Lever-pressing to receive a reward

Turning the key to start the car

Pulling the handle on a slot machine to win

Driving slowly so as not to get a speeding ticket

Avoiding an electric fence to avoid getting shocked

Instrumental conditioning — Procedures developed to study instrumental behaviour through reinforcement and punishment.

Essentially, it’s looking at the whole operant conditioning process

Early Instrumental Conditioning Studies

Thorndike’s Early Studies

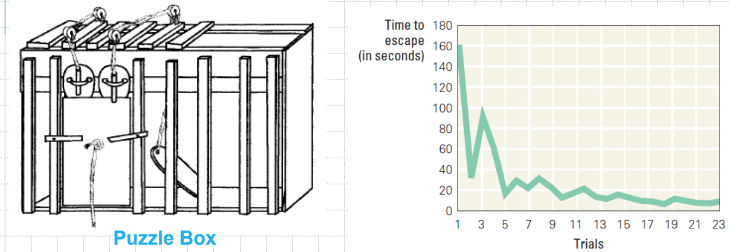

Edward L. Thorndike (1874-1949) was the first serious theoretical analyst of instrumental conditioning. His earlier experiments involved cat puzzle boxes, in which the cat had to learn to perform a particular behaviour to exit the puzzle box.

Thorndike’s puzzle box experiment:

The basic procedure of the experiment involved putting a hungry cat into a puzzle box — the cat had to pull a lever and a weighted string (in that order) to open the door of the puzzle box and get food (a positive reinforcer).

The observations were as follows: When initially placed in the box, the cat’s behaviour was chaotic and erratic, as Thorndike described it (e.g., random behaviours such as meowing or scratching various things). Eventually, the cat would incidentally pull the string and exit the puzzle box. After several trials, however, the cat took less time to exit the puzzle box because it had learned what behaviour it had to perform (e.g., pull the string) in order to get out of the box and get to the food.

Main takeaway from the puzzle box experiment:

The cat tracked the outcome of its behaviour every trial, and eventually learnt that producing a particular behaviour in the box led to a specific outcome…

The structure of this is S → R → O

In context (S), response (R) produces outcome (O)

How this knowledge guides future behaviours:

Given S → R → O…

Behaviours with positive outcomes increase

Behaviours with negative outcomes decrease
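The S → R → O idea above can be sketched in a few lines of Python. This is only an illustration: the function name `update_tendency`, the 0-to-1 "tendency" scale, and the `step` size are assumptions made for the example, not something the puzzle box experiment measured or these notes specify.

```python
def update_tendency(tendency, outcome, step=0.1):
    """Nudge a response tendency (between 0 and 1) after one trial.

    outcome > 0 stands for a positive outcome, outcome < 0 for a negative one.
    The update rule and step size are illustrative assumptions.
    """
    if outcome > 0:
        # Positive outcome: move the tendency part of the way towards 1.
        tendency += step * (1.0 - tendency)
    elif outcome < 0:
        # Negative outcome: move the tendency part of the way towards 0.
        tendency -= step * tendency
    return tendency


# Hypothetical example: five trials in a row with a positive outcome.
tendency = 0.2
for _ in range(5):
    tendency = update_tendency(tendency, outcome=+1)
```

Each positive outcome makes the response a little more likely and each negative outcome a little less likely, which is the qualitative pattern the list above describes.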

Methodological issues with the puzzle box experiment:

How long do you wait until you say the cat didn’t learn (e.g., cutoff)?

You repeat the trials over and over again, resetting the animal and device

The results are hard to compare across animals

How do you generate a prediction from latencies?

Instrumental Conditioning Procedures

Discrete-Trial Procedures

Discrete-trial procedures include puzzle boxes and maze learning. In a discrete-trial procedure, a trial ends when the instrumental behaviour is displayed (e.g., in Thorndike’s puzzle box experiment, the trial ends when the cat exits the box).

Runway Maze (Straight-Alley Maze)

The idea of a runway maze (or straight-alley maze) is to put an organism in the start box (S), and measure its running speed (or latency) to get to the goal box (G). For instance, I might put a rat in the start box, put fruit loops at the goal box, and measure the rat’s running speed (or latency) over several trials.

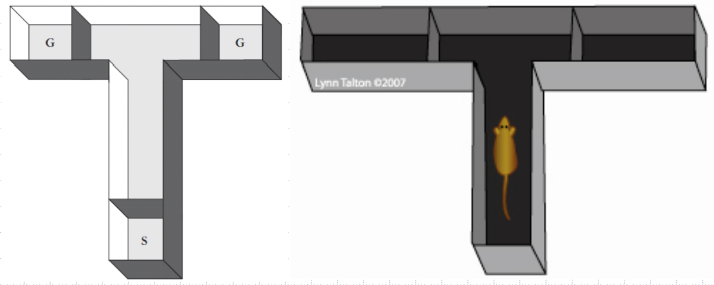

T-Maze

The T-maze is typically used for memory studies and other aspects of behaviour. The main idea behind this type of maze is that the organism is placed in the start box (S), and usually one of the two goal boxes (G) contains a reward. After the organism finds the reward on the first trial, you measure its running speed (or latency), but the main way the organism demonstrates learning is by making the correct turn towards the reward. For example, I might put a rat in a T-maze, put fruit loops in the left goal box, and measure the rat’s running speed (or latency) in addition to observing whether it makes the correct turn over several trials.

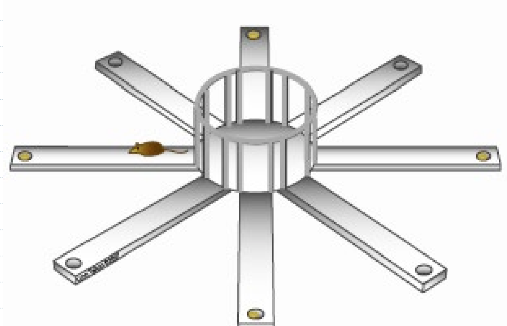

8-Arm Radial Maze

The eight-arm radial maze is also used for memory studies and other aspects of behaviour. The main idea for this type of maze is to place rewards on different arms at different times — this makes the organism learn the apparatus, and learn when and where to go for the reward. Typically, the arms are about three to four feet off the ground; the reason for this is that organisms like rats don’t like heights, so they aren’t as likely to go out on the arms unless there is a good reason to do so (e.g., a reward). Additionally, there can be discriminative stimuli for each arm (such as different flooring, lighting, or odours) for the organism to more easily distinguish each arm or to impact the likelihood for it to go on particular arms.

Free Operant Procedures

Free operant procedures differ from discrete trial procedures in one main way:

In discrete trial procedures, only one instrumental behaviour can be displayed per trial (e.g., when the organism displays the target instrumental behaviour, the trial ends)

In free operant procedures, more than one instrumental behaviour can be displayed per trial (e.g., the organism can display any number of instrumental behaviours over the duration of a trial)

An operant response is defined in terms of its effect on the environment. In other words, a response counts as operant because it changes the environment in some way. For example, a rat’s lever-pressing in a Skinner Box is an operant response because it produces food.

Different types of operant responses:

Lever-pressing

Rats learning lever-pressing

Chain-pulling

Rats or birds learning chain-pulling

Nose-poking

Rats learning to poke something with their nose

Pecking

Birds learning to peck something

What is the dependent variable?

Response-rate

Total number of responses

Latency to respond

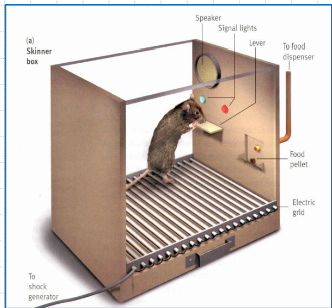
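As a rough sketch, the three dependent measures listed above could be computed from a list of response timestamps recorded during one free-operant session. The function name `summarize_session`, the use of seconds as the unit, and the example numbers are assumptions made for illustration only.

```python
def summarize_session(response_times, session_length):
    """Summarize one free-operant session.

    response_times: seconds from session start at which each response occurred.
    session_length: total session duration in seconds.
    """
    total = len(response_times)
    # Response rate expressed as responses per minute.
    rate_per_min = total / (session_length / 60.0)
    # Latency to respond: time until the first response (None if no response).
    latency = min(response_times) if response_times else None
    return {
        "total_responses": total,
        "responses_per_minute": rate_per_min,
        "latency_to_first_response": latency,
    }


# Hypothetical data: four lever presses in a two-minute session.
summary = summarize_session([12.0, 15.5, 21.0, 48.0], session_length=120.0)
```

With this made-up data the session has 4 responses in total, a rate of 2 responses per minute, and a latency of 12 seconds to the first response.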

B.F. Skinner and the Skinner Box

Skinner was considered the leading authority on IC, and was influenced by Thorndike. Skinner invented the Skinner Box to test IC through shaping. One advantage of the Skinner Box over discrete trial procedures is that it allows for more than one instrumental behaviour per trial (many levers, many chains, etc.).

An example of a Skinner Box is the chamber that trains rats to bar-press for rewards

The Initial Learning Procedure in a Skinner Box

IC involves learning familiar responses in new situations or in new ways. In other words, it involves taking what is already known by the organism and modifying it in different ways; it’s not about teaching brand new behaviours, but about modifying existing ones.

For instance, rats in a maze study may need to learn where and what to run for — rats don’t need to learn how to run, but rather where to run, where to turn, and what they will find at the goal box.

Basically, the organism is constructing new responses from familiar components.

For example, to press a lever, rats have to combine various familiar behaviours (e.g., raising their paws, standing on their hind legs, and so on)

Shaping and Chaining

Shaping reinforces any movement in the direction of the desired response.

In other words, shaping is done by rewarding successive approximations, which is quicker than waiting for the response to occur and then reinforcing it.

It is used effectively to condition humans and many types of animals; for example, it is used by parents with their children, teachers with their students, and coaches with their athletes.

It incrementally builds a complex response through successive approximations

For example: In a lever-pressing rat experiment, you could wait for dumb luck for the rat to press the lever for the first time. However, rewarding successive approximations will speed up the learning process. For example, shaping could take on the form of rewarding the rat for looking in the direction of the lever — which increases the probability of facing the lever. After doing this, you could withhold the reward until the rat approaches the lever — which increases the probability of the rat getting closer to the lever. The next step might be withholding the reward until the rat touches the lever; you would repeat this step-by-step process until you eventually get to the desired operant response — pressing the lever.

Another example is getting a young child to say a word — you reward the child for saying a letter of the word, which increases the likelihood of getting the child to actually say the word.
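The lever-pressing example above can be sketched as a small Python class that rewards successive approximations and raises the criterion step by step. The class name `Shaper`, the `successes_needed` threshold, and the rule of resetting the streak after an unrewarded behaviour are assumptions chosen for illustration; the notes do not specify how many rewarded trials should precede each new step.

```python
# Successive approximations from the lever-pressing example, easiest first.
STEPS = ["look at lever", "approach lever", "touch lever", "press lever"]


class Shaper:
    def __init__(self, steps, successes_needed=3):
        self.steps = steps
        self.successes_needed = successes_needed  # assumed threshold
        self.level = 0   # index of the current criterion in steps
        self.streak = 0  # rewarded behaviours in a row at this level

    def observe(self, behaviour):
        """Return True if the behaviour earns a reward under the current criterion."""
        # Only behaviour at or beyond the current approximation is rewarded.
        rewarded = (
            behaviour in self.steps
            and self.steps.index(behaviour) >= self.level
        )
        if rewarded:
            self.streak += 1
            at_final_step = self.level == len(self.steps) - 1
            if self.streak >= self.successes_needed and not at_final_step:
                # Raise the criterion: the reward is now withheld for this step.
                self.level += 1
                self.streak = 0
        else:
            self.streak = 0
        return rewarded


# Hypothetical usage with a threshold of two rewarded trials per step.
shaper = Shaper(STEPS, successes_needed=2)
first = shaper.observe("look at lever")    # rewarded
second = shaper.observe("look at lever")   # rewarded, criterion now rises
third = shaper.observe("look at lever")    # reward withheld
fourth = shaper.observe("approach lever")  # rewarded under the new criterion
```

The point of the sketch is the withholding step: once looking at the lever is reliable, only a closer approximation earns the reward.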

Chaining is the process of building complex operant response sequences by linking together S → R → O conditions.

An example is initially training an animal to pick up an object, and then rewarding the animal for both picking up the object and throwing it. Chaining allows for a series of behaviours (as opposed to shaping, which simply elaborates on a single response).

Another example is the rat basketball experiment seen in class.

Shaping and Chaining Combined

Here’s the summary of what shaping and chaining are:

Shaping:

Shaping through successive approximations builds a complex response incrementally

Initially, the contingency (e.g., reward given if behaviour is produced) is introduced for a simple behaviour (rudimentary version of R, which is the desired operant response); as the rate of the behaviour increases, the contingency is provided for increasingly complex forms of the behaviour (e.g., from touching to pressing a lever)

Gradually, it builds a complex R that an animal would never spontaneously produce

Chaining:

Chaining builds complex R sequences by linking together S→R→O (if S, then R, leads to O) conditions

An example is training an animal to pick up an object and then rewarding it for both picking it up and then throwing it (e.g., chaining behaviours together)

It allows for a series of behaviours (as opposed to shaping, which simply elaborates on a simple response)

Shaping and chaining can be used together to train animals to complete incredibly complex behaviours. Both techniques require skill and patience from the trainer.

Can keep an animal motivated and interested

Must select proper training sequence

Cannot move too fast

How to Get a Rat to Lever Press

25:28 of the audio lecture

IC in the Skinner Box

Outcomes (O):

± food delivery

± shock through wires in the floor (punishment)

Behaviour (R): rate of lever pressing

Context (S): light that signals box is “on”

Note that the animal is “free” in the chamber, with no experimenter intervention

Free-operant learning

Also, many possible contingencies can be introduced

Positive Reinforcement: Press lever (R) → GET FOOD

Positive Punishment: Press lever (R) → GET SHOCK

Negative Reinforcement: Press lever (R) → STOP SHOCK

Negative Punishment: Press lever (R) → STOP FOOD

Structure of the IC Skinner Box Experiment

Initially, tries many things; eventually, accidentally presses the lever, produces a positive effect

Now starts hanging around the lever, accidentally presses it again

Rat has learned a contingency: if light on (S), pressing lever (R) → food (O); spends much of its day pressing and eating

Basic Pattern of IC

Generalizing & Discrimination

Influencing Factors

A summary of response-outcome procedures with consequences:

| Procedure | Type | Outcome | Result |
| --- | --- | --- | --- |
| Positive Reinforcement | Positive | Response produces appetitive stimulus | Increase in response rate |
| Positive Punishment | Positive | Response leads to aversive stimulus | Decreased response rate |
| Negative Reinforcement | Negative | Response removes/avoids aversive stimulus | Increase in response rate |
| Omission Training | Negative | Response removes/avoids appetitive stimulus | Decrease in response rate |

Distinguishing Between Reinforcement and Punishment

Positive Reinforcement:

Add something to increase behaviour.

Negative Reinforcement:

Remove something to increase behaviour.

Positive Punishment:

Add something to decrease behaviour.

Negative Punishment:

Remove something to decrease behaviour.
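The four definitions above reduce to two yes/no questions: is something added or removed, and does the behaviour increase or decrease? A minimal Python sketch of that decision rule follows; the function name `classify_contingency` and the string labels it accepts are assumptions made for the example.

```python
def classify_contingency(stimulus_change, behaviour_change):
    """Name the procedure from what happens to the stimulus and to the behaviour.

    stimulus_change: "add" or "remove"
    behaviour_change: "increase" or "decrease"
    """
    if stimulus_change not in ("add", "remove"):
        raise ValueError("stimulus_change must be 'add' or 'remove'")
    if behaviour_change not in ("increase", "decrease"):
        raise ValueError("behaviour_change must be 'increase' or 'decrease'")

    # "Positive" means something is added; "Negative" means something is removed.
    sign = "Positive" if stimulus_change == "add" else "Negative"
    # Reinforcement increases behaviour; punishment decreases it.
    kind = "Reinforcement" if behaviour_change == "increase" else "Punishment"
    return f"{sign} {kind}"


# Stopping a shock (remove) so that lever pressing goes up (increase).
label = classify_contingency("remove", "increase")
```

Here `label` is "Negative Reinforcement", matching the STOP SHOCK case from the Skinner Box contingencies.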

Instrumental vs. Classical Conditioning

IC: The animal operates on the environment.

CC: The environment operates on the animal (learning involves predictive CS-US relationship).

Characteristics of IC vs. CC

| Characteristics | Classical Conditioning | Instrumental Conditioning |
| --- | --- | --- |
| Type of association | Between two stimuli | Between a response and its consequence |
| State of subject | Passive | Active |
| Focus of attention | On what precedes response | On what follows response |
| Type of response | Involuntary | Voluntary |
| Typical bodily response involved | Internal (emotional) | External (physical movement) |
| Complexity | Relatively simple | Simple to highly complex |