Week 7 - Linear Algebra; Introduction to Matrices

Learning Objectives

Define, describe, identify, manipulate, and exemplify different types of matrices, vectors, and vector spaces, and apply some operations on vectors and matrices.

In particular:

Define, identify, and manipulate matrices and vectors.

Apply basic matrix operations, including addition, subtraction, and scalar multiplication.

Define and differentiate between various types of matrices (e.g. square, diagonal, identity, zero).

Apply and explain the properties of basic matrix operations, including commutativity, associativity, and distributivity.

Calculate powers of matrices.

Define norms on vectors and the geometric interpretation of vectors.

Define and exemplify vector spaces and their properties.

Define matrix equations.

Introduction to Matrices

Matrices are rectangular arrays of numbers, symbols, or expressions, and are foundational in various mathematical applications, particularly in artificial intelligence and statistics.

Overview of Matrices and Vectors

Matrix: A structure with m rows and n columns, denoted A = [aij], where i = 1, ..., m is the row index, j = 1, ..., n is the column index, and each aij is a real number. The aij are the elements (entries) of the matrix → two indices are used, one for the row and one for the column.

So for [a b c/ d e f/ g h i], a21 is d, a33 is i, a11 is a, a23 is f, a13 is c etc.

row 1: a b c

row 2: d e f

row 3: g h i

NB: We always read the dimension of a matrix as [number of rows] x [number of columns]
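
As a small illustration of the indexing convention above, here is a sketch in plain Python that stores the 3x3 example as a list of rows. The helper names `entry` and `shape` are made up for this example; they are not from the notes or from any library.

```python
# The 3x3 example matrix from the notes, stored as a list of rows.
A = [
    ["a", "b", "c"],
    ["d", "e", "f"],
    ["g", "h", "i"],
]

def entry(matrix, i, j):
    """Return a_ij using the 1-based (row, column) indices of the notes."""
    return matrix[i - 1][j - 1]  # Python lists are 0-based, so shift by one

def shape(matrix):
    """Return (number of rows, number of columns)."""
    return (len(matrix), len(matrix[0]))

print(entry(A, 2, 1))  # d
print(entry(A, 2, 3))  # f
print(shape(A))        # (3, 3)
```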

Examples of matrix types include:

Square matrix (n×n)

Row vector (1×n)

Column vector (n×1)

Vector: A matrix with only one row or one column. A vector with m real entries is an element of Rm.

Row vector: ( v' = [v_1, v_2, ..., v_n] )

Column vector: ( v = [v_1, v_2, ..., v_n]T )

the transpose of a column vector is a row vector (and vice versa)

The transpose of a matrix A = [aij] is denoted AT = [aji]

columns and rows are flipped
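
A minimal sketch of the transpose, assuming matrices are stored as lists of rows (the function name `transpose` is chosen for this example):

```python
def transpose(matrix):
    """Return the transpose: entry (i, j) of the result is entry (j, i) of the input."""
    return [list(column) for column in zip(*matrix)]

A = [[1, 2, 3],
     [4, 5, 6]]          # 2 x 3
print(transpose(A))      # [[1, 4], [2, 5], [3, 6]], which is 3 x 2

v_col = [[1], [2], [3]]  # column vector, 3 x 1
print(transpose(v_col))  # [[1, 2, 3]], a row vector, 1 x 3
```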

Matrix Operations

Basic Operations

Scalar Multiplication: Multiplying each element of a matrix by a scalar.

Matrix Addition: Defined as ( A + B = [aij + bij] ).

Matrix Subtraction: Defined as ( A - B = A + (-B) ).

Matrix Multiplication: An operation combining two matrices to yield a new matrix, where the number of columns in the first must match the number of rows in the second.
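
The four operations above can be sketched in plain Python as follows. This is an illustrative implementation under the assumption that matrices are lists of rows; the function names are made up for this example.

```python
def scalar_multiply(c, A):
    """Multiply every element of A by the scalar c."""
    return [[c * a for a in row] for row in A]

def add(A, B):
    """Element-wise sum; A and B must have the same size."""
    if len(A) != len(B) or len(A[0]) != len(B[0]):
        raise ValueError("addition needs matrices of the same size")
    return [[a + b for a, b in zip(row_a, row_b)] for row_a, row_b in zip(A, B)]

def subtract(A, B):
    """A - B, computed as A + (-B)."""
    return add(A, scalar_multiply(-1, B))

def multiply(A, B):
    """Matrix product; columns of A must match rows of B."""
    if len(A[0]) != len(B):
        raise ValueError("columns of A must equal rows of B")
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))]
            for i in range(len(A))]

A = [[1, 2], [3, 4]]
B = [[0, 1], [1, 0]]
print(scalar_multiply(2, A))  # [[2, 4], [6, 8]]
print(add(A, B))              # [[1, 3], [4, 4]]
print(subtract(A, B))         # [[1, 1], [2, 4]]
print(multiply(A, B))         # [[2, 1], [4, 3]]
```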

Properties of Matrix Operations

Commutative property for addition: ( A + B = B + A ).

Non-commutative property for multiplication: in general, (AB ≠ BA)

Note that for multiplication of two matrices, the number of columns in the first matrix must match the number of rows in the second matrix.

The size of the resultant matrix will be [number of rows in the first matrix] x [number of columns in the second matrix]

[m x k] * [k x n] = [m x n]

Associative property for both: ( A + (B + C) = (A + B) + C ) and ( A(BC) = (AB)C ).

Distributive property (multiplication over addition, on both sides): for all matrices A, B, C of compatible dimensions, ( A(B + C) = AB + AC ) and ( (A + B)C = AC + BC ).
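
The properties in this section can be checked numerically on small examples. The sketch below uses hypothetical 2x2 integer matrices chosen for illustration; a check on one example is not a proof, but it does show a concrete pair with AB ≠ BA.

```python
def add(A, B):
    """Element-wise sum of two matrices of the same size."""
    return [[a + b for a, b in zip(ra, rb)] for ra, rb in zip(A, B)]

def multiply(A, B):
    """Matrix product; columns of A must match rows of B."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))]
            for i in range(len(A))]

A = [[1, 2], [3, 4]]
B = [[0, 1], [1, 0]]
C = [[2, 0], [1, 3]]

print(add(A, B) == add(B, A))                                   # True
print(multiply(A, B) == multiply(B, A))                         # False
print(add(A, add(B, C)) == add(add(A, B), C))                   # True
print(multiply(A, multiply(B, C)) == multiply(multiply(A, B), C))        # True
print(multiply(A, add(B, C)) == add(multiply(A, B), multiply(A, C)))     # True

# Size rule: [2 x 3] * [3 x 4] = [2 x 4]
M = [[1, 0, 2], [0, 1, 3]]
N = [[1, 2, 3, 4], [5, 6, 7, 8], [9, 10, 11, 12]]
P = multiply(M, N)
print(len(P), len(P[0]))  # 2 4
```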

Types of Matrices

Zero Matrix

A matrix in which all elements are zero, denoted as 0 or 0m,n.

Matrix of Ones

A matrix where all elements are equal to one, denoted as 1 or 1m,n.

Identity Matrix

A square matrix with ones on the leading diagonal and zeros elsewhere, denoted In.

note that the leading diagonal consists of all elements aii

basically the matrix form of 1: AI = A (and IA = A) whenever the sizes are compatible
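
A short sketch that builds the zero matrix, the matrix of ones, and the identity matrix, then checks that multiplying by I leaves a matrix unchanged. The constructor names are illustrative, not taken from a library.

```python
def zeros(m, n):
    """m x n zero matrix."""
    return [[0] * n for _ in range(m)]

def ones(m, n):
    """m x n matrix of ones."""
    return [[1] * n for _ in range(m)]

def identity(n):
    """n x n identity matrix: ones on the leading diagonal, zeros elsewhere."""
    return [[1 if i == j else 0 for j in range(n)] for i in range(n)]

def multiply(A, B):
    """Matrix product; columns of A must match rows of B."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))]
            for i in range(len(A))]

A = [[1, 2], [3, 4]]
print(zeros(2, 3))                    # [[0, 0, 0], [0, 0, 0]]
print(ones(2, 2))                     # [[1, 1], [1, 1]]
print(identity(3))                    # [[1, 0, 0], [0, 1, 0], [0, 0, 1]]
print(multiply(A, identity(2)) == A)  # True
print(multiply(identity(2), A) == A)  # True
```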

Diagonal Matrix

A square matrix in which all off-diagonal elements are zero; only the diagonal elements may be non-zero.

Symmetric Matrix

A matrix satisfying A = AT, i.e. a matrix and its transpose describe the same matrix.

equivalently, aij = aji for all elements of A
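
The diagonal and symmetric definitions translate directly into checks on the entries. A minimal sketch, assuming matrices are lists of rows and using made-up function names:

```python
def is_square(A):
    """True if A has as many columns in every row as it has rows."""
    return all(len(row) == len(A) for row in A)

def is_diagonal(A):
    """True if A is square and every off-diagonal entry is zero."""
    n = len(A)
    return is_square(A) and all(
        A[i][j] == 0 for i in range(n) for j in range(n) if i != j
    )

def is_symmetric(A):
    """True if A is square and a_ij == a_ji for all i, j."""
    n = len(A)
    return is_square(A) and all(
        A[i][j] == A[j][i] for i in range(n) for j in range(n)
    )

D = [[2, 0], [0, 5]]
S = [[1, 7], [7, 3]]
N = [[1, 2], [3, 4]]
print(is_diagonal(D), is_symmetric(D))  # True True
print(is_diagonal(S), is_symmetric(S))  # False True
print(is_diagonal(N), is_symmetric(N))  # False False
```

Note that every diagonal matrix is also symmetric, as the first line of output shows.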

Permutation Matrix

A square matrix where each row and column has exactly one entry of 1, and the rest of the elements are zero.

The identity matrix I is an example of a permutation matrix, as are the matrices of some linear transformations, e.g. reflection about the line y = x

When multiplied with another matrix, they cause the entries to change position but not value.

By choosing the positions of 1s in a permutation matrix, we can control how the positions of the entries in another matrix will change through matrix multiplication

For the 1st row in this permutation matrix, 1 is in the 2nd position. So it takes the 2nd element in the 1st column of the other matrix and moves it to the 1st position

For the 2nd row in this permutation matrix, 1 is in the 4th position. So it takes the 4th element in the 1st column of the other matrix and moves it to the 2nd position
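
The row-by-row description above can be tried out in code. The sketch below uses a hypothetical 4x4 permutation matrix whose first two rows match the description (1 in the 2nd position, then 1 in the 4th position); the last two rows are an assumption made only to complete the example.

```python
def multiply(A, B):
    """Matrix product; columns of A must match rows of B."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))]
            for i in range(len(A))]

# Rows 1 and 2 follow the notes; rows 3 and 4 are assumed for illustration.
P = [
    [0, 1, 0, 0],  # row 1: picks the 2nd entry
    [0, 0, 0, 1],  # row 2: picks the 4th entry
    [1, 0, 0, 0],  # row 3: picks the 1st entry (assumed)
    [0, 0, 1, 0],  # row 4: picks the 3rd entry (assumed)
]

x = [[10], [20], [30], [40]]  # a column vector, 4 x 1

print(multiply(P, x))  # [[20], [40], [10], [30]]
```

The entries of x keep their values and only change position, which is the behaviour described above.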

Triangular Matrices

Upper triangular: All elements below the main diagonal are zero.

Lower triangular: All elements above the main diagonal are zero.

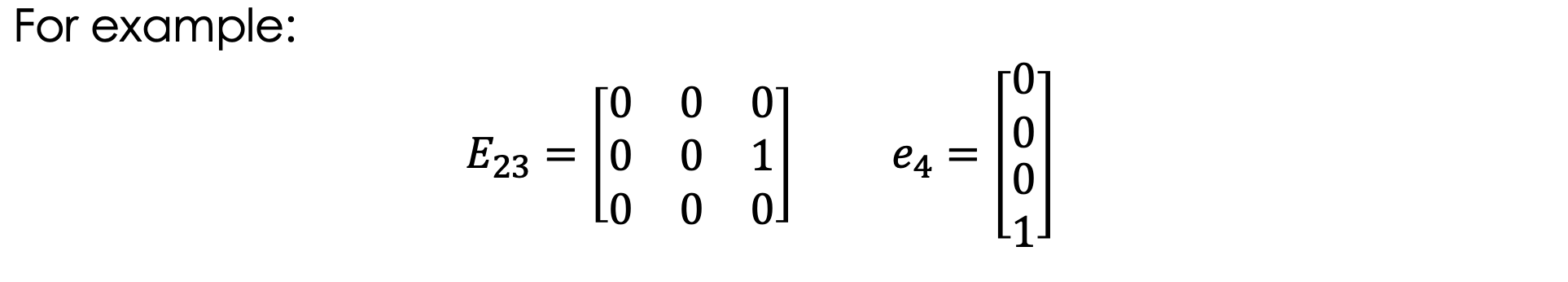
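
The two triangular definitions can be written as simple checks. This is a sketch that assumes a square matrix stored as a list of rows; the function names are illustrative.

```python
def is_upper_triangular(A):
    """True if every entry below the main diagonal (row > column) is zero."""
    n = len(A)
    return all(A[i][j] == 0 for i in range(n) for j in range(i))

def is_lower_triangular(A):
    """True if every entry above the main diagonal (row < column) is zero."""
    n = len(A)
    return all(A[i][j] == 0 for i in range(n) for j in range(i + 1, n))

U = [[1, 2, 3],
     [0, 4, 5],
     [0, 0, 6]]
L = [[1, 0, 0],
     [2, 3, 0],
     [4, 5, 6]]

print(is_upper_triangular(U), is_lower_triangular(U))  # True False
print(is_upper_triangular(L), is_lower_triangular(L))  # False True
```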

Matrix Unit and Unit Vector

Matrix units and unit vectors have exactly one entry of 1; the rest of the entries are 0.

Matrix Unit Eij: the i,j-th element is 1

the size of the matrix should be stated explicitly

for a matrix unit Eij, the minimum size of the matrix is i x j

Unit Vector ei: the i-th element is 1

Unit matrix ≠ matrix unit

Unit matrix = identity matrix
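
A sketch of constructors for a matrix unit and a unit vector, using the 1-based indices of the notes. The function names and the explicit size arguments `m` and `n` are assumptions made for this example; the size check reflects the point that Eij needs at least i rows and j columns.

```python
def matrix_unit(i, j, m, n):
    """m x n matrix unit E_ij: 1 at position (i, j), 0 elsewhere (1-based)."""
    if not (1 <= i <= m and 1 <= j <= n):
        raise ValueError("E_ij needs at least i rows and j columns")
    return [[1 if (r == i and c == j) else 0 for c in range(1, n + 1)]
            for r in range(1, m + 1)]

def unit_vector(i, n):
    """Unit vector e_i of length n: 1 in the i-th position, 0 elsewhere (1-based)."""
    if not 1 <= i <= n:
        raise ValueError("e_i needs at least i entries")
    return [1 if k == i else 0 for k in range(1, n + 1)]

print(matrix_unit(2, 3, 2, 3))  # [[0, 0, 0], [0, 0, 1]]
print(unit_vector(2, 4))        # [0, 1, 0, 0]
```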

Norms and Geometric Interpretation of Vectors

Norms

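Norms measure the size (length) of a vector. The norm of the difference of two vectors gives the distance between them, which can be used as a measure of how similar they are.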

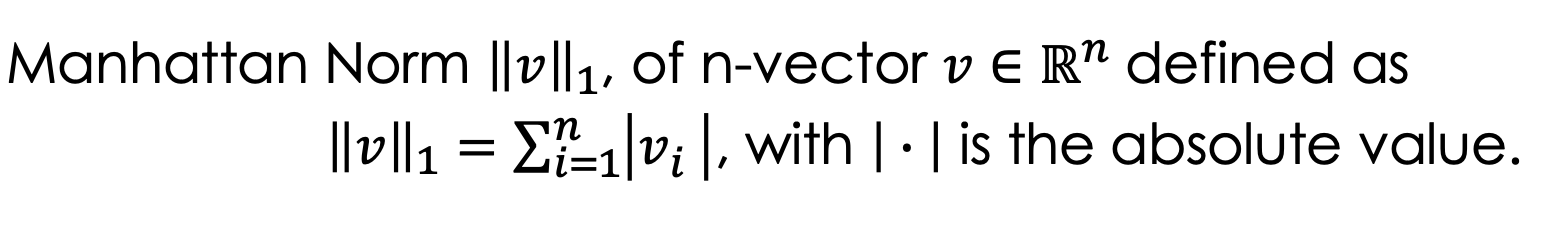

L1 Norm - Manhattan Distance

denoted ||v||1

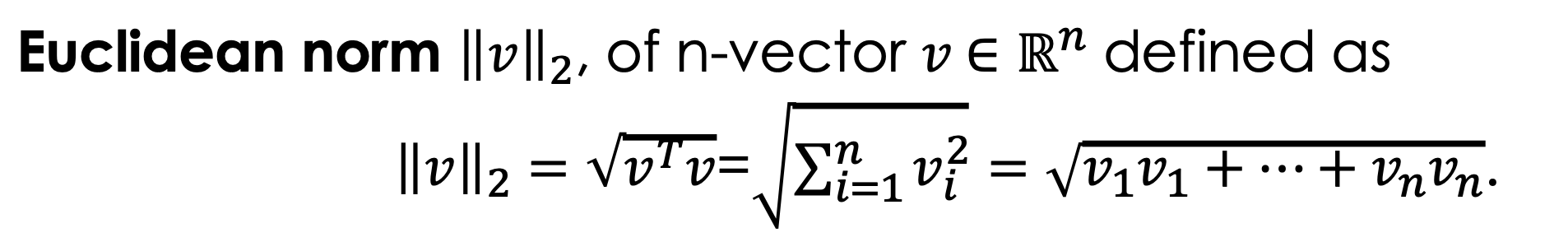

L2 Norm - Euclidean Distance

denoted ||v||2

the straight-line (shortest) distance from the origin to the point v
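
A minimal sketch of both norms, using the standard definitions: the L1 norm is the sum of the absolute values of the entries, and the L2 norm is the square root of the sum of the squared entries. The function names and the example vectors are chosen for illustration.

```python
import math

def l1_norm(v):
    """Manhattan norm: sum of absolute values of the entries."""
    return sum(abs(x) for x in v)

def l2_norm(v):
    """Euclidean norm: square root of the sum of squared entries."""
    return math.sqrt(sum(x * x for x in v))

v = [3, -4]
print(l1_norm(v))  # 7
print(l2_norm(v))  # 5.0

# Distance between two vectors = norm of their difference.
u = [1, 2]
w = [4, 6]
diff = [a - b for a, b in zip(w, u)]  # [3, 4]
print(l1_norm(diff))  # 7
print(l2_norm(diff))  # 5.0
```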

Geometric Interpretation of Vectors

Vectors are also geometric objects: a vector can be drawn as a directed line segment (an arrow) in an n-dimensional coordinate system that starts at the origin and ends at the point given by its entries.

Vector space (or linear space)

Vector spaces are built on groups: a vector space is a set of vectors closed under vector addition and scalar multiplication. Basically, a vector space is a ‘structured space’ where vectors exist.

Euclidean space Rn is a common example.

A real-valued vector space V = (S, +, •) requires that (S, +) is an abelian group and that S is closed under scalar multiplication by real numbers, where:

the set of vectors S is not empty

+ is vector addition

• is scalar multiplication

After reviewing the following sub-notes, the proper definition of vector space will make more sense:

Groups

A group consists of a set of elements together with a single binary operation, such as addition, that satisfies the group axioms: closure, associativity, identity, and invertibility.

A group (S, *) is defined by a set S along with a binary operation * that combines any two elements a and b in S to produce another element in S, thereby fulfilling the group properties.

Closure: for all x and y in S, the result of the operation x * y is also in S.

Identity: there exists an element e in S such that, for every element x in S, x * e = x and e * x = x, ensuring that the operation with e does not alter the original element.

Associativity: for all x, y, and z in S, the equation (x * y) * z = x * (y * z) holds true, confirming that the grouping of operations does not affect the outcome.

tldr: brackets don’t matter

Inverse: for every element x in S, there exists an element x' in S such that x * x' = e and x' * x = e, indicating that each element has an inverse → a counterpart that effectively 'cancels' it out under the operation.

tldr: every element has an inverse, and what it looks like depends on the binary operation (e.g. for addition, the inverse is the negative; for multiplication, the inverse is the reciprocal)

Mnemonic: AI- IC (AI, I see!)

So e.g. (Z, +) is a group, where Z represents the set of all integers:

'+' denotes the binary operation of addition.

The identity element is 0, because adding 0 to any integer x yields x.

Every integer has an inverse; for any integer x, there exists an integer -x such that x + (-x) = 0, satisfying the inverse property.

Abelian/commutative groups

Abelian groups are groups in which commutativity also holds. This is the type of group we use to define a vector space.

Commutativity: for all x, y in S, (x * y) = (y * x)
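
The axioms can be checked by brute force when the set is finite. Since Z itself is infinite, the sketch below uses the integers modulo 5 as a stand-in; this example set, the function name `check_group_axioms`, and the returned dictionary are assumptions made for illustration and are not from the notes.

```python
from itertools import product

def check_group_axioms(S, op):
    """Test closure, associativity, identity, inverse and commutativity on a finite set."""
    S = list(S)
    pairs = list(product(S, repeat=2))
    closure = all(op(x, y) in S for x, y in pairs)
    associativity = all(
        op(op(x, y), z) == op(x, op(y, z)) for x, y, z in product(S, repeat=3)
    )
    # Candidates e with x * e == x and e * x == x for every x.
    identities = [e for e in S if all(op(x, e) == x and op(e, x) == x for x in S)]
    identity = len(identities) > 0
    # Every x needs some y with x * y == e and y * x == e.
    inverse = identity and all(
        any(op(x, y) == identities[0] and op(y, x) == identities[0] for y in S)
        for x in S
    )
    commutativity = all(op(x, y) == op(y, x) for x, y in pairs)
    return {
        "closure": closure,
        "associativity": associativity,
        "identity": identity,
        "inverse": inverse,
        "commutativity": commutativity,
    }

Z5 = range(5)
addition_mod_5 = check_group_axioms(Z5, lambda x, y: (x + y) % 5)
multiplication_mod_5 = check_group_axioms(Z5, lambda x, y: (x * y) % 5)

print(all(addition_mod_5.values()))     # True: an abelian group
print(multiplication_mod_5["inverse"])  # False: 0 has no multiplicative inverse
```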

Vector subspace

For a vector space V = (S1, +, •) and a vector space U = (S2, +, •), U is a subspace of V if:

S2 is a subset of S1

S2 contains the zero vector

U is a valid vector space: (S2, +) abides by all group axioms plus commutativity, and S2 is closed under scalar multiplication

If a vector space only contains the zero vector, it is a subspace of any other vector space

All vector spaces are subspaces of themselves.

Linear Combinations and Dependencies

Linear Combination: Formed by the addition of scalar multiples of vectors (supported by the group axiom of closure)

Trivial linear combinations: all scalars are 0

Linear Dependencies: A set of vectors is linearly dependent if at least one vector can be expressed as a linear combination of the others.

Equivalently, a set of vectors is linearly dependent if there is a non-trivial way (not all scalars equal to 0) to get the zero vector through scalar multiplication and then vector addition

So a set of vectors is linearly independent if the only linear combination that gives the zero vector is the trivial one (i.e. all scalars are 0).

e.g. a set of distinct unit vectors e1, ..., en is linearly independent
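
One way to test linear independence in code is to row-reduce the vectors and count the pivots: the set is independent exactly when no row reduces to all zeros. Gaussian elimination is not covered in these notes, so treat the sketch below as an assumed technique; the function names are made up, and `Fraction` is used to avoid rounding errors.

```python
from fractions import Fraction

def rank(vectors):
    """Number of pivots after Gaussian elimination on the rows."""
    rows = [[Fraction(x) for x in v] for v in vectors]
    n_cols = len(rows[0]) if rows else 0
    pivots = 0
    for col in range(n_cols):
        # Find a row at or below the current pivot row with a non-zero entry.
        pivot = next((r for r in range(pivots, len(rows)) if rows[r][col] != 0), None)
        if pivot is None:
            continue
        rows[pivots], rows[pivot] = rows[pivot], rows[pivots]
        # Eliminate this column from all rows below the pivot row.
        for r in range(pivots + 1, len(rows)):
            factor = rows[r][col] / rows[pivots][col]
            rows[r] = [a - factor * b for a, b in zip(rows[r], rows[pivots])]
        pivots += 1
    return pivots

def is_linearly_independent(vectors):
    """True if no vector is a linear combination of the others."""
    return rank(vectors) == len(vectors)

print(is_linearly_independent([[1, 0, 0], [0, 1, 0], [0, 0, 1]]))  # True
print(is_linearly_independent([[1, 2], [2, 4]]))                   # False
print(is_linearly_independent([[1, 0], [0, 1], [1, 1]]))           # False
```

In the second example the second vector is 2 times the first; in the third, the last vector is the sum of the first two.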

Matrix Equations

Formulated as ( AX = B ) where ( A, X, B ) are matrices of appropriate dimensions.

Solutions can be found analogously to solving systems of linear equations.
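
As a sketch of what solving AX = B can look like, the function below applies Gauss-Jordan elimination to the augmented matrix [A | B]. It assumes A is square and invertible, which the notes do not require in general; the function name `solve` and the example numbers are made up for illustration.

```python
from fractions import Fraction

def solve(A, B):
    """Solve AX = B for X, assuming A is square and invertible."""
    n = len(A)
    # Build the augmented matrix [A | B] with exact fractions.
    aug = [[Fraction(x) for x in row_a] + [Fraction(x) for x in row_b]
           for row_a, row_b in zip(A, B)]
    for col in range(n):
        # Find a row with a non-zero entry in this column to use as pivot.
        pivot = next((r for r in range(col, n) if aug[r][col] != 0), None)
        if pivot is None:
            raise ValueError("A is singular; no unique solution")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        # Scale the pivot row so the pivot entry becomes 1.
        pivot_value = aug[col][col]
        aug[col] = [x / pivot_value for x in aug[col]]
        # Clear this column in every other row.
        for r in range(n):
            if r != col:
                factor = aug[r][col]
                aug[r] = [a - factor * b for a, b in zip(aug[r], aug[col])]
    # The right-hand block now holds X.
    return [row[n:] for row in aug]

# 2x + y = 5 and x + 3y = 10, written as AX = B.
A = [[2, 1], [1, 3]]
B = [[5], [10]]
X = solve(A, B)
print(X == [[1], [3]])  # True, i.e. x = 1 and y = 3
```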

Recap of Key Concepts

Matrices and vectors are crucial for computational operations in mathematics. Understanding their operations, properties, and classifications helps in numerous applications, particularly in solving linear equations and modeling data.