Hidden Markov Models

Often, we want to reason about a sequence of observations:

speech recognition

robot localization

user attention

medical monitoring

Need to introduce time (or space) into our models

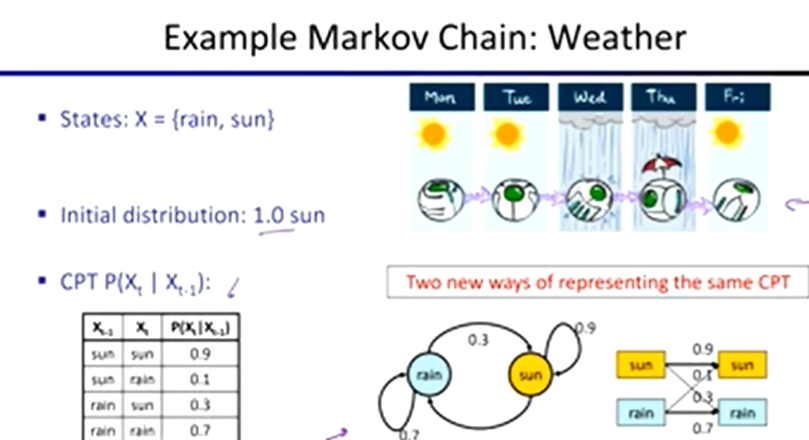

Markov Models

value of X at a given time is called the state

parameters:

called transition probabilities or dynamics

specify how the state evolves over time

stationarity assumption

transition probabilities the same at all times

no choice of action

Conditional Independence

past and future independent given the present

each time step only depends on the previous

this is called the (first order) Markov property

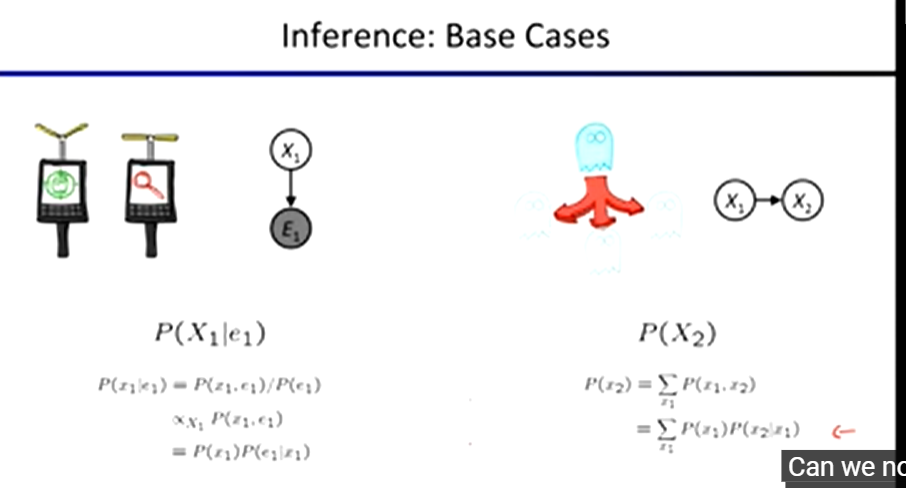

we can always use generic BN reasoning if we truncate the chain at a fixed length

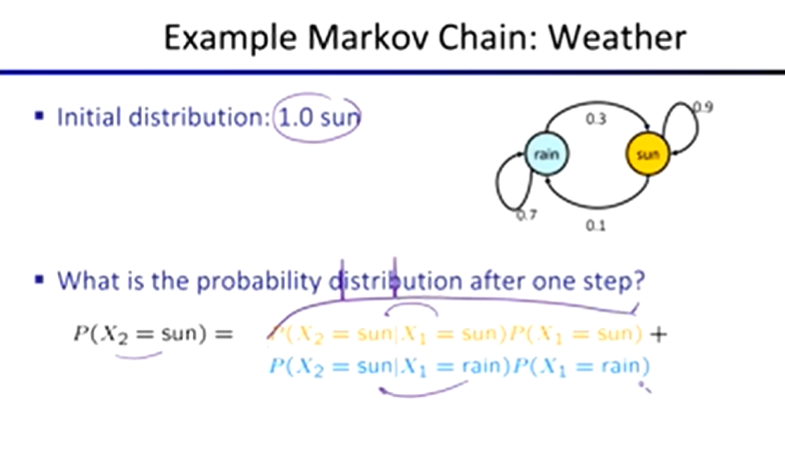

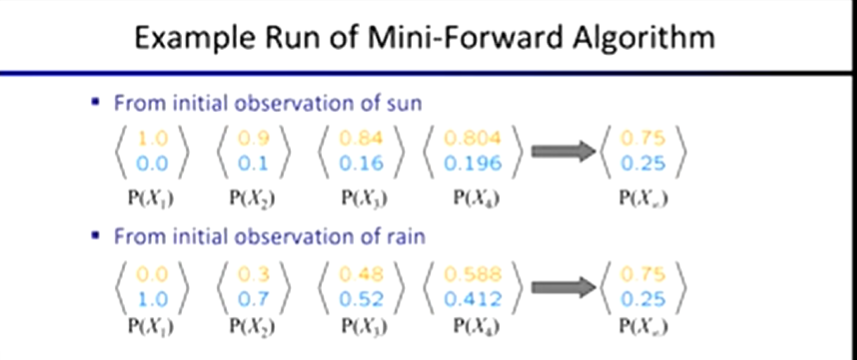

Mini-Forward Algorithm:

P(x1) = known

P(xt) = ∑ P(xt | xt-1) P(xt-1)

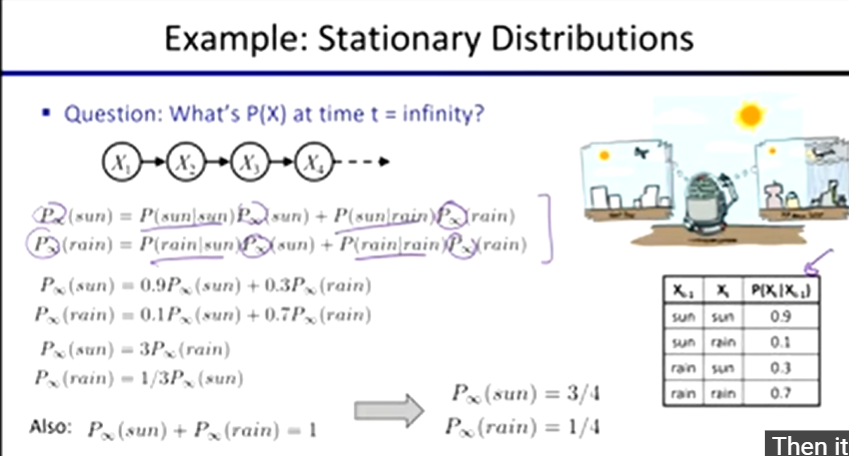

Stationary Distributions

the distribution we end up with is called the stationary distribution Px of the chain

P∞(X) = P∞ + 1(X) = ∑ P(X | x) P∞(x)

Hidden Markov Models

underlying Markov chain over states X

observe outputs (effects) at each time step

Conditional Independence and HMMs

Markov hidden process:

future depends on past via the present

Current observation independent of all else given current state

Filtering / Monitoring

task of tracking the distribution Bt(X) = Pt(Xt | e1, …, et) over time

start with B1(X) in an initial setting, usually uniform

as time passes we update B(X)

Passage of Time:

Assume we have current belief P(X | evidence to date)

B(Xt | e1 : t)

then, after one time step passes

P(Xt + 1 | e1 : t) = ∑ P(Xt + 1 | xt , c1 : t) P(xt | c1 : t)

∑ P(Xt + 1 | xt) P(xt | c1 : t)

Observation:

assume we have current belief P(X | previous evidence)

B’(Xt + 1) = P(Xt + 1 | e1 : t)

then, after evidence

P(Xt + 1 | e1 : t + 1) = P(Xt + 1 , et + 1 | e1 : t) / P(et + 1 | e1 : t)

The Forward Algorithm

given evidence at each time and want to know

Bt(X) = P(Xt | e1 : t)

we can derive the following updates

P(xt | e1 : t) ∝ x P(xt , e1 : t)

= ∑ P(Xt - 1 , xt , e1 : t)