Clinical Investigation Exam 2

~3 questions per lecture

Observational Studies [8 questions]

Recognize situations where an observational study design may be appropriate to answer a research question.

Limitations

cannot show causality — only associations

Used

when RCTs cannot be done

Why Used

provide preliminary data to inform the design and how to conduct a RCT when feasible

may have more generalizability than RCT

Identify key features that distinguish each of the different types of observational study designs from each other, and from interventional studies: cross sectional, case control, cohort (retrospective and prospective).

Cross sectional study

What:

prevalence study — examines relationships between disease (outcome) or drug (exposure) and other characteristics in a population at one point in time, cannot measure incidence of disease

Set Up: gather data at one time on both exposure and outcome

exposure and outcome present

exposure and no outcome

unexposed and outcome present

unexposed and no outcome

Use: study conditions that are frequent with long duration of expression
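
Worked sketch of the 2x2 setup above: with exposure and outcome measured at one time point, the four cells give prevalence overall and within each exposure group. The function name and the counts are hypothetical, chosen only for illustration.

```python
def prevalence_summary(a, b, c, d):
    """Summarize a cross-sectional 2x2 table.

    a: exposed, outcome present      b: exposed, no outcome
    c: unexposed, outcome present    d: unexposed, no outcome
    """
    total = a + b + c + d
    prev_exposed = a / (a + b)
    prev_unexposed = c / (c + d)
    return {
        "overall_prevalence": (a + c) / total,
        "prevalence_exposed": prev_exposed,
        "prevalence_unexposed": prev_unexposed,
        "prevalence_ratio": prev_exposed / prev_unexposed,
    }


# Hypothetical survey counts (illustration only)
summary = prevalence_summary(a=40, b=60, c=20, d=80)
for name, value in summary.items():
    print(f"{name}: {value:.2f}")
```

These are all prevalence measures: nothing here says whether exposure came before the outcome, so incidence and temporality cannot be read from the table.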

Case control study

What: compares people who have disease/outcome (cases) to those who do not (controls) with respect to variables of interest (causes or exposures)

Set Up

Use

Strengths

Weaknesses

Cohort study

What: incidence study; measures exposure(s) in a population that did not start with the outcome and relates them to development of the outcome over time

Retrospective or prospective — ALWAYS longitudinal

Set Up

Use

Strengths

Weaknesses

Identify where each type of observational study design sits in the evidence hierarchy pyramid.

Higher quality of evidence w/ lower risk of bias

systematic reviews and meta-analyses of RCT

RCT

cohort studies

case control studies

cross sectional studies, surveys

case reports

mechanistic studies

editorials, expert opinion

Lower quality of evidence w/ higher risk of bias

Identify advantages and disadvantages of each type of observational study design.

Cross Sectional

Advantages

quick, inexpensive, ethically safe

measures multiple exposures/outcomes at once

Good for hypothesis generation

Disadvantages

cannot establish causality or temporality

ONLY measures prevalence, not incidence

Prevalence = the proportion of people in a population who have a disease or condition at a point in time

highly prone to bias (recall, observer, sampling, nonresponse)

unequally distributed confounders

NOT for rare or short duration diseases

Case-Control

Advantages

effective for rare diseases or diseases with long latency

faster and cheaper than cohort designs

study of multiple exposures for one outcome

Disadvantages

Bias (recall, selection)

difficult to select appropriate controls

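can only estimate odds ratios (cannot measure incidence or risk directly)

Odds ratio = odds of exposure in cases versus odds of exposure in controls; it is an estimate of association, so the true risk or probability of the outcome is not known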

Confounders may distort associations
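
Worked sketch of the odds ratio from a case-control 2x2 table. The counts are hypothetical, and the confidence interval uses the standard log-based (Woolf) approximation, which is an assumption added here and not something stated in the notes.

```python
import math


def odds_ratio(a, b, c, d, z=1.96):
    """Odds ratio with an approximate 95% CI.

    a: exposed cases       b: exposed controls
    c: unexposed cases     d: unexposed controls
    """
    estimate = (a * d) / (b * c)
    # Standard error of log(OR), Woolf method
    se_log = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)
    lower = math.exp(math.log(estimate) - z * se_log)
    upper = math.exp(math.log(estimate) + z * se_log)
    return estimate, lower, upper


# Hypothetical counts (illustration only)
or_estimate, ci_low, ci_high = odds_ratio(a=40, b=20, c=60, d=80)
print(f"OR = {or_estimate:.2f}, 95% CI {ci_low:.2f} to {ci_high:.2f}")
```

A CI that excludes 1 suggests an association, but the design still cannot give incidence or absolute risk because the investigator fixed the number of cases and controls.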

Cohort (prospective)

advantages

temporal relationship can be established between exposure and outcome

Temporal relationship = exposure happens before the outcome; logically, an exposure can only cause an effect if it comes first

measure incidence and risk

study multiple outcomes for one exposure

data collection more complete and standardized

Disadvantages

Time consuming, expensive

Bias (loss to follow up)

not for rare diseases

blinding difficulty
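
Worked sketch of "measure incidence and risk" in a cohort: because everyone starts without the outcome, new cases during follow-up give incidence in each group, and from those the relative risk and risk difference. Function name and counts are hypothetical.

```python
def cohort_risk(exposed_events, exposed_total, unexposed_events, unexposed_total):
    """Cumulative incidence (risk) in each group plus relative risk and risk difference."""
    risk_exposed = exposed_events / exposed_total
    risk_unexposed = unexposed_events / unexposed_total
    return {
        "risk_exposed": risk_exposed,
        "risk_unexposed": risk_unexposed,
        "relative_risk": risk_exposed / risk_unexposed,
        "risk_difference": risk_exposed - risk_unexposed,
    }


# Hypothetical cohort: 200 exposed and 200 unexposed, all outcome-free at baseline
risks = cohort_risk(exposed_events=30, exposed_total=200,
                    unexposed_events=10, unexposed_total=200)
for name, value in risks.items():
    print(f"{name}: {value:.3f}")
```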

Cohort (retrospective)

Advantages

faster, less expensive than prospective

useful when the data already exist

study of exposures that don’t happen anymore

Ex. Atomic bomb radiation exposure

Disadvantages

poor or incomplete data quality

prone to confounding and bias (selection)

cannot control for unmeasured variables

Recognize types of bias that may be present in observational studies.

Selection bias

What: systematic error in how groups are created or chosen, differ in prognosis or baseline factors

Which studies: Retrospective Cohort, Case-Control

Recall Bias

What: participants with disease may remember past exposures differently

Which studies: Case-Control, Cross sectional

Observer Bias

What: investigator’s knowledge of exposure/outcome influences data collection or interpretation

Which studies: Cross Sectional

Sampling/ Nonresponse bias

What: Study sample not representative of population or certain groups less likely to respond

Which studies: Cross Sectional

Confounding [by indication]

What: indication for a treatment (not the treatment itself) is what causes the outcome

Which studies: Case-Control

Regression to the Mean/Hawthorne effect

What: extreme values tend to move toward the average upon repeat measurement/ behavior changes because subjects know they’re being observed

Which studies:

Recognize potential confounders that may be present in observational studies.

What is a confounder?

a variable associated with both the exposure and the outcome, but not on the causal pathway

Measured confounders

collected and adjusted for.

Examples: age, gender, comorbidities

Unmeasured confounders

unknown or unavailable variables

Residual confounding

leftover bias even after adjustment

Confounding by indication

reason for treatment is related to outcome

Choose appropriate strategies to minimize the effects of confounders in observational studies.

During study design

Restriction

limit study to subjects within one category of a confounder

Matching

pair cases and controls with similar confounding factors

Propensity score matching

statistical method to match exposed/unexposed subjects based on probability of receiving treatment

During Data Analysis

Multivariate regression

adjust for multiple confounders simultaneously

Purpose: controls for age, sex, comorbidities

Propensity score adjustment

uses predicted probability of exposure to balance groups

Purpose: mimics randomization statistically

Instrumental Variable Analysis

uses a variable associated with exposure but not with outcome (except through the exposure) to simulate randomization

Example: provider preference for a drug

Sensitivity analysis

tests robustness of results by varying assumptions

Purpose: assess impact of unmeasured confounders

Conceptual Frameworks

Known knowns: measured and adjusted

Known unknowns: known but unmeasured → listed as limitations

Unknown knowns: indirectly measured through proxies

Unknown unknowns: completely unrecognized residual confounders

Interventional Studies [3 questions]

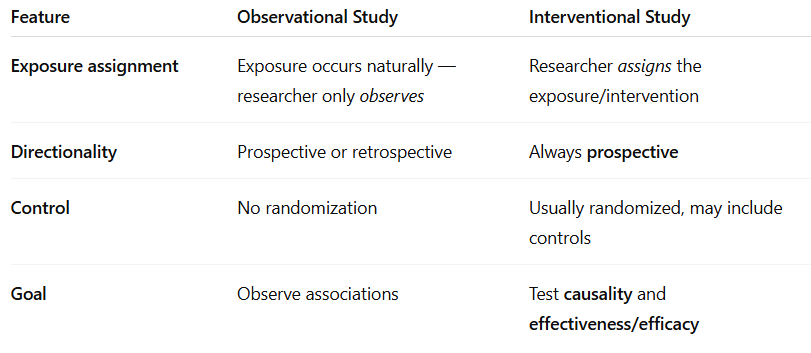

Distinguish how interventional studies differ from other types of study designs.

Key difference: interventional → investigator determines who receives intervention

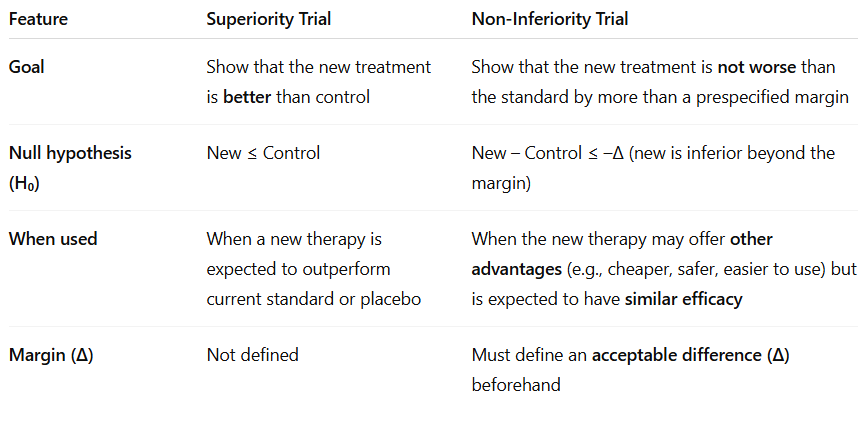

Identify how superiority designs differ from non-inferiority intervention designs and the appropriate situations for each.

Choose an appropriate study design based on the research question and outcome of interest.

Is a new treatment effective compared to placebo?

superiority RCT

Is a new treatment as effective as standard care but more convenient or cheaper?

non-inferiority RCT

Is an intervention feasible, or does it produce a signal for benefit?

non-randomized or pre-post study

Does a treatment have a temporary effect that can be reversed?

crossover study

Can a real-world intervention improve outcomes in practice?

pragmatic or cluster RCT

Are two interventions interacting or synergistic?

factorial design

Understand differences in how control groups are selected for each intervention design (e.g. RCT, Cross-over, pre-post, etc.)

Traditional RCT

Type: randomized to treatment vs control/placebo

GOLD STANDARD, minimize bias

Non-Randomized controlled trial

Type: assignment by investigator or patient choice

HIGH BIAS

Historical Control

Type: compare to data from prior patients or past standard

temporal and selection bias

Pre-Post (before-after)

same patients before and after intervention

regression to the mean and temporal confounding

Crossover design

each subject serves as their own control, separated by washout

efficient, sensitive to order and carryover effects

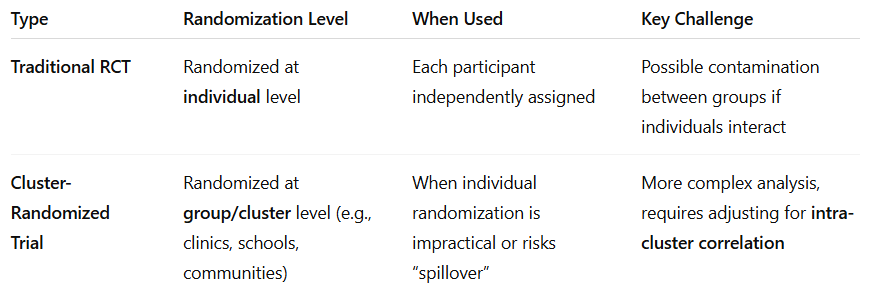

Cluster randomization

whole groups randomized

when individual randomization would contaminate other participants

Recognize the key features of a factorial design.

Purpose — evaluate two or more interventions at same time

Design — 2×2 randomization

Main question — can both treatments work independently or synergistically?

Interaction (effect modification) — occurs when effect of one intervention depends on presence/absence of other

Advantages — test multiple interventions with fewer subjects; examines interaction

Disadvantages — added complexity; risk of adverse effects from combos
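
Worked sketch of interaction in a 2×2 factorial trial: compare the effect of intervention A when B is absent with its effect when B is present. The event rates are hypothetical, and interaction is measured on the additive (risk difference) scale, which is an assumption made here.

```python
def factorial_effects(rate_neither, rate_a_only, rate_b_only, rate_both):
    """Effect of A without B, effect of A with B, and their difference (interaction)."""
    effect_a_without_b = rate_a_only - rate_neither
    effect_a_with_b = rate_both - rate_b_only
    return {
        "effect_a_without_b": effect_a_without_b,
        "effect_a_with_b": effect_a_with_b,
        # Close to 0 means the effect of A does not depend on B (no interaction)
        "interaction": effect_a_with_b - effect_a_without_b,
    }


# Hypothetical event rates in the four arms of a 2x2 factorial trial
no_interaction = factorial_effects(0.20, 0.15, 0.16, 0.11)
with_interaction = factorial_effects(0.20, 0.15, 0.16, 0.05)
print(no_interaction)
print(with_interaction)
```

In the second set of rates, A lowers the event rate more when B is also given, which is the pattern described as synergy or effect modification.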

Understand how randomization differs in traditional RCT vs cluster-randomized trial.

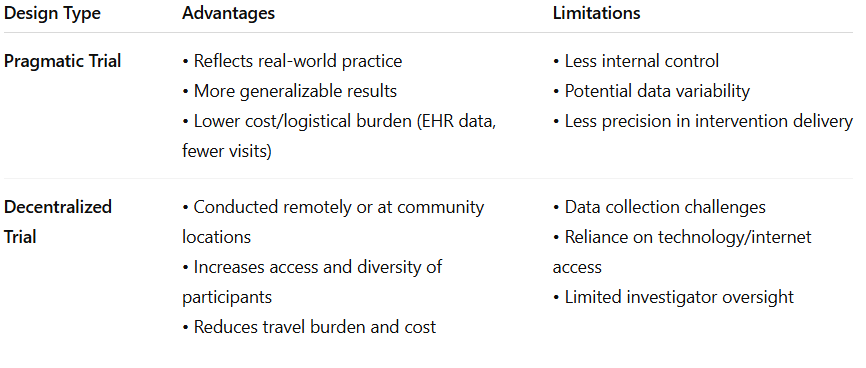

Identify key features that make a trial pragmatic.

Purpose — real world effectiveness tested

Population — broad inclusion criteria; clinical practice patients

Intervention — implemented in routine care

Follow-Up and data collection — EHRs, registries, remote systems

Randomization — preserved to maintain internal validity

Strength — high external validity

Limitation — less control → more variability and potential bias

Recognize advantages and limitations of alternative study designs such as pragmatic and decentralized trials.

Randomized Control Trials: Superiority [8 questions]

Identify strengths and challenges/limitations of randomized trial designs.

Strengths

Gold standard for causality

balances known and unknown confounders

blinding minimizes bias in treatment and outcome

internal validity due to standardized protocols and SOPs

Challenges

expensive and time consuming

ethical barriers

efficacy does not mean effectiveness

volunteer bias, Hawthorne effect, regression to the mean

not well suited to rare outcomes or long-term endpoints

Define equipoise in clinical research.

The ethical and scientific state of genuine uncertainty about whether one treatment is better than another.

Trial is justified when there is true uncertainty about which option is superior

Purpose: participants are not knowingly deprived of effective therapy, maintains ethical balance in randomization

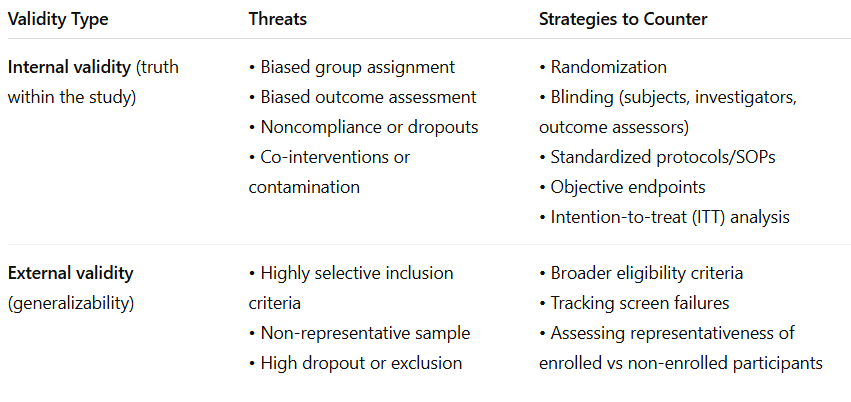

Identify threats to an RCT's internal and external validity, and strategies used to counter them.

Recognize when stratified/block randomization should be considered.

Use stratified/block randomization in:

small sample size

when prognostic factors influence outcomes strongly (age, sex, comorbidity)

Subgroup analyses are planned

Example: if age and sex affect outcomes, create strata (male/female; ≤65/>65) and randomize within each stratum to maintain balance
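
The stratified/block example can be sketched in code: each stratum gets its own sequence of shuffled blocks, so the arms stay balanced within every stratum. This is a minimal illustration, not a validated randomization system; the function name, block size of 4, and participant IDs are all hypothetical.

```python
import random


def stratified_block_randomization(participants, block_size=4, seed=0):
    """Assign arms using permuted blocks within each stratum.

    participants: list of (participant_id, stratum) tuples in enrollment order.
    Returns a dict mapping participant_id to "treatment" or "control".
    """
    rng = random.Random(seed)
    pending = {}      # stratum -> assignments left in the current block
    allocation = {}
    for participant_id, stratum in participants:
        if not pending.get(stratum):
            # Start a new balanced block for this stratum and shuffle it
            block = ["treatment"] * (block_size // 2) + ["control"] * (block_size // 2)
            rng.shuffle(block)
            pending[stratum] = block
        allocation[participant_id] = pending[stratum].pop()
    return allocation


# Hypothetical enrollment: 8 participants in each of two strata
strata = ["male, >65", "female, <=65"]
participants = [(f"P{i:02d}", strata[i % 2]) for i in range(16)]
allocation = stratified_block_randomization(participants)

for stratum in strata:
    arms = [allocation[pid] for pid, s in participants if s == stratum]
    print(stratum, arms.count("treatment"), "treatment,", arms.count("control"), "control")
```

With complete blocks, every stratum ends with equal numbers in each arm, which simple randomization does not guarantee in a small trial.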

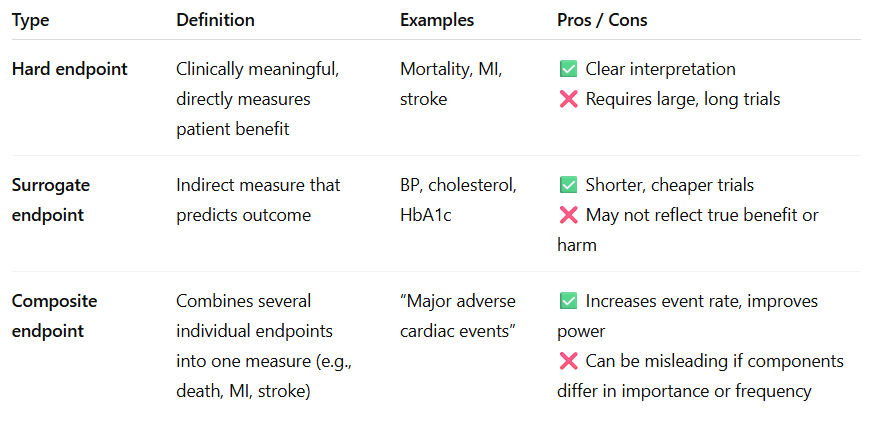

Distinguish between hard vs surrogate endpoints, and how composite endpoints are created and used.

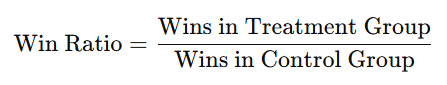

Understand what a win-ratio analysis is and how it is calculated.

When composite endpoints include events of varying clinical importance.

Compares pairs of patients (treatment vs control) hierarchically by severity

compare for most important outcome

if tie → next outcome

continue until winner is determined

Advantages

prioritizes clinically important outcomes

Prevents trivial events from overshadowing serious ones

Limitations

complex, careful hierarchy design required

less commonly used outside of cardiology trials
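
Worked sketch of the pairwise, hierarchical comparison described above. Every treatment patient is compared with every control patient; win ratio = total wins / total losses for the treatment arm. This simplified version assumes each outcome is coded 0 (no event) or 1 (event) and ignores follow-up time and censoring, which a real analysis would need to handle. Names and data are hypothetical.

```python
def compare_pair(treated, control, hierarchy):
    """Return 1 if the treated patient wins, -1 if they lose, 0 if tied on every outcome."""
    for outcome in hierarchy:            # most important outcome first
        if treated[outcome] < control[outcome]:
            return 1                     # treated patient avoided the event
        if treated[outcome] > control[outcome]:
            return -1
        # tie on this outcome: move on to the next one
    return 0


def win_ratio(treated_group, control_group, hierarchy):
    wins = losses = ties = 0
    for treated in treated_group:
        for control in control_group:
            result = compare_pair(treated, control, hierarchy)
            if result == 1:
                wins += 1
            elif result == -1:
                losses += 1
            else:
                ties += 1
    ratio = wins / losses if losses else float("inf")
    return ratio, wins, losses, ties


# Hypothetical patients: 1 = event occurred, 0 = no event
hierarchy = ["death", "hospitalization"]
treated_group = [
    {"death": 0, "hospitalization": 0},
    {"death": 0, "hospitalization": 1},
    {"death": 1, "hospitalization": 1},
]
control_group = [
    {"death": 0, "hospitalization": 1},
    {"death": 1, "hospitalization": 0},
    {"death": 1, "hospitalization": 1},
]
ratio, wins, losses, ties = win_ratio(treated_group, control_group, hierarchy)
print(f"wins={wins}, losses={losses}, ties={ties}, win ratio={ratio:.2f}")
```

A win ratio above 1 favors the treatment arm. Tied pairs do not enter the ratio.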

Identify the reasons why an RCT may be stopped early.

Benefit — intervention shows clear, overwhelming efficacy, continuing is unethical

Harm — excess adverse events or mortality in treatment arm

Futility — very unlikely to show a statistically significant difference even if the trial continues to completion

Understand the role of subgroup analyses as well as the limitations of multiplicity testing.

Subgroup analysis

examines whether treatment effects differ among specific populations

Key rules

pre-specified (a priori)

multiplicity increases chance of false positives aka Type 1 error

findings are hypothesis-generating unless preplanned

Multiplicity

multiple statistical comparisons increase false-positive risk; must adjust alpha or use hierarchical testing
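
Worked sketch of why multiplicity matters: the chance of at least one false positive grows with the number of tests. The calculation assumes the tests are independent, and Bonferroni is used as one simple example of "adjust alpha" (the notes do not name a specific method).

```python
def familywise_error_rate(alpha, n_tests):
    """Probability of at least one false positive across n independent tests."""
    return 1 - (1 - alpha) ** n_tests


def bonferroni_alpha(alpha, n_tests):
    """Per-test alpha that keeps the overall false-positive rate at or below alpha."""
    return alpha / n_tests


for n_tests in (1, 5, 10, 20):
    fwer = familywise_error_rate(0.05, n_tests)
    adjusted = bonferroni_alpha(0.05, n_tests)
    print(f"{n_tests:>2} tests: chance of >=1 false positive = {fwer:.2f}, "
          f"Bonferroni per-test alpha = {adjusted:.4f}")
```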

Recognize the difference between a priori vs post-hoc analysis

A Priori

defined before seeing data

theory driven, part of stat plan

limited comparisons

ex. predefined subgroups or secondary outcomes

Post-Hoc

after seeing data

exploratory, hypothesis generating

many comparisons → increase type 1 error

Ex. unplanned new analysis after results seen

Interpret a Kaplan-Meier survival curve.

Time to event graph

x-axis = time

y-axis = proportion of pts free from event

separation of curves → difference in survival, log rank test assesses statistical significance
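
Worked sketch of how the y-axis values are produced: at each event time, the proportion still event-free is multiplied by (1 − events / number at risk), and censored patients simply leave the at-risk group without causing a drop. Function name and data are hypothetical.

```python
def kaplan_meier(times, events):
    """Kaplan-Meier estimate.

    times: follow-up time for each patient
    events: 1 if the event was observed at that time, 0 if censored
    Returns a list of (time, proportion event-free) at each event time.
    """
    data = sorted(zip(times, events))
    at_risk = len(data)
    survival = 1.0
    curve = []
    i = 0
    while i < len(data):
        current_time = data[i][0]
        n_events = 0
        n_leaving = 0
        # Group everyone whose follow-up ends at this time
        while i < len(data) and data[i][0] == current_time:
            n_events += data[i][1]
            n_leaving += 1
            i += 1
        if n_events > 0:
            survival *= 1 - n_events / at_risk
            curve.append((current_time, survival))
        at_risk -= n_leaving
    return curve


# Hypothetical follow-up in months; 0 marks a censored patient
times = [2, 3, 3, 5, 8, 9]
events = [1, 1, 0, 1, 0, 1]
curve = kaplan_meier(times, events)
for time, proportion in curve:
    print(f"t={time}: {proportion:.3f} event-free")
```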

Describe what a time-to-event analysis is and how it is used.

how long until specific event occurs (death, relapse)

includes if and when event occurs

accounts for censoring

cox proportional hazards model → hazard ratio

Understand the difference between an intention-to-treat vs per-protocol analysis

| Feature | Intention-to-Treat (ITT) | Per-Protocol (PP) |
|---|---|---|
| Who is analyzed | All randomized participants, regardless of adherence | Only participants who followed protocol |
| Preserves randomization? | ✅ Yes | ❌ No |
| Reflects real-world use? | ✅ Yes | ❌ No |
| Bias risk | Lower | Higher (attrition bias) |
| Effect estimate | Conservative | May overestimate effect |
| Regulatory preference | Primary analysis (FDA/EMA) | Supportive/sensitivity analysis |

ITT answers - does it work in practice

PP answers - does it work if taken exactly as prescribed
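
Worked sketch of the two analyses on the same data. The participants are hypothetical, with non-adherent treatment patients deliberately given worse outcomes to show how dropping them changes the estimate.

```python
# Each row: (assigned arm, followed protocol?, had event?)
participants = [
    ("treatment", True, 0),
    ("treatment", True, 0),
    ("treatment", False, 1),
    ("treatment", False, 1),
    ("control", True, 1),
    ("control", True, 0),
    ("control", True, 1),
    ("control", True, 0),
]


def event_rate(rows, arm):
    """Proportion of participants assigned to `arm` who had the event."""
    outcomes = [event for assigned, _, event in rows if assigned == arm]
    return sum(outcomes) / len(outcomes)


itt_rows = participants                                # everyone, as randomized
pp_rows = [row for row in participants if row[1]]      # protocol followers only

for label, rows in (("ITT", itt_rows), ("PP", pp_rows)):
    print(f"{label}: treatment {event_rate(rows, 'treatment'):.2f}, "
          f"control {event_rate(rows, 'control'):.2f}")
```

Here ITT shows no difference between arms while PP makes the treatment look much better, because the patients removed from the PP set are no longer a randomized comparison.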

Understand the relationship among the determinants (α, β, etc.) that go into calculating sample size.

| Term | Meaning | Effect on Sample Size |
|---|---|---|
| α (alpha) | Type I error: probability of a false positive (usually 0.05) | Lower α → larger sample needed |
| β (beta) | Type II error: probability of a false negative (usually 0.1–0.2) | Lower β (higher power) → larger sample |
| Power (1–β) | Probability of detecting a true effect (typically 80–90%) | Higher power → larger sample |
| Effect size | Expected difference between groups | Smaller effect → larger sample |
| Event rate | Frequency of outcome | Lower event rate → larger sample |

Underpowered studies risk TYPE II ERRORS
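
Worked sketch of how these determinants combine. It uses the common normal-approximation formula for comparing two proportions with a two-sided test, n per group = (z₁₋α/₂ + z₁₋β)² × [p₁(1−p₁) + p₂(1−p₂)] / (p₁ − p₂)², which is an assumption added here since the notes do not give a specific formula. The event rates are hypothetical.

```python
import math
from statistics import NormalDist


def sample_size_two_proportions(p_control, p_treatment, alpha=0.05, power=0.80):
    """Approximate participants needed per group for a two-sided superiority test."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)   # critical value for Type I error
    z_beta = NormalDist().inv_cdf(power)            # power = 1 - beta
    variance = p_control * (1 - p_control) + p_treatment * (1 - p_treatment)
    effect = p_control - p_treatment
    return math.ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


# Hypothetical event rates: 20% with control, 15% with treatment
base = sample_size_two_proportions(0.20, 0.15)
stricter_alpha = sample_size_two_proportions(0.20, 0.15, alpha=0.01)
higher_power = sample_size_two_proportions(0.20, 0.15, power=0.90)
smaller_effect = sample_size_two_proportions(0.20, 0.17)

print("baseline:", base)
print("alpha 0.01:", stricter_alpha)
print("power 90%:", higher_power)
print("smaller effect:", smaller_effect)
```

Each change (lower α, higher power, smaller effect) pushes the required sample size up, matching the table.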

Pragmatic, Randomized Controlled Trials [2 questions]

Distinguish how efficacy of an intervention differs from effectiveness in the context of traditional RCT vs pragmatic clinical trial designs.

| Concept | Traditional RCT (Explanatory) | Pragmatic Clinical Trial (PCT) |
|---|---|---|
| Focus | Measures efficacy: can it work under ideal conditions? | Measures effectiveness: does it work in real-world practice? |
| Setting | Controlled research environment with expert investigators | Conducted in everyday clinical settings (e.g., community clinics, health systems) |
| Participants | Highly selected, homogeneous, adherent | Diverse, typical patients seen in real-world care |
| Protocol | Strict adherence, standardized procedures | Flexible, embedded within normal clinical workflow |
| Goal | Determine biological or mechanistic cause–effect relationship | Evaluate impact on clinical decisions, patient outcomes, and policy |

Efficacy = ideal world → can it work?

Effectiveness = real world → does it work in practice?

Identify the main limitations to traditional randomized controlled trials that pragmatic trials are intended to address.

| Traditional RCT Limitation | How Pragmatic Trials Address It |
|---|---|
| Slow adoption of findings: research results take years to influence practice | PCTs embed research directly in health systems to speed translation |
| Poor generalizability: highly selected populations | Include diverse, routine-care patients to reflect real-world use |
| Unrealistic conditions: rigid protocols, perfect adherence | Flexible protocols aligned with usual care |
| Expensive and time-consuming | Use EHR data and routine workflows to reduce cost and time |
| Limited relevance to decision-makers | Prioritize outcomes meaningful to patients, clinicians, and policy makers |
| Overreliance on surrogate or composite outcomes | Focus on practical, patient-centered outcomes |

Recognize the key features of a trial that make it pragmatic.

Pragmatic Clinical Trial → designed to improve practice and policy, not just test hypothesis

Core characteristics

real world clinical settings

health-system stakeholders involved

routine workflows and standard care procedures used

data collected through electronic health records

compares real-world alternatives, not just placebo

diverse populations

measures outcomes important to decision makers like pts, clinicians, payers

cluster randomization used

seeks to generate real-world evidence more than lab-based efficacy data

Identify the potential challenges/disadvantages of a pragmatic design.

| Challenge | Explanation |
|---|---|
| Complex analysis | Multiple sites, variable implementation, and missing data complicate interpretation. |
| Funding constraints | Large-scale, system-based research can still be costly. |
| Regulatory uncertainty | Fewer formal FDA/NIH standards for pragmatic approaches. |
| Ethical considerations | Consent can be complex when trials are embedded in routine care. |
| Investigator experience | Many researchers lack training in pragmatic methods. |
| Blurred responsibilities | Providers act as both clinicians and investigators, creating potential conflicts. |

Identify the domains of pragmatism of a trial that are evaluated using the PRECIS-2 scoring wheel.

Evaluates: how pragmatic a trial is across 9 domains

scored: 1 (very explanatory) → 5 (very pragmatic)

Wheel = closer to rim → more pragmatic; Trials near center → explanatory

| Domain | What It Evaluates |
|---|---|
| 1. Eligibility | How inclusive or restrictive the patient criteria are |
| 2. Recruitment | How participants are identified and invited (routine vs special) |
| 3. Setting | Whether the trial occurs in specialized research centers or typical care environments |
| 4. Organization | The level of extra support or infrastructure needed beyond normal care |
| 5. Flexibility (Delivery) | How much the intervention protocol mirrors everyday practice |
| 6. Flexibility (Adherence) | Whether strict adherence is enforced or naturally observed |
| 7. Follow-up | Frequency/intensity of monitoring compared to normal care |
| 8. Primary Outcome | Whether outcomes are directly meaningful to patients/clinicians (vs surrogate) |
| 9. Primary Analysis | Whether analysis includes all real-world participants or only highly compliant ones |

RCT Designs: Noninferiority

Understand the key concepts in noninferiority trial design. Why does this design exist? What are the advantages, disadvantages? How does equipoise impact these trials?

| Concept | Superiority Trial | Noninferiority Trial |
|---|---|---|
| Goal | Show treatment is better than control | Show treatment is not unacceptably worse than control |
| Null Hypothesis (H₀) | No difference between treatments (difference = 0) | New treatment is worse by ≥ Δ (difference ≥ Δ) |
| Test Type | Two-sided | One-sided |
| Common α and β | α = 0.05, β = 0.20 | α = 0.025 (1-sided), β = 0.20 |
| Reject H₀ when… | CI excludes the null value (0 for differences, 1 for ratios) | CI does not cross Δ (margin) |

Understand the similarities and differences between how superiority and noninferiority trials are conducted, analyzed, and interpreted.

| Aspect | Superiority | Noninferiority |
|---|---|---|
| Comparator | Placebo or active control | Active control (SOC) |
| Hypothesis | H₀: no difference (difference = 0) | H₀: new treatment is worse by ≥ Δ |
| Statistical test | Two-sided | One-sided |
| Alpha (α) | 0.05 | 0.025 |
| CI threshold | Excludes the null value (0 for differences, 1 for ratios) | Does not cross Δ |
| Goal | Show new treatment is better | Show new treatment is not unacceptably worse |
| Interpretation | If CI excludes null → superior | If CI excludes Δ → noninferior |
| Next step | If NI shown, can test for superiority | Must meet NI first before claiming superiority |
| Ethical context | Placebo often ethical | Placebo unethical when effective therapy exists |
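
Worked sketch of the CI rule for a noninferiority trial, using a risk difference (new minus control) for an unfavorable outcome, so that higher means worse. The margin, the counts, and the simple normal-approximation (Wald) interval are all assumptions for illustration; real trials prespecify the margin and the analysis method.

```python
import math
from statistics import NormalDist


def noninferiority_check(events_new, n_new, events_control, n_control,
                         margin, alpha_one_sided=0.025):
    """Compare the CI for the risk difference (new - control) against the margin."""
    p_new = events_new / n_new
    p_control = events_control / n_control
    difference = p_new - p_control
    se = math.sqrt(p_new * (1 - p_new) / n_new
                   + p_control * (1 - p_control) / n_control)
    z = NormalDist().inv_cdf(1 - alpha_one_sided)
    lower, upper = difference - z * se, difference + z * se
    if upper < 0:
        conclusion = "superior"                  # whole CI favors the new treatment
    elif upper < margin:
        conclusion = "noninferior"               # CI does not cross the margin
    else:
        conclusion = "noninferiority not shown"  # CI crosses the margin
    return {"difference": difference, "ci": (lower, upper), "conclusion": conclusion}


# Hypothetical trial: 50/500 events with new treatment vs 48/500 with standard of care
result = noninferiority_check(50, 500, 48, 500, margin=0.05)
print(result["conclusion"], [round(x, 3) for x in result["ci"]])

# Same data with a stricter margin
strict = noninferiority_check(50, 500, 48, 500, margin=0.03)
print(strict["conclusion"])
```

The same data can pass or fail depending on the margin Δ, which is why the choice of margin is central when reading a noninferiority paper.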

Be able to read/understand/apply (parts of) a journal article of a noninferiority trial.

Meta-Analysis

Be able to identify the strengths and weaknesses of meta-analysis as a study design.

Understand the steps to conducting a meta-analysis and how the study design impacts the effect estimate.

Understand the difference between random-effects and fixed-effects models.

Understand and apply the various sources and measures of heterogeneity.

Be able to read and interpret tables and forest plots.

Understand the tradeoff between the research questions (inc/exc), number/similarity of studies, bias and heterogeneity.