TCP Congestion Control

Goals

Efficiency

High link utilization and low latency

Fast re-convergence upon the arrival/completion of a flow

“Distributedness”

The algorithm must operate without central coordination

Fairness

Split bandwidth proportionally between flows

Many ways to define fairness for more than 2 hosts

Max-min fairness - increase of the bandwidth of a flow, does not decrease the rate of a smaller flow

Approaches Towards Congestion Control

End-End Congestion Control

No explicit feedback from network

Congestion inferred from observed loss, delay

Approach taken by TCP

Network-Assisted Congestion Control

Routers provide direct feedback to sending/receiving hosts with flows passing through congested router

May indicate congestion level or explicitly set sending rate

TCP ECN, ATM, DECbit protocols

TCP Sending Behaviour

roughly - send cwnd bytes, wait RTT for ACKS, then send more bytes

TCP rate ~~ cwnd/RTT (bytes/sec)

TCP sender limits transmission - LastByteSent- LastByteAcked < cwnd

cwnd is dynamically adjusted in response to observed network congestion (implementing TCP congestion control)

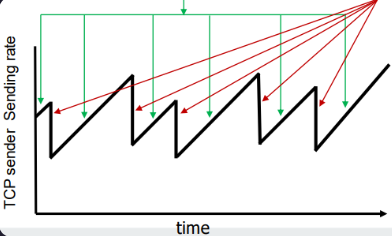

AIMD

Senders can increase sending rate until packet loss (congestion) occurs, then decrease sending rate on loss event

Sawtooth behaviour - probing for bandwidth

Additive Increase - increase sending rate by 1maximum segment size every RTT until loss detected

Multiplicative Decrease - cut sending rate in half at each loss event

Multiplicative decrease detail: sending rate is

Cut in half on loss detected by triple duplicate ACK (TCP Reno)

Cut to 1 MSS (maximum segment size) when loss detected by timeout (TCP Tahoe)

Why AIMD?

AIMD – a distributed, asynchronous algorithm – has been shown to

Optimise congested flow rates network wide

Have desirable stability properties

TCP Slow Start

AI - cwnd +=MSS every RTT

Need to wait several RTT to reach link capacity if high RTT or a fast link

When connection begins, increase rate exponentially until first loss event:

Initiall cwnd = 1 MSS

Double cwnd every RTT

Done by incrementing cwnd for every ACK received

Summary - initial rate is slow, but ramps up exponentially fast

From Slow Start to Congestion Avoidance

When should the exponential increase switch to linear?

When cwnd gets to 1/2 of its value before timeout

Implementation:Variable ssthresh

On loss event, ssthresh is set to 1/2 of cwnd just before the loss event

TCP CUBIC

Is there a better way than AIMD to “probe” for usable bandwidth?

Insight/intuition

Wmax: sending rate at which congestion loss was detected

Congestion state of bottleneck link probably (?) hasn’t changed much

After cutting rate/window in half on loss, initially ramp to to Wmax faster, but then approach Wmax more slowly

K - point in time when TCP window size will reach Wmax

K itself is tuneable

Increase W as a function of the cube of the distance between the current time and K

Larger increases when further away from K

Smaller increases (cautious) when nearer K

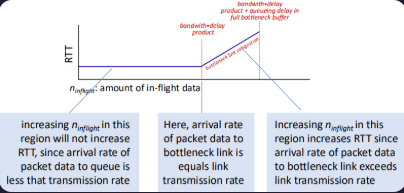

The Congested 'Bottleneck Link'

TCP (classic, CUBIC) increase TCP’s sending rate until packet loss occurs at some router’s output - the bottleneck link

Understanding congestion - useful to focus on congested bottleneck link

Insight - increasing TCP sending rate will not increase end-end throughout at congested bottleneck, which is already transmitting at full rate, R

Multiple flows collectively congest the bottleneck link

Each executes its own TCP congestion control

Each of N flows should ideally receive a throughput of R/N

Keeping the Pipe just Full Enough

Keep bottleneck link busy transmitting, but avoid high delays/buffering

Measured throughput = # bytes sent (inflight) in RTT interval / RTT

Ideal amount of inflight data for a connection = connections share of bottleneck bandwidth * min RTT