cognitive psych; week 5; body schema and multisensory integration

Reading:

Multisensory integration and the body schema: Close to hand and Within reach

Multimodal Spatial Correspondence and the Role of Posture: Neural Evidence

Neurons that respond to both visual and tactile stimulation have been found in several brain areas: cortically, in ventral premotor cortex, parietal area, ventral intraparietal area and superior temporal sulcus; subcortically, in the putamen and superior colliculus. Characteristically, individual multisensory cells in such areas show some correspondence in their spatial selectivity across different modalities. For instance, a multisensory neuron with a tactile receptive field (RF) on one hand will typically respond to visual stimulation near that hand, increasing its firing rate as the visual stimulus approaches the tactile RF and decreasing it as the stimulus moves away. A critical finding was that the spatial selectivity of visual responses for such multisensory neurons in areas such as the ventral premotor cortex and putamen is not merely retinotopic. For instance, in a neuron with a tactile RF on the arm or face, the corresponding visual RF may shift along with that body part if it is passively moved in space. Conversely, the visual RF may remain relatively fixed in space near the body part corresponding to the tactile RF if only the eyes are moved. This has led to proposals that the visual responses of such multisensory neurons may to some extent be body-part centred. In effect, this may provide information about the direction and proximity of the visual stimulus with respect to the relevant body part and to any tactile stimulation occurring there. Analogous responses can be found in some reportedly head-centred bimodal neurons of the ventral intraparietal area. One can readily imagine how such cell populations might, in principle, be useful for providing information relevant to the control of movements of the respective body part.
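To make the idea of a body-part-centred visual RF concrete, here is a minimal toy sketch. It is not a model from the reading: the function name `hand_centred_rate`, the Gaussian tuning shape and every parameter value (baseline, gain, RF width) are assumptions chosen only for illustration. The one point it tries to capture is that the response depends on the distance between the visual stimulus and the current hand position, and that gaze direction is not an input at all.

```python
import math


def hand_centred_rate(stimulus_xy, hand_xy, baseline=5.0, gain=40.0, sigma_cm=10.0):
    """Toy firing rate (spikes/s) of a visuotactile neuron with a tactile RF on the hand.

    The visual RF is modelled as a Gaussian centred on the current hand
    position, so it moves whenever the hand moves. All parameter values
    are hypothetical.
    """
    dx = stimulus_xy[0] - hand_xy[0]
    dy = stimulus_xy[1] - hand_xy[1]
    distance_sq = dx * dx + dy * dy
    return baseline + gain * math.exp(-distance_sq / (2.0 * sigma_cm ** 2))


# Same visual stimulus, two hand postures (coordinates in cm, midline at x = 0).
stimulus = (20.0, 0.0)
rate_hand_near = hand_centred_rate(stimulus, hand_xy=(20.0, 0.0))    # hand under the stimulus
rate_hand_moved = hand_centred_rate(stimulus, hand_xy=(-20.0, 0.0))  # hand moved across the midline

print(rate_hand_near, rate_hand_moved)
```

In this sketch the same stimulus drives the cell strongly in the first posture and barely above baseline in the second. A purely retinotopic cell would instead take the stimulus position relative to gaze as its input and would be unaffected by where the hand is.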

Effects of posture change on multisensory effects in monkey and human

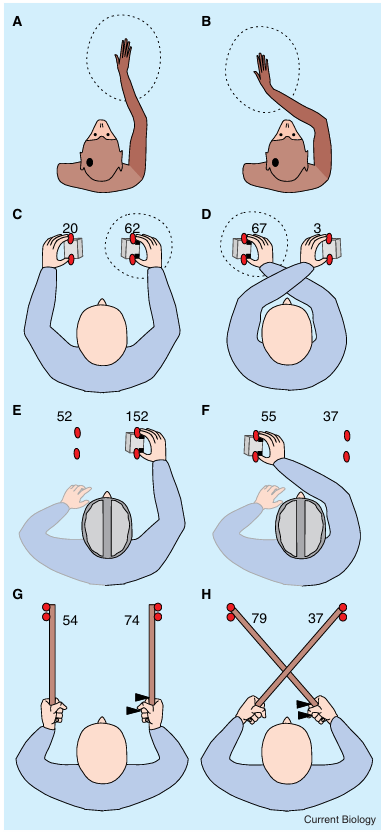

(A,B) Schematic receptive field (RF) of an illustrative left-hemisphere premotor or putamen visuotactile neuron in the monkey. (A) The tactile RF for the illustrated neuron is centered on the right hand/arm (shaded) and the visual RF on the area surrounding the hand (dotted circle). Critically, when the monkey’s limb is passively moved over the midline (B), the visual RF shifts accordingly, such that visual stimuli near the current hand/arm position maximally excite the cell. (C,D) Behavioral consequences of posture change on human performance for a crossmodal congruency task that measured the impact of visual distractors on tactile performance. Participants hold a sponge cube in each hand, in which two vibrotactile stimulators (black squares) and two visual distractors (red circles) are embedded. Participants have to fixate a central point straight ahead throughout, while being required to discriminate the elevation of a series of vibrotactile targets presented unpredictably to the thumb or index finger (lower versus upper elevation, respectively) of either hand. A visual distractor is presented randomly on each trial together with the vibration from one of the four positions, with participants instructed to ignore any visual event (see text for a more detailed description of this experimental paradigm). The values given directly above each hand highlight the magnitude of the crossmodal congruency effect (in milliseconds: see text for an explanation of how this effect is computed) elicited by visual distractors placed on that particular cube. The crossmodal congruency effects for vibrotactile targets presented to the right hand are shown.
Note that the crossmodal congruency effect follows the right hand in space when posture is changed: visual distractors on the right cube interfere more strongly if that cube is held by the right hand in an uncrossed posture (C), but lights on the left cube interfere more when the hands are crossed and the right hand now holds the left cube instead (D). (E,F) Failure to update the representation of visuotactile space for a crossed posture in the split-brain patient J.W. The amount of interference from left visual distractors essentially remains unchanged (at 52 or 55 ms) regardless of the position of the right tactually stimulated hand. (G,H) Crossmodal congruency effects can also be elicited in normal participants by visual distractors (circles) situated at the tip of a tool held in the hand, while the vibrotactile targets (triangles) are presented at the hand. After extended tool-use, the crossmodal congruency effect attributable to the visual distractors on the same versus the opposite side reverses (in analogy to panels C,D) if the tools (but not the hands) are crossed (H).

Multimodal Spatial Correspondence and the Role of Posture: Evidence from Human Performance

An entirely different type of evidence, namely from human performance, has recently revealed crossmodal spatial interactions between vision and touch that also seem to depend on the current location of the relevant body parts. Much of this human-performance data stems from research on the crossmodal congruency task. In this task, participants typically receive vibrotactile stimulation on the thumb or index finger of one hand. Usually, the participant’s task is to make a speeded up/down discrimination in response to each vibrotactile stimulus (equivalent to a finger/thumb discrimination with the posture used).

In addition, a visual distractor may be presented randomly from one of the four possible locations from which a vibrotactile stimulus can be delivered. Thus, the visual distractor can appear either near the same hand as the vibrotactile stimulus, or near the other hand. It can also be congruent or incongruent in elevation (e.g. a ‘congruent’ upper light together with upper touch, versus an ‘incongruent’ lower light together with the same upper touch). Incongruent visual distractors have been shown to delay tactile judgments and to produce more erroneous responses, leading to a crossmodal congruency effect, which is defined as the performance difference between incongruent and congruent trials. Importantly, this crossmodal congruency effect is more pronounced for a visual distractor near the tactually stimulated hand than for one near the other hand, or elsewhere. Critically, if the posture is changed, such that each hand is moved to a different location, the crossmodal congruency effects change accordingly. Visual stimuli that are situated closest to the current hand position produce the largest crossmodal congruency effects on vibrotactile judgments for the hand, such that the combinations of retinal visual stimulation and somatotopic vibrotactile stimulation that produce the largest interference change (or ‘remap’) with postural changes. Such ‘spatial remapping’ between vision and touch across different postures is found not only for crossmodal congruency effects, but also for crossmodal precueing. This is another type of influence on human performance, whereby the presentation of a cue (e.g. visual) can enhance judgments for a target (e.g. vibrotactile) that is presented shortly afterwards in spatial proximity, whether this target is in the same sensory modality as the cue or in a different one. These effects are again modulated by the current posture of the hands.
A critical factor for spatial precueing appears to be the proximity of the tactile and visual stimuli in external space, similar to the cellular RF findings reviewed above, and not just their initial hemispheric projections. Thus, for example, the same visual precue in the left visual field may facilitate tactile judgments on either the left or the right hand, depending on which hand is currently in spatial proximity to the precue, as determined by posture. In addition to such effects of discrete visual precueing events on tactile judgments (or vice versa), some other recent studies have shown that continuous rather than discrete vision of the hand or arm can also modulate tactile performance for the corresponding body part, even when no additional information about the position of the tactile stimulus or its identity is provided by vision. All of these human-performance phenomena might conceivably involve multisensory neural populations similar to those revealed by single-cell recordings in monkeys. But it is important to note that at the present time this suggestion, although frequently made, remains to be demonstrated directly. Nevertheless, one can envisage how the activation of a subset of such multisensory neurons by a spatial stimulus in one sensory modality might lead to an enhanced response from the same neurons to a second stimulus, which is presented at the same (or similar) external location, but in a different sensory modality and to which the activated neurons also respond. Indeed, an enhanced neural response to multisensory stimulation from common or neighbouring locations, in comparison with either unimodal stimulation or spatially discrepant multisensory stimulation, has already been demonstrated in several brain areas. However, the paradigms used to show this have so far differed somewhat from those used to demonstrate apparent body-part-centred multisensory representations of space at the single-cell level.
Nevertheless, two recent human functional magnetic resonance imaging (fMRI) studies have now shown that, depending on the current posture (either the direction of the eyes or the placement of the hand), a given vibrotactile stimulus can produce different brain activations as a function of the sight of the hand or visual stimuli near it.
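As a worked illustration of how the crossmodal congruency effect defined earlier in this section is computed, here is a minimal sketch. The reading defines the effect as the performance difference between incongruent and congruent trials; this sketch uses reaction time as the performance measure (error rates would work the same way). The trial format, the field names (`rt_ms`, `congruent`, `distractor_side`) and all reaction times are invented for illustration and are not data from any study.

```python
from statistics import mean


def congruency_effect(trials):
    """Mean RT on incongruent trials minus mean RT on congruent trials (ms)."""
    incongruent = [t["rt_ms"] for t in trials if not t["congruent"]]
    congruent = [t["rt_ms"] for t in trials if t["congruent"]]
    return mean(incongruent) - mean(congruent)


def effect_by_distractor_side(trials):
    """Compute the congruency effect separately for each distractor location."""
    sides = sorted({t["distractor_side"] for t in trials})
    return {
        side: congruency_effect([t for t in trials if t["distractor_side"] == side])
        for side in sides
    }


# Hypothetical trials: vibrotactile targets on the right hand, uncrossed posture.
trials = [
    {"distractor_side": "right", "congruent": True, "rt_ms": 500},
    {"distractor_side": "right", "congruent": True, "rt_ms": 520},
    {"distractor_side": "right", "congruent": False, "rt_ms": 600},
    {"distractor_side": "right", "congruent": False, "rt_ms": 620},
    {"distractor_side": "left", "congruent": True, "rt_ms": 510},
    {"distractor_side": "left", "congruent": True, "rt_ms": 530},
    {"distractor_side": "left", "congruent": False, "rt_ms": 540},
    {"distractor_side": "left", "congruent": False, "rt_ms": 560},
]

effects = effect_by_distractor_side(trials)
print(effects)  # {'left': 30, 'right': 100}
```

With these made-up numbers the effect is larger for distractors on the same side as the stimulated hand, which is the uncrossed pattern described above. Remapping would show up as the larger effect switching to the other distractor location when the hands are crossed.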

Visuotactile interactions in Brain-Damaged Patients

Some potentially related crossmodal phenomena have now also been observed in brain-damaged neurological patients, particularly in those exhibiting ‘spatial extinction’ after unilateral cortical or subcortical brain injury [40]. Patients showing spatial extinction can typically detect a single stimulus regardless of whether it is presented in the ipsilesional or contralesional hemispace. However, when presented with two stimuli concurrently, the more contralesional stimulus is ‘extinguished’ from awareness. This phenomenon can arise within each sensory modality, but can also be observed crossmodally [41,42]. For example, in right-hemisphere patients, a right visual event may extinguish awareness of a touch on the left hand that would otherwise have been felt. Di Pellegrino and colleagues [41] first observed that such crossmodal extinction of touch to the left hand by right vision is usually more pronounced when the right visual stimulus is presented close in space to the unstimulated right hand. Crossmodal extinction is often reduced if the separation between right hand and right visual stimulus is increased. It has been proposed that visual stimulation near the ipsilesional hand may boost the multisensory representation of this hand in a manner similar to the behavioral cueing studies of neurologically healthy people reviewed above, and conceivably occurs by means of multisensory neurons, such as those found in the monkey brain. Such a boost of the ipsilesional hand’s representation may be to the detriment of the other, contralesional hand, hence producing crossmodal extinction of touch on the latter (see [36] for a recent review).

To sum up thus far, multisensory spatial interactions between vision and touch have now been demonstrated at the single-cell level in animals and in the performance of neurologically healthy and brain-damaged humans. In all cases, the multisensory interactions between tactile stimulation (e.g. on the hand) and visual stimulation depend critically on the proximity of the visual stimuli to the relevant body part in external space. Thus, when posture changes, the crossmodal effects ‘remap’ in terms of the spatial combination of receptors in different modalities that need to be stimulated to produce them, while remaining relatively constant in terms of the external locations involved for the effective stimulus combinations. Such posture-based remapping may help to keep the senses spatially aligned, even though each change in posture realigns the respective receptor sheets (i.e. the sensory epithelia). As mentioned earlier, this might also be useful for the spatial control of movements based on sensory information in immediate proximity to the relevant body part, concerning objects upon which it can act.

The single-cell results concerning visual and tactile interactions that ‘remap’ in this way have, to date, involved both cortical and subcortical structures.

Which brain structures are critical for the potentially related performance effects in humans?

One approach to this question is to assess neural activity in the normal human brain using functional neuroimaging during performance of appropriate tasks. Another approach is to examine which lesions eliminate specific crossmodal effects in animal models or in patients. As a preliminary example of the latter approach, Spence and colleagues sought to determine whether the spatial remapping of crossmodal congruency effects as a function of changes in hand posture depends on cortical or subcortical structures. They tested a split-brain patient (J.W.), in whom the cortical but not the subcortical structures of the two hemispheres were disconnected by callosotomy. Behaviorally, J.W. showed remapping of crossmodal visuotactile congruency effects if the right hand was shifted to different locations within the right visual hemifield, but not when he crossed his right hand over into the left visual hemifield. This indicates that callosal communication between cortical structures is critical for this particular effect to remap between hemifields.

Rubber Hands and Virtual Bodies: Roles of Vision and Proprioception

Having uncovered effects such as those described above, which depend on the current position of the hands, one can then go on to study which sources of information about hand position are critical for this aspect of bodily representation (e.g. vision, proprioception, or both). One way to approach this problem is to provide conflicting information about hand location to vision and proprioception. In a modified version of the crossmodal congruency task, Pavani, Spence and Driver used ‘rubber hands’ for this purpose. Participants placed their own hands below an occluding screen, while making upper/lower (finger/thumb) vibrotactile discriminations and trying to ignore visual distractor lights in upper or lower positions above the occluding screen. In some conditions, a pair of rubber hands was placed on top of the occluding screen, in a similar posture to the participant’s hands below it. Crossmodal congruency effects from distractor lights upon vibrotactile judgments were significantly larger in this rubber-hand condition than in a control condition with no rubber hands present, even though participants were informed that the rubber hands were dummies and, indeed, directly saw these being placed or removed in front of themselves. Moreover, the extent of the crossmodal congruency effect correlated with the degree to which participants subjectively felt that the rubber hand seemed to belong to their own body, even though they objectively knew that this was not the case. The extent of the crossmodal congruency effect also correlated with the extent to which participants subjectively experienced that the vibrotactile stimuli were felt at the location of the dummy rubber hands. This ‘virtual body effect’ indicates that visual information about apparent hand position can have objective as well as subjective crossmodal influences on tactile judgments, even when vision conflicts to some degree with proprioception.
However, the rubber hands produced no such modulatory effect when placed in an anatomically implausible posture that was totally inconsistent with the real hands’ actual posture. Thus, while purely visual information (i.e. sight of the rubber hands) can dominate slightly discrepant proprioception, proprioception may reduce the impact of vision when the visual information about hand position is inconsistent with proprioception. In agreement with this notion, in the total absence of vision of any hands (real or dummy), proprioceptive information about current hand posture (e.g. crossed or uncrossed hands, or hands placed near versus far from one another) has been shown to modulate crossmodal interference effects. Graziano recently examined the role of visual and/or proprioceptive information about hand/arm location in controlling the spatial properties of multisensory neurons in the premotor cortex and parietal area 5 of the monkey brain. He obscured the monkey’s arm from direct view and used a stuffed dummy arm, somewhat analogous to the rubber arms used by Pavani et al., but now a lot hairier. Graziano was thus able to manipulate visual information from the dummy arm independently of proprioceptive signals concerning the actual location of the real arm. Some of the same premotor neurons showing visual RFs that shifted with the location of the visible real arm were also influenced by vision of the stuffed arm. Moreover, in analogy with the findings in humans, some neurons in parietal area 5 showed an increased neural response to vision of the dummy arm only if the dummy arm was placed in a posture and location that was anatomically plausible for the real, hidden arm of the monkey; e.g. not in cases in which the dummy hand was ‘inverted’ (i.e. with the hand pointing towards the monkey’s body) or if the ‘wrong’ dummy arm was placed above the real arm. 
These results indicate that visual information about body position seems to strongly influence ‘body-part centred’ multisensory spatial representations. These representations, at least in area 5, may even be detailed enough to incorporate visual discrimination between a left or right hand. But if the arm of a trained monkey is actively or passively moved underneath an occluding screen, so that no arm (neither real nor dummy) is visible, some remapping can still be shown to occur in the anterior bank of the intraparietal sulcus (IPS) and/or premotor cortex, with the visual RF tending to shift along with the unseen arm as its position changes. Thus, if sensory modalities are in conflict (e.g. when viewing dummy hands), plausible visual information about arm or hand location can dominate proprioception, perhaps due to the greater spatial acuity of vision. It is, however, also clear that proprioceptive/kinaesthetic information can play some role, as shown in the absence of visual information about limb position. The same point applies to the crossmodal congruency effects reported in human performance, which can show visual dominance when a dummy hand is seen in a possible location for the real hand, but can still be modulated by proprioceptive information about actual hand location under conditions of occlusion or darkness.

Finally, in a closely related but independent patient study, Farné et al. also used a rubber hand to set visual information against proprioceptive information when examining crossmodal extinction in neurological patients. Recall that in some right-hemisphere patients crossmodal extinction is reduced when visual events on the right occur further away from the right hand, e.g. if the right hand is placed out of view, behind the patient’s back. Farné et al. found that when the patient’s right hand was placed out of sight behind their back, but with a rubber hand placed in the previous position of the hand, crossmodal extinction returned to the previous high level. Once again, this visually driven crossmodal result depended on the rubber hand being placed in a plausible posture for that limb.

Modification of Visual-Tactile Spatial Interactions by Tool-Use

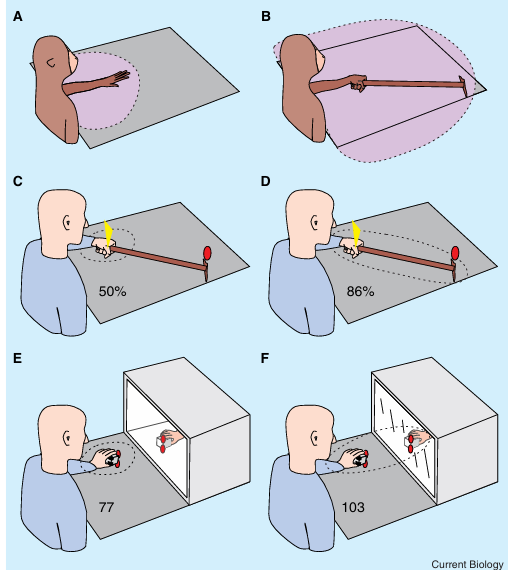

In a groundbreaking physiological study, Iriki and colleagues found that the spatial nature of visuotactile interactions at the single-cell level is modulated when a monkey actively wields a long rake with the hand in order to reach distant items of food. Iriki and colleagues recorded visuotactile neurons in the anterior bank of the IPS that had tactile RFs on the hand or arm and visual RFs nearby. Once the monkey had become skilful in using the rake as a tool to extend reachable space, just a few minutes of using the rake in this way induced an expansion of the visual RF of such neurons into more distant space. These neurons now started to respond to stimuli near the far end of the tool, which would have been unreachable without it and would have been too distant from the monkey to trigger a response from the neurons in the absence of recent tool-use. This extension of the visual RFs of multisensory neurons following tool-use seems to indicate that previous introspective, or purely speculative, claims that the ‘body schema’ can extend along a wielded tool or along frequently used objects may in fact have some correspondence to neurobiological reality. In particular, this may relate to multisensory coding of space by intraparietal neurons. It will be important to determine if similar effects can apply to premotor cortex and to other multisensory regions representing space near particular body parts. In a recent study of normal human performance, we examined whether active use of long tools could plastically alter the spatial nature of crossmodal visual-tactile congruency effects in a paradigm related to that shown earlier. We tested the impact of visual distractors at the end of long ‘tools’, which could be held in either an ‘uncrossed’ or ‘crossed’ arrangement.
We asked whether crossing the tools could reverse the impact of left versus right visual distractors, such that a right-hand visual distractor would come to have the most impact on judgments concerning left-hand touch (and vice versa) if the tools were held in a crossed posture. This would be different from the usual outcome of within-hemifield combinations leading to the greatest crossmodal interference, as found in the absence of tools. If the tools were held straight, crossmodal congruency effects were, as usual, stronger from a visual distractor on the same side as the concurrent vibrotactile target. But if the tools were held in a crossed posture instead, thus connecting the right hand to the left visual field and vice versa, crossmodal congruency effects became larger from visual distractors in the visual field opposite to the vibrotactually stimulated hand. Importantly, this change in the spatial nature of visuotactile interactions depended critically on experience in the active use of the tools; in this case, the participants crossing and uncrossing the tools repeatedly, for several blocks of trials. Our results, therefore, suggest that with prolonged use, the tool effectively becomes an extension of the hand that wields it, so that displacing its far end into the opposite hemispace has effects that are logically similar to displacing the hand itself. Although the neural basis of these results in humans remains to be established, the analogy to the conclusions of Iriki and colleagues on effects of tool-use in the monkey brain appears striking and is now beginning to be tested with neuroimaging studies in humans. Farné and Làdavas conducted a patient study even closer in procedure to Iriki and colleagues’ work on monkeys. They studied right-hemisphere patients with crossmodal extinction of touch on the left hand by a concurrent visual event near the right hand.
As usual, extinction was reduced with increasing distance of the visual events on the right side from the right hand. Patients underwent about 5 minutes of training in using a long rake with the right hand to retrieve distant objects placed in front of them under visual control. Shortly after this experience, distant right-hand visual stimuli, delivered at the far tip of the tool, now produced more extinction of left-hand touch than in the pre-training condition. This effect dissipated with time passed since wielding the tool and disappeared after 5–10 minutes, analogous to the cellular effects reported in monkeys. In an independent patient study using computerized visual and tactile stimuli, Maravita et al. found that a visual event at the distant right-hand side produced more extinction of touch on the left hand if it appeared at the far end of a stick wielded in the right hand, rather than in the absence of the stick, or in the presence of a stick that contacted the distant right visual target but did not directly contact the right hand. This particular effect did not require active use of the stick as a tool, but only that the stick brought the right hand into direct contact with the distant visual target on the same right hand side, thus rendering it reachable. Finally, while the above manipulations all increased extinction between a light on the right and concurrent touch on the left hand, Maravita and colleagues were able to show that prolonged tool manipulations that link a visual event on the right to the left hand can have the opposite effect of decreasing competitive extinction between a visual event on the right and left hand touch after right-hemisphere damage.

Apparent expansion of the representation of peripersonal space around the hand following tool-use, or when viewing the hand only indirectly via a distant mirror-reflection

(A,B) Expansion of the visual RF (pink) in multimodal intraparietal neurons of the monkey for visual stimuli before (A) and after (B) training with a rake used to reach a distant food reward. These neurons had tactile RFs on the hand/arm. (C,D) Effect of tool-use on crossmodal extinction of a left tactile stimulus (yellow arrowhead) by a right visual stimulus (red oval) in a right-hemisphere neurological patient. Correct detection of left tactile stimuli on bilateral stimulation trials (i.e. in the presence of a right visual stimulus) increased significantly after the patient was trained to collect small items scattered on the right side of his visual field using a rake with the left hand [70]. This decreased competition between stimuli on opposite sides may occur because, after training, both stimuli might now fall within the same expanded, bimodal representation (dotted ellipse), which expands from before training (C) to after training (D). (E,F) Effect of observing a mirror reflection of the hand while performing the crossmodal congruency task (analogous to 1C). (E) Participants can see visual distractors (red circles) that are placed close to a stuffed rubber hand situated inside a box and viewed through its windowed side (the participant’s hand is always hidden by an opaque screen, omitted in the illustration). (F) The hand seen in this example is the reflection of the participant’s hand, with reflections also of visual distractors nearby (the ‘window’ is in this example a mirror). The magnitude of the crossmodal congruency effect (given in milliseconds) increased significantly for visual distractors placed on the sponge held by the participant’s hand (i.e. near the body, in peripersonal space), but seen only indirectly as distant mirror-reflections (F), compared with distractors at an equivalent optical distance but actually located by the distant rubber hand placed inside the box (E), and thus outside peripersonal space.
This result, which was replicated across three experiments, suggests that the mirror reflection may have been incorporated into the multisensory representation of peripersonal space for the hand (dotted ellipses). This outcome was found despite the fact that the visual stimuli in the two situations were closely matched optically.

Coding of Visual Input about Body Parts from Mirror Reflections

Most people are familiar with viewing themselves in mirrors, often as a part of daily routines such as grooming. One interesting aspect of such mirror situations is that they can provide cases in which tactile information regarding a body part corresponds to visual stimulation that is seen at a distance. The reflection of oneself and that of the razor or hair brush being moved across the face or head is seen ‘through the looking glass’ at twice the distance between the viewer and the mirror. Thus, mirror situations have one aspect in common with the wielding of long tools; that is, they can both provide exceptions to the usual rule that only visual stimuli near one’s own body surface can correspond to tactile stimulation there. In the case of tools, tactile stimulation at the hand wielding the tool can correspond to visual object(s) across which the far end of the tool is being moved; in the case of mirrors, tactile stimulation can now correspond to visual stimulation seen indirectly in the mirror reflection at a distance. Maravita recently examined whether visual stimuli that are presented near the hands, but are seen only indirectly as distant mirror reflections, are processed as if they were falling within the peripersonal space of the hands. This was tested by using a further variation on the crossmodal congruency paradigm. For tactile judgments, a larger crossmodal congruency effect from visual distractors was found for mirror reflections of lights near the hands than for visual stimuli placed at the equivalent optical distance away, but seen directly. This applied even when the lights seen directly were close to either rubber hands (that were known not to be the participant’s own), or to the real hands of an experimental confederate, which had the same posture and location as the mirror reflections of the participant’s own hands in the mirror condition, but moved asynchronously with the participant’s hands.
This outcome suggests that the mirror reflections of lights near the participant’s hands were recoded as originating from the peripersonal space near those hands, despite having the same optical properties as distant lights placed beyond the reflecting surface. Although the participants’ hands were kept still when the critical stimuli were delivered in the above mirror experiments, one could argue that participants saw reflections of their own hands moving in the mirror condition at other points in time during the experiment, yet not when the contents of the box were observed instead. Future research might address the extent to which these crossmodal phenomena involving mirrors and video-feedback depend on the temporal synchrony between movements and visual feedback about body-part location. Another interesting question is whether the crossmodal mirror phenomena depend on extensive experience in the use of mirrors, which most adult humans have. This could be examined by manipulating the extent of experience with mirrors in nonhuman primates, while implementing not only behavioral measures of crossmodal phenomena, but also neural recordings of multisensory cellular responses.