Lecture 7 - Instrumental or Operant Conditioning

Midterm Feedback

“Except for” MC questions instilled doubt, more time-consuming

Many MC questions involved similar answers, hard to differentiate

Too many written answer questions for the time constraint

Having many choices of written-answer prompts was really good

Maybe having a review session before the exam would be beneficial — we didn’t even get to finish the sixth chapter, yet we were examined on it

Lesson Outline

Instrumental Conditioning (IC) Background

Procedures

Influencing Factors

Consequences

Associations in Instrumental Conditioning

Instrumental Conditioning Background

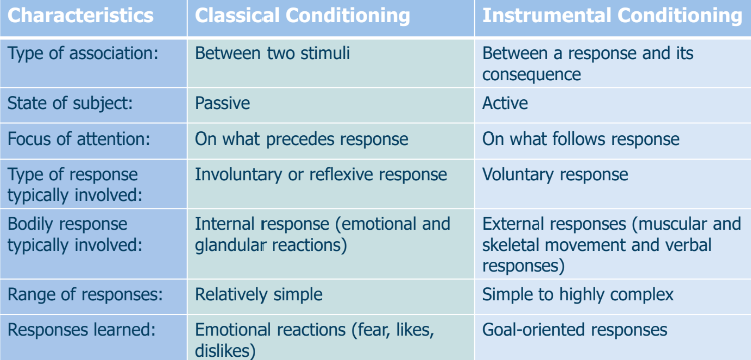

Classical Conditioning vs. Instrumental Conditioning

Classical Conditioning

Stimulus + Stimulus = Conditioned Reflexive Response

Ex: Footsteps + Food = Salivation to Footsteps

Instrumental Conditioning

Voluntary Behaviour + Consequence = Increase/Decrease in Voluntary Behaviour

Ex: Biting one’s nails + Punishment = No More Nail-biting

Commonalities and Differences:

Resulting behaviour is reflexive in CC and voluntary in IC

Basic IC Procedure

In instrumental conditioning, voluntary responses are modified through the following steps:

The organism ‘reacts or behaves’

A behaviour modification technique is applied

Consequence: The reaction or behaviour either occurs more frequently or is reduced/stopped.

Note: IC can produce complex behaviours.

Definitions

Instrumental behavior: Behavior that occurs due to its previous role in producing consequences (e.g., Study hard to get an A+).

Instrumental conditioning: The procedures developed to study instrumental behavior through reinforcement and punishment. Examples of instrumental behavior:

Turning the key to start a car.

Pulling the handle on a slot machine to win.

Driving too fast leads to a speeding ticket.

Touching an electric fence results in a shock.

Background

A type of learning in which the consequences of behaviour tend to modify that behaviour in the future.

Rationale:

Behaviours that are rewarded or reinforced tend to be repeated.

Behaviors that are ignored or punished are less likely to be repeated.

Early Studies on Instrumental Conditioning

Thorndike’s Early Studies

Edward L. Thorndike (1874-1949):

The first serious theoretical analysis of instrumental conditioning

Initially, a lot of behaviours were tried out

Animal tracks outcomes of behaviours

S → R → O

In context (S), response (R) produces outcome (O)

This knowledge guides future behaviours:

Behaviours with positive outcomes increase

Behaviours with negative outcomes decrease

Thorndike’s puzzle boxes:

Cool, but there are some methodological problems:

Have to repeat trials over and over, resetting animal and device

Cutoff? What is the worst performance?

Decreases with learning

Hard to compare across animals, trials

How do you generate a prediction from latencies?

Instrumental Conditioning Procedures

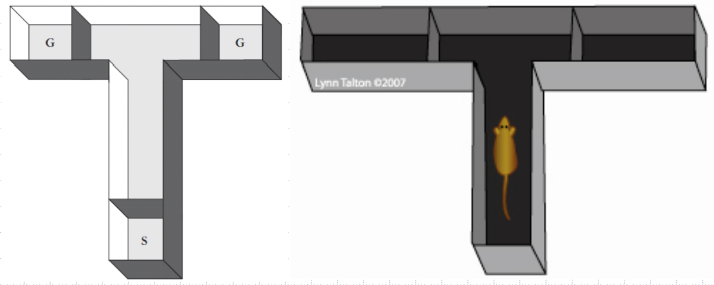

Discrete-trial Procedures

Puzzle Boxes

Maze Learning

Runway Maze (aka Straight-Alley Maze)

Stick rat in S-box (start box) for them to get to G-box (goal box)

Reward for rats is usually Froot Loops; they love ’em

T-Maze

Used for memory studies and other aspects

One of the two ends holds a reward. When put back into the start box, rats demonstrate learning (e.g., make the same turn to the correct G-box)

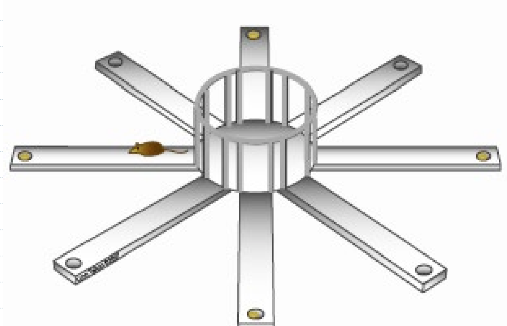

8-Arm Radial Maze

Receive different rewards at different times

Typically four feet off the ground (rats don’t like heights, but do go to ends of arms if there is a good reason for it — like a reward)

Free Operant Procedures

The operant response is defined in terms of its effect on the environment

E.g., your actions alter the environment in some way

Different types of operant responses:

1. Lever-press

Rats learning lever-pressing

2. Chain pull

3. Nose-poke

Rats poking something with nose

4. Peck

Pigeons pecking something

What is the dependent variable?

1. Response-rate

2. Total number of responses

3. Latency to respond

B.F. Skinner and the Skinner Box

Skinner was considered the leading authority on IC

Was influenced by Thorndike

Skinner invented the “Skinner Box” to test IC through shaping

Ex: One type of chamber trains rats to bar-press for rewards

The Initial Learning

IC involves learning familiar responses in new situations or in new ways

Example: Learning where and what to run for

Rats do not need to learn HOW to run

Rats need to learn WHERE to run, WHERE to turn, and WHAT they will find at the end

Constructing new responses from familiar components

Example: To press a lever, rats have to combine various familiar behaviours

Raising their paws, standing on hind legs, etc.

Shaping

Reinforces any movement in the direction of the desired response

Rewards gradual successive approximations

Quicker than waiting for the response to occur and then reinforcing it

Used effectively to condition humans and many types of animals

Ex: parents and children, teachers and students, coaches and athletes

What is shaping?

It involves taking what is known by organism and modifying it in different ways — it’s not about teaching brand new behaviours, but modifying existing behaviours.

What is successive approximation?

In a lever-pressing experiment with rats, you could wait for dumb luck until the rat presses the lever for the first time. Or you can reward successive approximations to SPEED UP THE LEARNING PROCESS. For example, give the rat rewards for simply looking in the direction of the lever, which increases the probability of facing the lever. After it does that over and over again, we up the ante: we withhold the reward until the rat APPROACHES the lever, and then we see the probability of approaching the lever increase. Eventually the rat gets to the lever and nothing happens until the next step, maybe rewarding TOUCHING the lever.

Kid spelling example: a kid wants to say a word, so you reward them each time they say a letter, which increases the likelihood of the kid actually saying the whole word.
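The successive-approximation procedure described above can be sketched as a short simulation. This is only an illustrative sketch, not a model from the lecture: the step names, the `shape` function, and the `needed` threshold (how many rewarded responses occur before the criterion is raised) are all assumptions.

```python
# Ordered approximations to the target response (hypothetical step names).
STEPS = ["look at lever", "approach lever", "touch lever", "press lever"]


def shape(observed, needed=2):
    """Return the indices of observed behaviours that earn a reward.

    A behaviour is rewarded only if it meets or exceeds the current
    criterion. After `needed` rewards at the current criterion, the
    criterion moves to the next step (assumed rule, for illustration).
    """
    criterion = 0          # index into STEPS: start with the easiest step
    rewards_at_level = 0   # rewards earned since the criterion last changed
    rewarded = []
    for i, behaviour in enumerate(observed):
        level = STEPS.index(behaviour)
        if level >= criterion:
            rewarded.append(i)
            rewards_at_level += 1
            if rewards_at_level == needed and criterion < len(STEPS) - 1:
                criterion += 1        # "up the ante"
                rewards_at_level = 0
    return rewarded


session = [
    "look at lever", "look at lever",    # rewarded twice, criterion rises
    "look at lever",                     # no longer enough: reward withheld
    "approach lever", "approach lever",  # rewarded twice, criterion rises
    "touch lever",                       # meets the new criterion
]
print(shape(session))  # indices of the rewarded behaviours
```

The point of the sketch is that the same behaviour (looking at the lever) is rewarded early in training and ignored later, once the criterion has moved closer to the target response.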

Shaping and Chaining

Shaping:

Shaping through successive approximation builds a complex R incrementally

Initially, the contingency is introduced for simple behaviour (R)

As the rate of R improves, the contingency is moved to a more complex version of R

Gradually, it builds a complex R that the animal would never spontaneously produce

Chaining:

Chaining builds complex R sequences by linking together S→R→O (if S, then R, leads to O) conditions

Initially, train the animal to pick up an object

Next, reward it for picking it up and then throwing it

It allows a series of behaviours (as opposed to shaping, which simply elaborates on a simple response)

Shaping and chaining can be used together to train animals to complete incredibly complex behaviours. Both techniques require skill and patience from the trainer.

Keep an animal motivated and interested

Select proper training sequence

Cannot move too fast

How to Get a Rat to Lever Press

IC in the Skinner Box

Outcomes (O):

± food delivery

± shock through wires in the floor (punishment)

Behaviour (R): rate of lever pressing

Context (S): light that signals box is “on”

Note that the animal is “free” in the chamber, with no experimenter intervention

Free-operant learning

Also, many possible contingencies can be introduced

| | Reinforcement | Punishment |
| --- | --- | --- |
| Positive | Press lever (R) → GET FOOD | Press lever (R) → GET SHOCK |
| Negative | Press lever (R) → STOP SHOCK | Press lever (R) → STOP FOOD |

Structure of the IC Skinner Box Experiment

Initially, tries many things; eventually, accidentally presses the lever, produces a positive effect

Now starts hanging around the lever, accidentally presses it again

Rat has learned a contingency: if light on (S), pressing lever (R) → food (O); spends much of its day pressing and eating

Basic Pattern of IC

Generalizing & Discrimination

Influencing Factors

Quality of the Outcome

Appetitive stimulus: ‘pleasant’ event or outcome in the context of IC

Aversive stimulus: ‘unpleasant’ event or outcome in the context of IC

Relationship Between the Instrumental Behaviour and the Outcome

Positive contingency: The instrumental response causes an outcome/stimulus to appear

Negative contingency: The instrumental response causes a stimulus to disappear or be eliminated
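The two factors above (type of outcome and type of contingency) combine into the four IC procedures. A minimal lookup sketch follows; the function names `classify_procedure` and `effect_on_behaviour` and the string labels are assumptions made for illustration, not terminology from the lecture.

```python
def classify_procedure(stimulus, contingency):
    """Name the IC procedure for an outcome type and a contingency.

    stimulus:    "appetitive" or "aversive"
    contingency: "positive" (the response makes the stimulus appear) or
                 "negative" (the response makes the stimulus disappear)
    """
    table = {
        ("appetitive", "positive"): "positive reinforcement",
        ("aversive", "positive"): "positive punishment",
        ("aversive", "negative"): "negative reinforcement",
        ("appetitive", "negative"): "negative punishment",
    }
    return table[(stimulus, contingency)]


def effect_on_behaviour(procedure):
    """Reinforcement increases responding; punishment decreases it."""
    return "increases" if procedure.endswith("reinforcement") else "decreases"


for stimulus in ("appetitive", "aversive"):
    for contingency in ("positive", "negative"):
        name = classify_procedure(stimulus, contingency)
        print(stimulus, contingency, "->", name, "->", effect_on_behaviour(name))
```

Note that "positive" and "negative" describe the contingency (stimulus appears or disappears), not whether the outcome is pleasant.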

Magnitude of Reinforcement

As magnitude increases:

Acquisition of a response is faster

Rate of responding is higher

Resistance to extinction is greater

Ex: people work harder for $30/hr than $10/hr

Immediacy of Reinforcement

If reinforcement is immediate, responses are conditioned more effectively

Ex: Addiction to drugs can happen quickly because the euphoric effects are felt almost instantly

As a rule, the longer the delay in reinforcement, the more slowly the response will be acquired

Ex: Eating habits are hard to change because of the long delay between eating better and the payoff (better health, weight loss)

Note: For rats, the delay between their behaviour and the reward or punishment should be one minute at most

Level of motivation

Higher motivation leads to faster learning

Skinner found maximum motivation occurred when rats were food deprived for 24hrs — makes the rats more interested and motivated to obtain food

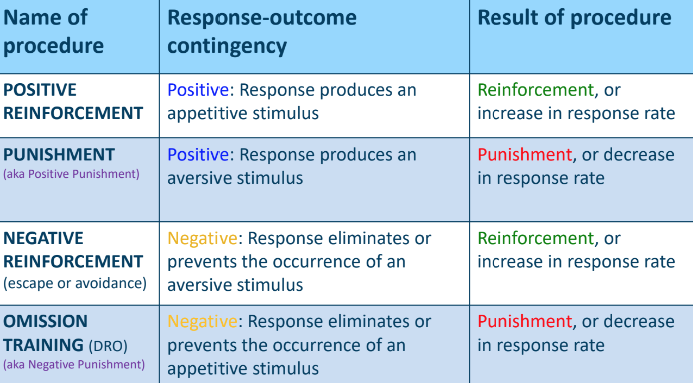

Consequences

Changes in instrumental behaviour are determined by the nature of the outcome, and whether or not the outcome is presented or eliminated

Reinforcement: Where the relationship between the response (R) and the outcome (O) increases the probability of the response occurring

Punishment: Where the relationship between the response (R) and outcome (O) decreases the probability of a response occurring

Reinforcement

Anything that strengthens a response (or increases the probability that the response will occur)

Primary and Secondary Reinforcers

Primary reinforcers fulfill basic physical needs for survival

Do not depend on learning

Ex: food, water, termination of pain

Secondary reinforcers are acquired or learned by association with other reinforcers

Ex: money, praise, awards, good grades

Punishment

Anything that suppresses a response (or decreases the probability that the response will occur)

Types of Consequences and Their Procedures

Four main scenarios outline the consequences of behaviour:

Positive Reinforcement:

Behaviour produces an appetitive stimulus

Probability of behaviour increases

Contingency is positive

Human Example: Slot machines

Experimental Procedure: Lever-pressing for food

Positive Punishment (Punishment)

Behaviour produces an aversive stimulus

Probability of behaviour decreases

Contingency is positive

Human example: ticket after speeding

Experimental example: rats stop pressing a lever when pressing produces shock

etc…

Instrumental vs. Classical Conditioning

Associations in IC

Associative Structure of Instrumental Conditioning

Originated with Thorndike

Role of Pavlovian mechanisms in instrumental conditioning

Focus on individual responses and their stimulus antecedents and outcomes (molecular approach)

REMINDER: If S, Then R, Produces O (if you find yourself in a situation with particular stimuli, then a specific response will lead to a particular outcome)

Thorndike’s Law of Effect: S-R Learning

If a response in the presence of a stimulus results in a satisfying event then the S-R association is strengthened. If the response is followed by an annoying event then the S-R association is weakened.

The reinforcer (O) serves to ‘stamp in’ the S-R association
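The "stamping in" idea can be written as a simple update rule on a single S-R strength. This is a hedged sketch only: Thorndike gave no such equation, and the function name `law_of_effect`, the 0-to-1 strength scale, and the `step` size are assumptions chosen for illustration.

```python
def law_of_effect(strength, outcome, step=0.1):
    """Update an S-R association strength after one trial.

    outcome: "satisfying" strengthens the association, "annoying" weakens
    it, and anything else leaves it unchanged. The result is kept between
    0.0 and 1.0 (an assumed scale, not part of Thorndike's statement).
    """
    if outcome == "satisfying":
        strength += step
    elif outcome == "annoying":
        strength -= step
    return min(1.0, max(0.0, strength))


strength = 0.5
for outcome in ["satisfying", "satisfying", "annoying"]:
    strength = law_of_effect(strength, outcome)
    print(outcome, round(strength, 2))
```

The only thing stored is the S-R strength. Nothing about the outcome itself is remembered, which mirrors the S-R view in which O is a means of learning rather than something that is learned about.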

Motivation for instrumental behaviour:

Activation of the S-R association upon exposure to contextual stimuli (S), in the presence of which the response was previously reinforced

No learning about ‘O’ or ‘S-O’ or ‘R-O’

The O was not learned about; rather, it was a means of learning

THORNDIKE WAS NOT CORRECT — but he wasn’t entirely wrong either. S-R is learned about, it can be produced independently of what the O is. It’s really applied when overlearning (or habitual learning) occurs.

Back in the day Dean would smoke. He would light up in particular smoking contexts — it became habitual.

So O (outcome) MATTERS!

Reward expectancy:

Can the expectancy of a particular outcome (S-O) modulate instrumental behaviour?

Does the expectancy of a certain outcome drive the response?

Earliest theory — Hull (1930), Spence (1956):

Two factors motivate the instrumental response (TWO PROCESS THEORY):

S-R Association

The stimulus comes to evoke the response directly

S-O Association

Response is motivated by expectancy of reward (classical conditioning occurs here — context reflexively makes organism expect a reward)

The Modern Two-Process Theory

Rescorla & Solomon (1967)

S-O Association (Pavlovian Learning) → Conditioned, central emotional state (positive or negative based on the reinforcer) → Response

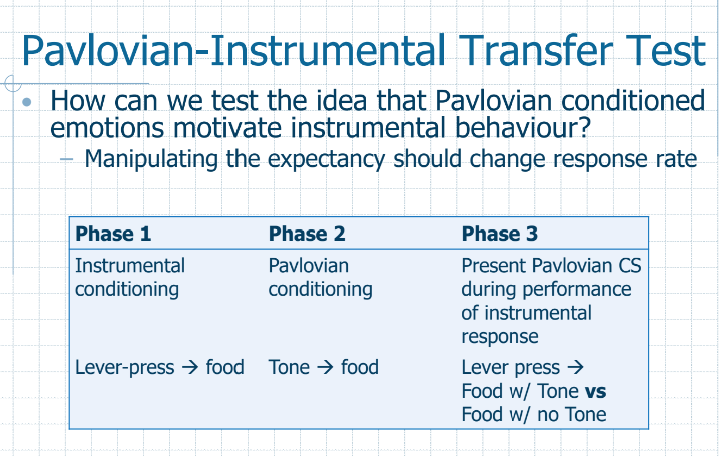

Pavlovian-Instrumental Transfer Test

Phase 1: Lever-press → food learning (instrumental conditioning)

Phase 2: Tone → food learning (classical conditioning)

Phase 3 (Test Phase): the organism is given the opportunity to produce instrumental responding (with a lever present in the environment). Sometimes the tone (CS) plays while the lever is available and sometimes it doesn’t. Instrumental responding increases when the CS is present vs. when it’s not.

Conclusion — CS produces a reflexive conditioned expectancy in the organism, which makes the organism work harder (press the lever harder)

PIT in Humans…

Handgrip was stronger when the purple stimulus was present

Participants didn’t report any difference: they didn’t know they were squeezing any differently when the CS was present in the third phase vs. when it wasn’t

Conditioned Emotional State or Reward-Specific Expectancy

Does classical conditioning influence instrumental behaviour via a positive or negative emotional state (based on reinforcer valence) or do subjects acquire specific expectations of the reinforcer?

Is it any predicted reward that will increase responding, or is it a particular reward? In the transfer test, the reward was the same in both phases. Could any work towards a reward in phase 1 combine with any prediction of reward in phase 2?

The Experiment:

Phase 1: Instrumental Conditioning

Lever-pressing leads to chocolate reward

Chain-pulling leads to cheese reward

Phase 2: Classical Conditioning

Yellow light predicts when chocolate reward is given

Red light predicts when cheese reward is given

Phase 3: Test Phase

When lever-pressing, sometimes yellow light would be on, sometimes red light would be on, and sometimes neither would be on

When chain-pulling, sometimes yellow light would be on, sometimes red light would be on, and sometimes neither would be on

Explanation:

Lever pressing only increased when the yellow light was on (i.e., the effect was specific to the reward it was working for)

When the red light was on, it did not increase

When the expectancy of reward and working towards reward are the same, effort in instrumental responding increases

If you predict cheese, you’re not gonna work harder to get chocolate (they are not generalizing across rewards)
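The reward-specific result can be summarised as a matching rule: a cue boosts a response only when both point to the same reward. A minimal sketch, where the dictionary names and `responding_increases` are assumptions for illustration. The notes only report the lever-press results, so the chain-pull case is an inference from the same rule.

```python
# Phase 1 (instrumental): which reward each response earns.
RESPONSE_OUTCOME = {"lever press": "chocolate", "chain pull": "cheese"}

# Phase 2 (classical): which reward each light predicts.
CUE_OUTCOME = {"yellow light": "chocolate", "red light": "cheese"}


def responding_increases(response, cue=None):
    """True if the cue predicts the same reward the response works for."""
    if cue is None:
        return False  # no light on: baseline responding, no boost
    return RESPONSE_OUTCOME[response] == CUE_OUTCOME[cue]


for cue in ("yellow light", "red light", None):
    print("lever press with", cue, "->", responding_increases("lever press", cue))
```

If a general emotional state were enough, both lights would boost lever pressing, because both predict something good. The matching rule captures why only the yellow light does.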

R-O Associations in Positive Reinforcement

The instrumental response

Stereotypy vs. behavioural variability

Relevance (belongingness and instinctive drift)

The outcome of the response

Quality and quantity

Positive and negative contrast

The relation or contingency between the response and outcome

Temporal contiguity

Contingency

The Instrumental Response

What is this R that is learned?

Initially, thought to be a rote motor program

However, if the normal motor program is blocked, the animal will use other methods to achieve the same ends

Ex: wading/swimming; pressing lever with nose

R is a “behavioural unit”

Not a single behaviour but a class of behaviours producing an effect

Some cognitive psychologists would call it a goal or intention

Stereotypy vs. Response variability

It is possible to maintain variability of responses using reinforcement

However, unless variability is explicitly reinforced, responding will become more stereotypical

Thorndike’s belongingness

Easier to train responses that ‘belong’ with the reinforcer

e.g., cannot train yawns or scratching as an escape response

Breland & Breland’s instinctive drift

Extra responses that are performed instinctively because they are related to the reinforcer

They compete with the response required by the training procedure

e.g., cannot teach raccoons to drop coins in a box

The Instrumental Reinforcer

Quantity of the reinforcer (Canadian dollars vs. rupees)

Quality of the reinforcer (Brussels sprouts vs. ice cream)

Quantity and quality of reinforcer example:

Food-deprived rats were offered different amounts of different foods, and the experimenters measured how much instrumental responding the rats would display for each

For acidic food, a larger amount does not increase instrumental output

For neutral food, rats prefer a medium amount over a small one, but a larger amount adds nothing further

For sweet food, output increases as the amount increases

RECAP:

S-O association is reward expectancy (make prediction of outcome — e.g., prediction of reward)

Example: Dean’s nephew. The kid recognized the hockey arena and predicted that popcorn was coming, so he immediately started thinking ‘reward’ after being exposed to that particular environment. This is an S-O association, or reward expectancy (an element of classical conditioning: a reflexive association between stimulus and outcome)

Note that the influence of reward expectancy is that it increases the instrumental response to receive the reward, unless the reward expected and the reward earned by the instrumental response don’t align (see the Pavlovian-instrumental transfer test)

R-O association is when we learn the association between making a response and the outcome it leads to. We learned this with positive reinforcement (the response leads to the presence of something good, which goes back to fuel our response more).

Response itself is being learned

Reinforcer or outcome is being learned

The link between them is also learned

Does prior experience with a reinforcer influence IC?

Shifts in reinforcer quality and quantity

Example: food deprived rats performed instrumental response for food

Phase 1: Groups 1 and 2 received a small food reward (2 pellets) after the response; they ran at the same pace as groups 3 and 4

Phase 1: Groups 3 and 4 received a large food reward (22 pellets) after the response; they ran at the same pace as groups 1 and 2

Phase 2:

Group 1 continues to receive 2 food pellets

Ran at the same pace in phase 2 as in phase 1 (line of best fit accounts for variability)

Group 3 continues to receive 22 food pellets

Ran at the same pace in phase 2 as in phase 1 (line of best fit accounts for variability)

Group 2 (SL group, small-large group) now receives 22 food pellets

Ran faster in phase 2 than in phase 1 — they are happy that they’re getting more; hell yeah I am running faster

Group 4 now receives 2 food pellets

Ran slower in phase 2 than in phase 1 — they are used to putting in effort for large food amount, so they reduce their instrumental response (it’s still a reward, but it’s a decrease in instrumental behaviour because prior experience matters — 2 is better than 0, but worse than 22)

Conclusion: prior experience DOES matter — positive contrast (experienced in group 2) and negative contrast (experienced in group 4)

In other words, you shouldn’t ONLY look at the magnitude of the reward — you should also look at prior experiences

The Response-Reinforcer Relation

Understanding the relationship between a response and its consequence is critical for efficient instrumental behaviour

First, temporal relation: The time between the response and the appearance of the reinforcement

E.g., the longer the delay between the response and the reinforcer, the harder the learning

EXAMPLE:

Rats lever press for food, and food pellets are delivered after different fixed delays

Conclusion: Immediate reinforcement is most effective

Is it possible to overcome the delay effect? YES, by marking the target instrumental response: your instrumental response produces a marking stimulus that sort of reminds you that your response has been taken into account (e.g., “

Example of Marking:

Rats lever press for food, food delivered after 30-sec delay

Group 1 - no signal

Group 2 (MARKING GROUP)- 5-sec light right after lever press (the light acts as a CS, or predictive stimulus, for the reward)

Learns very quickly

Group 3 - 5-sec light right before food delivery (doesn’t learn at all: TOO MUCH OF A DELAY, AND REALLY AN EXAMPLE OF BLOCKING. THE LIGHT BEING A PERFECT PREDICTOR OF THE REWARD 5 SECS BEFORE IT’S RECEIVED BLOCKS THEM FROM UNDERSTANDING THAT THEY CAUSED THE LIGHT IN THE FIRST PLACE)

Second, response-reinforcer contingency: The extent to which the response is necessary and sufficient for occurrence of the reinforcer (causal effect)

E.g., you have to learn that you caused the reinforcer by producing the instrumental response (cause and effect)

Skinner’s superstitious behaviour: the idea that we create contingencies in our mind that don’t necessarily exist. The pigeons in Skinner’s experiment would do weird things like pecking or jumping around (repeating whatever they did the first time they received the randomly-timed reward). This is accidental/adventitious reinforcement.

Contiguity was all that mattered according to Skinner — they created their own contingency, and as long as the temporal contiguity is there, then reinforcement can occur

However, contrasting evidence suggests that contiguity is not the only explanation (some dude re-did Skinner’s pigeon experiment):

Similar behavioural responses developed in many different pigeons

Food delivery increased strength only of terminal responses

Periodic presentation of reinforcer produces behavioural regularities based on the interval

conclusion — specific types of behaviours were reproduced that had food-getting qualities

Why are high terminal and low interim responses observed?

Periodic deliveries of food activate feeding systems and the corresponding pre-organized, species-typical foraging and feeding responses

Just after food:

Do R-O Associations Exist?

Instrumental devaluation

Phase 1: if you push a rod to the left, you get chocolate, and to the right, you get cheese

Phase 2: you devalue the reinforcer of one of them (e.g., overload them with cheese)

Phase 3: the rat will only produce the instrumental response that leads to the OTHER reinforcer (in this case, chocolate). That can only be explained by R-O associations
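The logic of the devaluation test can be sketched as a choice rule that consults the current value of each response's outcome. The numeric values and the function name `choose_response` are assumptions for illustration only; the study reports which response the rat makes, not numbers like these.

```python
def choose_response(response_outcome, outcome_value):
    """Pick the response whose outcome currently has the highest value.

    Making this choice requires knowing which outcome each response leads
    to, which is exactly what an R-O association provides.
    """
    return max(response_outcome, key=lambda r: outcome_value[response_outcome[r]])


# Phase 1: each response earns a different reward.
response_outcome = {"push left": "chocolate", "push right": "cheese"}
outcome_value = {"chocolate": 1.0, "cheese": 1.0}  # hypothetical values

# Phase 2: devalue cheese (e.g., by overloading the rat with it).
outcome_value["cheese"] = 0.0

# Phase 3: the rat favours the response tied to the still-valued reward.
print(choose_response(response_outcome, outcome_value))
```

A pure S-R learner has no `response_outcome` mapping to consult, so changing the value of cheese could not change which way it pushes the rod.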

Colwill & Rescorla (1986):

Associative Structure of Instrumental Conditioning

Hierarchical S(R-O) conditioning — about knowing WHEN the R-O association exists

S activates R (Thorndike, habitual behaviour)

S also activates R-O association

EXAMPLE: Response (not being a brat) leads to outcome (popcorn) only when exposed to a certain stimulus (the hockey arena)

Context lets you know that R-O association is now active