Data Gov

details

lockdown browser

3.25 pm

120 mins

location classroom

types of Qs

mcqs: single correct and multiple correct, fill in the blanks & T/F - 35 qs (2 points each)

short answer (2-3 sentences is enough) - 3 qs (10 points each)

sample scenario → respond with different view points

source

readings

slides and notes

in-class discussions

notes

big data

5 Vs

velocity - speed at which data is generated (real-time trading data, monthly data, yearly data)

volume - amount of data generated (youtube, instagram, snapchat, sales data)

variety - types of data (structured, unstructured)

veracity - trustworthiness of data (source, credibility)

value - what value does the data add to the organisation and customer (shareholders)?

big data v small data

unstructured v structured data v semi-structured data

steps of big data solutions

data ingestion

data storage

data processing

algorithms

process/set of rules to be followed in calculations or operations to solve a problem

SEEP framework

security

Confidentiality: allowing only authorised people to access sensitive information

Integrity: consistency, accuracy and trustworthiness of data

Availability: data is always made available when authorised people need it
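
integrity can be made concrete with a checksum comparison. a minimal sketch, assuming a SHA-256 digest was recorded when the data was stored; the function names and the sample record are hypothetical, not from the slides.

```python
import hashlib


def fingerprint(data: bytes) -> str:
    """Return the SHA-256 hex digest of the data."""
    return hashlib.sha256(data).hexdigest()


def is_intact(data: bytes, expected_digest: str) -> bool:
    """True if the data still matches the digest recorded earlier."""
    return fingerprint(data) == expected_digest


# hypothetical record, used only for illustration
original = b"patient_id=17;balance=250.00"
stored_digest = fingerprint(original)

# the same record after an unauthorised change
tampered = b"patient_id=17;balance=950.00"

print(is_intact(original, stored_digest))  # unchanged data matches
print(is_intact(tampered, stored_digest))  # modified data does not
```

a checksum only detects changes; it does not say who made them or prevent them, which is where access control and backups come in.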

security | privacy |

encryption, access control, network security, breach response | consents, policies, data removal, discovery and classification |

how do data privacy policies get enforced? | what data is important? why? |

threat types

external - hacker (black, gray, white hat), expert, intruder

internal - compromised, oblivious, negligent, malicious, professional

tools - malicious code, scanners, penetration attempts, denial of service, social engineering, ransomware

defenses against ransomware

backups

encryption

mitigation methods

technical controls - security system maintenance, penetration testing, system monitoring, backups, access controls, encryption, anti-malware measures

user actions - strong passwords, install and update security software, be vigilant

organizational controls - training, ensuring safety protocols are up to standard, minimize access, breach mgmt

legal mechanisms - GLBA (financial), HIPAA (health), FERPA (academic), GDPR (EU regulation that applies to any company processing data of people in the EU; the EU data protection directive was its predecessor), privacy act (federal data)

herley’s premise

users ignoring security advice is justified (a rational response)

overwhelmed users

benefit is perceived to be moot

advice helps users mitigate direct costs, but increases indirect costs

economics

data quality

non-rivalrous

non-excludable

monetizing data

new revenue streams

direct sales

data sharing agreements

targeted advertising

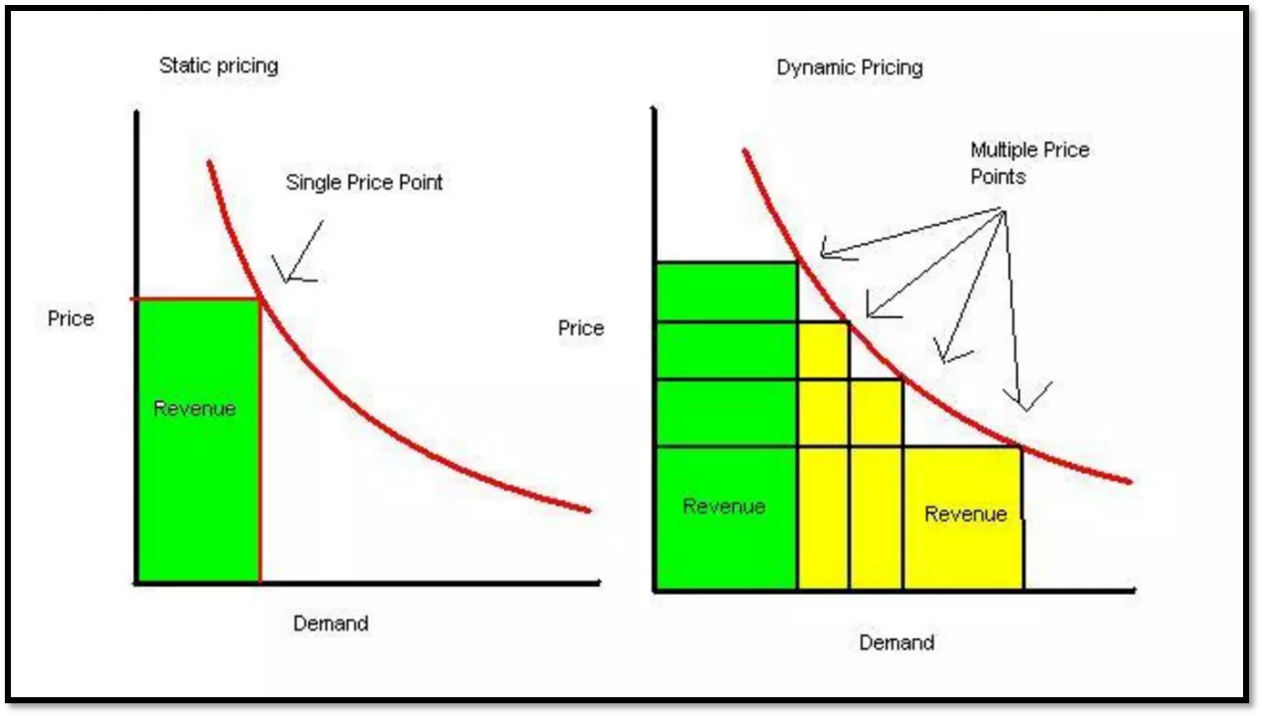

pricing strategies

barter system - oldest form, exchange of goods & serv, incurs taxes

fixed pricing (the price tag) - same price for all buyers, very stable

automated dynamic pricing - data is more imp, very dynamic, price changes
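
the contrast between fixed and automated dynamic pricing can be sketched in a few lines. this is a toy rule, assuming price scales with the demand/supply ratio within a floor and a cap; the function name, the limits and the numbers are all made up for illustration and are not how any particular company prices.

```python
def dynamic_price(base_price: float, demand: int, supply: int,
                  floor: float = 0.5, cap: float = 2.0) -> float:
    """Scale the base price by the demand/supply ratio, within limits."""
    if supply <= 0:
        multiplier = cap  # nothing left to sell: charge the maximum
    else:
        multiplier = demand / supply
    # keep the multiplier between the floor and the cap
    multiplier = max(floor, min(cap, multiplier))
    return round(base_price * multiplier, 2)


fixed_price = 20.0  # the price tag: identical for every buyer
for demand, supply in [(50, 100), (100, 100), (300, 100)]:
    print(demand, supply, fixed_price, dynamic_price(fixed_price, demand, supply))
```

the fixed price never moves, while the dynamic price depends entirely on the demand and supply data fed in, which is why data matters more under this strategy.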

business value

investment in hardware, software, training, management for big data tools

initial and operational cost

outcome of using big data

customer spending increases

research and analytics, accuracy better

predictive analytics

better strategizing

(increasing revenues, lowering costs, increasing productivity, reducing risk)

efficiency - assumption that a price is reflective of everyone in the market having all available information

data brokers

companies/individuals that sell personal information about people to other companies

websites track your information

types of data we share

what companies do with BD v. what companies say about BD

ethics

outcomes based reasoning

military decision making, public policies

‘right’ decisions, actions and policies optimize the balance of benefit over harm

hiroshima bombing, lifeboat on titanic

utilitarianism

focused on outcomes/consequences

utility of a policy is measured by how much it promotes the good

but how can good be measured?

ignores human rights, justice

principle of utility: greatest happiness of all those whose interest is in question is universally desirable

in short ‘the greatest good’

long term or short term happiness

deontology

greek deon = duty

adherence to rules, actions taken to achieve a goal

consequences do not matter

the golden rule: do unto others as you want them to do to you

trolley problem

virtue ethics

if act furthers a virtuous trait, it is ethical

happiness

excellence

character

12 moral values - honesty, self control, humility, justice, courage, empathy, care, civility, flexibility, perspective, magnanimity, wisdom

privacy

right to be left alone, to control, manage, edit and delete information about themselves and decide the extent of spread of their information

privacy - what info goes where (identity, location, activity, health records, employment records)

security - ensures info doesn't go anywhere it isn't supposed to; helps enforce privacy policies

fair information practices (Cant Ur Dick Please So I Always Orgasm?)

collection limitation

use limitation

data quality

purpose specification

security safeguards

individual participation

accountability

openness

online social networks - easy access, readily available; one point of attack, data is not secure

managing privacy:

anonymization

deanonymization - vulnerable to conclusions derived from data aggregation

encryption - symmetric v. asymmetric

tools/ways

clear internet browser, OS

TOR, JAP, Hide the IP (hides info)

Privacy Bird, Guard, Bugnosis (deduces complex privacy statements)
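
why anonymized data is vulnerable to aggregation: a minimal sketch of a linkage attack, assuming an "anonymized" dataset still carries quasi-identifiers (zip, birth year, sex) that also appear in a public list with names. all records, names and field choices below are made up for illustration.

```python
# "anonymized" health records: names removed, quasi-identifiers kept
health_records = [
    {"zip": "10001", "birth_year": 1990, "sex": "F", "diagnosis": "asthma"},
    {"zip": "10001", "birth_year": 1985, "sex": "M", "diagnosis": "diabetes"},
    {"zip": "10002", "birth_year": 1990, "sex": "F", "diagnosis": "depression"},
]

# a public list (for example a voter roll) with names and the same fields
public_records = [
    {"name": "person A", "zip": "10001", "birth_year": 1990, "sex": "F"},
    {"name": "person B", "zip": "10001", "birth_year": 1985, "sex": "M"},
    {"name": "person C", "zip": "10002", "birth_year": 1990, "sex": "F"},
    {"name": "person D", "zip": "10002", "birth_year": 1990, "sex": "F"},
]

QUASI_IDENTIFIERS = ("zip", "birth_year", "sex")


def key(record):
    """Combine the quasi-identifiers into one lookup key."""
    return tuple(record[field] for field in QUASI_IDENTIFIERS)


def reidentify(anonymized, public):
    """Link records whose quasi-identifiers match exactly one named person."""
    names_by_key = {}
    for person in public:
        names_by_key.setdefault(key(person), []).append(person["name"])
    linked = []
    for record in anonymized:
        candidates = names_by_key.get(key(record), [])
        if len(candidates) == 1:  # a unique match means re-identification
            linked.append((candidates[0], record["diagnosis"]))
    return linked


print(reidentify(health_records, public_records))
```

the third record is not linked because two people share its quasi-identifiers; the more people share the same combination, the harder re-identification becomes.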

PRIVACY ACTS

COPPA - childrens online privacy protection act

for children

gives parents control over info collected about their children (under 13)

GLBA - gramm leach bliley act

for financial institutions

financial privacy rule

safeguards rule

pretexting provisions

HIPAA - health insurance portability and accountability act

for health information

patient can amend, access, place restrictions and request accountability

FERPA - family educational rights and privacy act

for students

confidentiality of student educational records

state laws

international laws

GDPR - general data protection regulation (EU)

bias

bias

prejudice in favor or against something, often in an unfair way

example: labeling a yellow watermelon as "uncommon" because it deviates from the typical red one

bias in technology

algorithms and AI: not inherently biased but reflect biases from data or design

example: job application filters biased against women in tech due to past data patterns

fault

technological determinist

technology shapes our future and determines social change

any flaws seem inherent to the technology

if an algorithm used for job applications is biased, a technological determinist would blame the algorithm's design or coding for the bias

social determinist

think about the use of the program and not the creation of the program

humans are flawed, the tools are neutral

example contd. a social determinist would argue that the bias comes from societal patterns (historical inequality) reflected in the data used to train the algorithm, not the technology itself

types

Every Dick Can Try Pleasing Me, I’m Extra Sexual

bias | meaning | example |

preexisting bias | bias that exists before system creation, often from societal norms or values | search engine results for CEO favor images of men due to historical underrepresentation of women |

technical bias | arises from constraints or limitations in tools, algorithms, or data structures | image recognition struggles to classify images from regions underrepresented in training data |

emergent bias | develops after system deployment due to unexpected user interactions or societal changes | translation app starts generating gendered translations based on user behavior over time |

data-driven bias | bias from flaws or imbalances in the data used to train models | selection bias: urban data leads to underrepresentation of rural populations |

interpretation bias | errors from human assumptions or perspectives when analyzing data | assuming correlation implies causation, e.g., linking ice cream sales to crime rates in summer |

evaluation bias | occurs when evaluation datasets or benchmarks do not represent the real world | facial recognition software performs poorly for darker-skinned women due to lack of representation |

class imbalance bias | occurs when certain classes are overrepresented or underrepresented in training data | medical diagnosis models perform poorly on rare diseases due to limited training examples |

sampling bias | arises when collected data is not representative of the true population | imagenet dataset: 45% images from the US, only 1% from china, skewing towards western contexts |

measurement bias | arises when proxies for variables are flawed or noisy | sat scores as proxies for intelligence, reflecting access to resources more than innate ability |

impact of bias across the pipeline

data collection: imbalanced datasets skew outcomes

model design: lack of fairness metrics

training/deployment: feedback loops perpetuate bias

evaluation: ignoring subgroup disparities

interpretation: human errors reinforce bias

mitigating bias

solutions:

remove or reweight problematic signals in datasets

develop robust metrics to evaluate biases

implement diverse, inclusive benchmarks for training models

example: increasing representation of minoritized groups in datasets to improve fairness
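
"reweight" can be illustrated with inverse-frequency class weights. a minimal sketch, assuming each class is weighted by n_samples / (n_classes * class_count) so that rare classes count for more during training; the labels and counts are made up.

```python
from collections import Counter


def inverse_frequency_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n_samples = len(labels)
    n_classes = len(counts)
    return {label: n_samples / (n_classes * count)
            for label, count in counts.items()}


# imbalanced toy dataset: 90 common cases, 10 rare ones
labels = ["common"] * 90 + ["rare"] * 10
weights = inverse_frequency_weights(labels)
print(weights)

# after weighting, both classes carry the same total weight
print(weights["common"] * 90, weights["rare"] * 10)
```

reweighting only rebalances what is already in the dataset; it cannot add information about groups that were never sampled.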

fairness, justice and discrimination

fairness - ensuring algorithms act in fair ways

accountability - who is responsible for automated behaviour? supervision/auditing of large scale machines

transparency - why does an algorithm behave in a way? can it explain itself?

justice - quality of being fair and reasonable

people are to be treated impartially, fairly, properly and reasonably by the law and its enforcers

no harm befalls anyone, and if it does, remedial action is taken and morally right consequences are delivered

discrimination - unjust or prejudiced treatment of people from a different group

disparate treatment

US legal term for differentially treating a protected class

requires proof not only of the treatment but also the intent to treat them differently because of the status

disparate impact

US legal term for discriminatory effects even after applying superficially neutral rules

not hiring anyone under 6 ft, knowing well enough that avg women height is less than that

no intent needed
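
disparate impact is about effects, so it can be checked from selection rates alone. a minimal sketch using the height rule above with made-up applicant counts; the 0.8 threshold is the "four-fifths" rule of thumb used in US employment guidance, which is an addition here and not from the notes.

```python
def selection_rate(selected: int, applicants: int) -> float:
    """Fraction of applicants in a group who were selected."""
    return selected / applicants


def impact_ratio(rate_group: float, rate_reference: float) -> float:
    """Ratio of a group's selection rate to the reference group's rate."""
    return rate_group / rate_reference


# made-up counts for a "must be 6 ft or taller" hiring rule
men_rate = selection_rate(selected=30, applicants=100)
women_rate = selection_rate(selected=3, applicants=100)

ratio = impact_ratio(women_rate, men_rate)
FOUR_FIFTHS = 0.8  # rule-of-thumb threshold, an assumption added here

print(round(ratio, 2), ratio < FOUR_FIFTHS)
```

nothing in the calculation refers to intent: the rule never mentions sex, yet the ratio falls far below the threshold.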

fairness is hard

to make algo less biased, remove sensitive factor

but variable could be correlated to other factors

also, it is easy to infer one factor if we have a few other factors (determine race from address, spending, etc)

COMPAS example

black people wrongly labelled high risk, white people wrongly labelled low risk

137 qs asked, no q was about race
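
the COMPAS pattern (one group wrongly labelled high risk more often, the other wrongly labelled low risk more often) corresponds to different false positive and false negative rates per group. a minimal sketch on made-up data; these are NOT the real COMPAS figures, and the group names are placeholders.

```python
def error_rates(records):
    """Return (false positive rate, false negative rate) for a list of
    (predicted_high_risk, reoffended) boolean pairs."""
    fp = sum(1 for pred, actual in records if pred and not actual)
    tn = sum(1 for pred, actual in records if not pred and not actual)
    fn = sum(1 for pred, actual in records if not pred and actual)
    tp = sum(1 for pred, actual in records if pred and actual)
    fpr = fp / (fp + tn)  # wrongly labelled high risk
    fnr = fn / (fn + tp)  # wrongly labelled low risk
    return fpr, fnr


def accuracy(records):
    """Share of records where the prediction matched the outcome."""
    return sum(1 for pred, actual in records if pred == actual) / len(records)


# made-up data: 20 people per group, 10 of whom reoffended
group_a = ([(True, False)] * 4 + [(False, False)] * 6
           + [(True, True)] * 8 + [(False, True)] * 2)
group_b = ([(True, False)] * 2 + [(False, False)] * 8
           + [(True, True)] * 6 + [(False, True)] * 4)

for name, records in [("group a", group_a), ("group b", group_b)]:
    print(name, accuracy(records), error_rates(records))
```

in this toy data both groups get the same overall accuracy, yet the kinds of mistakes differ, which is why a single accuracy number can hide unfairness.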

more examples

29% of people received credit scores that differed by at least 50 points between three credit bureaus (either algo is highly biased or arbitrary)

medical school used a program to screen job applicants - it unfairly discriminated against women and ethnic minorities (inferred from surname, POB)

husky is classified as a wolf solely based on existence of snow in the background of a picture

theories of justice

john rawls - comprehensive/principle based

society’s basis is a set of tacit agreements - the social contract

agreed upon principles must not be dependent on one's place in society

believed that rational, self interested people with similar needs would choose these 2 principles to guide their interactions

principle of equal liberty - equality in the assignment of basic rights and duties (is the opportunity to receive open to everyone with the same criteria)

difference principle - principle of fair equality in opportunity - is the system not harming the least fortunate (defined before the system was implemented)

emphasizes redistributing wealth to benefit the least well off in society

robert nozick - comprehensive/principle based

inequalities are seen as a fact of life

is there deception or fraud or manipulation in the acquisition of data used in the program

deception or fraud in the use of the program

fairness of process by which property is acquired and transferred rather than the distribution itself

michael walzer - contextual/casuistical

different spheres of distribution

data from one sphere is used in allocation of a decision that is measuring success or failure in another sphere

distributing different social goods according to their distinct social meanings, argues against a single metric of equality

example

John Rawls - health care should be distributed equally, such that it would benefit the least well-off.

Nozick - access to healthcare would depend on one’s ability to pay, as long as the acquisition of wealth was just.

Walzer - healthcare should be distributed based on need and not influenced by one’s wealth, as it belongs to a different sphere with its own criteria for justice.

health and data

sources - clinical data, medical publications, clinical references, genomic data, streamed data, web and social networking data, business organizational and external data = big data

health data is sensitive

can reveal diseases, health disorders, pre-existing conditions, mental illness, drug abuse, suicide attempts, STDs, depression, abortion

what if health insurance companies get their hands on this data

HIPAA

wearables

don't actually make you healthier

motivation - leaderboards - setting goals

improve provision of care - for elders specially

population health implications

ethical concerns

health monitoring by wearables - new norm

beyond the superficial use, identification of early onset of disease, sleep and physical activity is revealing of psychological state

fitbit data is now google's data since google bought fitbit

genetic information

biological samples - biological material in which DNA is present

genetic data - information about inherited characteristics obtained from these samples

genome - total DNA sequence of a cell

unique features - identifying, ubiquitous, longevity, predictive, individual and familial in nature

tech and data assistance to healthcare

leverage technology to create a better system

must build business case to fund this

focus on electronic medical records is important but

health information exchanges provide immediate benefit and more cost savings

predictive analytics

AI

AI - mimics human intelligence like hearing, problem solving

ML aims to teach a machine how to learn a task and provide accurate results by analyzing patterns

use of AI

using common sense

learning and adapting constantly

understanding cause and effect

reasoning ethically

real AI

general purpose AI - science fiction robot types (full human mimicking)

specific purpose AI - nontrivial, automated cars, pokemon go, speech and image recognition

turing test

humans v AI

complex, messy and ambiguous tasks that come naturally to humans are hard for AI

clearly defined problems that we think need intelligence are solved by AI (chess)

AI lacks a broad understanding of the world, common sense, out of the box creativity, no consciousness

humans are good at finding solutions in messy situations

adapting/self evaluation/creativity

explaining our reasoning

tasks that need a broader understanding of our world - morally weighted questions

humor

social reasoning - knowing what it is like to be human

application

LLMs - predictive texts

generative AI - uses ML to create new original content like images, videos

GPT - generative pretrained transformer (create outputs, trained on huge amounts of data, uses deep learning to analyze relationships between data - word prediction)
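
"word prediction" can be shown at toy scale with a bigram counter. this is a deliberately simple stand-in, not how GPT works internally (GPT uses a deep transformer network); the corpus and function names are made up for illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(text: str):
    """Count, for each word, which words follow it."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        followers[current][following] += 1
    return followers


def predict_next(followers, word: str):
    """Return the most frequent follower of `word`, or None if unseen."""
    options = followers.get(word.lower())
    if not options:
        return None
    return options.most_common(1)[0][0]


corpus = "the data is big the data is messy the model is trained on the data"
model = train_bigrams(corpus)

print(predict_next(model, "the"))   # most common word after "the"
print(predict_next(model, "data"))  # most common word after "data"
```

the prediction is only as good as the text it was trained on, which is the same reason biased training data leads to biased outputs.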

two main approaches towards moral decision making for AI

game theory

studies settings where multiple parties have different preferences (utility/objective functions) and different actions

each agent's utility depends on another's actions - circular approach

how can agents rationally form beliefs over what other agents will do and hence how agents should act - predictive behaviour
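
the "each agent's utility depends on the other's action" idea can be sketched with a two-player payoff table. the example below searches for pure-strategy Nash equilibria (outcomes where neither player gains by changing action alone), a standard game theory concept that is an addition here; the payoff numbers are prisoner's-dilemma-style values chosen for illustration.

```python
from itertools import product

# payoffs[(row_action, col_action)] = (row player's utility, column player's utility)
payoffs = {
    ("cooperate", "cooperate"): (3, 3),
    ("cooperate", "defect"): (0, 5),
    ("defect", "cooperate"): (5, 0),
    ("defect", "defect"): (1, 1),
}
ACTIONS = ("cooperate", "defect")


def pure_nash_equilibria(payoffs, actions):
    """Return action pairs where neither player can do better by
    unilaterally switching to another action."""
    equilibria = []
    for row, col in product(actions, repeat=2):
        row_u, col_u = payoffs[(row, col)]
        # row player compares alternatives while the column action stays fixed
        row_best = all(payoffs[(alt, col)][0] <= row_u for alt in actions)
        # column player compares alternatives while the row action stays fixed
        col_best = all(payoffs[(row, alt)][1] <= col_u for alt in actions)
        if row_best and col_best:
            equilibria.append((row, col))
    return equilibria


print(pure_nash_equilibria(payoffs, ACTIONS))
```

both players would be better off cooperating, but each one's rational belief about the other leads to mutual defection, which is the kind of outcome a moral decision-making system would need to reason about.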

machine learning

optimize a performance criterion using example data or historical data

association - market basket analysis

uses - prediction, knowledge extraction, compression, outlier detection

supervised learning - classification, regression

unsupervised learning

reinforcement learning
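
market basket analysis (the association task above) usually comes down to two numbers: support and confidence. a minimal sketch with made-up shopping baskets; the function names are hypothetical.

```python
def support(transactions, items):
    """Fraction of baskets that contain every item in `items`."""
    items = set(items)
    hits = sum(1 for basket in transactions if items <= basket)
    return hits / len(transactions)


def confidence(transactions, antecedent, consequent):
    """Of the baskets containing the antecedent, the fraction that
    also contain the consequent."""
    both = set(antecedent) | set(consequent)
    return support(transactions, both) / support(transactions, antecedent)


# made-up baskets for illustration
baskets = [
    {"bread", "butter"},
    {"bread", "butter", "milk"},
    {"bread", "milk"},
    {"milk"},
]

print(support(baskets, {"bread", "butter"}))       # share of baskets with both
print(confidence(baskets, {"bread"}, {"butter"}))  # rule: bread -> butter
```

a rule like "bread -> butter" is learned from historical data only, with no labels, which is why association sits closer to unsupervised learning than to classification or regression.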

trolley problem in autonomous cars - if it were done by a human, would it be a crime?

ethical implications

people losing jobs to automation

workers displaced by AI

AI is faster and less expensive

people might have too much leisure time

utter boredom - wall-e

lost sense of being unique

what if we lose our humanity

if AI is created, would they be equal to humans

lost privacy rights

intelligent scanning of data

loss of accountability

if expert medical system kills a patient with a wrong diagnosis

autonomous cars

voting systems

might end the human race

laws of robotics

law 0 - a robot may not injure humanity or, through inaction, allow humanity to come to harm

law 1 - same as law 0 but for an individual human, unless it would violate a higher order law

law 2 - a robot must obey humans except where such orders conflict with a higher order law

law 3 - robot should protect its own existence unless… higher law

gamification and addiction

gamification - adding game elements, mechanics, and thinking to non-game applications to engage people and influence behavior

main components - motivation, mystery, triggers

desire to play games is human nature

games influence behavior through fun and entertainment

entertainment inspires sharing

ethical concerns

exploitation and manipulation

data generation in gaming

50tb of data daily

2 billion gamers globally

EA hosts 2.5 billion game sessions per month (50 billion minutes)

game metrics

measures include player behavior, monetization, technical performance

game analytics involves analyzing these metrics

gaming industry statistics

65% of american households have someone who plays video games regularly

67% of households own a gaming device

average gamer age: 35

97% of americans aged 12–17 play video games

psychology and addiction

video games use rewards and punishment systems

emotional symptoms: restlessness, preoccupation, lying about time spent, isolation

physical symptoms include impacts on the brain similar to drug addiction

digital heroin

gaming and technology affect the brain's frontal cortex similar to cocaine

raises dopamine levels, driving addiction

social media positives

finding new friends, staying in touch, raising awareness, emotional support, creativity

useful for remote or socially anxious individuals

social media negatives

feelings of inadequacy, depression, anxiety, cyberbullying

compulsive use can harm relationships and productivity

how to reduce social media addiction

delete apps, turn off notifications, set limits, take up non-tech hobbies

transparency and accountability

problem with AI/ML systems

AI can exceed human performance on some tasks but still makes mistakes

lack of trust due to lack of explainability

tradeoff between accuracy and explainability

explainable AI

black-box predictions are not enough

explanations should be understandable to non-specialists

tradeoff between expert systems (good for explanations, less accurate) and neural networks (accurate, but not explainable)

crowdsourcing

uses internet workers to perform micro-tasks to solve complex problems

examples include wikipedia, recaptcha, foldit, and app testing

popular crowdsourcing tasks

sentiment analysis, search relevance, content moderation, data collection and categorization, transcription

surveillance and power

employee monitoring:

employers monitor for reasons like protecting trade secrets, reducing liability (e.g., harassment), and productivity.

searches depend on privacy expectations—invasive searches (body, clothing) vs. non-invasive (desks, company-provided devices).

employers can monitor communications (calls, emails) if policies eliminate privacy expectations.

telephone recording:

one-party consent (e.g., federal law, 38 states) vs. two-party consent (e.g., CA, FL, IL).

keystroke monitoring is legal but cannot capture passwords.

surveillance after hours:

legal questions: can actions be regulated without violating rights?

surveillance capitalism:

a new economic order: human experience becomes raw material for prediction and sales.

justifications often cited: consent, anonymity, and security.

power in surveillance:

persistent tracking reduces the power of those being watched (e.g., orwell’s "1984").

examples include the shift from "big brother" to the "electronic panopticon."

surveillance in data analytics:

surveillance creates negative externalities, such as loss of autonomy over personal data.

target individuals cannot avoid observation or identify watchers, leading to power imbalances.

data, democracy, and digital divide

democracy and technology:

democracy relies on informed citizens with access to unbiased information.

mass media historically acted as a "fourth estate" but struggles with issues like agenda setting and economic independence.

pillars of democracy (IF RIPE)

election of officials through free and fair elections

inclusive suffrage

right of all citizens to run for public office

freedom of expression

right to information other than official sources

right to form political parties and interest groups

public sphere:

a space for public discourse; challenges include fragmentation and political/commercial bias.

internet’s democratizing potential:

features include global reach, low entry barriers, and participatory culture.

issues: information overload, accountability, and fragmentation of discussions.

participatory culture:

encourages users to contribute and share ideas, with support for informal mentorship.

social media enhances this but also creates echo chambers and filter bubbles.

digital divide:

barriers: tech literacy, language, broadband cost, and irrelevant content.

strategies: improve connectivity, contextual content, and education on technology use.

readings

biases

stanford vaccine algorithm: this case highlights how a vaccine allocation algorithm led to the exclusion of frontline doctors, raising questions about design flaws and the prioritization criteria embedded in the algorithm.

racial bias in medical algorithms: a healthcare algorithm was found to systematically prioritize white patients over sicker black patients, illustrating how historical inequities in healthcare data can perpetuate racial disparities in treatment.

fairness, justice, and discrimination

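bias in criminal risk scores: research revealed that some bias in criminal risk assessment tools is mathematically inevitable when groups have different base rates, because a score cannot satisfy every fairness definition at once, highlighting trade-offs between predictive accuracy and fairness.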

amazon ai recruiting tool: amazon’s ai recruiting tool showed gender bias by penalizing resumes that included references to women, such as participation in women’s organizations.

credit scores: this case examines systemic biases in credit scoring systems and the limitations of ai in addressing these entrenched disparities, raising questions about transparency and accountability.

transparency/accountability

houston school district: the district’s secret teacher evaluation system sparked controversy for its lack of transparency, leading to debates on fairness and accountability in automated decision-making.

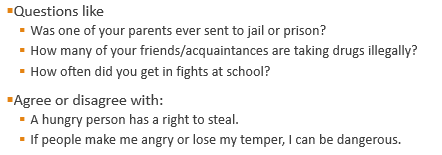

cheating-detection: automated cheating detection systems in educational settings have raised ethical questions about privacy, false accusations, and due process.

blame on humans: in several instances, humans have been unfairly blamed for errors stemming from algorithmic decisions, highlighting the need for clearer accountability structures.

gamification

uber’s psychological tricks: uber uses gamification techniques like progress bars and incentives to influence driver behavior, often pushing them to work longer hours without fully understanding the psychological impact.

democracy/digital divide

what facebook did to american democracy: facebook’s algorithms and platform design have been scrutinized for amplifying divisive content and misinformation, which influenced democratic processes and public opinion.