psych chapt 7

Chapter 7 - Learning

- 7.1: The Scientific Study of Learning

- Learning is defined broadly as a relatively permanent change in behaviour not due to drugs, injury, or disease.

- Events we can perceive in the world around us affect behaviour, and behaviour produces effects on the environment as well.

- (Sending, reading, texting, answering)

- Some skills are innate, which means they are not a result of learning; we are born with them.

- Reflexes involve situations that naturally produce corresponding behaviour.

- Pavlovian conditioning occurs when we associate 2 events - a signal and what's signaled. For example, many people have particular ringtones on their phone for different people; when you hear a bell chiming, you know it's your dad.

- Operant conditioning is how we learn what happens when we do something.

- Your cat learns to run to you when you shake treats.

- Social learning - when we learn something by watching others. Your cat might learn to open doors by watching you.

- Latent learning - when we learn something but don't show it until we have a reason to show our knowledge.

- 7.2. Pavlovian Conditioning

- Ivan Pavlov elucidated classical conditioning. His work provided the basis for B. F. Skinner and John Watson.

- Ivan Pavlov studied how dogs digest food, starting with salivation. He wanted to see how much dogs salivate in response to meat powder, but the dogs started to salivate at the sight of the lab coat, before any meat powder was given. They had learned that the food was about to be given to them. → associating 2 events that occur together.

- Stimulus: can be anything in the environment that (a) we can detect, (b) is measurable, and (c) can evoke a response or behaviour.

- Unconditional stimulus: produces an unconditioned response - an innate reflex.

- In Pavlov's experiment, the food was the unconditioned stimulus and the salivation was the unconditioned response.

- Conditioned stimulus: signals or predicts an unconditional stimulus.

- Conditional response - a conditional reflex. In Pavlov's experiment, the conditional stimulus was the laboratory coat and the conditional response was salivation to prepare for the upcoming food.

- Neutral stimulus: an environmental event that currently has no meaning. This stimulus doesn't predict whether the unconditional stimulus will occur.

- Lab coat without meat powder.

- Reflexes involve unconditional stimuli and responses; in these cases, the stimulus forces the involuntary response.

- Unconditioned stimulus - we don't need to learn how to respond; it is a natural reflex.

- Conditioned - something was learned. Conditional - the probability of the response depends on learning whether the unconditional stimulus does or does not occur.

- 7.2.1 Associating Stimuli: Ordinal Position Methods

- Conditional Stimulus = lightning

- Conditional response = wince/startle

- Unconditioned stimulus = Loud thunder

- Unconditional response = startle

- Excitatory conditioning - There is a positive correlation between the conditional stimulus and the unconditional stimulus.

- The conditional stimulus is presented before the unconditional stimulus.

- In short-delayed conditioning, the unconditional stimulus occurs within a few seconds of the start of the conditional stimulus. You hear a thunderclap shortly after you see lightning.

- In long-delayed conditioning, the unconditional stimulus occurs after the conditional stimulus has been there for a while. You hear tornado warning sirens or see the sky turn green minutes before you see the tornado.

- In trace conditioning, the unconditional stimulus occurs minutes or hours after the conditional stimulus has stopped. You eat gas station sushi hours before you feel the effects of salmonella.

- In simultaneous conditioning, the unconditional stimulus occurs with the start of the conditional stimulus. You hear thunder and see lightning at the same time.

- In backward conditioning, the unconditional stimulus occurs a few seconds before the start of the conditional stimulus. You hear thunder before you see lightning.

- Events that occur together can be associated, even when they are not related to each other.
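
The ordinal position methods above differ only in when the unconditional stimulus (US) starts relative to the conditional stimulus (CS). As a rough sketch, the rules can be written as one function. The function name, its parameters, and the 5-second cut-off between "short" and "long" delays are assumptions made for illustration; the notes only say "a few seconds" versus "a while".

```python
def classify_procedure(cs_onset: float, cs_offset: float, us_onset: float,
                       short_delay_max: float = 5.0) -> str:
    """Name the conditioning procedure from stimulus timing (all times in seconds).

    short_delay_max is an assumed cut-off, not a value from the notes.
    """
    if us_onset < cs_onset:
        return "backward"        # US starts before the CS
    if us_onset == cs_onset:
        return "simultaneous"    # US starts together with the CS
    if us_onset > cs_offset:
        return "trace"           # US starts after the CS has stopped
    if us_onset - cs_onset <= short_delay_max:
        return "short-delayed"   # US starts soon after CS onset, CS still present
    return "long-delayed"        # US starts after the CS has been present for a while


print(classify_procedure(0, 10, 2))      # short-delayed
print(classify_procedure(0, 600, 300))   # long-delayed
print(classify_procedure(0, 10, 3600))   # trace
print(classify_procedure(0, 10, 0))      # simultaneous
print(classify_procedure(5, 15, 2))      # backward
```

The order of the checks matters: backward and simultaneous are ruled out first, so the two delayed cases only apply when the US starts while the CS is still present.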

- 7.2.2 Pavlovian Taste Aversion Learning

- This is a type of trace conditioning.

- In taste aversion learning, we eat a new food item and then experience illness several hours later. The next time we encounter that food item, we will avoid it and will likely report feeling ill all over again.

- Conditional Stimulus = taste of food

- Conditional response = nausea

- Unconditional stimulus = illness-causing bacteria

- Unconditional response = sickness

- Garcia and Koelling (1966) studied flavour conditioning with rats.

- 7.2.3 Pavlovian Extinction

- In Pavlovian conditioning, when the signal occurs without what's signaled, the conditional responses go away: the conditional stimulus is presented alone, and the conditional response decreases.

- Pavlov wearing his laboratory coat without feeding his dogs for several days would be an example of extinction, and the dogs would eventually stop salivating when they saw this lab coat.

- Pavlovian extinction is a procedure that involves repeatedly presenting a conditional stimulus without an unconditional stimulus.

- Spontaneous recovery: after extinction and a break without the signal or what's signaled, the signal occurs alone and the conditional response reappears.

- Acquisition occurs first, extinction next, then spontaneous recovery.

7.2.4 Pavlovian Conditioned Inhibition and Safety Signals

- Pavlovian conditioning is one way we develop fears.

- Often, war veterans, first responders, and other people exposed to trauma develop PTSD, which involves intense fear.

- Exploding landmines and gunfire are unconditional stimuli, and their effects, such as fear, are unconditional responses.

- When they return home, fireworks or other loud noises can act as conditional stimuli.

- Safety signals, aka conditional inhibitors, are generally arbitrary stimuli like lights and tones.

- Puffs of air and shock are unconditional stimuli, and experimenters measure eye blinks (startle) or skin conductance (sweating) as fear responses.

- Jovanovic and colleagues concluded that PTSD may be a fear conditioning disorder.

7.2.5 How is Pavlovian Conditioning Related to what we do?

- Pavlovian conditioning plays an important role in our emotions.

- We experience unconditional stimuli that can be pleasant (appetitive) or unpleasant (aversive). Pleasant stimuli: romantic partners and cookies. Unpleasant stimuli: hot surfaces and spoiled food.

- Stahl et al.'s (2009) results tell us that if a new product is paired repeatedly with an actor we respect and like, we will associate our liking of the actor with the product.

7.2.6 Stimulus Generalization

- Pavlovian conditioning can produce conditioned excitation - associating 2 events together - and inhibition - one event signals that the other won't occur.

- Stimulus generalization involves responding similarly to conceptually or physically similar stimuli.

- A pigeon might learn to associate food with a vertical line on a key. Then the pigeon would start to peck at the vertical line on the key.

- Stimulus generalization tends to happen with phobias; a person may be bitten by a brown recluse spider and develop a fear of all spiders.

- In conditional generalization, a fear response conditioned to neutral faces with high pupil distance to mouth width ratios would generalize to the other neutral faces with similar features but not to neutral faces with low pupil distance to mouth width ratios.

7.2.6.2 Stimulus Discrimination

- Stimulus discrimination involves responding differently to different events.

- In stimulus discrimination, conditional responses only occur when the original conditional stimulus is introduced.

- If a pigeon receives food after a vertical line is presented but not after a horizontal line is presented, then it will not peck at the other line tilts.

7.2.6.3 Higher-Order Conditioning

- An already-conditioned signal is paired with a neutral stimulus, i.e. a currently meaningless event.

7.2.7 The development of Behaviourism and “Little Albert”

- Behaviourism is an approach to science that focuses on how we learn new behaviours and how those behaviours change across different situations.

- Pavlovian conditioning changed John B. Watson’s perspective, and Watson argued that psychology, as a natural science, should limit its studies to phenomena that could be measured. → credited with the establishment of behaviourism.

7.2.7.1 Little Albert

- Little Albert would start crying at just the sight of the white rat; the rat signaled the loud noise.

- Little Albert demonstrated stimulus generalization - crying and crawling away from objects similar to the furry rat (i.e., the rabbit, dog, or fur coat). However, Little Albert did not generalize his fear to some white objects.

7.2.8 Systematic Desensitization

- Our conditional fears can interfere with day-to-day functioning and with activities that we might otherwise enjoy with lower anxiety levels.

- Joseph Wolpe (1958) developed a therapeutic treatment for phobias called systematic desensitization based on Pavlovian Conditioning.

7.3 Operant Conditioning (instrumental)

- Describes situations in which we can choose among different options based on our previous experiences. → we learn that our behaviour has consequences.

- Operant Conditioning can predict whom you choose to spend more time talking to.

7.3.1 Scientist: Thorndike and Instrumental Processes

- Began studying “learning” in the 1890s.

- Thorndike paved the way for the “behaviourism” philosophy to flourish.

- Best known for cats in puzzle boxes. He put a cat in a box that required a lot of behaviour to open the door and measured how long it took. Because cats learned how to manipulate an instrument, such as a pedal, Thorndike called this type of learning instrumental. → which is why operant conditioning is also called instrumental.

- Developed the law of effect. He was interested in how the consequences of behaviour influence subsequent behaviour.

- The “effect” in the law of effect referred to the consequences of behaviour.

The law of effect was twofold: (a) behaviours that yield satisfying consequences are more likely to recur, and (b) behaviours that result in discomfort are less likely to be repeated.

7.3.2 (scientist) Skinner and Operant Processes

- In the 1930s, Skinner founded radical behaviourism - the philosophy of science that treats thinking and feeling like any other behaviour. We just have to be able to measure the behaviour and see its effects on the environment.

- Since we want to study complex behaviour like thinking and feeling, “operant” replaced “instrumental” conditioning.

- Skinner and Thorndike differ in that Skinner included the consequence as a part of what we learn about our behavior (see Skinner, 1963). Thus, we learn about general antecedents, behavior, and their consequences. Another way to think about it is we learn about specific stimuli, responses, and outcomes.

- Antecedents: anything in the physical environment that we can detect and that tells us something about the consequences of our actions.

- Behaviour: anything we can do that is affected by the environment, can be repeated and counted, and affects the environment.

- Consequences: stimuli that can increase/decrease the probability of future behaviour. (Events that happen after and because of a response).

- Dead man test: a term used to help define behaviour: if a dead man can do it, it is not behaviour.

7.3.3 Reinforcement Contingencies

- Skinner identified contingencies (if-then rules): if you do this, then this will happen.

- Skinner explained that with reinforcement, the consequences of a response increase the probability of this behaviour, whereas punishment decreases the probability of behaviour.

4 reinforcement contingency procedures and processes are as follows:

- Positive reinforcement: Some behaviour produces a stimulus that leads to more of that same kind of behaviour in the future. → positive because of the added consequence, reinforcement because of the effect of increasing this behaviour.

- EX. turning on your computer by pushing a button - something that you will do again in the future.

- Negative reinforcement: Some behaviour removes a stimulus, which leads to more of that kind of behaviour in the future.

- EX. when your computer displays a blue screen with an error message, you push the power button, which removes the blue screen with the error message but also turns off your computer (consequence).

- Occurs in 2 forms : escape and avoidance.

- In escape, the operant response removes an already-occurring aversive stimulus. That is, you get away from or escape the thing you don't want happening.

- In avoidance, the aversive stimulus is not currently present but will occur unless you produce a response to cancel it.

- EX. (avoidance) Muting a cell phone before bed prevents calls from waking us up.

- Positive punishment: Some behaviour produces a stimulus that leads to less of that kind of behaviour in the future. Something unpleasant is added to decrease that behaviour. It is positive because of the added consequence and punishment because of the effect of decreasing that behaviour.

- Negative punishment: Some behaviour removes a stimulus, which leads to less of that kind of behaviour in the future. Something pleasant is removed, resulting in a decrease in that behaviour. It is negative because of the removed consequence and punishment because of the effect of decreasing that behaviour.

- Reinforcement is an increase in future behavior; punishment is a decrease in future behavior; positive adds a consequence; and negative removes a consequence.

- Target Behaviour: a type of behaviour, specifically, the response in which we’re interested.
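
The four contingencies above come down to two yes/no questions: was a stimulus added or removed, and did the target behaviour increase or decrease? A minimal sketch of that 2x2 rule follows; the function and parameter names are made up for illustration.

```python
def classify_contingency(stimulus_added: bool, behaviour_increases: bool) -> str:
    """Name the contingency from what the response did and its effect on future behaviour."""
    sign = "positive" if stimulus_added else "negative"            # added vs removed consequence
    effect = "reinforcement" if behaviour_increases else "punishment"  # more vs less behaviour
    return f"{sign} {effect}"


# Pushing the power button turns the computer on, and you do it again in the future.
print(classify_contingency(stimulus_added=True, behaviour_increases=True))    # positive reinforcement
# Pushing the power button removes the blue error screen, and you do it again in the future.
print(classify_contingency(stimulus_added=False, behaviour_increases=True))   # negative reinforcement
print(classify_contingency(stimulus_added=True, behaviour_increases=False))   # positive punishment
print(classify_contingency(stimulus_added=False, behaviour_increases=False))  # negative punishment
```

Note that the label depends on the observed effect on behaviour, not on whether the consequence was intended to be pleasant or unpleasant.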

7.3.4 Operant Extinction

- Behaviour goes where reinforcement flows.

- Extinction is a procedure in which a consequence previously followed behaviour but no longer does.

- Decreases responding but this process takes a while → depends on how regularly the consequence was delivered.

- There are 3 behavioural effects of extinction:

- Temporary increase in responding - an extinction burst

- Emotional and aggressive responding

- Responding eventually stops.

- Partial reinforcement extinction effect: behaviour reinforced only occasionally lasts longer once consequences are no longer available than behaviour that was reinforced every time.

- You would notice immediately that you didn't get candy after visiting the bank if you were used to getting it every time.

7.3.5 Shaping New Operant Responses

- Positive reinforcement is used (a) to keep behaviour going, (b) to increase the magnitude of behaviour, or (c) as a part of shaping by the method of successive approximations to teach new responses.

- Shaping involves selecting and reinforcing progressively more complex responses that look more and more like the response we want.

- Operant chambers were invented by Skinner to automate the presentation of stimuli and collection of responses. Operant Chambers typically have a way to present different colours of light as antecedent stimuli.

- The receptacle for the food dispenser makes a clicking sound when food is delivered and is lit when food is available; the light and the sound that signal food delivery become conditional stimuli.

7.3.6 Reinforcers

- Reinforcers are events or stimuli that follow behaviour and increase the future likelihood of that kind of response.

- Example: Reprimands are meant to function as positive punishment, which would mean the rock throwing decreases. Reprimands are definitely positive in contingency (something is added), but if the rock throwing has increased, then the reprimands are functioning as positive reinforcement.

- Positive reinforcers: (added by the response) trophies, money, praise, and food are often positive reinforcers given when a response occurs.

- Negative reinforcers: (removed by the response) Putting on sunglasses in the bright sun means that you removed the harsh light (negative reinforcer).

- Reinforcers are divided into primary and secondary.

- Primary (unconditional): not learned. They naturally affect the response they follow and include stimuli. (natural environment, not by other people)

- Secondary: (conditional) depend on what has already been learned. They signal or have been associated with primary reinforcer.

- Generalized conditioned reinforcers - objects traded for several other reinforcers - special because they don't lose their power to reinforce behaviour.

7.3.7 Scheduling Consequences

- Schedules of reinforcement are the rules we use to determine when we get reinforcers for behaviour. (change behaviour)

- Comes from Ferster and Skinner (1957), who found that orderly patterns of behaviour exist for all intermittent reinforcement schedules.

- Continuous reinforcement = every response is reinforced; intermittent reinforcement = only some responses are.

There are 4 main schedules of (intermittent) reinforcement: fixed ratio, fixed interval, variable interval, variable ratio.

- Ratio: schedules deliver reinforcers after a specific number of responses. This number can be fixed, in which case the animal will learn that a specific number of responses is required to receive reinforcement. It can also be variable - it changes with each trial.

- Interval: schedules deliver reinforcers after at least two responses and a specified amount of time. The first response starts a timer, and the next response after the timer finishes produces a reinforcer.

7.3.7.1 Ratio Reinforcement Schedules

- In a fixed ratio schedule, we must produce the target response a specific number of times and the last response produces a reinforcer.

- Ratio schedules are based on a number of responses, not time.

- In a variable ratio schedule, we have to respond a different number of times for each reinforcer, and the number of responses changes around an average for each reinforcer.

- A VR 3 schedule means that, on average, every third response triggers reinforcement.

- Intermittent schedules of reinforcement, in order from lowest to highest rate of responding: fixed interval, variable interval, fixed ratio, variable ratio.
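
The ratio rules above can be sketched as two small classes that decide, response by response, whether a reinforcer is delivered. This is only an illustration: the class names are made up, and the way the variable ratio requirement is drawn (uniformly from 1 to 2n - 1, which averages n) is an assumption, since the notes only say the number changes around an average.

```python
import random


class FixedRatio:
    """FR n: every n-th response produces a reinforcer."""

    def __init__(self, n: int):
        self.n = n
        self.count = 0

    def respond(self) -> bool:
        self.count += 1
        if self.count == self.n:
            self.count = 0  # start counting toward the next reinforcer
            return True
        return False


class VariableRatio:
    """VR n: the required number of responses changes around an average of n."""

    def __init__(self, mean: int, rng: random.Random):
        self.mean = mean
        self.rng = rng
        self.count = 0
        self.required = self._draw()

    def _draw(self) -> int:
        # Assumption: requirements are uniform on 1 .. 2*mean - 1, which averages to `mean`.
        return self.rng.randint(1, 2 * self.mean - 1)

    def respond(self) -> bool:
        self.count += 1
        if self.count >= self.required:
            self.count = 0
            self.required = self._draw()  # new requirement for the next reinforcer
            return True
        return False


fr3 = FixedRatio(3)
fr_outcomes = [fr3.respond() for _ in range(6)]
print(fr_outcomes)  # [False, False, True, False, False, True]

vr3 = VariableRatio(3, random.Random(0))
vr_reinforcers = sum(vr3.respond() for _ in range(3000))
print(vr_reinforcers)  # roughly 1000, i.e. about one reinforcer per three responses
```

Neither class refers to time, which matches the point that ratio schedules depend only on the number of responses.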

7.3.7.2 Interval Reinforcement Schedules

- In a fixed interval schedule, a response starts a timer, the specific amount of time must elapse, and the next response will trigger the delivery of a reinforcer.

- Ex: A fixed interval of one minute (i.e., FI 1 min) means that the first response starts a 1-minute timer; although responses may continue during the interval, the animal will not receive a reinforcer until 1 minute has passed.

- Variable interval: we have to wait different amounts of time for each reinforcer.

- Some intervals are longer, and some are shorter than the previous one.

- Characterized by a slow and constant pattern of responding.

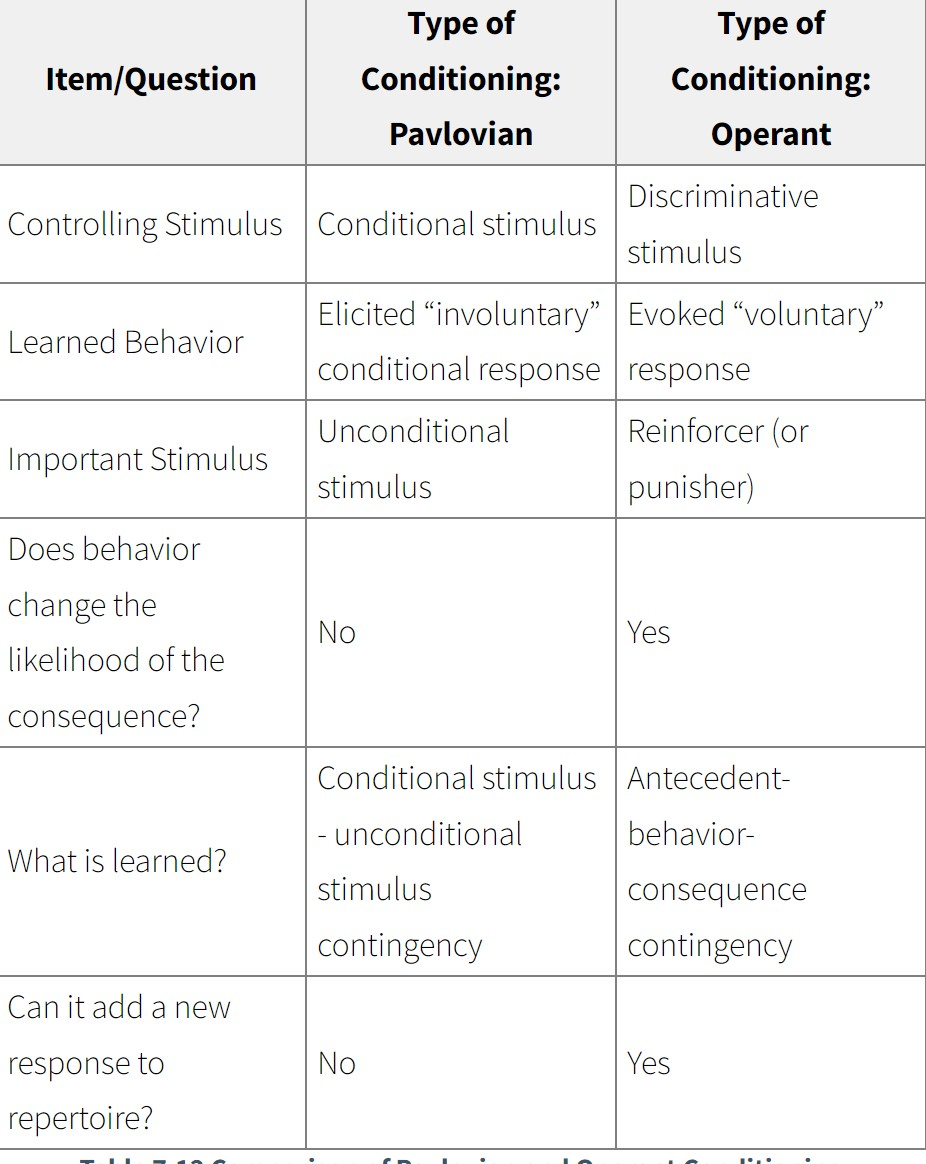
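
The interval schedules in 7.3.7.2 can be sketched in the same spirit: a response starts a timer, and the first response after the interval has elapsed is reinforced. The names below are made up for illustration. Two details are assumptions rather than anything stated in the notes: the reinforced response also starts the next timer, and variable intervals are drawn uniformly between 0 and twice the mean.

```python
import random
from typing import Callable, Optional


class IntervalSchedule:
    """Reinforce the first response made after the current interval has elapsed."""

    def __init__(self, next_interval: Callable[[], float]):
        self.next_interval = next_interval  # returns the next interval length in seconds
        self.timer_start: Optional[float] = None
        self.interval = 0.0

    def _start_timer(self, t: float) -> None:
        self.timer_start = t
        self.interval = self.next_interval()

    def respond(self, t: float) -> bool:
        """Register a response at time t (seconds); return True if it is reinforced."""
        if self.timer_start is None:
            self._start_timer(t)  # the first response only starts the timer
            return False
        if t - self.timer_start >= self.interval:
            self._start_timer(t)  # assumption: the reinforced response restarts the timer
            return True
        return False  # responses during the interval earn nothing


def fixed_interval(seconds: float) -> IntervalSchedule:
    return IntervalSchedule(lambda: seconds)


def variable_interval(mean_seconds: float, rng: random.Random) -> IntervalSchedule:
    # Assumption: intervals are uniform between 0 and twice the mean.
    return IntervalSchedule(lambda: rng.uniform(0, 2 * mean_seconds))


fi = fixed_interval(60)  # FI 1 min
fi_outcomes = [fi.respond(t) for t in (0, 30, 59, 60, 90, 125)]
print(fi_outcomes)  # [False, False, False, True, False, True]

vi = variable_interval(60, random.Random(0))
vi_first = vi.respond(0)       # starts the timer, not reinforced
vi_later = vi.respond(1000)    # well past any possible interval, so reinforced
```

In the FI 1 min run, the responses at 30 s and 59 s earn nothing, which is the point made above: responding during the interval does not bring the reinforcer any sooner.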

7.4 Comparing Pavlovian and Operant Conditioning

- Pavlovian and Operant Conditioning are different forms of learning.

- Differences: sources of the controlling stimulus, type of response, and what is learned.

- With operant conditioning, the animal is required to respond in order to receive the consequence.

- Behaviour is determined in both Pavlovian and operant conditioning.

- Confused about which conditioning?: Whenever you’re confused about which type of conditioning occurred, just ask yourself if the response changed the probability of the unconditional stimulus or consequence.

- Operant behavior changes whether the consequence will be delivered; the unconditional stimulus is presented regardless of behavior in Pavlovian conditioning.

- 7.5 The Emergence of Cognitive Psychology

- Watson's behaviourism was popular in psychology.

- 7.5.1 Tolman and Latent Learning

- Edward C. Tolman felt that explanations for behaviour should include more than environmental stimuli and publicly observable behaviour. → credited with the establishment of cognitive psychology.

- His approach to studying behaviour is called operational behaviourism.

- Most famous for experiments on latent learning using rats running through T-mazes.

- Latent learning is learning that we can't see until we’re motivated to show it; there is no change in performance until we receive a reward.

- A cognitive map is any visual representation of a person's (or a group's) mental model for a given process or concept.

- Greatest impact of Tolman's experiments and theories: they represented one of the foundations of cognitive learning - that learning doesn't have to involve changes in performance we can see.

7.5.2 Bandura and Social Learning

- Early 1960s - Albert Bandura departed from a strict methodological behavioral orientation and discovered a new type of learning - based on studies with kindergarten students.

- Called it observational learning, now it is known as social learning.

- In social learning, we learn from other people, specifically through imitation.

- Social learning expands learning further into the cognitive domain.

- Transferred association: In order to copy the behaviour of another, the observer must see the model's behavior and see the model earn a reward for that behavior.

Bandura's (1977) theory specifies that observational learning entails four phases/processes/stages: attention, retention, production, and motivation.

- In the attentional phase, we must notice the model's behaviour. Children with autism spectrum disorder have trouble with this phase → don't always benefit from social learning.

- In the retention phase, we think about performing the model's actions ourselves.

- In the production phase, we actually perform the model's actions.

- In the motivational phase, our imitated behaviour produces the same reward the model earned.

7.6 Biological Constraints on learning and learned helplessness

- Seligman proposed that some stimuli are more likely than others to become signals for important events; he called this biological preparedness.

- It's easier to condition a Pavlovian fear to snakes and spiders than to arbitrary stimuli like flowers and tones.

- Noted that phobias are often different from fear conditioning in the laboratory for these reasons:

- Phobias can be learned in a single trial.

- Phobias can persist even when we know the object is harmless.

- Phobias involve things that could harm our ancestors.

- Phobias don't extinguish quickly.

- We can see biological preparedness in the lab when we use shock as an unconditional stimulus to associate with snakes and spiders as conditioned stimuli.

- Where Pavlovian and operant conditioning interact: learned helplessness. When we experience an aversive stimulus, usually involving pain, it activates the sympathetic division of the autonomic nervous system. The resulting unconditional responses (increased respiration/heart rate) involve the release of hormones from the adrenal gland.

- A student failing a math class may try several different strategies (longer and more frequent study sessions; using different study techniques, etc.) to escape/avoid failing the class. If these attempts do not work, the student may simply stop trying to succeed in math (learned helplessness to a specific situation) while continuing to study for other classes.

Chapter 8 - Memory

8.1 Metaphors for Memory

- Search Metaphor: A way of describing the processes involved in memory using terms and phrases that relate them to looking around in physical or virtual space.